This guide is intended for administrators who need to set up, configure, and maintain clusters with SUSE® Linux Enterprise High Availability. For quick and efficient configuration and administration, the product includes both a graphical user interface and a command line interface (CLI). This guide covers both approaches for key tasks, so you can choose the tool that best matches your needs.

- Preface

- I Installation and setup

- II Configuration and administration

- 5 Configuration and administration basics

- 6 Configuring cluster resources

- 6.1 Types of resources

- 6.2 Supported resource agent classes

- 6.3 Timeout values

- 6.4 Creating primitive resources

- 6.5 Creating resource groups

- 6.6 Creating clone resources

- 6.7 Creating promotable clones (multi-state resources)

- 6.8 Creating resource templates

- 6.9 Creating STONITH resources

- 6.10 Configuring resource monitoring

- 6.11 Loading resources from a file

- 6.12 Resource options (meta attributes)

- 6.13 Instance attributes (parameters)

- 6.14 Resource operations

- 7 Configuring resource constraints

- 7.1 Types of constraints

- 7.2 Scores and infinity

- 7.3 Resource templates and constraints

- 7.4 Adding location constraints

- 7.5 Adding colocation constraints

- 7.6 Adding order constraints

- 7.7 Using resource sets to define constraints

- 7.8 Specifying resource failover nodes

- 7.9 Specifying resource failback nodes (resource stickiness)

- 7.10 Placing resources based on their load impact

- 7.11 For more information

- 8 Managing cluster resources

- 9 Managing services on remote hosts

- 10 Adding or modifying resource agents

- 11 Monitoring clusters

- 12 Fencing and STONITH

- 13 Storage protection and SBD

- 13.1 Conceptual overview

- 13.2 Overview of manually setting up SBD

- 13.3 Requirements and restrictions

- 13.4 Number of SBD devices

- 13.5 Calculation of timeouts

- 13.6 Setting up the watchdog

- 13.7 Setting up SBD with devices

- 13.8 Setting up diskless SBD

- 13.9 Testing SBD and fencing

- 13.10 Additional mechanisms for storage protection

- 13.11 Changing SBD configuration

- 13.12 For more information

- 14 QDevice and QNetd

- 15 Access control lists

- 16 Network device bonding

- 17 Load balancing

- 18 High Availability for virtualization

- 19 Geo clusters (multi-site clusters)

- III Storage and data replication

- IV Maintenance and upgrade

- 28 Executing maintenance tasks

- 28.1 Preparing and finishing maintenance work

- 28.2 Different options for maintenance tasks

- 28.3 Putting the cluster into maintenance mode

- 28.4 Stopping the cluster services for the whole cluster

- 28.5 Putting a node into maintenance mode

- 28.6 Putting a node into standby mode

- 28.7 Stopping the cluster services on a node

- 28.8 Putting a resource into maintenance mode

- 28.9 Putting a resource into unmanaged mode

- 28.10 Stopping the cluster services while in maintenance mode

- 29 Upgrading your cluster and updating software packages


- V Appendix

- Glossary

- E GNU licenses

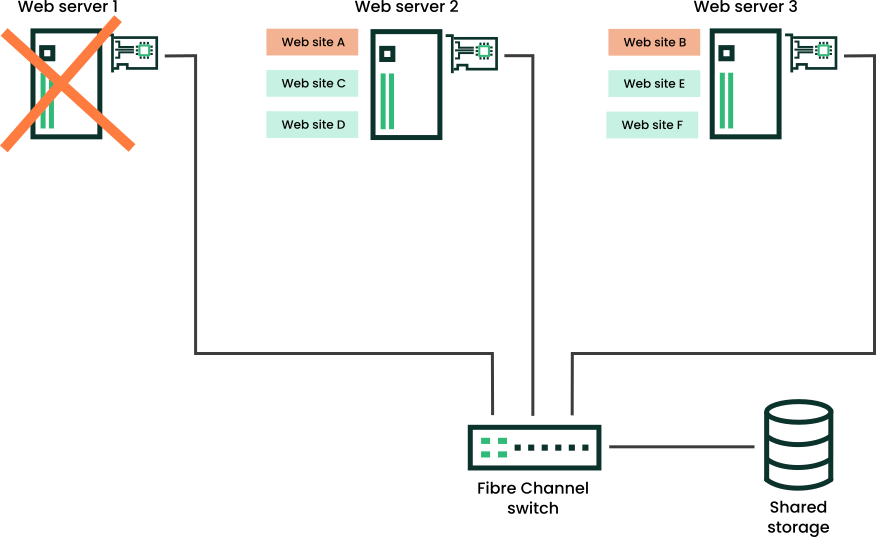

- 1.1 Three-server cluster

- 1.2 Three-server cluster after one server fails

- 1.3 Typical Fibre Channel cluster configuration

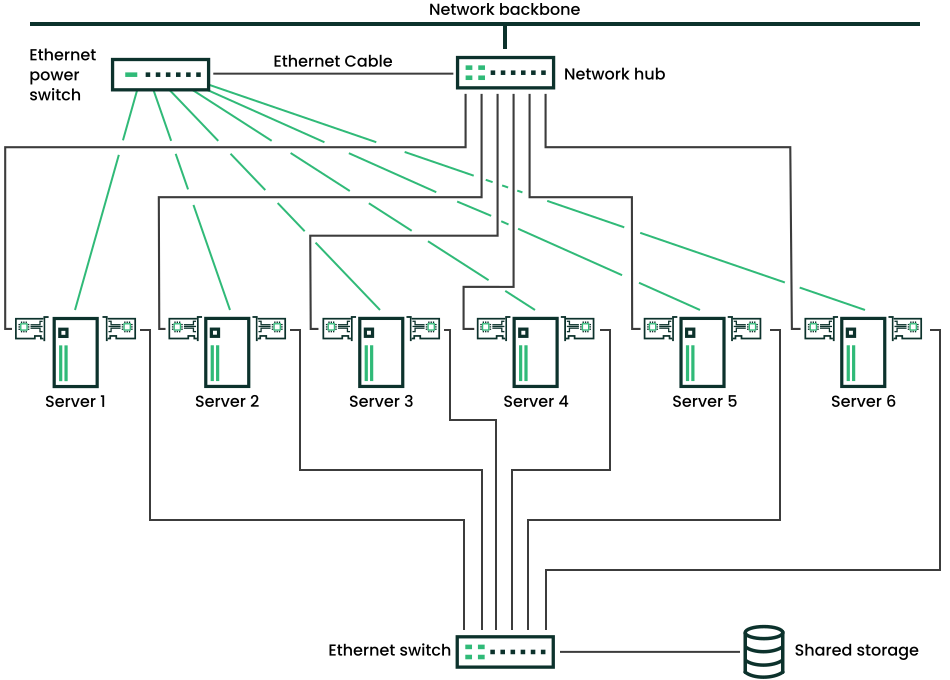

- 1.4 Typical iSCSI cluster configuration

- 1.5 Typical cluster configuration without shared storage

- 1.6 Architecture

- 4.1 YaST—multicast configuration

- 4.2 YaST—unicast configuration

- 4.3 YaST—security

- 4.4 YaST—conntrackd

- 4.5 YaST—services

- 4.6 YaST—Csync2

- 5.1 Hawk2—cluster configuration

- 5.2 Hawk2—wizard for Apache web server

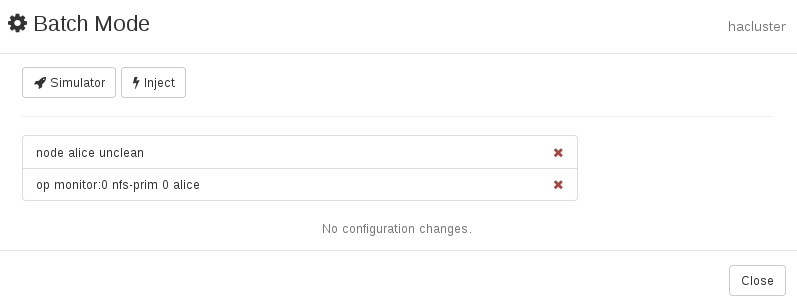

- 5.3 Hawk2 batch mode activated

- 5.4 Hawk2 batch mode—injected events and configuration changes

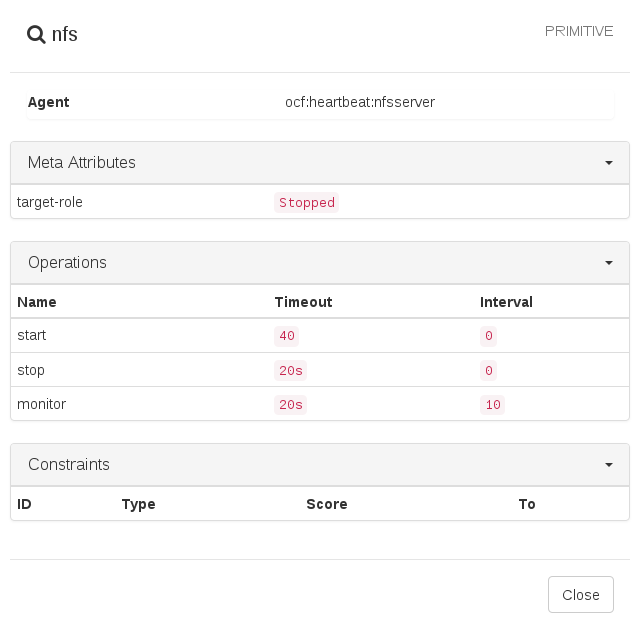

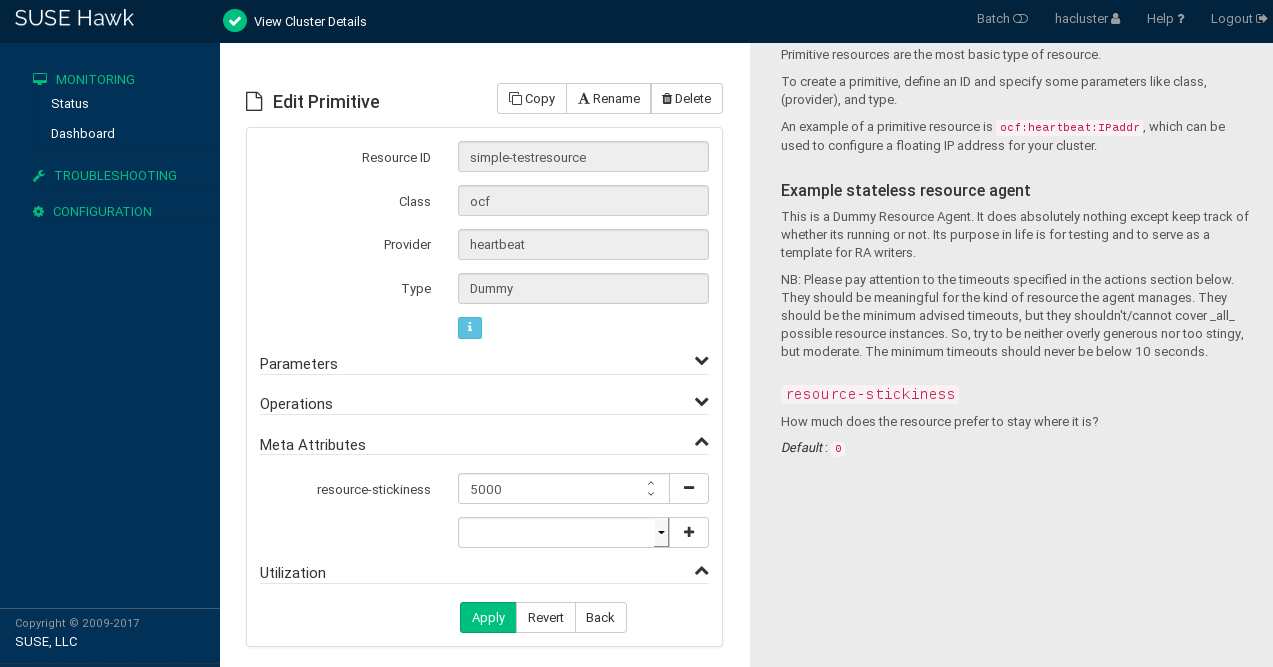

- 6.1 Hawk2—primitive resource

- 6.2 Group resource

- 6.3 Hawk2—resource group

- 6.4 Hawk2—clone resource

- 6.5 Hawk2—STONITH resource

- 6.6 Operation values

- 6.7 Hawk2—resource details

- 7.1 Hawk2—location constraint

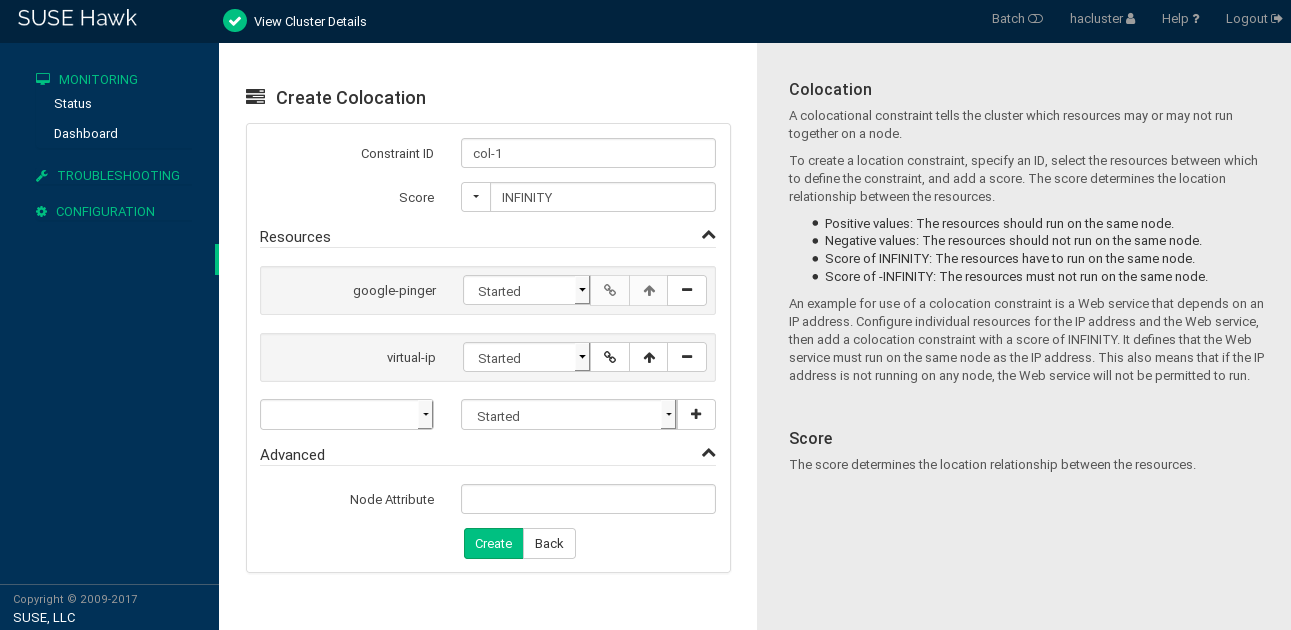

- 7.2 Hawk2—colocation constraint

- 7.3 Hawk2—order constraint

- 7.4 Hawk2—two resource sets in a colocation constraint

- 7.5 Hawk2: meta attributes for resource failover

- 7.6 Hawk2: meta attributes for resource failback

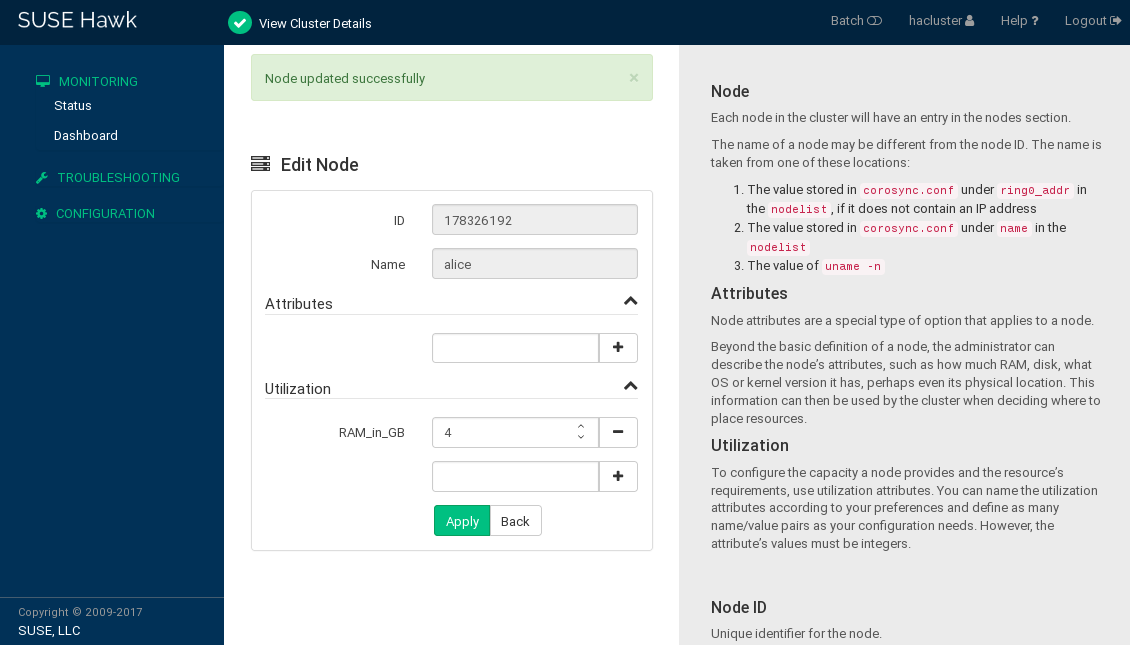

- 7.7 Hawk2: edit node utilization attributes

- 8.1 Hawk2—editing a primitive resource

- 8.2 Hawk2—tag

- 11.1 Hawk2—cluster status

- 11.2 Hawk2 dashboard with one cluster site (amsterdam)

- 11.3 Hawk2 add cluster details

- 11.4 Recent events table

- 11.5 Hawk2—history explorer main view

- 11.6 Hawk2 report details

- 15.1 Hawk2 Create Role

- 17.1 YaST IP load balancing—global parameters

- 17.2 YaST IP load balancing—virtual services

- 21.1 Hawk2 summary screen of OCFS2 CIB changes

- 23.1 Position of DRBD within Linux

- 23.2 Resource configuration

- 23.3 Resource stacking

- 23.4 Showing a good connection by drbdmon

- 23.5 Showing a bad connection by drbdmon

- 24.1 Setup of a shared disk with Cluster LVM

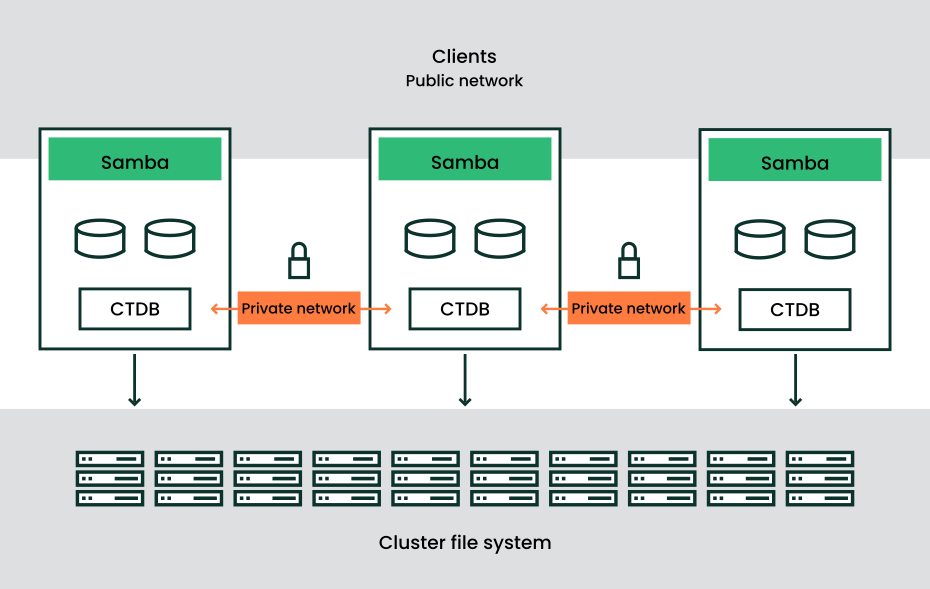

- 26.1 Structure of a CTDB cluster

- 29.1 Overview of supported upgrade paths

- 5.1 Excerpt of Corosync configuration for a two-node cluster

- 5.2 Excerpt of Corosync configuration for an n-node cluster

- 5.3 A simple crmsh shell script

- 6.1 Resource group for a web server

- 7.1 A resource set for location constraints

- 7.2 A chain of colocated resources

- 7.3 A chain of ordered resources

- 7.4 A chain of ordered resources expressed as resource set

- 7.5 Migration threshold—process flow

- 7.6 Creating resource agents for KVM with hv_memory disabled

- 7.7 Creating resource agents for Xen with host_memory disabled

- 9.1 Configuring resources for monitoring plug-ins

- 12.1 Configuration of an IBM RSA lights-out device

- 12.2 Configuration of a UPS fencing device

- 12.3 Configuration of a Kdump device

- 14.1 Status of QDevice

- 14.2 Status of QNetd server

- 15.1 Excerpt of a cluster configuration in XML

- 17.1 Simple ldirectord configuration

- 23.1 Configuration of a three-node stacked DRBD resource

- 27.1 Using an NFS server to store the file backup

- 27.2 Backing up Btrfs subvolumes with tar

- 27.3 Using third-party backup tools like EMC NetWorker

- 27.4 Backing up multipath devices

- 27.5 Booting your system with UEFI

- 27.6 Creating a recovery system with a basic tar backup

- 27.7 Creating a recovery system with a third-party backup

- A.1 Stopped resources

Copyright © 2006–2026 SUSE LLC and contributors. All rights reserved.

Permission is granted to copy, distribute and/or modify this document under the terms of the GNU Free Documentation License, Version 1.2 or (at your option) version 1.3; with the Invariant Section being this copyright notice and license. A copy of the license version 1.2 is included in the section entitled “GNU Free Documentation License”.

For SUSE trademarks, see https://www.suse.com/company/legal/. All third-party trademarks are the property of their respective owners. Trademark symbols (®, ™ etc.) denote trademarks of SUSE and its affiliates. Asterisks (*) denote third-party trademarks.

All information found in this book has been compiled with utmost attention to detail. However, this does not guarantee complete accuracy. Neither SUSE LLC, its affiliates, the authors nor the translators shall be held liable for possible errors or the consequences thereof.

1 Available documentation

- Online documentation

Our documentation is available online at https://documentation.suse.com. Browse or download the documentation in various formats.

Note: Latest updates

The latest updates are usually available in the English-language version of this documentation.

- SUSE Knowledgebase

If you run into an issue, check out the Technical Information Documents (TIDs) that are available online at https://www.suse.com/support/kb/. Search the SUSE Knowledgebase for known solutions driven by customer need.

- Release notes

For release notes, see https://www.suse.com/releasenotes/.

- On your system

For offline use, the release notes are also available under /usr/share/doc/release-notes on your system. The documentation for individual packages is available at /usr/share/doc/packages. Many commands are also described in their manual pages. To view them, run man, followed by a specific command name. If the man command is not installed on your system, install it with sudo zypper install man.
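As a quick illustration, the commands below install man if it is missing and then open a few manual pages that are useful on a cluster node. The page names crm and corosync.conf are assumptions about what is installed on a typical node; if a page is not found, search for it with man -k.

```shell
# Install the man command if it is not available (needs root privileges, here via sudo)
sudo zypper install man

# Open the manual page of the CRM Shell (crmsh)
man crm

# Open the section 5 manual page describing the Corosync configuration file
man 5 corosync.conf

# Search page names and short descriptions for a keyword
man -k corosync
```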

2 Improving the documentation

Your feedback and contributions to this documentation are welcome. The following channels for giving feedback are available:

- Service requests and support

For services and support options available for your product, see https://www.suse.com/support/.

To open a service request, you need a SUSE subscription registered at SUSE Customer Center. Go to https://scc.suse.com/support/requests, log in, and create a new request.

- Bug reports

Report issues with the documentation at https://bugzilla.suse.com/.

To simplify this process, click the icon next to a headline in the HTML version of this document. This preselects the right product and category in Bugzilla and adds a link to the current section. You can start typing your bug report right away.

A Bugzilla account is required.

- Contributions

To contribute to this documentation, click the icon next to a headline in the HTML version of this document. This will take you to the source code on GitHub, where you can open a pull request.

A GitHub account is required.

Note: Only available for English

The icons are only available for the English version of each document. For all other languages, use the icons instead.

For more information about the documentation environment used for this documentation, see the repository's README.

You can also report errors and send feedback concerning the documentation to <doc-team@suse.com>. Include the document title, the product version, and the publication date of the document. Additionally, include the relevant section number and title (or provide the URL) and provide a concise description of the problem.

3 Documentation conventions

The following notices and typographic conventions are used in this document:

- /etc/passwd: Directory names and file names

- PLACEHOLDER: Replace PLACEHOLDER with the actual value

- PATH: An environment variable

- ls, --help: Commands, options and parameters

- user: The name of a user or group

- package_name: The name of a software package

- Alt, Alt–F1: A key to press or a key combination. Keys are shown in uppercase, as on a keyboard.

- Menu items, buttons

- AMD/Intel This paragraph is only relevant for the AMD64/Intel 64 architectures. The arrows mark the beginning and the end of the text block.

- IBM Z, POWER This paragraph is only relevant for the architectures IBM Z and POWER. The arrows mark the beginning and the end of the text block.

- Chapter 1, “Example chapter”: A cross-reference to another chapter in this guide.

- Commands that must be run with root privileges. You can also prefix these commands with the sudo command to run them as a non-privileged user: `# command` and `> sudo command`

- Commands that can be run by non-privileged users: `> command`

- Commands can be split into two or multiple lines by a backslash character (\) at the end of a line. The backslash informs the shell that the command invocation will continue after the end of the line: `> echo a b \` followed by `c d` on the next line

- A code block that shows both the command (preceded by a prompt) and the respective output returned by the shell: `> command` followed by `output`

- Commands executed in the interactive CRM Shell: `crm(live)#`

Notices

Warning: Warning notice

Vital information you must know before proceeding. Warns you about security issues, potential loss of data, damage to hardware, or physical hazards.

Important: Important notice

Important information you should know before proceeding.

Note: Note notice

Additional information, for example about differences in software versions.

Tip: Tip notice

Helpful information, like a guideline or a piece of practical advice.

Compact Notices

Additional information, for example, about differences in software versions.

Helpful information, like a guideline or a piece of practical advice.

For an overview of naming conventions for cluster nodes and names, resources and constraints, see Appendix B, Naming conventions.

4 Support

Find the support statement for SUSE Linux Enterprise High Availability and general information about technology previews below. For details about the product lifecycle, see https://www.suse.com/lifecycle.

If you are entitled to support, find details on how to collect information for a support ticket at https://documentation.suse.com/sles-15/html/SLES-all/cha-adm-support.html.

4.1 Support statement for SUSE Linux Enterprise High Availability

To receive support, you need an appropriate subscription with SUSE. To view the specific support offers available to you, go to https://www.suse.com/support/ and select your product.

The support levels are defined as follows:

- L1

Problem determination, which means technical support designed to provide compatibility information, usage support, ongoing maintenance, information gathering and basic troubleshooting using available documentation.

- L2

Problem isolation, which means technical support designed to analyze data, reproduce customer problems, isolate a problem area and provide a resolution for problems not resolved by Level 1 or prepare for Level 3.

- L3

Problem resolution, which means technical support designed to resolve problems by engaging engineering to fix product defects that have been identified by Level 2 Support.

For contracted customers and partners, SUSE Linux Enterprise High Availability is delivered with L3 support for all packages, except for the following:

- Technology previews.

- Sound, graphics, fonts, and artwork.

- Packages that require an additional customer contract.

- Some packages shipped as part of the module Workstation Extension are L2-supported only.

- Packages with names ending in -devel (containing header files and similar developer resources) will only be supported together with their main packages.

SUSE will only support the usage of original packages. That is, packages that are unchanged and not recompiled.

4.2 Technology previews

Technology previews are packages, stacks, or features delivered by SUSE to provide glimpses into upcoming innovations. Technology previews are included for your convenience to give you a chance to test new technologies within your environment. We would appreciate your feedback. If you test a technology preview, please contact your SUSE representative and let them know about your experience and use cases. Your input is helpful for future development.

Technology previews have the following limitations:

- Technology previews are still in development. Therefore, they may be functionally incomplete, unstable, or otherwise not suitable for production use.

- Technology previews are not supported.

- Technology previews may only be available for specific hardware architectures.

- Details and functionality of technology previews are subject to change. As a result, upgrading to subsequent releases of a technology preview may be impossible and require a fresh installation.

- SUSE may discover that a preview does not meet customer or market needs, or does not comply with enterprise standards. Technology previews can be removed from a product at any time. SUSE does not commit to providing a supported version of such technologies in the future.

For an overview of technology previews shipped with your product, see the release notes at https://www.suse.com/releasenotes.

Part I Installation and setup

- 1 Product overview

SUSE® Linux Enterprise High Availability is an integrated suite of open source clustering technologies. It enables you to implement highly available physical and virtual Linux clusters, and to eliminate single points of failure. It ensures the high availability and manageability of critical network resources including data, applications, and services. Thus, it helps you maintain business continuity, protect data integrity, and reduce unplanned downtime for your mission-critical Linux workloads.

It ships with essential monitoring, messaging, and cluster resource management functionality, supporting failover, failback, and migration (load balancing) of individually managed cluster resources.

This chapter introduces the main product features and benefits of SUSE Linux Enterprise High Availability. Inside you will find several example clusters and learn about the components making up a cluster. The last section provides an overview of the architecture, describing the individual architecture layers and processes within the cluster.

For explanations of some common terms used in the context of High Availability clusters, refer to Glossary.

- 2 System requirements and recommendations

The following section informs you about system requirements and prerequisites for SUSE® Linux Enterprise High Availability. It also includes recommendations for cluster setup.

- 3 Installing SUSE Linux Enterprise High Availability

If you are setting up a High Availability cluster with SUSE® Linux Enterprise High Availability for the first time, the easiest way is to start with a basic two-node cluster. You can also use the two-node cluster to run some tests. Afterward, you can add more nodes by cloning existing cluster nodes with AutoYaST. The cloned nodes will have the same packages installed and the same system configuration as the original ones.

If you want to upgrade an existing cluster that runs an older version of SUSE Linux Enterprise High Availability, refer to Chapter 29, Upgrading your cluster and updating software packages.

- 4 Using the YaST cluster module

The YaST cluster module allows you to set up a cluster manually (from scratch) or to modify options for an existing cluster.

However, if you prefer an automated approach for setting up a cluster, refer to Article “Installation and Setup Quick Start”. It describes how to install the needed packages and leads you to a basic two-node cluster, which is set up with the bootstrap scripts provided by the CRM Shell.

Use the same setup method for all nodes in the cluster. Mixing the setup methods is not recommended.

1 Product overview


1.1 Availability as a module or extension

High Availability is available as a module or extension for several products. For details, see https://documentation.suse.com/sles/15/html/SLES-all/article-modules.html#art-modules-high-availability.

1.2 Key features

SUSE® Linux Enterprise High Availability helps you ensure and manage the availability of your network resources. The following sections highlight some key features:

1.2.1 Wide range of clustering scenarios

SUSE Linux Enterprise High Availability supports the following scenarios:

- Active/active configurations

- Active/passive configurations: N+1, N+M, N to 1, N to M

- Hybrid physical and virtual clusters, allowing virtual servers to be clustered with physical servers. This improves service availability and resource usage.

- Local clusters

- Metro clusters (“stretched” local clusters)

- Geo clusters (geographically dispersed clusters)

All nodes belonging to a cluster should have the same processor platform: x86, IBM Z, or POWER. Clusters of mixed architectures are not supported.

Your cluster can contain up to 32 Linux servers. Using pacemaker_remote, the cluster can be extended to include additional Linux servers beyond this limit. Any server in the cluster can restart resources (applications, services, IP addresses, and file systems) from a failed server in the cluster.
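To illustrate how such an additional server is attached, the crmsh command below defines a remote node with the ocf:pacemaker:remote resource agent. This is a minimal sketch: remote1 and 192.168.1.50 are hypothetical values, and it assumes that pacemaker_remote is already installed and running on that server and that it uses the same /etc/pacemaker/authkey as the cluster nodes.

```shell
# Define a Pacemaker remote node (hypothetical name "remote1" and address).
# The cluster connects to pacemaker_remoted on that server, by default on TCP port 3121.
# Run as root on one of the full cluster nodes.
crm configure primitive remote1 ocf:pacemaker:remote \
    params server=192.168.1.50 \
    op monitor interval=30s
```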

1.2.2 Flexibility

SUSE Linux Enterprise High Availability ships with the Corosync messaging and membership layer and the Pacemaker cluster resource manager. Using Pacemaker, administrators can continually monitor the health and status of their resources and manage dependencies. They can automatically stop and start services based on highly configurable rules and policies. SUSE Linux Enterprise High Availability allows you to tailor a cluster to the specific applications and hardware infrastructure that fit your organization. Time-dependent configuration enables services to automatically migrate back to repaired nodes at specified times.

1.2.3 Storage and data replication

With SUSE Linux Enterprise High Availability you can dynamically assign and reassign server storage as needed. It supports Fibre Channel or iSCSI storage area networks (SANs). Shared disk systems are also supported, but they are not a requirement. SUSE Linux Enterprise High Availability also comes with a cluster-aware file system (OCFS2) and the cluster Logical Volume Manager (Cluster LVM). For replication of your data, use DRBD* to mirror the data of a High Availability service from the active node of a cluster to its standby node. In addition, SUSE Linux Enterprise High Availability supports CTDB (Cluster Trivial Database), a technology for Samba clustering.

1.2.4 Support for virtualized environments

SUSE Linux Enterprise High Availability supports the mixed clustering of both physical and virtual Linux servers. SUSE Linux Enterprise Server 15 SP6 ships with Xen, an open source virtualization hypervisor, and with KVM (Kernel-based Virtual Machine). KVM is virtualization software for Linux that is based on hardware virtualization extensions. The cluster resource manager in SUSE Linux Enterprise High Availability can recognize, monitor, and manage services running within virtual servers and services running in physical servers. Guest systems can be managed as services by the cluster.

Use caution when performing live migration of nodes in an active cluster. The cluster stack might not tolerate an operating system freeze caused by the live migration process, which could lead to the node being fenced.

We recommend either of the following actions to help avoid node fencing during live migration:

- Increase the Corosync token timeout and the SBD watchdog timeout, along with any other related settings. The appropriate values depend on your specific setup. For more information, see Section 13.5, “Calculation of timeouts”.

- Before performing live migration, stop the cluster services on either the node or the whole cluster. For more information, see Section 28.2, “Different options for maintenance tasks”.

You must thoroughly test this setup before attempting live migration in a production environment.
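The commands below sketch the second option (stopping the cluster services around a live migration) and show how to read the token timeout that Corosync is currently using. This is a minimal sketch assuming crmsh and Corosync 3 as shipped with the product, run as root; the --all option assumes a crmsh version that supports it.

```shell
# Show the token timeout (in milliseconds) that Corosync uses at runtime
corosync-cmapctl -g runtime.config.totem.token

# Option A: stop the cluster services only on the node that is live-migrated
# (run on that node)
crm cluster stop
# ... live-migrate this node here, then rejoin the cluster:
crm cluster start

# Option B: stop the cluster services on all nodes at once
crm cluster stop --all
# ... live-migrate here, then start the services on all nodes again:
crm cluster start --all
```

Stopping the cluster services on a single node normally causes Pacemaker to move that node's resources to other nodes first, unless they are in maintenance mode; Section 28.2 compares the options.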

1.2.5 Support of local, metro, and Geo clusters

SUSE Linux Enterprise High Availability supports different geographical scenarios, including geographically dispersed clusters (Geo clusters).

- Local clusters

A single cluster in one location (for example, all nodes are located in one data center). The cluster uses multicast or unicast for communication between the nodes and manages failover internally. Network latency is negligible. Storage is typically accessed synchronously by all nodes.

- Metro clusters

A single cluster that can stretch over multiple buildings or data centers, with all sites connected by Fibre Channel. The cluster uses multicast or unicast for communication between the nodes and manages failover internally. Network latency is usually low (less than 5 ms for distances of approximately 20 miles). Storage is frequently replicated (mirroring or synchronous replication).

- Geo clusters (multi-site clusters)

Multiple, geographically dispersed sites with a local cluster each. The sites communicate via IP. Failover across the sites is coordinated by a higher-level entity. Geo clusters need to cope with limited network bandwidth and high latency. Storage is replicated asynchronously.

Note: Geo clustering and SAP workloads

Currently, Geo clusters support neither SAP HANA system replication nor SAP S/4HANA and SAP NetWeaver enqueue replication setups.

The greater the geographical distance between individual cluster nodes, the more factors may potentially disturb the high availability of services the cluster provides. Network latency, limited bandwidth and access to storage are the main challenges for long-distance clusters.

1.2.6 Resource agents

SUSE Linux Enterprise High Availability includes many resource agents to manage resources such as Apache, IPv4, IPv6 and many more. It also ships with resource agents for popular third-party applications such as IBM WebSphere Application Server. For an overview of Open Cluster Framework (OCF) resource agents included with your product, use the crm ra command as described in Section 5.5.4, “Displaying information about OCF resource agents”.
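For example, the following crm ra invocations list the available agent classes, list the OCF agents of the heartbeat provider, and show the parameters of a single agent. IPaddr2 is only an example agent; any agent name from the list works.

```shell
# List the resource agent classes known to the cluster (for example ocf, lsb, systemd, stonith)
crm ra classes

# List the OCF resource agents supplied by the "heartbeat" provider
crm ra list ocf heartbeat

# Show parameters, actions, and default operation timeouts of one agent
crm ra info ocf:heartbeat:IPaddr2
```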

1.2.7 User-friendly administration tools

SUSE Linux Enterprise High Availability ships with a set of powerful tools. Use them for basic installation and setup of your cluster and for effective configuration and administration:

- YaST

A graphical user interface for general system installation and administration. Use it to install SUSE Linux Enterprise High Availability on top of SUSE Linux Enterprise Server as described in the Installation and Setup Quick Start. YaST also provides the following modules in the High Availability category to help configure your cluster or individual components:

Cluster: Basic cluster setup. For details, refer to Chapter 4, Using the YaST cluster module.

DRBD: Configuration of a Distributed Replicated Block Device.

IP Load Balancing: Configuration of load balancing with Linux Virtual Server or HAProxy. For details, refer to Chapter 17, Load balancing.

- Hawk2

A user-friendly Web-based interface with which you can monitor and administer your High Availability clusters from Linux or non-Linux machines alike. Hawk2 can be accessed from any machine inside or outside of the cluster by using a (graphical) Web browser. Therefore, it is the ideal solution even if the system on which you are working only provides a minimal graphical user interface. For details, see Section 5.4, “Introduction to Hawk2”.

- crm Shell

A powerful unified command line interface to configure resources and execute all monitoring or administration tasks. For details, refer to Section 5.5, “Introduction to crmsh”.
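A few read-only commands illustrate the two ways of working with crmsh: running single commands from the system shell, or working inside the interactive shell whose prompt is crm(live)#. This sketch assumes a running cluster and root privileges; none of the commands change the configuration.

```shell
# One-shot overview of nodes and resources
crm status

# Show the current cluster configuration (CIB) in crmsh syntax
crm configure show

# Start the interactive shell; at the crm(live)# prompt, type "help" or "quit"
crm
```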

1.3 Benefits

SUSE Linux Enterprise High Availability allows you to configure up to 32 Linux servers into a high-availability cluster (HA cluster). Resources can be dynamically switched or moved to any node in the cluster. Resources can be configured to automatically migrate if a node fails, or they can be moved manually to troubleshoot hardware or balance the workload.

SUSE Linux Enterprise High Availability provides high availability from commodity components. Lower costs are obtained through the consolidation of applications and operations onto a cluster. SUSE Linux Enterprise High Availability also allows you to centrally manage the complete cluster. You can adjust resources to meet changing workload requirements (thus, manually “load balance” the cluster). Allowing clusters of more than two nodes also provides savings by allowing several nodes to share a “hot spare”.

An equally important benefit is the potential reduction of unplanned service outages and planned outages for software and hardware maintenance and upgrades.

Reasons to implement a cluster include:

- Increased availability

- Improved performance

- Low cost of operation

- Scalability

- Disaster recovery

- Data protection

- Server consolidation

- Storage consolidation

Shared disk fault tolerance can be obtained by implementing RAID on the shared disk subsystem.

The following scenario illustrates some benefits SUSE Linux Enterprise High Availability can provide.

Example cluster scenario

Suppose you have configured a three-node cluster, with a Web server installed on each of the three nodes in the cluster. Each of the nodes in the cluster hosts two Web sites. All the data, graphics, and Web page content for each Web site are stored on a shared disk subsystem connected to each of the nodes in the cluster. The following figure depicts how this setup might look.

During normal cluster operation, each node is in constant communication with the other nodes in the cluster and performs periodic polling of all registered resources to detect failure.

Suppose Web Server 1 experiences hardware or software problems and the users depending on Web Server 1 for Internet access, e-mail, and information lose their connections. The following figure shows how resources are moved when Web Server 1 fails.

Web Site A moves to Web Server 2 and Web Site B moves to Web Server 3. IP addresses and certificates also move to Web Server 2 and Web Server 3.

When you configured the cluster, you decided where the Web sites hosted on each Web server would go should a failure occur. In the previous example, you configured Web Site A to move to Web Server 2 and Web Site B to move to Web Server 3. This way, the workload formerly handled by Web Server 1 continues to be available and is evenly distributed between any surviving cluster members.
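In crmsh, a failover plan like this can be expressed with location constraints whose scores rank the nodes. The following is a minimal sketch with hypothetical names: it assumes that a resource site-a for Web Site A already exists and that the nodes are called webserver1, webserver2, and webserver3.

```shell
# Prefer Web Server 1 for Web Site A (score 100) ...
crm configure location loc-site-a-primary site-a 100: webserver1

# ... and Web Server 2 as the next choice (score 50). Web Server 3 keeps the
# default score of 0, so in a symmetric cluster without other constraints it is
# used only if both preferred nodes are unavailable.
crm configure location loc-site-a-secondary site-a 50: webserver2
```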

When Web Server 1 failed, the High Availability software did the following:

- Detected a failure and verified with STONITH that Web Server 1 was really dead. STONITH is an acronym for “Shoot The Other Node In The Head”. It is a means of bringing down misbehaving nodes to prevent them from causing trouble in the cluster.

- Remounted the shared data directories that were formerly mounted on Web Server 1 on Web Server 2 and Web Server 3.

- Restarted applications that were running on Web Server 1 on Web Server 2 and Web Server 3.

- Transferred IP addresses to Web Server 2 and Web Server 3.
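The recovery steps above (remounting shared data, restarting applications, and moving IP addresses) are commonly modeled as a resource group, so that the members run on the same node and start in a fixed order. The sketch below is a hypothetical example for Web Site A: the device path, mount point, IP address, and Apache configuration file are placeholders, not values from this guide.

```shell
# Shared data directory of Web Site A (placeholder device and mount point)
crm configure primitive fs-site-a ocf:heartbeat:Filesystem \
    params device=/dev/disk/by-label/site-a directory=/srv/www/site-a fstype=xfs \
    op monitor interval=20s timeout=40s

# Virtual IP address that clients use to reach Web Site A
crm configure primitive ip-site-a ocf:heartbeat:IPaddr2 \
    params ip=192.168.1.100 cidr_netmask=24 \
    op monitor interval=10s timeout=20s

# Apache instance serving Web Site A
crm configure primitive web-site-a ocf:heartbeat:apache \
    params configfile=/etc/apache2/site-a.conf \
    op monitor interval=30s timeout=20s

# Group members run on the same node, start in the listed order, and stop in reverse order
crm configure group site-a fs-site-a ip-site-a web-site-a
```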

In this example, the failover process happened quickly and users regained access to Web site information within seconds, usually without needing to log in again.

Now suppose the problems with Web Server 1 are resolved, and Web Server 1 is returned to a normal operating state. Web Site A and Web Site B can either automatically fail back (move back) to Web Server 1, or they can stay where they are. This depends on how you configured the resources for them. Migrating the services back to Web Server 1 incurs some downtime. Therefore, SUSE Linux Enterprise High Availability also allows you to defer the migration until a period when it causes little or no service interruption. Both alternatives have advantages and disadvantages.
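Whether resources fail back is mainly controlled by resource stickiness, which expresses how strongly a resource prefers to stay on its current node. The sketch below assumes crmsh and a hypothetical resource named site-a. As a rule of thumb, a stickiness larger than the difference between the location scores of the preferred node and the current node keeps the resource where it is, while a stickiness of 0 lets it move back as soon as the preferred node returns.

```shell
# Cluster-wide default for all resources: prefer to stay on the current node
crm configure rsc_defaults resource-stickiness=100

# Per-resource override for the hypothetical resource "site-a"
crm resource meta site-a set resource-stickiness 200
```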

SUSE Linux Enterprise High Availability also provides resource migration capabilities. You can move applications, Web sites, etc. to other servers in your cluster as required for system management.

For example, you could have manually moved Web Site A or Web Site B from Web Server 1 to either of the other servers in the cluster. Use cases for this are upgrading or performing scheduled maintenance on Web Server 1, or increasing performance or accessibility of the Web sites.
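A manual move like this can be done with crmsh as sketched below, again using the hypothetical resource site-a and node webserver2. crm resource move works by adding a location constraint, so remove that constraint with crm resource clear when the maintenance is done; otherwise it keeps influencing placement.

```shell
# Move Web Site A to Web Server 2, for example before maintenance on Web Server 1
crm resource move site-a webserver2

# After the maintenance, remove the constraint created by "move" so that the
# normal location scores and stickiness decide placement again
crm resource clear site-a
```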

1.4 Cluster configurations: storage #

Cluster configurations with SUSE Linux Enterprise High Availability might or might not include a shared disk subsystem. The shared disk subsystem can be connected via high-speed Fibre Channel cards, cables, and switches, or it can be configured to use iSCSI. If a node fails, another designated node in the cluster automatically mounts the shared disk directories that were previously mounted on the failed node. This gives network users continuous access to the directories on the shared disk subsystem.

When using a shared disk subsystem with LVM, that subsystem must be connected to all servers in the cluster from which it needs to be accessed.

Typical resources might include data, applications, and services. The following figures show how a typical Fibre Channel cluster configuration might look. The green lines depict connections to an Ethernet power switch. Such a device can be controlled over a network and can reboot a node when a ping request fails.

Although Fibre Channel provides the best performance, you can also configure your cluster to use iSCSI. iSCSI is an alternative to Fibre Channel that can be used to create a low-cost Storage Area Network (SAN). The following figure shows how a typical iSCSI cluster configuration might look.

Although most clusters include a shared disk subsystem, it is also possible to create a cluster without a shared disk subsystem. The following figure shows how a cluster without a shared disk subsystem might look.

1.5 Architecture #

This section provides a brief overview of SUSE Linux Enterprise High Availability architecture. It identifies and provides information on the architectural components, and describes how those components interoperate.

1.5.1 Architecture layers #

SUSE Linux Enterprise High Availability has a layered architecture. Figure 1.6, “Architecture” illustrates the different layers and their associated components.

1.5.1.1 Membership and messaging layer (Corosync) #

This component provides reliable messaging, membership, and quorum information about the cluster. This is handled by the Corosync cluster engine, a group communication system.
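You can look at the membership and quorum information that Corosync maintains from any running node. The following is a minimal sketch. It assumes a running cluster and the command-line tools that ship with the corosync package:

```shell
# Show quorum status: expected votes, total votes, and whether this
# partition is quorate
corosync-quorumtool -s

# Dump Corosync's runtime key/value store and keep the membership entries
corosync-cmapctl | grep members
```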

1.5.1.2 Cluster resource manager (Pacemaker) #

Pacemaker as cluster resource manager is the “brain” which reacts to events occurring in the cluster. It is implemented as pacemaker-controld, the cluster controller, which coordinates all actions. Events can be nodes that join or leave the cluster, failure of resources, or scheduled activities such as maintenance.

- Local resource manager

The local resource manager is located between the Pacemaker layer and the resources layer on each node. It is implemented as the pacemaker-execd daemon. Through this daemon, Pacemaker can start, stop, and monitor resources.

- Cluster Information Database (CIB)

On every node, Pacemaker maintains the cluster information database (CIB). It is an XML representation of the cluster configuration (including cluster options, nodes, resources, constraints, and their relationships to each other). The CIB also reflects the current cluster status. Each cluster node contains a CIB replica, which is synchronized across the whole cluster. The pacemaker-based daemon takes care of reading and writing cluster configuration and status.

- Designated Coordinator (DC)

The DC is elected from all nodes in the cluster. This happens if there is no DC yet or if the current DC leaves the cluster for any reason. The DC is the only entity in the cluster that can decide that a cluster-wide change needs to be performed, such as fencing a node or moving resources around. All other nodes get their configuration and resource allocation information from the current DC.

- Policy Engine

The policy engine runs on every node, but the one on the DC is the active one. The engine is implemented as the pacemaker-schedulerd daemon. When a cluster transition is needed, pacemaker-schedulerd calculates the expected next state of the cluster based on the current state and configuration. It determines what actions need to be scheduled to achieve the next state.
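Any node can tell you which node currently holds the DC role. The following is a minimal sketch. It assumes a running cluster and the standard Pacemaker command-line tools:

```shell
# Ask Pacemaker which node is the Designated Coordinator
crmadmin -D

# Alternatively, take a one-shot status snapshot; the "Current DC" line
# names the DC node and whether the partition has quorum
crm_mon -1 | grep "Current DC"
```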

1.5.1.3 Resources and resource agents #

In a High Availability cluster, the services that need to be highly available are called resources. Resource agents (RAs) are scripts that start, stop, and monitor cluster resources.
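You can inspect the installed resource agents and the parameters they accept with crmsh. The following sketch assumes a node with crmsh installed. ocf:heartbeat:IPaddr2 appears only as a familiar example:

```shell
# List the resource agent classes known to this node
crm ra classes

# List the OCF agents from the "heartbeat" provider
crm ra list ocf heartbeat

# Show the parameters and default operations of one agent
crm ra info ocf:heartbeat:IPaddr2
```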

1.5.2 Process flow #

The pacemakerd daemon launches and monitors all other related daemons. The daemon that coordinates all actions, pacemaker-controld, has an instance on each cluster node. Pacemaker centralizes all cluster decision-making by electing one of those instances as a primary. Should the elected pacemaker-controld daemon fail, a new primary is established.

Many actions performed in the cluster will cause a cluster-wide change. These actions can include things like adding or removing a cluster resource or changing resource constraints. It is important to understand what happens in the cluster when you perform such an action.

For example, suppose you want to add a cluster IP address resource. To do this, you can use the CRM Shell or the Web interface to modify the CIB. You do not need to perform the actions on the DC. You can use either tool on any node in the cluster, and the changes are relayed to the DC. The DC then replicates the CIB change to all cluster nodes.
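With the CRM Shell, the IP address example could look like the following sketch. The resource ID admin-ip, the address, and the monitor timings are hypothetical values. The command can be run on any node, and the change is relayed to the DC as described above:

```shell
# Hypothetical resource ID and address; adapt them to your network
crm configure primitive admin-ip ocf:heartbeat:IPaddr2 \
    params ip=192.168.1.100 \
    op monitor interval=10s timeout=20s

# The change is now in the CIB; review it and the resulting status
crm configure show admin-ip
crm status
```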

Based on the information in the CIB, pacemaker-schedulerd then computes the ideal state of the cluster and how it should be achieved. It feeds a list of instructions to the DC. The DC sends commands via the messaging/infrastructure layer, which are received by the pacemaker-controld peers on other nodes. Each of them uses its local resource agent executor (implemented as pacemaker-execd) to perform resource modifications. pacemaker-execd is not cluster-aware and interacts directly with resource agents.

All peer nodes report the results of their operations back to the DC. After the DC concludes that all necessary operations are successfully performed in the cluster, the cluster will go back to the idle state and wait for further events. If any operation was not carried out as planned, the pacemaker-schedulerd is invoked again with the new information recorded in the CIB.

In some cases, it might be necessary to power off nodes to protect shared data or complete resource recovery. In a Pacemaker cluster, the implementation of node level fencing is STONITH. For this, Pacemaker comes with a fencing subsystem, pacemaker-fenced.

STONITH devices must be configured as cluster resources (that use specific fencing agents), because this allows monitoring of the fencing devices. When clients detect a failure, they send a request to pacemaker-fenced, which then executes the fencing agent to bring down the node.
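A fencing device is configured like any other cluster resource, but with an agent from the stonith class. The following is a minimal sketch. It assumes SBD has already been set up, as described in Chapter 13, and stonith-sbd is a hypothetical resource ID:

```shell
# List the fencing agents installed on this node
crm ra list stonith

# Configure SBD-based fencing as a cluster resource
# (requires a working SBD setup)
crm configure primitive stonith-sbd stonith:external/sbd
```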

2 System requirements and recommendations #

The following section informs you about system requirements and prerequisites for SUSE® Linux Enterprise High Availability. It also includes recommendations for cluster setup.

2.1 Hardware requirements #

The following list specifies hardware requirements for a cluster based on SUSE® Linux Enterprise High Availability. These requirements represent the minimum hardware configuration. Additional hardware might be necessary, depending on how you intend to use your cluster.

- Servers

1 to 32 Linux servers with software as specified in Section 2.2, “Software requirements”.

The servers can be bare metal or virtual machines. They do not require identical hardware (memory, disk space, etc.), but they must have the same architecture. Cross-platform clusters are not supported.

Using pacemaker_remote, the cluster can be extended to include additional Linux servers beyond the 32-node limit.

- Communication channels

At least two TCP/IP communication media per cluster node. The network equipment must support the communication means you want to use for cluster communication: multicast or unicast. The communication media should support a data rate of 100 Mbit/s or higher. For a supported cluster setup, two or more redundant communication paths are required. This can be done via:

Network Device Bonding (preferred)

A second communication channel in Corosync

For details, refer to Chapter 16, Network device bonding and Procedure 4.3, “Defining a redundant communication channel”, respectively.

- Node fencing/STONITH

To avoid a “split-brain” scenario, clusters need a node fencing mechanism. In a split-brain scenario, cluster nodes are divided into two or more groups that do not know about each other (because of a hardware or software failure or because of a cut network connection). A fencing mechanism isolates the node in question (usually by resetting or powering off the node). This is also called STONITH (“Shoot the other node in the head”). A node fencing mechanism can be either a physical device (a power switch) or a mechanism like SBD (STONITH by disk) in combination with a watchdog. Using SBD requires shared storage.

Unless SBD is used, each node in the High Availability cluster must have at least one STONITH device. We strongly recommend multiple STONITH devices per node.

Important: No Support Without STONITH

You must have a node fencing mechanism for your cluster.

The global cluster options stonith-enabled and startup-fencing must be set to true. When you change them, you lose support.
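You can check both options from the command line. The following is a minimal sketch, assuming a running cluster. If an option has never been set explicitly in the CIB, the query may report that it was not found. In that case Pacemaker applies its built-in default, which is true for both options:

```shell
# Query the two global cluster options
crm_attribute --type crm_config --name stonith-enabled --query
crm_attribute --type crm_config --name startup-fencing --query

# Set both explicitly to true
crm configure property stonith-enabled=true startup-fencing=true
```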

2.2 Software requirements #

All nodes need at least the following modules and extensions:

Basesystem Module 15 SP6

Server Applications Module 15 SP6

SUSE Linux Enterprise High Availability 15 SP6

Depending on the system role you select during installation, the following software patterns are installed by default:

- HA Node system role

High Availability (sles_ha)

Enhanced Base System (enhanced_base)

- HA GEO Node system role

Geo Clustering for High Availability (ha_geo)

Enhanced Base System (enhanced_base)

An installation via those system roles results in a minimal installation only. You might need to add more packages manually, if required.

For machines that originally had another system role assigned, you need to manually install the sles_ha or ha_geo patterns and any further packages that you need.

2.3 Storage requirements #

Some services require shared storage. If using an external NFS share, it must be reliably accessible from all cluster nodes via redundant communication paths.

To make data highly available, a shared disk system (Storage Area Network, or SAN) is recommended for your cluster. If a shared disk subsystem is used, ensure the following:

The shared disk system is properly set up and functional according to the manufacturer’s instructions.

The disks contained in the shared disk system should be configured to use mirroring or RAID to add fault tolerance to the shared disk system.

If you are using iSCSI for shared disk system access, ensure that you have properly configured iSCSI initiators and targets.

When using DRBD* to implement a mirroring RAID system that distributes data across two machines, make sure to only access the device provided by DRBD—never the backing device. To make use of the redundancy it is possible to use the same NICs as the rest of the cluster.

When using SBD as STONITH mechanism, additional requirements apply for the shared storage. For details, see Section 13.3, “Requirements and restrictions”.

2.4 Other requirements and recommendations #

For a supported and useful High Availability setup, consider the following recommendations:

- Number of cluster nodes

For clusters with more than two nodes, it is strongly recommended to use an odd number of cluster nodes. This way, the cluster cannot split into two partitions of equal size, neither of which would have quorum. For more information about quorum, see Section 5.2, “Quorum determination”. A regular cluster can contain up to 32 nodes. With the pacemaker_remote service, High Availability clusters can be extended to include additional nodes beyond this limit. See Pacemaker Remote Quick Start for more details.

- Time synchronization

Cluster nodes must synchronize to an NTP server outside the cluster. Since SUSE Linux Enterprise High Availability 15, chrony is the default implementation of NTP. For more information, see the Administration Guide for SUSE Linux Enterprise Server 15 SP6.

The cluster might not work properly if the nodes are not synchronized, or even if they are synchronized but have different timezones configured. In addition, log files and cluster reports are very hard to analyze without synchronization. If you use the bootstrap scripts, you will be warned if NTP is not configured yet.

- Network Interface Card (NIC) names

Must be identical on all nodes.

- Host name and IP address

Use static IP addresses.

Only the primary IP address is supported.

List all cluster nodes in the /etc/hosts file with their fully qualified host name and short host name. It is essential that members of the cluster can find each other by name. If the names are not available, internal cluster communication will fail.

For details on how Pacemaker gets the node names, see also https://clusterlabs.org/projects/pacemaker/doc/2.1/Pacemaker_Explained/html/nodes.html#where-pacemaker-gets-the-node-name.

- SSH

All cluster nodes must be able to access each other via SSH. Tools like crm report (for troubleshooting) and Hawk2's require passwordless SSH access between the nodes, otherwise they can only collect data from the current node.

If you set up the cluster with the bootstrap scripts, the SSH keys are automatically created and copied. If you set up the cluster with the YaST cluster module, you must configure the SSH keys yourself.

Note: Regulatory requirements

By default, the cluster performs operations as the root user. If passwordless root SSH access does not comply with regulatory requirements, you can set up the cluster as a user with sudo privileges instead. This user can run cluster operations, including crm report.

Additionally, if you cannot store SSH keys directly on the nodes, you can use local SSH keys instead via SSH agent forwarding, as described in Section 5.5.1, “Logging in”.

For even stricter security policies, you can set up a non-root user with only limited sudo privileges specifically for crm report, as described in Section D1, “Configuring limited sudo privileges for a non-root user”.

For the there is currently no alternative for passwordless login.
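The /etc/hosts requirement under "Host name and IP address" can be checked before you set up the cluster. The following is a minimal sketch, not a SUSE tool. The function name check_hosts, the node names, and the domain are hypothetical. The function only verifies that each node appears on one non-comment line of a hosts file together with its fully qualified name:

```shell
#!/usr/bin/env bash
# check_hosts FILE DOMAIN NODE...
# Hypothetical helper: succeeds only if every NODE appears on a single
# non-comment line of FILE together with "NODE.DOMAIN".
check_hosts() {
    local hosts_file=$1 domain=$2
    shift 2
    local node rc=0
    for node in "$@"; do
        if ! awk -v fqdn="$node.$domain" -v short="$node" '
            /^[[:space:]]*#/ { next }            # skip comment lines
            {
                f = 0; s = 0
                for (i = 2; i <= NF; i++) {      # field 1 is the IP address
                    if ($i == fqdn)  f = 1
                    if ($i == short) s = 1
                }
                if (f && s) found = 1
            }
            END { exit !found }' "$hosts_file"; then
            echo "missing or incomplete entry: $node" >&2
            rc=1
        fi
    done
    return "$rc"
}

# Example (hypothetical names): check_hosts /etc/hosts example.com alice bob
```

Run the check on every node, because each node reads its own /etc/hosts file.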

3 Installing SUSE Linux Enterprise High Availability #

If you are setting up a High Availability cluster with SUSE® Linux Enterprise High Availability for the first time, the easiest way is to start with a basic two-node cluster. You can also use the two-node cluster to run some tests. Afterward, you can add more nodes by cloning existing cluster nodes with AutoYaST. The cloned nodes will have the same packages installed and the same system configuration as the original ones.

If you want to upgrade an existing cluster that runs an older version of SUSE Linux Enterprise High Availability, refer to Chapter 29, Upgrading your cluster and updating software packages.

3.1 Manual installation #

For the manual installation of the packages for High Availability refer to Article “Installation and Setup Quick Start”. It leads you through the setup of a basic two-node cluster.

3.2 Mass installation and deployment with AutoYaST #

After you have installed and set up a two-node cluster, you can extend the cluster by cloning existing nodes with AutoYaST and adding the clones to the cluster.

AutoYaST uses profiles that contain installation and configuration data. A profile tells AutoYaST what to install and how to configure the installed system to get a ready-to-use system in the end. This profile can then be used for mass deployment in different ways (for example, to clone existing cluster nodes).

For detailed instructions on how to use AutoYaST in various scenarios, see the AutoYaST Guide for SUSE Linux Enterprise Server 15 SP6.

Procedure 3.1, “Cloning a cluster node with AutoYaST” assumes you are rolling out SUSE Linux Enterprise High Availability 15 SP6 to a set of machines with identical hardware configurations.

If you need to deploy cluster nodes on non-identical hardware, refer to the Deployment Guide for SUSE Linux Enterprise Server 15 SP6, chapter Automated Installation, section Rule-Based Autoinstallation.

Make sure the node you want to clone is correctly installed and configured. For details, see the Installation and Setup Quick Start or Chapter 4, Using the YaST cluster module.

Follow the description outlined in the SUSE Linux Enterprise 15 SP6 Deployment Guide for simple mass installation. This includes the following basic steps:

Creating an AutoYaST profile. Use the AutoYaST GUI to create and modify a profile based on the existing system configuration. In AutoYaST, choose the module and click the button. If needed, adjust the configuration in the other modules and save the resulting control file as XML.

If you have configured DRBD, you can select and clone this module in the AutoYaST GUI, too.

Determining the source of the AutoYaST profile and the parameter to pass to the installation routines for the other nodes.

Determining the source of the SUSE Linux Enterprise Server and SUSE Linux Enterprise High Availability installation data.

Determining and setting up the boot scenario for autoinstallation.

Passing the command line to the installation routines, either by adding the parameters manually or by creating an info file.

Starting and monitoring the autoinstallation process.
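As a command-line alternative to the AutoYaST GUI, YaST can clone the running system directly into a profile. The following sketch assumes root access on the already configured node. The profile URL in the comment is hypothetical:

```shell
# On the configured node: write the current system configuration to an
# AutoYaST profile (saved as /root/autoinst.xml by default)
yast2 clone_system

# Boot parameter for the new machines, pointing at the profile
# (hypothetical URL):
#   autoyast=http://192.168.1.10/profiles/autoinst.xml
```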

After the clone has been successfully installed, execute the following steps to make the cloned node join the cluster:

Transfer the key configuration files from the already configured nodes to the cloned node with Csync2 as described in Section 4.7, “Transferring the configuration to all nodes”.

To bring the node online, start the cluster services on the cloned node as described in Section 4.8, “Bringing the cluster online”.

The cloned node now joins the cluster because the /etc/corosync/corosync.conf file has been applied to the cloned node via Csync2. The CIB is automatically synchronized among the cluster nodes.
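On the command line, the two joining steps could look like the following sketch. It assumes the cloned node is already listed as a host in /etc/csync2/csync2.cfg and that the commands run as root:

```shell
# On an already configured node: synchronize all files in the Csync2
# group, including /etc/corosync/corosync.conf, to the other hosts
csync2 -xv

# On the cloned node: start the cluster services
crm cluster start
```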

4 Using the YaST cluster module #

The YaST cluster module allows you to set up a cluster manually (from scratch) or to modify options for an existing cluster.

However, if you prefer an automated approach for setting up a cluster, refer to Article “Installation and Setup Quick Start”. It describes how to install the needed packages and leads you to a basic two-node cluster, which is set up with the bootstrap scripts provided by the CRM Shell.

Use the same setup method for all nodes in the cluster. Mixing the setup methods is not recommended.

4.1 Definition of terms #

Several key terms used in the YaST cluster module and in this chapter are defined below.

- Bind network address (bindnetaddr)

The network address the Corosync executive should bind to. To simplify sharing configuration files across the cluster, Corosync uses the network interface netmask to mask only the address bits that are used for routing the network. For example, if the local interface is 192.168.5.92 with netmask 255.255.255.0, set bindnetaddr to 192.168.5.0. If the local interface is 192.168.5.92 with netmask 255.255.255.192, set bindnetaddr to 192.168.5.64.

If nodelist with ringX_addr is explicitly configured in /etc/corosync/corosync.conf, bindnetaddr is not strictly required.

Note: Network address for all nodes

As the same Corosync configuration is used on all nodes, make sure to use a network address as bindnetaddr, not the address of a specific network interface.

- conntrack Tools

Allow interaction with the in-kernel connection tracking system to enable stateful packet inspection for iptables. Used by SUSE Linux Enterprise High Availability to synchronize the connection status between cluster nodes. For detailed information, refer to https://conntrack-tools.netfilter.org/.

- Csync2

A synchronization tool that can be used to replicate configuration files across all nodes in the cluster, and even across Geo clusters. Csync2 can handle any number of hosts, sorted into synchronization groups. Each synchronization group has its own list of member hosts and its include/exclude patterns that define which files should be synchronized in the synchronization group. The groups, the host names belonging to each group, and the include/exclude rules for each group are specified in the Csync2 configuration file, /etc/csync2/csync2.cfg.

For authentication, Csync2 uses the IP addresses and pre-shared keys within a synchronization group. You need to generate one key file for each synchronization group and copy it to all group members.

For more information about Csync2, refer to https://oss.linbit.com/csync2/paper.pdf

- Existing cluster

The term “existing cluster” is used to refer to any cluster that consists of at least one node. Existing clusters have a basic Corosync configuration that defines the communication channels, but they do not necessarily have resource configuration yet.

- Multicast

A technology used for a one-to-many communication within a network that can be used for cluster communication. Corosync supports both multicast and unicast.

Note: Switches and multicast

To use multicast for cluster communication, make sure your switches support multicast.

- Multicast address (mcastaddr)

IP address to be used for multicasting by the Corosync executive. The IP address can be either IPv4 or IPv6. If IPv6 networking is used, node IDs must be specified. You can use any multicast address in your private network.

- Multicast port (mcastport)

The port to use for cluster communication. Corosync uses two ports: the specified mcastport for receiving multicast, and mcastport - 1 for sending multicast.

- Redundant Ring Protocol (RRP)

Allows the use of multiple redundant local area networks for resilience against partial or total network faults. This way, cluster communication can still be kept up as long as a single network is operational. Corosync supports the Totem Redundant Ring Protocol. A logical token-passing ring is imposed on all participating nodes to deliver messages in a reliable and sorted manner. A node is allowed to broadcast a message only if it holds the token.

When redundant communication channels are defined in Corosync, use RRP to tell the cluster how to use these interfaces. RRP can have three modes (rrp_mode):

If set to active, Corosync uses both interfaces actively. However, this mode is deprecated.

If set to passive, Corosync sends messages alternately over the available networks.

If set to none, RRP is disabled.

- Unicast

A technology for sending messages to a single network destination. Corosync supports both multicast and unicast. In Corosync, unicast is implemented as UDP-unicast (UDPU).
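The bindnetaddr rule is a bitwise AND of the interface address and its netmask. The following sketch reproduces the two examples given for bindnetaddr. The function name network_address is hypothetical, and the function assumes dotted-quad IPv4 input without leading zeros in the octets:

```shell
#!/usr/bin/env bash
# network_address IP NETMASK
# Hypothetical helper: ANDs each octet of the interface address with the
# matching netmask octet, which yields the value to use for bindnetaddr.
network_address() {
    local -a ip mask
    IFS=. read -r -a ip <<< "$1"
    IFS=. read -r -a mask <<< "$2"
    printf '%d.%d.%d.%d\n' \
        "$(( ip[0] & mask[0] ))" "$(( ip[1] & mask[1] ))" \
        "$(( ip[2] & mask[2] ))" "$(( ip[3] & mask[3] ))"
}

network_address 192.168.5.92 255.255.255.0     # 192.168.5.0
network_address 192.168.5.92 255.255.255.192   # 192.168.5.64
```

In the second case only the top two bits of the last octet belong to the network part: 92 is 01011100 in binary, and masking it with 11000000 (192) leaves 01000000, which is 64.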

4.2 YaST module #

Start YaST and select › . Alternatively, start the module from command line:

sudo yast2 cluster

The following list shows an overview of the available screens in the YaST cluster module. It also mentions whether the screen contains parameters that are required for successful cluster setup or whether its parameters are optional.

- Communication channels (required)

Allows you to define one or two communication channels for communication between the cluster nodes. As transport protocol, either use multicast (UDP) or unicast (UDPU). For details, see Section 4.3, “Defining the communication channels”.

Important: Redundant communication paths

For a supported cluster setup, two or more redundant communication paths are required. The preferred way is to use network device bonding as described in Chapter 16, Network device bonding.

If this is impossible, you need to define a second communication channel in Corosync.

- Security (optional but recommended)

Allows you to define the authentication settings for the cluster. HMAC/SHA1 authentication requires a shared secret used to protect and authenticate messages. For details, see Section 4.4, “Defining authentication settings”.

- Configure Csync2 (optional but recommended)

Csync2 helps you to keep track of configuration changes and to keep files synchronized across the cluster nodes. For details, see Section 4.7, “Transferring the configuration to all nodes”.

- Configure conntrackd (optional)

Allows you to configure the user space conntrackd. Use the conntrack tools for stateful packet inspection for iptables. For details, see Section 4.5, “Synchronizing connection status between cluster nodes”.

- Service (required)

Allows you to configure the service for bringing the cluster node online. Define whether to start the cluster services at boot time and whether to open the ports in the firewall that are needed for communication between the nodes. For details, see Section 4.6, “Configuring services”.

If you start the cluster module for the first time, it appears as a wizard, guiding you through all the steps necessary for basic setup. Otherwise, click the categories on the left panel to access the configuration options for each step.

Certain settings in the YaST cluster module apply only to the current node. Other settings may automatically be transferred to all nodes with Csync2. Find detailed information about this in the following sections.

4.3 Defining the communication channels #

For successful communication between the cluster nodes, define at least one communication channel. As transport protocol, either use multicast (UDP) or unicast (UDPU) as described in Procedure 4.1 or Procedure 4.2, respectively. To define a second, redundant channel (Procedure 4.3), both communication channels must use the same protocol.

For deploying SUSE Linux Enterprise High Availability in public cloud platforms, use unicast as transport protocol. Multicast is generally not supported by the cloud platforms themselves.

All settings defined in the YaST screen are written to /etc/corosync/corosync.conf. Find example files for a multicast and a unicast setup in /usr/share/doc/packages/corosync/.

If you are using IPv4 addresses, node IDs are optional. If you are using IPv6 addresses, node IDs are required. Instead of specifying IDs manually for each node, the YaST cluster module contains an option to automatically generate a unique ID for every cluster node.

When using multicast, the same bindnetaddr, mcastaddr, and mcastport are used for all cluster nodes. All nodes in the cluster know each other by using the same multicast address. For different clusters, use different multicast addresses.
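For orientation, a multicast setup in /etc/corosync/corosync.conf contains a totem section similar to the following fragment. This is a sketch in Corosync 2 syntax, the version that supports the rrp_mode option described in this chapter. The address and port are hypothetical example values, and a file generated by YaST contains further options. Compare it with the example files in /usr/share/doc/packages/corosync/:

```
totem {
    version: 2
    # multicast transport
    transport: udp
    interface {
        ringnumber: 0
        # network address, identical on all nodes
        bindnetaddr: 192.168.5.0
        # hypothetical address; use a different one for each cluster
        mcastaddr: 239.255.1.1
        # Corosync also uses mcastport - 1 (here 5404)
        mcastport: 5405
    }
}
```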

Start the YaST cluster module and switch to the category.

Set the protocol to Multicast.

Define the . Set the value to the subnet you will use for cluster multicast.

Define the .

Define the .

To automatically generate a unique ID for every cluster node keep enabled.

Define a .

Enter the number of . This is important for Corosync to calculate quorum in case of a partitioned cluster. By default, each node has 1 vote. The number of must match the number of nodes in your cluster.

Confirm your changes.

If needed, define a redundant communication channel in Corosync as described in Procedure 4.3, “Defining a redundant communication channel”.
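The expected votes value matters because Corosync's vote-based quorum requires a strict majority of the expected votes. The following sketch assumes one vote per node, which is the default mentioned above, and the usual majority rule of floor(votes / 2) + 1. It shows why an odd number of nodes is recommended. Corosync handles two-node clusters as a special case with its two_node setting, which this sketch does not model. The function names are hypothetical:

```shell
#!/usr/bin/env bash
# Votes a partition needs to be quorate: a strict majority,
# floor(expected_votes / 2) + 1 (bash integer division truncates).
quorum_needed() {
    echo $(( $1 / 2 + 1 ))
}

# Nodes (one vote each) that can fail while the rest stay quorate.
tolerated_failures() {
    echo $(( $1 - $(quorum_needed "$1") ))
}

for n in 3 4 5; do
    printf '%d nodes: quorum needs %d votes, tolerates %d failed node(s)\n' \
        "$n" "$(quorum_needed "$n")" "$(tolerated_failures "$n")"
done
```

A four-node cluster needs three votes and therefore tolerates only one failed node, the same as a three-node cluster.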

To use unicast instead of multicast for cluster communication, proceed as follows.

Start the YaST cluster module and switch to the category.

Set the protocol to Unicast.

Define the .

For unicast communication, Corosync needs to know the IP addresses of all nodes in the cluster. For each node, click and enter the following details:

(only required if you use a second communication channel in Corosync)

(only required if the option is disabled)

To modify or remove any addresses of cluster members, use the or buttons.

To automatically generate a unique ID for every cluster node keep enabled.

Define a .

Enter the number of . This is important for Corosync to calculate quorum in case of a partitioned cluster. By default, each node has 1 vote. The number of must match the number of nodes in your cluster.

Confirm your changes.

If needed, define a redundant communication channel in Corosync as described in Procedure 4.3, “Defining a redundant communication channel”.
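With unicast, the member addresses entered in YaST end up in a nodelist section of /etc/corosync/corosync.conf. The following is a sketch in Corosync 2 syntax. The addresses and node IDs are hypothetical. With a second communication channel, each node entry would also carry a ring1_addr:

```
totem {
    version: 2
    # UDP unicast
    transport: udpu
    interface {
        ringnumber: 0
        bindnetaddr: 192.168.5.0
        mcastport: 5405
    }
}

nodelist {
    node {
        # hypothetical node addresses and IDs
        ring0_addr: 192.168.5.92
        nodeid: 1
    }
    node {
        ring0_addr: 192.168.5.93
        nodeid: 2
    }
}
```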

If network device bonding cannot be used for any reason, the second best choice is to define a redundant communication channel (a second ring) in Corosync. That way, two physically separate networks can be used for communication. If one network fails, the cluster nodes can still communicate via the other network.

The additional communication channel in Corosync forms a second token-passing ring. In /etc/corosync/corosync.conf, the first channel you configured is the primary ring and gets the ring number 0. The second ring (redundant channel) gets the ring number 1.

When having defined redundant communication channels in Corosync, use RRP to tell the cluster how to use these interfaces. With RRP, two physically separate networks are used for communication. If one network fails, the cluster nodes can still communicate via the other network.

RRP can have three modes:

If set to active, Corosync uses both interfaces actively. However, this mode is deprecated.

If set to passive, Corosync sends messages alternately over the available networks.

If set to none, RRP is disabled.

If multiple rings are configured in Corosync, each node can have multiple IP addresses. This needs to be reflected in the /etc/hosts file of all nodes.

Start the YaST cluster module and switch to the category.

Activate . The redundant channel must use the same protocol as the first communication channel you defined.

If you use multicast, enter the following parameters: the to use, the and the for the redundant channel.

If you use unicast, define the following parameters: the to use, and the . Enter the IP addresses of all nodes that will be part of the cluster.

To tell Corosync how and when to use the different channels, select the to use:

If only one communication channel is defined, is automatically disabled (value none).

If set to active, Corosync uses both interfaces actively. However, this mode is deprecated.

If set to passive, Corosync sends messages alternately over the available networks.

When RRP is used, SUSE Linux Enterprise High Availability monitors the status of the current rings and automatically re-enables redundant rings after faults.

Alternatively, check the ring status manually with corosync-cfgtool. View the available options with -h.

Confirm your changes.
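With a redundant channel and passive RRP, the totem section could look like the following sketch in Corosync 2 syntax. All addresses are hypothetical. The ports of the two rings are kept two apart because each ring also uses mcastport - 1:

```
totem {
    version: 2
    rrp_mode: passive
    interface {
        ringnumber: 0
        bindnetaddr: 192.168.5.0
        mcastaddr: 239.255.1.1
        mcastport: 5405
    }
    interface {
        ringnumber: 1
        # second, physically separate network (hypothetical)
        bindnetaddr: 10.0.0.0
        mcastaddr: 239.255.2.1
        # two apart from ring 0, which also uses 5404
        mcastport: 5407
    }
}
```

Once Corosync runs with such a configuration, corosync-cfgtool -s prints the status of each ring on the local node.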

4.4 Defining authentication settings #

To define the authentication settings for the cluster, you can use HMAC/SHA1 authentication. This requires a shared secret used to protect and authenticate messages. The authentication key (password) you specify is used on all nodes in the cluster.

Start the YaST cluster module and switch to the category.

Activate .

For a newly created cluster, click . An authentication key is created and written to /etc/corosync/authkey.

If you want the current machine to join an existing cluster, do not generate a new key file. Instead, copy the /etc/corosync/authkey from one of the nodes to the current machine (either manually or with Csync2).

Confirm your changes. YaST writes the configuration to /etc/corosync/corosync.conf.
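On the command line, corosync-keygen creates the same kind of key and writes it to /etc/corosync/authkey by default. The following sketch assumes root access and a hypothetical second node named bob. Csync2 can replace scp, as mentioned above:

```shell
# On the first node: create /etc/corosync/authkey
corosync-keygen

# Copy the key to a joining node, preserving its restrictive mode
# (bob is a hypothetical host name)
scp -p /etc/corosync/authkey root@bob:/etc/corosync/authkey
```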

4.5 Synchronizing connection status between cluster nodes #

To enable stateful packet inspection for iptables, configure and use the conntrack tools. This requires the following basic steps:

conntrackd with YaST #

Use the YaST cluster module to configure the user space conntrackd (see Figure 4.4, “YaST —conntrackd”). It needs a dedicated network interface that is not used for other communication channels. The daemon can be started via a resource agent afterward.

Start the YaST cluster module and switch to the category.

Define the to be used for synchronizing the connection status.

In , define a numeric ID for the group to synchronize the connection status to.

Click to create the configuration file for conntrackd.

If you modified any options for an existing cluster, confirm your changes and close the cluster module.

For further cluster configuration, click and proceed with Section 4.6, “Configuring services”.

Select a for synchronizing the connection status. The IPv4 address of the selected interface is automatically detected and shown in YaST. It must already be configured and it must support multicast.

After having configured the conntrack tools, you can use them for Linux Virtual Server (see Load balancing).

4.6 Configuring services

In the YaST cluster module, define whether to start certain services on a node at boot time. You can also use the module to start and stop the services manually. Pacemaker must be running as a service to bring the cluster nodes online and start the cluster resource manager.

In the YaST cluster module, switch to the category.

To start the cluster services each time this cluster node is booted, select the respective option in the group. If you select in the group, you must start the cluster services manually each time this node is booted. To start the cluster services manually, use the command:

# crm cluster start

To start or stop the cluster services immediately, click the respective button.

To open the ports in the firewall that are needed for cluster communication on the current machine, activate .

Confirm your changes. Note that the configuration only applies to the current machine, not to all cluster nodes.

4.7 Transferring the configuration to all nodes

Instead of copying the resulting configuration files to all nodes manually, use the csync2 tool for replication across all nodes in the cluster.

This requires the following basic steps:

Csync2 helps you to keep track of configuration changes and to keep files synchronized across the cluster nodes:

You can define a list of files that are important for operation.

You can show changes to these files (against the other cluster nodes).

You can synchronize the configured files with a single command.

With a simple shell script in ~/.bash_logout, you can be reminded about unsynchronized changes before logging out of the system.

Find detailed information about Csync2 at https://oss.linbit.com/csync2/ and https://oss.linbit.com/csync2/paper.pdf.
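Csync2 keeps track of changes in its own database. As a conceptual sketch of what showing changes against the other cluster nodes means, the following Python code compares SHA-256 digests of a list of configured files in two local directory trees that stand in for two nodes. The directory layout and function names are hypothetical, and this does not reproduce how Csync2 itself detects changes.

```python
import hashlib
import tempfile
from pathlib import Path


def digest(path: Path) -> str:
    """Return the SHA-256 hex digest of a file's contents."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def changed_files(local_root: Path, peer_root: Path, files: list[str]) -> list[str]:
    """List configured files whose contents differ between two copies.

    local_root and peer_root stand in for two nodes' file systems.
    A file that exists on only one side also counts as changed.
    """
    changed = []
    for rel in files:
        local, peer = local_root / rel, peer_root / rel
        if local.exists() != peer.exists():
            changed.append(rel)
        elif local.exists() and digest(local) != digest(peer):
            changed.append(rel)
    return changed


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as a, tempfile.TemporaryDirectory() as b:
        node_a, node_b = Path(a), Path(b)  # stand-ins for two nodes
        (node_a / "example.conf").write_text("edited on node A\n")
        (node_b / "example.conf").write_text("original\n")
        print(changed_files(node_a, node_b, ["example.conf"]))  # ['example.conf']
```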

4.7.1 Configuring Csync2 with YaST

Start the YaST cluster module and switch to the category.

To specify the synchronization group, click in the group and enter the local host names of all nodes in your cluster. For each node, you must use exactly the strings that are returned by the hostname command.

Tip: Host name resolution
If host name resolution does not work properly in your network, you can also specify a combination of host name and IP address for each cluster node. To do so, use the string HOSTNAME@IP, such as alice@192.168.2.100. Csync2 then uses the IP addresses when connecting.

Click to create a key file for the synchronization group. The key file is written to /etc/csync2/key_hagroup. After it has been created, it must be copied manually to all members of the cluster.

To populate the list with the files that usually need to be synchronized among all nodes, click .

To add, edit, or remove files from the list of files to be synchronized, use the respective buttons. You must enter the absolute path for each file.

Activate Csync2 by clicking . This executes the following command to start Csync2 automatically at boot time:

# systemctl enable csync2.socket

Click . YaST writes the Csync2 configuration to /etc/csync2/csync2.cfg.
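To give an idea of what the generated /etc/csync2/csync2.cfg contains, the following Python sketch renders a Csync2 group stanza from a key file, a list of hosts (plain host names or HOSTNAME@IP strings), and a list of files to include. The layout (group, key, host, and include entries inside braces) is an assumption based on the general Csync2 configuration syntax; the file YaST writes may contain additional entries, so compare with your actual file. The group name ha_group is hypothetical.

```python
def render_csync2_group(group: str, key_file: str, hosts: list[str], includes: list[str]) -> str:
    """Render a Csync2 group stanza (assumed layout; compare with the file YaST writes)."""
    for path in [key_file, *includes]:
        # The YaST dialog requires absolute paths; enforce the same rule here.
        if not path.startswith("/"):
            raise ValueError(f"not an absolute path: {path}")
    lines = [f"group {group}", "{", f"    key {key_file};"]
    lines += [f"    host {host};" for host in hosts]  # host name or HOSTNAME@IP
    lines += [f"    include {path};" for path in includes]
    lines.append("}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_csync2_group(
        "ha_group",  # hypothetical group name
        "/etc/csync2/key_hagroup",
        ["alice", "bob@192.168.2.101"],
        ["/etc/corosync/corosync.conf", "/etc/corosync/authkey"],
    ))
```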

4.7.2 Synchronizing changes with Csync2

Before running Csync2 for the first time, you need to make the following preparations:

Copy the file /etc/csync2/csync2.cfg manually to all nodes after you have configured it as described in Section 4.7.1, “Configuring Csync2 with YaST”.

Copy the file /etc/csync2/key_hagroup that you have generated on one node in Step 3 of Section 4.7.1 to all nodes in the cluster. It is needed for authentication by Csync2. However, do not regenerate the file on the other nodes: it must be the same file on all nodes.

Execute the following command on all nodes to start the service now:

# systemctl start csync2.socket

To synchronize all files initially, execute the following command on the machine that you want to copy the configuration from:

# csync2 -xv

This synchronizes all the files once by pushing them to the other nodes. If all files are synchronized successfully, Csync2 finishes with no errors.

If one or more of the files to be synchronized have been modified on other nodes (not only on the current one), Csync2 reports a conflict with output similar to the following:

While syncing file /etc/corosync/corosync.conf: ERROR from peer hex-14: File is also marked dirty here! Finished with 1 errors.

If you are sure that the file version on the current node is the “best” one, you can resolve the conflict by forcing this file and resynchronizing:

# csync2 -f /etc/corosync/corosync.conf
# csync2 -x
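If a synchronization run reports several conflicts, it can help to extract them before deciding which local copies to force. The following Python sketch matches lines like the sample error message above and builds the csync2 -f and csync2 -x commands from the previous step. The regular expression is based only on that one sample and is an assumption; other Csync2 versions may word their messages differently. The helper returns command lists rather than running them, so you still decide which files to force.

```python
import re

# Pattern derived from the sample conflict message; treat it as an assumption.
CONFLICT_RE = re.compile(
    r"While syncing file (?P<path>\S+): ERROR from peer (?P<peer>\S+): "
    r"File is also marked dirty here!"
)


def find_conflicts(output: str) -> list[tuple[str, str]]:
    """Return (path, peer) pairs for every 'marked dirty' conflict in the output."""
    return [(m["path"], m["peer"]) for m in CONFLICT_RE.finditer(output)]


def resolution_commands(conflicts: list[tuple[str, str]]) -> list[list[str]]:
    """Build force-then-resync commands for files whose local copy you keep."""
    paths = sorted({path for path, _ in conflicts})
    return [["csync2", "-f", path] for path in paths] + [["csync2", "-x"]]


if __name__ == "__main__":
    sample = ("While syncing file /etc/corosync/corosync.conf: ERROR from peer hex-14: "
              "File is also marked dirty here!\nFinished with 1 errors.")
    conflicts = find_conflicts(sample)
    print(conflicts)  # [('/etc/corosync/corosync.conf', 'hex-14')]
    for cmd in resolution_commands(conflicts):
        print(" ".join(cmd))
```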

For more information on the Csync2 options, run:

# csync2 -help

Csync2 only pushes changes. It does not continuously synchronize files between the machines.

Each time you update files that need to be synchronized, you need to push the changes to the other machines by running csync2 -xv on the machine where you made the changes. If you run the command on any of the other machines with unchanged files, nothing happens.
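The push-only behavior described above can be modeled with a few lines of Python: each node remembers which files it changed locally, a push copies only those files to the peers, a node without local changes pushes nothing, and a file that is changed on both sides is a conflict. This is a toy model under those assumptions (the Node class and push function are hypothetical), not how Csync2 is implemented.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """Toy stand-in for a cluster node: file contents plus locally changed paths."""
    name: str
    files: dict[str, str] = field(default_factory=dict)
    dirty: set[str] = field(default_factory=set)

    def edit(self, path: str, content: str) -> None:
        self.files[path] = content
        self.dirty.add(path)


def push(source: Node, peers: list[Node]) -> list[str]:
    """Push the source node's changed files to its peers and return their paths.

    Raises RuntimeError if a path was also changed on a peer (a conflict).
    """
    for peer in peers:
        clash = source.dirty & peer.dirty
        if clash:
            raise RuntimeError(f"conflict with {peer.name}: {sorted(clash)}")
    pushed = sorted(source.dirty)
    for peer in peers:
        for path in pushed:
            peer.files[path] = source.files[path]
    source.dirty.clear()  # changes are now in sync
    return pushed


if __name__ == "__main__":
    alice, bob = Node("alice"), Node("bob")
    alice.edit("/etc/corosync/corosync.conf", "new version")
    print(push(bob, [alice]))  # [] because bob has no local changes
    print(push(alice, [bob]))  # ['/etc/corosync/corosync.conf']
```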

4.8 Bringing the cluster online

Before starting the cluster, make sure passwordless SSH is configured between the nodes. If you did not already configure passwordless SSH before setting up the cluster, you can do so now by using the ssh stage of the bootstrap scripts:

On the first node, run crm cluster init ssh.

On the rest of the nodes, run crm cluster join ssh -c NODE1.
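For clusters with more than two nodes, the ssh stage commands above follow a simple pattern, which the Python sketch below spells out: the first node runs crm cluster init ssh, and every other node runs crm cluster join ssh -c with the first node's name in place of NODE1. The helper only builds the command strings; you still run each one on the respective node. The node names in the example are hypothetical.

```python
def ssh_bootstrap_plan(nodes: list[str]) -> dict[str, str]:
    """Map each node to the crmsh command to run on it for the ssh stage.

    The first node initializes passwordless SSH; every other node joins
    by pointing -c at the first node.
    """
    if not nodes:
        raise ValueError("need at least one node")
    first, *rest = nodes
    plan = {first: "crm cluster init ssh"}
    plan.update({node: f"crm cluster join ssh -c {first}" for node in rest})
    return plan


if __name__ == "__main__":
    for node, command in ssh_bootstrap_plan(["alice", "bob", "charlie"]).items():
        print(f"{node}: {command}")
```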

After the initial cluster configuration is done, start the cluster services on all cluster nodes to bring the stack online:

Log in to an existing node.

Start the cluster services on all cluster nodes:

# crm cluster start --all

Check the cluster status with the crm status command. If all nodes are online, the output should be similar to the following:

# crm status
Cluster Summary:
  * Stack: corosync
  * Current DC: alice (version ...) - partition with quorum
  * Last updated: ...
  * Last change: ... by hacluster via crmd on bob
  * 2 nodes configured
  * 1 resource instance configured

Node List:
  * Online: [ alice bob ]
...

This output indicates that the cluster resource manager is started and is ready to manage resources.
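To check the result in a script instead of by eye, you can look for the Online line in the crm status output. The following Python sketch extracts the node names from lines of the form * Online: [ alice bob ] and compares them with the nodes you expect. The pattern is an assumption based on the sample output above; the output format may vary between Pacemaker versions, so treat it as a starting point. You could feed it the captured output of crm status, for example obtained with subprocess.run.

```python
import re

# Matches lines like "  * Online: [ alice bob ]" (format assumed from the sample output).
ONLINE_RE = re.compile(r"^\s*\*?\s*Online:\s*\[\s*(?P<nodes>[^\]]*)\]", re.MULTILINE)


def online_nodes(status_text: str) -> set[str]:
    """Collect node names from all 'Online: [ ... ]' lines."""
    nodes: set[str] = set()
    for m in ONLINE_RE.finditer(status_text):
        nodes.update(m["nodes"].split())
    return nodes


def all_online(status_text: str, expected: set[str]) -> bool:
    """True if every expected node is listed as online."""
    return expected <= online_nodes(status_text)


if __name__ == "__main__":
    sample = "Node List:\n  * Online: [ alice bob ]\n"
    print(sorted(online_nodes(sample)))          # ['alice', 'bob']
    print(all_online(sample, {"alice", "bob"}))  # True
```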

After the basic configuration is done and the nodes are online, you can start to configure cluster resources. Use one of the cluster management tools like the CRM Shell (crmsh) or Hawk2. For more information, see Section 5.5, “Introduction to crmsh” or Section 5.4, “Introduction to Hawk2”.

Part II Configuration and administration

- 5 Configuration and administration basics

The main purpose of an HA cluster is to manage user services. Typical examples of user services are an Apache Web server or a database. From the user's point of view, the services do something specific when ordered to do so. To the cluster, however, they are only resources which may be started or stopped—the nature of the service is irrelevant to the cluster.

This chapter introduces some basic concepts you need to know when administering your cluster. The following chapters show you how to execute the main configuration and administration tasks with each of the management tools SUSE Linux Enterprise High Availability provides.

- 6 Configuring cluster resources

As a cluster administrator, you need to create cluster resources for every resource or application you run on servers in your cluster. Cluster resources can include Web sites, e-mail servers, databases, file systems, virtual machines and any other server-based applications or services you want to make available to users at all times.

- 7 Configuring resource constraints

Having all the resources configured is only part of the job. Even if the cluster knows all needed resources, it still might not be able to handle them correctly. Resource constraints let you specify which cluster nodes resources can run on, what order resources load in, and what other resources a specific resource is dependent on.

- 8 Managing cluster resources

After configuring the resources in the cluster, use the cluster management tools to start, stop, clean up, remove or migrate the resources. This chapter describes how to use Hawk2 or crmsh for resource management tasks.

- 9 Managing services on remote hosts

The possibilities for monitoring and managing services on remote hosts have become increasingly important during the last few years. SUSE Linux Enterprise High Availability 11 SP3 offered fine-grained monitoring of services on remote hosts via monitoring plug-ins. The recent addition of the pacemaker_remote service now allows SUSE Linux Enterprise High Availability 15 SP6 to fully manage and monitor resources on remote hosts just as if they were a real cluster node, without the need to install the cluster stack on the remote machines.

- 10 Adding or modifying resource agents

All tasks that need to be managed by a cluster must be available as a resource. There are two major groups to consider: resource agents and STONITH agents. For both categories, you can add your own agents, extending the abilities of the cluster to your own needs.

- 11 Monitoring clusters

This chapter describes how to monitor a cluster's health and view its history.

- 12 Fencing and STONITH

Fencing is an important concept in computer clusters for HA (High Availability). A cluster sometimes detects that one of the nodes is behaving strangely and needs to remove it. This is called fencing and is commonly done with a STONITH resource. Fencing may be defined as a method to bring an HA cluster to a known state.

Every resource in a cluster has a state attached. For example: “resource r1 is started on alice”. In an HA cluster, such a state implies that “resource r1 is stopped on all nodes except alice”, because the cluster must make sure that every resource may be started on only one node. Every node must report every change that happens to a resource. The cluster state is thus a collection of resource states and node states.

When the state of a node or resource cannot be established with certainty, fencing comes in. Even when the cluster is not aware of what is happening on a given node, fencing can ensure that the node does not run any important resources.

- 13 Storage protection and SBD

SBD (STONITH Block Device) provides a node fencing mechanism for Pacemaker-based clusters through the exchange of messages via shared block storage (SAN, iSCSI, FCoE, etc.). This isolates the fencing mechanism from changes in firmware version or dependencies on specific firmware controllers. SBD needs a watchdog on each node to ensure that misbehaving nodes are really stopped. Under certain conditions, it is also possible to use SBD without shared storage, by running it in diskless mode.

The cluster bootstrap scripts provide an automated way to set up a cluster with the option of using SBD as fencing mechanism. For details, see the Article “Installation and Setup Quick Start”. However, manually setting up SBD provides you with more options regarding the individual settings.

This chapter explains the concepts behind SBD. It guides you through configuring the components needed by SBD to protect your cluster from potential data corruption in case of a split-brain scenario.

In addition to node level fencing, you can use additional mechanisms for storage protection, such as LVM exclusive activation or OCFS2 file locking support (resource level fencing). They protect your system against administrative or application faults.

- 14 QDevice and QNetd

QDevice and QNetd participate in quorum decisions. With assistance from the arbitrator corosync-qnetd, corosync-qdevice provides a configurable number of votes, allowing a cluster to sustain more node failures than the standard quorum rules allow. We recommend deploying corosync-qnetd and corosync-qdevice for clusters with an even number of nodes, and especially for two-node clusters.

- 15 Access control lists

The cluster administration tools like CRM Shell (