This guide covers system administration tasks like maintaining, monitoring and customizing an initially installed system.

- Preface

- I Common tasks

- 1 Bash and Bash scripts

- 2 sudo basics

- 3 Using YaST

- 4 YaST in text mode

- 5 YaST online update

- 6 Managing software with command line tools

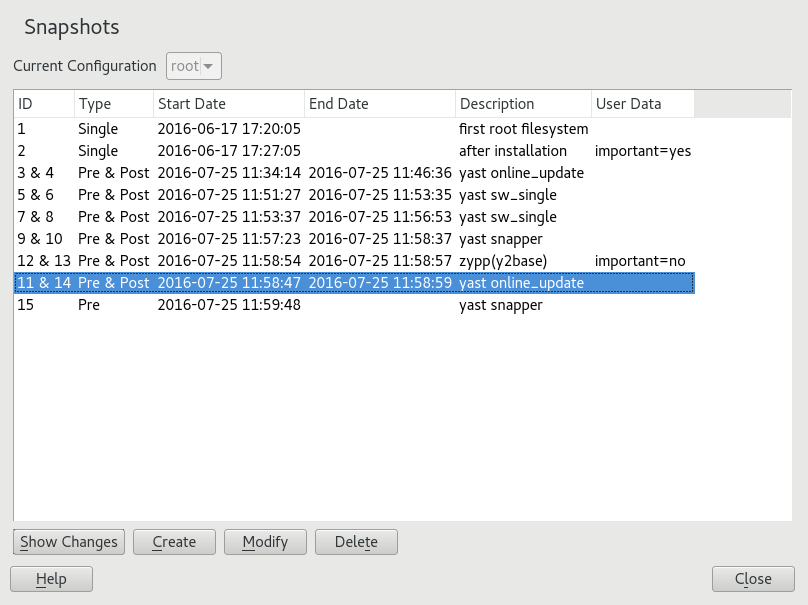

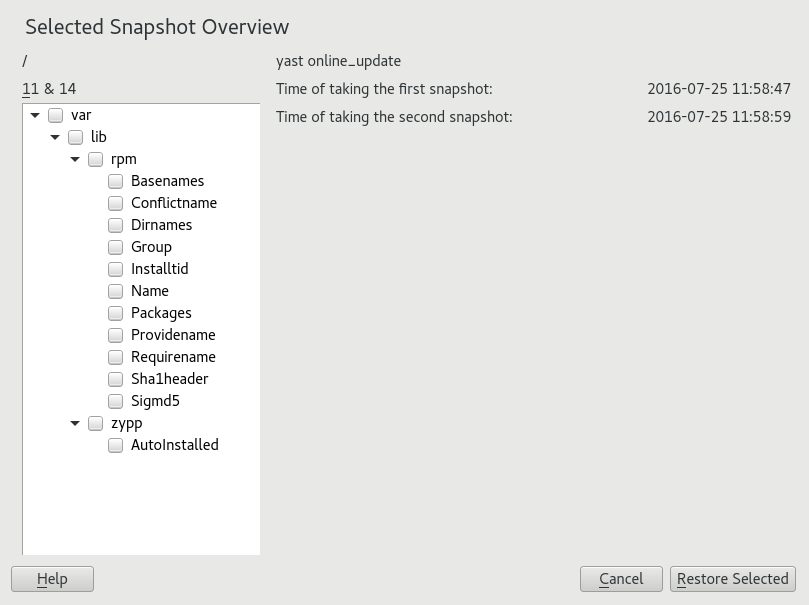

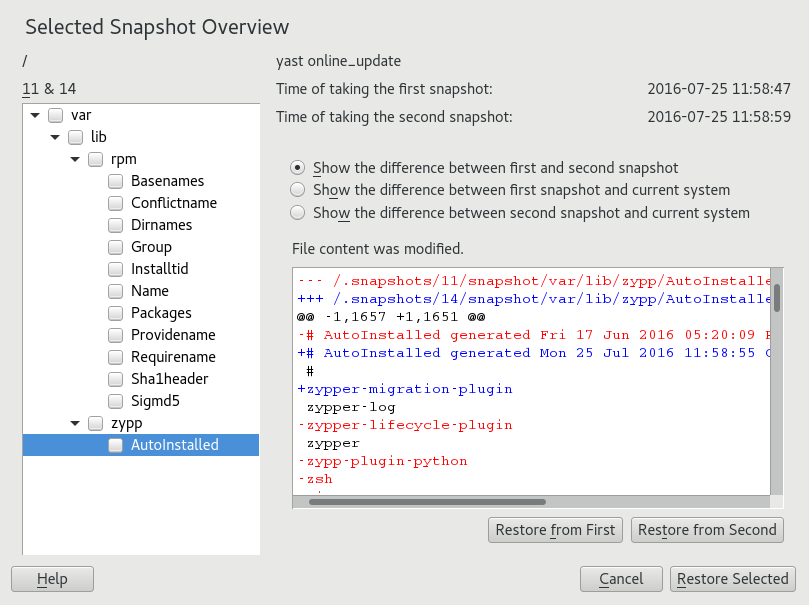

- 7 System recovery and snapshot management with Snapper

- 7.1 Default setup

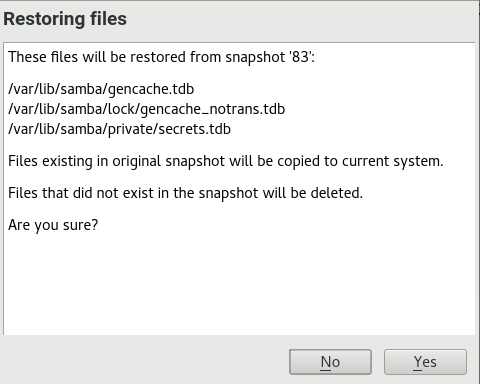

- 7.2 Using Snapper to undo changes

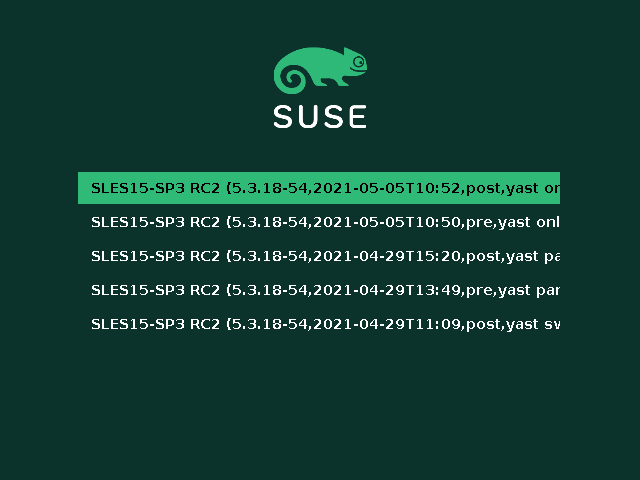

- 7.3 System rollback by booting from snapshots

- 7.4 Enabling Snapper in user home directories

- 7.5 Creating and modifying Snapper configurations

- 7.6 Manually creating and managing snapshots

- 7.7 Automatic snapshot clean-up

- 7.8 Showing exclusive disk space used by snapshots

- 7.9 Frequently asked questions

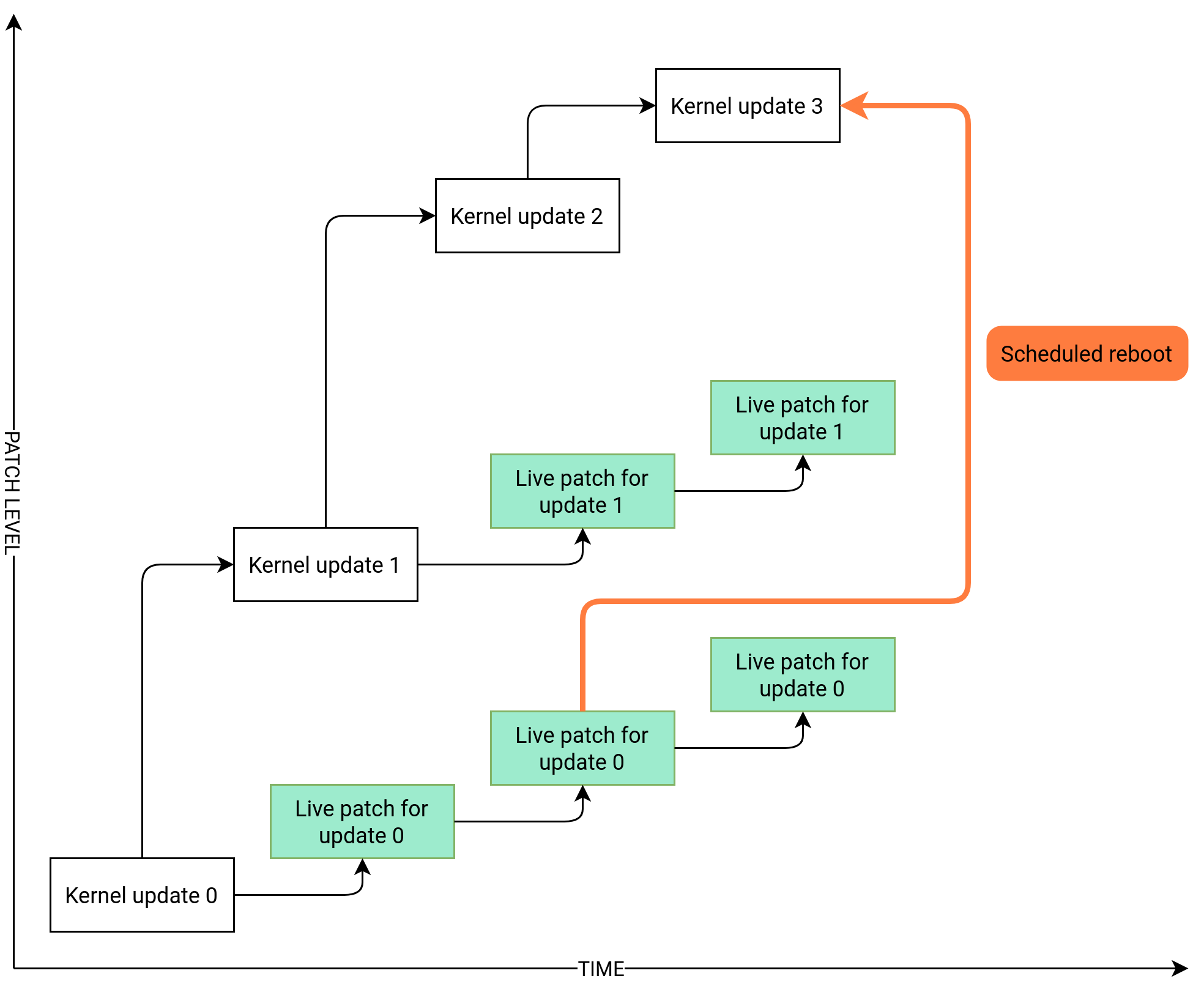

- 8 Live kernel patching with KLP

- 9 Transactional updates

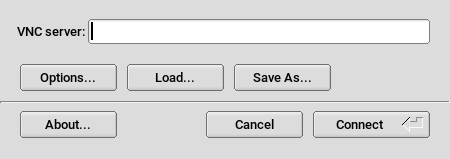

- 10 Remote graphical sessions with VNC

- 11 File copying with RSync

- II Booting a Linux system

- III System

- 16 32-bit and 64-bit applications in a 64-bit system environment

- 17 journalctl: Query the systemd journal

- 18 update-alternatives: Managing multiple versions of commands and files

- 19 Basic networking

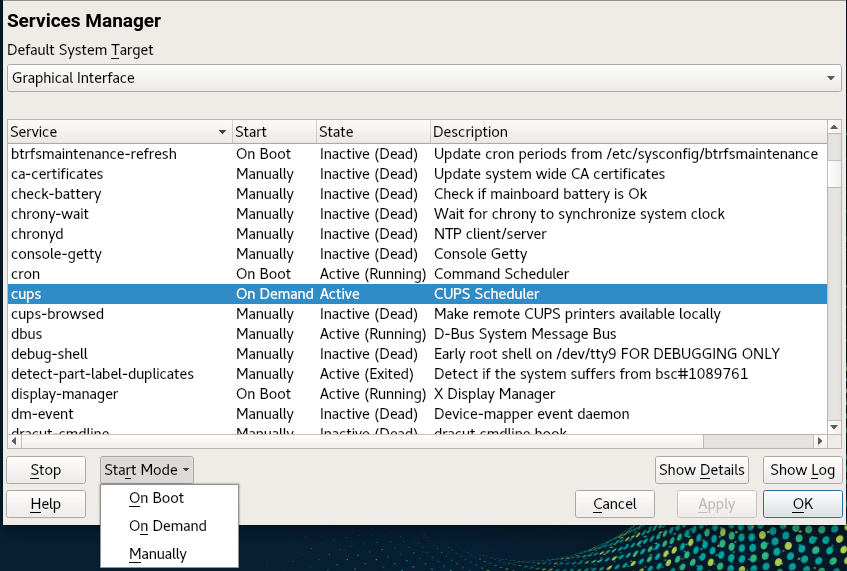

- 19.1 IP addresses and routing

- 19.2 IPv6—the next generation Internet

- 19.3 Name resolution

- 19.4 Configuring a network connection with YaST

- 19.5 Configuring a network connection manually

- 19.6 Basic router setup

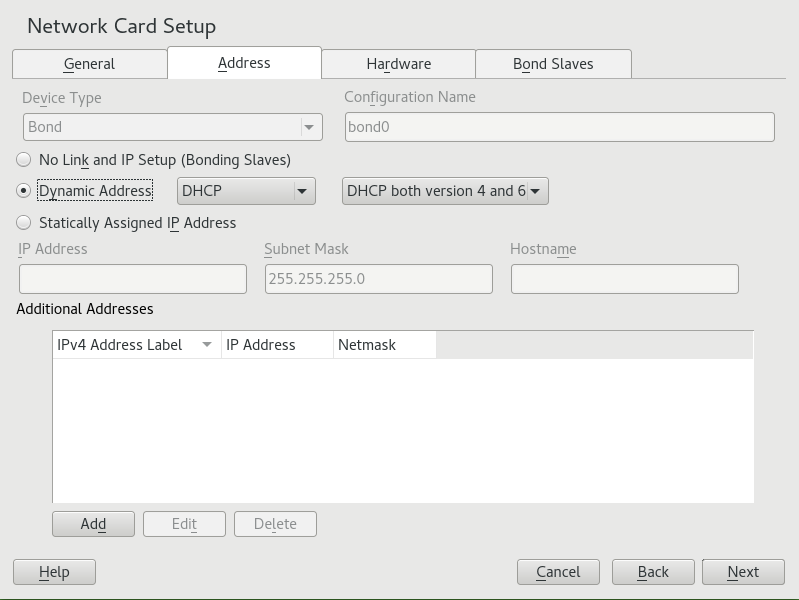

- 19.7 Setting up bonding devices

- 19.8 Setting up team devices for Network Teaming

- 19.9 Software-defined networking with Open vSwitch

- 20 Printer operation

- 21 Graphical user interface

- 22 Accessing file systems with FUSE

- 23 Managing kernel modules

- 24 Dynamic kernel device management with udev

- 24.1 The /dev directory

- 24.2 Kernel uevents and udev

- 24.3 Drivers, kernel modules and devices

- 24.4 Booting and initial device setup

- 24.5 Monitoring the running udev daemon

- 24.6 Influencing kernel device event handling with udev rules

- 24.7 Persistent device naming

- 24.8 Files used by udev

- 24.9 More information

- 25 Special system features

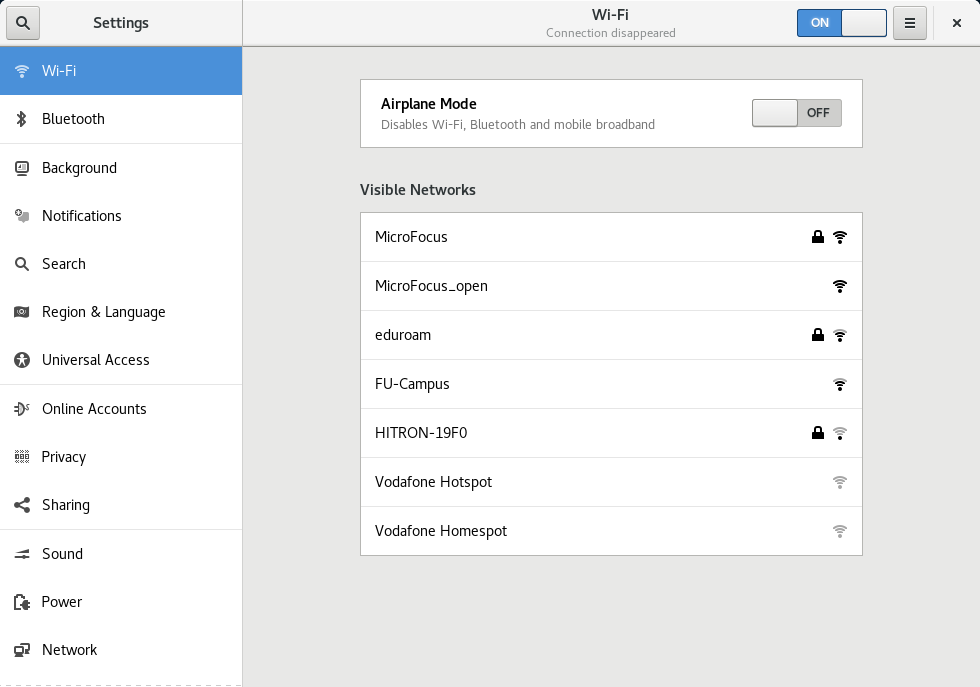

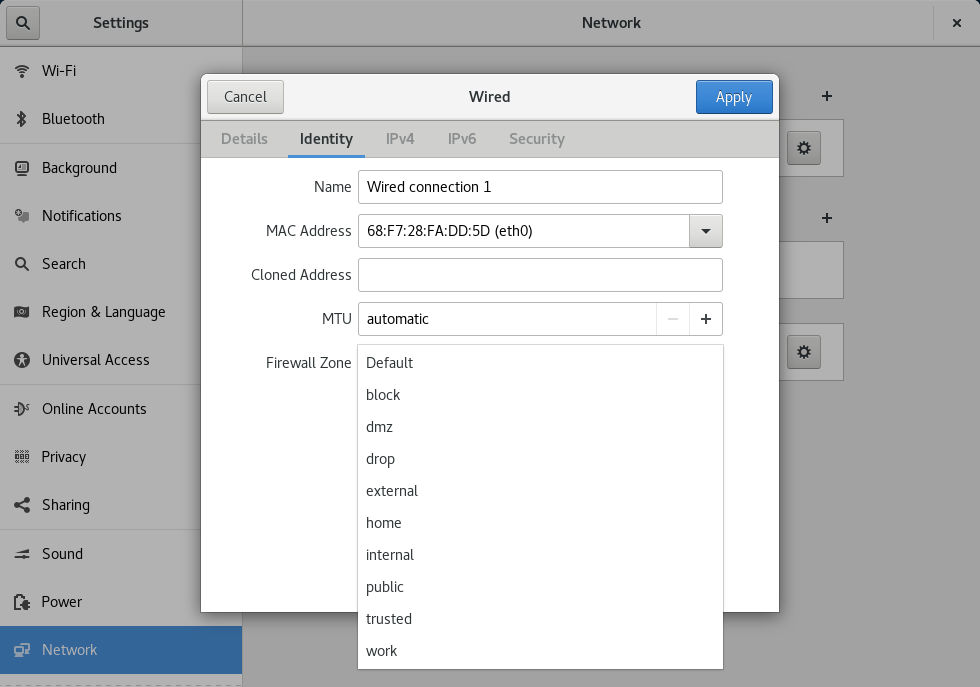

- 26 Using NetworkManager

- 27 Power management

- 28 Persistent memory

- IV Services

- 29 Service management with YaST

- 30 Time synchronization with NTP

- 31 The domain name system

- 32 DHCP

- 33 SLP

- 34 The Apache HTTP server

- 34.1 Quick start

- 34.2 Configuring Apache

- 34.3 Starting and stopping Apache

- 34.4 Installing, activating, and configuring modules

- 34.5 Enabling CGI scripts

- 34.6 Setting up a secure Web server with SSL

- 34.7 Running multiple Apache instances on the same server

- 34.8 Avoiding security problems

- 34.9 Troubleshooting

- 34.10 More information

- 35 Setting up an FTP server with YaST

- 36 Squid caching proxy server

- 36.1 Some facts about proxy servers

- 36.2 System requirements

- 36.3 Basic usage of Squid

- 36.4 The YaST Squid module

- 36.5 The Squid configuration file

- 36.6 Configuring a transparent proxy

- 36.7 Using the Squid cache manager CGI interface (cachemgr.cgi)

- 36.8 Cache report generation with Calamaris

- 36.9 More information

- 37 Web Based Enterprise Management using SFCB

- V Troubleshooting

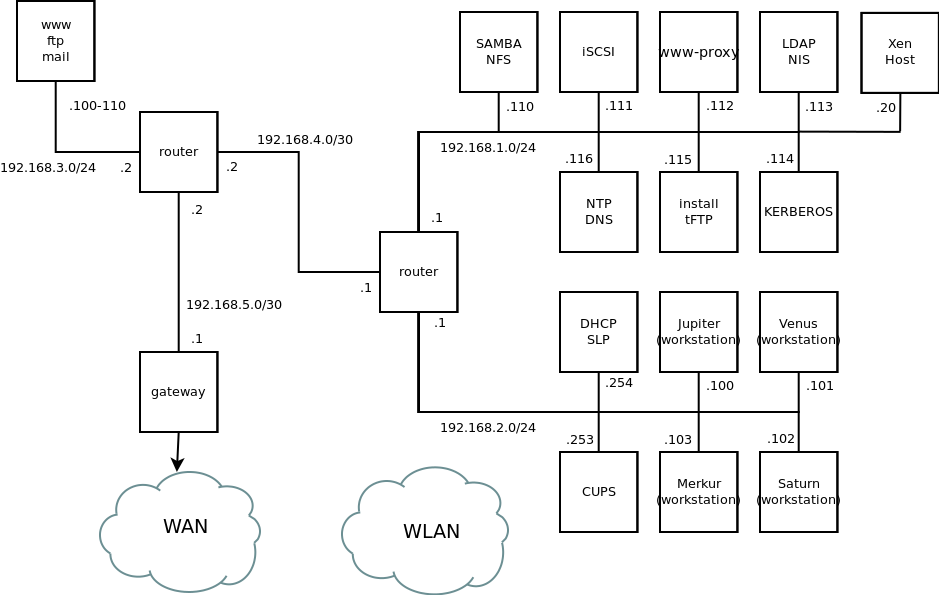

- A An example network

- B GNU licenses

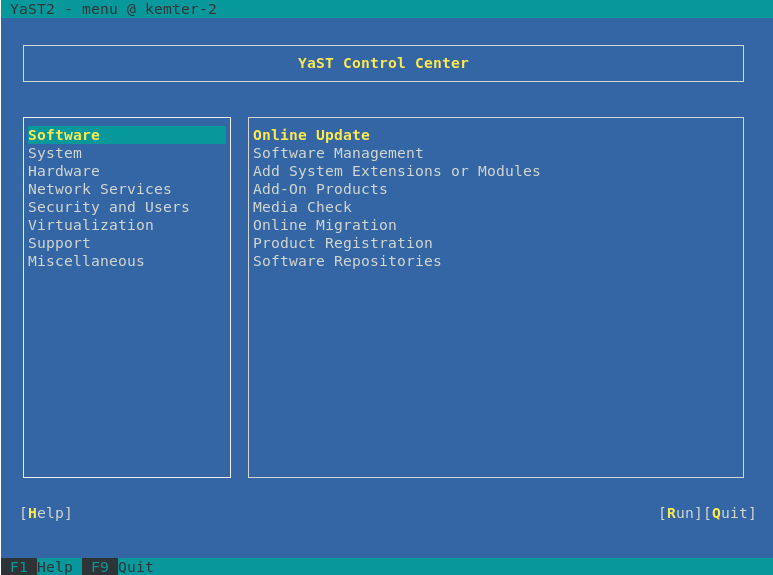

- 4.1 Main window of YaST in text mode

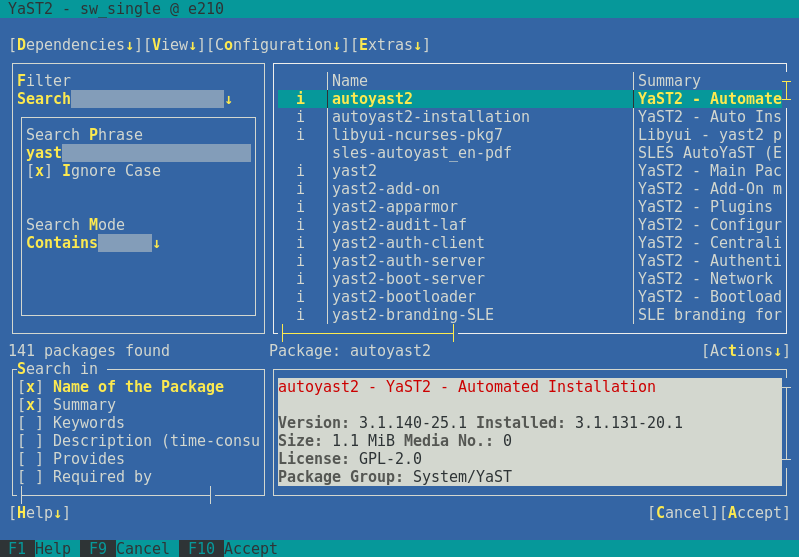

- 4.2 The software installation module

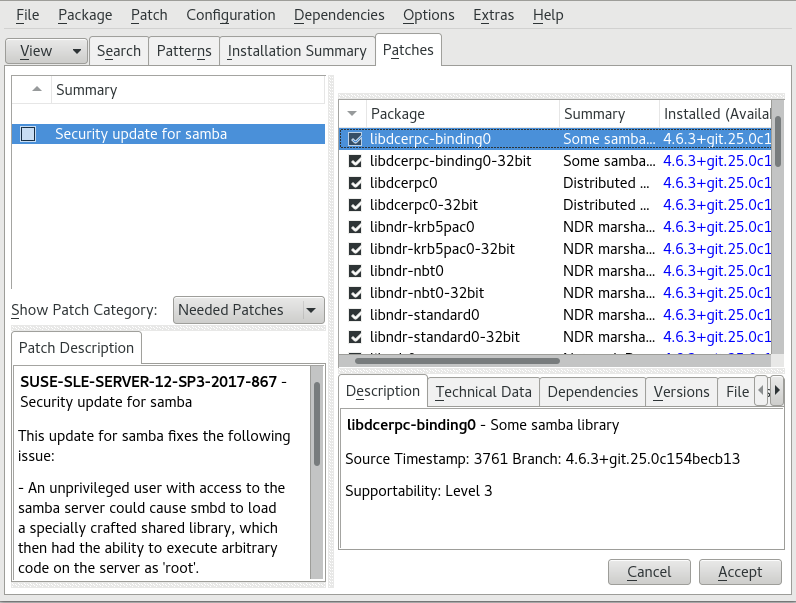

- 5.1 YaST online update

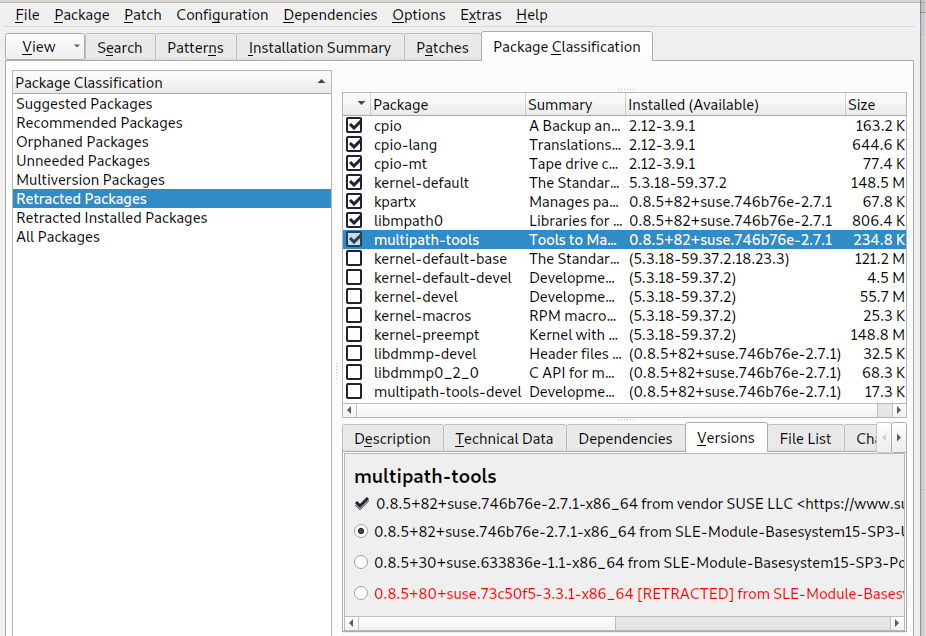

- 5.2 Viewing retracted patches and history

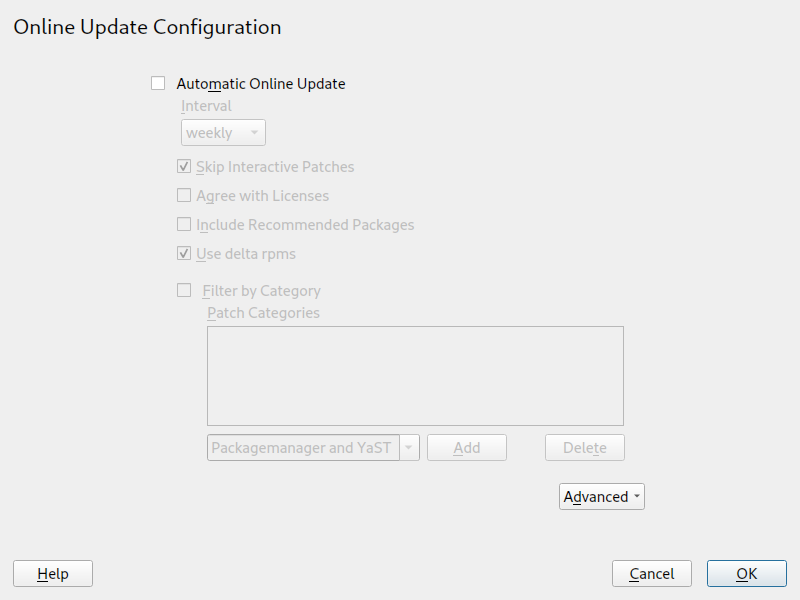

- 5.3 YaST online update configuration

- 7.1 Boot loader: snapshots

- 10.1 vncviewer

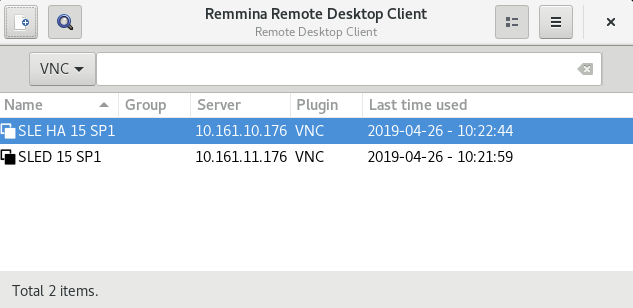

- 10.2 Remmina's main window

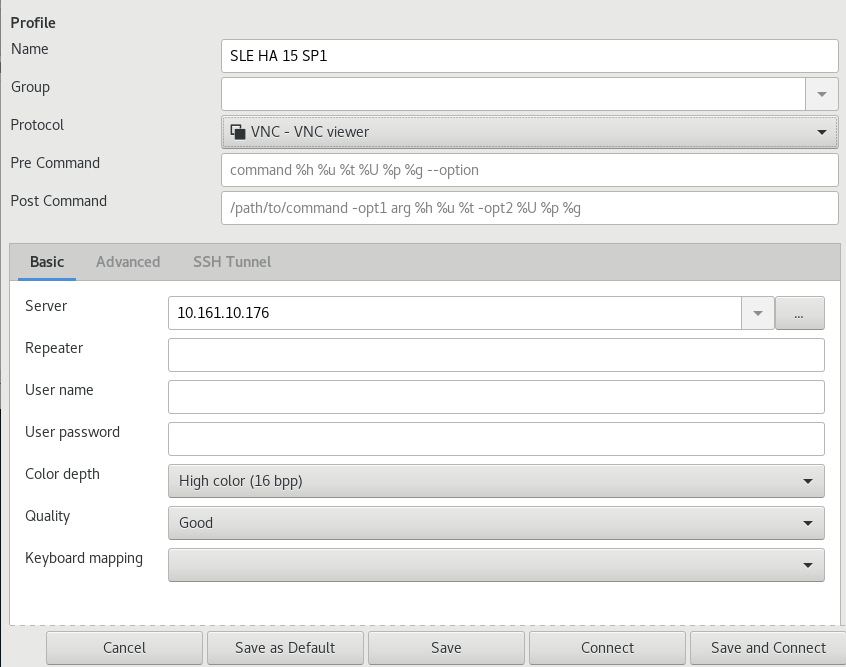

- 10.3 Remote desktop preference

- 10.4 Quick-starting

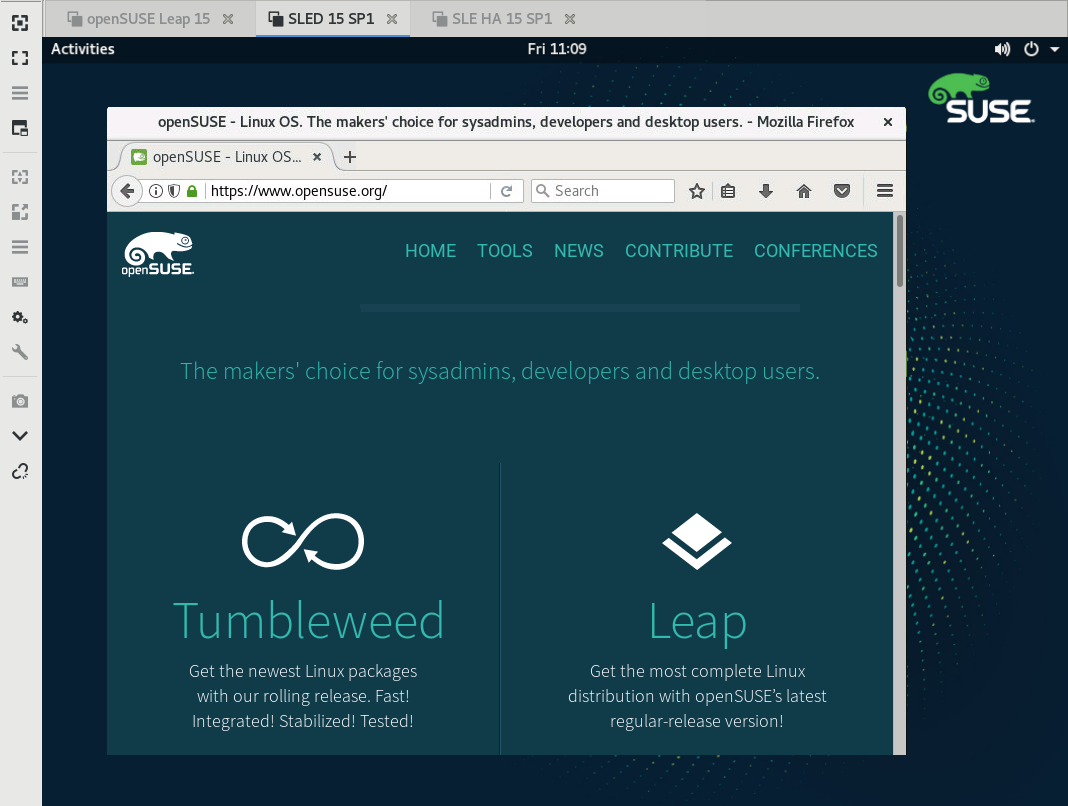

- 10.5 Remmina viewing remote session

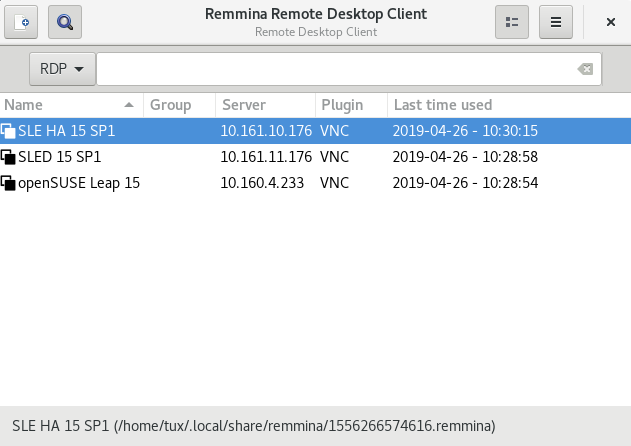

- 10.6 Reading path to the profile file

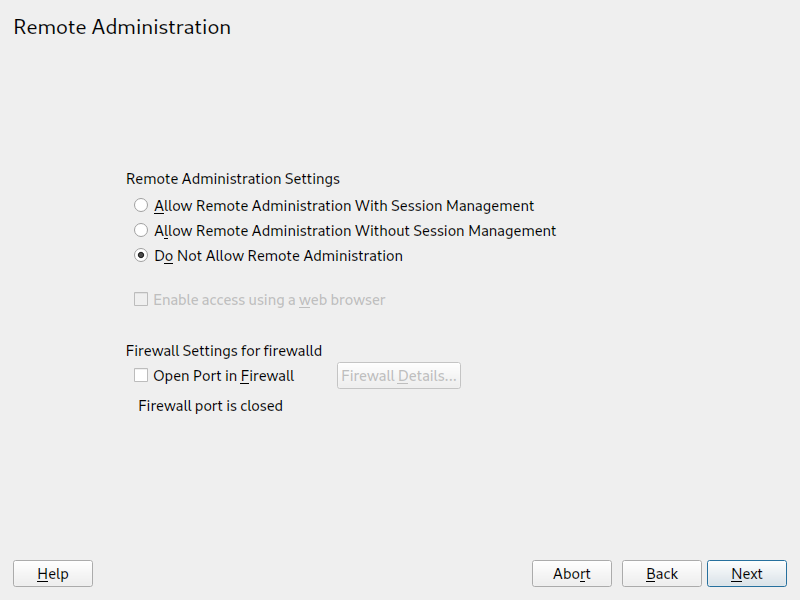

- 10.7 Remote administration

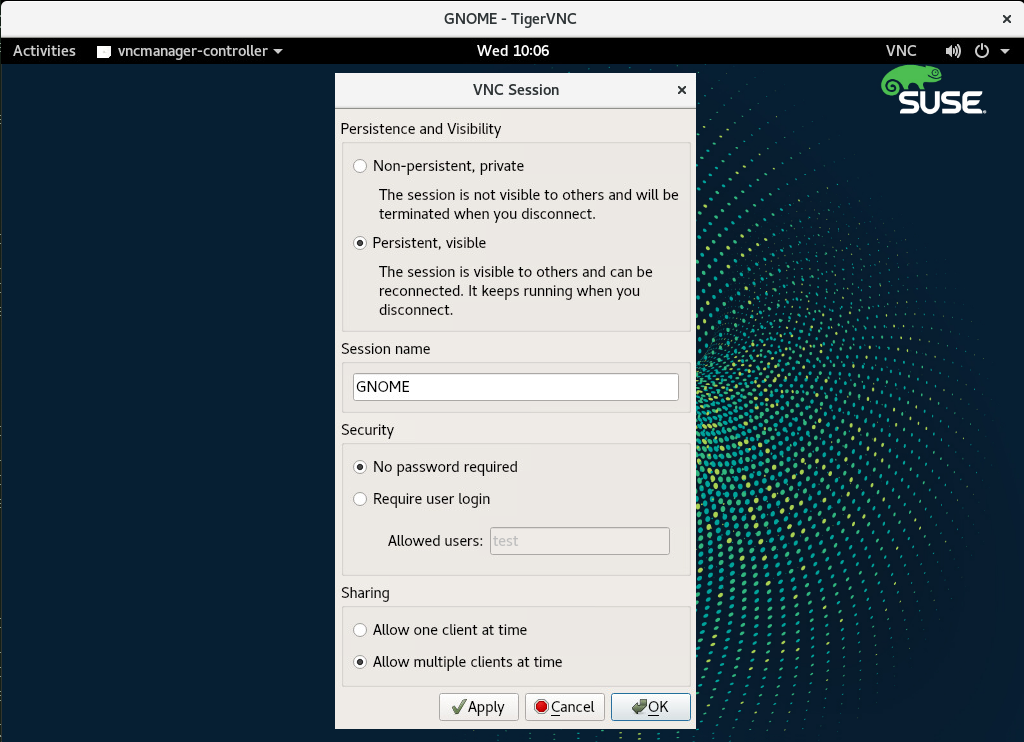

- 10.8 VNC session settings

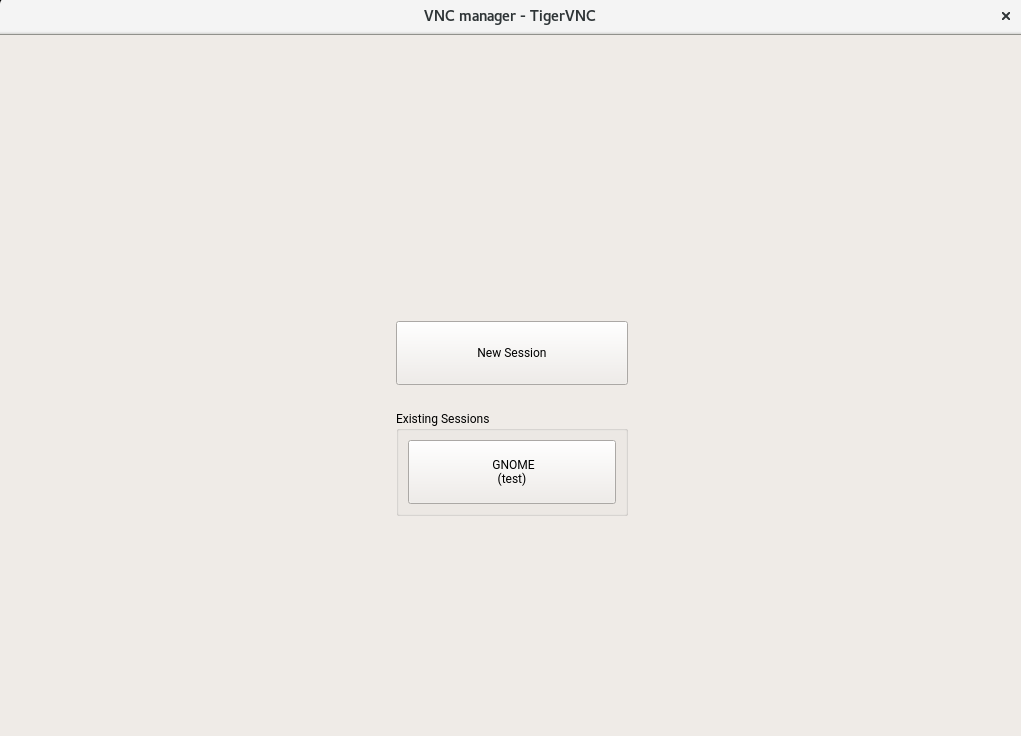

- 10.9 Joining a persistent VNC session

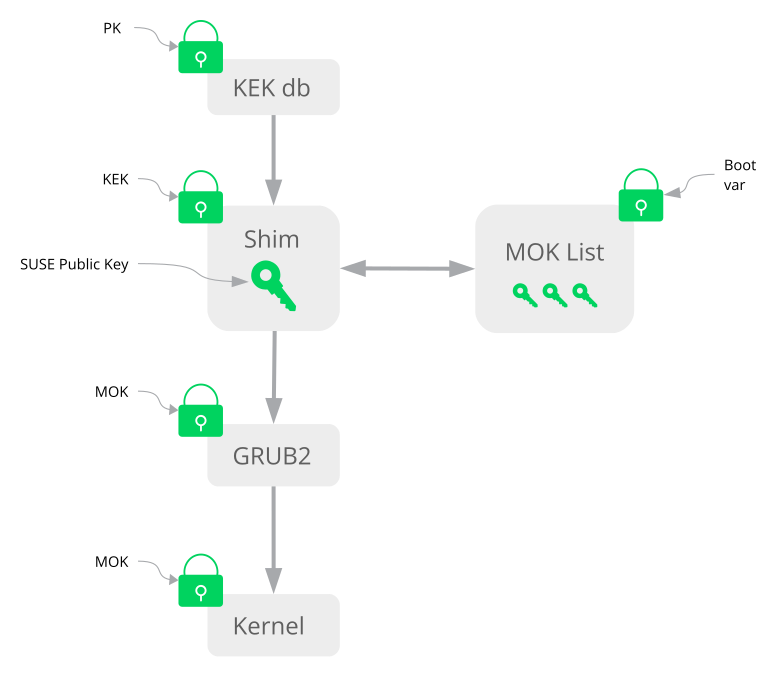

- 13.1 Secure boot support

- 13.2 UEFI: secure boot process

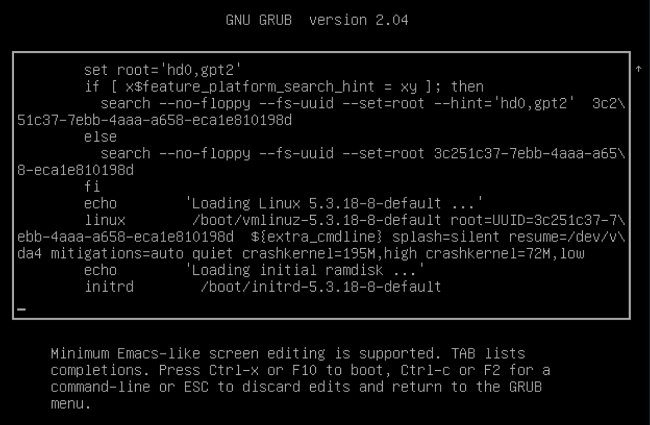

- 14.1 GRUB 2 boot editor

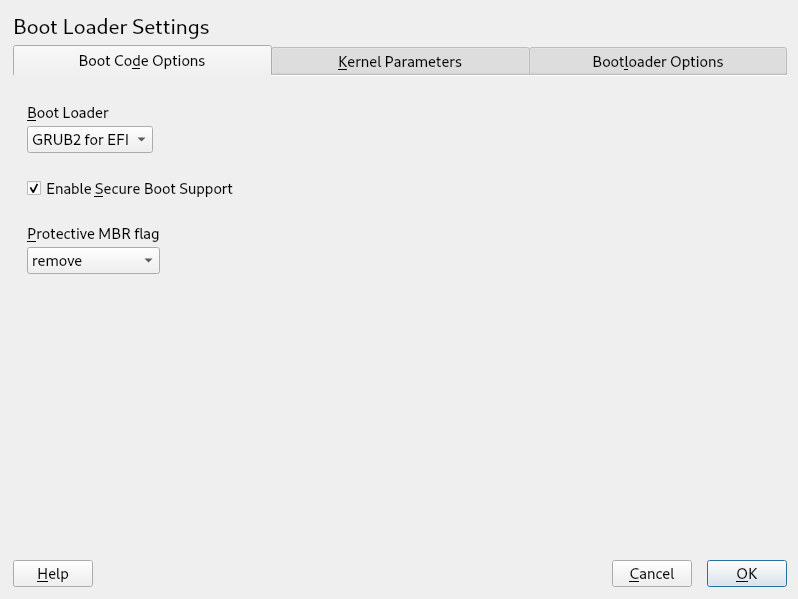

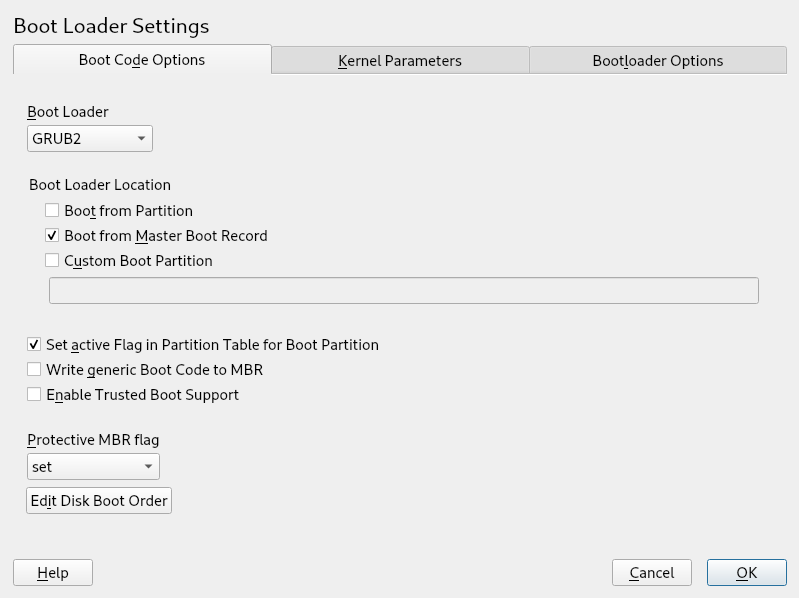

- 14.2 Boot code options

- 14.3 Code options

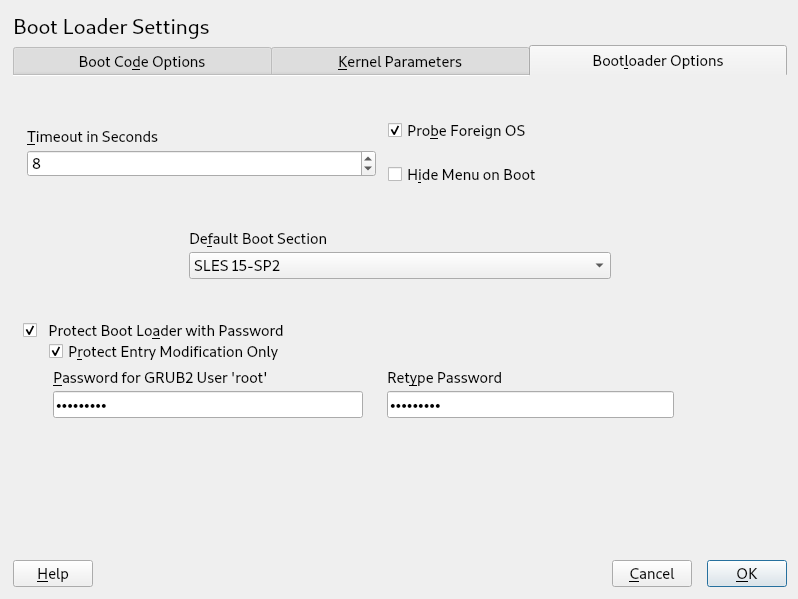

- 14.4 Boot loader options

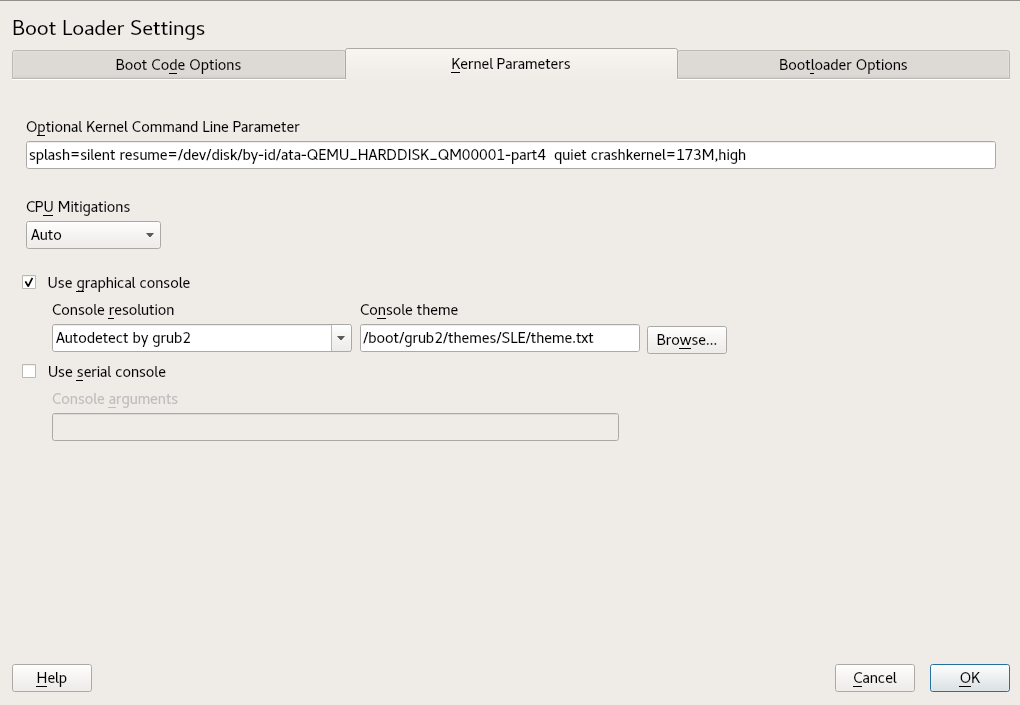

- 14.5 Kernel parameters

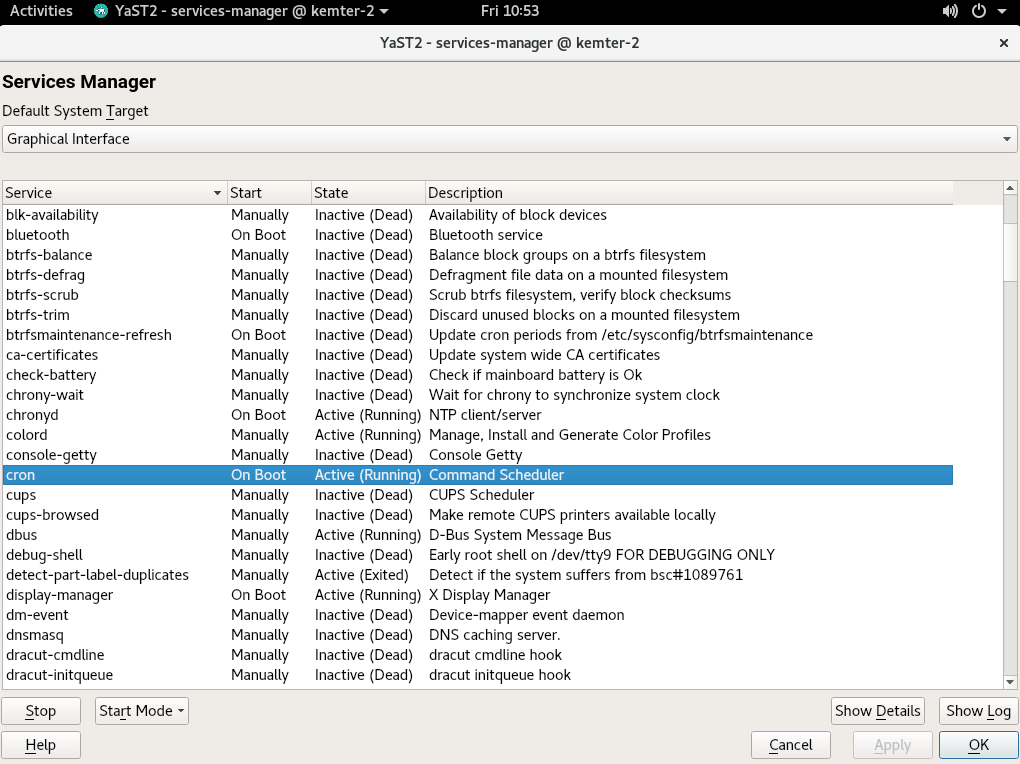

- 15.1 Services Manager

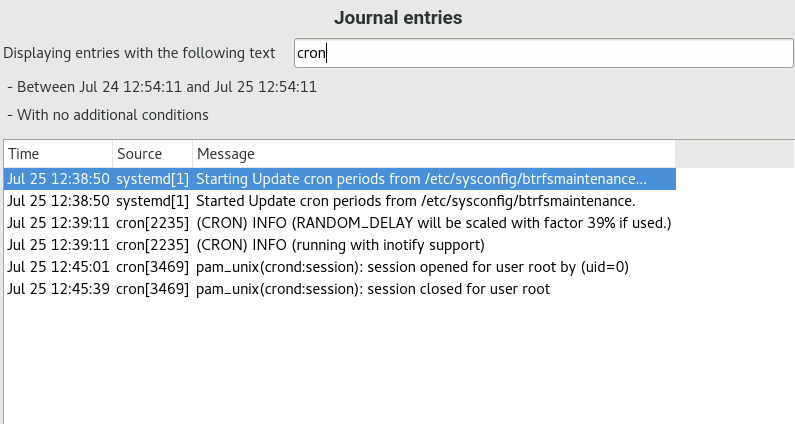

- 17.1 YaST systemd journal

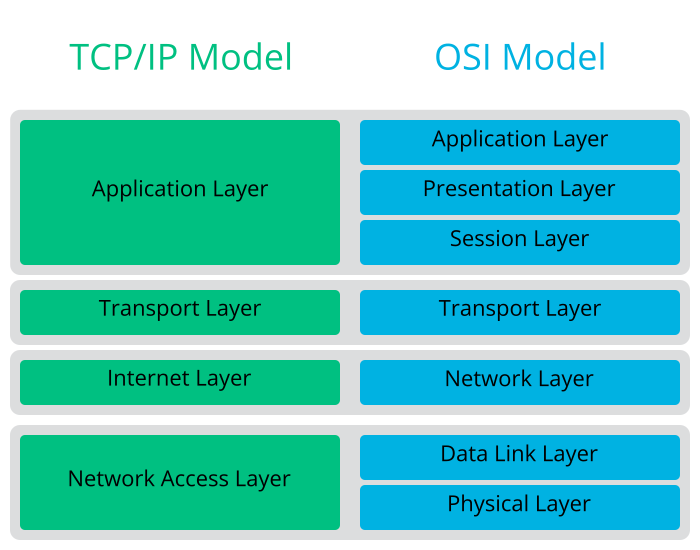

- 19.1 Simplified layer model for TCP/IP

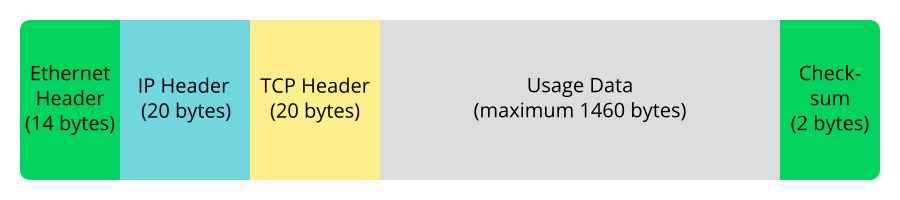

- 19.2 TCP/IP ethernet packet

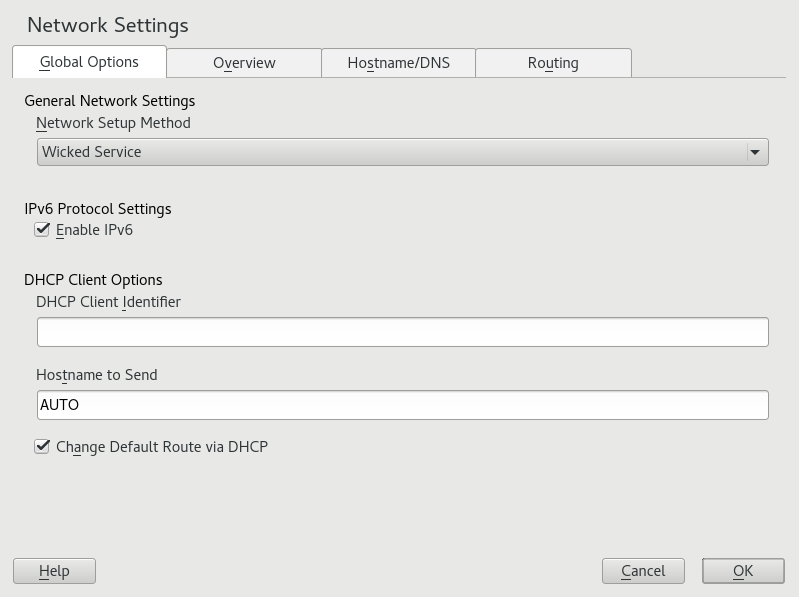

- 19.3 Configuring network settings

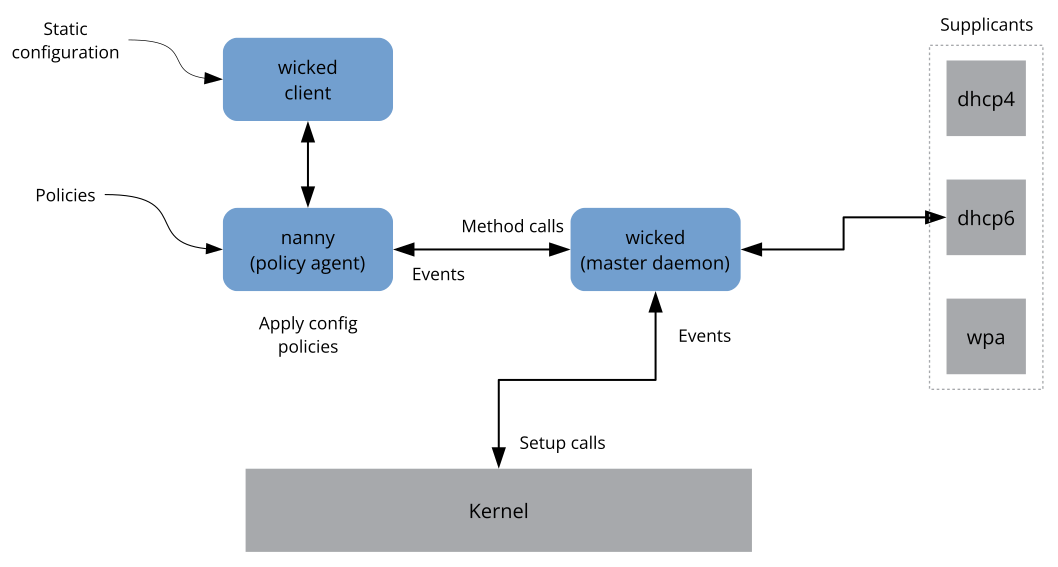

- 19.4 wicked architecture

- 26.1 GNOME Network Connections dialog

- 26.2 firewalld zones in NetworkManager

- 29.1 YaST service manager

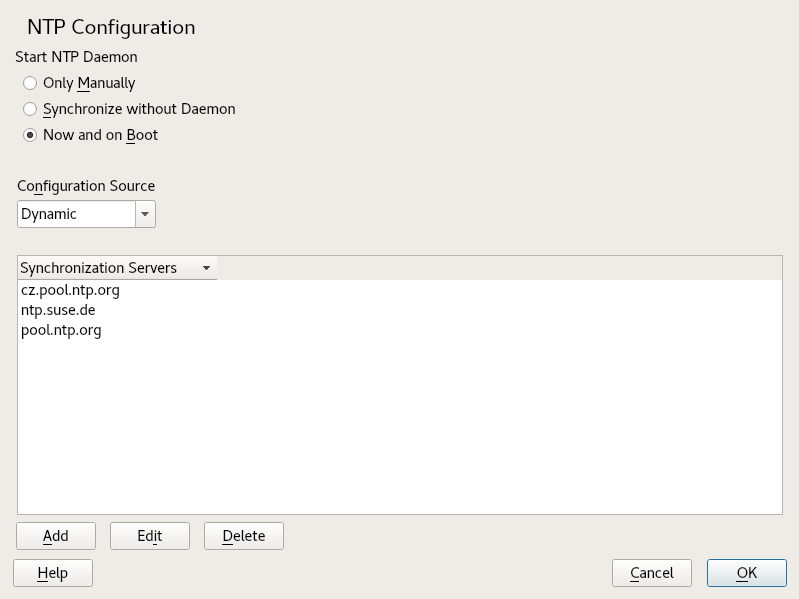

- 30.1 NTP configuration window

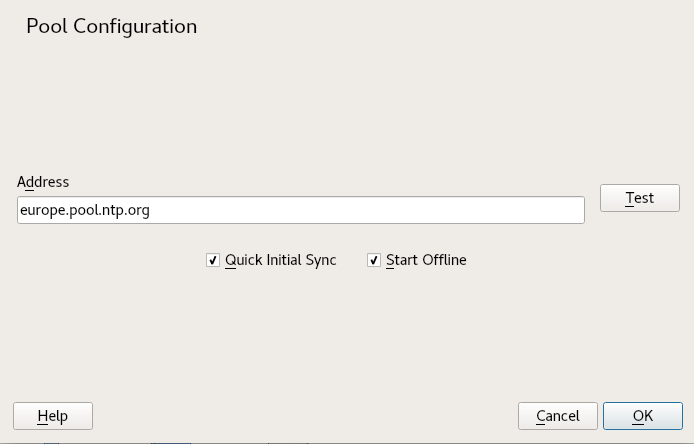

- 30.2 Adding a time server

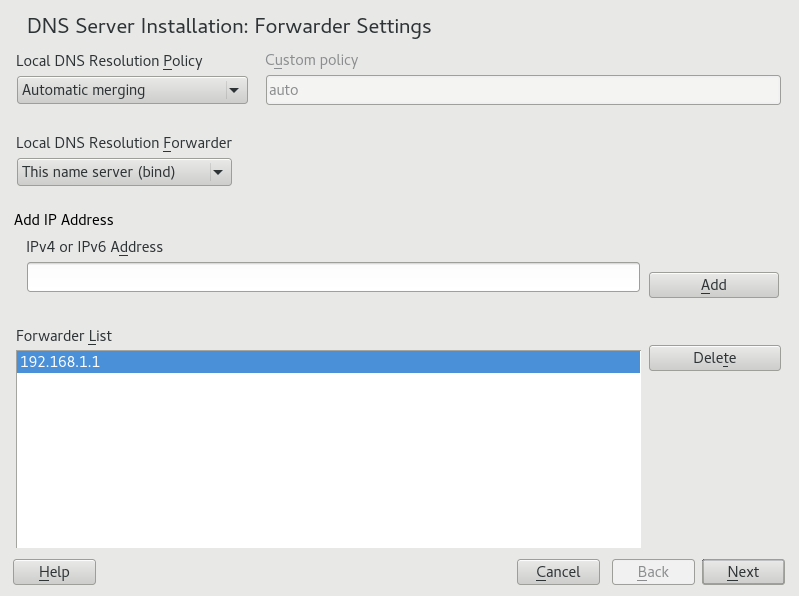

- 31.1 DNS server installation: forwarder settings

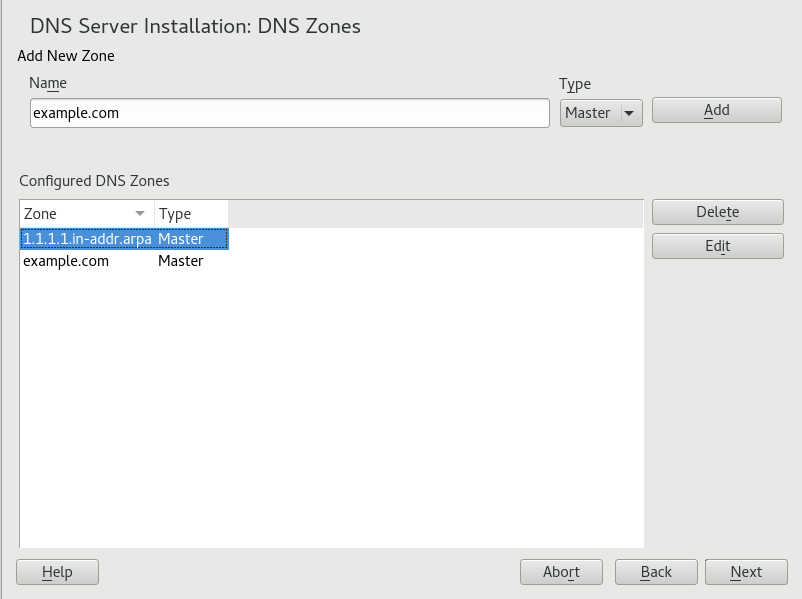

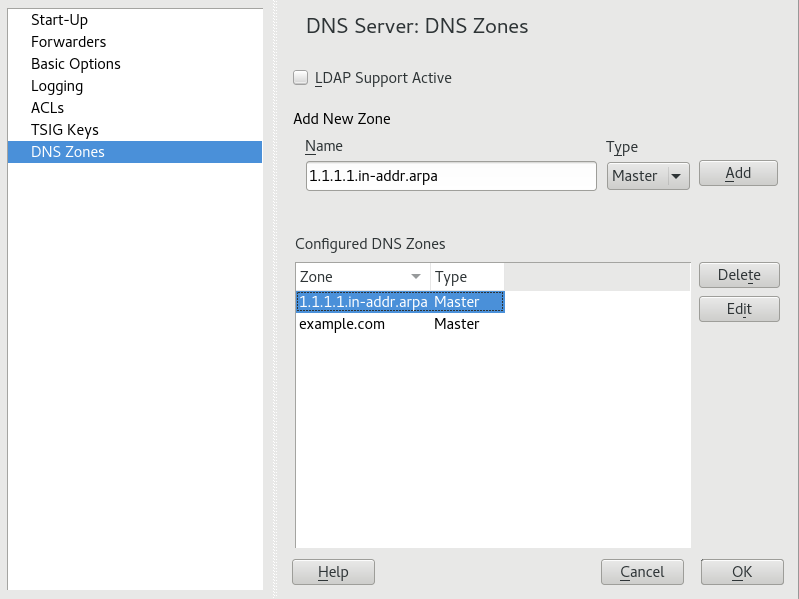

- 31.2 DNS server installation: DNS zones

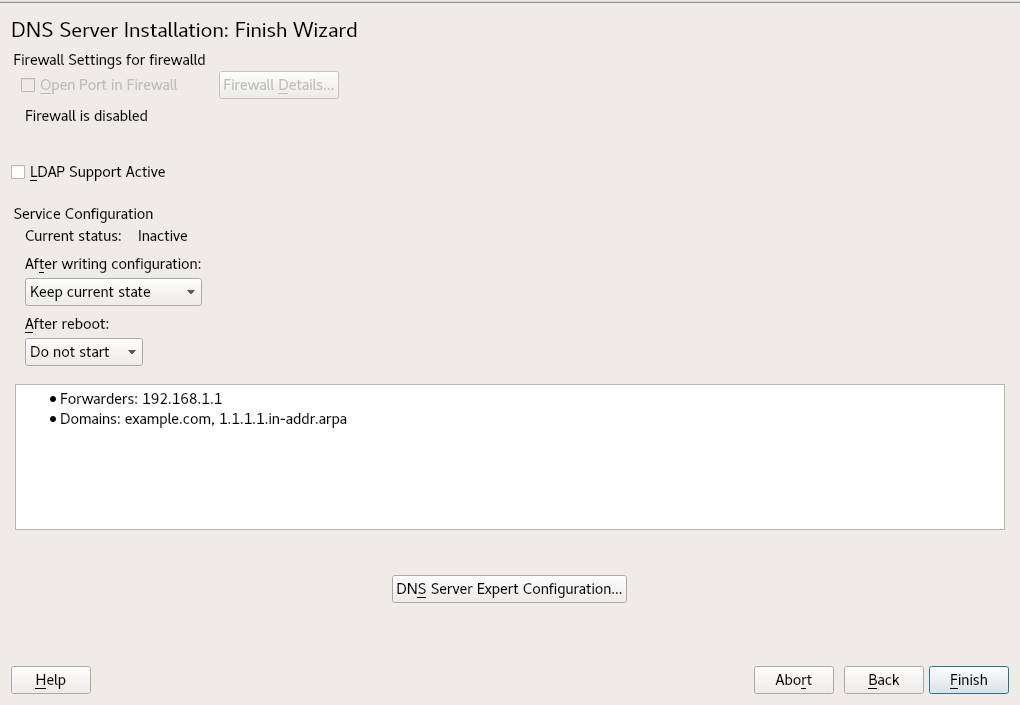

- 31.3 DNS server installation: finish wizard

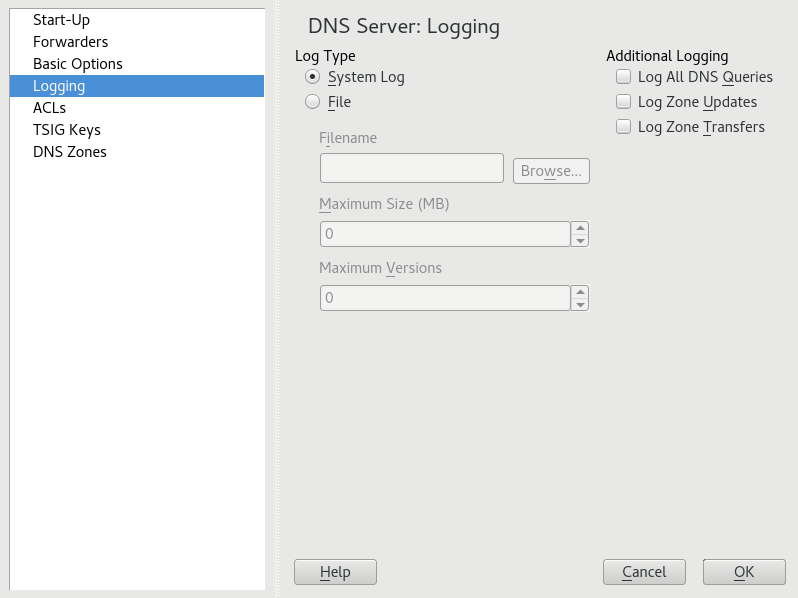

- 31.4 DNS server: logging

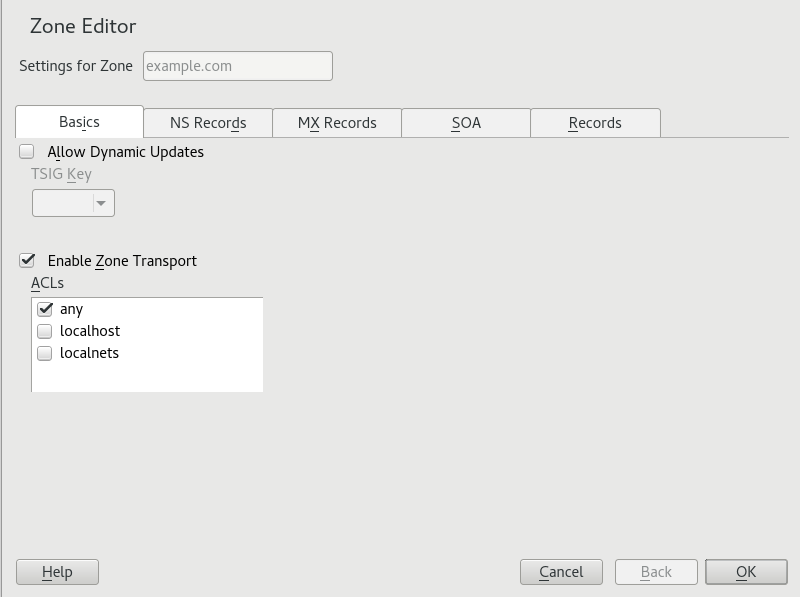

- 31.5 DNS server: Zone Editor (Basics)

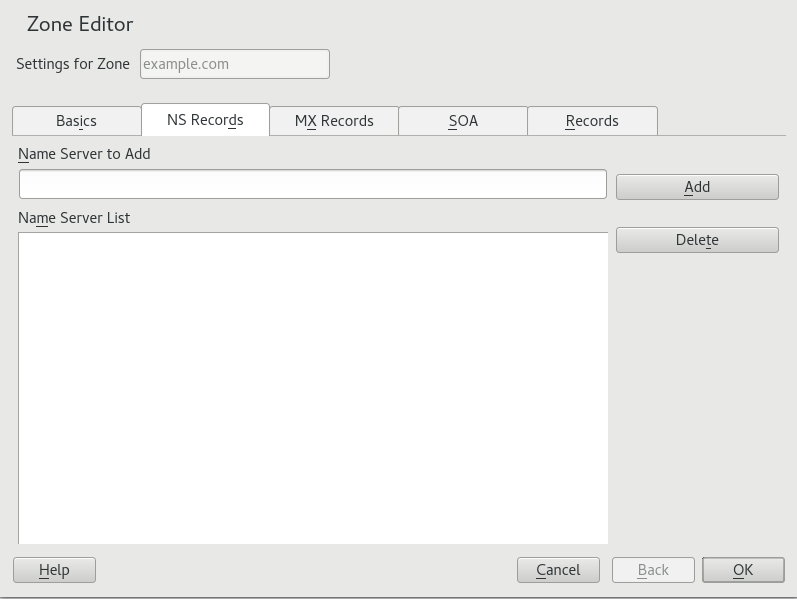

- 31.6 DNS server: Zone Editor (NS Records)

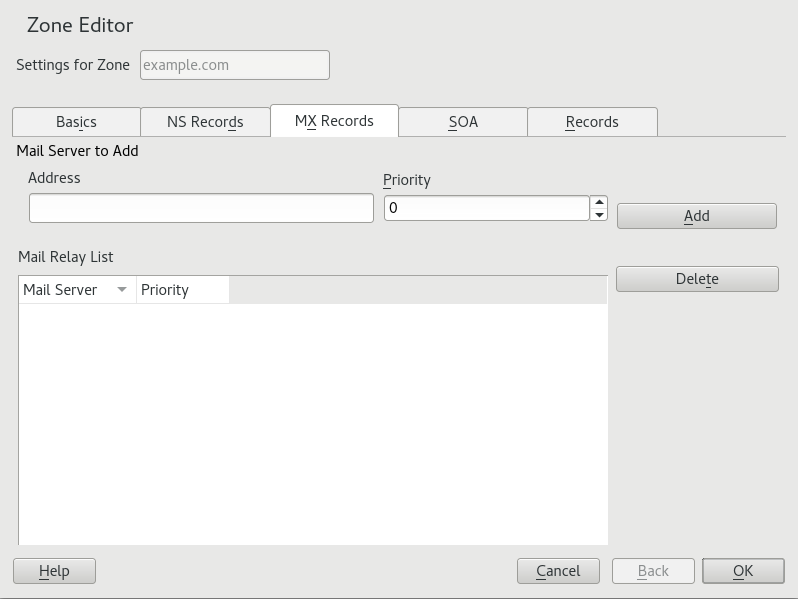

- 31.7 DNS server: Zone Editor (MX Records)

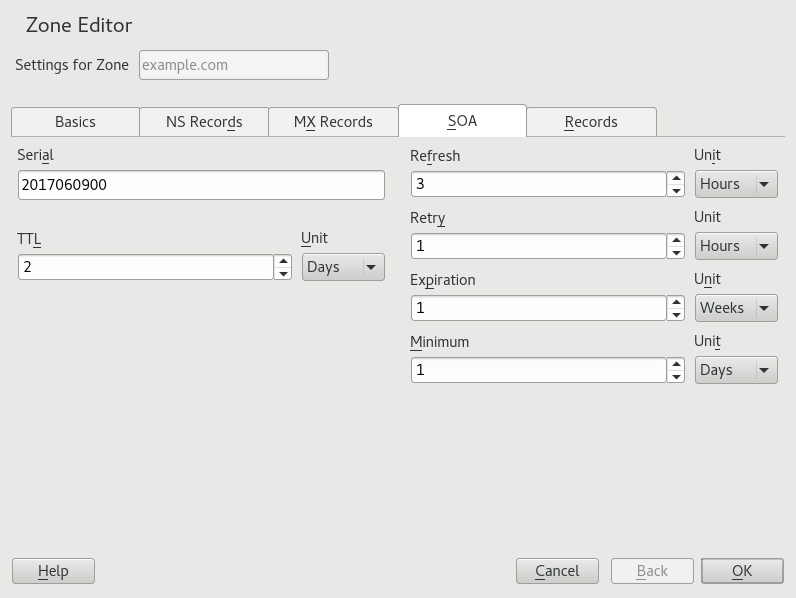

- 31.8 DNS server: Zone Editor (SOA)

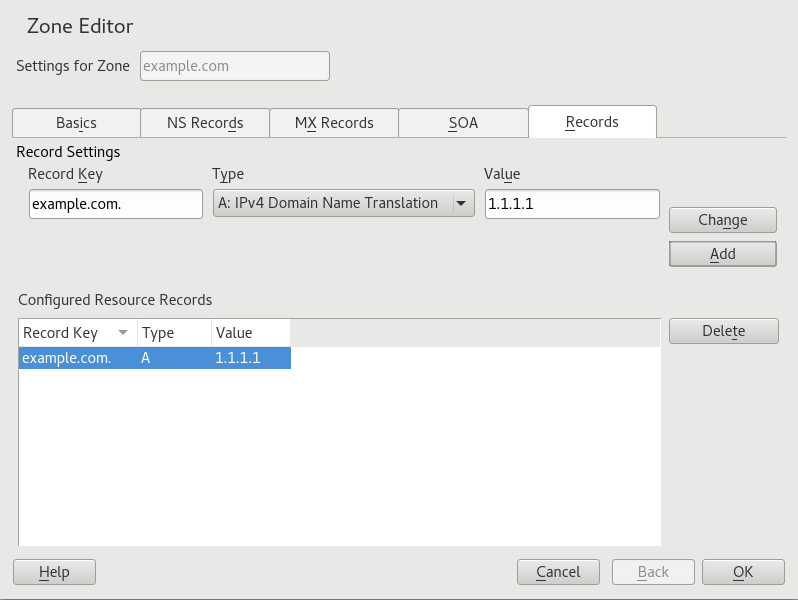

- 31.9 Adding a record for a master zone

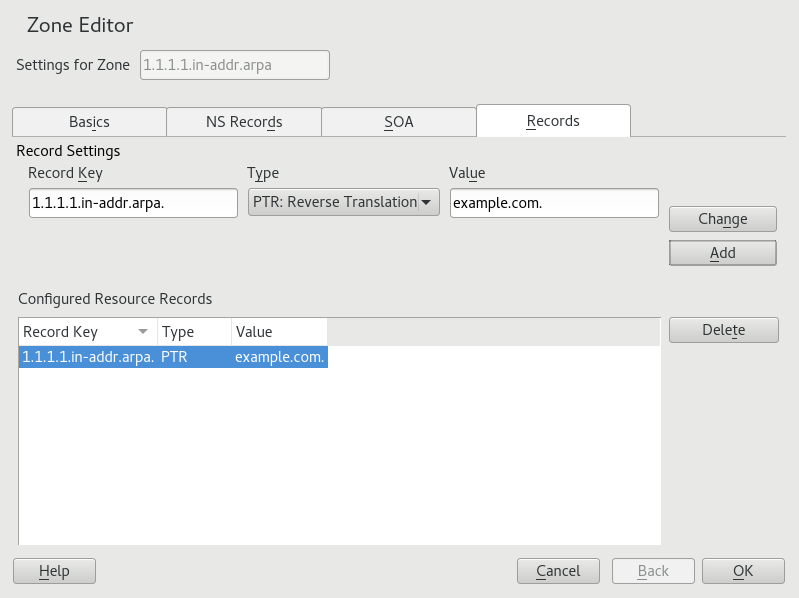

- 31.10 Adding a reverse zone

- 31.11 Adding a reverse record

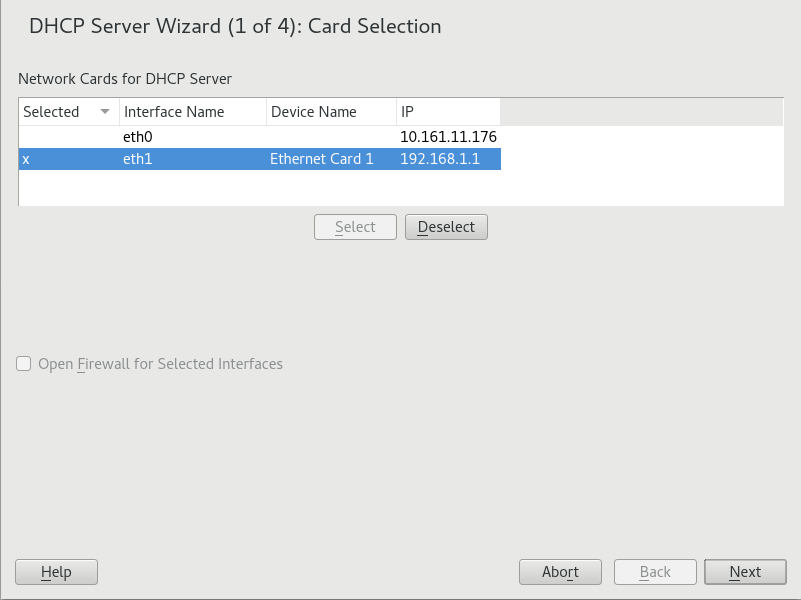

- 32.1 DHCP server: card selection

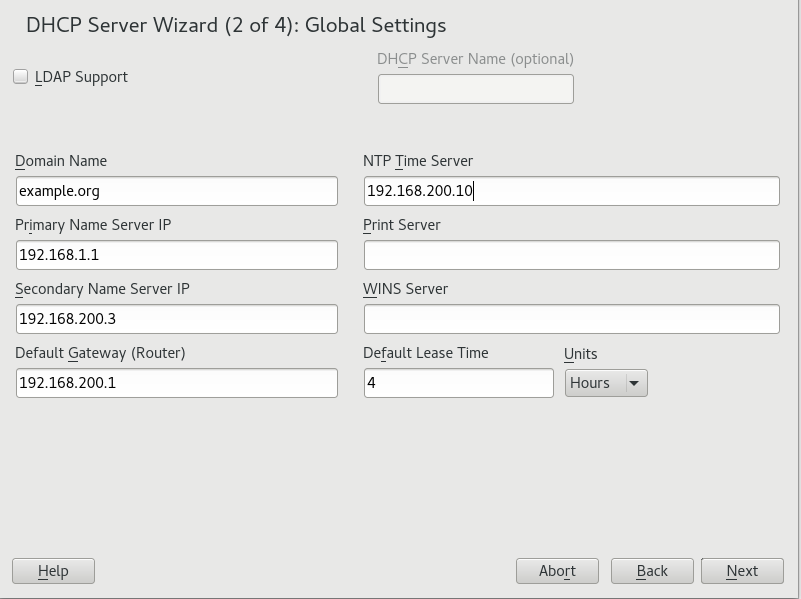

- 32.2 DHCP server: global settings

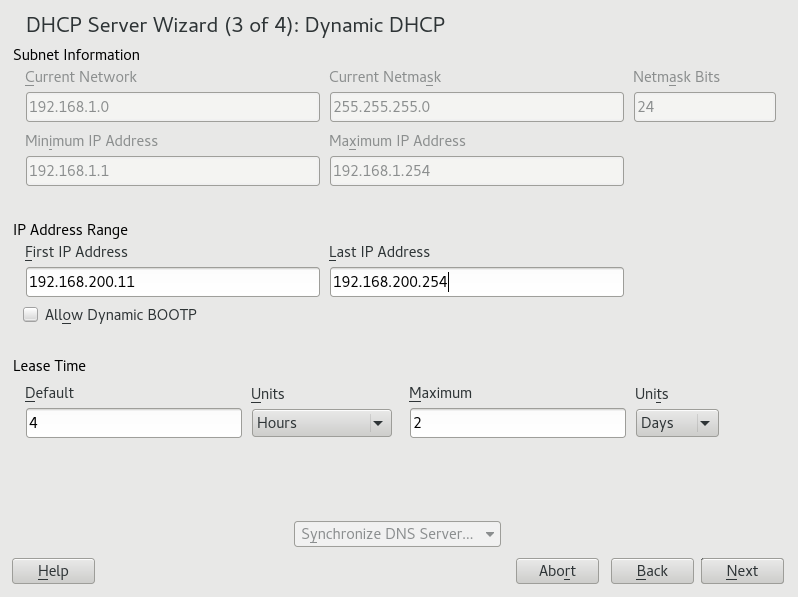

- 32.3 DHCP server: dynamic DHCP

- 32.4 DHCP server: start-up

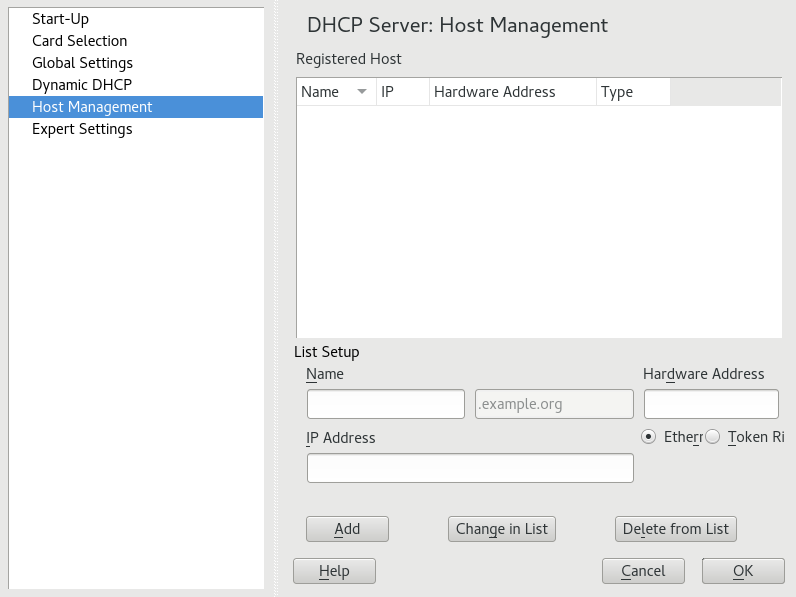

- 32.5 DHCP server: host management

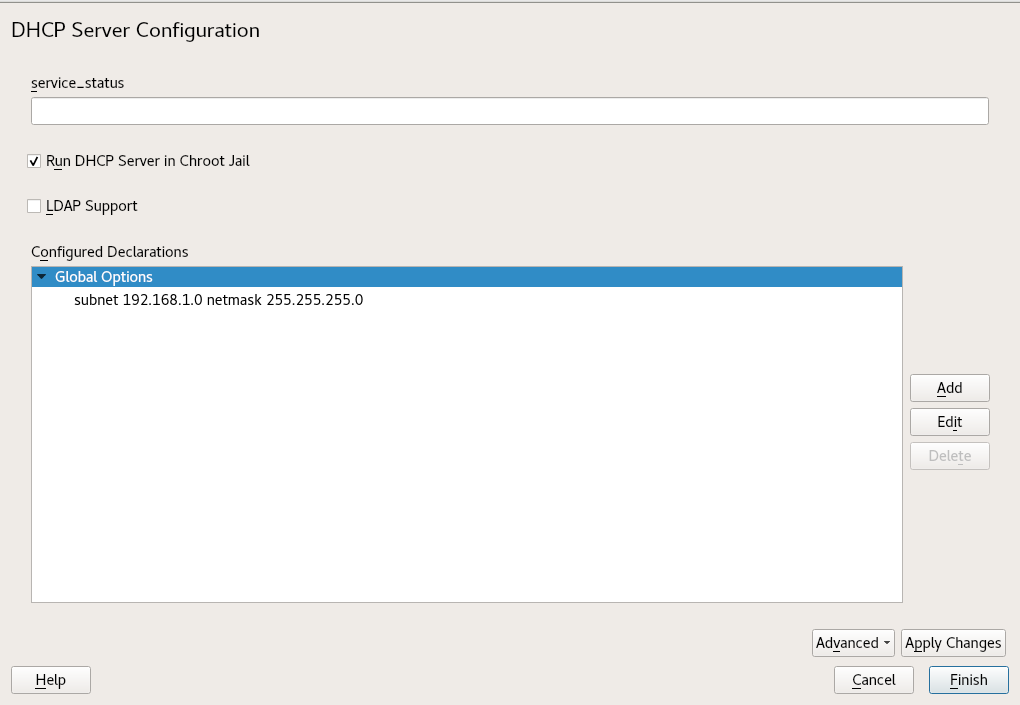

- 32.6 DHCP server: chroot jail and declarations

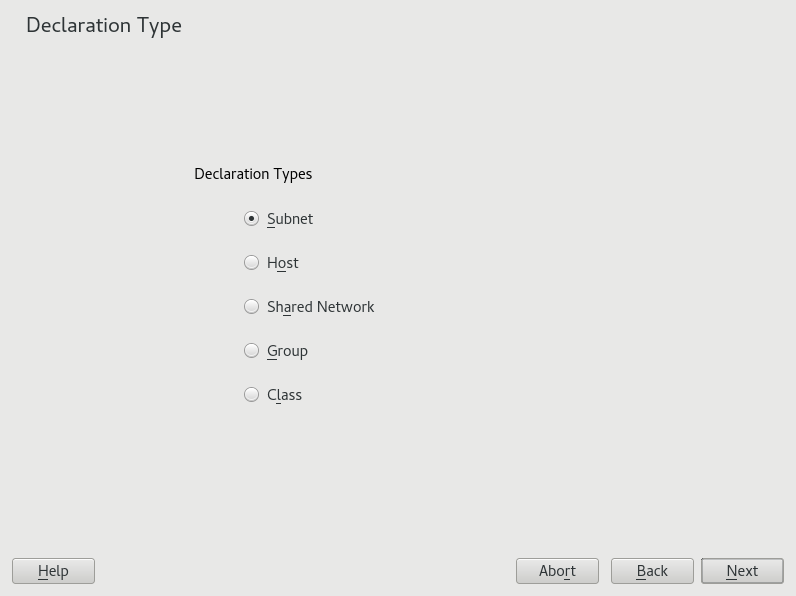

- 32.7 DHCP server: selecting a declaration type

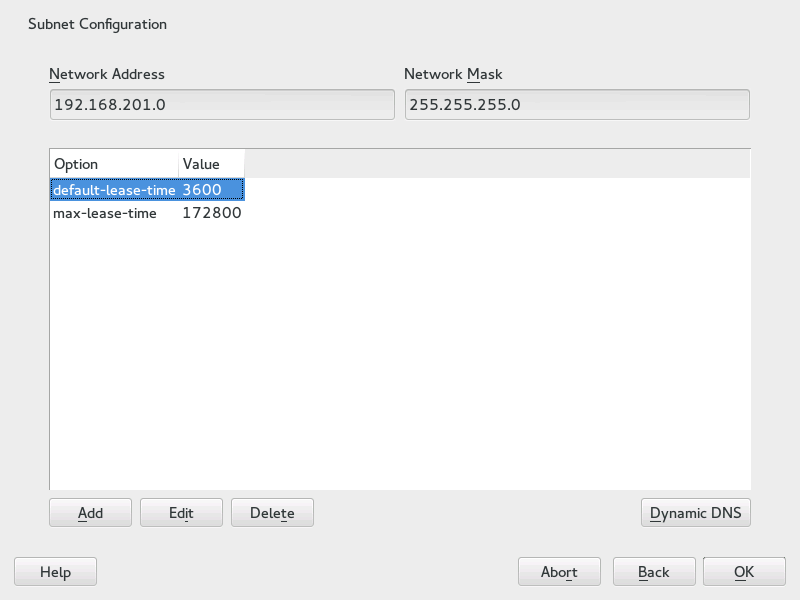

- 32.8 DHCP server: configuring subnets

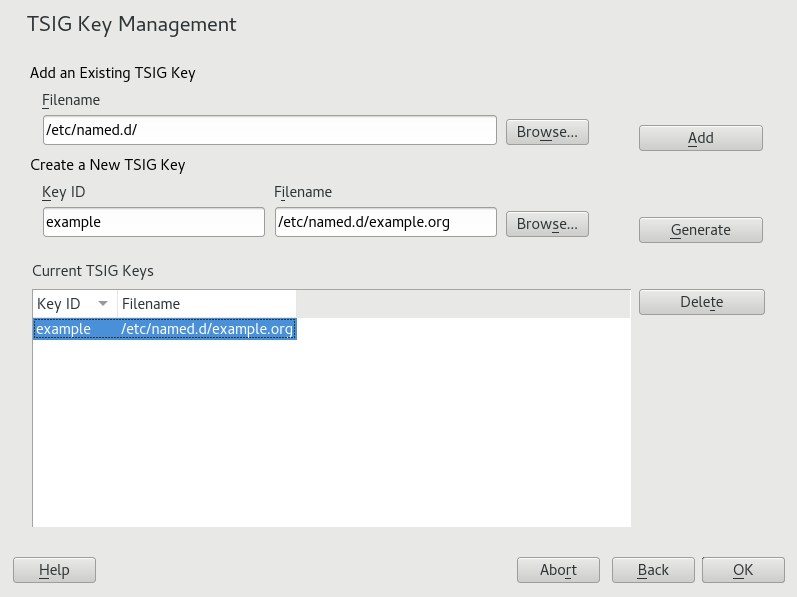

- 32.9 DHCP server: TSIG configuration

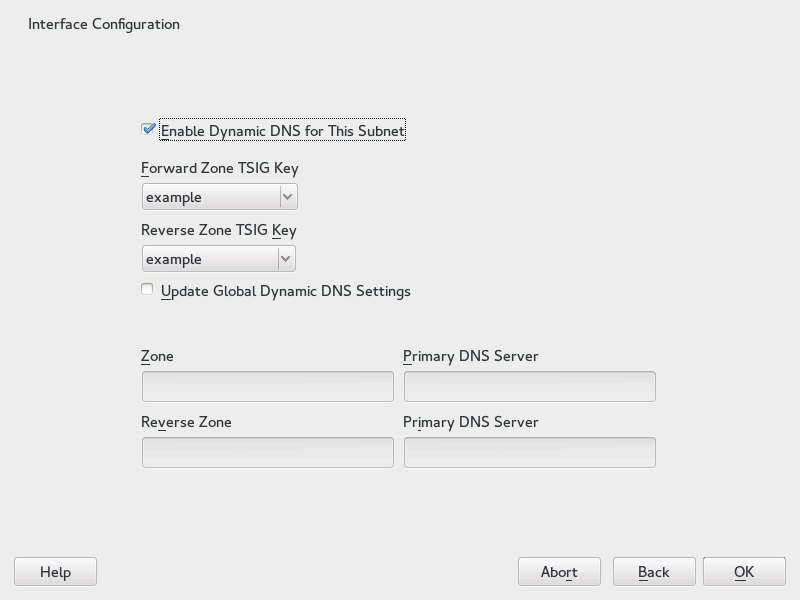

- 32.10 DHCP server: interface configuration for dynamic DNS

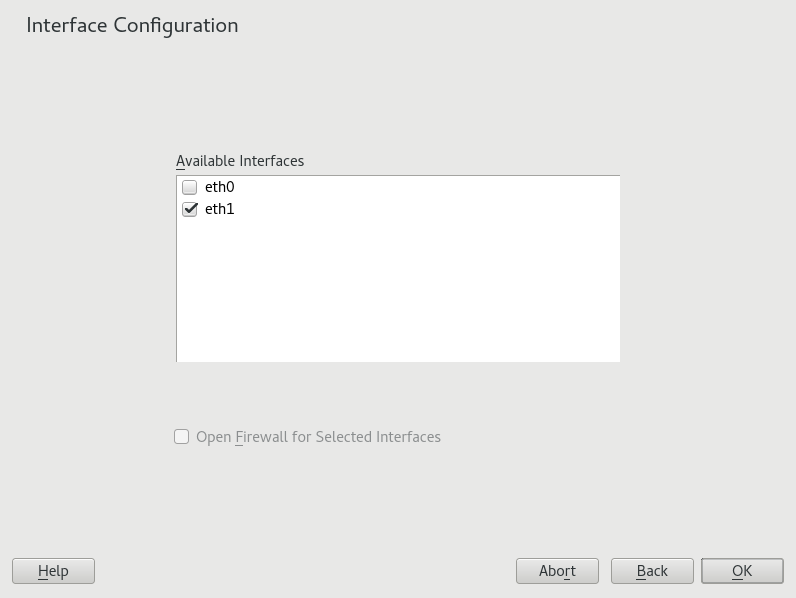

- 32.11 DHCP server: network interface and firewall

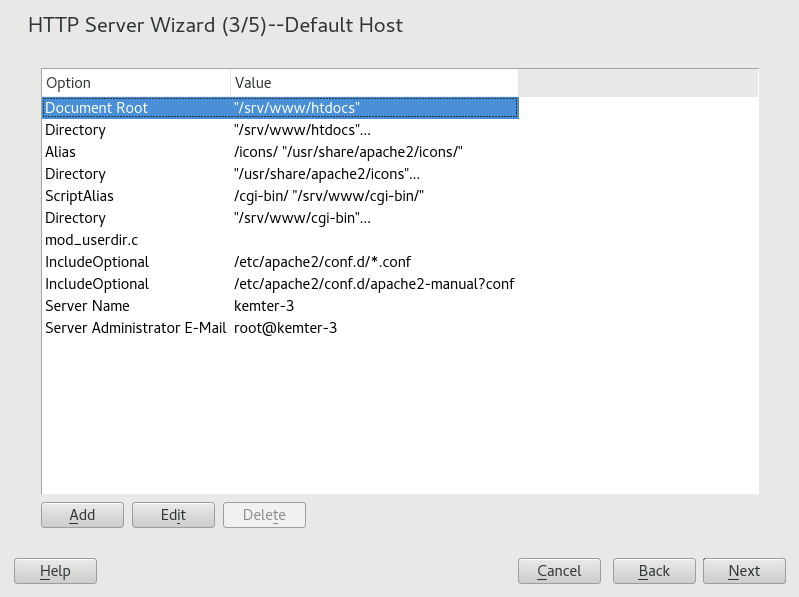

- 34.1 HTTP server wizard: default host

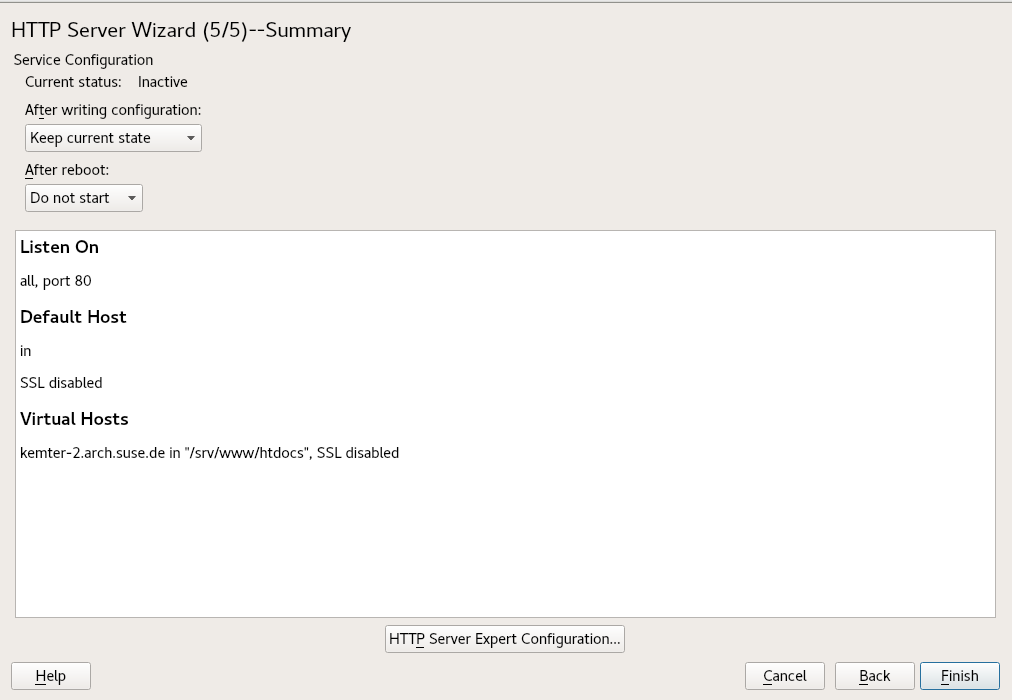

- 34.2 HTTP server wizard: summary

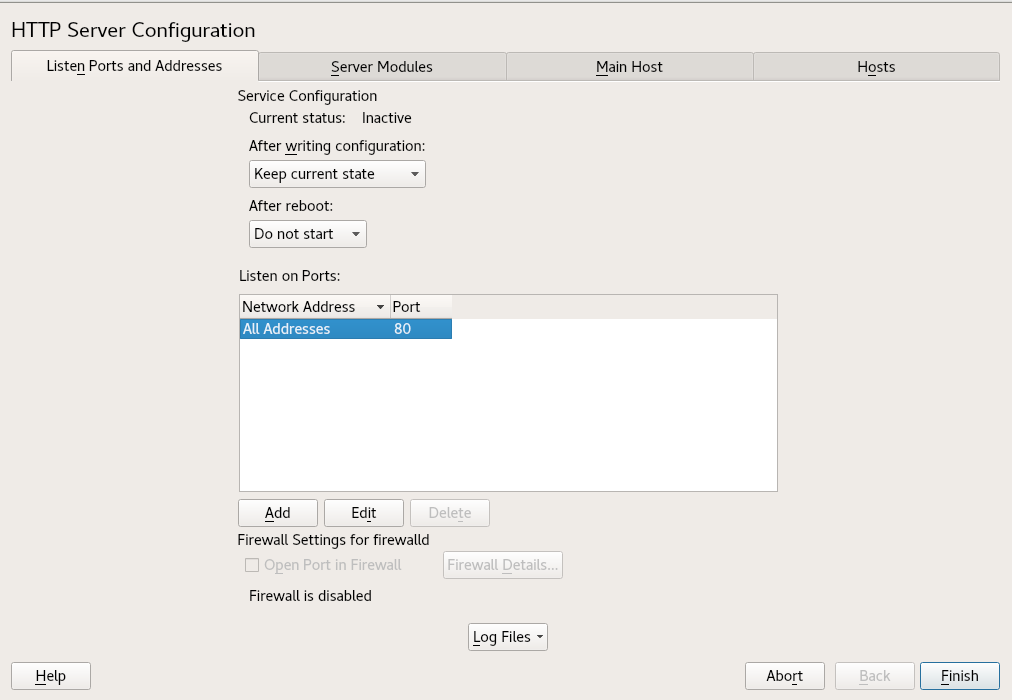

- 34.3 HTTP server configuration: listen ports and addresses

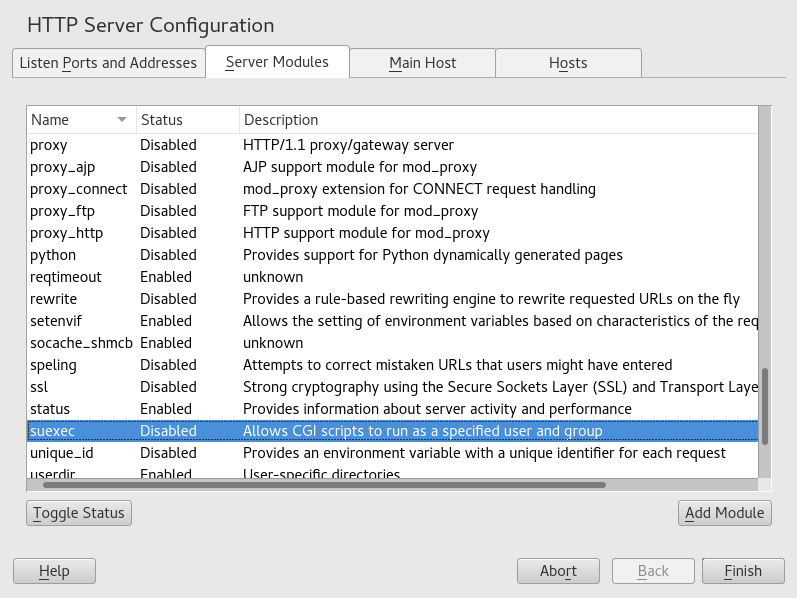

- 34.4 HTTP server configuration: server modules

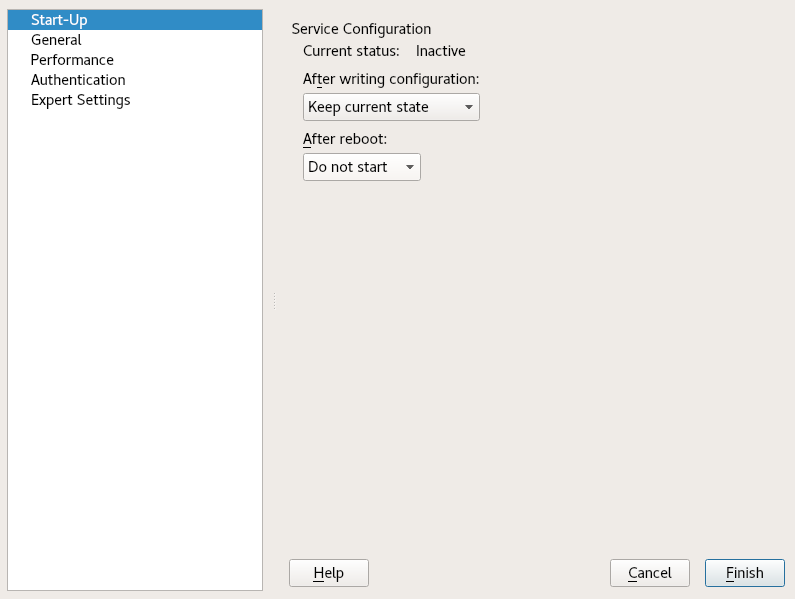

- 35.1 FTP server configuration — start-up

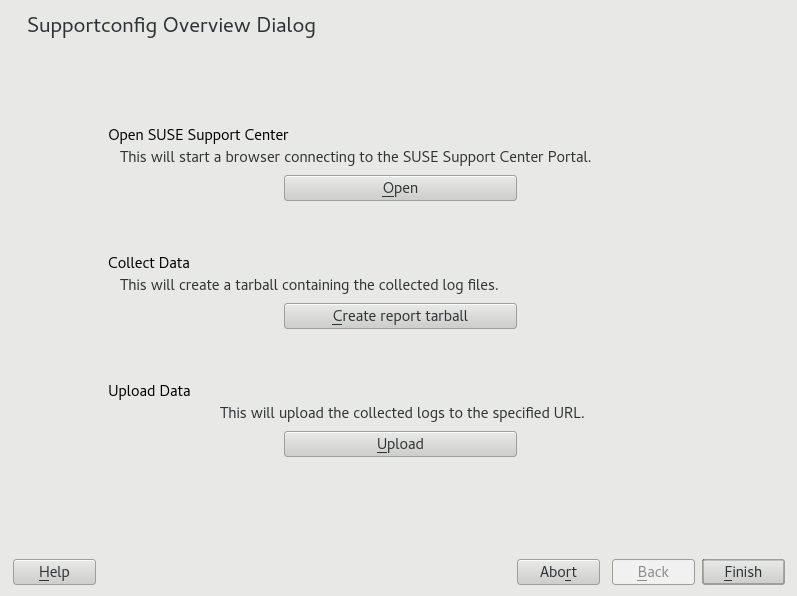

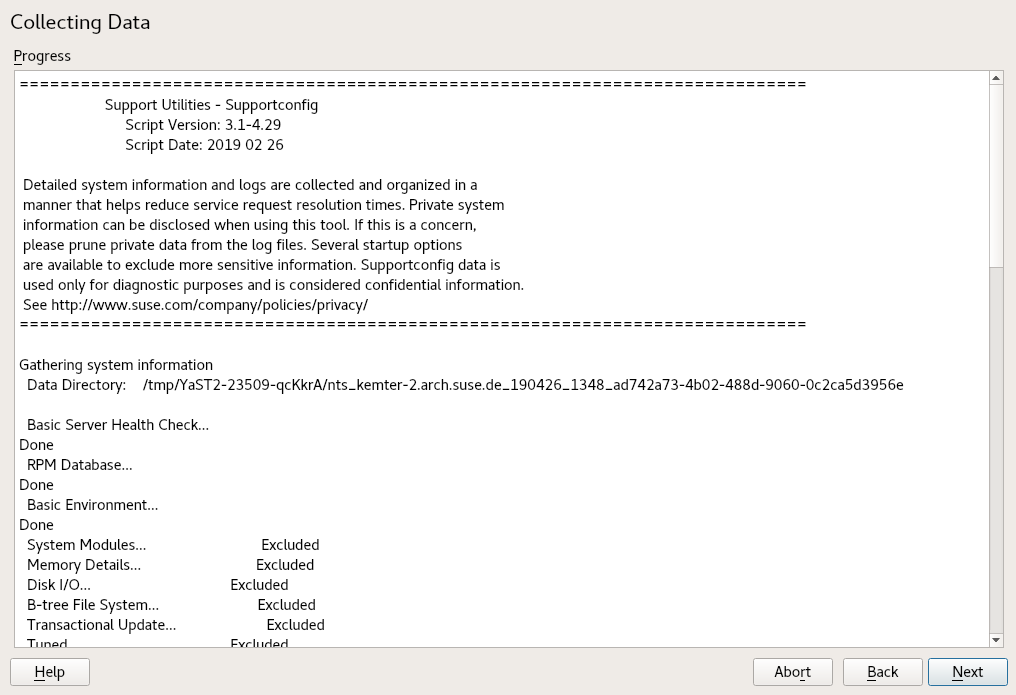

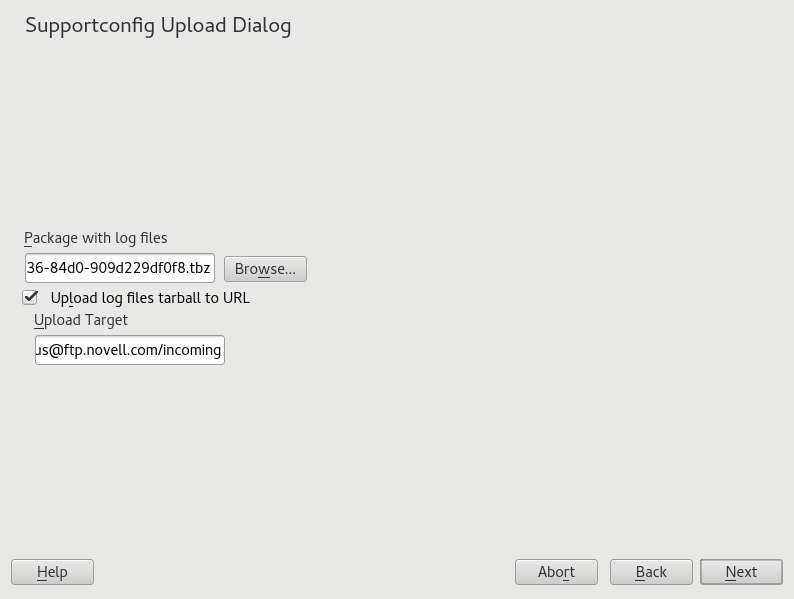

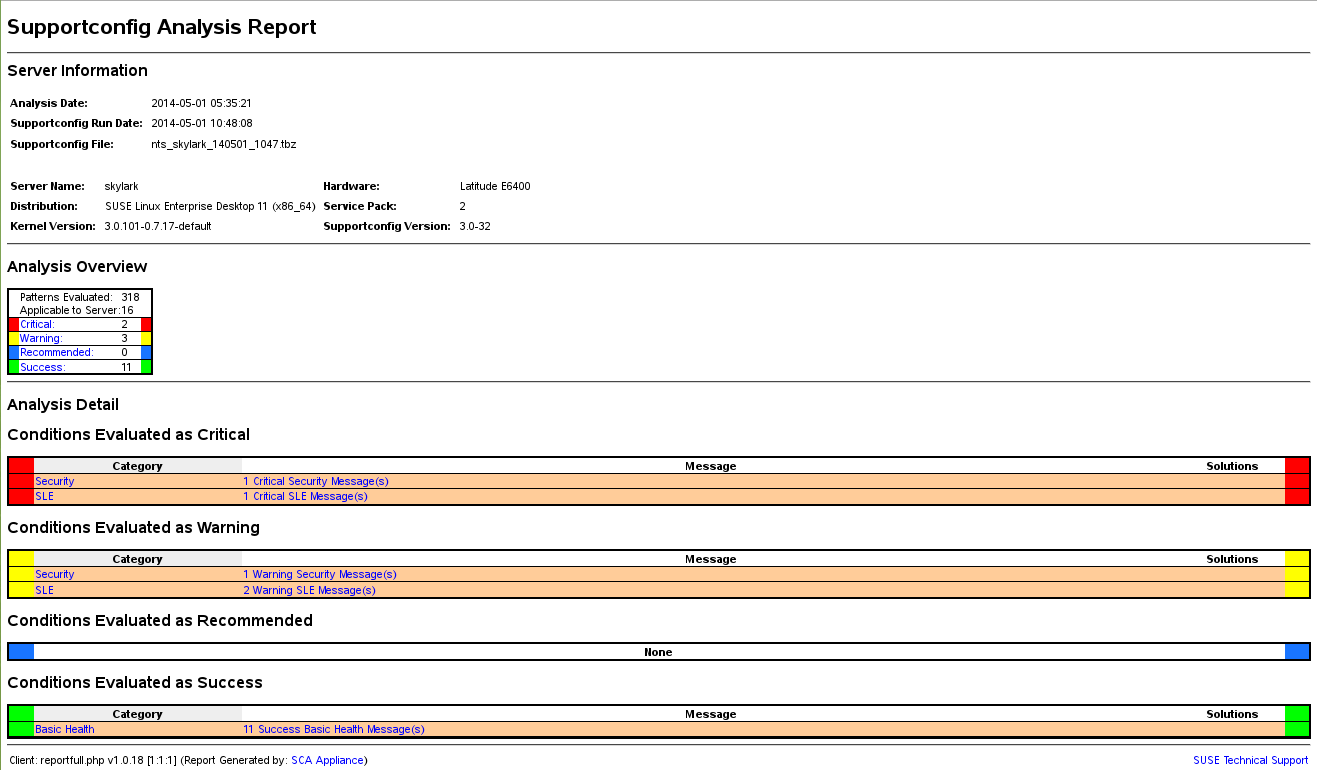

- 39.1 HTML report generated by SCA tool

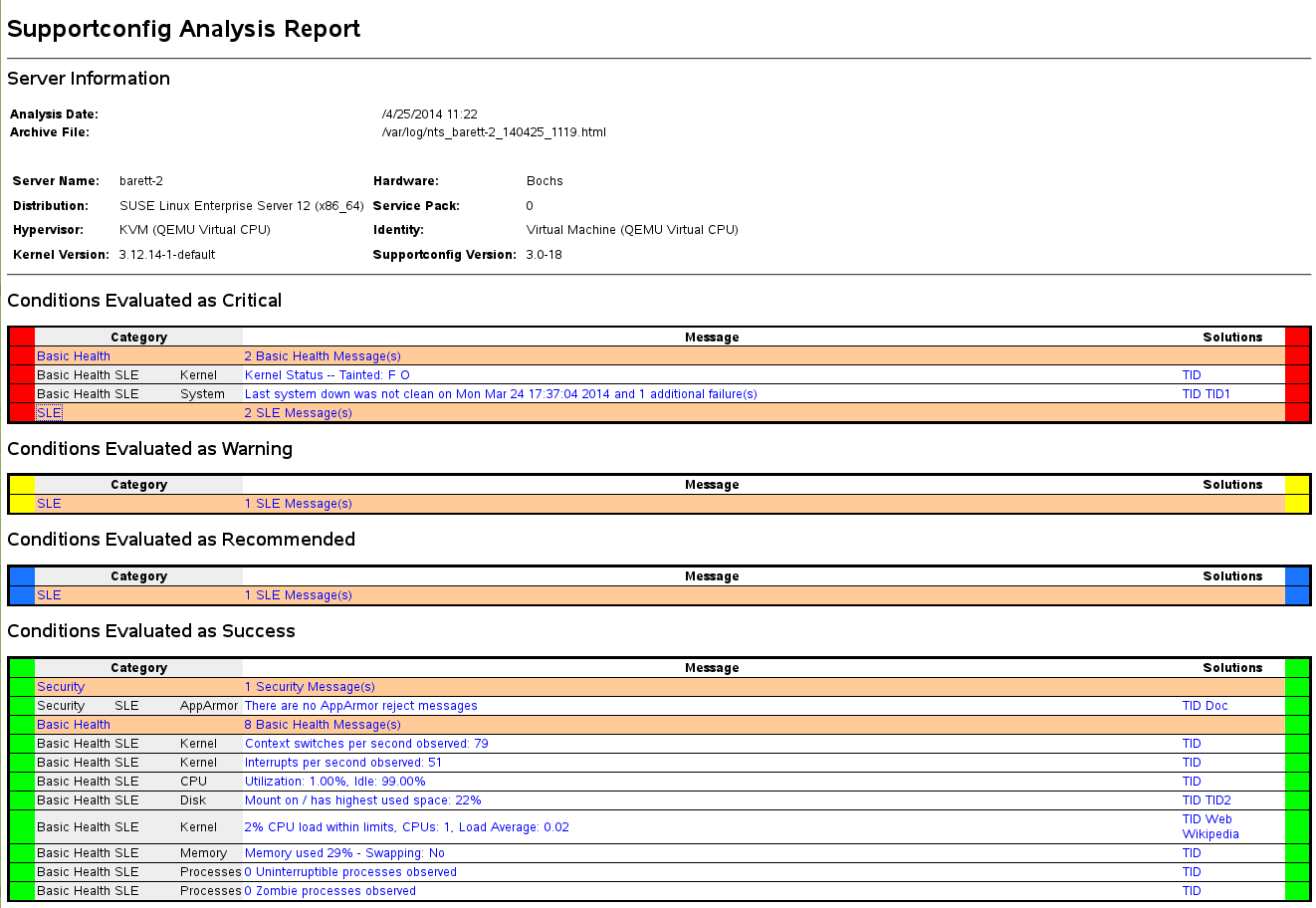

- 39.2 HTML report generated by SCA appliance

- 1.1 Bash configuration files for login shells

- 1.2 Bash configuration files for non-login shells

- 1.3 Special files for Bash

- 1.4 Overview of a standard directory tree

- 1.5 Useful environment variables

- 6.1 Essential RPM query options

- 6.2 RPM verify options

- 15.1 Service management commands

- 15.2 Commands for enabling and disabling services

- 15.3 System V runlevels and systemd target units

- 19.1 Private IP address domains

- 19.2 Parameters for /etc/host.conf

- 19.3 Databases available via /etc/nsswitch.conf

- 19.4 Configuration options for NSS “databases”

- 19.5 Feature comparison between bonding and team

- 21.1 Generating PFL from fontconfig rules

- 21.2 Results from generating PFL from fontconfig rules with changed order

- 21.3 Results from generating PFL from fontconfig rules

- 25.1 ulimit: Setting resources for the user

- 37.1 Commands for managing sfcbd

- 38.1 Man pages—categories and descriptions

- 39.1 Comparison of features and file names in the TAR archive

- 40.1 Log files

- 40.2 System information with the /proc file system

- 40.3 System information with the /sys file system

- 1.1 A shell script printing a text

- 6.1 Zypper—list of known repositories

- 6.2 rpm -q -i wget

- 6.3 Script to search for packages

- 7.1 Example timeline configuration

- 14.1 Usage of grub2-mkconfig

- 14.2 Usage of grub2-mkrescue

- 14.3 Usage of grub2-script-check

- 14.4 Usage of grub2-once

- 15.1 List active services

- 15.2 List failed services

- 15.3 List all processes belonging to a service

- 18.1 Alternatives System of the java command

- 19.1 Writing IP addresses

- 19.2 Linking IP addresses to the netmask

- 19.3 Sample IPv6 address

- 19.4 IPv6 address specifying the prefix length

- 19.5 Common network interfaces and some static routes

- 19.6 /var/run/netconfig/resolv.conf

- 19.7 /etc/hosts

- 19.8 /etc/networks

- 19.9 /etc/host.conf

- 19.10 /etc/nsswitch.conf

- 19.11 Output of the command ping

- 19.12 Configuration for load balancing with Network Teaming

- 19.13 Configuration for DHCP Network Teaming device

- 20.1 Error message from lpd

- 20.2 Broadcast from the CUPS network server

- 21.1 Specifying rendering algorithms

- 21.2 Aliases and family name substitutions

- 21.3 Aliases and family name substitutions

- 21.4 Aliases and family names substitutions

- 24.1 Example udev rules

- 25.1 Entry in /etc/crontab

- 25.2 /etc/crontab: remove time stamp files

- 25.3 ulimit: Settings in ~/.bashrc

- 31.1 Forwarding options in named.conf

- 31.2 A basic /etc/named.conf

- 31.3 Entry to disable logging

- 31.4 Zone entry for example.com

- 31.5 Zone entry for example.net

- 31.6 The /var/lib/named/example.com.zone file

- 31.7 Reverse lookup

- 32.1 The configuration file /etc/dhcpd.conf

- 32.2 Additions to the configuration file

- 34.1 Basic examples of name-based VirtualHost entries

- 34.2 Name-based VirtualHost directives

- 34.3 IP-based VirtualHost directives

- 34.4 Basic VirtualHost configuration

- 34.5 VirtualHost CGI configuration

- 36.1 A request with squidclient

- 36.2 Defining ACL rules

- 39.1 Output of hostinfo when logging in as root

Copyright © 2006–2024 SUSE LLC and contributors. All rights reserved.

Permission is granted to copy, distribute and/or modify this document under the terms of the GNU Free Documentation License, Version 1.2 or (at your option) version 1.3; with the Invariant Section being this copyright notice and license. A copy of the license version 1.2 is included in the section entitled “GNU Free Documentation License”.

For SUSE trademarks, see https://www.suse.com/company/legal/. All third-party trademarks are the property of their respective owners. Trademark symbols (®, ™ etc.) denote trademarks of SUSE and its affiliates. Asterisks (*) denote third-party trademarks.

All information found in this book has been compiled with utmost attention to detail. However, this does not guarantee complete accuracy. Neither SUSE LLC, its affiliates, the authors nor the translators shall be held liable for possible errors or the consequences thereof.

Preface #

1 Available documentation #

- Online documentation

Our documentation is available online at https://documentation.suse.com. Browse or download the documentation in various formats.

Note: Latest updates

The latest updates are usually available in the English-language version of this documentation.

- SUSE Knowledgebase

If you have run into an issue, also check out the Technical Information Documents (TIDs) that are available online at https://www.suse.com/support/kb/. Search the SUSE Knowledgebase for known solutions driven by customer need.

- Release notes

For release notes, see https://www.suse.com/releasenotes/.

- In your system

For offline use, the release notes are also available under /usr/share/doc/release-notes on your system. The documentation for individual packages is available at /usr/share/doc/packages.

Many commands are also described in their manual pages. To view them, run man, followed by a specific command name. If the man command is not installed on your system, install it with sudo zypper install man.

2 Improving the documentation #

Your feedback and contributions to this documentation are welcome. The following channels for giving feedback are available:

- Service requests and support

For services and support options available for your product, see https://www.suse.com/support/.

To open a service request, you need a SUSE subscription registered at SUSE Customer Center. Go to https://scc.suse.com/support/requests, log in, and click .

- Bug reports

Report issues with the documentation at https://bugzilla.suse.com/.

To simplify this process, click the icon next to a headline in the HTML version of this document. This preselects the right product and category in Bugzilla and adds a link to the current section. You can start typing your bug report right away.

A Bugzilla account is required.

- Contributions

To contribute to this documentation, click the icon next to a headline in the HTML version of this document. This will take you to the source code on GitHub, where you can open a pull request.

A GitHub account is required.

Note: only available for English

The icons are only available for the English version of each document. For all other languages, use the icons instead.

For more information about the documentation environment used for this documentation, see the repository's README.

You can also report errors and send feedback concerning the documentation to <doc-team@suse.com>. Include the document title, the product version, and the publication date of the document. Additionally, include the relevant section number and title (or provide the URL) and provide a concise description of the problem.

3 Documentation conventions #

The following notices and typographic conventions are used in this document:

/etc/passwd: Directory names and file names

PLACEHOLDER: Replace PLACEHOLDER with the actual value

PATH: An environment variable

ls, --help: Commands, options, and parameters

user: The name of a user or group

package_name: The name of a software package

Alt, Alt–F1: A key to press or a key combination. Keys are shown in uppercase as on a keyboard.

, › : menu items, buttons

AMD/Intel This paragraph is only relevant for the AMD64/Intel 64 architectures. The arrows mark the beginning and the end of the text block.

IBM Z, POWER This paragraph is only relevant for the architectures IBM Z and POWER. The arrows mark the beginning and the end of the text block.

Chapter 1, “Example chapter”: A cross-reference to another chapter in this guide.

Commands that must be run with root privileges. You can also prefix these commands with the sudo command to run them as a non-privileged user:

# command
> sudo command

Commands that can be run by non-privileged users:

> command

Commands can be split into two or multiple lines by a backslash character (\) at the end of a line. The backslash informs the shell that the command invocation will continue after the line's end:

> echo a b \
c d

A code block that shows both the command (preceded by a prompt) and the respective output returned by the shell:

> command
output

Notices

Warning: Warning notice

Vital information you must be aware of before proceeding. Warns you about security issues, potential loss of data, damage to hardware, or physical hazards.

Important: Important notice

Important information you should be aware of before proceeding.

Note: Note notice

Additional information, for example about differences in software versions.

Tip: Tip notice

Helpful information, like a guideline or a piece of practical advice.

Compact Notices

Additional information, for example about differences in software versions.

Helpful information, like a guideline or a piece of practical advice.

4 Support #

Find the support statement for SUSE Linux Enterprise Server and general information about technology previews below. For details about the product lifecycle, see https://www.suse.com/lifecycle.

If you are entitled to support, find details on how to collect information for a support ticket at https://documentation.suse.com/sles-15/html/SLES-all/cha-adm-support.html.

4.1 Support statement for SUSE Linux Enterprise Server #

To receive support, you need an appropriate subscription with SUSE. To view the specific support offers available to you, go to https://www.suse.com/support/ and select your product.

The support levels are defined as follows:

- L1

Problem determination, which means technical support designed to provide compatibility information, usage support, ongoing maintenance, information gathering and basic troubleshooting using available documentation.

- L2

Problem isolation, which means technical support designed to analyze data, reproduce customer problems, isolate a problem area and provide a resolution for problems not resolved by Level 1 or prepare for Level 3.

- L3

Problem resolution, which means technical support designed to resolve problems by engaging engineering to resolve product defects which have been identified by Level 2 Support.

For contracted customers and partners, SUSE Linux Enterprise Server is delivered with L3 support for all packages, except for the following:

Technology previews.

Sound, graphics, fonts, and artwork.

Packages that require an additional customer contract.

Some packages shipped as part of the module Workstation Extension are L2-supported only.

Packages with names ending in -devel (containing header files and similar developer resources) will only be supported together with their main packages.

SUSE will only support the usage of original packages. That is, packages that are unchanged and not recompiled.

4.2 Technology previews #

Technology previews are packages, stacks, or features delivered by SUSE to provide glimpses into upcoming innovations. Technology previews are included for your convenience to give you a chance to test new technologies within your environment. We would appreciate your feedback. If you test a technology preview, please contact your SUSE representative and let them know about your experience and use cases. Your input is helpful for future development.

Technology previews have the following limitations:

Technology previews are still in development. Therefore, they may be functionally incomplete, unstable, or otherwise not suitable for production use.

Technology previews are not supported.

Technology previews may only be available for specific hardware architectures.

Details and functionality of technology previews are subject to change. As a result, upgrading to subsequent releases of a technology preview may be impossible and require a fresh installation.

SUSE may discover that a preview does not meet customer or market needs, or does not comply with enterprise standards. Technology previews can be removed from a product at any time. SUSE does not commit to providing a supported version of such technologies in the future.

For an overview of technology previews shipped with your product, see the release notes at https://www.suse.com/releasenotes.

Part I Common tasks #

- 1 Bash and Bash scripts

Today, many people use computers with a graphical user interface (GUI) like GNOME. Although GUIs offer many features, they are limited when it comes to automating tasks. Shells complement GUIs well, and this chapter gives an overview of some aspects of shells, in this case the Bash shell.

- 2 sudo basics

Running certain commands requires root privileges. However, for security reasons and to avoid mistakes, it is not recommended to log in as root. A safer approach is to log in as a regular user, and then use sudo to run commands with elevated privileges.

- 3 Using YaST

YaST is a SUSE Linux Enterprise Server tool that provides a graphical interface for all essential installation and system configuration tasks. Whether you need to update packages, configure a printer, modify firewall settings, set up an FTP server, or partition a hard disk—you can do it using YaST. …

- 4 YaST in text mode

The ncurses-based pseudo-graphical YaST interface is designed primarily to help system administrators to manage systems without an X server. The interface offers several advantages compared to the conventional GUI. You can navigate the ncurses interface using the keyboard, and there are keyboard sho…

- 5 YaST online update

SUSE offers a continuous stream of software security updates for your product. By default, the update applet is used to keep your system up-to-date. Refer to Book “Deployment Guide”, Chapter 21 “Installing or removing software”, Section 21.5 “The GNOME package updater” for further information on the…

- 6 Managing software with command line tools

This chapter describes Zypper and RPM, two command line tools for managing software. For a definition of the terminology used in this context (for example, repository, patch, or update) refer to Book “Deployment Guide”, Chapter 21 “Installing or removing software”, Section 21.1 “Definition of terms”.

- 7 System recovery and snapshot management with Snapper

Snapper allows creating and managing file system snapshots. File system snapshots allow keeping a copy of the state of a file system at a certain point of time. The standard setup of Snapper is designed to allow rolling back system changes. However, you can also use it to create on-disk backups of user data. As the basis for this functionality, Snapper uses the Btrfs file system or thinly-provisioned LVM volumes with an XFS or Ext4 file system.

- 8 Live kernel patching with KLP

This document describes the basic principles of the Kernel Live Patching (KLP) technology, and provides usage guidelines for the SLE Live Patching service.

- 9 Transactional updates

Transactional updates are available in SUSE Linux Enterprise Server as a technology preview, for updating SLES when the root file system is read-only. Transactional updates are atomic (all updates are applied only if all updates succeed) and support rollbacks. They do not affect a running system, as no changes are activated until after the system is rebooted. As reboots are disruptive, the admin must decide if a reboot is more expensive than disturbing running services. If reboots are too expensive, do not use transactional updates.

Transactional updates are run daily by the transactional-update script. The script checks for available updates. If there are any updates, it creates a new snapshot of the root file system in the background, and then fetches updates from the release channels. After the new snapshot is completely updated, it is marked as active and will be the new default root file system after the next reboot of the system. When transactional-update is set to run automatically (which is the default behavior) it also reboots the system. Both the time that the update runs and the reboot maintenance window are configurable.

Only packages that are part of the snapshot of the root file system can be updated. If packages contain files that are not part of the snapshot, the update could fail or break the system.

RPMs that require a license to be accepted cannot be updated.

- 10 Remote graphical sessions with VNC

Virtual Network Computing (VNC) enables you to access a remote computer via a graphical desktop, and run remote graphical applications. VNC is platform-independent and lets you access the remote machine from any operating system. This chapter describes how to connect to a VNC server with the desktop clients vncviewer and Remmina, and how to operate a VNC server.

SUSE Linux Enterprise Server supports two different kinds of VNC sessions: One-time sessions that “live” as long as the VNC connection from the client is kept up, and persistent sessions that “live” until they are explicitly terminated.

A VNC server can offer both kinds of sessions simultaneously on different ports, but an open session cannot be converted from one type to the other.

- 11 File copying with RSync

Today, a typical user has several computers: home and workplace machines, a laptop, a smartphone or a tablet. This makes the task of keeping files and documents in synchronization across multiple devices all the more important.

1 Bash and Bash scripts #

Today, many people use computers with a graphical user interface (GUI) like GNOME. Although GUIs offer many features, they are limited when it comes to automating tasks. Shells complement GUIs well, and this chapter gives an overview of some aspects of shells, in this case the Bash shell.

1.1 What is “the shell”? #

Traditionally, the Linux shell is Bash (Bourne-again shell). When this chapter speaks about “the shell” it means Bash. There are more shells available (ash, csh, ksh, zsh, …), each with different features and characteristics.
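To see which shells are installed on a given machine, one common approach is to read /etc/shells, the file that conventionally lists valid login shells. The following is a minimal sketch, not part of the product tooling: the function name list_shells is made up for illustration, and the file is assumed to use the usual layout of one path per line with # comments.

```shell
#!/bin/bash
# list_shells is a hypothetical helper: it prints the shell paths found in
# a shells file (default: /etc/shells), skipping comments and blank lines.
list_shells() {
    local file="${1:-/etc/shells}"
    grep -v -e '^[[:space:]]*#' -e '^[[:space:]]*$' "$file"
}

# Show the installed login shells if the file exists on this system.
if [ -r /etc/shells ]; then
    list_shells
fi

# Show the login shell of the current user, as exported in the environment.
echo "Login shell: ${SHELL:-unknown}"
```

The output depends on which shell packages are installed.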

1.1.1 Bash configuration files #

A shell can be invoked as one of the following:

Interactive login shell. This is used when logging in to a machine, invoking Bash with the --login option or when logging in to a remote machine with SSH.

Interactive non-login shell. This is normally the case when starting xterm, konsole, gnome-terminal, or similar command-line interface (CLI) tools.

Non-interactive non-login shell. This is used when invoking a shell script at the command line.
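The invocation types above can be told apart from inside a running Bash instance. The sketch below relies on two Bash features: the special parameter $- (which contains the letter i in interactive shells) and the read-only login_shell shell option. The function name shell_type is an assumption made for this example.

```shell
#!/bin/bash
# shell_type is a hypothetical helper: it reports how the current Bash
# instance was invoked.
shell_type() {
    local interactive login
    # $- contains the letter "i" when the shell is interactive.
    case $- in
        *i*) interactive="interactive" ;;
        *)   interactive="non-interactive" ;;
    esac
    # Bash sets the login_shell option itself when started as a login shell.
    if shopt -q login_shell; then
        login="login"
    else
        login="non-login"
    fi
    echo "$interactive $login shell"
}

shell_type
```

Saving this as a script and running it from the command line exercises the third case in the list. Pasting the function into a terminal window and calling it there exercises the second.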

Depending on the type of shell you use, different configuration files will be read. The following tables show the login and non-login shell configuration files.

Bash looks for its configuration files in a specific order depending on the type of shell where it is run. Find more details on the Bash man page (man 1 bash). Search for the headline INVOCATION.

Table 1.1: Bash configuration files for login shells

| File | Description |
|---|---|
| | Do not modify this file, otherwise your modifications may be destroyed during your next update! |
| | Use this file if you extend |
| | Contains system-wide configuration files for specific programs |
| | Insert user specific configuration for login shells here |

Note that the login shell also sources the configuration files listed under Table 1.2, “Bash configuration files for non-login shells”.

Table 1.2: Bash configuration files for non-login shells

| File | Description |
|---|---|
| | Do not modify this file, otherwise your modifications may be destroyed during your next update! |
| | Use this file to insert your system-wide modifications for Bash only |
| | Insert user specific configuration here |

Additionally, Bash uses some more files:

Table 1.3: Special files for Bash

| File | Description |
|---|---|
| | Contains a list of all commands you have typed |
| | Executed when logging out |
| | User defined aliases of frequently used commands. See |

No-Login Shells#

There are special shells that block users from logging into the system: /bin/false and /sbin/nologin. Both fail silently when the user attempts to log into the system. This was intended as a security measure for system users, though modern Linux operating systems have more effective tools for controlling system access, such as PAM and AppArmor.

The default on SUSE Linux Enterprise Server is to assign /bin/bash to human users, and /bin/false or /sbin/nologin to system users. The nobody user has /bin/bash for historical reasons, as it is a minimally-privileged user that used to be the default for system users. However, whatever little security is gained by using nobody is lost when multiple system users use it. It should be possible to change it to /sbin/nologin; the fastest way to test this is to change it and see whether any services or applications break.
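Before changing the shell of an account such as nobody, it can help to check what is currently assigned. The following is a minimal sketch that reads the seventh field of /etc/passwd. The helper names login_shell_of and has_nologin_shell are made up for this example, and accounts that come from a network directory instead of /etc/passwd are not covered.

```shell
#!/bin/bash
# login_shell_of is a hypothetical helper: it prints the shell field
# (column 7) of one account from a passwd-format file.
login_shell_of() {
    local user="$1" file="${2:-/etc/passwd}"
    awk -F: -v user="$user" '$1 == user { print $7 }' "$file"
}

# Report whether an account has one of the no-login shells named above.
has_nologin_shell() {
    case "$(login_shell_of "$@")" in
        */false|*/nologin) return 0 ;;
        *) return 1 ;;
    esac
}

if has_nologin_shell nobody; then
    echo "nobody cannot log in interactively"
else
    echo "nobody has the shell: $(login_shell_of nobody)"
fi

# Changing the shell requires root privileges, for example:
# usermod -s /sbin/nologin nobody
```

The usermod line is left commented out on purpose, because the change may affect services that still run as nobody.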

Use the following command to list which shells are assigned to all users, system and human users, in /etc/passwd. The output varies according to the services and users on your system:

> sort -t: -k 7 /etc/passwd | awk -F: '{print $1"\t" $7}' | column -t

tux /bin/bash

nobody /bin/bash

root /bin/bash

avahi /bin/false

chrony /bin/false

dhcpd /bin/false

dnsmasq /bin/false

ftpsecure /bin/false

lightdm /bin/false

mysql /bin/false

postfix /bin/false

rtkit /bin/false

sshd /bin/false

tftp /bin/false

unbound /bin/false

bin /sbin/nologin

daemon /sbin/nologin

ftp /sbin/nologin

lp /sbin/nologin

mail /sbin/nologin

man /sbin/nologin

nscd /sbin/nologin

polkitd /sbin/nologin

pulse /sbin/nologin

qemu /sbin/nologin

radvd /sbin/nologin

rpc /sbin/nologin

statd /sbin/nologin

svn /sbin/nologin

systemd-coredump /sbin/nologin

systemd-network /sbin/nologin

systemd-timesync /sbin/nologin

usbmux /sbin/nologin

vnc /sbin/nologin

wwwrun /sbin/nologin

messagebus /usr/bin/false

scard /usr/sbin/nologin

1.1.2 The directory structure #

The following table provides a short overview of the most important higher-level directories that you find on a Linux system. Find more detailed information about the directories and important subdirectories in the following list.

Table 1.4: Overview of a standard directory tree

| Directory | Contents |
|---|---|
| | Root directory—the starting point of the directory tree. |
| | Essential binary files, such as commands that are needed by both the system administrator and normal users. Usually also contains the shells, such as Bash. |
| | Static files of the boot loader. |
| | Files needed to access host-specific devices. |
| | Host-specific system configuration files. |
| | Holds the home directories of all users who have accounts on the system. However, |
| | Essential shared libraries and kernel modules. |
| | Mount points for removable media. |
| | Mount point for temporarily mounting a file system. |
| | Add-on application software packages. |
| | Home directory for the superuser |
| | Essential system binaries. |
| | Data for services provided by the system. |
| | Temporary files. |
| | Secondary hierarchy with read-only data. |
| | Variable data such as log files. |
| | Only available if you have both Microsoft Windows* and Linux installed on your system. Contains the Windows data. |
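To compare this overview with an actual installation, a short loop can report which of the top-level directories exist and whether any of them are symbolic links. This is only a sketch: describe_path is a made-up helper name, and the directory names in the loop are taken from the detailed list in this section plus / and /var.

```shell
#!/bin/bash
# describe_path is a hypothetical helper: it prints whether a path is a
# symbolic link, a directory, or missing.
describe_path() {
    if [ -L "$1" ]; then
        echo "$1 -> $(readlink "$1") (symbolic link)"
    elif [ -d "$1" ]; then
        echo "$1 (directory)"
    else
        echo "$1 (not present)"
    fi
}

for dir in / /bin /boot /dev /etc /home /lib /media /mnt /opt \
           /root /sbin /srv /tmp /usr /var; do
    describe_path "$dir"
done
```

On some systems, directories such as /bin may turn out to be symbolic links into /usr, so the output can differ from one installation to another.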

The following list provides more detailed information and gives some examples of which files and subdirectories can be found in the directories:

- /bin

Contains the basic shell commands that may be used both by root and by other users. These commands include ls, mkdir, cp, mv, rm and rmdir. /bin also contains Bash, the default shell in SUSE Linux Enterprise Server.

- /boot

Contains data required for booting, such as the boot loader, the kernel, and other data that is used before the kernel begins executing user-mode programs.

- /dev

Holds device files that represent hardware components.

- /etc

Contains local configuration files that control the operation of programs like the X Window System. The /etc/init.d subdirectory contains LSB init scripts that can be executed during the boot process.

- /home/USERNAME

Holds the private data of every user who has an account on the system. The files located here can only be modified by their owner or by the system administrator. By default, your e-mail directory and personal desktop configuration are located here in the form of hidden files and directories, such as .gconf/ and .config.

Note: Home directory in a network environment

If you are working in a network environment, your home directory may be mapped to a directory in the file system other than /home.

- /lib

Contains the essential shared libraries needed to boot the system and to run the commands in the root file system. The Windows equivalent for shared libraries are DLL files.

- /media

Contains mount points for removable media, such as CD-ROMs, flash disks, and digital cameras (if they use USB). /media generally holds any type of drive except the hard disk of your system. When your removable medium has been inserted or connected to the system and has been mounted, you can access it from here.

- /mnt

This directory provides a mount point for a temporarily mounted file system. root may mount file systems here.

- /opt

Reserved for the installation of third-party software. Optional software and larger add-on program packages can be found here.

- /root

Home directory for the root user. The personal data of root is located here.

- /run

A tmpfs directory used by systemd and various components. /var/run is a symbolic link to /run.

- /sbin

As the s indicates, this directory holds utilities for the superuser. /sbin contains the binaries essential for booting, restoring and recovering the system in addition to the binaries in /bin.

- /srv

Holds data for services provided by the system, such as FTP and HTTP.

- /tmp

This directory is used by programs that require temporary storage of files.

Important: Cleaning up /tmp at boot time

Data stored in /tmp is not guaranteed to survive a system reboot. It depends, for example, on settings made in /etc/tmpfiles.d/tmp.conf.

- /usr

/usr has nothing to do with users, but is the acronym for Unix system resources. The data in /usr is static, read-only data that can be shared among various hosts compliant with the Filesystem Hierarchy Standard (FHS). This directory contains all application programs including the graphical desktops such as GNOME and establishes a secondary hierarchy in the file system. /usr holds several subdirectories, such as /usr/bin, /usr/sbin, /usr/local, and /usr/share/doc.

- /usr/bin

Contains generally accessible programs.

- /usr/sbin

Contains programs reserved for the system administrator, such as repair functions.

- /usr/local

In this directory the system administrator can install local, distribution-independent extensions.

- /usr/share/doc

Holds various documentation files and the release notes for your system. In the manual subdirectory find an online version of this manual. If more than one language is installed, this directory may contain versions of the manuals for different languages.

Under packages find the documentation included in the software packages installed on your system. For every package, a subdirectory /usr/share/doc/packages/PACKAGENAME is created that often holds README files for the package and sometimes examples, configuration files or additional scripts.

If HOWTOs are installed on your system, /usr/share/doc also holds the howto subdirectory in which to find additional documentation on many tasks related to the setup and operation of Linux software.

- /var

Whereas /usr holds static, read-only data, /var is for data which is written during system operation and thus is variable data, such as log files or spooling data. For an overview of the most important log files you can find under /var/log/, refer to Table 40.1, “Log files”.

- /windows

Only available if you have both Microsoft Windows and Linux installed on your system. Contains the Windows data available on the Windows partition of your system. Whether you can edit the data in this directory depends on the file system your Windows partition uses. If it is FAT32, you can open and edit the files in this directory. For NTFS, SUSE Linux Enterprise Server also includes write access support. However, the driver for the NTFS-3g file system has limited functionality.

1.2 Writing shell scripts #

Shell scripts provide a convenient way to perform a wide range of tasks: collecting data, searching for a word or phrase in a text and other useful things. The following example shows a small shell script that prints a text:

#!/bin/sh
# Output the following line:
echo "Hello World"

The first line begins with the Shebang characters (#!), which indicate that the file is a script. The interpreter named after the Shebang, in this case /bin/sh, executes the script.

The second line is a comment beginning with the hash sign. We recommend that you comment difficult lines. With proper commenting, you can remember the purpose and function of the line. Also, other readers will hopefully understand your script. Commenting is considered good practice in the development community.

The third line uses the built-in command echo to print the corresponding text.

Before you can run this script, there are a few prerequisites:

Every script should contain a Shebang line (as in the example above). If the line is missing, you need to call the interpreter manually.

You can save the script wherever you want. However, it is a good idea to save it in a directory where the shell can find it. The search path in a shell is determined by the environment variable PATH. Usually a normal user does not have write access to /usr/bin. Therefore it is recommended to save your scripts in the user's directory ~/bin/. The above example gets the name hello.sh.

The script needs executable permissions. Set the permissions with the following command:

> chmod +x ~/bin/hello.sh

If you have fulfilled all of the above prerequisites, you can execute the script in the following ways:

As absolute path. The script can be executed with an absolute path. In our case, it is ~/bin/hello.sh.

Everywhere. If the PATH environment variable contains the directory where the script is located, you can execute the script with hello.sh.
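The prerequisites and both ways of calling the script can be tried in one go. The following is a minimal sketch, assuming a scratch directory created with mktemp stands in for ~/bin so that your home directory stays untouched; the variable names scratch, by_path and by_name are illustrative only.

```shell
# Scratch directory used in place of ~/bin (assumption for this demo).
scratch=$(mktemp -d)

# Write the example script. The quoted 'EOF' keeps the text literal.
cat > "$scratch/hello.sh" <<'EOF'
#!/bin/sh
# Output the following line:
echo "Hello World"
EOF

# Give the script executable permissions.
chmod +x "$scratch/hello.sh"

# Call it by its path.
by_path=$("$scratch/hello.sh")

# Call it by name after adding its directory to PATH.
# The change happens inside the command substitution, so it does not
# affect the rest of the shell session.
by_name=$(PATH="$scratch:$PATH"; hello.sh)

echo "$by_path"
echo "$by_name"

# Clean up the scratch directory.
rm -r "$scratch"
```

Without the chmod step, the call by path fails with a "Permission denied" message, although running the file through the interpreter (sh FILE) still works.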

1.3 Redirecting command events #

Each command can use three channels, either for input or output:

Standard output. This is the default output channel. Whenever a command prints something, it uses the standard output channel.

Standard input. If a command needs input from users or other commands, it uses this channel.

Standard error. Commands use this channel for error reporting.

To redirect these channels, there are the following possibilities:

- Command > File

Saves the output of the command into a file; an existing file is overwritten. For example, the ls command writes its output into the file listing.txt:

> ls > listing.txt

- Command >> File

Appends the output of the command to a file. For example, the ls command appends its output to the file listing.txt:

> ls >> listing.txt

- Command < File

Reads the file as input for the given command. For example, the read command reads the first line of the file into the variable:

> read a < foo

- Command1 | Command2

Redirects the output of the left command as input for the right command. For example, the cat command outputs the content of the /proc/cpuinfo file. This output is used by grep to filter only those lines which contain cpu:

> cat /proc/cpuinfo | grep cpu
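The four forms listed above can be compared side by side on a throwaway file. This is a sketch only: the directory comes from mktemp, and the names workdir, listing, first_line and matches are made up for the illustration.

```shell
workdir=$(mktemp -d)
listing="$workdir/listing.txt"

# > creates the file or overwrites what it contained.
printf 'first\n' > "$listing"
printf 'second\n' > "$listing"

# >> appends to the existing content.
printf 'third\n' >> "$listing"

# < connects the file to standard input; read takes one line from it.
read -r first_line < "$listing"

# | feeds the output of cat into grep, which counts matching lines.
matches=$(cat "$listing" | grep -c 'third')

echo "first line: $first_line"
echo "lines containing 'third': $matches"

rm -r "$workdir"
```

Because the second printf uses >, the word "first" is gone by the time the file is read.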

Every channel has a file descriptor: 0 (zero) for standard input, 1 for standard output and 2 for standard error. You can insert this file descriptor before a < or > character. For example, the following line searches for files whose names start with foo, but suppresses error messages by redirecting them to /dev/null:

> find / -name "foo*" 2>/dev/null

1.4 Using aliases #

An alias is a shortcut definition of one or more commands. The syntax for an alias is:

alias NAME=DEFINITION

For example, the following line defines an alias lt that

outputs a long listing (option -l), sorts it by

modification time (-t), and prints it in reverse sorted order (-r):

> alias lt='ls -ltr'

To view all alias definitions, use alias. Remove your

alias with unalias and the corresponding alias name.

1.5 Using variables in Bash #

A shell variable can be global or local. Global variables, or environment variables, can be accessed in all shells. In contrast, local variables are visible in the current shell only.

To view all environment variables, use the printenv

command. If you need to know the value of a variable, insert the name of

your variable as an argument:

> printenv PATH

A variable, be it global or local, can also be viewed with echo:

> echo $PATH

To set a local variable, use a variable name followed by the equal sign, followed by the value:

> PROJECT="SLED"

Do not insert spaces around the equal sign, otherwise you get an error. To

set an environment variable, use export:

> export NAME="tux"

To remove a variable, use unset:

> unset NAME

The following table contains some common environment variables which can be used in your shell scripts:

|

|

the home directory of the current user |

|

|

the current host name |

|

|

when a tool is localized, it uses the language from this environment

variable. English can also be set to |

|

|

the search path of the shell, a list of directories separated by colon |

|

|

specifies the normal prompt printed before each command |

|

|

specifies the secondary prompt printed when you execute a multi-line command |

|

|

current working directory |

|

|

the current user |
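The difference between a local variable and an environment variable shows up as soon as a child process is started. The sketch below reuses the PROJECT name from the text; the seen_* variables are illustrative, and sh -c is used here only as a convenient way to start a child shell.

```shell
# Start from a clean state in case PROJECT is already set.
unset PROJECT

# A local variable is visible in this shell, but not in child processes.
PROJECT="SLED"
seen_local=$(sh -c 'echo "${PROJECT:-not set}"')

# After export, child processes inherit the variable.
export PROJECT
seen_exported=$(sh -c 'echo "${PROJECT:-not set}"')

# unset removes the variable, so later children no longer see it.
unset PROJECT
seen_unset=$(sh -c 'echo "${PROJECT:-not set}"')

echo "local: $seen_local"
echo "exported: $seen_exported"
echo "after unset: $seen_unset"
```

The single quotes around the sh -c argument matter: they stop the current shell from expanding $PROJECT itself, so the child shell does the lookup.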

1.5.1 Using argument variables #

For example, if you have the script foo.sh you can

execute it like this:

> foo.sh "Tux Penguin" 2000

To access the arguments passed to your script, you need positional parameters. These are $1 for the first argument, $2 for the second, and so on. The parameters $1 to $9 can be referenced directly; from the tenth on, enclose the number in braces, for example ${10}. To get the script name, use $0.

The following script foo.sh prints all arguments from 1 to 4:

#!/bin/sh
echo \"$1\" \"$2\" \"$3\" \"$4\"

If you execute this script with the above arguments, you get:

"Tux Penguin" "2000" "" ""

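The same behavior can be tried without creating a script file, because a shell function receives its arguments through the same positional parameters. In this sketch, the hypothetical function show_args stands in for foo.sh, and $# (the number of arguments) is added for illustration.

```shell
# show_args stands in for foo.sh: inside a function, $1, $2, ...
# refer to the arguments of the function call.
show_args() {
    echo "count: $#"
    printf '"%s" "%s" "%s" "%s"\n' "$1" "$2" "$3" "$4"
}

# The quotes make "Tux Penguin" a single argument.
result=$(show_args "Tux Penguin" 2000)
echo "$result"
```

Without the quotes around Tux Penguin, the call would pass three arguments instead of two.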
1.5.2 Using variable substitution #

Variable substitutions apply a pattern to the content of a variable either from the left or right side. The following list contains the possible syntax forms:

- ${VAR#pattern}

removes the shortest possible match from the left:

> file=/home/tux/book/book.tar.bz2
> echo ${file#*/}
home/tux/book/book.tar.bz2

- ${VAR##pattern}

removes the longest possible match from the left:

> file=/home/tux/book/book.tar.bz2
> echo ${file##*/}
book.tar.bz2

- ${VAR%pattern}

removes the shortest possible match from the right:

> file=/home/tux/book/book.tar.bz2
> echo ${file%.*}
/home/tux/book/book.tar

- ${VAR%%pattern}

removes the longest possible match from the right:

> file=/home/tux/book/book.tar.bz2
> echo ${file%%.*}
/home/tux/book/book

- ${VAR/pattern_1/pattern_2}

replaces the first match of pattern_1 in the content of VAR with pattern_2:

> file=/home/tux/book/book.tar.bz2
> echo ${file/tux/wilber}
/home/wilber/book/book.tar.bz2
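A common use of these forms is splitting a path into its parts. The following sketch applies the four prefix and suffix removals to the path from the examples; the variable names dir, name, stem and ext are chosen for illustration.

```shell
file=/home/tux/book/book.tar.bz2

dir=${file%/*}     # shortest match of "/*" from the right: the directory
name=${file##*/}   # longest match of "*/" from the left: the file name
stem=${name%%.*}   # longest match of ".*" from the right: name without extensions
ext=${name#*.}     # shortest match of "*." from the left: all extensions

echo "directory: $dir"
echo "file name: $name"
echo "stem:      $stem"
echo "extension: $ext"
```

The removals do not change the original variable; each expansion only produces a new value.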

1.6 Grouping and combining commands #

Shells allow you to concatenate and group commands for conditional execution. Each command returns an exit code which determines the success or failure of its operation. If it is 0 (zero) the command was successful, everything else marks an error which is specific to the command.

The following list shows how commands can be grouped:

- Command1 ; Command2

executes the commands in sequential order. The exit code is not checked. The following line displays the content of the file with cat and then prints its file properties with ls regardless of their exit codes:

> cat filelist.txt ; ls -l filelist.txt

- Command1 && Command2

runs the right command if the left command was successful (logical AND). The following line displays the content of the file and prints its file properties only when the previous command was successful (compare it with the previous entry in this list):

> cat filelist.txt && ls -l filelist.txt

- Command1 || Command2

runs the right command when the left command has failed (logical OR). The following line creates a directory in /home/wilber/bar only when the creation of the directory in /home/tux/foo has failed:

> mkdir /home/tux/foo || mkdir /home/wilber/bar

- funcname(){ ... }

creates a shell function. You can use the positional parameters to access its arguments. The following line defines the function hello to print a short message:

> hello() { echo "Hello $1"; }

You can call this function like this:

> hello Tux

which prints:

Hello Tux
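The operators from this list are often combined. The sketch below is one possible combination, not a recommended pattern for every case: the hypothetical function ensure_dir uses || to create a directory only when it is missing, and the caller uses && and || to report the result. The scratch directory comes from mktemp.

```shell
workdir=$(mktemp -d)

# Create the directory only if the test for its existence fails.
ensure_dir() { [ -d "$1" ] || mkdir "$1"; }

# ensure_dir succeeds, so the && branch runs.
first=$(ensure_dir "$workdir/foo" && echo "ready" || echo "failed")

# The parent directory does not exist, mkdir fails, and the || branch runs.
second=$(ensure_dir "$workdir/missing/bar" 2>/dev/null && echo "ready" || echo "failed")

echo "$first $second"

rm -r "$workdir"
```

Note that in Command1 && Command2 || Command3 the last command also runs when Command2 fails, so this form is not a full replacement for an if construct.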

1.7 Working with common flow constructs #

To control the flow of your script, a shell has while, if, for and case constructs.

1.7.1 The if control command #

The if command is used to check expressions. For example, the following code tests whether the current user is Tux:

if test "$USER" = "tux"; then
  echo "Hello Tux."
else
  echo "You are not Tux."
fi

The test expression can be as simple or as complex as needed. The following expression checks if the file foo.txt exists:

if test -e /tmp/foo.txt ; then
  echo "Found foo.txt"
fi

The test expression can also be abbreviated in square brackets:

if [ -e /tmp/foo.txt ] ; then
  echo "Found foo.txt"
fi

Find more useful expressions at https://bash.cyberciti.biz/guide/If..else..fi.
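When more than two outcomes are possible, elif adds further branches. The following sketch assumes a hypothetical function describe_path and a scratch directory from mktemp; it uses the -d and -f tests, which check for a directory and a regular file.

```shell
# Report what kind of object a path refers to.
describe_path() {
    if [ -d "$1" ]; then
        echo "directory"
    elif [ -f "$1" ]; then
        echo "regular file"
    else
        echo "missing or other type"
    fi
}

workdir=$(mktemp -d)
touch "$workdir/foo.txt"

kind_dir=$(describe_path "$workdir")
kind_file=$(describe_path "$workdir/foo.txt")
kind_none=$(describe_path "$workdir/does-not-exist")

echo "$kind_dir / $kind_file / $kind_none"

rm -r "$workdir"
```

The branches are checked from top to bottom, and only the first one whose test succeeds is executed.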

1.7.2 Creating loops with the for command #

The for loop allows you to execute commands on a list of entries. For example, the following code prints some information about PNG files in the current directory:

for i in *.png; do
  ls -l "$i"
done
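Two details are worth handling in such a loop: file names that contain spaces, and the case where no file matches the pattern. The sketch below shows one way to deal with both; the scratch directory, the file names and the counter count are assumptions made for the demonstration.

```shell
workdir=$(mktemp -d)
touch "$workdir/a.png" "$workdir/b c.png" "$workdir/notes.txt"

count=0
for i in "$workdir"/*.png; do
    # If nothing matches, the pattern is passed on unexpanded; skip it.
    [ -e "$i" ] || continue
    # The quotes keep "b c.png" together as one argument.
    ls -l "$i"
    count=$((count + 1))
done

echo "PNG files: $count"

rm -r "$workdir"
```

Without the quotes around $i, the file b c.png would be split into two words, and ls would report two files that do not exist.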

1.8 More information #

Important information about Bash is provided in the man page (man bash). More about this topic can be found in the following list:

https://tldp.org/LDP/Bash-Beginners-Guide/html/index.html—Bash Guide for Beginners

https://tldp.org/HOWTO/Bash-Prog-Intro-HOWTO.html—BASH Programming - Introduction HOW-TO

https://tldp.org/LDP/abs/html/index.html—Advanced Bash-Scripting Guide

http://www.grymoire.com/Unix/Sh.html—Sh - the Bourne Shell

2 sudo basics #

Running certain commands requires root privileges. However, for security

reasons and to avoid mistakes, it is not recommended to log in as

root. A safer approach is to log in as a regular user, and

then use sudo to run commands with elevated privileges.

On SUSE Linux Enterprise Server, sudo is configured to work similarly to su. However,

sudo provides a flexible mechanism that allows users to run commands with

privileges of any other user. This can be used to assign roles with specific

privileges to certain users and groups. For example, it is possible to allow

members of the group users to run a command with the privileges of

user wilber. Access to the command can be further restricted by

disallowing any command options. While su always requires the root

password for authentication with PAM, sudo can be configured to

authenticate with your own credentials. This means that the users do not have

to share the root password, which improves security.

2.1 Basic sudo usage #

The following chapter provides an introduction to basic usage of sudo.

2.1.1 Running a single command #

As a regular user, you can run any command as root by

adding sudo before it. This prompts you to provide the root password. If

authenticated successfully, this runs the command as root:

> id -un (1)
tux
> sudo id -un
root's password: (2)
root
> id -un
tux (3)
> sudo id -un (4)
root

(1) The id -un command prints the login name of the current user.

(2) The password is not shown during input, neither as clear text nor as masking characters.

(3) Only commands that start with sudo run with elevated privileges.

(4) The elevated privileges persist for a certain period of time, so you do not need to provide the root password again.

When using sudo, I/O redirection does not work:

> sudo echo s > /proc/sysrq-trigger
bash: /proc/sysrq-trigger: Permission denied
> sudo cat < /proc/1/maps
bash: /proc/1/maps: Permission denied

In the example above, only the echo and

cat commands run with elevated privileges. The

redirection is done by the user's shell with user privileges. To perform

redirection with elevated privileges, either start a shell as in Section 2.1.2, “Starting a shell” or use the dd utility:

echo s | sudo dd of=/proc/sysrq-trigger
sudo dd if=/proc/1/maps | cat

2.1.2 Starting a shell #

Using sudo every time to run a command with elevated privileges is not

always practical. While you can use the sudo bash

command, it is recommended to use one of the built-in mechanisms to start a

shell:

- sudo -s (<command>)

Starts a shell specified by the SHELL environment variable or the target user's default shell. If a command is specified, it is passed to the shell (with the -c option). Otherwise the shell runs in interactive mode.

tux:~ > sudo -s
root's password:
root:/home/tux # exit
tux:~ >

- sudo -i (<command>)

Similar to -s, but starts the shell as a login shell. This means that the shell's start-up files (.profile etc.) are processed, and the current working directory is set to the target user's home directory.

tux:~ > sudo -i
root's password:
root:~ # exit
tux:~ >

By default, sudo does not propagate environment variables. This behavior

can be changed using the env_reset option (see Useful flags and options).

2.2 Configuring sudo #

sudo provides a wide range of configurable options.

If you accidentally locked yourself out of sudo, use su - and the root password to start a root shell. To fix the error, run visudo.

2.2.1 Editing the configuration files #

The main policy configuration file for sudo is

/etc/sudoers. As it is possible to lock yourself out

of the system if the file is malformed, it is strongly recommended to use

visudo for editing. It prevents editing conflicts and

checks for syntax errors before saving the modifications.

You can use another editor instead of vi by setting the

EDITOR environment variable, for example:

sudo EDITOR=/usr/bin/nano visudo

Keep in mind that the /etc/sudoers file is supplied by

the system packages, and modifications done directly in the file may break

updates. Therefore, it is recommended to put custom configuration into

files in the /etc/sudoers.d/ directory. Use the

following command to create or edit a file:

sudo visudo -f /etc/sudoers.d/NAME

The command below opens the file using a different editor (in this case, nano):

sudo EDITOR=/usr/bin/nano visudo -f /etc/sudoers.d/NAME

/etc/sudoers.d

The #includedir directive in /etc/sudoers ignores files that end with the ~ (tilde) character or contain the . (dot) character.
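Before creating a file in /etc/sudoers.d/, it can help to check whether its name would be skipped. The following sketch encodes only the two conditions stated above; the function name is_read_by_includedir and the sample file names are hypothetical and not part of sudo.

```shell
# Succeeds if a file name passes the two naming conditions above,
# fails if the name ends with ~ or contains a dot.
is_read_by_includedir() {
    case "$1" in
        *"~" | *.*) return 1 ;;
        *)          return 0 ;;
    esac
}

for name in 10-admins 10-admins.conf 10-admins~; do
    if is_read_by_includedir "$name"; then
        echo "$name: read"
    else
        echo "$name: ignored"
    fi
done
```

With these sample names, only 10-admins passes the check. A name such as 10-admins.conf, which looks natural for a configuration file, contains a dot and is ignored.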

For more information on the visudo command, run

man 8 visudo.

2.2.2 Basic sudoers configuration syntax #

The sudoers configuration files contain two types of options: strings and flags. While strings can contain any value, flags can be turned either ON or OFF. The most important syntax constructs for sudoers configuration files are as follows:

# Everything on a line after # is ignored (1)
Defaults !insults # Disable the insults flag (2)
Defaults env_keep += "DISPLAY HOME" # Add DISPLAY and HOME to env_keep
tux ALL = NOPASSWD: /usr/bin/frobnicate, PASSWD: /usr/bin/journalctl

(1) There are two exceptions: #include and #includedir are regular directives, not comments.

(2) Remove the ! character to set the desired flag to ON.

- targetpw

This flag controls whether the invoking user is required to enter the password of the target user (ON) (for example root) or the invoking user (OFF).

Defaults targetpw # Turn targetpw flag ON

- rootpw

If set, sudo prompts for the root password. The default is OFF.

Defaults !rootpw # Turn rootpw flag OFF

- env_reset

If set, sudo constructs a minimal environment with TERM, PATH, HOME, MAIL, SHELL, LOGNAME, USER, USERNAME, and SUDO_*. Additionally, variables listed in env_keep are imported from the calling environment. The default is ON.

Defaults env_reset # Turn env_reset flag ON

- env_keep

List of environment variables to keep when the env_reset flag is ON.

# Set env_keep to contain EDITOR and PROMPT
Defaults env_keep = "EDITOR PROMPT"
Defaults env_keep += "JRE_HOME" # Add JRE_HOME
Defaults env_keep -= "JRE_HOME" # Remove JRE_HOME

- env_delete

List of environment variables to remove when the env_reset flag is OFF.

# Set env_delete to contain EDITOR and PROMPT
Defaults env_delete = "EDITOR PROMPT"
Defaults env_delete += "JRE_HOME" # Add JRE_HOME
Defaults env_delete -= "JRE_HOME" # Remove JRE_HOME

Aliases for collections of users, hosts, and commands can be defined with the User_Alias, Host_Alias, and Cmnd_Alias keywords. Furthermore, it is possible to apply a Defaults option only to a specific set of users.

For detailed information about the /etc/sudoers

configuration file, consult man 5 sudoers.

2.2.3 Basic sudoers rules #

Each rule follows this scheme ([] marks optional parts):

#Who      Where       As whom       Tag                 What
User_List Host_List = [(User_List)] [NOPASSWD:|PASSWD:] Cmnd_List

- User_List

One or several (separated by comma) identifiers: either a user name, a group in the format %GROUPNAME, or a user ID in the format #UID. Negation can be specified with the ! prefix.

- Host_List

One or several (separated by comma) identifiers: either a (fully qualified) host name or an IP address. Negation can be specified with the ! prefix. ALL is a common choice for Host_List.

- NOPASSWD:|PASSWD:

The user is not prompted for a password when running commands matching Cmnd_List after NOPASSWD:. PASSWD is the default. It only needs to be specified when both PASSWD and NOPASSWD are on the same line:

tux ALL = PASSWD: /usr/bin/foo, NOPASSWD: /usr/bin/bar

- Cmnd_List

One or several (separated by comma) specifiers: A path to an executable, followed by an optional allowed argument.

/usr/bin/foo     # Anything allowed
/usr/bin/foo bar # Only "/usr/bin/foo bar" allowed
/usr/bin/foo ""  # No arguments allowed

ALL can be used as User_List,

Host_List, and Cmnd_List.

A rule that allows tux to run all commands as root without

entering a password:

tux ALL = NOPASSWD: ALL

A rule that allows tux to run systemctl restart

apache2:

tux ALL = /usr/bin/systemctl restart apache2

A rule that allows tux to run wall as

admin with no arguments:

tux ALL = (admin) /usr/bin/wall ""

Do not use rules like ALL ALL =

ALL without Defaults targetpw. Otherwise

anyone can run commands as root.

2.3 sudo use cases #

While the default configuration works for standard usage scenarios, you can customize the default configuration to meet your specific needs.

2.3.1 Using sudo without root password #

By design, members of the group

wheel can run all commands

with sudo as root. The following procedure explains how to add a user

account to the wheel group.

Verify that the wheel group exists:

> getent group wheel

If the previous command returned no result, install the system-group-wheel package that creates the wheel group:

> sudo zypper install system-group-wheel

Add your user account to the group wheel.

If your user account is not already a member of the wheel group, add it using the sudo usermod -a -G wheel USERNAME command. Log out and log in again to enable the change. Verify that the change was successful by running the groups USERNAME command.

Authenticate with the user account's normal password.

Create the file /etc/sudoers.d/userpw using the visudo command (see Section 2.2.1, “Editing the configuration files”) and add the following:

Defaults !targetpw

Select a new default rule.

Depending on whether you want users to re-enter their passwords, uncomment the appropriate line in /etc/sudoers and comment out the default rule.

## Uncomment to allow members of group wheel to execute any command
# %wheel ALL=(ALL) ALL

## Same thing without a password
# %wheel ALL=(ALL) NOPASSWD: ALL

Make the default rule more restrictive.

Comment out or remove the allow-everything rule in /etc/sudoers:

ALL ALL=(ALL) ALL # WARNING! Only use this together with 'Defaults targetpw'!

Warning: Dangerous rule in sudoers

Do not skip this step. Otherwise any user can execute any command as root!

Test the configuration.

Run sudo as member and non-member of wheel.

tux:~ > groups
users wheel
tux:~ > sudo id -un
tux's password:
root
wilber:~ > groups
users
wilber:~ > sudo id -un
wilber is not in the sudoers file. This incident will be reported.

2.3.2 Using sudo with X.Org applications #

Starting graphical applications with sudo usually results in the following

error:

> sudo xterm
xterm: Xt error: Can't open display: %s
xterm: DISPLAY is not set

A simple workaround is to use xhost to temporarily allow the root user to access the local user's X session. This is done using the following command:

xhost si:localuser:root

The command below removes the granted access:

xhost -si:localuser:root

Running graphical applications with root privileges has security implications. It is recommended to enable root access for a graphical application only as an exception. It is also recommended to revoke the granted root access as soon as the graphical application is closed.

2.4 More information #

The sudo --help command offers a brief overview of the

available command line options, while the man sudoers

command provides detailed information about sudoers

and its configuration.

3 Using YaST #

YaST is a SUSE Linux Enterprise Server tool that provides a graphical interface for all essential installation and system configuration tasks. Whether you need to update packages, configure a printer, modify firewall settings, set up an FTP server, or partition a hard disk—you can do it using YaST. Written in Ruby, YaST features an extensible architecture that makes it possible to add new functionality via modules.

Additional information about YaST is available on the project's official Web site at https://yast.opensuse.org/.

3.1 YaST interface overview #

YaST has two graphical interfaces: one for use with graphical desktop environments like KDE and GNOME, and an ncurses-based pseudo-graphical interface for use on systems without an X server (see Chapter 4, YaST in text mode).

In the graphical version of YaST, all modules in YaST are grouped by category, and the navigation sidebar allows you to quickly access modules in the desired category. The search field at the top makes it possible to find modules by their names. To find a specific module, enter its name into the search field, and you should see the modules that match the entered string as you type.

The list of installed modules for the ncurses-based and GUI version of YaST may differ. Before starting any YaST module, verify that it is installed for the version of YaST that you are using.

3.2 Useful key combinations #

The graphical version of YaST supports the following keyboard shortcuts:

- Print Screen

Take and save a screenshot. It may not work on certain desktop environments.

- Shift–F4

Enable and disable the color palette optimized for visually-impaired users.

- Shift–F7

Enable/disable logging of debug messages.

- Shift–F8

Open a file dialog to save log files to a user-defined location.

- Ctrl–Shift–Alt–D

Send a DebugEvent. YaST modules can react to this by executing special debugging actions. The result depends on the specific YaST module.

- Ctrl–Shift–Alt–M

Start and stop macro recorder.

- Ctrl–Shift–Alt–P

Replay macro.

- Ctrl–Shift–Alt–S

Show style sheet editor.

- Ctrl–Shift–Alt–T

Dump widget tree to the log file.

- Ctrl–Shift–Alt–X

Open a terminal window (xterm). Useful for installation process via VNC.

- Ctrl–Shift–Alt–Y

Show widget tree browser.

4 YaST in text mode #

The ncurses-based pseudo-graphical YaST interface is designed primarily to help system administrators to manage systems without an X server. The interface offers several advantages compared to the conventional GUI. You can navigate the ncurses interface using the keyboard, and there are keyboard shortcuts for practically all interface elements. The ncurses interface is light on resources, and runs fast even on modest hardware. You can run the ncurses-based version of YaST via an SSH connection, so you can administer remote systems. Keep in mind that the minimum supported size of the terminal emulator in which to run YaST is 80x25 characters.

To launch the ncurses-based version of YaST, open the terminal and run the

sudo yast2 command. Use the Tab or

arrow keys to navigate between interface elements like menu

items, fields, and buttons. All menu items and buttons in YaST can be

accessed using the appropriate function keys or keyboard shortcuts. For

example, you can cancel the current operation by pressing

F9, while the F10 key can be used to accept

the changes. Each menu item and button in YaST's ncurses-based interface

has a highlighted letter in its label. This letter is part of the keyboard

shortcut assigned to the interface element. For example, the letter

Q is highlighted in the

button. This means that you can activate the button by pressing

Alt–Q.

If a YaST dialog gets corrupted or distorted (for example, while resizing the window), press Ctrl–L to refresh and restore its contents.

4.2 Advanced key combinations #

The ncurses-based version of YaST offers several advanced key combinations.

- Shift–F1

List advanced hotkeys.

- Shift–F4

Change color schema.

- Ctrl–Q

Quit the application.

- Ctrl–L

Refresh screen.

- Ctrl–D F1

List advanced hotkeys.

- Ctrl–D Shift–D

Dump dialog to the log file as a screenshot.

- Ctrl–D Shift–Y

Open YDialogSpy to see the widget hierarchy.

4.3 Restriction of key combinations #

If your window manager uses global Alt combinations, the Alt combinations in YaST might not work. Keys like Alt or Shift can also be occupied by the settings of the terminal.

- Using Esc instead of Alt

Alt shortcuts can be executed with Esc instead of Alt. For example, Esc–H replaces Alt–H. (Press Esc, then press H.)

- Backward and forward navigation with Ctrl–F and Ctrl–B

If the Alt and Shift combinations are taken over by the window manager or the terminal, use the combinations Ctrl–F (forward) and Ctrl–B (backward) instead.

- Restriction of function keys

The function keys (F1 ... F12) are also used for functions. Certain function keys might be taken over by the terminal and may not be available for YaST. However, the Alt key combinations and function keys should always be fully available on a text-only console.

4.4 YaST command line options #

Besides the text mode interface, YaST provides a command line interface. To get a list of YaST command line options, use the following command:

> sudo yast -h

4.4.1 Installing packages from the command line #

If you know the package name, and the package is provided by an active

installation repository, you can use the command line option

-i to install the package:

> sudo yast -i package_name

or

> sudo yast --install -i package_name

package_name can be a single short package name (for example gvim) installed with dependency checking, or the full path to an RPM package, which is installed without dependency checking.

While YaST offers basic functionality for managing software from the command line, consider using Zypper for more advanced package management tasks. Find more information on using Zypper in Section 6.1, “Using Zypper”.

4.4.2 Working with individual modules #

To save time, you can start individual YaST modules using the following command:

> sudo yast module_name

View a list of all modules available on your system with yast

-l or yast --list.

4.4.3 Command line parameters of YaST modules #

To use YaST functionality in scripts, YaST provides command line support for individual modules. However, not all modules have command line support. To display the available options of a module, use the following command:

> sudo yast module_name help

If a module does not provide command line support, it is started in a text mode with the following message:

This YaST module does not support the command line interface.

The following sections describe all YaST modules with command line support, along with a brief explanation of all their commands and available options.

4.4.3.1 Common YaST module commands #

All YaST modules support the following commands:

- help

Lists all the module's supported commands with their description:

> sudo yast lan help

- longhelp

Same as help, but adds a detailed list of all command's options and their descriptions:

> sudo yast lan longhelp

- xmlhelp

Same as longhelp, but the output is structured as an XML document and redirected to a file:

> sudo yast lan xmlhelp xmlfile=/tmp/yast_lan.xml

- interactive

Enters the interactive mode. This lets you run the module's commands without prefixing them with sudo yast. Use exit to leave the interactive mode.

4.4.3.2 yast add-on #

Adds a new add-on product from the specified path:

> sudo yast add-on http://server.name/directory/Lang-AddOn-CD1/

You can use the following protocols to specify the source path: http:// ftp:// nfs:// disk:// cd:// or dvd://.

4.4.3.3 yast audit-laf #

Displays and configures the Linux Audit Framework. Refer to the Book “Security and Hardening Guide” for more details. yast audit-laf

accepts the following commands:

- set

Sets an option:

> sudo yast audit-laf set log_file=/tmp/audit.log

For a complete list of options, run yast audit-laf set help.

- show

Displays settings of an option:

> sudo yast audit-laf show diskspace
space_left: 75
space_left_action: SYSLOG
admin_space_left: 50
admin_space_left_action: SUSPEND
action_mail_acct: root
disk_full_action: SUSPEND
disk_error_action: SUSPEND

For a complete list of options, run yast audit-laf show help.

4.4.3.4 yast dhcp-server

Manages the DHCP server and configures its settings. yast dhcp-server accepts the following commands:

- disable

Disables the DHCP server service.

- enable

Enables the DHCP server service.

- host

Configures settings for individual hosts.

- interface

Specifies the network interface to listen on:

> sudo yast dhcp-server interface current
Selected Interfaces: eth0
Other Interfaces: bond0, pbu, eth1

For a complete list of options, run yast dhcp-server interface help.

- options

Manages global DHCP options. For a complete list of options, run yast dhcp-server options help.

- status

Prints the status of the DHCP service.

- subnet

Manages the DHCP subnet options. For a complete list of options, run yast dhcp-server subnet help.

4.4.3.5 yast dns-server

Manages the DNS server configuration. yast dns-server accepts the following commands:

- acls

Displays access control list settings:

> sudo yast dns-server acls show
ACLs:
-----
Name       Type        Value
----------------------------
any        Predefined
localips   Predefined
localnets  Predefined
none       Predefined

- dnsrecord

Configures zone resource records:

> sudo yast dns-server dnsrecord add zone=example.org query=office.example.org type=NS value=ns3

For a complete list of options, run yast dns-server dnsrecord help.

- forwarders

Configures DNS forwarders:

> sudo yast dns-server forwarders add ip=10.0.0.100
> sudo yast dns-server forwarders show
[...]
Forwarder IP
------------
10.0.0.100

For a complete list of options, run yast dns-server forwarders help.

- host

Handles an A record and its related PTR record at once:

> sudo yast dns-server host show zone=example.org

For a complete list of options, run yast dns-server host help.

- logging

Configures logging settings:

> sudo yast dns-server logging set updates=no transfers=yes

For a complete list of options, run yast dns-server logging help.

- mailserver

Configures zone mail servers:

> sudo yast dns-server mailserver add zone=example.org mx=mx1 priority=100

For a complete list of options, run yast dns-server mailserver help.

- nameserver

Configures zone name servers:

> sudo yast dns-server nameserver add zone=example.com ns=ns1

For a complete list of options, run yast dns-server nameserver help.

- soa

Configures the start of authority (SOA) record:

> sudo yast dns-server soa set zone=example.org serial=2006081623 ttl=2D3H20S

For a complete list of options, run yast dns-server soa help.

- startup

Manages the DNS server service:

> sudo yast dns-server startup atboot

For a complete list of options, run yast dns-server startup help.

- transport

Configures zone transport rules. For a complete list of options, run yast dns-server transport help.

- zones

Manages DNS zones:

> sudo yast dns-server zones add name=example.org zonetype=master

For a complete list of options, run yast dns-server zones help.
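The zones, nameserver and mailserver commands shown above can be chained to set up a zone in one step. The following is a sketch only: the function name dns_zone_setup and the YAST override variable are assumptions, and the option names are taken from the examples in this section. With YAST=echo the commands are printed rather than executed.

```shell
# Hypothetical helper (not part of YaST): create a zone and add one name
# server and one mail server, stopping at the first command that fails.
# YAST is an assumed override for dry runs, for example YAST=echo.
dns_zone_setup() {
    local zone="$1" ns="$2" mx="$3" priority="${4:-100}"
    local yast="${YAST:-sudo yast}"
    if [ -z "$zone" ] || [ -z "$ns" ] || [ -z "$mx" ]; then
        echo "usage: dns_zone_setup ZONE NS MX [PRIORITY]" >&2
        return 2
    fi
    # $yast is left unquoted so that "sudo yast" splits into two words.
    $yast dns-server zones add name="$zone" zonetype=master &&
    $yast dns-server nameserver add zone="$zone" ns="$ns" &&
    $yast dns-server mailserver add zone="$zone" mx="$mx" priority="$priority"
}
```

Running YAST=echo dns_zone_setup example.org ns1 mx1 first is a simple way to review the three commands before applying them.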

4.4.3.6 yast disk

Prints information about all disks or partitions. The only supported command is list followed by either of the following options:

- disks

Lists all configured disks in the system:

> sudo yast disk list disks
Device   | Size       | FS Type | Mount Point | Label | Model
---------+------------+---------+-------------+-------+-------------
/dev/sda | 119.24 GiB |         |             |       | SSD 840
/dev/sdb | 60.84 GiB  |         |             |       | WD1003FBYX-0

- partitions

Lists all partitions in the system:

> sudo yast disk list partitions
Device         | Size       | FS Type | Mount Point | Label | Model
---------------+------------+---------+-------------+-------+------
/dev/sda1      | 1.00 GiB   | Ext2    | /boot       |       |
/dev/sdb1      | 1.00 GiB   | Swap    | swap        |       |
/dev/sdc1      | 698.64 GiB | XFS     | /mnt/extra  |       |
/dev/vg00/home | 580.50 GiB | Ext3    | /home       |       |
/dev/vg00/root | 100.00 GiB | Ext3    | /           |       |
[...]
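Because the listing is a pipe-separated table, it can be post-processed in scripts. The sketch below assumes the column layout shown in the example above (device in the first column, mount point in the fourth); the function name partition_mounts is hypothetical.

```shell
# Hypothetical helper (not part of YaST): read the partition table on
# standard input and print "device mountpoint" for each row with a mount point.
partition_mounts() {
    awk -F'|' '
        NF >= 4 {
            dev = $1; mnt = $4
            gsub(/^[ \t]+|[ \t]+$/, "", dev)   # trim padding around the cells
            gsub(/^[ \t]+|[ \t]+$/, "", mnt)
            # Only rows whose first cell is a device path are data rows.
            if (dev ~ /^\// && mnt != "") print dev, mnt
        }
    '
}
```

A possible call is sudo yast disk list partitions 2>&1 | partition_mounts. The 2>&1 is a precaution in case your YaST version writes the table to standard error. Swap partitions appear with the mount point swap, as in the listing.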

4.4.3.7 yast ftp-server

Configures FTP server settings. yast ftp-server accepts the following options:

- SSL, TLS

Controls secure connections via SSL and TLS. SSL options are valid for vsftpd only.

> sudo yast ftp-server SSL enable
> sudo yast ftp-server TLS disable

- access

Configures access permissions:

> sudo yast ftp-server access authen_only

For a complete list of options, run yast ftp-server access help.

- anon_access

Configures access permissions for anonymous users:

> sudo yast ftp-server anon_access can_upload

For a complete list of options, run yast ftp-server anon_access help.

- anon_dir

Specifies the directory for anonymous users. The directory must already exist on the server:

> sudo yast ftp-server anon_dir set_anon_dir=/srv/ftp

For a complete list of options, run yast ftp-server anon_dir help.

- chroot

Controls the change root environment (chroot):

> sudo yast ftp-server chroot enable
> sudo yast ftp-server chroot disable

- idle-time

Sets the maximum idle time in minutes before the FTP server terminates the current connection:

> sudo yast ftp-server idle-time set_idle_time=15

- logging

Determines whether to save the log messages into a log file:

> sudo yast ftp-server logging enable
> sudo yast ftp-server logging disable

- max_clients

Specifies the maximum number of concurrently connected clients:

> sudo yast ftp-server max_clients set_max_clients=1500

- max_clients_ip

Specifies the maximum number of concurrently connected clients from a single IP address:

> sudo yast ftp-server max_clients_ip set_max_clients=20

- max_rate_anon

Specifies the maximum data transfer rate permitted for anonymous clients (KB/s):

> sudo yast ftp-server max_rate_anon set_max_rate=10000

- max_rate_authen

Specifies the maximum data transfer rate permitted for locally authenticated users (KB/s):

> sudo yast ftp-server max_rate_authen set_max_rate=10000

- port_range

Specifies the port range for passive connection replies:

> sudo yast ftp-server port_range set_min_port=20000 set_max_port=30000

For a complete list of options, run yast ftp-server port_range help.

- show

Displays FTP server settings.

- startup

Controls the FTP start-up method:

> sudo yast ftp-server startup atboot

For a complete list of options, run yast ftp-server startup help.

- umask

Specifies the file umask for authenticated:anonymous users:

> sudo yast ftp-server umask set_umask=177:077

- welcome_message

Specifies the text to display when someone connects to the FTP server:

> sudo yast ftp-server welcome_message set_message="hello everybody"
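When values such as the passive port range come from script input, it can help to validate them before calling YaST. The following sketch checks that both ports are numbers between 1 and 65535 and that the minimum does not exceed the maximum. The function name ftp_set_port_range and the YAST override variable are assumptions; whether YaST performs similar checks itself is not covered here.

```shell
# Hypothetical helper (not part of YaST): validate a passive port range,
# then pass it to yast ftp-server port_range.
# YAST is an assumed override for dry runs, for example YAST=echo.
ftp_set_port_range() {
    local min="$1" max="$2" n
    for n in "$min" "$max"; do
        case "$n" in
            ''|*[!0-9]*) echo "not a port number: '$n'" >&2; return 2 ;;
        esac
    done
    if [ "$min" -lt 1 ] || [ "$max" -gt 65535 ] || [ "$min" -gt "$max" ]; then
        echo "invalid port range: $min-$max" >&2
        return 2
    fi
    # Left unquoted on purpose so that "sudo yast" splits into two words.
    ${YAST:-sudo yast} ftp-server port_range set_min_port="$min" set_max_port="$max"
}
```

For example, YAST=echo ftp_set_port_range 20000 30000 prints the resulting command line, while a reversed range is rejected before YaST is called.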

4.4.3.8 yast http-server

Configures the HTTP server (Apache2). yast http-server accepts the following commands:

- configure

Configures the HTTP server host settings:

> sudo yast http-server configure host=main servername=www.example.com \
  serveradmin=admin@example.com

For a complete list of options, run yast http-server configure help.

- hosts

Configures virtual hosts:

> sudo yast http-server hosts create servername=www.example.com \
  serveradmin=admin@example.com documentroot=/var/www

For a complete list of options, run yast http-server hosts help.

- listen

Specifies the ports and network addresses where the HTTP server should listen:

> sudo yast http-server listen add=81
> sudo yast http-server listen list
Listen Statements:
==================
:80
:81
> sudo yast http-server listen delete=80

For a complete list of options, run yast http-server listen help.

- mode

Enables or disables the wizard mode:

> sudo yast http-server mode wizard=on

- modules

Controls the Apache2 server modules:

> sudo yast http-server modules enable=php5,rewrite
> sudo yast http-server modules disable=ssl
> sudo yast http-server modules list
[...]
Enabled rewrite
Disabled ssl
Enabled php5
[...]
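To add several listen ports in one go, the listen add command can be called in a loop. This is a minimal sketch: the function name http_listen_add and the YAST override variable are assumptions, and only plain port numbers are handled, not network addresses.

```shell
# Hypothetical helper (not part of YaST): add each given port as a listen
# statement. Stops at the first invalid port or failed command.
# YAST is an assumed override for dry runs, for example YAST=echo.
http_listen_add() {
    local port
    for port in "$@"; do
        case "$port" in
            ''|*[!0-9]*) echo "not a port number: '$port'" >&2; return 2 ;;
        esac
        # Left unquoted on purpose so that "sudo yast" splits into two words.
        ${YAST:-sudo yast} http-server listen add="$port" || return 1
    done
}
```

For example, YAST=echo http_listen_add 81 8080 prints one listen add command per port.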

4.4.3.9 yast kdump

Configures kdump settings. For more information on kdump, refer to the Book “System Analysis and Tuning Guide”, Chapter 19 “Kexec and Kdump”, Section 19.7 “Basic Kdump configuration”. yast kdump accepts the following commands:

- copykernel

Copies the kernel into the dump directory.

- customkernel

Specifies the kernel_string part of the name of the custom kernel. The naming scheme is /boot/vmlinu[zx]-kernel_string[.gz].

> sudo yast kdump customkernel kernel=kdump

For a complete list of options, run yast kdump customkernel help.

- dumpformat

Specifies the (compression) format of the dump kernel image. Available formats are 'none', 'ELF', 'compressed', or 'lzo':

> sudo yast kdump dumpformat dump_format=ELF

- dumplevel

Specifies the dump level number in the range from 0 to 31:

> sudo yast kdump dumplevel dump_level=24

- dumptarget

Specifies the destination for saving dump images:

> sudo yast kdump dumptarget target=ssh server=name_server port=22 \
  dir=/var/log/dump user=user_name

For a complete list of options, run yast kdump dumptarget help.

- immediatereboot

Controls whether the system should reboot immediately after saving the core in the kdump kernel:

> sudo yast kdump immediatereboot enable
> sudo yast kdump immediatereboot disable

- keepolddumps

Specifies how many old dump images are kept. Specify zero to keep them all:

> sudo yast kdump keepolddumps no=5

- kernelcommandline

Specifies the command line to pass to the kdump kernel:

> sudo yast kdump kernelcommandline command="ro root=LABEL=/"

- kernelcommandlineappend

Specifies the command line to append to the default command line string:

> sudo yast kdump kernelcommandlineappend command="ro root=LABEL=/"

- notificationcc

Specifies an e-mail address for sending copies of notification messages:

> sudo yast kdump notificationcc email="user1@example.com user2@example.com"

- notificationto

Specifies an e-mail address for sending notification messages:

> sudo yast kdump notificationto email="user1@example.com user2@example.com"

- show

Displays kdump settings:

> sudo yast kdump show
Kdump is disabled
Dump Level: 31
Dump Format: compressed
Dump Target Settings
target: file
file directory: /var/crash
Kdump immediate reboots: Enabled
Numbers of old dumps: 5

- smtppass

Specifies the file with the plain text SMTP password used for sending notification messages:

> sudo yast kdump smtppass pass=/path/to/file

- smtpserver

Specifies the SMTP server host name used for sending notification messages:

> sudo yast kdump smtpserver server=smtp.server.com

- smtpuser

Specifies the SMTP user name used for sending notification messages:

> sudo yast kdump smtpuser user=smtp_user

- startup

Enables or disables start-up options:

> sudo yast kdump startup enable alloc_mem=128,256
> sudo yast kdump startup disable
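The documented limits for dumplevel (0 to 31) and dumpformat (none, ELF, compressed, lzo) can be checked in a script before the values are handed to YaST. The following sketch does that; the function name kdump_set_dump and the YAST override variable are assumptions made for illustration.

```shell
# Hypothetical helper (not part of YaST): validate the dump level and dump
# format against the values listed in this section, then apply both.
# YAST is an assumed override for dry runs, for example YAST=echo.
kdump_set_dump() {
    local level="$1" format="$2"
    case "$level" in
        ''|*[!0-9]*) echo "dump level must be a number from 0 to 31" >&2; return 2 ;;
    esac
    if [ "$level" -gt 31 ]; then
        echo "dump level must be a number from 0 to 31" >&2
        return 2
    fi
    case "$format" in
        none|ELF|compressed|lzo) ;;
        *) echo "unknown dump format: '$format'" >&2; return 2 ;;
    esac
    # Left unquoted on purpose so that "sudo yast" splits into two words.
    ${YAST:-sudo yast} kdump dumplevel dump_level="$level" &&
    ${YAST:-sudo yast} kdump dumpformat dump_format="$format"
}
```

For example, YAST=echo kdump_set_dump 24 ELF prints the two commands, while a level of 32 is rejected without calling YaST.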

4.4.3.10 yast keyboard

Configures the system keyboard for virtual consoles. It does not affect the keyboard settings in graphical desktop environments, such as GNOME or KDE. yast keyboard accepts the following commands:

- list

Lists all available keyboard layouts.

- set

Activates a new keyboard layout:

> sudo yast keyboard set layout=czech

- summary

Displays the current keyboard configuration.

4.4.3.11 yast lan

Configures network cards. yast lan accepts the following commands:

- add

Configures a new network card:

> sudo yast lan add name=vlan50 ethdevice=eth0 bootproto=dhcp

For a complete list of options, run yast lan add help.

- delete

Deletes an existing network card:

> sudo yast lan delete id=0

- edit

Changes the configuration of an existing network card:

> sudo yast lan edit id=0 bootproto=dhcp

- list

Displays a summary of network card configuration:

> sudo yast lan list
id name, bootproto
0 Ethernet Card 0, NONE
1 Network Bridge, DHCP
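The edit and delete commands need the numeric id shown by list. The sketch below extracts the ids of all cards with a given boot protocol from that listing. It assumes the layout shown in the example above (id first, boot protocol after the last comma); the function name lan_ids_with_bootproto is hypothetical.

```shell
# Hypothetical helper (not part of YaST): read the card listing on standard
# input and print the id of every card whose bootproto matches $1.
lan_ids_with_bootproto() {
    awk -v want="$1" '
        /^[0-9]+[ \t]/ {                       # data rows start with the id
            n = split($0, parts, /,[ \t]*/)
            proto = parts[n]                   # text after the last comma
            sub(/[ \t]+$/, "", proto)
            if (toupper(proto) == toupper(want)) print $1
        }
    '
}
```

A possible call is sudo yast lan list 2>&1 | lan_ids_with_bootproto dhcp. The 2>&1 is a precaution in case your YaST version writes the listing to standard error.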

4.4.3.12 yast language

Configures system languages. yast language accepts the following commands:

- list

Lists all available languages.

- set

Specifies the main system language and secondary languages:

> sudo yast language set lang=cs_CZ languages=en_US,es_ES no_packages
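The languages option takes a comma-separated list, which a script can build from separate arguments. The following is a minimal sketch: the function name language_set and the YAST override variable are assumptions, and the no_packages option from the example above is left out.

```shell
# Hypothetical helper (not part of YaST): set the main language ($1) and
# join all further arguments into the comma-separated languages list.
# YAST is an assumed override for dry runs, for example YAST=echo.
language_set() {
    if [ "$#" -lt 2 ]; then
        echo "usage: language_set PRIMARY SECONDARY..." >&2
        return 2
    fi
    local primary="$1" secondary
    shift
    # In the subshell, "$*" joins the arguments with the first IFS character.
    secondary=$(IFS=,; printf '%s' "$*")
    # Left unquoted on purpose so that "sudo yast" splits into two words.
    ${YAST:-sudo yast} language set lang="$primary" languages="$secondary"
}
```

For example, YAST=echo language_set cs_CZ en_US es_ES prints the command with languages=en_US,es_ES.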

4.4.3.13 yast mail

Displays the configuration of the mail system:

> sudo yast mail summary

4.4.3.14 yast nfs

Controls the NFS client. yast nfs accepts the following commands:

- add

Adds a new NFS mount:

> sudo yast nfs add spec=remote_host:/path/to/nfs/share file=/local/mount/point

For a complete list of options, run yast nfs add help.

- delete

Deletes an existing NFS mount:

> sudo yast nfs delete spec=remote_host:/path/to/nfs/share file=/local/mount/point

For a complete list of options, run yast nfs delete help.

- edit