5 LVM configuration

This chapter describes the principles behind Logical Volume Manager (LVM) and its basic features that make it useful under many circumstances. The YaST LVM configuration can be reached from the YaST Expert Partitioner. This partitioning tool enables you to edit and delete existing partitions and create new ones that should be used with LVM.

Using LVM might be associated with increased risk, such as data loss. Risks also include application crashes, power failures, and faulty commands. Save your data before implementing LVM or reconfiguring volumes. Never work without a backup.

5.1 Understanding the logical volume manager

LVM enables flexible distribution of hard disk space over several physical volumes (hard disks, partitions, LUNs). It was developed because the need to change the segmentation of hard disk space might arise only after the initial partitioning has already been done during installation. Because it is difficult to modify partitions on a running system, LVM provides a virtual pool (volume group or VG) of storage space from which logical volumes (LVs) can be created as needed. The operating system accesses these LVs instead of the physical partitions. Volume groups can span more than one disk, so that several disks or parts of them can constitute one single VG. In this way, LVM provides a kind of abstraction from the physical disk space that allows its segmentation to be changed in a much easier and safer way than through physical repartitioning.

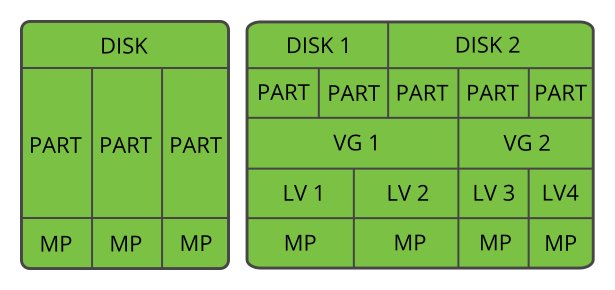

Figure 5.1, “Physical partitioning versus LVM” compares physical partitioning (left) with LVM segmentation (right). On the left side, one single disk has been divided into three physical partitions (PART), each with a mount point (MP) assigned so that the operating system can access them. On the right side, two disks have been divided into two and three physical partitions each. Two LVM volume groups (VG 1 and VG 2) have been defined. VG 1 contains two partitions from DISK 1 and one from DISK 2. VG 2 contains the remaining two partitions from DISK 2.

In LVM, the physical disk partitions that are incorporated in a volume group are called physical volumes (PVs). Within the volume groups in Figure 5.1, “Physical partitioning versus LVM”, four logical volumes (LV 1 through LV 4) have been defined, which can be used by the operating system via the associated mount points (MP). The border between different logical volumes need not be aligned with any partition border. See the border between LV 1 and LV 2 in this example.

LVM features:

Several hard disks or partitions can be combined in a large logical volume.

Provided the configuration is suitable, an LV (such as /usr) can be enlarged when the free space is exhausted.

Using LVM, it is possible to add hard disks or LVs in a running system. However, this requires hotpluggable hardware that is capable of such actions.

It is possible to activate a striping mode that distributes the data stream of a logical volume over several physical volumes. If these physical volumes reside on different disks, this can improve the reading and writing performance like RAID 0.

The snapshot feature enables consistent backups (especially for servers) in the running system.

Even though LVM also supports RAID levels 0, 1, 4, 5 and 6, we recommend using mdraid (see Chapter 7, Software RAID configuration). However, LVM works fine with RAID 0 and 1, as RAID 0 is similar to common logical volume management (individual logical blocks are mapped onto blocks on the physical devices). LVM used on top of RAID 1 can keep track of mirror synchronization and is fully able to manage the synchronization process. With higher RAID levels you need a management daemon that monitors the states of attached disks and can inform administrators if there is a problem in the disk array. LVM includes such a daemon, but in exceptional situations such as a device failure, the daemon does not work properly.

If you configure the system with a root file system on an LVM or software RAID array, you must place /boot/zipl on a separate, non-LVM or non-RAID partition, otherwise the system will fail to boot. The recommended size for such a partition is 500 MB and the recommended file system is Ext4.

With these features, using LVM already makes sense for heavily-used home PCs or small servers. If you have a growing data stock, as in the case of databases, music archives, or user directories, LVM is especially useful. It allows file systems that are larger than the physical hard disk. However, keep in mind that working with LVM is different from working with conventional partitions.

You can manage new or existing LVM storage objects by using the YaST Partitioner. Instructions and further information about configuring LVM are available in the official LVM HOWTO.

5.2 Creating volume groups

An LVM volume group (VG) organizes the Linux LVM partitions into a logical pool of space. You can carve out logical volumes from the available space in the group. The Linux LVM partitions in a group can be on the same or different disks. You can add partitions or entire disks to expand the size of the group.

To use an entire disk, it must not contain any partitions. When using partitions, they must not be mounted. YaST will automatically change their partition type to 0x8E Linux LVM when adding them to a VG.

Launch YaST and open the Partitioner.

In case you need to reconfigure your existing partitioning setup, proceed as follows. Refer to Section 10.1, “Using the ” for details. Skip this step if you only want to use unused disks or partitions that already exist.

Warning: Physical volumes on unpartitioned disks

You can use an unpartitioned disk as a physical volume (PV) if that disk is not the one where the operating system is installed and from which it boots.

As unpartitioned disks appear as unused at the system level, they can easily be overwritten or wrongly accessed.

To use an entire hard disk that already contains partitions, delete all partitions on that disk.

To use a partition that is currently mounted, unmount it.

In the left panel, select Volume Management.

A list of existing Volume Groups opens in the right panel.

At the lower left of the Volume Management page, click .

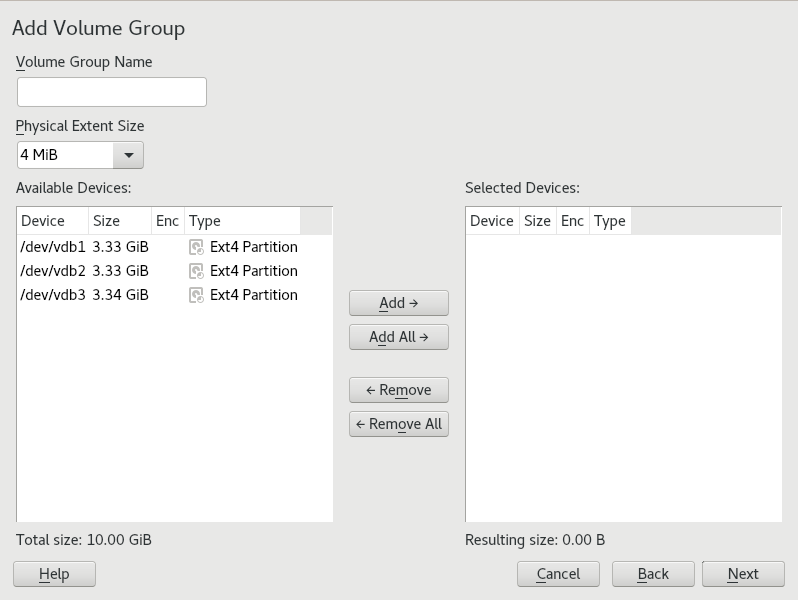

Define the volume group as follows:

Specify the volume group name.

If you are creating a volume group at install time, the name system is suggested for a volume group that will contain the SUSE Linux Enterprise Server system files.

Specify the physical extent size.

The physical extent size defines the size of a physical block in the volume group. All the disk space in a volume group is handled in chunks of this size. Values can be from 1 KB to 16 GB in powers of 2. This value is normally set to 4 MB.

In LVM1, a 4 MB physical extent allowed a maximum LV size of 256 GB because it supports only up to 65534 extents per LV. LVM2, which is used on SUSE Linux Enterprise Server, does not restrict the number of physical extents. Having many extents has no impact on I/O performance to the logical volume, but it slows down the LVM tools.
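The LVM1 limit quoted above follows from multiplying the extent limit by the extent size. The following is a minimal shell sketch of that arithmetic, assuming sizes are expressed in MiB; the function name max_lv_mib is made up for this illustration and is not part of LVM.

```shell
# Hypothetical helper (not an LVM tool): largest possible LV size in MiB
# for a given per-LV extent limit and physical extent size in MiB.
max_lv_mib() {
    extents=$1
    pe_mib=$2
    echo $(( extents * pe_mib ))
}

# LVM1: at most 65534 extents per LV, 4 MiB per extent.
max_lv_mib 65534 4
```

The result is 262136 MiB, which is just under 256 GiB (262144 MiB). Doubling the extent size doubles the limit, which is why larger extents were needed for larger volumes under LVM1.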

Important: Physical extent sizes

Different physical extent sizes should not be mixed in a single VG. The extent should not be modified after the initial setup.

In the list of available physical volumes, select the Linux LVM partitions that you want to make part of this volume group, then move them to the list of selected physical volumes.

Click .

The new group appears in the list.

On the Volume Management page, click , verify that the new volume group is listed, then click .

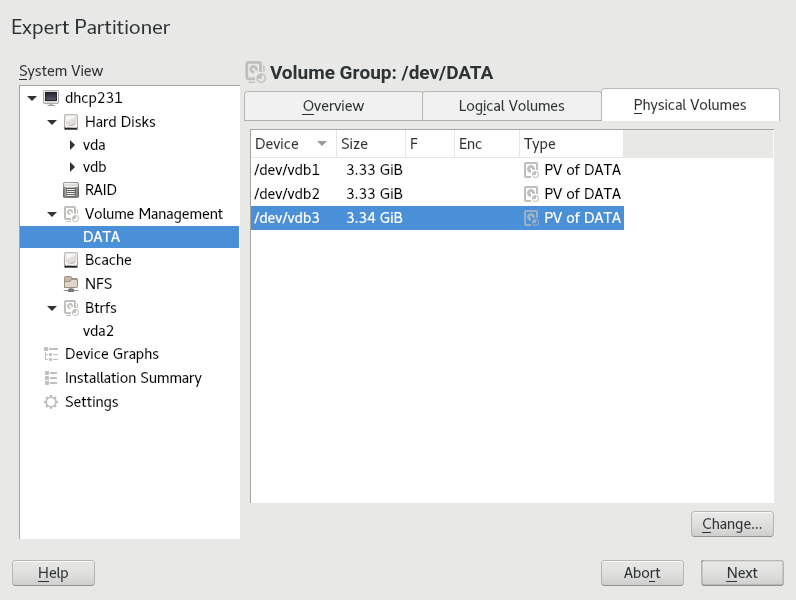

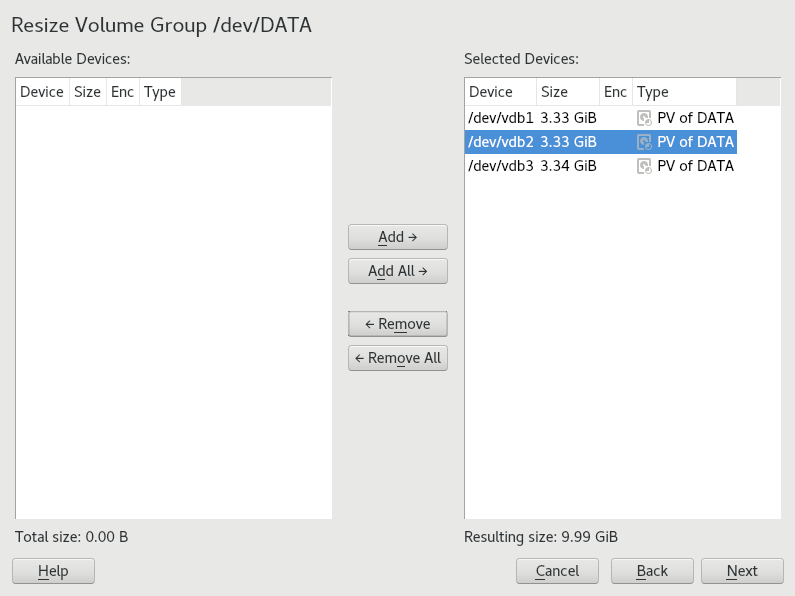

To check which physical devices are part of the volume group, open the YaST Partitioner at any time in the running system and click › › . Leave this screen with .

Figure 5.2: Physical volumes in the volume group named DATA

5.3 Creating logical volumes

A volume group provides a pool of space similar to what a hard disk does. To make this space usable, you need to define logical volumes. A logical volume is similar to a regular partition: you can format and mount it.

Use the YaST Partitioner to create logical volumes from an existing volume group. Assign at least one logical volume to each volume group. You can create new logical volumes as needed until all free space in the volume group has been exhausted. An LVM logical volume can optionally be thinly provisioned, allowing you to create logical volumes with sizes that overbook the available free space (see Section 5.3.1, “Thinly provisioned logical volumes” for more information). The following volume types are available:

Normal volume: (Default) The volume’s space is allocated immediately.

Thin pool: The logical volume is a pool of space that is reserved for use with thin volumes. The thin volumes can allocate their needed space from it on demand.

Thin volume: The volume is created as a sparse volume. The volume allocates needed space on demand from a thin pool.

Mirrored volume: The volume is created with a defined count of mirrors.

Launch YaST and open the Partitioner.

In the left panel, select Volume Management. A list of existing Volume Groups opens in the right panel.

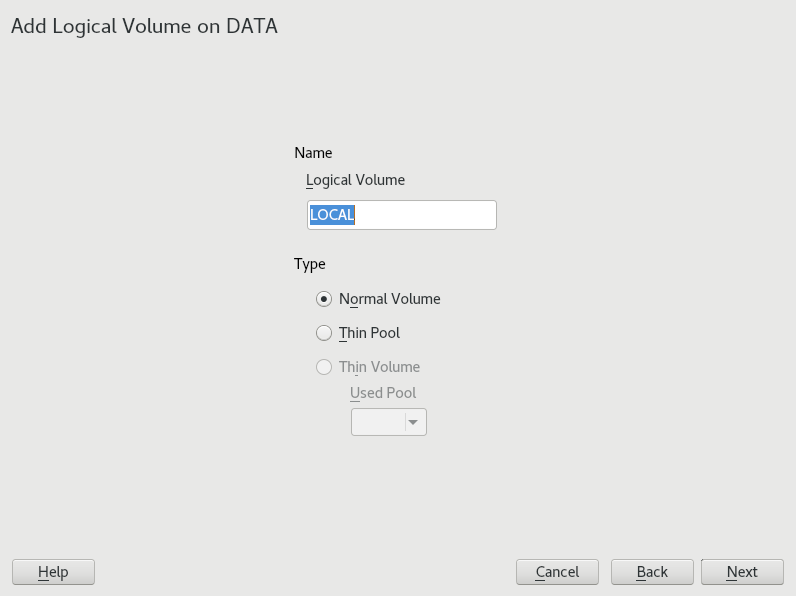

Select the volume group in which you want to create the volume and choose › .

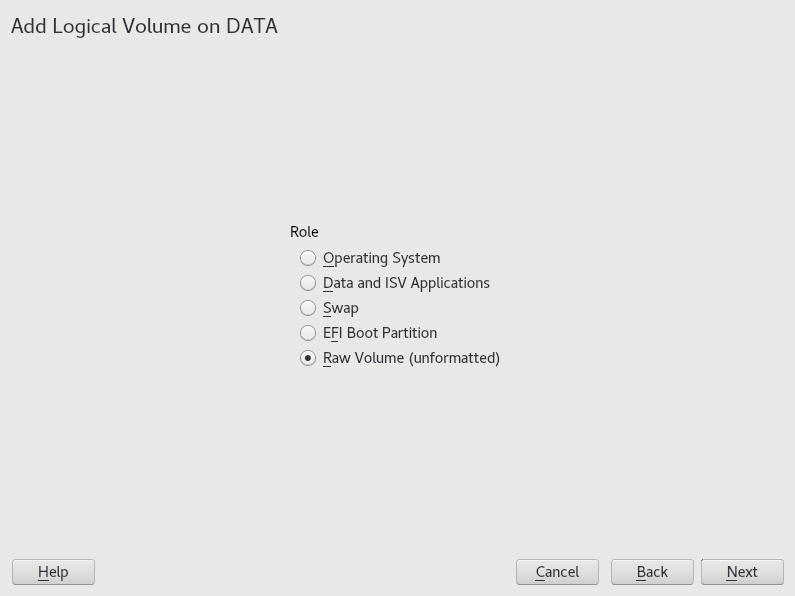

Provide a name for the volume and choose the volume type (refer to Section 5.3.1, “Thinly provisioned logical volumes” for setting up thinly provisioned volumes). Proceed with .

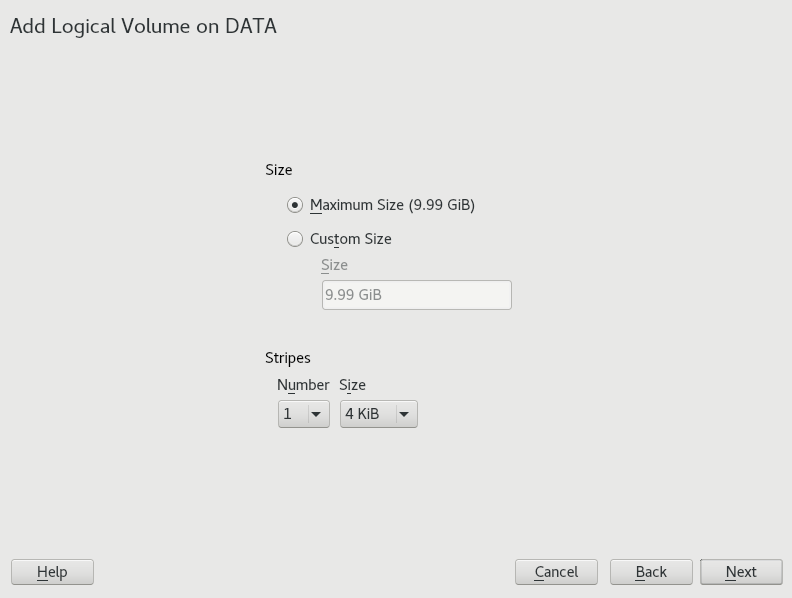

Specify the size of the volume and whether to use multiple stripes.

Using a striped volume, the data will be distributed among several physical volumes. If these physical volumes reside on different hard disks, this generally results in a better reading and writing performance (like RAID 0). The maximum number of available stripes is equal to the number of physical volumes. The default (1) is to not use multiple stripes.

Choose a for the volume. Your choice here only affects the default values for the upcoming dialog. They can be changed in the next step. If in doubt, choose .

Under , select , then select the . The content of the menu depends on the file system. Usually there is no need to change the defaults.

Under , select , then select the mount point. Click to add special mounting options for the volume.

Click .

Click , verify that the changes are listed, then click .

5.3.1 Thinly provisioned logical volumes

An LVM logical volume can optionally be thinly provisioned. Thin provisioning allows you to create logical volumes with sizes that overbook the available free space. You create a thin pool that contains unused space reserved for use with an arbitrary number of thin volumes. A thin volume is created as a sparse volume and space is allocated from a thin pool as needed. The thin pool can be expanded dynamically when needed for cost-effective allocation of storage space. Thinly provisioned volumes also support snapshots which can be managed with Snapper—see Chapter 7, System recovery and snapshot management with Snapper for more information.

To set up a thinly provisioned logical volume, proceed as described in Procedure 5.1, “Setting up a logical volume”. When it comes to choosing the volume type, do not choose Normal volume, but rather Thin pool or Thin volume.

Thin pool: The logical volume is a pool of space that is reserved for use with thin volumes. The thin volumes can allocate their needed space from it on demand.

Thin volume: The volume is created as a sparse volume. The volume allocates needed space on demand from a thin pool.

To use thinly provisioned volumes in a cluster, the thin pool and the thin volumes that use it must be managed in a single cluster resource. This allows the thin volumes and thin pool to always be mounted exclusively on the same node.

5.3.2 Creating mirrored volumes

A logical volume can be created with several mirrors. LVM ensures that data written to an underlying physical volume is mirrored onto a different physical volume. Thus, even if a physical volume fails, you can still access the data on the logical volume. LVM also keeps a log file to manage the synchronization process. The log contains information about which volume regions are currently undergoing synchronization with mirrors. By default, the log is stored on disk and, if possible, on a different disk than the mirrors. However, you may specify a different location for the log, for example volatile memory.

Currently there are two types of mirror implementation available: "normal" (non-raid) mirror logical volumes and raid1 logical volumes.

After you create mirrored logical volumes, you can perform standard operations with mirrored logical volumes like activating, extending, and removing.

5.3.2.1 Setting up mirrored non-RAID logical volumes

To create a mirrored volume, use the lvcreate command. The following example creates a 500 GB logical volume with two mirrors called lv1, which uses a volume group vg1.

> sudo lvcreate -L 500G -m 2 -n lv1 vg1

Such a logical volume is a linear volume (without striping) that provides three copies of the file system. The -m option specifies the count of mirrors. The -L option specifies the size of the logical volume.
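Because each mirror is a full extra copy, the raw space a mirrored volume consumes grows with the mirror count. The following shell sketch illustrates the relationship for the example above. The helper name mirror_space_gib is hypothetical, and the calculation deliberately ignores the space taken by the mirror log and LVM metadata.

```shell
# Hypothetical helper (not an LVM tool): number of data copies and the raw
# space they occupy for an LV of SIZE GiB created with "-m MIRRORS".
# The mirror log and LVM metadata are not included in the estimate.
mirror_space_gib() {
    size_gib=$1
    mirrors=$2
    copies=$(( mirrors + 1 ))   # the original plus each mirror
    echo "$copies copies, $(( size_gib * copies )) GiB"
}

# The example above: -L 500G -m 2
mirror_space_gib 500 2
```

For the 500 GB volume with two mirrors, the sketch reports three copies and 1500 GiB of raw space, so the volume group needs at least that much free space spread over suitable physical volumes.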

The logical volume is divided into regions of the 512 KB default size. If you need a different size of regions, use the -R option followed by the desired region size in megabytes. Or you can configure the preferred region size by editing the mirror_region_size option in the lvm.conf file.

5.3.2.2 Setting up raid1 logical volumes

As LVM supports RAID you can implement mirroring by using RAID1. Such implementation provides the following advantages compared to the non-raid mirrors:

LVM maintains a fully redundant bitmap area for each mirror image, which increases its fault handling capabilities.

Mirror images can be temporarily split from the array and then merged back.

The array can handle transient failures.

The LVM RAID 1 implementation supports snapshots.

On the other hand, this type of mirroring implementation does not allow you to create a logical volume in a clustered volume group.

To create a mirror volume by using RAID, issue the command

> sudo lvcreate --type raid1 -m 1 -L 1G -n lv1 vg1

where the options/parameters have the following meanings:

--type: You need to specify raid1, otherwise the command uses the implicit segment type mirror and creates a non-raid mirror.

-m: Specifies the count of mirrors.

-L: Specifies the size of the logical volume.

-n: Specifies the name of the logical volume.

vg1: The name of the volume group used by the logical volume.

LVM creates a logical volume of one extent size for each data volume in the array. If you have two mirrored volumes, LVM creates another two volumes that store metadata.

After you create a RAID logical volume, you can use the volume in the same way as a common logical volume. You can activate it, extend it, etc.

5.4 Automatically activating non-root LVM volume groups

Activation behavior for non-root LVM volume groups is controlled in the /etc/lvm/lvm.conf file and by the auto_activation_volume_list parameter. By default, the parameter is empty and all volumes are activated. To activate only some volume groups, add the names in quotes and separate them with commas, for example:

auto_activation_volume_list = [ "vg1", "vg2/lvol1", "@tag1", "@*" ]

If you have defined a list in the auto_activation_volume_list parameter, the following will happen:

Each logical volume is first checked against this list.

If it does not match, the logical volume will not be activated.

By default, non-root LVM volume groups are automatically activated on system restart by Dracut. This parameter allows you to activate all volume groups on system restart, or to activate only specified non-root LVM volume groups.

5.5 Resizing an existing volume group

The space provided by a volume group can be expanded at any time in the running system without service interruption by adding physical volumes. This will allow you to add logical volumes to the group or to expand the size of existing volumes as described in Section 5.6, “Resizing a logical volume”.

It is also possible to reduce the size of the volume group by removing physical volumes. YaST only allows you to remove physical volumes that are currently unused. To find out which physical volumes are currently in use, run the following command. The partitions (physical volumes) listed in the PE Ranges column are the ones in use:

> sudo pvs -o vg_name,lv_name,pv_name,seg_pe_ranges

    root's password:
    VG   LV    PV         PE Ranges
               /dev/sda1
    DATA DEVEL /dev/sda5  /dev/sda5:0-3839
    DATA       /dev/sda5
    DATA LOCAL /dev/sda6  /dev/sda6:0-2559
    DATA       /dev/sda7
    DATA       /dev/sdb1
    DATA       /dev/sdc1
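On systems with many physical volumes, the same check can be scripted. The following is a minimal sketch, assuming that rows for unallocated segments have an empty PE Ranges column, as in the output above. The function name used_pvs is made up for this example. It reads rows consisting of a PV name and an optional PE range from standard input.

```shell
# Print each PV that has at least one allocated segment.
# Expects rows of "pv_name [seg_pe_ranges]" on standard input; rows with
# only one field are treated as free space and skipped.
used_pvs() {
    awk 'NF >= 2 { print $1 }' | sort -u
}

# Intended use on a live system (needs root privileges):
#   sudo pvs --noheadings -o pv_name,seg_pe_ranges | used_pvs

# Demonstration with sample rows modelled on the output above.
printf '%s\n' \
    '  /dev/sda1' \
    '  /dev/sda5  /dev/sda5:0-3839' \
    '  /dev/sda5' \
    '  /dev/sda6  /dev/sda6:0-2559' \
    '  /dev/sda7' | used_pvs
```

With the sample rows, only /dev/sda5 and /dev/sda6 are printed. Any physical volume that does not appear in the result holds no logical volume data and is a candidate for removal from the volume group.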

Launch YaST and open the Partitioner.

In the left panel, select Volume Management. A list of existing Volume Groups opens in the right panel.

Select the volume group you want to change, activate the tab, then click .

Do one of the following:

Add: Expand the size of the volume group by moving one or more physical volumes (LVM partitions) from the list of available physical volumes to the list of selected physical volumes.

Remove: Reduce the size of the volume group by moving one or more physical volumes (LVM partitions) from the list of selected physical volumes to the list of available physical volumes.

Click .

Click , verify that the changes are listed, then click .

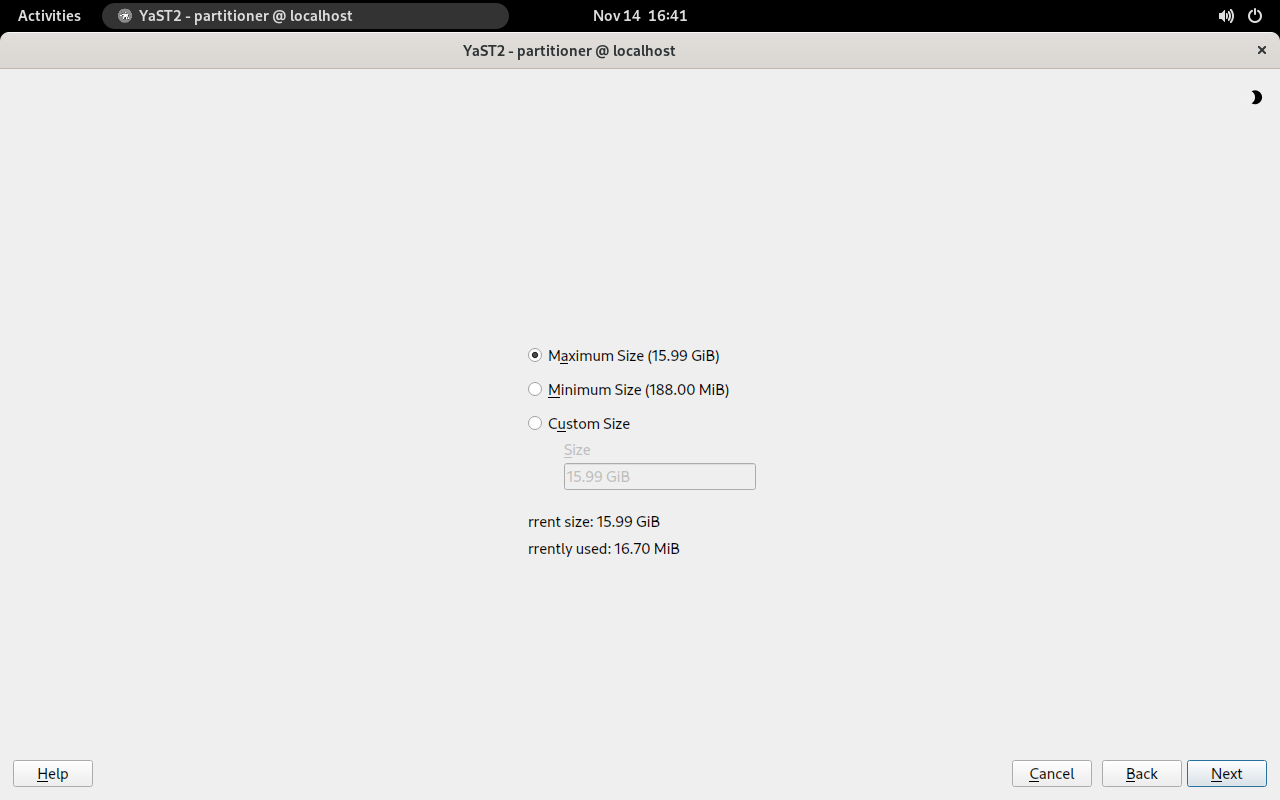

5.6 Resizing a logical volume

In case there is unused free space available in the volume group, you can enlarge a logical volume to provide more usable space. You may also reduce the size of a volume to free space in the volume group that can be used by other logical volumes.

When reducing the size of a volume, YaST automatically resizes its file system, too. Whether a volume that is currently mounted can be resized “online” (that is while being mounted), depends on its file system. Growing the file system online is supported by Btrfs, XFS, Ext3, and Ext4.

Shrinking the file system online is only supported by Btrfs. To shrink the Ext2/3/4 file systems, you need to unmount them. Shrinking volumes formatted with XFS is not possible, since XFS does not support file system shrinking.

Launch YaST and open the Partitioner.

In the left panel, select the LVM volume group.

Select the logical volume you want to change in the right panel.

Click and then .

Set the intended size by using one of the following options:

Maximum size. Expand the size of the logical volume to use all space left in the volume group.

Minimum size. Reduce the size of the logical volume to the size occupied by the data and the file system metadata.

Custom size. Specify the new size for the volume. The value must be within the range of the minimum and maximum values listed above. Use K, M, G, T for Kilobytes, Megabytes, Gigabytes and Terabytes (for example 20G).

Click , verify that the change is listed, then click .

5.7 Deleting a volume group or a logical volume

Deleting a volume group destroys all of the data in each of its member partitions. Deleting a logical volume destroys all data stored on the volume.

Launch YaST and open the Partitioner.

In the left panel, select Volume Management. A list of existing volume groups opens in the right panel.

Select the volume group or the logical volume you want to remove and click .

Depending on your choice, warning dialogs are shown. Confirm them with .

Click , verify that the deleted volume group is listed—deletion is indicated by a red-colored font—then click .

5.8 Disabling LVM on boot

If there is an error on the LVM storage, the scanning of LVM volumes may prevent entering the emergency/rescue shell. Thus, further problem diagnosis is not possible. To disable this scanning in case of an LVM storage failure, you can pass the nolvm option on the kernel command line.

5.9 Using LVM commands

For information about using LVM commands, see the man pages for the commands described in the following table. All commands need to be executed with root privileges. Either use sudo COMMAND (recommended), or execute them directly as root.

pvcreate DEVICE

Initializes a device (such as /dev/sdb1) for use by LVM as a physical volume. If there is any file system on the specified device, a warning appears. Bear in mind that pvcreate checks for existing file systems only if blkid is installed (which is done by default). If blkid is not available, pvcreate will not produce any warning and you may lose your file system without any warning.

pvdisplay DEVICE

Displays information about the LVM physical volume, such as whether it is currently being used in a logical volume.

vgcreate -c y VG_NAME DEV1 [DEV2...]

Creates a clustered volume group with one or more specified devices.

vgcreate --activationmode ACTIVATION_MODE VG_NAME

Configures the mode of volume group activation. You can specify one of the following values:

complete: Only the logical volumes that are not affected by missing physical volumes can be activated, even though the particular logical volume can tolerate such a failure.

degraded: The default activation mode. If there is a sufficient level of redundancy to activate a logical volume, the logical volume can be activated even though some physical volumes are missing.

partial: LVM tries to activate the volume group even though some physical volumes are missing. If a non-redundant logical volume is missing important physical volumes, then the logical volume usually cannot be activated and is handled as an error target.

vgchange -a [ey|n] VG_NAME

Activates (-a ey) or deactivates (-a n) a volume group and its logical volumes for input/output.

When activating a volume in a cluster, ensure that you use the ey option. This option is used by default in the load script.

vgremove VG_NAME

Removes a volume group. Before using this command, remove the logical volumes, then deactivate the volume group.

vgdisplay VG_NAME

Displays information about a specified volume group.

To find the total physical extent of a volume group, enter

> vgdisplay VG_NAME | grep "Total PE"

lvcreate -L SIZE -n LV_NAME VG_NAME

Creates a logical volume of the specified size.

lvcreate -L SIZE --thinpool POOL_NAME VG_NAME

Creates a thin pool named POOL_NAME of the specified size from the volume group VG_NAME.

The following example creates a thin pool named myPool with a size of 5 GB from the volume group LOCAL:

> sudo lvcreate -L 5G --thinpool myPool LOCAL

lvcreate -T VG_NAME/POOL_NAME -V SIZE -n LV_NAME

Creates a thin logical volume within the pool POOL_NAME. The following example creates a 1 GB thin volume named myThin1 from the pool myPool on the volume group LOCAL:

> sudo lvcreate -T LOCAL/myPool -V 1G -n myThin1

lvcreate -T VG_NAME/POOL_NAME -V SIZE -L SIZE -n LV_NAME

It is also possible to combine thin pool and thin logical volume creation in one command:

> sudo lvcreate -T LOCAL/myPool -V 1G -L 5G -n myThin1

lvcreate --activationmode ACTIVATION_MODE LV_NAME

Configures the mode of logical volume activation. You can specify one of the following values:

complete: The logical volume can be activated only if all its physical volumes are active.

degraded: The default activation mode. If there is a sufficient level of redundancy to activate a logical volume, the logical volume can be activated even though some physical volumes are missing.

partial: LVM tries to activate the volume even though some physical volumes are missing. In this case part of the logical volume may be unavailable and it might cause data loss. This option is typically not used, but might be useful when restoring data.

You can also specify the activation mode in /etc/lvm/lvm.conf by setting the activation_mode configuration option to one of the values described above.

lvcreate -s [-L SIZE] -n SNAP_VOLUME SOURCE_VOLUME_PATH VG_NAME

Creates a snapshot volume for the specified logical volume. If the size option (-L or --size) is not included, the snapshot is created as a thin snapshot.

lvremove /dev/VG_NAME/LV_NAME

Removes a logical volume.

Before using this command, close the logical volume by unmounting it with the umount command.

lvremove SNAP_VOLUME_PATH

Removes a snapshot volume.

lvconvert --merge SNAP_VOLUME_PATH

Reverts the logical volume to the version of the snapshot.

vgextend VG_NAME DEVICE

Adds the specified device (physical volume) to an existing volume group.

vgreduce VG_NAME DEVICE

Removes a specified physical volume from an existing volume group.

Ensure that the physical volume is not currently being used by a logical volume. If it is, you must move the data to another physical volume by using the pvmove command.

lvextend -L SIZE /dev/VG_NAME/LV_NAME

Extends the size of a specified logical volume. Afterward, you must also expand the file system to take advantage of the newly available space. See Chapter 2, Resizing file systems for details.

lvreduce -L SIZE /dev/VG_NAME/LV_NAME

Reduces the size of a specified logical volume.

Ensure that you reduce the size of the file system first before shrinking the volume, otherwise you risk losing data. See Chapter 2, Resizing file systems for details.

lvrename /dev/VG_NAME/LV_NAME /dev/VG_NAME/NEW_LV_NAME

Renames an existing LVM logical volume. It does not change the volume group name.

In case you want to manage LV device nodes and symbolic links by using LVM instead of by using udev rules, you can achieve this by disabling notifications from udev with one of the following methods:

Configure activation/udev_rules = 0 and activation/udev_sync = 0 in /etc/lvm/lvm.conf.

Note that specifying --noudevsync with the lvcreate command has the same effect as activation/udev_sync = 0; setting activation/udev_rules = 0 is still required.

Set the environment variable DM_DISABLE_UDEV:

export DM_DISABLE_UDEV=1

This will also disable notifications from udev. In addition, all udev related settings from /etc/lvm/lvm.conf will be ignored.

5.9.1 Resizing a logical volume with commands

The lvresize, lvextend, and lvreduce commands are used to resize logical volumes. See the man pages for each of these commands for syntax and options information. To extend an LV there must be enough unallocated space available on the VG.

The recommended way to grow or shrink a logical volume is to use the YaST Partitioner. When using YaST, the size of the file system in the volume will automatically be adjusted, too.

LVs can be extended or shrunk manually while they are being used, but this may not be true for a file system on them. Extending or shrinking the LV does not automatically modify the size of file systems in the volume. You must use a different command to grow the file system afterward. For information about resizing file systems, see Chapter 2, Resizing file systems.

Ensure that you use the right sequence when manually resizing an LV:

If you extend an LV, you must extend the LV before you attempt to grow the file system.

If you shrink an LV, you must shrink the file system before you attempt to shrink the LV.
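The ordering rule above can be captured in a small dry-run script. The following is only a sketch under stated assumptions: it assumes an Ext4 file system and uses the resize2fs and e2fsck tools from e2fsprogs, which are not covered in this chapter. The helper name resize_plan is hypothetical. It only prints the commands in the required order so that you can review them; it does not run anything.

```shell
# Hypothetical dry-run helper: prints the commands for resizing an LV with
# an Ext4 file system in the required order. Nothing is executed.
resize_plan() {
    action=$1   # grow or shrink
    lv=$2       # path to the LV, for example /dev/LOCAL/DATA
    size=$3     # new absolute size, for example 20G
    case $action in
        grow)
            # Extend the LV first, then let the file system fill it.
            echo "lvextend -L $size $lv"
            echo "resize2fs $lv"
            ;;
        shrink)
            # Shrink the file system first (offline for Ext4), then the LV.
            echo "umount $lv"
            echo "e2fsck -f $lv"
            echo "resize2fs $lv $size"
            echo "lvreduce -L $size $lv"
            ;;
        *)
            echo "usage: resize_plan grow|shrink LV SIZE" >&2
            return 1
            ;;
    esac
}

resize_plan shrink /dev/LOCAL/DATA 20G
```

Printing the plan instead of executing it is a deliberate choice here: a wrong order when shrinking can destroy data, so reviewing the sequence before running each command with sudo is the safer workflow.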

To extend the size of a logical volume:

Open a terminal.

If the logical volume contains an Ext2 file system, which does not support online growing, unmount it. In case it contains file systems that are hosted for a virtual machine (such as a Xen VM), shut down the VM first.

At the terminal prompt, enter the following command to grow the size of the logical volume:

> sudo lvextend -L +SIZE /dev/VG_NAME/LV_NAME

For SIZE, specify the amount of space you want to add to the logical volume, such as 10 GB. Replace /dev/VG_NAME/LV_NAME with the Linux path to the logical volume, such as /dev/LOCAL/DATA. For example:

> sudo lvextend -L +10GB /dev/vg1/v1

Adjust the size of the file system. See Chapter 2, Resizing file systems for details.

In case you have unmounted the file system, mount it again.

For example, to extend an LV with a (mounted and active) Btrfs on it by 10 GB:

> sudo lvextend -L +10G /dev/LOCAL/DATA

> sudo btrfs filesystem resize +10G /dev/LOCAL/DATA

To shrink the size of a logical volume:

Open a terminal.

If the logical volume does not contain a Btrfs file system, unmount it. In case it contains file systems that are hosted for a virtual machine (such as a Xen VM), shut down the VM first. Note that volumes with the XFS file system cannot be reduced in size.

Adjust the size of the file system. See Chapter 2, Resizing file systems for details.

At the terminal prompt, enter the following command to shrink the size of the logical volume to the size of the file system:

> sudo lvreduce -L SIZE /dev/VG_NAME/LV_NAME

In case you have unmounted the file system, mount it again.

For example, to shrink an LV with a Btrfs on it by 5 GB:

> sudo btrfs filesystem resize -5G /dev/LOCAL/DATA

> sudo lvreduce -L -5G /dev/LOCAL/DATA

Starting with SUSE Linux Enterprise Server 12 SP1, lvextend, lvresize, and lvreduce support the option --resizefs, which not only changes the size of the volume, but also resizes the file system. Therefore the examples for lvextend and lvreduce shown above can alternatively be run as follows:

> sudo lvextend --resizefs -L +10G /dev/LOCAL/DATA

> sudo lvreduce --resizefs -L -5G /dev/LOCAL/DATA

Note that --resizefs is supported for the following file systems: ext2/3/4, Btrfs, XFS. Resizing Btrfs with this option is currently only available on SUSE Linux Enterprise Server, since it is not yet accepted upstream.

5.9.2 Using LVM cache volumes

LVM supports the use of fast block devices (such as an SSD device) as write-back or write-through caches for large slower block devices. The cache logical volume type uses a small and fast LV to improve the performance of a large and slow LV.

To set up LVM caching, you need to create two logical volumes on the caching device. A large one is used for the caching itself, a smaller volume is used to store the caching metadata. These two volumes need to be part of the same volume group as the original volume. When these volumes are created, they need to be converted into a cache pool which needs to be attached to the original volume:

Create the original volume (on a slow device) if not already existing.

Add the physical volume (from a fast device) to the same volume group the original volume is part of and create the cache data volume on the physical volume.

Create the cache metadata volume. The size should be 1/1000 of the size of the cache data volume, with a minimum size of 8 MB.

Combine the cache data volume and metadata volume into a cache pool volume:

> sudo lvconvert --type cache-pool --poolmetadata VOLUME_GROUP/METADATA_VOLUME VOLUME_GROUP/CACHING_VOLUME

Attach the cache pool to the original volume:

> sudo lvconvert --type cache --cachepool VOLUME_GROUP/CACHING_VOLUME VOLUME_GROUP/ORIGINAL_VOLUME

For more information on LVM caching, see the lvmcache(7) man page.
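The sizing rule for the cache metadata volume given in the steps above (1/1000 of the cache data volume, but at least 8 MB) can be worked out with a short shell sketch. The helper name cache_meta_mib is made up for this example, sizes are assumed to be in MiB, and rounding up to the next whole MiB is an assumption of this sketch rather than something the procedure prescribes.

```shell
# Hypothetical helper (not an LVM tool): suggested size in MiB of the cache
# metadata LV for a cache data LV of the given size in MiB.
# Rule from the procedure: 1/1000 of the data size, at least 8 MiB.
# Rounding up to a whole MiB is an assumption made here.
cache_meta_mib() {
    data_mib=$1
    meta=$(( (data_mib + 999) / 1000 ))
    if [ "$meta" -lt 8 ]; then
        meta=8
    fi
    echo "$meta"
}

cache_meta_mib 4096     # 4 GiB cache data volume
cache_meta_mib 20480    # 20 GiB cache data volume
```

For a 4 GiB cache data volume the minimum of 8 MiB applies, while a 20 GiB cache data volume needs a 21 MiB metadata volume. The result can then be passed as the size when creating the metadata volume with lvcreate -L.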

5.10 Tagging LVM2 storage objects

A tag is an unordered keyword or term assigned to the metadata of a storage object. Tagging allows you to classify collections of LVM storage objects in ways that you find useful by attaching an unordered list of tags to their metadata.

5.10.1 Using LVM2 tags

After you tag the LVM2 storage objects, you can use the tags in commands to accomplish the following tasks:

Select LVM objects for processing according to the presence or absence of specific tags.

Use tags in the configuration file to control which volume groups and logical volumes are activated on a server.

Override settings in a global configuration file by specifying tags in the command.

A tag can be used in place of any command line LVM object reference that accepts:

a list of objects

a single object as long as the tag expands to a single object

Replacing the object name with a tag is not supported everywhere yet. After the arguments are expanded, duplicate arguments in a list are resolved by removing the duplicate arguments, and retaining the first instance of each argument.

Wherever there might be ambiguity of argument type, you must prefix a tag with the commercial at sign (@) character, such as @mytag. Elsewhere, using the “@” prefix is optional.

5.10.2 Requirements for creating LVM2 tags

Consider the following requirements when using tags with LVM:

- Supported characters

An LVM tag word can contain the ASCII uppercase characters A to Z, lowercase characters a to z, numbers 0 to 9, underscore (_), plus (+), hyphen (-), and period (.). The word cannot begin with a hyphen. The maximum length is 128 characters.

- Supported storage objects

You can tag LVM2 physical volumes, volume groups, logical volumes, and logical volume segments. Physical volume tags are stored in the metadata of the volume group that the physical volume belongs to. Deleting a volume group also deletes the tags of the orphaned physical volumes. Snapshots cannot be tagged, but their origin can be tagged.

LVM1 objects cannot be tagged because the disk format does not support it.
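As a convenience, the character and length rules listed above can be checked before a tag is passed to an LVM command. The following is a minimal sketch under those stated rules only; `is_valid_tag` is a hypothetical helper, and accepting an optional leading @ is an assumption made here for ease of use on the command line.

```shell
#!/bin/sh
# Sketch only: check a tag word against the rules stated in this section
# (A-Z, a-z, 0-9, underscore, plus, hyphen, period; no leading hyphen;
# at most 128 characters). is_valid_tag is a hypothetical helper.
is_valid_tag() {
    _tag=${1#@}                       # assumption: tolerate an optional @ prefix
    [ -n "$_tag" ] || return 1        # an empty word is not a tag
    [ "${#_tag}" -le 128 ] || return 1
    case $_tag in
        -*) return 1 ;;                       # must not begin with a hyphen
        *[!A-Za-z0-9_+.-]*) return 1 ;;       # contains an unsupported character
    esac
    return 0
}

for _candidate in db1 @database -bad 'two words'; do
    if is_valid_tag "$_candidate"; then
        echo "accepted: $_candidate"
    else
        echo "rejected: $_candidate"
    fi
done
```

Because POSIX sh has no local variables, the helper uses underscore-prefixed names to reduce the chance of clashing with variables in a calling script.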

5.10.3 Command line tag syntax #

- --addtag TAG_INFO

Add a tag to (or tag) an LVM2 storage object. Example:

> sudo vgchange --addtag @db1 vg1

- --deltag TAG_INFO

Remove a tag from (or untag) an LVM2 storage object. Example:

> sudo vgchange --deltag @db1 vg1

- --tag TAG_INFO

Specify the tag to use to narrow the list of volume groups or logical volumes to be activated or deactivated.

Enter the following to activate the volume if it has a tag that matches the tag provided (example):

> sudo lvchange -ay --tag @db1 vg1/vol2

5.10.4 Configuration file syntax #

The following sections show example configurations for certain use cases.

5.10.4.1 Enabling host name tags in the lvm.conf file #

Add the following code to the /etc/lvm/lvm.conf file to enable host tags that are defined separately on each host in a /etc/lvm/lvm_<HOSTNAME>.conf file.

tags {
   # Enable hostname tags
   hosttags = 1
}

You place the activation code in the /etc/lvm/lvm_<HOSTNAME>.conf file on the host. See Section 5.10.4.3, “Defining activation”.

5.10.4.2 Defining tags for host names in the lvm.conf file #

tags {
   tag1 { }
      # Tag does not require a match to be set.

   tag2 {
      # If no exact match, tag is not set.
      host_list = [ "hostname1", "hostname2" ]
   }
}

5.10.4.3 Defining activation #

You can modify the /etc/lvm/lvm.conf file to activate LVM logical volumes based on tags.

In a text editor, add the following code to the file:

activation {
   volume_list = [ "vg1/lvol0", "@database" ]
}

Replace @database with your tag. Use "@*" to match the tag against any tag set on the host.

The activation command matches against VGNAME, VGNAME/LVNAME, or @TAG set in the metadata of volume groups and logical volumes. A volume group or logical volume is activated only if a metadata tag matches. If there is no match, the default is not to activate.

If volume_list is not present and tags are defined on the host, then LVM activates the volume group or logical volumes only if a host tag matches a metadata tag.

If volume_list is defined, but empty, and no tags are defined on the host, then LVM does not activate anything.

If volume_list is undefined, it imposes no limits on LV activation (all are allowed).
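To make the matching rules easier to follow, here is a small shell model of the decision for the case where volume_list is defined. It is a sketch of the rules as described in this section, not LVM's implementation; the helper `would_activate` and its argument layout are hypothetical, and it deliberately does not cover the cases where volume_list is absent.

```shell
#!/bin/sh
# Sketch only: model how a defined volume_list is matched.
# usage: would_activate VG/LV "METADATA TAGS" "HOST TAGS" ITEM...
# Each ITEM stands for one volume_list entry (VGNAME, VGNAME/LVNAME,
# @TAG, or @*). Returns 0 if one entry matches, 1 otherwise.
would_activate() {
    _lv=$1; _meta_tags=$2; _host_tags=$3
    shift 3
    _vg=${_lv%%/*}                    # volume group part of VG/LV
    for _item in "$@"; do
        case $_item in
            "@*")                     # any host tag equal to a metadata tag
                for _h in $_host_tags; do
                    for _m in $_meta_tags; do
                        [ "$_h" = "$_m" ] && return 0
                    done
                done ;;
            @*)                       # a specific tag set in the metadata
                for _m in $_meta_tags; do
                    [ "@$_m" = "$_item" ] && return 0
                done ;;
            *)                        # VGNAME or VGNAME/LVNAME
                if [ "$_item" = "$_vg" ] || [ "$_item" = "$_lv" ]; then
                    return 0
                fi ;;
        esac
    done
    return 1                          # default: do not activate
}

# Mirrors volume_list = [ "vg1/lvol0", "@database" ] from the example above.
if would_activate vg2/data "database" "" "vg1/lvol0" "@database"; then
    echo "vg2/data would be activated (metadata tag matches @database)"
fi
```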

5.10.4.4 Defining activation in multiple host name configuration files #

You can use the activation code in a host’s configuration file (/etc/lvm/lvm_<HOST_TAG>.conf) when host tags are enabled in the lvm.conf file. For example, a server has two configuration files in the /etc/lvm/ directory:

lvm.conf

lvm_<HOST_TAG>.conf

At start-up, LVM loads the /etc/lvm/lvm.conf file and processes any tag settings in the file. If any host tags were defined, it loads the related /etc/lvm/lvm_<HOST_TAG>.conf file. When it searches for a specific configuration file entry, it searches the host tag file first, then the lvm.conf file, and stops at the first match. If there are several lvm_<HOST_TAG>.conf files, they are searched in the reverse order that the tags were set in. This allows the file for the last tag set to be searched first. New tags set in the host tag file trigger additional configuration file loads.
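The search order can be sketched as follows. This is one reading of the description above (host tag files in reverse order of tag definition, then lvm.conf), not output from LVM; `config_search_order` is a hypothetical helper and the tag names are examples.

```shell
#!/bin/sh
# Sketch only: print the order in which configuration files would be
# searched, given host tags in the order they were set.
# config_search_order is a hypothetical helper, not an LVM command.
config_search_order() {
    _order=/etc/lvm/lvm.conf          # lvm.conf is always searched last
    for _tag in "$@"; do
        # Each later tag is placed in front, so the last tag set
        # ends up being searched first.
        _order="/etc/lvm/lvm_${_tag}.conf
$_order"
    done
    printf '%s\n' "$_order"
}

# Tags set in the order: db1, then database.
config_search_order db1 database
```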

5.10.5 Using tags for a simple activation control in a cluster #

You can set up a simple host name activation control by enabling the hosttags option in the /etc/lvm/lvm.conf file. Use the same file on every machine in a cluster so that it is a global setting.

In a text editor, add the following code to the /etc/lvm/lvm.conf file:

tags {
   hosttags = 1
}

Replicate the file to all hosts in the cluster.

From any machine in the cluster, add db1 to the list of machines that activate vg1/lvol2:

> sudo lvchange --addtag @db1 vg1/lvol2

On the db1 server, enter the following to activate it:

> sudo lvchange -ay vg1/lvol2

5.10.6 Using tags to activate on preferred hosts in a cluster #

The examples in this section demonstrate two methods to accomplish the following:

Activate volume group vg1 only on the database hosts db1 and db2.

Activate volume group vg2 only on the file server host fs1.

Activate nothing initially on the file server backup host fsb1, but be prepared for it to take over from the file server host fs1.

5.10.6.1 Option 1: centralized admin and static configuration replicated between hosts #

In the following solution, the single configuration file is replicated among multiple hosts.

Add the @database tag to the metadata of volume group vg1. In a terminal, enter:

> sudo vgchange --addtag @database vg1

Add the @fileserver tag to the metadata of volume group vg2. In a terminal, enter:

> sudo vgchange --addtag @fileserver vg2

In a text editor, modify the /etc/lvm/lvm.conf file with the following code to define the @database, @fileserver, and @fileserverbackup tags.

tags {
   database {
      host_list = [ "db1", "db2" ]
   }
   fileserver {
      host_list = [ "fs1" ]
   }
   fileserverbackup {
      host_list = [ "fsb1" ]
   }
}

activation {
   # Activate only if host has a tag that matches a metadata tag
   volume_list = [ "@*" ]
}

Replicate the modified /etc/lvm/lvm.conf file to the four hosts: db1, db2, fs1, and fsb1.

If the file server host goes down, vg2 can be brought up on fsb1 by entering the following commands in a terminal on any node:

> sudo vgchange --addtag @fileserverbackup vg2

> sudo vgchange -ay vg2
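If you want to rehearse the failover before an outage, the two commands can be wrapped in a small script that only prints them by default. This is a sketch, not part of LVM: the helpers `run` and `failover_vg` and the `DRY_RUN` variable are hypothetical names introduced here, and nothing is changed unless you set DRY_RUN=0.

```shell
#!/bin/sh
# Sketch only: print (or run) the failover commands from the procedure
# above. DRY_RUN defaults to 1, so commands are shown, not executed.

run() {
    if [ "${DRY_RUN:-1}" = 1 ]; then
        printf '%s\n' "$*"            # show the command instead of running it
    else
        "$@"
    fi
}

# failover_vg VG TAG
# Add the backup host's tag to the volume group, then activate it.
# The second command runs only if the first one succeeded.
failover_vg() {
    run sudo vgchange --addtag "@$2" "$1" &&
    run sudo vgchange -ay "$1"
}

failover_vg vg2 fileserverbackup
```

Run it once as shown to review the commands, then repeat with DRY_RUN=0 set in the environment when you actually need to bring vg2 up.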

5.10.6.2 Option 2: localized admin and configuration #

In the following solution, each host holds locally the information about which classes of volume to activate.

Add the @database tag to the metadata of volume group vg1. In a terminal, enter:

> sudo vgchange --addtag @database vg1

Add the @fileserver tag to the metadata of volume group vg2. In a terminal, enter:

> sudo vgchange --addtag @fileserver vg2

Enable host tags in the /etc/lvm/lvm.conf file:

In a text editor, modify the /etc/lvm/lvm.conf file with the following code to enable host tag configuration files.

tags {
   hosttags = 1
}

Replicate the modified /etc/lvm/lvm.conf file to the four hosts: db1, db2, fs1, and fsb1.

On host db1, create an activation configuration file for the database host db1. In a text editor, create the /etc/lvm/lvm_db1.conf file and add the following code:

activation {
   volume_list = [ "@database" ]
}

On host db2, create an activation configuration file for the database host db2. In a text editor, create the /etc/lvm/lvm_db2.conf file and add the following code:

activation {
   volume_list = [ "@database" ]
}

On host fs1, create an activation configuration file for the file server host fs1. In a text editor, create the /etc/lvm/lvm_fs1.conf file and add the following code:

activation {
   volume_list = [ "@fileserver" ]
}

If the file server host fs1 goes down, to bring up a spare file server host fsb1 as a file server:

On host fsb1, create an activation configuration file for the host fsb1. In a text editor, create the /etc/lvm/lvm_fsb1.conf file and add the following code:

activation {
   volume_list = [ "@fileserver" ]
}

In a terminal, enter one of the following commands:

> sudo vgchange -ay vg2

> sudo vgchange -ay @fileserver