SAP HANA System Replication Scale-Out - Performance-Optimized Scenario #

with SAPHanaSR-angi

SAP

SUSE® Linux Enterprise Server for SAP applications is optimized in various ways for SAP* applications. This guide provides detailed information about installing and customizing _SUSE Linux Enterprise Server for SAP Applications_ for SAP HANA scale-out system replication in a multi-target architecture. It is based on SUSE Linux Enterprise Server for SAP applications 15 SP6. The concept however can be used with SUSE Linux Enterprise Server for SAP applications 15 SP4 or newer.

Disclaimer: Documents published as part of the SUSE Best Practices series have been contributed voluntarily by SUSE employees and third parties. They are meant to serve as examples of how particular actions can be performed. They have been compiled with utmost attention to detail. However, this does not guarantee complete accuracy. SUSE cannot verify that actions described in these documents do what is claimed or whether actions described have unintended consequences. SUSE LLC, its affiliates, the authors, and the translators may not be held liable for possible errors or the consequences thereof.

1 About this guide #

1.1 Introduction #

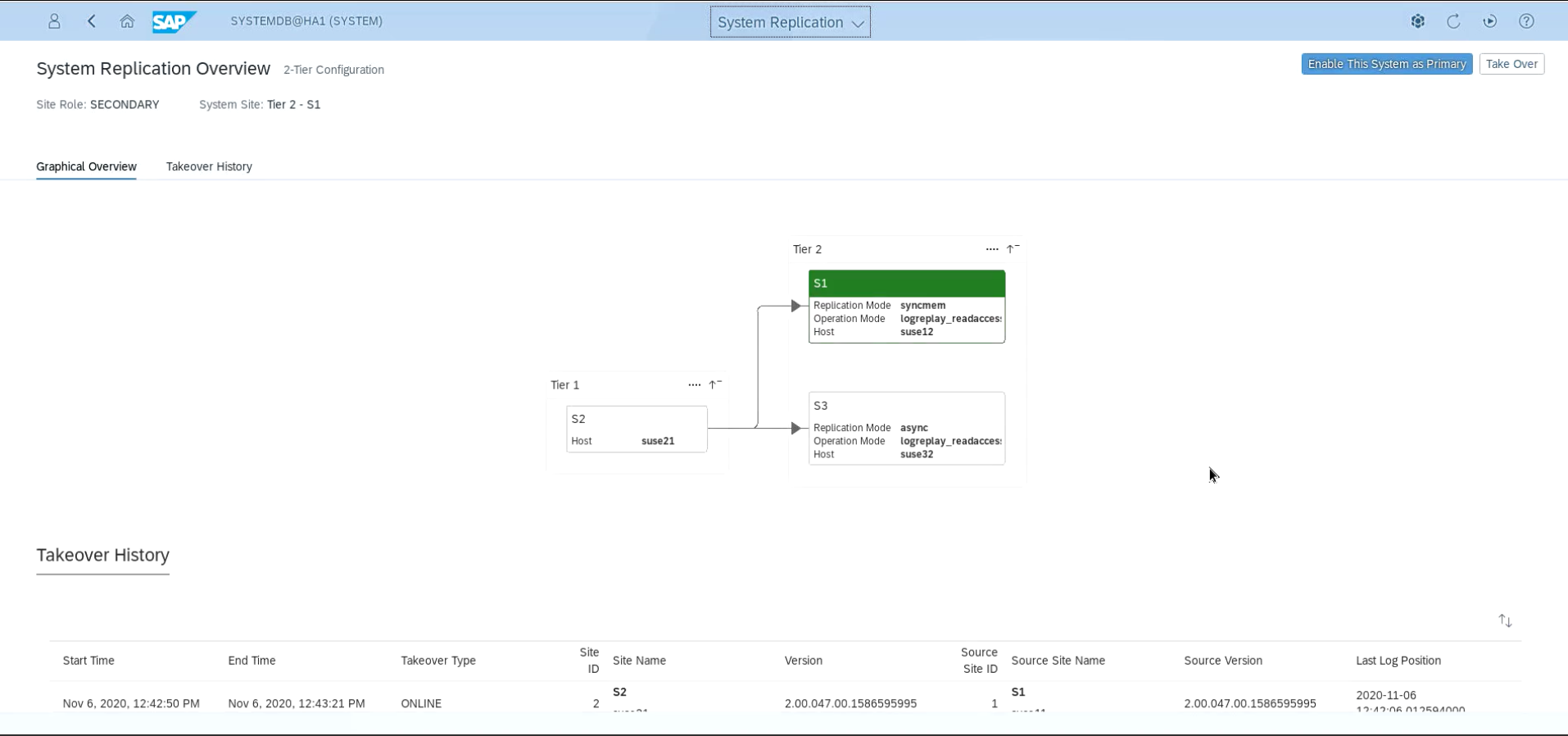

SUSE® Linux Enterprise Server for SAP Applications is optimized in various ways for SAP* applications. This guide provides detailed information about installing and customizing SUSE Linux Enterprise Server for SAP Applications for SAP HANA scale-out system replication in a multi-target architecture. The automation of system replication between SAP HANA first site to SAP HANA second site is managed by SUSE Linux Enterprise Server for SAP Applications while there is an additional system replication to a third site outside the scope of automation by SUSE Linux Enterprise Server for SAP Applications.

High availability is an important aspect of running your mission-critical SAP HANA servers.

The SAP HANA scale-out multi-target system replication replicates all data in SAP HANA to a second SAP HANA system in SYNC mode and to a third SAP HANA system, usually in ASYNC mode. SAP HANA itself replicates all of its data to the secondary SAP HANA instances. It is an out-of-the-box, standard feature.

The recovery time objective (RTO) is minimized through the data replication at regular intervals. SAP HANA supports asynchronous and synchronous modes. The document at hand describes the synchronous replication from memory into memory of the second system. This is the only method that allows the cluster to make a decision based on coded algorithms.

1.2 Abstract #

This guide describes planning, setup, and basic testing of SUSE Linux Enterprise Server for SAP Applications 15 for an "SAP HANA Scale-Out Multi-Target System Replication - ERP style" scenario.

From the application perspective the following variants are covered:

Plain system replication

Multi-tier (chained) system replication

Multi-target system replication

Multi-tenant database containers for all above

Active/active read enabled for all of the above

HANA host auto-failover is not used, there is only one master name server configured and no candidates

HANA host auto-failover is restricted and not explained in this guide

From the infrastructure perspective the following variants are covered:

3-site cluster with disk-based SBD fencing and a 4th non-cluster site (replication [ A ⇒ B ] → C )

1-site cluster with disk-based SBD fencing and a 2nd non-cluster site is possible, but not explained in this guide

Other fencing is possible, but not explained here

On-premises deployment on physical and virtual machines

Public cloud deployment (usually needs additional documentation on cloud specific details)

Deployment automation simplifies roll-out. There are several options available, particularly on public cloud platforms. Ask your public cloud provider or your SUSE contact for details.

In this guide the software package SAPHanaSR-angi is used. This package replaces the two packages SAPHanaSR and SAPHanaSR-ScaleOut. Thus new deployments should be done with SAPHanaSR-angi only. For upgrading existing clusters to SAPHanaSR-angi, read the blog article https://www.suse.com/c/how-to-upgrade-to-saphanasr-angi/ .

1.3 Additional documentation and resources #

Chapters in this manual contain links to additional documentation resources that are either available on the system or on the Internet.

For the latest SUSE product documentation updates, see https://documentation.suse.com .

Find white-papers, best-practices guides, and other resources at the

SUSE Linux Enterprise Server for SAP Applications resource library: https://documentation.suse.com/sbp/sap-15/

SUSE Best Practices Web page: https://documentation.suse.com/sbp/sap-15/

Supported high availability solutions by SUSE Linux Enterprise Server for SAP Applications overview: https://documentation.suse.com/sles-sap/sap-ha-support/html/sap-ha-support/article-sap-ha-support.html

Lastly, there are manual pages shipped with the product.

1.4 Feedback #

Several feedback channels are available:

- Bugs and Enhancement Requests

For services and support options available for your product, refer to http://www.suse.com/support/.

To report bugs for a product component, go to https://scc.suse.com/support/requests, log in, and select Submit New SR (Service Request).

For feedback on the documentation of this product, you can send a mail to doc-team@suse.com. Make sure to include the document title, the product version and the publication date of the documentation. To report errors or suggest enhancements, provide a concise description of the problem and refer to the respective section number and page (or URL).

2 Scope of this documentation #

This document describes how to set up SAP HANA scale-out multi-target system replication with a cluster installed on two sites (and a third site which acts as majority maker) based on SUSE Linux Enterprise Server for SAP Applications 15 SP4. Furthermore, it describes how to add an additional non-cluster site for a multi-target architecture. This concept can also be used with SUSE Linux Enterprise Server for SAP Applications 15 SP4 or newer.

For a better understanding and overview, the installation and setup is subdivided into eight steps.

Planning (Section 3, “Planning the installation”)

OS setup (Section 4, “Setting up the operating system”)

SAP HANA installation (Section 5, “Installing the SAP HANA databases on both sites”)

SAP HANA system replication configuration (section [SAPHanaHsr])

SAP HANA cluster integration (Section 7, “Integrating SAP HANA with the Linux cluster”)

SLES for SAP cluster configuration (Section 8, “Configuring the cluster and SAP HANA resources”)

Setup of third SAP HANA site in a multi-target architecture (Section 9, “Setting up a scale-out multi-target architecture”)

Testing (section [Testing])

First, we will set up a SUSE Linux Enterprise Server for SAP Applications cluster controlling two sites of SAP HANA scale-out in a system replication configuration. Next, we will set up a third site which is outside the SUSE Linux Enterprise High Availability Extension cluster but acts as additional SAP HANA target, forming a multi-target architecture.

With SAPHanaSR-angi, various SAP HANA scale-out configurations are supported. Details on requirements and supported scenarios are given below.

In this guide we will cover a scale-out scenario without standby nodes, thus there is no host auto-failover. More details are explained at https://www.suse.com/c/sap-hana-scale-out-system-replication-for-large-erp-systems/ . The scenario where SAP HANA is configured for host auto-failover is explained at https://documentation.suse.com/sbp/sap-15/html/SLES4SAP-hana-scaleOut-PerfOpt-15/index.html.

For upgrading an existing SAP HANA scale-out system replication cluster from classical SAPHanaSR-ScaleOut, consult manual page SAPHanaSR_upgrade_to_angi(8).

3 Planning the installation #

Planning the installation is essential for a successful SAP HANA cluster setup.

What you need before you start:

Software from SUSE: SUSE Linux Enterprise Server for SAP Applications installation media and a valid subscription for getting updates

Software from SAP: SAP HANA installation media

Physical or virtual systems including disks and NFS storage pools (see below)

Filled parameter sheet (see below)

3.1 Minimum lab requirements and prerequisites #

This section defines some minimum requirements to install SAP HANA scale-out multi-target in ERP style.

From the SAP HANA perspective we have three sites with two nodes each. Each site has one NFS service providing three shares to the two nodes. The NFS services must not be stretched across sites. The /hana/shared/ file system needs to be shared across the two nodes of a site. For simplicity, /hana/data/ and /hana/log/ are also on NFS and provided to both nodes. Alternatively, these two file systems could be placed locally on each node.

Refer to SAP HANA TDI documentation for allowed storage configuration and file systems.

From Linux cluster perspective we have three sites. Two of the cluster sites are hosting the workload. The third cluster site is for the majority maker node. HANA file systems and NFS shares are not managed by the Linux cluster.

The SBD based fencing needs up to three block devices. They are shared across the three cluster sites and accessed by all five cluster nodes. One block device for SBD might be an iSCSI target placed at the cluster third site. The SBD block devices are backed by storage outside the cluster.
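The limit of one to three SBD block devices can be illustrated with a small helper. This is a minimal sketch, assuming the devices are configured as a semicolon-separated `SBD_DEVICE` entry in `/etc/sysconfig/sbd`, which is the usual place on SUSE Linux Enterprise High Availability but is not described in this section. The function name and the device paths are hypothetical.

```shell
#!/bin/sh
# Build an SBD_DEVICE line from one to three block devices.
# Assumption: the result is meant for /etc/sysconfig/sbd.
# The device paths passed in are placeholders, not values from this guide.
sbd_device_line() {
    if [ "$#" -lt 1 ] || [ "$#" -gt 3 ]; then
        echo "error: expected 1 to 3 SBD devices, got $#" >&2
        return 1
    fi
    line=""
    for dev in "$@"; do
        if [ -z "$line" ]; then
            line="$dev"
        else
            line="$line;$dev"
        fi
    done
    printf 'SBD_DEVICE="%s"\n' "$line"
}

# Example call with hypothetical device names:
#   sbd_device_line /dev/disk/by-id/sbd-a /dev/disk/by-id/sbd-b
```

Using stable names below /dev/disk/by-id/ rather than /dev/sdX avoids surprises when device enumeration changes between reboots.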

Requirements with 2 SAP HANA instances per site (aka [ 2+0 ⇐ 2+0 ] → 2+0) plus the majority maker:

6 VMs with each 32 GB RAM, 50 GB disk space

1 VM with 2 GB RAM, 50 GB disk space

1 shared disk for SBD with 10 MB disk space

3 NFS pools (one per site) with a capacity of each 120 GB

1 additional IP address for takeover

optional: 2nd additional IP address for active/read-enabled setup

The minimum lab requirements mentioned here are not SAP sizing information. These data are provided only to rebuild the described cluster in a lab for test purposes. Even for such tests the requirements can increase depending on your test scenario. For productive systems ask your hardware vendor or use the official SAP sizing tools and services.

3.2 Parameter sheet #

The multi-target architecture with a cluster organizing two SAP HANA sites and a third site is quite complex. The installation should be planned properly. You should have all needed parameters like SID, IP addresses and much more already in place. It is a good practice to first fill out the parameter sheet and then begin with the installation.

| Parameter | Value |
|---|---|
| Path to SLES for SAP media | |
| RMT server or SCC account | |
| NTP server(s) | |
| Path to SAP HANA media | |
| S-User for SAP marketplace | |
| Node 1 name site 1 | |
| Node 2 name site 1 | |
| Node 1 name site 2 | |
| Node 2 name site 2 | |
| Node 1 name site 3 | |
| Node 2 name site 3 | |
| Node name majority maker (site 3 or 4) | |
| IP addresses of all cluster nodes | |
| SID | |
| Instance number | |
| Service IP address | |
| Service IP address active/read-enabled | |
| HANA site name site 1 | |
| HANA site name site 2 | |
| HANA site name site 3 | |
| NFS server site 1 | |
| NFS share "shared" site 1 | |
| NFS share "data" site 1 | |
| NFS share "log" site 1 | |
| NFS server site 2 | |
| NFS share "shared" site 2 | |
| NFS share "data" site 2 | |
| NFS share "log" site 2 | |
| NFS server site 3 | |
| NFS share "shared" site 3 | |
| NFS share "data" site 3 | |
| NFS share "log" site 3 | |
| SBD STONITH block device(s) | |
| Watchdog driver | |
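If the parameter sheet is kept as a plain text file, a quick check can list the entries that are still empty before the installation starts. This is only a sketch: the `name=value` file format, the parameter names and the function name are assumptions made for illustration and are not part of this guide.

```shell
#!/bin/sh
# Report parameter-sheet entries that are still empty.
# Assumed input format: one "name=value" pair per line,
# lines starting with "#" and blank lines are ignored.
missing_params() {
    # $1: path to the parameter file; prints the names that have no value
    awk -F= '!/^[ \t]*(#|$)/ && $2 == "" { print $1 }' "$1"
}

# Example with a hypothetical file name:
#   missing_params ./hana-params.txt
```

An empty result means every listed parameter has a value. The check says nothing about whether the values themselves are correct.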

3.3 Scale-out scenario and HA resource agents #

To automate the failover of the SAP HANA database and the virtual IP resource between the first two sites in a scale-out multi-target setup, SUSE Linux Enterprise High Availability, which comes with SUSE Linux Enterprise Server for SAP Applications, is used. Three resource agents have been created to handle the scenario.

The first is the SAPHanaController resource agent (RA), which checks and manages the SAP HANA database instances. This RA is configured as a multi-state resource.

The master assumes responsibility for the active master name server of the SAP HANA database running in primary mode. All other instances are represented by the unpromoted mode.

The second resource agent is SAPHanaTopology. This RA has been created to make configuring the cluster as simple as possible. It runs on all nodes (except the majority maker) of a SUSE Linux Enterprise High Availability 15 cluster. It gathers information about the statuses and configurations of the SAP HANA system replication. It is designed as a normal (stateless) clone resource.

The third resource agent is SAPHanaFilesystem. This RA

With the current version of resource agents, SAP HANA system replication for scale-out is supported in the following scenarios or use cases:

- Performance optimized, single container ([A => B])

In the performance optimized scenario an SAP HANA RDBMS on site "A" is synchronizing with an SAP HANA RDBMS on a second site "B". As the SAP HANA RDBMS on the second site is configured to preload the tables the takeover time is typically very short. See also the requirements section below for details.

- Performance optimized, multi-tenancy also named MDC ([%A => %B])

Multi-tenancy is available for all of the supported scenarios and use cases in this document. This scenario is the default installation type for SAP HANA 2.0. The setup and configuration from a cluster point of view is the same for multi-tenancy and single containers. The one caveat is that all tenants are managed together by the Linux cluster. See also the requirements section below.

- Multi-Tier Replication ([A => B] -> C)

A Multi-Tier system replication has an additional target, which must be connected to the secondary (chain topology). This is a special case of the Multi-Target replication. Because of the mandatory chain topology, the RA feature AUTOMATED_REGISTER=true is not possible with pure Multi-Tier replication. See also the requirements section below.

- Multi-Target Replication ([A <= B] -> C)

This scenario and setup is described in this document. A multi-target system replication has an additional target, which is connected to either the secondary (chain topology) or to the primary (star topology). Multi-target replication is possible since SAP HANA 2.0 SPS04. See also the requirements section below.

3.4 The concept of the multi-target scenario #

A multi-target scenario consists of 3 sites. Site 1 and site 2 are in HA cluster while site 3 is outside the HA cluster.

In case of failure of the primary SAP HANA on site 1, the cluster first tries to start the takeover process. This makes it possible to use the data already loaded at the secondary site. Typically the takeover is much faster than a local restart.

A site is considered "down" or "on error" if the LandscapeHostConfiguration status reflects this (return code 1). This happens when worker nodes go down and no SAP HANA standby nodes are left. An ERP-style SAP HANA scale-out database has no standby nodes by design. Find more details on concept and implementation in manual pages SAPHanaSR-angi(7) and SAPHanaSR-ScaleOut(7).
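The meaning of the landscape status can be made explicit with a small mapping function. This is a sketch under assumptions: the text above names only return code 1. The other values follow the commonly documented return codes of `landscapeHostConfiguration.py` and should be verified against the SAP documentation for your SAP HANA version. The function name is hypothetical.

```shell
#!/bin/sh
# Translate a landscapeHostConfiguration.py return code into a status word.
# Only code 1 is taken from this guide; the other codes are assumptions
# based on the commonly documented values of the script.
lss_status() {
    case "$1" in
        0) echo "fatal" ;;
        1) echo "error" ;;    # the site counts as "down" or "on error"
        2) echo "warning" ;;
        3) echo "info" ;;
        4) echo "ok" ;;
        *) echo "unknown" ;;
    esac
}

# Typical use as user <sid>adm (not executed here):
#   HDBSettings.sh landscapeHostConfiguration.py; lss_status $?
```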

To achieve an automation of this resource handling process, use the SAP HANA resource agents included in the SAPHanaSR-angi RPM package delivered with SUSE Linux Enterprise Server for SAP Applications.

You can configure the level of automation by setting the parameter AUTOMATED_REGISTER. If automated registration is activated, the cluster will also automatically register a former failed primary so that it becomes the new secondary. Find configuration details in manual page ocf_suse_SAPHanaController(7).

The resource agent for HANA in a Linux cluster does not trigger a takeover to the secondary site when a software failure causes one or more HANA processes to be restarted. The same is valid when a hardware error causes the index server to restart locally. Therefore the SAPHanaSR-angi package contains the HA/DR provider hook script susChkSrv.py. For details see manual page susChkSrv.py(7).

Site 3 is connected as an additional system replication target to either SAP HANA site inside the cluster. Those two HANA sites need to be configured for automatically re-registering the third site in case of takeover.

3.5 Important prerequisites #

Read the SAP Notes and papers first.

The SAPHanaSR-angi resource agent software package supports scale-out (multiple-box to multiple-box) system replication with the following configurations and parameters:

The cluster must include a valid STONITH method. SBD disk-based is the recommended STONITH method.

Both cluster-controlled SAP HANA sites are either in the same network segment (layer 2) to allow an easy takeover of an IP address, or you need a technique like overlay IP addresses in virtual private clouds.

Technical users and groups, such as <sid>adm, are defined locally in the Linux system.

Name resolution of the cluster nodes and the virtual IP address should be done locally on all cluster nodes to not depend on DNS services (as it can fail, too).

Time synchronization is needed between the cluster nodes using reliable time services like NTP.

All SAP HANA sites have the same SAP Identifier (SID) and instance number.

The SAP HANA scale-out system must have only one active master name server per site. There are no additional master name server candidates configured.

For SAP HANA databases without additional master name server candidate, the package SAPHanaSR-angi version 1.2 or newer is needed.

The SAP HANA scale-out system must have only one failover group.

At most one additional SAP HANA system replication site is connected from outside the Linux cluster. Thus two sites are managed by the Linux cluster, and one outside site is recognized. For SAP HANA multi-tier and multi-target system replication, the package SAPHanaSR-angi version 1.2 or newer is needed.

Only one SAP HANA SID is installed. Thus the performance optimized setup is supported. The cost optimized and MCOS scenarios are currently not supported.

The saphostagent must be running. saphostagent is needed to translate between the system node names and SAP host names used during the installation of SAP HANA.

For SystemV style, the sapinit script needs to be active.

For systemd style, the services saphostagent and SAP<SID>_<INO> can stay enabled. The systemd-enabled saphostagent and instance's sapstartsrv are supported from SAPHanaSR-angi 1.2. Refer to the OS documentation for the systemd version. SAP HANA comes with native systemd integration as default starting with version 2.0 SPS07. Refer to SAP documentation for the SAP HANA version.

Combining systemd style hostagent with SystemV style instance is allowed. However, all nodes in one Linux cluster need to use the same style.

All SAP HANA instances controlled by the cluster must not be activated via sapinit auto-start.

Automated start of SAP HANA instances during system boot must be switched off.

The replication mode should be either 'sync' or 'syncmem'. 'async' is supported outside the Linux cluster.

Firewall rules must not block any needed port.

SELinux rules must not block any needed action.

Sizing of both SAP HANA sites needs to be done according to SAP rules. The scale-out scenarios require both sites to be prepared to run the primary SAP HANA database.

SAP HANA 2.0 SPS05 rev.059.04 and later provides Python 3 and the HA/DR provider hook method srConnectionChanged() with needed parameters for susHanaSR.py.

SAP HANA 2.0 SPS05 or later provides the HA/DR provider hook method srServiceStateChanged() with needed parameters for susChkSrv.py.

SAP HANA 2.0 SPS06 or later provides the HA/DR provider hook method preTakeover() with multi-target-aware parameters and separate return code for Linux HA clusters.

No other HA/DR provider hook script should be configured for the above mentioned methods. Hook scripts for other methods, provided in SAPHanaSR-angi, can be used in parallel, if not documented otherwise.

The Linux cluster needs to be up and running to allow HA/DR provider events being written into CIB attributes. The current HANA SR status might differ from CIB srHook attribute after Linux cluster maintenance.

The user <sid>adm needs execution permission as user root for the command SAPHanaSR-hookHelper.

For optimal automation, AUTOMATED_REGISTER="true" is recommended.

As a good starting configuration for projects, it is recommended to switch off the automated registration of a failed primary. Therefore, AUTOMATED_REGISTER="false" is the default.

In this case, you need to register a failed primary manually after a takeover. Use SAP tools like SAP HANA Cockpit or hdbnsutil. Make sure to always use the exact site names as already known to the cluster.

The two SAP HANA sites inside the Linux cluster can be configured to re-register the outer SAP HANA in case of takeover. For this a configuration item 'register_secondaries_on_takeover=true' needs to be added in the system_replication block of the global.ini file. See also manual page susHanaSR.py(7).

You need at least SAPHanaSR-angi version 1.2, SUSE Linux Enterprise Server for SAP Applications 15 SP4 and SAP HANA 2.0 SPS 05 rev.59.04 for all mentioned setups.

The Linux cluster can be either freshly installed as described in this guide, or it can be upgraded as described in the respective documentation. Mixing old and new cluster attributes or hook scripts within one cluster is not allowed.

No manual actions must be performed on the SAP HANA database while it is controlled by the Linux cluster. All administrative actions need to be aligned with the cluster.

Find more details in the REQUIREMENTS section of manual pages SAPHanaSR-ScaleOut(7), ocf_suse_SAPHanaController(7), ocf_suse_SAPHanaFilesystem(7), susHanaSR.py(7), SAPHanaSR-manageAttr(8), susChkSrv.py(7), susTkOver.py(7) and SAPHanaSR-alert-fencing(8).
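One of the prerequisites above is the entry 'register_secondaries_on_takeover=true' in the system_replication block of global.ini. Whether a given file contains this entry can be checked with a short helper. This is a minimal sketch. The function name is hypothetical, and the file location mentioned in the comment is the usual place for the customer-specific global.ini, stated here as an assumption.

```shell
#!/bin/sh
# Return 0 if register_secondaries_on_takeover = true is set inside the
# [system_replication] section of the given global.ini, otherwise 1.
has_register_secondaries() {
    # $1: path to global.ini
    awk '
        # Track whether the current section is [system_replication].
        /^[ \t]*\[/ { in_sr = ($0 ~ /^[ \t]*\[system_replication\]/) }
        in_sr && /^[ \t]*register_secondaries_on_takeover[ \t]*=[ \t]*true[ \t]*$/ { found = 1 }
        END { exit (found ? 0 : 1) }
    ' "$1"
}

# Assumed usual location of the file (not stated in this guide):
#   /hana/shared/<SID>/global/hdb/custom/config/global.ini
```

The check is deliberately section-aware, because the same key in another block of global.ini would not have the intended effect.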

You must implement a valid STONITH method. Without a valid STONITH method, the complete cluster is unsupported and will not work properly.

In this setup guide, NFS is used as storage for the SAP HANA database. This has been chosen for simplicity. However, any storage supported by SAP HANA and the SAP HANA storage API can be used. Refer to the SAP HANA TDI documentation for supported storage and follow the respective storage vendor’s configuration instructions.

This setup guide focuses on the scale-out multi-target setup.

If you need to implement a different scenario, it is strongly recommended to define a Proof-of-Concept (PoC) with SUSE. This PoC will focus on testing the existing solution in your scenario. The limitation of most of the above items is mostly because of testing limits.

4 Setting up the operating system #

This section includes information you should consider during the installation of the operating system.

In this document, first SUSE Linux Enterprise Server for SAP Applications is installed and configured. Then the SAP HANA database including the system replication is set up. Next, the automation with the cluster is set up and configured. Finally, the multi-target setup of the 3rd site is set up and configured.

4.1 Installing SUSE Linux Enterprise Server for SAP Applications #

Multiple installation guides already exist, each with different reasons for setting up the server in a certain way. The sections below outline where this information can be found. In addition, you will find important details you should consider to get a system that is well prepared to run SAP HANA.

4.1.1 Installing the base operating system #

Depending on your infrastructure and the hardware used, you need to adapt the installation. All supported installation methods and minimum requirements are described in the Deployment Guide (https://documentation.suse.com/sles/15-SP4/single-html/SLES-deployment/#book-deployment). In case of automated installations you can find further information in the AutoYaST Guide (https://documentation.suse.com/sles/15-SP4/single-html/SLES-autoyast/#book-autoyast). The main installation guidance for SUSE Linux Enterprise Server for SAP Applications to fit all requirements for SAP HANA is given in the SAP notes:

2578899 SUSE Linux Enterprise Server 15: Installation Note and

2684254 SAP HANA DB: Recommended OS settings for SLES 15 / SLES for SAP applications 15

4.1.2 Installing additional software #

With SUSE Linux Enterprise Server for SAP Applications, SUSE delivers special resource agents for SAP HANA. With the pattern sap-hana the resource agent for SAP HANA ScaleUp is installed. For the ScaleOut scenario you need a special resource agent. Follow the instructions below on each node if you have installed the systems based on SAP note 2684254. The pattern High Availability summarizes all tools recommended to be installed on all nodes, including the majority maker.

remove package: patterns-sap-hana, SAPHanaSR, yast2-sap-ha

install package: SAPHanaSR-angi, ClusterTools2, saptune

install pattern: ha_sles

To do so, for example, use Zypper:

As Linux user root , type:

# zypper remove SAPHanaSR

If the package is installed, you will get an output like this:

Loading repository data...
Reading installed packages...
Resolving package dependencies...

The following 3 packages are going to be REMOVED:
  patterns-sap-hana SAPHanaSR yast2-sap-ha

The following pattern is going to be REMOVED:
  sap-hana

3 packages to remove.
After the operation, 494.2 KiB will be freed.
Continue? [y/n/...? shows all options] (y): y
(1/3) Removing patterns-sap-hana-15.3-6.8.2.x86_64 ..............................[done]
(2/3) Removing yast2-sap-ha-1.0.0-2.5.12.noarch .................................[done]
(3/3) Removing SAPHanaSR-0.161.21-1.1.noarch ....................................[done]

As user root, type:

# zypper in SAPHanaSR-angi

If the package is not installed yet, you should get an output like the below:

Refreshing service 'Advanced_Systems_Management_Module_15_x86_64'.
Refreshing service 'SUSE_Linux_Enterprise_Server_for_SAP_Applications_15_SP4_x86_64'.
Loading repository data...
Reading installed packages...
Resolving package dependencies...

The following 1 NEW packages are going to be installed:
  SAPHanaSR-angi

2 new packages to install.
Overall download size: 539.1 KiB. Already cached: 0 B. After the operation, additional 763.1 KiB will be used.
Continue? [y/n/...? shows all options] (y): y
Retrieving package SAPHanaSR-angi-1.2.5-150400-1.1.noarch (1/1), 48.7 KiB (211.8 KiB unpacked)
Retrieving: SAPHanaSR-angi-1.2.5-150400-1.1.noarch.rpm ....................................[done]
Checking for file conflicts: .............................................................[done]
(1/1) Installing: SAPHanaSR-angi-1.2.5-150400-1.1.noarch ..................................[done]

Install the tools for High Availability on all nodes.

# zypper in --type pattern ha_sles

# zypper in ClusterTools2

4.1.3 Getting the latest updates #

If you have installed the packages before, make sure to deploy the newest updates on all machines to have the latest versions of the resource agents and other packages. Also, make sure all systems have identical package versions. A prerequisite is a valid subscription for SUSE Linux Enterprise Server for SAP Applications. There are multiple ways to get updates: via SUSE Manager, the Repository Management Tool (RMT), or a direct connection to the SUSE Customer Center (SCC).

Depending on your company or customer rules, use zypper update or zypper patch.

The command zypper patch will install all available needed patches.

As user root, type:

# zypper patch

The command zypper update will update all or specified installed packages with newer versions, if possible.

As user root, type:

# zypper update
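The requirement that all systems have identical package versions can be checked by comparing the package lists of two nodes. The following is a minimal sketch. The function name and the file names in the comments are hypothetical, and the lists are assumed to come from `rpm -qa` run on each node.

```shell
#!/bin/sh
# Print packages that appear in only one of two package lists.
# An empty result means both lists are identical.
pkg_diff() {
    # $1, $2: files with one package per line, for example from "rpm -qa"
    a=$(mktemp)
    b=$(mktemp)
    LC_ALL=C sort "$1" > "$a"
    LC_ALL=C sort "$2" > "$b"
    # comm -3 hides common lines; tr strips the tab that marks column two.
    LC_ALL=C comm -3 "$a" "$b" | tr -d '\t'
    rm -f "$a" "$b"
}

# Possible use with the node names of this guide (not executed here):
#   ssh hanaso0 rpm -qa > hanaso0.pkgs
#   ssh hanaso1 rpm -qa > hanaso1.pkgs
#   pkg_diff hanaso0.pkgs hanaso1.pkgs
```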

4.2 Configuring SUSE Linux Enterprise Server for SAP Applications to run SAP HANA #

4.2.1 Tuning or modifying the operating system #

Operating system tuning is described in SAP Notes 1275776 and 2684254. SAP Note 1275776 explains three ways of implementing the settings. The following example uses saptune.

# saptune solution apply HANA

4.2.2 Enabling SSH access via public key (optional) #

Public key authentication provides SSH users access to their servers without entering their passwords. SSH keys are also more secure than passwords, because the private key used to secure the connection is never shared. Private keys can also be encrypted. Their encrypted contents cannot easily be read. For the document at hand, a very simple but useful setup is used. This setup is based on only one SSH key pair which enables SSH access to all cluster nodes.

Follow your company security policy to set up access to the systems.

As user root create an SSH key on one node.

# ssh-keygen -t rsa

The SSH key generation asks for missing parameters.

Generating public/private rsa key pair.
Enter file in which to save the key (/root/.ssh/id_rsa):
Enter passphrase (empty for no passphrase):
Enter same passphrase again:
Your identification has been saved in /root/.ssh/id_rsa.
Your public key has been saved in /root/.ssh/id_rsa.pub.
The key fingerprint is:
SHA256:ip/8kdTbYZNuuEUAdsaYOAErkwnkAPBR7d2SQIpIZCU root@<host1>
The key's randomart image is:
+---[RSA 2048]----+
|XEooo+ooo+o |
|=+.= o=.o+. |
|..B o. + o. |
| (°< |
| / ) |
| -- |
| B. . o o = |
| o . . + |
| +.. . |
+----[SHA256]-----+

After ssh-keygen has finished, you will have two new files under /root/.ssh/ .

# ls /root/.ssh/

id_rsa id_rsa.pub

Collect the public host keys from all other nodes. For the document at hand, the ssh-keyscan command is used.

# ssh-keyscan

The SSH host key is automatically collected and stored in the file /root/.ssh/known_hosts during the first SSH connection. To avoid confirming the first login with "yes", which accepts the host key, collect and store the host keys beforehand.

# ssh-keyscan -t ecdsa-sha2-nistp256 <host1>,<host1 ip> >>.ssh/known_hosts

# ssh-keyscan -t ecdsa-sha2-nistp256 <host2>,<host2 ip> >>.ssh/known_hosts

# ssh-keyscan -t ecdsa-sha2-nistp256 <host3>,<host3 ip> >>.ssh/known_hosts

...

After collecting all host keys, store the public key (id_rsa.pub) in a file named authorized_keys. Then push the entire directory /root/.ssh/ from the first node to all further cluster members.

# rsync -ay /root/.ssh/ <host2>:/root/.ssh/

# rsync -ay /root/.ssh/ <host3>:/root/.ssh/

# rsync -ay /root/.ssh/ <host4>:/root/.ssh/

...
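Password-less login only works if the public key is listed in authorized_keys on the target node. One way to prepare this on the first node, before the directory is pushed to the other nodes, is sketched here. The function name is hypothetical, and the sketch assumes the key pair was created with the default file names shown above.

```shell
#!/bin/sh
# Add the public key of an SSH directory to its authorized_keys file.
# Running the function repeatedly does not create duplicate entries.
authorize_own_key() {
    # $1: SSH directory, for user root this is /root/.ssh
    dir=$1
    touch "$dir/authorized_keys"
    chmod 600 "$dir/authorized_keys"
    # Append the key only if the exact line is not present yet.
    grep -qxF "$(cat "$dir/id_rsa.pub")" "$dir/authorized_keys" ||
        cat "$dir/id_rsa.pub" >> "$dir/authorized_keys"
}

# Example call as user root (not executed here):
#   authorize_own_key /root/.ssh
```

Sharing one key pair across all nodes keeps the lab setup simple. Follow your company security policy before using the same approach in production.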

4.2.3 Setting up disk layout for SAP HANA #

An SAP certified storage system with a validated storage API is generally recommended. This is a prerequisite of a stable and reliable scale-out installation.

/hana/shared/<SID>

/hana/data/<SID>

/hana/log/<SID>

Create the mount directories on all SAP HANA nodes.

# mkdir -p /hana/shared/<SID>

# mkdir -p /hana/data/<SID>

# mkdir -p /hana/log/<SID>

# mkdir -p /usr/sap

The SAP HANA installation needs a special storage setup. The NFS setup used for this guide must be reboot-persistent. You can achieve this with entries in the /etc/fstab file.

NFS version 4 is required in the setup at hand.

Create /etc/fstab entries for the three NFS pools.

<nfs> /hana/shared/<SID> nfs4 defaults 0 0

In the sample environment those lines are as follows:

/exports/TST_WDF1/shared /hana/shared/TST nfs4 defaults 0 0
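The entries for all three NFS shares of one site follow the same pattern, so they can be generated. This is a sketch under assumptions: only the "shared" line appears in the sample above, and the export names for "data" and "log" are assumed to follow the same naming scheme. The function name is hypothetical.

```shell
#!/bin/sh
# Print /etc/fstab lines for the three SAP HANA NFS shares of one site.
# Assumption: the exports are named <base>/shared, <base>/data and <base>/log.
fstab_lines() {
    # $1: NFS export base, $2: SAP HANA SID
    for share in shared data log; do
        printf '%s/%s /hana/%s/%s nfs4 defaults 0 0\n' "$1" "$share" "$share" "$2"
    done
}

# Example with the values of the sample environment:
#   fstab_lines /exports/TST_WDF1 TST
```

Review the output before appending it to /etc/fstab, and adapt the export base to the NFS server of the respective site.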

Mount all NFS shares.

# mount -a

Create other directories (optional).

# mkdir -p /sapsoftware

File systems

- /hana/shared/<SID>

The mount directory is used for shared files between all hosts in an SAP HANA system. Each HANA site has its own instance of this directory. It is accessible to the two nodes of that site. In our setup we use NFS.

- /hana/log/<SID>

The default path to the log directory depends on the SAP System ID of the SAP HANA host. In our setup each HANA site has its own instance of this directory. It is accessible to the two nodes of that site. Each node has its own subdirectories. In our setup we use NFS. It would be possible to use local disks instead.

- /hana/data/<SID>

The default path to the data directory depends on the system ID of the SAP HANA host. In our setup each HANA site has its own instance of this directory. It is accessible to the two nodes of that site. Each node has its own subdirectories. In our setup we use NFS. It would be possible to use local disks instead.

- /usr/sap

This is the path to the local SAP system instance directories. It is possible to join this location with the Linux installation.

- /sapsoftware

(optional) Space for copying the SAP install software media. This NFS pool is mounted on all HANA sites and contains the SAP HANA installation media and installation parameter files.

Set up host name resolution for all machines. You can either use a DNS server or modify the /etc/hosts on all nodes.

By maintaining the /etc/hosts file, you minimize the impact of a failing DNS service. Replace the IP address and the host name in the following commands.

# vi /etc/hosts

Insert the following lines to /etc/hosts. Change the IP address and host name to match your environment.

192.168.201.151 hanaso0
192.168.201.152 hanaso2
...
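Because every node needs the same entries, it helps to check the file on each node for missing names. The sketch below is one possible check; the function name missing_hosts is hypothetical, and the host list in the usage comment follows the sample environment.

```shell
# Report every host name that has no entry in a hosts file.
# Usage: missing_hosts <hosts-file> <host>...
missing_hosts() {
    file=$1
    shift
    for host in "$@"; do
        # Skip comment lines; fields 2..NF are the names for the address in field 1.
        awk -v h="$host" '
            /^[ \t]*#/ { next }
            { for (i = 2; i <= NF; i++) if ($i == h) found = 1 }
            END { exit found ? 0 : 1 }
        ' "$file" || echo "$host"
    done
}

# Example: print the cluster members that are still missing in /etc/hosts
# missing_hosts /etc/hosts hanaso0 hanaso1 hanaso2 hanaso3
```

An empty output means that all listed names are present. The check only looks at the file and does not verify that the addresses are correct.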

Enable NTP service on all nodes.

Enable an NTP service on all nodes in the cluster to have proper time synchronization.

# yast2 ntp-client

5 Installing the SAP HANA databases on both sites #

The infrastructure is set up. Now install the SAP HANA database at both sites. This chapter summarizes the test environment. In a cluster a machine is also called a node. Always use the official documentation from SAP to install SAP HANA and to set up the system replication.

This guide shows SAP HANA and saphostagent with native systemd integration. An example for legacy SystemV is outlined in the appendix Section 11.3, “Example for checking legacy SystemV integration”.

Install the SAP HANA database on all SAP HANA nodes.

Check if the SAP hostagent is installed on all SAP HANA nodes.

Verify that both databases are up and running.

In the example at hand, to make it easier to follow the documentation, the machines (or nodes) are named hanaso0, … hanasoX. The nodes (hanaso0, hanaso1) will be part of site "A" (WDF1), the nodes (hanaso2, hanaso3) will be part of site "B" (ROT1), and the nodes (hanaso4, hanaso5) will be part of site "C" (FRA1).

The following users are automatically created during the SAP HANA installation:

- <sid>adm

The user <sid>adm is the operating system user required for administrative tasks, such as starting and stopping the system.

- sapadm

The SAP Host Agent administrator.

- SYSTEM

The SAP HANA database superuser.

5.1 Installing the SAP HANA database #

Read the SAP Installation and Setup Manuals available at the SAP Marketplace.

Download the SAP HANA Software from SAP Marketplace.

Mount the file systems to install SAP HANA database software and database content (data and log).

Start the installation.

Mount /hana/shared from the nfs server.

# for system in hanaso{0,1,2,3,4,5}; do
ssh $system mount -a
done

Install the SAP HANA Database as described in the SAP HANA Server Installation Guide on all machines (three sites) except the majority maker. All three databases need to have the same SID and instance number. You can use either the graphical user interface or the command line installer hdblcm. The command line installer can be used in an interactive or batch mode.

Example 7: Using hdblcm in interactive mode #

# <path_to_sap_media>/hdblcm

Alternatively you can also use the batch mode of hdblcm. This can either be done by specifying all needed parameters via the command line or by using a parameter file.

In the example at hand the command line parameters are used. In the batch mode you need to provide an XML password file (here <path>/hana_passwords). A template of this password file can be created with the following command:

Example 8: Creating a password file #

# <path_to_sap_media>/hdblcm --dump_configfile_template=templateFile

This command creates two files:

templateFile is the template for a parameter file.

templateFile.xml is the XML template used to provide several hana_passwords to the hdblcm installer.

The XML password file looks as follows:

Example 9: The XML password template #

<?xml version="1.0" encoding="UTF-8"?>
<!-- Replace the 3 asterisks with the password -->
<Passwords>
    <root_password><![CDATA[***]]></root_password>
    <sapadm_password><![CDATA[***]]></sapadm_password>
    <master_password><![CDATA[***]]></master_password>
    <sapadm_password><![CDATA[***]]></sapadm_password>
    <password><![CDATA[***]]></password>
    <system_user_password><![CDATA[***]]></system_user_password>
    <streaming_cluster_manager_password><![CDATA[***]]></streaming_cluster_manager_password>
    <ase_user_password><![CDATA[***]]></ase_user_password>
    <org_manager_password><![CDATA[***]]></org_manager_password>
</Passwords>

After having created the XML password file, you can immediately start the SAP HANA installation in batch mode by providing all needed parameters via the command line.

Example 10: Using hdblcm in batch mode #

In the example below the password file is used to provide the password during the installation dialog. All installation parameters are named directly as one command.

# cat <path>/hana_passwords | \
  <path_to_sap_media>/hdblcm \
  --batch \
  --sid=<SID> \
  --number=<instanceNumber> \
  --action=install \
  --hostname=<node1> \
  --addhosts=<node2>:role=worker \
  --certificates_hostmap=<node1>=<node1> \
  --certificates_hostmap=<node2>=<node2> \
  --install_hostagent \
  --system_usage=test \
  --checkmnt=/hana/shared/<SID> \
  --sapmnt=/hana/shared \
  --datapath=<datapath> \
  --logpath=<logpath> \
  --root_user=root \
  --workergroup=default \
  --home=/usr/sap/<SID>/home \
  --userid=<uid> \
  --shell=/bin/bash \
  --groupid=<gid> \
  --read_password_from_stdin=xml

The second example uses the modified template file as an answer file.

# cat <path>/hana_passwords | \
  <path_to_sap_media>/hdblcm \
  -b \
  --configfile=<path_to_templateFile>/<mod_templateFile> \
  --read_password_from_stdin=xml

5.2 Checking if the SAP hostagent is installed on all SAP HANA nodes #

Check if the native systemd enabled SAP hostagent and instance sapstartsrv are installed on all SAP HANA nodes. If not, install and enable it now.

As Linux user root run the command systemctl on all SAP HANA nodes to check the SAP hostagent and instance services:

# systemctl list-unit-files | grep sap
saphostagent.service enabled
sapinit.service generated
saprouter.service disabled
saptune.service enabled

The mandatory saphostagent service is enabled. This is the installation default.

Some more SAP related services might be enabled, for example the recommended saptune.

The instance service SAP<SID>_<NR>.service needs to be enabled as well.

# systemctl list-unit-files | grep SAP
SAPTST_00.service enabled

The instance service is indeed enabled, as required.
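When several nodes have to be checked, the two manual lookups can be wrapped in a small test that fails when a unit is not enabled. This is a sketch only; the helper name unit_enabled is hypothetical, and the unit names in the usage comment are those of the sample system (SID TST, instance number 00).

```shell
# Read "systemctl list-unit-files" output on stdin and succeed only if the
# given unit is listed with the state "enabled".
unit_enabled() {
    awk -v u="$1" '$1 == u && $2 == "enabled" { ok = 1 } END { exit ok ? 0 : 1 }'
}

# Example for the sample system:
# systemctl list-unit-files | unit_enabled saphostagent.service || echo "saphostagent not enabled"
# systemctl list-unit-files | unit_enabled SAPTST_00.service    || echo "instance service not enabled"
```

The function compares the unit name exactly, so a unit in the state "generated" or "disabled" is reported as not enabled.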

5.3 Verifying both databases are up and running #

Verify that both databases are up and running on all SAP HANA nodes.

As Linux user root run the command systemd-cgls on all SAP HANA nodes to check both databases:

# systemd-cgls -u SAP.slice
Unit SAP.slice (/SAP.slice):
├─saphostagent.service
│ ├─2630 /usr/sap/hostctrl/exe/saphostexec pf=/usr/sap/hostctrl/exe/host_profile -systemd
│ ├─2671 /usr/sap/hostctrl/exe/sapstartsrv pf=/usr/sap/hostctrl/exe/host_profile -D
│ └─3591 /usr/sap/hostctrl/exe/saposcol -l -w60 pf=/usr/sap/hostctrl/exe/host_profile
└─SAPTST_00.service
  ├─ 1257 hdbcompileserver
  ├─ 1274 hdbpreprocessor
  ├─ 1353 hdbindexserver -port 31003
  ├─ 1356 hdbxsengine -port 31007
  ├─ 2077 hdbwebdispatcher
  ├─ 2300 hdbrsutil --start --port 31003 --volume 3 --volumesuffix mnt00001/hdb00003.00003 --identifier 1644426276
  ├─28462 /usr/sap/TST/HDB00/exe/sapstartsrv pf=/usr/sap/TST/SYS/profile/TST_HDB00_hanaso0
  ├─31314 sapstart pf=/usr/sap/TST/SYS/profile/TST_HDB00_hanaso0
  ├─31372 /usr/sap/TST/HDB00/hanaso0/trace/hdb.sapTST_HDB00 -d -nw -f /usr/sap/TST/HDB00/suse21/daemon.ini pf=/usr/sap/TST/SYS/profile/TST_HDB00_hanaso1
  ├─31479 hdbnameserver
  └─32201 hdbrsutil --start --port 31001 --volume 1 --volumesuffix mnt00001/hdb00001 --identifier 1644426203

The SAP hostagent saphostagent.service and the instance's sapstartsrv SAPTST_00.service are running in the SAP.slice.

See also manual pages systemctl(8) and systemd-cgls(8) for details.

Use the python script landscapeHostConfiguration.py to show the status of an entire SAP HANA site. The landscape host configuration is shown with a line per SAP HANA host. Query the host roles (as user <sid>adm):

~> HDBSettings.sh landscapeHostConfiguration.py
| Host    | Host   |... NameServer  | NameServer  | IndexServer | IndexServer |
|         | Active |... Config Role | Actual Role | Config Role | Actual Role |
| ------- | ------ |... ----------- | ----------- | ----------- | ----------- |
| hanaso0 | yes    |... master 1    | master      | worker      | master      |
| hanaso1 | yes    |... master 2    | slave       | worker      | slave       |

overall host status: ok

Get an overview of the instances of that site (as user <sid>adm). You should get a list of SAP HANA instances belonging to that site.

~> sapcontrol -nr <instanceNumber> -function GetSystemInstanceList
06.01.2024 17:25:16
GetSystemInstanceList
OK
hostname, instanceNr, httpPort, httpsPort, startPriority, features, dispstatus
hanaso0, 00, 50013, 50014, 0.3, HDB|HDB_WORKER, GREEN
hanaso1, 00, 50013, 50014, 0.3, HDB|HDB_WORKER, GREEN
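The instance list can also be evaluated by a script, for example to wait until every host reports GREEN. The following sketch filters the comma-separated output shown above; the function name not_green is hypothetical.

```shell
# Read "sapcontrol -function GetSystemInstanceList" output on stdin and print
# every host whose dispstatus (last column) is not GREEN.
not_green() {
    awk -F', *' '
        # Remove trailing blanks or carriage returns before comparing.
        { sub(/[ \t\r]+$/, "") }
        # Data rows carry a numeric instance number in the second column.
        NF >= 7 && $2 ~ /^[0-9]+$/ && $NF != "GREEN" { print $1 }
    '
}

# Example (as user <sid>adm):
# sapcontrol -nr <instanceNumber> -function GetSystemInstanceList | not_green
```

An empty output means that all listed instances are GREEN. The header row and the status lines are skipped because their second column is not numeric.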

6 Setting up SAP HANA system replication #

This section describes the setup of the system replication (HSR) after SAP HANA has been installed properly.

Back up the primary database

Enable the primary database

Register the secondary database

Verify the system replication

For more information read the Section Setting Up System Replication of the SAP HANA Administration Guide.

6.1 Backing up the primary database #

First back up the primary database as described in the SAP HANA Administration Guide, Section SAP HANA Database Backup and Recovery.

Below find examples to back up SAP HANA with SQL Commands:

As user <sid>adm enter the following command:

~> hdbsql -i <instanceNumber> -u SYSTEM -d SYSTEMDB \
   "BACKUP DATA FOR FULL SYSTEM USING FILE ('backup')"

You get the following command output (or similar):

0 rows affected (overall time 15.352069 sec; server time 15.347745 sec)

Enter the following command as user <sid>adm:

~> hdbsql -i <instanceNumber> -u <dbuser> \
   "BACKUP DATA USING FILE ('backup')"

Without a valid backup, you cannot bring SAP HANA into a system replication configuration.

6.2 Enabling the primary database #

As Linux user <sid>adm enable the system replication at the primary node. You need to define a site name (like WDF1) which must be unique for all SAP HANA databases which are connected via system replication. This means the secondary must have a different site name.

As user <sid>adm enable the primary:

~> hdbnsutil -sr_enable --name=WDF1

Check if the command output is similar to:

nameserver is active, proceeding ...
successfully enabled system as system replication source site
done.

The command line tool hdbnsutil can be used to check the system replication mode and site name.

~> hdbnsutil -sr_stateConfiguration

If the system replication enablement was successful at the primary, the output should be as follows:

checking for active or inactive nameserver ...
System Replication State
~~~~~~~~~~~~~~~~~~~~~~~~
mode: primary
site id: 1
site name: WDF1
done.

The mode has changed from “none” to “primary” and the site now has a site name and a site ID.
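To use the mode or the site name in a script, single fields can be extracted from the hdbnsutil output. The sketch below relies only on the "key: value" layout shown above; the function name sr_field is hypothetical.

```shell
# Read "hdbnsutil -sr_stateConfiguration" output on stdin and print the value
# of one field, for example "mode" or "site name".
sr_field() {
    awk -F': *' -v key="$1" '
        { sub(/^[ \t]+/, "") }          # tolerate indented lines
        $1 == key { print $2; exit }
    '
}

# Example (as user <sid>adm):
# hdbnsutil -sr_stateConfiguration | sr_field mode
# hdbnsutil -sr_stateConfiguration | sr_field "site name"
```

On the primary of the sample environment the first call would be expected to print primary and the second WDF1, matching the output shown above.
<imports>
</imports>
<test>
sample='checking for active or inactive nameserver ...
System Replication State
~~~~~~~~~~~~~~~~~~~~~~~~
mode: primary
site id: 1
site name: WDF1
done.'
[ "$(printf '%s\n' "$sample" | sr_field mode)" = "primary" ] || exit 1
[ "$(printf '%s\n' "$sample" | sr_field "site name")" = "WDF1" ] || exit 1
[ "$(printf '%s\n' "$sample" | sr_field "site id")" = "1" ] || exit 1
</test>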

6.3 Registering the secondary database #

The SAP HANA database instance on the secondary side must be stopped before the system can be registered for the system replication. You can use your preferred method to stop the instance (like HDB or sapcontrol). After the database instance has been stopped successfully, you can register the instance using hdbnsutil.

~> sapcontrol -nr <instanceNumber> -function StopSystem

The copy of key and key-data should only be done on the master name server. As the files are in the global file space, you do not need to run the command on all cluster nodes.

cd /usr/sap/<SID>/SYS/global/security/rsecssfs
rsync -va {,<node1-siteB>:}$PWD/data/SSFS_<SID>.DAT
rsync -va {,<node1-siteB>:}$PWD/key/SSFS_<SID>.KEY

~> hdbnsutil -sr_register --name=<site2> \
   --remoteHost=<node1-siteA> --remoteInstance=<instanceNumber> \
   --replicationMode=sync --operationMode=logreplay
adding site ...
checking for inactive nameserver ...
nameserver hanaso2:30001 not responding.
collecting information ...
updating local ini files ...
done.

The remoteHost is the primary node in our case, the remoteInstance is the database instance number (here 00).

Now start the database instance again and verify the system replication status. On the secondary site, the mode should be one of „SYNC“, „SYNCMEM“ or „ASYNC“. The mode depends on the sync option defined during the registration of the secondary.

~> sapcontrol -nr <instanceNumber> -function StartSystem

Wait until the SAP HANA database is started completely.

~> hdbnsutil -sr_stateConfiguration

The output should look like the following:

System Replication State
~~~~~~~~~~~~~~~~~~~~~~~~
mode: sync
site id: 2
site name: ROT1
active primary site: 1
primary masters: hanaso0 hanaso1
done.

6.4 Verifying the system replication #

To view the replication state of the whole SAP HANA cluster, use the following command as <sid>adm user on the primary site.

~> HDBSettings.sh systemReplicationStatus.py

This script prints a human-readable table of the system replication channels and their status. The most interesting column is the Replication Status, which should be ACTIVE.

| Database | Host    | .. Site Name | Secondary | .. Secondary | .. Replication |
|          |         | ..           | Host      | .. Site Name | .. Status      |
| -------- | ------- | .. --------- | --------- | .. --------- | .. ------      |
| SYSTEMDB | hanaso0 | .. WDF1      | hanaso2   | .. ROT1      | .. ACTIVE      |
| TST      | hanaso0 | .. WDF1      | hanaso2   | .. ROT1      | .. ACTIVE      |
| TST      | hanaso0 | .. WDF1      | hanaso2   | .. ROT1      | .. ACTIVE      |
| TST      | hanaso1 | .. WDF1      | hanaso3   | .. ROT1      | .. ACTIVE      |

status system replication site "2": ACTIVE
overall system replication status: ACTIVE

Local System Replication State
~~~~~~~~~~

mode: PRIMARY
site id: 1
site name: WDF1
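After registering the secondary, the initial synchronization can take a while. The sketch below polls a status command until the line "overall system replication status: ACTIVE" appears, as in the output above. The function names sr_overall_active and wait_sr_active are hypothetical, and the number of attempts and the pause are arbitrary example values.

```shell
# Succeed only if the status output on stdin reports an overall status of ACTIVE.
sr_overall_active() {
    grep -q '^overall system replication status: ACTIVE[[:space:]]*$'
}

# Poll a status command until it reports ACTIVE or the attempts run out.
# Usage: wait_sr_active <attempts> <pause-seconds> <command> [args...]
wait_sr_active() {
    attempts=$1
    pause=$2
    shift 2
    while [ "$attempts" -gt 0 ]; do
        "$@" 2>/dev/null | sr_overall_active && return 0
        attempts=$((attempts - 1))
        [ "$attempts" -gt 0 ] && sleep "$pause"
    done
    return 1
}

# Example (as user <sid>adm on the primary site): up to 30 checks, 10 seconds apart
# wait_sr_active 30 10 HDBSettings.sh systemReplicationStatus.py && echo "replication is ACTIVE"
```

Parsing the summary line keeps the check independent of the table layout, which varies with the number of services and tenants.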

7 Integrating SAP HANA with the Linux cluster #

This chapter describes what to change on the SAP HANA configuration for the ERP style scale-out multi-target scenario.

Stop SAP HANA

Configure system replication operation mode

Adapt SAP HANA nameserver configuration

Implement susHanaSR.py for srConnectionChanged

Implement susChkSrv.py for srServiceStateChanged

Implement susTkOver.py for preTakeover

Allow <sid>adm to access the cluster

Start SAP HANA

Test the HA/DR provider hook script integration

All hook scripts should be used directly from the SAPHanaSR-angi package. If the scripts are moved or copied, regular SUSE package updates will not work.

7.1 Stopping SAP HANA #

The SAP HANA needs to be stopped at both sites that will be part of the Linux cluster. At each site do the following:

# su - <sid>adm
~> sapcontrol -nr <instanceNumber> -function StopSystem
~> sapcontrol -nr <instanceNumber> -function WaitforStopped 300 20
~> sapcontrol -nr <instanceNumber> -function GetSystemInstanceList

7.2 Configuring the system replication operation mode #

When your system is connected as an SAPHanaSR target, you can find an entry in the global.ini file which defines the operation mode. Up to now there are three modes available:

delta_datashipping

logreplay

logreplay_readaccess

Until performing a takeover and re-registration in the opposite direction, the entry for the operation mode is missing on your primary site. The default and preferred mode for HA is logreplay. Using the operation mode logreplay makes your secondary site in the SAP HANA system replication a hot standby system. For more details regarding replication modes, check the available SAP documentation such as the guide "How To Perform System Replication for SAP HANA".

For a multi-target setup, site 3 should follow the primary SAP HANA after takeover. For this a configuration 'register_secondaries_on_takeover = true' needs to be added in the system_replication block of the global.ini file. This configuration needs to be added on the two SAP HANA sites in the Linux cluster.

Check both global.ini files and add the operation mode, if needed. Also add the 'register_secondaries_on_takeover = true' for multi-target setups.

Path for the global.ini: /hana/shared/<SID>/global/hdb/custom/config/global.ini

[system_replication]
operation_mode = logreplay
register_secondaries_on_takeover = true
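Both settings can be verified on each site without opening the file in an editor. The following sketch reads one key from one section of an ini file; the function name ini_get is hypothetical, and the path in the usage comment assumes the sample SID TST.

```shell
# Print the value of <key> in section [<section>] of an ini file.
# Usage: ini_get <file> <section> <key>
ini_get() {
    awk -v sec="[$2]" -v key="$3" '
        # Remember the current section header, for example [system_replication].
        /^[ \t]*\[/ { gsub(/[ \t]/, ""); cur = $0; next }
        cur == sec {
            pos = index($0, "=")
            if (pos == 0) next
            k = substr($0, 1, pos - 1)
            v = substr($0, pos + 1)
            gsub(/^[ \t]+|[ \t]+$/, "", k)
            gsub(/^[ \t]+|[ \t]+$/, "", v)
            if (k == key) { print v; exit }
        }
    ' "$1"
}

# Example for the sample system:
# ini=/hana/shared/TST/global/hdb/custom/config/global.ini
# [ "$(ini_get "$ini" system_replication operation_mode)" = logreplay ] || echo "operation_mode not set"
# [ "$(ini_get "$ini" system_replication register_secondaries_on_takeover)" = true ] || echo "register_secondaries_on_takeover not set"
```

As stated above, the operation mode entry can be missing on the primary until a takeover and re-registration has taken place, so an empty result there is not necessarily an error.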

7.3 Adapting SAP HANA name server configuration #

We need to change the nameserver.ini for the two sites controlled by the Linux cluster. This change ensures that there is no second master name server candidate, as this is an ERP style setup. By default during the SAP HANA installation the second node at each site will be configured as the master name server candidate. We need to remove the second node from the line starting with 'master ='. The below example is given for instance number '00'.

Before configuration change:

[landscape]
...
master = hanaso0:30001 hanaso1:30001
worker = hanaso0 hanaso1
active_master = hanaso0:30001

After configuration change:

[landscape]
...
master = hanaso0:30001
worker = hanaso0 hanaso1
active_master = hanaso0:30001
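Whether the change is in place can be checked by counting the entries on the 'master =' line. The sketch below does this for the [landscape] section; the function name master_count is hypothetical, and the file location in the usage comment is an assumption (the same directory as global.ini), so verify the path on your system.

```shell
# Print the number of master name server candidates configured in the
# [landscape] section of a nameserver.ini file (0 if no master line exists).
master_count() {
    awk '
        /^[ \t]*\[/ { in_landscape = ($0 ~ /^[ \t]*\[landscape\]/); next }
        in_landscape && /^[ \t]*master[ \t]*=/ {
            sub(/^[^=]*=/, "")              # keep only the host list
            n = split($0, hosts, " ")
            found = 1
            print n
            exit
        }
        END { if (!found) print 0 }
    ' "$1"
}

# Example (path is an assumption, SID TST):
# [ "$(master_count /hana/shared/TST/global/hdb/custom/config/nameserver.ini)" -eq 1 ] \
#     || echo "more than one master name server candidate configured"
```

The line starting with active_master is not counted, because only a key named exactly master is matched.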

Refer to SAP HANA documentation for details.

7.4 Implementing susHanaSR.py for srConnectionChanged #

This step must be done on both sites that will be part of the cluster.

Use the SAP HANA tools for changing global.ini and integrating the hook script.

In global.ini, the section [ha_dr_provider_sushanasr] needs to be created. The section [trace] might be adapted. The ready-to-use HA/DR hook script is shipped with the SAPHanaSR-angi package in directory /usr/share/SAPHanaSR-angi/. The hook script must be available on all cluster nodes, including the majority maker. Find more details in manual pages susHanaSR.py(7) and SAPHanaSR-manageProvider(8).

[ha_dr_provider_sushanasr]
provider = susHanaSR
path = /usr/share/SAPHanaSR-angi/
execution_order = 1

[trace]
ha_dr_sushanasr = info

7.5 Implementing susChkSrv.py for srServiceStateChanged #

This step must be done on both sites that will be part of the cluster.

Use the SAP HANA tools for changing global.ini and integrating the hook script.

In global.ini, the section [ha_dr_provider_suschksrv] needs to be created. The section [trace] might be adapted. The ready-to-use HA/DR hook script is shipped with the SAPHanaSR-angi package in directory /usr/share/SAPHanaSR-angi/. The hook script must be available on all cluster nodes, including the majority maker. Find more details in manual pages susChkSrv.py(7), SAPHanaSR-manageProvider(8) and SAPHanaSR-alert-fencing(8).

[ha_dr_provider_suschksrv]
provider = susChkSrv
path = /usr/share/SAPHanaSR-angi/
execution_order = 3
action_on_lost = stop

[trace]
ha_dr_suschksrv = info

Note that the srHook script "susChkSrv.py" is not available in the installation ISO media. It is only available in the update channels of SUSE Linux Enterprise Server for SAP Applications 15 SP4. For a correctly working setup, a full system patch is therefore mandatory after registering the system to SCC, RMT or SUSE Manager. From SUSE Linux Enterprise Server for SAP Applications 15 SP5 onwards, "susChkSrv.py" is included in the ISO.

7.6 Implementing susTkOver.py for preTakeover #

This step must be done on both sites that will be part of the cluster.

Use the SAP HANA tools for changing global.ini and integrating the hook script.

In global.ini, the section [ha_dr_provider_sustkover] needs to be created. The section [trace] might be adapted. The ready-to-use HA/DR hook script is shipped with the SAPHanaSR-angi package in directory /usr/share/SAPHanaSR-angi/. The hook script must be available on all cluster nodes, including the majority maker. Find more details in manual pages susTkOver.py(7) and SAPHanaSR-manageProvider(8).

[ha_dr_provider_sustkover]
provider = susTkOver
path = /usr/share/SAPHanaSR-angi/
execution_order = 2
sustkover_timeout = 30

[trace]
ha_dr_sustkover = info

Note that the srHook script "susTkOver.py" is not available in the installation ISO media. It is only available in the update channels of SUSE Linux Enterprise Server for SAP Applications 15 SP4. For a correctly working setup, a full system patch is therefore mandatory after registering the system to SCC, RMT or SUSE Manager. From SUSE Linux Enterprise Server for SAP Applications 15 SP5 onwards, "susTkOver.py" is included in the ISO.

7.7 Allowing <sid>adm to access the cluster #

The current version of the susHanaSR.py python hook uses the command sudo to allow the <sid>adm user to access the cluster attributes. In Linux you can use visudo to start the vi editor for the Linux system /etc/sudoers. We recommend using a specific file /etc/sudoers.d/SAPHanaSR instead. That file can be edited by plain vi, or handled by any configuration management.

The user <sid>adm must be able to set the cluster attributes hana_<sid>_site_srHook_* and hana_<sid>_gsh. The SAP HANA system replication hook needs password free access. The following example limits the sudo access to exactly setting the needed attribute. See manual pages sudoers(5), susHanaSR.py(7) and susChkSrv.py(7) for details.

Example for basic options to allow <sid>adm to use the hook scripts. Replace the <sid> by the lowercase SAP system ID. Replace the <SID> by the uppercase SAP system ID.

# SAPHanaSR-angi needs for HA/DR hook scripts
<sid>adm ALL=(ALL) NOPASSWD: /usr/sbin/crm_attribute -n hana_<sid>_*
<sid>adm ALL=(ALL) NOPASSWD: /usr/bin/SAPHanaSR-hookHelper --sid=<SID> *
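Replacing the lowercase and uppercase placeholders by hand is error-prone, so the rules can be generated from the SID. This is a sketch under the assumption that the two rules above are all that is needed; the function name sudoers_rules is hypothetical.

```shell
# Print the sudoers rules for the HA/DR hook scripts of one SAP system.
# Usage: sudoers_rules <SID>   (uppercase SAP system ID, for example TST)
sudoers_rules() {
    upper=$1
    lower=$(printf '%s' "$upper" | tr '[:upper:]' '[:lower:]')
    cat <<EOF
# SAPHanaSR-angi needs for HA/DR hook scripts
${lower}adm ALL=(ALL) NOPASSWD: /usr/sbin/crm_attribute -n hana_${lower}_*
${lower}adm ALL=(ALL) NOPASSWD: /usr/bin/SAPHanaSR-hookHelper --sid=${upper} *
EOF
}

# Example (as root); "visudo -c -f" checks the syntax of the new file:
# sudoers_rules TST > /etc/sudoers.d/SAPHanaSR
# visudo -c -f /etc/sudoers.d/SAPHanaSR
```

Running the syntax check before the next sudo call is worthwhile, because a broken file in /etc/sudoers.d can make sudo unusable for all users.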

Example for looking up the sudo permissions for the hook scripts.

# sudo -U <sid>adm -l | grep "NOPASSWD"

7.8 Starting SAP HANA #

After having completed the SAP HANA configuration and having configured the communication between SAP HANA and the Linux cluster, you can start the SAP HANA database on both sites.

~> sapcontrol -nr <instanceNumber> -function StartSystem

The sapcontrol service commits the request with OK.

12.06.2024 11:11:01 StartSystem OK

Check if SAP HANA has finished starting.

~> sapcontrol -nr <instanceNumber> -function WaitforStarted 300 20
~> sapcontrol -nr <instanceNumber> -function GetSystemInstanceList

7.9 Testing the HA/DR provider hook script integration #

When the SAP HANA database has been restarted after the changes, check if the hook scripts have been loaded correctly. A useful verification is to check the SAP HANA trace files as <sid>adm. More complete checks will be done later, when the Linux cluster is up and running.

7.9.1 Checking for susHanaSR.py #

Check if SAP HANA has initialized the susHanaSR.py hook script for the srConnectionChanged events. Check the HANA name server trace files and the specific hook script trace file. Do this on both sites' master name server. See also manual page susHanaSR.py(7).

~> cdtrace
~> grep HADR.*load.*susHanaSR nameserver_*.trc | tail -3
~> grep susHanaSR.*init nameserver_*.trc | tail -3

7.9.2 Checking for susChkSrv.py #

Check if SAP HANA has initialized the susChkSrv.py hook script for the srServiceStateChanged events. Check the HANA name server trace files and the specific hook script trace file. Do this on all nodes. See also manual page susChkSrv.py(7).

~> cdtrace
~> grep HADR.*load.*susChkSrv nameserver_*.trc | tail -3
~> grep susChkSrv.init nameserver_*.trc | tail -3

7.9.3 Checking for susTkOver.py #

Check if SAP HANA has initialized the susTkOver.py hook script for the preTakeover events. Check the HANA name server trace. Do this on all nodes. See also manual page susTkOver.py(7).

~> cdtrace
~> grep HADR.*load.*susTkOver nameserver_*.trc | tail -3
~> grep susTkOver.init nameserver_*.trc | tail -3
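The three load checks from the sections above use the same grep pattern and can be combined in one loop. The sketch below reuses the pattern HADR.*load.*<hook> from the commands above; the function name hooks_loaded is hypothetical.

```shell
# Report for each hook whether the name server traces in <trace-dir>
# contain a load message. Returns 1 if at least one hook was not found.
# Usage: hooks_loaded <trace-dir> <hook>...
hooks_loaded() {
    dir=$1
    shift
    rc=0
    for hook in "$@"; do
        if grep -q "HADR.*load.*$hook" "$dir"/nameserver_*.trc 2>/dev/null; then
            echo "$hook: loaded"
        else
            echo "$hook: NOT found"
            rc=1
        fi
    done
    return $rc
}

# Example (as user <sid>adm, after changing to the trace directory with cdtrace):
# hooks_loaded . susHanaSR susChkSrv susTkOver
```

A hook reported as not found usually points to a missing or misspelled provider section in global.ini, or to a database that has not been restarted since the change.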

8 Configuring the cluster and SAP HANA resources #

This chapter describes the configuration of the SUSE Linux Enterprise High Availability Extension cluster. The SUSE Linux Enterprise High Availability Extension is part of SUSE Linux Enterprise Server for SAP Applications. Further, the integration of SAP HANA System Replication with the SUSE Linux Enterprise High Availability Extension cluster is explained. The integration is done by using the SAPHanaSR-angi package which is also part of SUSE Linux Enterprise Server for SAP Applications.

Install the cluster packages

Basic cluster configuration

Configure cluster properties, resources and alerts

Final steps

8.1 Installing the cluster packages #

If not already done, install the pattern High Availability on all nodes.

To do so, use Zypper.

# zypper in -t pattern ha_sles

Now the resource agents for controlling the SAP HANA system replication need to be installed at all cluster nodes, including the majority maker.

# zypper in SAPHanaSR-angi

If you installed the packages before, make sure to get the newest updates on all nodes.

# zypper patch

8.2 Configuring the basic cluster #

After having installed the cluster packages, the next step is to set up the basic cluster framework. For convenience, use YaST or the ha-cluster-init script.

It is strongly recommended to add a second corosync ring, implement unicast (UCAST) communication and adjust the timeout values to your environment.

Prerequisites

Name resolution

Time synchronization

Redundant network for cluster communication

STONITH method

8.2.1 Setting up watchdog for "Storage-based Fencing" #

It is recommended to use SBD as central STONITH device, as done in the example at hand. Each node constantly monitors connectivity to the storage device, and terminates itself in case the partition becomes unreachable. Whenever SBD is used, a correctly working watchdog is crucial. Modern systems support a hardware watchdog that needs to be "tickled" by a software component. The software component (usually a daemon) regularly writes a service pulse to the watchdog. If the daemon stops "tickling" the watchdog, the hardware will enforce a system restart. This protects against failures of the SBD process itself, such as dying, or getting stuck on an I/O error.

Access to the Watchdog Timer: No other software must access the watchdog timer. Some hardware vendors ship systems management software that uses the watchdog for system resets (for example HP ASR daemon). Disable such software, if watchdog is used by SBD.

Determine the right watchdog module. Alternatively, you can find a list of installed drivers with your kernel version.

# ls -l /lib/modules/$(uname -r)/kernel/drivers/watchdog

Check if any watchdog module is already loaded.

# lsmod | egrep "(wd|dog|i6|iT|ibm)"

If you get a result, the system already has a watchdog module loaded. If the loaded module does not match your watchdog device, you need to unload the module.

To safely unload the module, check first if an application is using the watchdog device.

# lsof /dev/watchdog
# rmmod <wrong_module>

Enable your watchdog module and make it persistent. For the example below, softdog has been used which has some restrictions and should not be used as first option.

# echo softdog > /etc/modules-load.d/watchdog.conf
# systemctl restart systemd-modules-load

Check if the watchdog module is loaded correctly.

# lsmod | grep dog

Testing the watchdog can be done with a simple action. Make sure to switch off SAP HANA first, because the watchdog will force an unclean reset/shutdown of your system.

In case of a hardware watchdog, a desired action is predefined for the moment the watchdog timeout has been reached. If your watchdog module is loaded and not controlled by any other application, do the following:

Triggering the watchdog without continuously updating the watchdog resets/switches off the system. This is the intended mechanism. The following commands will force your system to be reset/switched off.

# touch /dev/watchdog

In case the softdog module is used the following action can be performed:

# echo 1 > /dev/watchdog

After your test was successful you can implement the watchdog on all cluster members. The example below applies to the softdog module. Replace <wrong_module> by the module name queried before.

# for i in hana{so0,so1,so2,so3,mm}; do
ssh -T $i <<EOSSH
hostname
rmmod <wrong_module>
echo softdog > /etc/modules-load.d/watchdog.conf
systemctl restart systemd-modules-load
lsmod |grep -e dog
EOSSH
done

8.2.2 Basic cluster configuration using ha-cluster-init #

For more detailed information about ha-cluster-* tools, see section Overview of the Bootstrap Scripts of the Installation and Setup Quick Start Guide for SUSE Linux Enterprise High Availability at https://documentation.suse.com/sle-ha/15-SP4/html/SLE-HA-all/article-installation.html#sec-ha-inst-quick-bootstrap.

Create an initial setup by using the ha-cluster-init command. Follow the dialog steps.

This is only to be done on the first cluster node. If you are using SBD as STONITH mechanism, you need to first load the watchdog kernel module matching your setup. In the example at hand, the softdog kernel module is used.

The command ha-cluster-init configures the basic cluster framework including:

SSH keys

csync2 to transfer configuration files

SBD (at least one device)

corosync (at least one ring)

HAWK Web interface

# ha-cluster-init -u -s <sbd-device>

As requested by ha-cluster-init, change the password of the user hacluster on all cluster nodes.

Do not forget to change the password of the user hacluster.

8.2.3 Cluster configuration for all other cluster nodes #

The other nodes of the cluster can be integrated by starting the command ha-cluster-join. This command asks for the IP address or name of the first cluster node. Then all needed configuration files are copied over. As a result the cluster is started on all nodes. Do not forget the majority maker.

If you are using SBD as STONITH method, you need to activate the watchdog kernel module matching your systems. In the example at hand the softdog kernel module is used.

# ha-cluster-join -c <host1>

8.2.4 Checking the cluster for the first time #

Now it is time to check and optionally start the cluster for the first time on all nodes.

# crm cluster run "crm cluster start"

All nodes should be started in parallel. Otherwise unseen nodes might get fenced.

Check whether all cluster nodes have registered at the SBD device(s). See manual page cs_show_sbd_devices(8) for details.

# cs_show_sbd_devices

Check the cluster status with crm_mon. Use the option -r to also see resources which are configured but stopped.

# crm_mon -r

The command will show the empty cluster and will print something like the screen output below. The most interesting information in this output is that all five nodes are in the status "online" and the message "partition with quorum".

Stack: corosync
Current DC: hanamm (version 1.1.16-4.8-77ea74d) - partition with quorum
Last updated: Tue Jan 25 16:55:04 2024
Last change: Tue Jan 25 16:53:58 2024 by root via crm_attribute on hanaso2

5 nodes configured
1 resource configured

Online: [ hanamm hanaso0 hanaso1 hanaso2 hanaso3 ]

Full list of resources:

stonith-sbd (stonith:external/sbd): Started hanamm
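The number of online nodes can also be checked by a script, which is convenient after adding nodes. The sketch below counts the names in the "Online: [ ... ]" line of the crm_mon output; the function name online_count is hypothetical, and the expected value 5 matches the sample cluster (four SAP HANA nodes plus the majority maker).

```shell
# Read crm_mon output on stdin and print the number of nodes listed as online.
online_count() {
    awk '
        /(^|[^A-Za-z])Online: \[/ {
            sub(/^.*Online: \[/, "")    # drop everything up to the opening bracket
            sub(/\].*$/, "")            # drop the closing bracket and the rest
            n = split($0, nodes, " ")
        }
        END { print n + 0 }
    '
}

# Example (as root); -1 prints the status once and exits:
# [ "$(crm_mon -1 -r | online_count)" -eq 5 ] || echo "not all cluster nodes are online"
```

The count says nothing about quorum, so the message "partition with quorum" still needs to be checked separately.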

8.3 Configuring cluster properties and resources #

This section describes how to configure bootstrap, STONITH, resources and constraints using the crm configure shell command as described in section Managing cluster resources of the SUSE Linux Enterprise High Availability Extension Administration Guide (see https://documentation.suse.com/sle-ha/15-SP4/html/SLE-HA-all/cha-ha-manage-resources.html).

Use the command crm to add the objects to the Cluster Resource Management (CRM). Copy the following examples to a local file and then load the configuration to the Cluster Information Base (CIB). The benefit is that you have a scripted setup and a backup of your configuration.

Perform all crm commands only on one node, for example on machine hanaso0.

First write a text file with the configuration, which you load into your cluster in a second step. This step is as follows:

# vi crm-file<XX>
# crm configure load update crm-file<XX>

8.3.1 Cluster bootstrap and more #

The first example defines the cluster bootstrap options including the resource and operation defaults.

The stonith-timeout should be greater than 1.2 times the SBD msgwait timeout.

Find more details and examples in manual page SAPHanaSR-ScaleOut_basic_cluster(7).

# vi crm-bs.txt

Enter the following to crm-bs.txt:

property cib-bootstrap-options: \
    have-watchdog=true \
    cluster-infrastructure=corosync \
    cluster-name=hacluster \
    placement-strategy=balanced \
    no-quorum-policy=freeze \
    stonith-enabled=true \
    concurrent-fencing=true \
    stonith-action=reboot \
    stonith-timeout=150
rsc_defaults rsc-options: \
    resource-stickiness=1000 \
    migration-threshold=50
op_defaults op-options: \
    timeout=600 \
    record-pending=true

Now add the configuration to the cluster.

# crm configure load update crm-bs.txt
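The rule that stonith-timeout should be greater than 1.2 times the SBD msgwait timeout can be checked with a little integer arithmetic. The following is a minimal sketch; both function names are hypothetical, and all values are assumed to be whole seconds.

```shell
# Hypothetical helper: smallest whole number of seconds that is strictly
# greater than 1.2 times the given SBD msgwait timeout.
min_stonith_timeout() {
    msgwait=$1
    # Integer division rounds 1.2 * msgwait down; adding 1 makes the
    # result strictly greater than the exact product.
    echo $(( msgwait * 12 / 10 + 1 ))
}

# Hypothetical helper: succeeds if stonith-timeout ($1) satisfies the
# rule for the given msgwait ($2).
stonith_timeout_ok() {
    [ "$1" -ge "$(min_stonith_timeout "$2")" ]
}
```

With an assumed msgwait of 120 seconds, `min_stonith_timeout 120` prints 145, so a stonith-timeout of 150 would satisfy the rule. The msgwait value of an SBD device is part of the header printed by `sbd -d <device> dump`.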

8.3.2 STONITH #

As already explained in the requirements, STONITH is crucial for a supported cluster setup. Without a valid fencing mechanism your cluster is unsupported.

The standard STONITH mechanism is SBD-based fencing. The SBD STONITH method is very stable and reliable and has proven itself in the field.

You can use other fencing methods, for example those available from your public cloud provider. However, it is crucial to test the server fencing intensively.

For SBD-based fencing, you can use one to three SBD devices. The cluster reacts differently to the loss of an SBD device depending on how many devices are configured. The differences and SBD fencing are explained in the SUSE product documentation of the SUSE Linux Enterprise High Availability Extension available at https://documentation.suse.com/.

You need to adapt the SBD resource for the SAP HANA scale-out cluster.

As user <sid>adm create a file named crm-fencing.txt.

# vi crm-fencing.txt

Enter the following to crm-fencing.txt:

primitive stonith-sbd stonith:external/sbd \
    params pcmk_action_limit=-1 pcmk_delay_max=1

Now load the configuration from the file to the cluster.

# crm configure load update crm-fencing.txt

8.3.3 Cluster in maintenance mode #

The configuration for the resources and the constraints is loaded into the cluster step by step, so that the different parts can be explained. The best way to avoid unexpected cluster reactions is to first set the complete cluster to maintenance mode, then do all needed changes and, as the last step, end the cluster maintenance mode.

# crm maintenance on
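The first two of these steps can be wrapped into a small helper. The following is a sketch under stated assumptions, not part of the SUSE tooling: the function name load_in_maintenance and the variable CRM_CMD are hypothetical. CRM_CMD exists only so that the sequence can be dry-run with echo instead of crm. The helper deliberately leaves maintenance mode switched on, so ending it remains a conscious last step after the configuration has been checked.

```shell
# Hypothetical override: set CRM_CMD=echo to print the commands
# instead of running them.
CRM_CMD=${CRM_CMD:-crm}

# Hypothetical helper: switch the cluster to maintenance mode, then load
# each given configuration file in order. Stops at the first failure.
# Maintenance mode is NOT ended here.
load_in_maintenance() {
    "$CRM_CMD" maintenance on || return 1
    for file in "$@"; do
        "$CRM_CMD" configure load update "$file" || return 1
    done
}
```

A dry run such as `CRM_CMD=echo load_in_maintenance crm-saphanatop.txt crm-saphanafil.txt` prints the crm arguments in the order in which they would be executed.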

8.3.4 SAPHanaTopology #

SAPHanaTopology is the resource agent (RA) that analyzes the SAP HANA topology and writes its findings into the CIB.

Prepare the RA configuration in a text file, for example crm-saphanatop.txt, and load it with the crm command.

If necessary, change the SID and instance number (bold) to appropriate values for your setup.

hanaso0:~ # vi crm-saphanatop.txt

Enter the following to crm-saphanatop.txt:

primitive rsc_SAPHanaTop_<SID>_HDB<instanceNumber> ocf:suse:SAPHanaTopology \
    op monitor interval="50" timeout="600" \
    op start interval="0" timeout="600" \
    op stop interval="0" timeout="300" \
    params SID="<SID>" InstanceNumber="<instanceNumber>"
clone cln_SAPHanaTop_<SID>_HDB<instanceNumber> rsc_SAPHanaTop_<SID>_HDB<instanceNumber> \
    meta clone-node-max="1" interleave="true"

primitive rsc_SAPHanaTop_TST_HDB00 ocf:suse:SAPHanaTopology \
    op monitor interval="50" timeout="600" \
    op start interval="0" timeout="600" \
    op stop interval="0" timeout="300" \
    params SID="TST" InstanceNumber="00"
clone cln_SAPHanaTop_TST_HDB00 rsc_SAPHanaTop_TST_HDB00 \
    meta clone-node-max="1" interleave="true"

For additional information about all parameters, use the command man ocf_suse_SAPHanaTopology.

Again, add the configuration to the cluster.

# crm configure load update crm-saphanatop.txt

The most important parameters here are SID (TST) and InstanceNumber (00), which are self-explanatory in an SAP context. Besides these parameters, the timeout values of the operations (start, monitor, stop) are typical values to be adjusted for your environment.

Additional information about all parameters can be found in manual page ocf_suse_SAPHanaTopology(7).
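Replacing the <SID> and <instanceNumber> placeholders by hand is error-prone when the same values appear in several files. The following sketch shows one way to fill them in with sed. The function name render_crm_template and the template file name in the usage line are hypothetical. The validation assumes the usual SAP conventions of a three-character SID (uppercase letter first) and a two-digit instance number.

```shell
# Hypothetical helper: reads a crm template on stdin and writes it to
# stdout with <SID> and <instanceNumber> replaced.
render_crm_template() {
    sid=$1
    inst=$2
    # Reject values that do not look like an SAP SID or instance number.
    printf '%s\n' "$sid" | LC_ALL=C grep -Eq '^[A-Z][A-Z0-9]{2}$' || {
        echo "invalid SID: $sid" >&2
        return 1
    }
    printf '%s\n' "$inst" | LC_ALL=C grep -Eq '^[0-9]{2}$' || {
        echo "invalid instance number: $inst" >&2
        return 1
    }
    sed -e "s/<SID>/$sid/g" -e "s/<instanceNumber>/$inst/g"
}
```

For example, `render_crm_template TST 00 < crm-saphanatop.tmpl > crm-saphanatop.txt` would turn a template with placeholders into the file that is loaded with crm configure load update.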

8.3.5 SAPHanaFilesystem #

This step defines the resources that monitor the file system used by HANA, for example /hana/shared/<SID>. The RA monitors the file system but neither mounts nor unmounts it. Mounting and unmounting are done by the OS through /etc/fstab.

If necessary, change the SID and instance number (bold) to the appropriate values for your setup.

hanaso0:~ # vi crm-saphanafil.txt

Enter the following to crm-saphanafil.txt:

primitive rsc_SAPHanaFil_<SID>_HDB<instanceNumber> ocf:suse:SAPHanaFilesystem \
    op monitor interval="120" timeout="120" \
    op start interval="0" timeout="10" \
    op stop interval="0" timeout="20" \
    params SID="<SID>" InstanceNumber="<instanceNumber>" ON_FAIL_ACTION="fence"
clone cln_SAPHanaFil_<SID>_HDB<instanceNumber> rsc_SAPHanaFil_<SID>_HDB<instanceNumber> \
    meta clone-node-max="1" interleave="true"

primitive rsc_SAPHanaFil_TST_HDB00 ocf:suse:SAPHanaFilesystem \
    op monitor interval="120" timeout="120" \
    op start interval="0" timeout="10" \
    op stop interval="0" timeout="20" \
    params SID="TST" InstanceNumber="00" ON_FAIL_ACTION="fence"
clone cln_SAPHanaFil_TST_HDB00 rsc_SAPHanaFil_TST_HDB00 \
    meta clone-node-max="1" interleave="true"

Additional information about all parameters can be found in manual page ocf_suse_SAPHanaFilesystem(7).

Again, add the configuration to the cluster.

# crm configure load update crm-saphanafil.txt

The most important parameters here are SID (TST) and InstanceNumber (00), which are self-explanatory in an SAP context. ON_FAIL_ACTION defines how the RA should react to monitor failures. See also manual page SAPHanaSR-alert-fencing(8). Besides these parameters, the timeout values of the operations (start, monitor, stop) are typical values to be adjusted for your environment.
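Because the RA only monitors the file system and leaves mounting to the OS, it can be worth confirming that the mount point really has an entry in /etc/fstab. The following is a minimal sketch; the function name fstab_has_mountpoint is hypothetical, and the mount point to check depends on your storage layout.

```shell
# Hypothetical helper: succeeds if the given mount point has a
# non-comment entry in an fstab file (default: /etc/fstab).
fstab_has_mountpoint() {
    mountpoint=$1
    fstab=${2:-/etc/fstab}
    awk -v mp="$mountpoint" '
        /^[ \t]*#/ { next }          # skip comment lines
        $2 == mp   { found = 1 }     # second field is the mount point
        END        { exit (found ? 0 : 1) }
    ' "$fstab"
}
```

For the example system, a call could look like `fstab_has_mountpoint /hana/shared/TST || echo "no fstab entry found"`.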

8.3.6 SAPHanaController #

SAPHanaController is the resource agent (RA) that controls the HANA database.

Prepare the RA configuration in a text file, for example crm-saphanacon.txt, and load it with the crm command.

# vi crm-saphanacon.txt

Enter the following to crm-saphanacon.txt:

primitive rsc_SAPHanaCon_<SID>_HDB<instanceNumber> ocf:suse:SAPHanaController \
    op start interval="0" timeout="3600" \
    op stop interval="0" timeout="3600" \
    op promote interval="0" timeout="900" \
    op demote interval="0" timeout="320" \
    op monitor interval="60" role="Promoted" timeout="700" \
    op monitor interval="61" role="Unpromoted" timeout="700" \
    params SID="<SID>" InstanceNumber="<instanceNumber>" \
    PREFER_SITE_TAKEOVER="true" \
    DUPLICATE_PRIMARY_TIMEOUT="7200" AUTOMATED_REGISTER="false" \
    HANA_CALL_TIMEOUT="120"
clone mst_SAPHanaCon_<SID>_HDB<instanceNumber> \
    rsc_SAPHanaCon_<SID>_HDB<instanceNumber> \
    meta clone-node-max="1" promotable="true" interleave="true" \
    maintenance="true"

The most important parameters here are <SID> (TST) and <instanceNumber> (00), which are self-explanatory in an SAP context. Besides these parameters, the timeout values of the operations (start, monitor, stop) are typical tunables. Find more details in manual page ocf_suse_SAPHanaController(7).

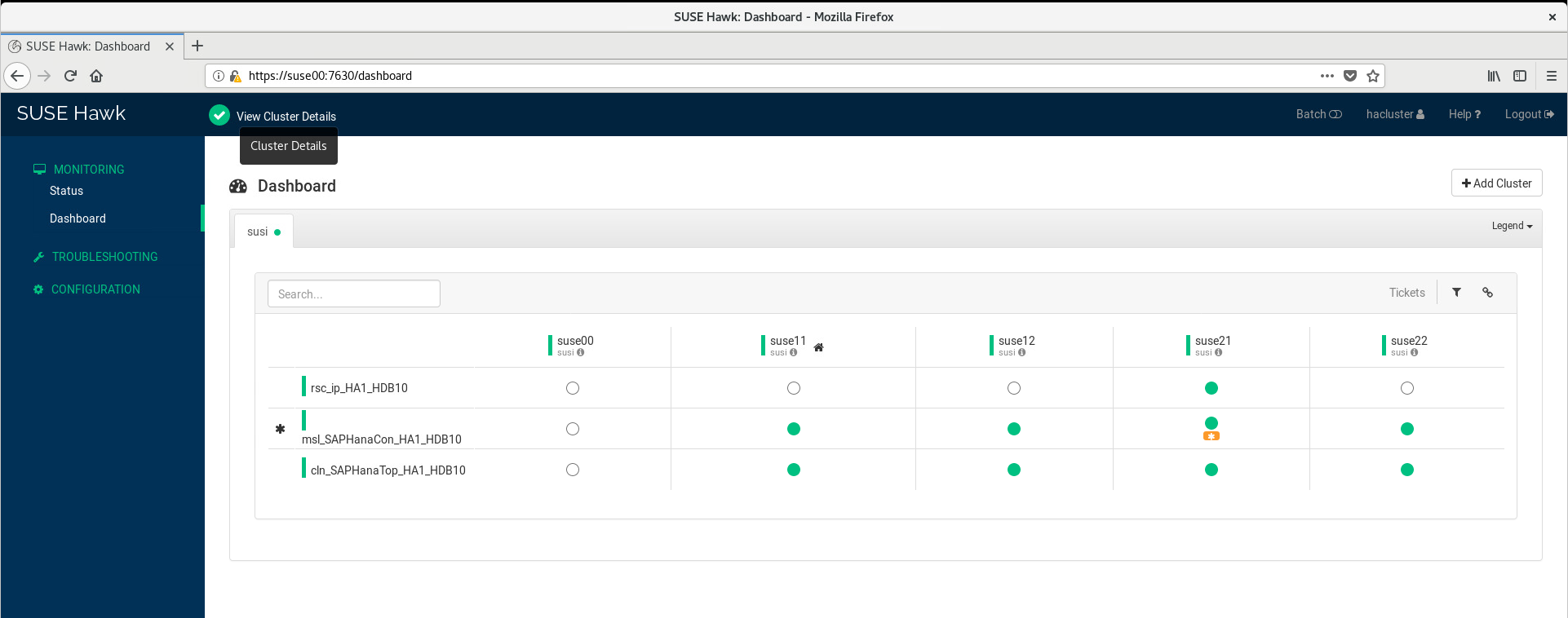

primitive rsc_SAPHanaCon_TST_HDB00 ocf:suse:SAPHanaController \
    op start interval="0" timeout="3600" \
    op stop interval="0" timeout="3600" \
    op promote interval="0" timeout="900" \
    op demote interval="0" timeout="320" \
    op monitor interval="60" role="Promoted" timeout="700" \
    op monitor interval="61" role="Unpromoted" timeout="700" \
    params SID="TST" InstanceNumber="00" PREFER_SITE_TAKEOVER="true" \
    DUPLICATE_PRIMARY_TIMEOUT="7200" AUTOMATED_REGISTER="false" \
    HANA_CALL_TIMEOUT="120"
clone mst_SAPHanaCon_TST_HDB00 rsc_SAPHanaCon_TST_HDB00 \
    meta clone-node-max="1" promotable="true" interleave="true" \
    maintenance="true"

Add the configuration to the cluster.

# crm configure load update crm-saphanacon.txt

| Name | Description |
|---|---|
| PREFER_SITE_TAKEOVER | Defines whether the RA should prefer to take over to the secondary instance instead of restarting the failed primary locally. Set to true for SAPHanaSR-angi. |
| AUTOMATED_REGISTER | Defines whether a former primary should be automatically registered to be secondary of the new primary. With this parameter you can adapt the level of system replication automation. If set to false, the former primary must be manually registered. The cluster will not start this SAP HANA RDBMS until it is registered, to avoid dual-primary situations. |
| DUPLICATE_PRIMARY_TIMEOUT | Time difference needed between two primary time stamps if a dual-primary situation occurs. If the time difference is less than the configured time gap, the cluster holds one or both sites in a "WAITING" status. This is to give an administrator the chance to react to a failover. If the complete node of the former primary crashed, the former primary will be registered after the time difference has passed. If "only" the SAP HANA RDBMS has crashed, then the former primary will be registered immediately. After this registration to the new primary, all data will be overwritten by the system replication. |

Additional information about all parameters can be found in manual page ocf_suse_SAPHanaController(7).
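The comparison described for DUPLICATE_PRIMARY_TIMEOUT can be illustrated with a few lines of shell. This is a deliberately simplified sketch of the rule as stated in the table, not the resource agent's actual code. The function name dual_primary_state and the output word DISTINCT are hypothetical, and time stamps are assumed to be whole seconds.

```shell
# Hypothetical illustration: prints WAITING when two primary time stamps
# are closer together than the timeout, otherwise DISTINCT.
dual_primary_state() {
    ts_a=$1
    ts_b=$2
    timeout=$3
    diff=$(( ts_a - ts_b ))
    # Use the absolute difference, the order of the sites does not matter.
    if [ "$diff" -lt 0 ]; then
        diff=$(( -diff ))
    fi
    if [ "$diff" -lt "$timeout" ]; then
        echo WAITING
    else
        echo DISTINCT
    fi
}
```

With the value 7200 from the example configuration, two time stamps that are 4000 seconds apart yield WAITING, while time stamps that are 8000 seconds apart yield DISTINCT.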

8.3.7 Virtual IP address of the HANA primary #

The last mandatory resource to be added to the cluster covers the virtual IP address for the HANA primary master name server. Replace the bold string with your instance number, SAP HANA system ID and the virtual IP address.

# vi crm-vip.txt

Enter the following to crm-vip.txt:

primitive rsc_ip_<SID>_HDB<instanceNumber> ocf:heartbeat:IPaddr2 \
    op monitor interval="10s" timeout="20s" \
    params ip="<IP>"

primitive rsc_ip_TST_HDB00 ocf:heartbeat:IPaddr2 \
    op monitor interval="10s" timeout="20s" \
    params ip="192.7.7.20"

Load the file to the cluster.

# crm configure load update crm-vip.txt

In most installations, only the parameter ip needs to be set to the virtual IP address to be presented to the client systems. See manual page ocf_heartbeat_IPaddr2(7) for details on additional parameters.

8.3.8 Constraints #

The constraints organize the correct placement of the virtual IP address for the client database access and the start order between the two resource agents SAPHanaController and SAPHanaTopology.

# vi crm-cs.txt

Enter the following to crm-cs.txt:

colocation col_saphana_ip_<SID>_HDB<instanceNumber> 2000: rsc_ip_<SID>_HDB<instanceNumber>:Started \
    mst_SAPHanaCon_<SID>_HDB<instanceNumber>:Promoted
order ord_SAPHana_<SID>_HDB<instanceNumber> Optional: cln_SAPHanaTop_<SID>_HDB<instanceNumber> \
    mst_SAPHanaCon_<SID>_HDB<instanceNumber>
location SAPHanaCon_not_on_majority_maker mst_SAPHanaCon_<SID>_HDB<instanceNumber> \
    -inf: <majority maker>
location SAPHanaTop_not_on_majority_maker cln_SAPHanaTop_<SID>_HDB<instanceNumber> \
    -inf: <majority maker>
location SAPHanaFil_not_on_majority_maker cln_SAPHanaFil_<SID>_HDB<instanceNumber> \
    -inf: <majority maker>

colocation col_saphana_ip_TST_HDB00 2000: rsc_ip_TST_HDB00:Started \
    mst_SAPHanaCon_TST_HDB00:Promoted
order ord_SAPHana_TST_HDB00 Optional: cln_SAPHanaTop_TST_HDB00 \
    mst_SAPHanaCon_TST_HDB00
location SAPHanaCon_not_on_majority_maker mst_SAPHanaCon_TST_HDB00 -inf: hanamm
location SAPHanaTop_not_on_majority_maker cln_SAPHanaTop_TST_HDB00 -inf: hanamm
location SAPHanaFil_not_on_majority_maker cln_SAPHanaFil_TST_HDB00 -inf: hanamm

Load the file to the cluster.

# crm configure load update crm-cs.txt
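Constraints only take effect as intended when the resource IDs they reference match the primitive and clone IDs defined earlier. The following is a rough lint sketch for the configuration files, not a replacement for the checks done by crm itself. The function name undefined_constraint_refs is hypothetical, and the parser only understands the simple colocation, order and location forms used in this section.

```shell
# Hypothetical helper: reads crm configuration text on stdin and prints
# every resource ID that a constraint references but that no primitive
# or clone statement defines.
undefined_constraint_refs() {
    awk '
        {
            # Join lines ending in a backslash into one logical statement.
            line = buf $0
            if (line ~ /\\[ \t]*$/) {
                sub(/\\[ \t]*$/, " ", line)
                buf = line
                next
            }
            buf = ""
            $0 = line
            if ($1 == "primitive" || $1 == "clone") {
                defined[$2] = 1
            } else if ($1 == "colocation" || $1 == "order") {
                # Fields: keyword, constraint ID, score or kind, resources...
                for (i = 4; i <= NF; i++) {
                    r = $i
                    sub(/:.*/, "", r)    # strip a role suffix like :Promoted
                    used[r] = 1
                }
            } else if ($1 == "location") {
                used[$3] = 1
            }
        }
        END {
            for (r in used) if (!(r in defined)) print r
        }
    ' | sort
}
```

One way to use it is to concatenate all prepared files, for example `cat crm-saphanatop.txt crm-saphanafil.txt crm-saphanacon.txt crm-vip.txt crm-cs.txt | undefined_constraint_refs`. Empty output means that every referenced ID was found.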

8.3.9 Configuring virtual IP address of the HANA read-enabled secondary #

This optional resource covers the virtual IP address for the read-enabled HANA secondary master name server. It is useful if SAP HANA is configured with the active/read-enabled feature. Replace the bold string with your instance number, SAP HANA system ID and the virtual IP address.

In scale-out this works only for an SAP HANA topology with exactly one master name server and exactly one worker node.

# vi crm-vip-ro.txt

Enter the following to crm-vip-ro.txt:

primitive rsc_ip_ro_<SID>_HDB<instanceNumber> ocf:heartbeat:IPaddr2 \

op monitor interval="10s" timeout="20s" \

params ip="<IP-ro>"

colocation col_ip_ro_with_secondary_<SID>_HDB<instanceNumber> \

2000: rsc_ip_ro_<SID>_HDB<instanceNumber>:Started \