Optimizing Linux for AMD EPYC™ 9004 Series Processors with SUSE Linux Enterprise 15 SP4

Tuning & Performance

This document provides an overview of both the AMD EPYC™ 9004 Series Classic and AMD EPYC™ 9004 Series Dense Processors. It details how some computationally intensive workloads can be tuned on SUSE Linux Enterprise Server 15 SP4.

Disclaimer:

Documents published as part of the SUSE Best Practices series have been contributed voluntarily by SUSE employees and third parties. They are meant to serve as examples of how particular actions can be performed. They have been compiled with utmost attention to detail. However, this does not guarantee complete accuracy. SUSE cannot verify that actions described in these documents do what is claimed or whether actions described have unintended consequences. SUSE LLC, its affiliates, the authors, and the translators may not be held liable for possible errors or the consequences thereof.

1 Overview #

The AMD EPYC 9004 Series Processor is the latest generation of the AMD64 System-on-Chip (SoC) processor family. It is based on the Zen 4 microarchitecture introduced in 2022, supporting up to 96 cores (192 threads) and 12 memory channels per socket. At the time of writing, 1-socket and 2-socket models are expected to be available from Original Equipment Manufacturers (OEMs) in 2023. Also in 2023, the AMD EPYC 9004 Series Dense Processor was launched. It is based on an architecture similar to that of the AMD EPYC 9004 Series Classic Processor and supports up to 128 cores (256 threads). This document provides an overview of the AMD EPYC 9004 Series Classic Processor and how computationally intensive workloads can be tuned on SUSE Linux Enterprise Server 15 SP4. Additional details about the AMD EPYC 9004 Series Dense Processor are provided where appropriate.

2 AMD EPYC 9004 Series Classic Processor architecture #

Symmetric multiprocessing (SMP) systems are those that contain two or more physical processing cores. Each core may have two threads if Simultaneous multithreading (SMT) is enabled, with some resources being shared between SMT siblings. To minimize access latencies, multiple layers of caches are used, with each level being larger but having higher access costs. Cores may share different levels of cache, which should be considered when tuning for a workload.

Historically, a single socket contained several cores sharing a hierarchy of caches and memory channels, and multiple sockets were connected via a memory interconnect. Modern configurations may have multiple dies as a Multi-Chip Module (MCM) with one set of interconnects within the socket and a separate interconnect for each socket. This means that some CPUs and memory are faster to access than others depending on the “distance”. This should be considered when tuning for Non-Uniform Memory Architecture (NUMA), as not all memory accesses reference local memory, and remote accesses incur a variable penalty.

The 4th Generation AMD EPYC Processor has an MCM design with up to thirteen dies on each package. From a topology point of view, this is significantly different to the 1st Generation AMD EPYC Processor design. However, it is similar to the 3rd Generation AMD EPYC Processor other than the increase in die count. One die is a central IO die through which all off-chip communication passes. The basic building block of a compute die is an eight-core Core CompleX (CCX) with its own L1-L3 cache hierarchy. Similar to the 3rd Generation AMD EPYC Processor, one Core Complex Die (CCD) consists of one CCX connected via an Infinity Link to the IO die, as opposed to the two CCXs used in the 2nd Generation AMD EPYC Processor. This allows direct communication within a CCD instead of using the IO link, which keeps communication and memory access latency low. A 96-core 4th Generation AMD EPYC Processor socket therefore consists of 12 CCDs, each containing one CCX with 8 cores, for 96 cores in total (192 threads with SMT enabled), plus one IO die for 13 dies in total. This is a large increase in both the core count and number of memory channels relative to the 3rd Generation AMD EPYC Processor.

Both the 3rd and 4th Generation AMD EPYC Processors potentially have a larger L3 cache. In a standard configuration, a 4th Generation AMD EPYC Processor has 32MB L3 cache. Some CPU chips may also include an AMD V-Cache expansion that can triple the size of the L3 cache. This potentially provides a major performance boost to applications as more active data can be stored in low-latency cache. The exact performance impact is variable, but any memory-intensive workload should benefit from having a lower average memory access latency because of a larger cache.

Communication between the chip and memory happens via the IO die. Each CCD has one dedicated Infinity Fabric link to the die and one memory channel per CCD located on the die. The practical consequence of this architecture versus the 1st Generation AMD EPYC Processor is that the topology is simpler. The first generation had separate memory channels per die and links between dies giving two levels of NUMA distance within a single socket and a third distance when communicating between sockets. This meant that a two-socket machine for EPYC had 4 NUMA nodes (3 levels of NUMA distance). The 2nd Generation AMD EPYC Processor has only 2 NUMA nodes (2 levels of NUMA distance) which makes it easier to tune and optimize. The NUMA distances are the same for the 3rd and 4th Generation AMD EPYC Processors.

The IO die has a total of 12 memory controllers supporting DDR5 Dual Inline Memory Modules (DIMMs) with the maximum supported speed expected to be DDR-5200 at the time of writing. This implies a peak channel bandwidth of 40.6 GB/sec or 487.2 GB/sec total throughput across a socket. The exact bandwidth depends on the DIMMs selected, the number of memory channels populated, how cache is used and the efficiency of the application. Where possible, all memory channels should have a DIMM installed to maximize memory bandwidth.
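The bandwidth figures above can be reproduced with simple arithmetic. The sketch below assumes an 8-byte (64-bit) data path per channel and a divisor of 1024 when converting MB/sec to GB/sec. Both are assumptions, chosen because they reproduce the 40.6 GB/sec figure; the function name `peak_bandwidth` is illustrative only.

```shell
#!/bin/sh
# Theoretical peak DDR bandwidth: transfers per second * bytes per transfer.
# Assumptions (not stated in the text above): 8 bytes per transfer per
# channel, and conversion from MB/sec to GB/sec using a divisor of 1024.
peak_bandwidth() {
    mts="$1"       # transfer rate in MT/sec, for example 5200
    channels="$2"  # populated memory channels per socket, for example 12
    LC_ALL=C awk -v mts="$mts" -v ch="$channels" 'BEGIN {
        per_channel = mts * 8 / 1024
        # Prints: <GB/sec per channel> <GB/sec per socket>
        printf "%.3f %.3f\n", per_channel, per_channel * ch
    }'
}
```

Calling `peak_bandwidth 5200 12` gives the per-channel value that rounds to 40.6 GB/sec. The socket total comes out slightly above 487.2 GB/sec, which matches the rounded per-channel figure multiplied by 12.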

While the topologies and basic layout are similar between the 3rd and 4th Generation AMD EPYC Processors, there are several micro-architectural differences. The Instructions Per Cycle (IPC) has improved by 13% on average across a selected range of workloads, although the exact improvement is workload-dependent. The improvements result from a variety of factors including a larger L2 cache, improvements in branch prediction, the execution engine, the front-end fetching/decoding of instructions and additional instructions such as AVX-512. The degree to which these changes affect performance varies between applications.

Power management on the links is designed to minimize the amount of power required. If the links are idle, the power may be used to boost the frequency of individual cores. Hence, minimizing accesses over the links is not only important from a memory access latency point of view, but it also has an impact on the speed of individual cores.

There are 128 IO lanes supporting PCIe Gen 5.0 per socket. Lanes can be used as Infinity links, PCI Express links or SATA links with a limit of 32 SATA links. The exact number of PCIe 4.0, PCIe 5.0 and configuration links varies by chip and motherboard. This allows very large IO configurations and a high degree of flexibility, given that either IO bandwidth or the bandwidth between sockets can be optimized, depending on the OEM requirements. The most likely configuration is that the number of PCIe links will be the same for 1- and 2-socket machines, given that some lanes per socket will be used for inter-socket communication. While some links must be used for inter-socket communication, adding a socket does not compromise the number of available IO channels. The exact configuration used depends on the platform.

3 AMD EPYC 9004 Series Classic Processor topology #

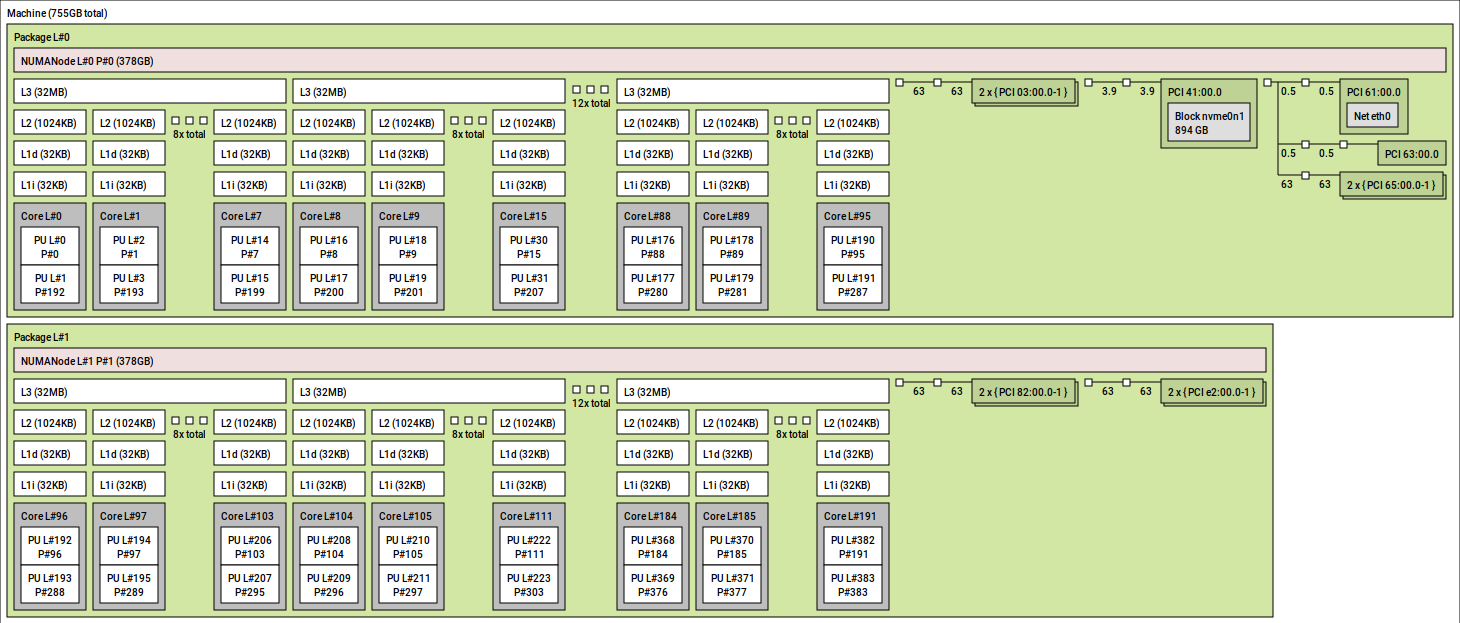

Figure 1, “AMD EPYC 9004 Series Classic Processor Topology” shows the topology of an example machine with a fully populated memory configuration generated by the lstopo tool.

This tool is part of the hwloc package. Each of the two “packages” corresponds to one socket. The CCXs consisting of 8 cores (16 threads) each should be clear, as each CCX has one L3 cache and each socket has 12 CCXs resulting in 96 cores (192 threads). The links to the IO die are not obvious, but the IO die should be taken into account when splitting a workload to optimize bandwidth to memory. In this example, the IO channels are not heavily used, as the focus is on CPU and memory-intensive loads. If optimizing for IO, it is recommended that, where possible, the workload is located on the nodes local to the IO channel.

The computer output below shows a conventional view of the topology using the numactl tool which is slightly edited for clarity. The CPU IDs that map to each node are reported on the “node X cpus:” lines. Note the NUMA distances in the table at the bottom of the computer output. Node 0 and node 1 are a distance of 32 apart as they are on separate sockets. The distance is not a guarantee of the access latency; it is an estimate of the relative difference. The general interpretation of this distance would suggest that a remote memory access takes 3.2 times longer than a local memory access, but the actual latency cost can be different.

    epyc:~ # numactl --hardware
    node 0 cpus: 0 .. 95 192 .. 287
    node 0 size: 386740 MB
    node 0 free: 369215 MB
    node 1 cpus: 96 .. 191 288 .. 383
    node 1 size: 386771 MB
    node 1 free: 371177 MB
    node distances:
    node   0   1
      0:  10  32
      1:  32  10
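The same distance table is also exposed through sysfs, which makes the "relative to local" interpretation easy to compute. The following is a minimal sketch, assuming the standard `/sys/devices/system/node/nodeN/distance` files and contiguous node IDs starting at 0; the function name `numa_distance_ratios` is hypothetical.

```shell
#!/bin/sh
# Print each NUMA distance relative to the local distance of 10.
# The node directory is a parameter so the function can be pointed at a
# copy of sysfs; the default is the standard Linux location.
numa_distance_ratios() {
    node_dir="${1:-/sys/devices/system/node}"
    for d in "$node_dir"/node[0-9]*; do
        [ -r "$d/distance" ] || continue
        # Assumption: field i of the distance file describes node i-1,
        # which holds when node IDs are contiguous from 0.
        LC_ALL=C awk -v from="${d##*/}" '{
            for (i = 1; i <= NF; i++)
                printf "%s -> node%d: %.1fx\n", from, i - 1, $i / 10
        }' "$d/distance"
    done
}
```

On the example machine, calling `numa_distance_ratios` without arguments would report the remote node at 3.2x. As noted above, this ratio is an estimate and not a measured latency.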

Note that the two sockets displayed are masking some details. There are multiple CCDs and multiple channels meaning that there are slight differences in access latency even to “local” memory. If an application is so sensitive to latency that it needs to be aware of the precise relative distances, then the Nodes Per Socket (NPS) value can be adjusted in the BIOS. If adjusted, numactl will show additional nodes and the relative distances between them.

Finally, the cache topology can be discovered in a variety of fashions. In addition to lstopo which can provide the information, the level, size and ID of CPUs that share cache can be identified from the files under /sys/devices/system/cpu/cpuN/cache.
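As an illustration of reading those files, the sketch below prints one line per cache index for a given CPU. It assumes the `level`, `type`, `size` and `shared_cpu_list` files found under each `indexN` directory; the function name `show_cache_topology` and its optional base-directory argument are hypothetical conveniences.

```shell
#!/bin/sh
# Summarize the caches visible to one CPU: level, type, size and the
# list of CPUs sharing each cache.
show_cache_topology() {
    cpu="${1:-0}"
    base="${2:-/sys/devices/system/cpu}"   # overridable for experiments
    for idx in "$base/cpu$cpu"/cache/index[0-9]*; do
        [ -r "$idx/level" ] || continue
        printf 'L%s %s %s shared_with=%s\n' \
            "$(cat "$idx/level")" "$(cat "$idx/type")" \
            "$(cat "$idx/size")" "$(cat "$idx/shared_cpu_list")"
    done
}
```

For example, `show_cache_topology 1` lists the caches of CPU 1. The L3 line shows which CPUs belong to the same CCX.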

4 AMD EPYC 9004 Series Dense Processor #

The AMD EPYC 9004 Series Dense Processor launched in 2023. While the fundamental microarchitecture is based on the “Zen 4” compute core, there are some important differences between it and the AMD EPYC 9004 Series Classic Processors. Both processors are socket-compatible, have the same number of memory channels and the same number of I/O lanes. This means that the processors may be used interchangeably on the same platform with the same limitation that dual-socket configurations must use identical processors. Both processors use the same Instruction Set Architecture (ISA). This means that code optimized for one processor will run without modification on the other.

Despite the compatible ISA, the processors are physically different using a manufacturing process focused on increased density for both the CPU core and the physical cache. The L1 and L2 caches have the same capacity. The L3 cache, however, is half the capacity of the AMD EPYC 9004 Series Classic Processor with the space used for additional CCDs. The basic CCX structure for both the AMD EPYC 9004 Series Dense and 9004 Series Classic processor is similar but each CCD for the AMD EPYC 9004 Series Dense has 2 CCXs per CCD instead of 1. While the AMD EPYC 9004 Series Classic has 12 CCDs with 1 CCX each within a socket, the AMD EPYC 9004 Series Dense processor has 8 CCDs, each with 2 CCXs. This increases the maximum number of its cores per socket from 96 cores to 128. Finally, the Thermal Design Points (TDPs) differ for the AMD EPYC 9004 Series Dense processor, with different frequency scaling limits and generally a lower peak frequency. While each individual core may achieve less peak performance than the AMD EPYC 9004 Series Classic Processor, the total peak compute throughput available is higher because of the increased number of cores.

The intended use case and workloads determine which processor is superior. The key advantage of the AMD EPYC 9004 Series Dense Processor is packing more cores within the same socket. This may benefit cloud or hyperscale environments in that more containers or virtual machines can use uncontested CPUs for their workloads within the same physical machine. As a result, physical space in data centers can potentially be reduced. It may also benefit some HPC workloads that are primarily CPU and memory bound. For example, some HPC workloads scale to the number of available cores working on data sets that are too large to fit into a typical cache. For such workloads, the AMD EPYC 9004 Series Dense Processor may be ideal.

5 Memory and CPU binding #

NUMA is a scalable memory architecture for multiprocessor systems that can reduce contention on a memory channel. A full discussion on tuning for NUMA is beyond the scope for this document. But the document “A NUMA API for Linux” at http://developer.amd.com/wordpress/media/2012/10/LibNUMA-WP-fv1.pdf provides a valuable introduction.

The default policy for programs is the “local policy”. A program which calls malloc() or mmap() reserves virtual address space but does not immediately allocate physical memory. The physical memory is allocated the first time the address is accessed by any thread and, if possible, the memory will be local to the accessing CPU. If the mapping is of a file, the first access may have occurred at any time in the past so there are no guarantees about locality.

When memory is allocated to a node, it is unlikely to move if a thread changes to a CPU on another node or if multiple programs are accessing the data remotely, unless Automatic NUMA Balancing (NUMAB) is enabled. When NUMAB is enabled, unbound process accesses are sampled. If there are enough remote accesses, then the data will be migrated to local memory. This mechanism is not perfect and incurs overhead of its own. For performance, it can therefore be important to minimize thread and process migrations between nodes and to carefully consider and tune memory placement.

The taskset tool is used to set or get the CPU affinity for new or existing processes. An example usage is to confine a new process to CPUs local to one node. Where possible, local memory will be used. But if the total required memory is larger than the node, then remote memory can still be used. In such configurations, it is recommended to size the workload such that it fits in the node. This avoids any of the data being paged out when kswapd wakes to reclaim memory from the local node.

numactl controls both memory and CPU policies for processes that it launches and can modify existing processes. In many respects, the parameters are easier to specify than taskset. For example, it can bind a task to all CPUs on a specified node instead of having to specify individual CPUs with taskset. Most importantly, it can set the memory allocation policy without requiring application awareness.

Using policies, a preferred node can be specified where the task will use that node if memory is available. This is typically used in combination with binding the task to CPUs on that node. If a workload's memory requirements are larger than a single node and predictable performance is required, then the “interleave” policy will round-robin allocations from allowed nodes. This gives suboptimal but predictable access latencies to main memory. More importantly, interleaving reduces the probability that the operating system (OS) will need to reclaim any data belonging to a large task.
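The two policies described above map onto numactl options as sketched below. The wrapper names `run_preferred` and `run_interleaved` are hypothetical; the `--cpunodebind`, `--preferred` and `--interleave` options are those documented in the numactl manual page.

```shell
#!/bin/sh
# Run a command on the CPUs of one node and prefer memory from that
# node. Allocations can still fall back to other nodes when the
# preferred node has no free memory.
run_preferred() {
    node="$1"; shift
    numactl --cpunodebind="$node" --preferred="$node" "$@"
}

# Round-robin allocations across all nodes, for workloads whose memory
# requirements are larger than a single node.
run_interleaved() {
    numactl --interleave=all "$@"
}
```

For example, `run_preferred 0 ./app` keeps a hypothetical `./app` on node 0 where possible, while `run_interleaved ./app` trades locality for predictable latency.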

Further improvements can be made to access latencies by binding a workload to a single CCD within a node. As L3 caches are shared within a CCD on both the 3rd and 4th Generation AMD EPYC Processors, binding a workload to a CCD avoids L3 cache misses caused by workload migration. This is an important difference from the 2nd Generation AMD EPYC Processor which favored binding within a CCX.

In most respects, the guidance for optimal bindings for cache and nodes remains the same between the 3rd and 4th Generation AMD EPYC Processors. However, with SUSE Linux Enterprise 15 SP4, the necessity to bind specifically to the L3 cache for optimal performance is relaxed. The CPU scheduler in SUSE Linux Enterprise 15 SP4 has superior knowledge of the cache topology of all generations of the AMD EPYC Processors and how to balance load between CPU caches, NUMA nodes and memory channels.

With SUSE Linux Enterprise 15 SP4 having superior knowledge of the CPU cache topology and how to balance load, tuning specifically has a smaller impact to performance for a given workload. This is not a limitation of the operating system. It is a side-effect of the baseline performance being improved on AMD EPYC Processors in general.

See examples below on how taskset and numactl can be used to start commands bound to different CPUs depending on the topology.

    # Run a command bound to CPU 1
    epyc:~ # taskset -c 1 [command]
    # Run a command bound to CPUs belonging to node 0
    epyc:~ # taskset -c `cat /sys/devices/system/node/node0/cpulist` [command]
    # Run a command bound to CPUs belonging to nodes 0 and 1
    epyc:~ # numactl --cpunodebind=0,1 [command]
    # Run a command bound to CPUs that share L3 cache with cpu 1
    epyc:~ # taskset -c `cat /sys/devices/system/cpu/cpu1/cache/index3/shared_cpu_list` [command]

5.1 Tuning for local access without binding #

The ability to use local memory where possible and remote memory if necessary is valuable. But there are cases where it is imperative that local memory always be used. If this is the case, the first priority is to bind the task to that node. If that is not possible, then the command sysctl vm.zone_reclaim_mode=1 can be used to aggressively reclaim memory if local memory is not available.

While the vm.zone_reclaim_mode option is good from a locality perspective, it can incur high costs because of stalls related to reclaim and the possibility that data from the task will be reclaimed. Treat this option with a high degree of caution and testing.

5.2 Hazards with CPU binding #

There are three major hazards to consider with CPU binding.

The first to watch for is remote memory nodes being used when the process is not allowed to run on CPUs local to that node. The scenarios when this can occur are outside the scope of this paper. However, a common reason is an IO-bound thread communicating with a kernel IO thread on a remote node bound to the IO controller. In such a setup, the data buffers managed by the application are stored in remote memory incurring an access cost for the IO.

While tasks may be bound to CPUs, the resources they are accessing, such as network or storage devices, may not have interrupts routed locally. irqbalance generally makes good decisions. But in cases where the network or IO is extremely high-performance or the application has very low latency requirements, it may be necessary to disable irqbalance using systemctl. When that is done, the IRQs for the target device need to be routed manually to CPUs local to the target workload for optimal performance.
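Manual routing can be sketched as follows. This is an illustration and not a tested procedure: it assumes the device name appears in the description column of `/proc/interrupts` and that the IRQs accept writes to `/proc/irq/N/smp_affinity_list`. Some drivers use kernel-managed affinity and may reject such writes. The function name `route_device_irqs` and its optional path arguments are hypothetical.

```shell
#!/bin/sh
# Route every numbered IRQ whose /proc/interrupts line mentions a device
# name to the given CPU list. Requires root on a real system. The file
# and directory parameters exist so the function can be tried on copies.
route_device_irqs() {
    dev="$1"        # substring to match, for example a NIC or NVMe name
    cpus="$2"       # CPU list, for example 0-7
    interrupts="${3:-/proc/interrupts}"
    irq_dir="${4:-/proc/irq}"
    awk -v dev="$dev" '$1 ~ /^[0-9]+:$/ && index($0, dev) {
        sub(/:$/, "", $1); print $1
    }' "$interrupts" |
    while read -r irq; do
        echo "$cpus" > "$irq_dir/$irq/smp_affinity_list" ||
            echo "could not set affinity for IRQ $irq" >&2
    done
}
```

A typical sequence would be to stop the balancer first with `systemctl disable --now irqbalance` and then call, for example, `route_device_irqs eth0 0-7`, where the device name and CPU list depend on the machine.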

The second is that guides about CPU binding tend to focus on binding to a single CPU. This is not always optimal when the task communicates with other threads, as fixed bindings potentially miss an opportunity for the processes to use idle CPUs sharing a common cache. This is particularly true when dispatching IO, be it to disk or a network interface, where a task may benefit from being able to migrate close to the related threads. It also applies to pipeline-based communicating threads for a computational workload. Hence, focus initially on binding to CPUs sharing L3 cache. Then consider whether to bind based on an L1/L2 cache or a single CPU using the primary metric of the workload to establish whether the tuning is appropriate.

The final hazard is similar: if many tasks are bound to a smaller set of CPUs, then the subset of CPUs could be oversaturated even though there is spare CPU capacity available.

5.3 cpusets and memory control groups #

cpusets are ideal when multiple workloads must be isolated on a machine in a predictable fashion. cpusets allow a machine to be partitioned into subsets. These sets may overlap, and in that case they suffer from similar problems as CPU affinities. In the event there is no overlap, they can be switched to “exclusive” mode which treats them completely in isolation with relatively little overhead. Similarly, they are well suited when a primary workload must be protected from interference because of low-priority tasks. In such cases the low-priority tasks can be placed in a cpuset. The caveat with cpusets is that the overhead is higher than using scheduler and memory policies. Ordinarily, the accounting code for cpusets is completely disabled. But when a single cpuset is created, there is a second layer of checks against scheduler and memory policies.

Similarly, memcg can be used to limit the amount of memory that can be used by a set of processes. When the limits are exceeded, the memory will be reclaimed directly by tasks within the memcg without interfering with any other tasks. This is ideal for ensuring there is no interference between two or more sets of tasks. Similar to cpusets, there is some management overhead incurred. This means, if tasks can simply be isolated on a NUMA boundary, then this is preferred from a performance perspective. The major hazard is that, if the limits are exceeded, then the processes directly stall to reclaim the memory which can incur significant latencies.

Without memcg, when memory gets low, the global reclaim daemon does work in the background and if it reclaims quickly enough, no stalls are incurred. When using memcg, observe the allocstall counter in /proc/vmstat as this can detect early if stalling is a problem.
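A minimal way to watch that counter is sketched below. On some kernels the counter is split per memory zone (for example `allocstall_normal`), so the sketch sums every line that starts with `allocstall`; that summing and the function name `allocstall_total` are assumptions of this example.

```shell
#!/bin/sh
# Sum all allocstall counters from a vmstat-style file. A value that
# keeps growing between samples suggests tasks are stalling in reclaim.
allocstall_total() {
    awk '/^allocstall/ { sum += $2 } END { print sum + 0 }' \
        "${1:-/proc/vmstat}"
}
```

Sampling `allocstall_total` before and after a test run and comparing the two values gives a first indication of whether direct reclaim stalls occurred.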

6 High performance storage devices and interrupt affinity #

High performance storage devices like Non-Volatile Memory Express (NVMe) or Serial Attached SCSI (SAS) controllers are designed to take advantage of parallel IO submission. These devices typically support a large number of submit and receive queues, which are tied to MSI-X interrupts. Ideally, these devices should provide as many MSI-X vectors as there are CPUs in the system. To achieve the best performance, each MSI-X vector should be assigned to an individual CPU.

7 Automatic NUMA balancing #

Automatic NUMA Balancing (NUMAB) is a feature that identifies and relocates pages that are being accessed remotely for applications that are not NUMA-aware. There are cases where it is impractical or impossible to specify policies. In such cases, the balancing should be sufficient for throughput-sensitive workloads, but on occasion, NUMAB may be considered hazardous as it incurs a cost. Under ideal conditions, an application is NUMA-aware and uses memory policies to control what memory is accessed, and NUMAB simply ignores such regions. However, even if an application does not use memory policies, it is possible that the application still accesses mostly local memory. In that case, NUMAB adds overhead confirming that accesses are local, which is an unnecessary cost. For latency-sensitive workloads, the sampling for NUMA balancing may be too unpredictable, and it may be preferable to incur the remote access cost or interleave memory instead of using NUMAB. The final corner case where NUMA balancing is a hazard happens when the number of runnable tasks always exceeds the number of CPUs in a single node. In this case, the load balancer (and potentially affine wakes) may pull tasks away from the preferred node as identified by Automatic NUMA balancing, resulting in excessive sampling and migrations.

If the workloads can be manually optimized with policies, then consider disabling automatic NUMA balancing by specifying numa_balancing=disable on the kernel command line or via sysctl kernel.numa_balancing. The same applies if it is known that the application is mostly accessing local memory.
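One way to judge whether an application is "mostly accessing local memory" is to compare the NUMA hinting fault counters in /proc/vmstat. The sketch below assumes the `numa_hint_faults` and `numa_hint_faults_local` counters are present, which requires NUMA balancing to have been active while the workload ran. The function name `numa_local_fault_pct` is hypothetical, and the counters are system-wide rather than per application.

```shell
#!/bin/sh
# Percentage of NUMA hinting faults that were already local. A value
# close to 100 suggests balancing has little left to improve.
numa_local_fault_pct() {
    LC_ALL=C awk '
        $1 == "numa_hint_faults"       { total = $2 }
        $1 == "numa_hint_faults_local" { local_faults = $2 }
        END {
            if (total > 0) printf "%.1f\n", 100 * local_faults / total
            else print "n/a"
        }' "${1:-/proc/vmstat}"
}
```

The current setting can be checked with `sysctl kernel.numa_balancing`, and balancing can be turned off at runtime with `sysctl -w kernel.numa_balancing=0`.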

While a disconnect between CPU Scheduler and NUMA Balancing placement decisions still potentially exists in SUSE Linux Enterprise 15 SP4 when the machine is heavily overloaded, the impact is much reduced relative to previous releases for most scenarios. The placement decisions made by the CPU Scheduler and NUMA Balancing are now coupled. Situations where the CPU scheduler and NUMA Balancing make opposing decisions are relatively rare.

8 Evaluating workloads #

The first and foremost step when evaluating how a workload should be tuned is to establish a primary metric such as latency, throughput, operations per second or elapsed time. When each tuning step is considered or applied, it is critical that the primary metric be examined before conducting any further analysis. This is to avoid intensive focus on the wrong bottleneck. Make sure that the metric is measured multiple times to ensure that the result is reproducible and reliable within reasonable boundaries. When that is established, analyze how the workload is using different system resources to determine what area should be the focus. The focus in this paper is on how CPU and memory are used. But other evaluations may need to consider the IO subsystem, network subsystem, system call interfaces, external libraries, etc. The methodologies that can be employed to conduct this are outside the scope of this paper. But the book “Systems Performance: Enterprise and the Cloud” by Brendan Gregg (see http://www.brendangregg.com/systems-performance-2nd-edition-book.html) is a recommended primer on the subject.

8.1 CPU utilization and saturation #

Decisions on whether to bind a workload to a subset of CPUs require that the CPU utilization and any saturation risk are known. Both the ps and pidstat commands can be used to sample the number of threads in a system. Typically, pidstat yields more useful information with the important exception of the run state. A system may have many threads, but if they are idle, they are not contributing to utilization. The mpstat command can report the utilization of each CPU in the system.

High utilization of a small subset of CPUs may be indicative of a single-threaded workload that is pushing the CPU to the limits and may indicate a bottleneck. Conversely, low utilization may indicate a task that is not CPU-bound, is idling frequently or is migrating excessively. While each workload is different, low utilization of CPUs may show a workload that can run on a subset of CPUs to reduce latencies because of either migrations or remote accesses. When utilization is high, it is important to determine if the system could be saturated. The vmstat tool reports the number of runnable tasks waiting for a CPU in the “r” column where any value over 1 indicates that wakeup latencies may be incurred. While the exact wakeup latency can be calculated using trace points, knowing that there are tasks queued is an important step. If a system is saturated, it may be possible to tune the workload to use fewer threads.
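To spot queued tasks over a sampling window, the “r” column can be reduced to its maximum. The sketch below reads vmstat output on standard input and assumes the default vmstat layout, in which “r” is the first column; the function name `max_runnable` is hypothetical.

```shell
#!/bin/sh
# Read vmstat output on stdin and print the largest value seen in the
# first ("r") column. Header lines are skipped because their first
# field is not a number.
max_runnable() {
    awk '$1 ~ /^[0-9]+$/ { if ($1 > max) max = $1 } END { print max + 0 }'
}
```

For example, `vmstat 1 10 | max_runnable` samples once per second for ten seconds and prints the peak number of runnable tasks observed.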

Overall, the initial intent should be to use CPUs from as few NUMA nodes as possible to reduce access latency. However, there are exceptions. The AMD EPYC 9004 Series Processor has a large number of high-speed memory channels to main memory, so consider the workload thread activity. If they are cooperating threads or sharing data, isolate them on as few nodes as possible to minimize cross-node memory accesses. If the threads are completely independent with no shared data, it may be best to isolate them on a subset of CPUs from each node. This is to maximize the number of available memory channels and throughput to main memory. For some computational workloads, it may be possible to use hybrid models such as MPI for parallelization across nodes and OpenMP for threads within nodes.

It is expected that tuning based on the AMD EPYC 7003 Series Processor will also usually perform optimally on AMD EPYC 9004 series. The main consideration is to account for potential differences in L3 cache sizes because of AMD V-Cache if workloads are tuned specifically for cache size. Also, keep in mind that CPU bindings based on caches may potentially be relaxed on SUSE Linux Enterprise 15 SP4.

8.2 Transparent Huge Pages #

Huge pages are a feature that can improve performance in many cases. This is achieved by reducing the number of page faults, the cost of translating virtual addresses to physical addresses because of fewer layers in the page table and being able to cache translations for a larger portion of memory. Transparent Huge Pages (THP) is supported for private anonymous memory that automatically backs mappings with huge pages where anonymous memory could be allocated as heap, malloc(), mmap(MAP_ANONYMOUS), etc. There is also support for using THP pages backed by tmpfs which can be configured at mount time using the huge= mount option. While the THP feature has existed for a long time, it has evolved significantly.

Many tuning guides recommend disabling THP because of problems with early implementations. Specifically, when the machine was running for long enough, the use of THP could incur severe latencies and could aggressively reclaim memory in certain circumstances. These problems were resolved by the time SUSE Linux Enterprise Server 15 SP2 was released, and this is still the case for SUSE Linux Enterprise Server 15 SP4. This means there are no good grounds for automatically disabling THP because of severe latency issues without measuring the impact. However, there are exceptions that are worth considering for specific workloads.

Some high-end in-memory databases and other applications aggressively use mprotect() to ensure that unprivileged data is never leaked. If these protections are at the base page granularity, then there may be many THP splits and rebuilds that incur overhead. Whether this is a potential problem can be identified by using strace or perf trace to detect the frequency and granularity of the system call. If the calls are high-frequency, consider disabling THP. It can also sometimes be inferred from observing the thp_split and thp_collapse_alloc counters in /proc/vmstat.
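The counters are cumulative, so the change over a test run is more informative than the absolute value. The sketch below compares two saved copies of /proc/vmstat. It matches any counter whose name starts with `thp_split` or `thp_collapse_alloc`, because the exact counter names differ between kernel versions; the function name `thp_counter_delta` is hypothetical.

```shell
#!/bin/sh
# Print the increase of the THP split and collapse counters between two
# snapshots of /proc/vmstat taken before and after a workload run.
thp_counter_delta() {
    before="$1"
    after="$2"
    awk 'NR == FNR {
             # First file: remember the starting value of each counter.
             if ($1 ~ /^thp_(split|collapse_alloc)/) old[$1] = $2
             next
         }
         $1 in old { print $1, $2 - old[$1] }' "$before" "$after"
}
```

A typical use is `cat /proc/vmstat > before`, running the workload, `cat /proc/vmstat > after`, and then `thp_counter_delta before after`.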

Workloads that sparsely address large mappings may have a higher memory footprint when using THP. This could result in premature reclaim or fallback to remote nodes. An example would be HPC workloads operating on large sparse matrices. If memory usage is much higher than expected, compare memory usage with and without THP to decide if the trade-off is worthwhile. This may be critical on AMD EPYC 7003 and 9004 Series Processors given that any spillover will congest the Infinity links and potentially cause cores to run at a lower frequency.

This is specific to sparsely addressed memory. A secondary hint for this case may be that the application primarily uses large mappings with a much higher Virtual Size (VSZ, see Section 8.4, “Memory utilization and saturation”) than Resident Set Size (RSS). Applications which densely address memory benefit from the use of THP by achieving greater bandwidth to memory.

Parallelized workloads that operate on shared buffers with thread counts exceeding the number of available CPUs on a single node may experience a slowdown with THP if the granularity of partitioning is not aligned to the huge page. The problem is that if a large shared buffer is partitioned on a 4K boundary, then false sharing may occur whereby one thread accesses a huge page locally and other threads access it remotely. If this situation is encountered, the granularity of sharing should be increased to the THP size. But if that is not possible, disabling THP is an option.

Applications that are extremely latency-sensitive or must always perform in a deterministic fashion can be hindered by THP. While there are fewer faults, the time for each fault is higher as memory must be allocated and cleared before being visible. The increase in fault times may be in the microsecond granularity. Ensure this is a relevant problem as it typically only applies to extremely latency-sensitive applications. The secondary problem is that a kernel daemon periodically scans a process looking for contiguous regions that can be backed by huge pages. When creating a huge page, there is a window during which that memory cannot be accessed by the application and new mappings cannot be created until the operation is complete. This can be identified as a problem with thread-intensive applications that frequently allocate memory. In this case, consider effectively disabling khugepaged by setting a large value in /sys/kernel/mm/transparent_hugepage/khugepaged/alloc_sleep_millisecs. This will still allow THP to be used opportunistically while avoiding stalls when calling malloc() or mmap().

THP can be disabled in three ways: by specifying transparent_hugepage=never on the kernel command line, at runtime by writing never to /sys/kernel/mm/transparent_hugepage/enabled, or on a per-process basis by using a wrapper to execute the workload that calls prctl(PR_SET_THP_DISABLE).
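A per-process wrapper of the kind described above might look like the following Python sketch. It is an illustration under stated assumptions rather than a supported tool: the function names are hypothetical, the constant value is taken from the Linux header linux/prctl.h, and the call is made through ctypes against the C library already loaded into the interpreter.

```python
import ctypes
import os

# Value of PR_SET_THP_DISABLE from <linux/prctl.h>.
PR_SET_THP_DISABLE = 41


def disable_thp_for_this_process():
    """Ask the kernel to stop using THP for the calling process."""
    libc = ctypes.CDLL(None, use_errno=True)
    # The remaining prctl arguments must be zero for this option; passing
    # them explicitly as unsigned long avoids argument-width surprises.
    result = libc.prctl(
        PR_SET_THP_DISABLE,
        ctypes.c_ulong(1),
        ctypes.c_ulong(0),
        ctypes.c_ulong(0),
        ctypes.c_ulong(0),
    )
    if result != 0:
        err = ctypes.get_errno()
        raise OSError(err, os.strerror(err))


def run_without_thp(argv):
    """Disable THP, then replace this process with the workload in argv."""
    disable_thp_for_this_process()
    # The flag is preserved across execve(), so the workload inherits it.
    os.execvp(argv[0], argv)
```

A small script could call run_without_thp(sys.argv[1:]) so that the workload is started as, for example, `python3 nothp.py ./workload`, where nothp.py is a hypothetical file name.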

8.3 User/kernel footprint #

Assuming an application is mostly CPU- or memory-bound, it is useful to determine if the footprint is primarily in user space or kernel space. This gives a hint where tuning should be focused. The percentage of CPU time can be measured in a coarse-grained fashion using vmstat or in a fine-grained fashion using mpstat. If an application is mostly spending time in user space, then the focus should be on tuning the application itself. If the application is spending time in the kernel, then it should be determined which subsystem dominates. The strace or perf trace commands can measure the type, frequency and duration of system calls as they are the primary reasons an application spends time within the kernel. In some cases, an application may be tuned or modified to reduce the frequency and duration of system calls. In other cases, a profile is required to identify which portions of the kernel are most relevant as a target for tuning.
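As a rough illustration of the coarse-grained measurement that vmstat performs, the following Python sketch computes the user/kernel split from two samples of the aggregate "cpu" line in /proc/stat. The function names are hypothetical, and counting irq and softirq time as kernel time is a choice made for this sketch.

```python
def parse_cpu_line(line):
    """Parse the aggregate 'cpu' line of /proc/stat into a list of ticks."""
    fields = line.split()
    if not fields or fields[0] != "cpu":
        raise ValueError("expected the aggregate 'cpu' line of /proc/stat")
    return [int(value) for value in fields[1:]]


def user_kernel_split(before_line, after_line):
    """Return the percentage of CPU time spent in user and kernel space.

    Field order in /proc/stat: user nice system idle iowait irq softirq
    steal guest guest_nice. Guest time is already included in user time,
    so only the first eight fields are summed for the total.
    """
    before = parse_cpu_line(before_line)
    after = parse_cpu_line(after_line)
    delta = [a - b for a, b in zip(after, before)]
    total = sum(delta[:8])
    if total == 0:
        return {"user_pct": 0.0, "kernel_pct": 0.0}
    user = delta[0] + delta[1]              # user + nice
    kernel = delta[2] + delta[5] + delta[6]  # system + irq + softirq
    return {"user_pct": 100 * user / total, "kernel_pct": 100 * kernel / total}
```

If the kernel percentage dominates, strace or perf trace is the natural next step, as described above.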

8.4 Memory utilization and saturation #

The traditional means of measuring memory utilization of a workload is to examine the Virtual Size (VSZ) and Resident Set Size (RSS). This can be done by using either the ps or pidstat tool. This is a reasonable first step but is potentially misleading when shared memory is used and multiple processes are examined. VSZ is simply a measure of memory space reservation, and the reserved space is not necessarily used. RSS may be double accounted if it is a shared segment between multiple processes. The file /proc/pid/maps can be used to identify all segments used and whether they are private or shared. The files /proc/pid/smaps and /proc/pid/smaps_rollup reveal more detailed information including the Proportional Set Size (PSS). PSS is an estimate of RSS except it is divided between the number of processes mapping that segment, which can give a more accurate estimate of utilization. Note that the smaps and smaps_rollup files are very expensive to read and should not be monitored at a high frequency. This is especially the case if workloads are using large amounts of address space, many threads or both. Finally, the Working Set Size (WSS) is the amount of active memory required to complete computations during an arbitrary phase of a program's execution. It is not a value that can be trivially measured. But conceptually it is useful as the interaction between WSS relative to available memory affects memory residency and page fault rates.
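The difference between RSS and PSS can be made concrete with a short Python sketch that extracts both values from the text of /proc/pid/smaps_rollup. The function names are hypothetical. Given the cost of reading these files, a sketch like this should be run occasionally, not in a tight monitoring loop.

```python
def rollup_kib(smaps_rollup_text, keys=("Rss", "Pss")):
    """Return selected fields (in KiB) from /proc/<pid>/smaps_rollup text."""
    values = {}
    for line in smaps_rollup_text.splitlines():
        name, separator, rest = line.partition(":")
        if separator and name in keys:
            values[name] = int(rest.split()[0])
    return values


def summed_usage_kib(rollup_texts):
    """Sum RSS and PSS over several processes.

    Summing RSS double counts shared segments; summing PSS does not,
    because each process is charged only its share of a shared page.
    """
    totals = {"Rss": 0, "Pss": 0}
    for text in rollup_texts:
        for name, value in rollup_kib(text).items():
            totals[name] += value
    return totals
```

For a group of processes that map the same shared segment, the summed PSS is usually the better estimate of how much memory the group really occupies.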

On NUMA systems, the first saturation point is a node overflow when the “local” policy is in effect. Given no binding of memory, when a node is filled, a remote node’s memory will be used transparently and background reclaim will take place on the local node. Two consequences of this are that remote access penalties will be incurred and old memory from the local node will be reclaimed. If the WSS of the application exceeds the size of a local node, then paging and re-faults may be incurred.

The first item to identify is whether a remote node overflow occurred, which is accounted for in /proc/vmstat as the numa_hit, numa_miss, numa_foreign, numa_interleave, numa_local and numa_other counters:

numa_hit is incremented when an allocation uses the preferred node where preferred may be either a local node or one specified by a memory policy.

numa_miss is incremented when an alternative node is used to satisfy an allocation.

numa_foreign is accounted against the node that was preferred when another node was used. It is a subtle distinction from numa_miss that is rarely useful.

numa_interleave is incremented when an interleave policy was used to select allowed nodes in a round-robin fashion.

numa_local increments when a local node is used for an allocation regardless of policy.

numa_other is used when a remote node is used for an allocation regardless of policy.

For the local memory policy, the numa_hit and numa_miss counters are the most important to pay attention to. An application that is allocating memory and starts incrementing numa_miss has reached the first level of saturation. If monitoring the proc file directly is undesirable, the numastat tool provides the same information. If this is observed on the AMD EPYC 9004 Series Processor, it may be valuable to bind the application to nodes that represent dies on a single socket. If the ratio of hits to misses is close to 1, consider an evaluation of the interleave policy to avoid unnecessary reclaim.
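The hit and miss accounting above can be turned into a simple check. The following Python sketch, with hypothetical function names, compares two snapshots of /proc/vmstat-style text and reports how many allocations in the interval missed the preferred node.

```python
def numa_counters(vmstat_text):
    """Extract the numa_* counters from /proc/vmstat-style text."""
    counters = {}
    for line in vmstat_text.splitlines():
        fields = line.split()
        if len(fields) == 2 and fields[0].startswith("numa_"):
            counters[fields[0]] = int(fields[1])
    return counters


def numa_miss_summary(before, after):
    """Summarise allocation placement between two counter snapshots."""
    hits = after["numa_hit"] - before["numa_hit"]
    misses = after["numa_miss"] - before["numa_miss"]
    total = hits + misses
    return {
        "hits": hits,
        "misses": misses,
        # Fraction of allocations that could not use the preferred node.
        "miss_fraction": misses / total if total else 0.0,
    }
```

Any growth in the miss count while the workload allocates memory suggests that the first saturation point described above has been reached.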

These NUMA statistics only apply at the time a physical page is allocated and are not related to the reference behavior of the workload. For example, if a task running on node 0 allocates memory local to node 0, then it will be accounted for as a numa_hit in the statistics. However, if the memory is shared with a task running on node 1, all the accesses may be remote, which is a miss from the perspective of the hardware but not accounted for in /proc/vmstat. Detecting remote and local accesses at the hardware level requires using the hardware's Performance Monitoring Unit.

When the first saturation point is reached, reclaim will be active. This can be observed by monitoring the pgscan_kswapd and pgsteal_kswapd counters in /proc/vmstat. If this is matched with an increase in major faults or minor faults, then it may be indicative of severe thrashing. In this case, the interleave policy should be considered. An ideal tuning option is to identify if shared memory is the source of the usage. If this is the case, then interleave the shared memory segments. This can be done in some circumstances using numactl or by modifying the application directly.

More severe saturation is observed if the pgscan_direct and pgsteal_direct counters are also increasing. These counters indicate that the application is stalling while memory is being reclaimed. If the application was bound to individual nodes, increasing the number of available nodes will alleviate the pressure. If the application is unbound, it indicates that the WSS of the workload exceeds all available memory. It can only be alleviated by tuning the application to use less memory or increasing the amount of RAM available.
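The two levels of reclaim activity described above can be distinguished with a small Python sketch. The function names and the three labels it returns are hypothetical and chosen for this illustration only.

```python
RECLAIM_COUNTERS = (
    "pgscan_kswapd", "pgsteal_kswapd", "pgscan_direct", "pgsteal_direct",
)


def reclaim_counters(vmstat_text):
    """Extract the reclaim counters of interest from /proc/vmstat-style text."""
    counters = {}
    for line in vmstat_text.splitlines():
        fields = line.split()
        if len(fields) == 2 and fields[0] in RECLAIM_COUNTERS:
            counters[fields[0]] = int(fields[1])
    return counters


def reclaim_level(before, after):
    """Classify reclaim activity between two snapshots.

    Returns "direct" when the application itself stalled on reclaim,
    "background" when only kswapd was active, and "none" otherwise.
    """
    def grew(name):
        return after.get(name, 0) > before.get(name, 0)

    if grew("pgscan_direct") or grew("pgsteal_direct"):
        return "direct"
    if grew("pgscan_kswapd") or grew("pgsteal_kswapd"):
        return "background"
    return "none"
```

A result of "background" corresponds to the first saturation point, while "direct" corresponds to the more severe case where the application stalls.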

A more generalized view of resource pressure for CPU, memory and IO can be measured using the kernel Pressure Stall Information (PSI) feature, which is enabled with the kernel command line parameter psi=1. When enabled, proc files under /proc/pressure show if some or all active tasks were stalled recently contending on a resource. This information is not always available in production. But if the information is available, the memory pressure information may be used to guide whether a deeper analysis is necessary and which resource is the bottleneck.
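The files under /proc/pressure use a simple key=value layout, with one line for "some" tasks and, where available, one line for "full" stalls. A minimal Python sketch for reading them follows. The function names and the threshold are hypothetical and would need to be adapted to the workload.

```python
def parse_pressure(text):
    """Parse the contents of a /proc/pressure/{cpu,memory,io} file.

    Each line looks like:
        some avg10=0.00 avg60=0.00 avg300=0.00 total=0
    """
    result = {}
    for line in text.splitlines():
        fields = line.split()
        if not fields:
            continue
        kind, pairs = fields[0], fields[1:]
        result[kind] = {
            key: float(value)
            for key, value in (pair.split("=", 1) for pair in pairs)
        }
    return result


def under_pressure(parsed, kind="some", window="avg10", threshold=10.0):
    """Return True if the stall percentage exceeds a chosen threshold."""
    return parsed.get(kind, {}).get(window, 0.0) > threshold
```

For example, the text of /proc/pressure/memory can be passed to parse_pressure() and the result checked with under_pressure() before deciding whether a deeper analysis is worthwhile.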

As before, whether to use memory nodes from one socket or two sockets depends on the application. If the individual processes are independent, either socket can be used. Where possible, keep communicating processes on the same socket to maximize memory throughput while minimizing the socket interconnect traffic.

8.5 Other resources #

The analysis of other resources is outside the scope of this paper. However, a common scenario is that an application is IO-bound. A superficial check can be made using the vmstat tool. Check what percentage of CPU time is spent idle combined with the number of processes that are blocked and the values in the bi and bo columns. Similarly, if PSI is enabled, then the IO pressure file will show whether some or all active tasks are losing time because of lack of resources. Further analysis is required to determine if an application is IO-bound rather than CPU- or memory-bound. However, this is a sufficient check to start with.

9 Power management #

Modern CPUs balance power consumption and performance through Performance States (P-States). Low utilization workloads may use lower P-States to conserve power while still achieving acceptable performance. When a CPU is idle, lower power idle states (C-States) can be selected to further conserve power. However, this comes with higher exit latencies when lower power states are selected. It is further complicated by the fact that, if individual cores are idle and running at low power, the additional power can be used to boost the performance of active cores. This means this scenario is not a straightforward balance between power consumption and performance. More complexity is added on the AMD EPYC 7003 and 9004 Series Processors whereby spare power may be used to boost either cores or the Infinity links.

The 4th Generation AMD EPYC Processor provides SenseMI. This technology, among other capabilities, enables CPUs to make adjustments to voltage and frequency depending on the historical state of the CPU. There is a latency penalty when switching P-States, but the AMD EPYC 9004 Series Processor is capable of making fine-grained adjustments to reduce the likelihood that the latency is a bottleneck. On SUSE Linux Enterprise Server, the AMD EPYC 9004 Series Processor uses the acpi_cpufreq driver. This allows P-states to be configured to match requested performance. However, this is limited in terms of the full capabilities of the hardware. It cannot boost the frequency beyond the maximum stated frequencies, and if a target is specified, then the highest frequency below the target will be used. A special case is if the governor is set to performance. In this situation the hardware will use the highest available frequency in an attempt to work quickly and then return to idle.

What should be determined is whether power management is likely to be a factor for a workload. A single-threaded workload that is CPU-bound is likely to run at the highest frequency on a single core. Similarly, a workload that does not communicate heavily with other processes and is mostly CPU-bound is unlikely to experience any side effects because of power management. The exceptions are when load balancing moves tasks away from active CPUs if there is a compute imbalance between NUMA nodes or the machine is heavily overloaded.

The workloads that are most likely to be affected by power management are those that:

synchronously communicate between multiple threads.

idle frequently.

have low CPU utilization overall.

It will be further compounded if the threads are sensitive to wakeup latency.

Power management is critical, not only for power savings, but because power saved from idling inactive cores can be used to boost the performance of active cores. On the other side, low utilization tasks may take longer to complete if the task is not active long enough for the CPU to run at a high frequency. In some cases, problems can be avoided by configuring a workload to use the minimum number of CPUs necessary for its active tasks. Deciding that means monitoring the power state of CPUs.

The P-State and C-State of each CPU can be examined using the turbostat utility. The computer output below shows an example, slightly edited to fit the page, where a workload is busy on CPU 0 and other workloads are idle. A useful exercise is to start a workload and monitor the output of turbostat, paying close attention to CPUs that have moderate utilization and are running at a lower frequency. If the workload is latency-sensitive, it is grounds for either minimizing the number of CPUs available to the workload or configuring power management.

Pac. Die Core CPU Avg_M Busy% Bzy_M TSC_M IRQ POLL C1 C2 POLL% C1% C2%

- - - - 1516 44.16 3432 1198 1.65 340109 90 706 6937 0.00 0.00

0 0 0 0 463 13.24 3498 1200 0.26 2936 0 0 1406 0.00 0.00

0 0 0 192 1 0.04 3499 1200 0.10 27 0 0 21 0.00 0.00

0 0 1 1 3189 91.16 3498 1200 1.52 1792 0 0 18 0.00 0.00

0 0 1 193 317 9.07 3498 1200 0.55 203 0 0 18 0.00 0.00

0 0 2 2 3436 98.22 3498 1200 1.40 1905 0 0 3 0.00 0.00

0 0 2 194 78 2.22 3500 1200 0.92 84 0 0 29 0.00 0.00

If tuning CPU frequency management is appropriate, the following actions can be taken to set the management policy to performance using the cpupower utility:

epyc:~# cpupower frequency-set -g performance

Setting cpu: 0

Setting cpu: 1

Setting cpu: 2

...

Persisting it across reboots can be done via a local init script, via udev or via a one-shot systemd service file if necessary. Note that turbostat will still show that idling CPUs use a low frequency. The impact of the policy is that the highest P-State will be used as soon as possible when the CPU is active. In some cases, a latency bottleneck will occur because of a CPU exiting idle. If this is identified on the AMD EPYC 9004 Series Processor, restrict the C-state by specifying processor.max_cstate=2 on the kernel command line if deeper C-states exist. This will prevent CPUs from entering deeper C-states. The availability of C-states can be determined with cpupower idle-info. It is expected on the AMD EPYC 9004 Series Processor that the exit latency from C1 is very low. But allowing C2 reduces interference from the idle loop injecting micro-operations into the pipeline and should be the best state overall. It is also possible to set the max idle state on individual cores using cpupower idle-set. If SMT is enabled, the idle state should be set on both siblings.
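The idle states that a CPU exposes, together with their exit latencies, can also be read from the cpuidle directory in sysfs. The following Python sketch is an illustration only: the function name is hypothetical, and it assumes the standard sysfs layout in which each state directory contains a name file and a latency file (exit latency in microseconds).

```python
import os


def idle_states(cpu=0, sysfs_root="/sys/devices/system/cpu"):
    """List (index, name, exit latency in microseconds) for one CPU.

    Assumes the standard layout
    <sysfs_root>/cpu<N>/cpuidle/state<M>/{name,latency}.
    """
    base = os.path.join(sysfs_root, f"cpu{cpu}", "cpuidle")
    states = []
    for entry in os.listdir(base):
        if not entry.startswith("state"):
            continue
        state_dir = os.path.join(base, entry)
        with open(os.path.join(state_dir, "name")) as handle:
            name = handle.read().strip()
        with open(os.path.join(state_dir, "latency")) as handle:
            latency = int(handle.read().strip())
        states.append((int(entry[len("state"):]), name, latency))
    # Sort numerically by state index so that state10 follows state9.
    return sorted(states)
```

Comparing the reported exit latencies with the latency tolerance of the workload can help decide whether restricting the deepest C-state is worth evaluating.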

10 Security mitigation #

On occasion, a security fix is applied to a distribution that has a performance impact. The most notable examples are Meltdown and multiple variants of Spectre, but others such as Foreshadow (L1TF) are also included. The AMD EPYC 9004 Series Processor is immune to the Meltdown variant and page table isolation is never active. However, it is vulnerable to a subset of Spectre variants although retbleed is a notable exception. The following table lists known security vulnerabilities and whether they affect the 4th Generation AMD EPYC Processor. In addition, it specifies which mitigations are enabled by default for SUSE Linux Enterprise Server 15 SP4.

| Vulnerability | Affected | Mitigations |
|---|---|---|
| ITLB Multihit | No | N/A |
| L1TF | No | N/A |
| MDS | No | N/A |
| Meltdown | No | N/A |
| MMIO Stale Data | No | N/A |
| Retbleed | No | N/A |
| Speculative Store Bypass | Yes | prctl and seccomp policy |
| Spectre v1 | Yes | usercopy/swapgs barriers and __user pointer sanitization |
| Spectre v2 | Yes | Retpoline, RSB filling, and conditional IBPB, IBRS_FW, and STIBP |
| SRBDS | No | N/A |
| TSX Async Abort | No | N/A |

If it can be guaranteed that the server is in a trusted environment running only known code that is not malicious, the mitigations=off parameter can be specified on the kernel command line. This option disables all security mitigations and may improve performance in some cases. However, at the time of writing and in most cases, the gain on an AMD EPYC 9004 Series Processor is marginal when compared to other CPUs. Evaluate carefully whether the gain is worth the risk and if unsure, leave the mitigations enabled.
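Before and after changing mitigation settings, the state reported by the running kernel can be inspected under /sys/devices/system/cpu/vulnerabilities, where each file holds a one-line status. The following Python sketch reads that directory. The function names are hypothetical.

```python
import os


def mitigation_status(root="/sys/devices/system/cpu/vulnerabilities"):
    """Return {vulnerability name: status line} as reported by the kernel."""
    status = {}
    for name in sorted(os.listdir(root)):
        with open(os.path.join(root, name)) as handle:
            status[name] = handle.read().strip()
    return status


def affected_only(status):
    """Keep only entries that the kernel does not report as 'Not affected'."""
    return {
        name: line for name, line in status.items() if line != "Not affected"
    }
```

The output of affected_only(mitigation_status()) can be compared with the table above to confirm which mitigations are active on a given system.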

11 Hardware-based profiling #

The AMD EPYC 9004 Series Processor has extensive Performance Monitoring Unit (PMU) capabilities. Advanced monitoring of a workload can be conducted via the perf tool. The command supports a range of hardware events including cycles, L1 cache access/misses, TLB access/misses, retired branch instructions and mispredicted branches. To identify what subsystem may be worth tuning in the OS, the most useful invocation is perf record -a -e cycles sleep 30. This captures 30 seconds of data for the entire system. You can also call perf record -e cycles followed by a command to gather a profile of that workload. Specific information on the OS can be gathered through tracepoints or creating probe points with perf or trace-cmd. But the details on how to conduct such analyses are beyond the scope of this paper.

12 Compiler selection #

SUSE Linux Enterprise ships with multiple versions of GCC. SUSE Linux Enterprise 15 service packs, including SP4, ship with GCC 7 as the system compiler, which at the time of writing is GCC 7.5.0 with the package version 7-3.9.1. The intention is to avoid unintended consequences when porting code that may affect the building and operation of applications. The GCC 7 development originally started in 2016, with a branch created in 2017 and GCC 7.5 released in 2019. This means that the system compiler has no awareness of the AMD EPYC 7002 or later Series processors.

Fortunately, the add-on Developer Tools Module includes additional compilers with the latest version currently based on GCC 11.2.1. This compiler is capable of generating optimized code targeted at the 3rd Generation AMD EPYC Processor using the znver3 target. It also provides additional support for OpenMP 5.0. Unlike the system compiler, the major version of GCC shipped with the Developer Tools Module can change during the lifetime of the product. It is expected that GCC 12 will be included in future releases for generating optimized code for the 4th Generation AMD EPYC Processor. Unfortunately, at the time of writing, no version of GCC optimized specifically for the AMD EPYC 9004 Series Processor is available.

The OS packages are built against a generic target. However, where applications and benchmarks can be rebuilt from source, the minimum option should be -march=znver3 for GCC 11 and later versions of GCC.
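Because the available compiler version can change over the product lifetime, a build script can probe which -march value the installed compiler accepts instead of hard-coding one. The following Python sketch shows one way to do that. The function names, the candidate order and the generic x86-64 fallback are assumptions of this sketch, not recommendations from this guide.

```python
import subprocess


def gcc_accepts(march, compiler="gcc"):
    """Return True if the compiler accepts -march=<march>.

    An empty C translation unit is read from standard input and only
    syntax-checked, so no output file is produced.
    """
    try:
        completed = subprocess.run(
            [compiler, f"-march={march}", "-x", "c", "-fsyntax-only", "-"],
            input="",
            capture_output=True,
            text=True,
        )
    except FileNotFoundError:
        return False
    return completed.returncode == 0


def pick_march(candidates=("znver4", "znver3"), accepts=gcc_accepts,
               fallback="x86-64"):
    """Return the first candidate the compiler accepts, else the fallback."""
    for march in candidates:
        if accepts(march):
            return march
    return fallback
```

With the Developer Tools Module installed, the check can be pointed at that compiler, for example pick_march(accepts=lambda m: gcc_accepts(m, compiler="gcc-11")).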

Further information on how to install the Developer Tools Module and how to build optimized versions of applications can be found in the guide Advanced optimization and new capabilities of GCC 11.

13 Candidate workloads #

The workloads that will benefit most from the 4th Generation AMD EPYC Processor architecture are those that can be parallelized and are either memory or IO-bound. This is particularly true for workloads that are “NUMA friendly”. They can be parallelized, and each thread can operate independently for most of the workload's lifetime. For memory-bound workloads, the primary benefit will be taking advantage of the high bandwidth available on each channel. For IO-bound workloads, the primary benefit will be realized when there are multiple storage devices, each of which is connected to the node local to a task issuing IO.

13.1 Test setup #

The following sections will demonstrate how an OpenMP and MPI workload can be configured and tuned on an AMD EPYC 9004 Series Processor reference platform. The system has two processors, each with 96 cores and SMT enabled for a total of 192 cores (384 logical CPUs). The peak bandwidth available to the machine depends on the type of DIMMs installed and how the DIMM slots are populated. Note that the peak theoretical transfer speed is rarely reached in practice, given that it can be affected by the mix of reads/writes and the location and temporal proximity of memory locations accessed.

| | |
|---|---|
| CPU | 2x AMD EPYC 9654 |
| Platform | AMD Engineering Sample Platform |
| Drive | Samsung SSD 983 DCT 960GB |
| OS | SUSE Linux Enterprise Server 15 SP4 |
| Memory Type | 24x 32GB DDR5 |
| Memory Interleaving | Channel |
| Memory Speed | 4800 MT/sec |
| Kernel command line | |

13.2 Test workload: STREAM #

STREAM is a memory bandwidth benchmark created by Dr. John D. McCalpin from the University of Virginia (for more information, see https://www.cs.virginia.edu/stream/). It can be used to measure bandwidth of each cache level and bandwidth to main memory while calculating four basic vector operations. Each operation can exhibit different throughputs to main memory depending on the locations and type of access.

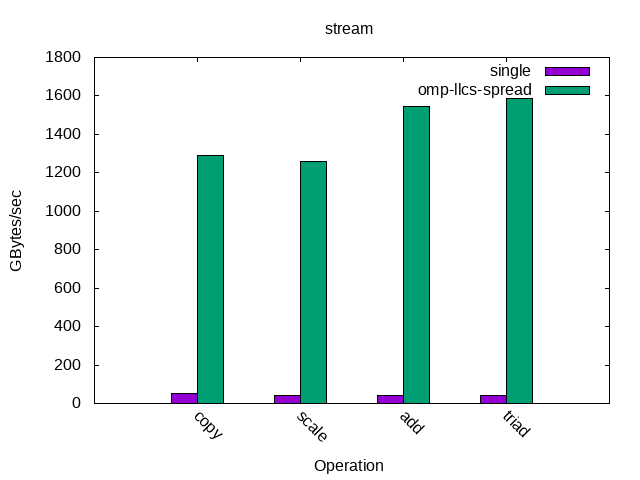

The benchmark was configured to run both single-threaded and parallelized with OpenMP to take advantage of multiple memory controllers. The array of elements for the benchmark was set at 268,435,456 elements at compile time so that each array was 2048MB in size for a total memory footprint of approximately 6144 MB. The size was selected in line with the recommendation from STREAM that the array sizes be at least 4 times the total size of L3 cache available in the system. Pay special attention to the exact size of the L3 cache if V-Cache is present. An array-size offset was used so that the separate arrays for each parallelized thread would not share a Transparent Huge Page. The reason is that NUMA balancing may choose to migrate shared pages leading to some distortion of the results.
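The sizing arithmetic above can be checked with a few lines of Python. This is a sketch under stated assumptions: STREAM's default element type is an 8-byte double, the benchmark uses three arrays, and the L3 size passed to the helper is a parameter the reader must supply for their own system. Sizes are computed in binary megabytes, which matches the MB figures quoted above.

```python
BYTES_PER_ELEMENT = 8   # STREAM's default element type is a double
STREAM_ARRAYS = 3       # the benchmark operates on three arrays


def array_size_mb(elements):
    """Size of one STREAM array in MB (2**20 bytes)."""
    return elements * BYTES_PER_ELEMENT / 2**20


def total_footprint_mb(elements):
    """Approximate footprint of all STREAM arrays in MB."""
    return STREAM_ARRAYS * array_size_mb(elements)


def minimum_elements(total_l3_bytes, factor=4):
    """Smallest element count so each array is at least factor x total L3."""
    required_bytes = factor * total_l3_bytes
    # Ceiling division so the array is never smaller than required.
    return -(-required_bytes // BYTES_PER_ELEMENT)


print(array_size_mb(268_435_456))       # 2048.0
print(total_footprint_mb(268_435_456))  # 6144.0
```

The minimum_elements() helper takes the total L3 size as input, which is why the exact cache size matters when V-Cache is present.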

| | |
|---|---|
| Compiler | gcc-11 (SUSE Linux) 11.2.1 |
| Compiler flags | -Ofast -march=znver3 -mcmodel=medium -DOFFSET=512 |
| OpenMP compiler flag | -fopenmp |
| OpenMP environment variables | OMP_PROC_BIND=SPREAD OMP_NUM_THREADS=24 |

The -march=znver3 option reflects the compiler available in SUSE Linux Enterprise 15 SP4 at the time of writing. Check whether a later GCC version that supports -march=znver4 is available in the Developer Tools Module. The number of OpenMP threads was selected to have at least one thread running for every memory channel by having one thread per L3 cache available. The OMP_PROC_BIND parameter spreads the threads such that one thread is bound to each available dedicated L3 cache to maximize available bandwidth. This can be verified using perf, as illustrated below with slight editing for formatting and clarity.

epyc:~ # perf record -e sched:sched_migrate_task ./stream

epyc:~ # perf script

...

stream-nnn x: sched:sched_migrate_task: comm=stream pid=494780 prio=120 orig_cpu=0 dest_cpu=8

stream-nnn x: sched:sched_migrate_task: comm=stream pid=494781 prio=120 orig_cpu=0 dest_cpu=16

stream-nnn x: sched:sched_migrate_task: comm=stream pid=494782 prio=120 orig_cpu=0 dest_cpu=24

stream-nnn x: sched:sched_migrate_task: comm=stream pid=494783 prio=120 orig_cpu=0 dest_cpu=32

stream-nnn x: sched:sched_migrate_task: comm=stream pid=494784 prio=120 orig_cpu=0 dest_cpu=40

stream-nnn x: sched:sched_migrate_task: comm=stream pid=494785 prio=120 orig_cpu=0 dest_cpu=48

stream-nnn x: sched:sched_migrate_task: comm=stream pid=494786 prio=120 orig_cpu=0 dest_cpu=56

...

Several options were considered for the test system that were unnecessary for STREAM running on the AMD EPYC 9004 Series Processor but may be useful in other situations. STREAM performance can be limited if a load/store instruction stalls to fetch data. CPUs may automatically prefetch data based on historical behavior but it is not guaranteed. In limited cases, depending on the CPU and workload, this may be addressed by specifying -fprefetch-loop-arrays and, depending on whether the workload is store-intensive, -mprefetchwt1. In the test system using AMD EPYC 9004 Series Processors, explicit prefetching did not help and was omitted. This is because an explicitly scheduled prefetch may disable a CPU's predictive algorithms and degrade performance.

Similarly, for some workloads branch mispredictions can be a major problem, and in some cases branch mispredictions can be offset against I-Cache pressure by specifying -funroll-loops. In the case of STREAM on the test system, the CPU accurately predicted the branches, rendering the unrolling of loops unnecessary.

For math-intensive workloads it can be beneficial to link the application with -lmvec depending on the application. In the case of STREAM, the workload did not use significant math-based operations and so this option was not used.

Some styles of code blocks and loops can also be optimized to use vectored operations by specifying -ftree-vectorize and explicitly adding support for CPU features such as -mavx2. In all cases, STREAM does not benefit as its operations are very basic. But it should be considered on an application-by-application basis and when building support libraries such as numerical libraries. In all cases, experimentation is recommended but caution advised. This holds particularly true when considering options like prefetch that may have been advisable on much older CPUs or completely different workloads. Such options are not universally beneficial or always suitable for modern CPUs such as the AMD EPYC 9004 Series Processors.

In the case of STREAM running on the AMD EPYC 9004 Series Processor, it was sufficient to enable -Ofast. This includes the -O3 optimizations to enable vectorization. In addition, it gives some leeway for optimizations that increase the code size with additional options for fast math that may not be standards-compliant.

For OpenMP, the SPREAD option was used to spread the load across L3 caches. OpenMP has a variety of different placement options if manually tuning placement. But careful attention should be paid to OMP_PLACES, given the importance of the L3 Cache topology in AMD EPYC 9004 Series Processors, if the operating system does not automatically place tasks appropriately. At the time of writing, it is not possible to specify l3cache as a place similar to what MPI has. In this case, the topology will need to be examined either with library support such as hwloc, directly via the sysfs or manually. While it is possible to guess via the CPUID, it is not recommended as CPUs may be offlined or the enumeration may vary between platforms because of BIOS implementations. An example specification of places based on L3 cache for the test system is:

{0:8,192:8}, {8:8,200:8}, {16:8,208:8}, {24:8,216:8}, {32:8,224:8}, {40:8,232:8},

{48:8,240:8}, {56:8,248:8}, {64:8,256:8}, {72:8,264:8}, {80:8,272:8}, {88:8,280:8},

{96:8,288:8}, {104:8,296:8}, {112:8,304:8}, {120:8,312:8}, {128:8,320:8}, {136:8,328:8},

{144:8,336:8}, {152:8,344:8}, {160:8,352:8}, {168:8,360:8}, {176:8,368:8}, {184:8,376:8}

Figure 2, “STREAM Bandwidth, Single Threaded and Parallelized” shows the reported bandwidth for the single-threaded and parallelized cases. The single-threaded bandwidth for the basic Copy vector operation on a single core was 50.3 GB/sec. This is higher than the theoretical maximum of a single DIMM, but the IO die may interleave accesses, and caching effects and prefetch still apply. The total throughput for each parallel operation ranged from 1259 GB/sec to 1587 GB/sec depending on the type of operation and how efficiently memory bandwidth was used. This is very roughly scaling with the number of memory channels available on the machine with caching effects accounting for results exceeding theoretical maximums.

Higher STREAM scores can be reported by reducing the array sizes so that cache is partially used, with the maximum score requiring that each thread's memory footprint fits inside the L1 cache. Additionally, it is possible to achieve results closer to the theoretical maximum by manual optimization of the STREAM benchmark using vectored instructions and explicit scheduling of loads and stores. The purpose of this configuration was to illustrate the impact of properly binding a workload that can be fully parallelized with data-independent threads.
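The L3-based places specification shown earlier in this section follows a regular pattern and can be generated instead of typed by hand. The following Python sketch reproduces that pattern. The function name is hypothetical, and it assumes that the CPUs sharing an L3 cache are numbered contiguously and that SMT siblings are offset by a fixed amount, as in the listing for the test system. On other platforms, verify the numbering against sysfs or hwloc before relying on it.

```python
def l3_places(l3_count=24, cpus_per_l3=8, smt_offset=192):
    """Build an OMP_PLACES string with one place per L3 cache.

    Each place lists the first hardware threads of the cores sharing an
    L3 cache plus their SMT siblings, using the start:length notation.
    The defaults mirror the test system described in this section.
    """
    places = []
    for index in range(l3_count):
        first = index * cpus_per_l3
        sibling = first + smt_offset
        places.append(f"{{{first}:{cpus_per_l3},{sibling}:{cpus_per_l3}}}")
    return ",".join(places)


print(l3_places(l3_count=2))  # {0:8,192:8},{8:8,200:8}
```

The resulting string can be exported as OMP_PLACES before starting the workload, in combination with OMP_PROC_BIND.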

13.3 Test workload: NASA Parallel Benchmark #

NASA Parallel Benchmark (NPB) is a small set of compute-intensive kernels designed to evaluate the performance of supercomputers. They are small compute kernels derived from Computational Fluid Dynamics (CFD) applications. The problem size can be adjusted for different memory sizes. Reference implementations exist for both MPI and OpenMP. This setup will focus on the MPI reference implementation.

While each application behaves differently, one common characteristic is that the workload is very context-switch intensive, barriers frequently yield the CPU to other tasks and the lifetime of individual processes can be very short-lived. The following paragraphs detail the tuning selected for this workload.

The most basic step is setting the CPU governor to “performance” although it is not mandatory. This can address issues with short-lived or mobile tasks failing to run long enough for a higher P-State to be selected even though the workload is very throughput-sensitive. The migration cost parameter is set to reduce the frequency with which the load balancer will move an individual task. The minimum granularity is adjusted to reduce overscheduling effects.

Depending on the computational kernel used, the workload may require a power-of-two number or a square number of processes to be used. However, note that using all available CPUs can mean that the application can contend with itself for CPU time. Furthermore, as IO may be issued to shared memory backed by disk, there are system threads that also need CPU time. Finally, if there is CPU contention, MPI tasks can be stalled waiting on an available CPU and OpenMPI may yield tasks prematurely if it detects there are more MPI tasks than CPUs available. These factors should be carefully considered when tuning for parallelized workloads in general and MPI workloads in particular.

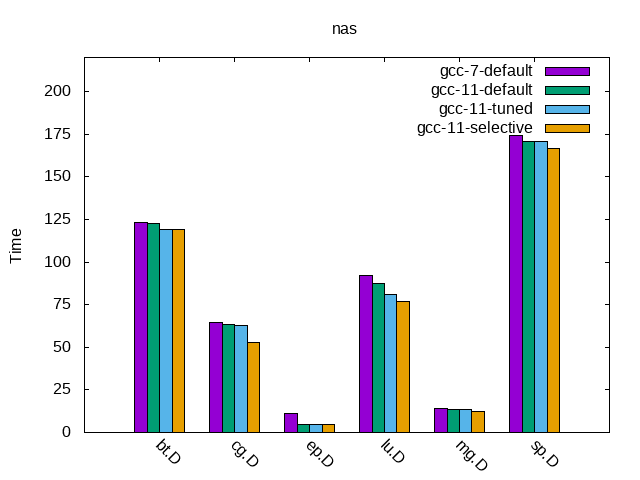

In the specific case of testing NPB on the System Under Test, there was usually a limited advantage to limiting the number of CPUs used. The Embarrassingly Parallel (EP) load in particular benefits from using all available CPUs. Hence, the default configuration used all available CPUs (384) because it was a sensible starting point. However, this is not universally true. Using perf, it was found that some workloads are memory-bound and do not benefit from a high degree of parallelization. In addition, for the final configuration, some workloads were parallelized to have one task per L3 cache in the system to maximize cache usage. The exception was the Scalar Pentadiagonal (SP) workload which was both memory-bound and benefited from using all available CPUs. As the number of cores and the number of populated memory channels can vary between systems, the tuning parameters used for this test may not be universally true for all AMD EPYC platforms. This highlights that there is no universally good choice for optimizing a workload for a platform. Thus, experimentation and validation of tuning parameters is vital.

The basic compilation flags simply turned on all safe optimizations. The tuned flags used -Ofast which can be unsafe for some mathematical workloads but generated the correct output for NPB. The other flags used the optimal instructions available on the distribution compiler and vectorized some operations. GCC 11 is stricter in terms of matching types in Fortran. Depending on the version of NPB used, it may be necessary to specify the -fallow-argument-mismatch or -fallow-invalid-boz options to compile unless the source code is modified.

As NPB uses shared files, an XFS partition was used for the temporary files. It is, however, only used for mapping shared files and is not a critical path for the benchmark, so no IO tuning is necessary. In some cases, with MPI applications, it will be possible to use a tmpfs partition for OpenMPI. This avoids unnecessary IO assuming the increased physical memory usage does not cause the application to be paged out.

| Compiler | gcc-11 (SUSE Linux) 11.2.1 |
| OpenMPI | openmpi4-4.1.1-150400.1.11.x86_64 |
| Default compiler flags | -m64 -O3 -mcmodel=large |
| Default number processes | 384 |
| Selective number processes | bt=384 cg=192 ep=384 lu=192 mg=192 sp=192 |
| Fortran compiler flags | -fallow-argument-mismatch -fallow-invalid-boz |
| Tuned compiler flags | -Ofast -march=znver3 -mtune=znver3 -ftree-vectorize |
| CPU governor performance | cpupower frequency-set -g performance |
| mpirun parameters | -mca btl ^openib,udapl -np 64 --bind-to l3cache:overload-allowed |
| mpirun environment | TMPDIR=/xfs-data-partition |
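As an illustration of how the settings above might be combined, the following sketch prints one mpirun command line per kernel using the selective process counts. It assumes that the same binding options are applied to every kernel and that binaries are named `./bin/<kernel>.D.x`; both are assumptions that depend on the local NPB build, and `npb_commands` is a hypothetical helper.

```shell
#!/bin/sh
# Per-kernel process counts, copied from the "Selective number processes" row.
SELECTIVE="bt=384 cg=192 ep=384 lu=192 mg=192 sp=192"

# Print (do not run) one mpirun command line per kernel.
# Assumption: binaries are named ./bin/<kernel>.D.x; adjust to the local build.
npb_commands() {
    for pair in $SELECTIVE; do
        kernel=${pair%%=*}   # text before '='
        np=${pair#*=}        # text after '='
        echo "TMPDIR=/xfs-data-partition mpirun -mca btl ^openib,udapl -np $np --bind-to l3cache:overload-allowed ./bin/$kernel.D.x"
    done
}

npb_commands
```

Printing the commands first makes it easy to review the process counts before launching a long benchmark run.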

Figure 3, “NAS MPI Results” shows the time, as reported by the benchmark, for each of the kernels to complete.

The gcc-7-default test used the system compiler, all available CPUs, basic compilation options and the performance governor. The second test gcc-11-default used an alternative compiler. gcc-11-tuned used additional compilation options, and bound tasks to L3 caches gaining between 1.89% and 43.4% performance on average relative to gcc-7-default. Even taking into account that ep.D is an outlier because it is an embarrassingly parallel workload, the range of improvements is between 1.89% and 11.65%. The final test selective used processes that either used all CPUs, avoided heavy overloading or limited processes to one per L3 cache, showing additional gains between 0.7% and 15.56% depending on the computational kernel.

In some cases, it will be necessary to compile an application that can run on different CPUs. In such cases, -march=znver3 may not be suitable if it generates binaries that are incompatible with other vendors. Instead, it is possible to specify the ISA-specific options that are cross-compatible with many x86-based CPUs such as -mavx2, -mfma, -msse2 or -msse4a while favoring optimal code generation for AMD EPYC 9004 Series Processors with -mtune=znver3. This can be used to strike a balance between excellent performance on a single CPU and great performance on multiple CPUs.
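The difference between the two approaches can be sketched as two flag sets. The optimization level, the selection of -mavx2 and -mfma, and the file names are illustrative assumptions; the right set of ISA options depends on the oldest CPU the binary must support.

```shell
#!/bin/sh
# Binary that only runs on this platform: -march/-mtune as in the tuned build.
NATIVE_CFLAGS="-O3 -march=znver3 -mtune=znver3"

# Binary that must also run on other x86-64 CPUs with AVX2 and FMA support:
# select ISA extensions explicitly, keep instruction scheduling tuned for EPYC.
PORTABLE_CFLAGS="-O3 -mavx2 -mfma -mtune=znver3"

# Hypothetical source and output names, printed for illustration only.
echo "gcc $NATIVE_CFLAGS -o app-native app.c"
echo "gcc $PORTABLE_CFLAGS -o app-portable app.c"
```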

14 Tuning AMD EPYC 9004 Series Dense #

As the AMD EPYC 9004 Series Classic and AMD EPYC 9004 Series Dense are ISA-compatible, no code tuning or compiler setting changes should be necessary. For Cloud environments, partitioning or any binding of Virtual CPUs to Physical CPUs may need to be adjusted to account for the increased number of cores. The additional cores may also allow additional containers or virtual machines to be hosted on the same physical machine without CPU contention. Similarly, the degree of parallelization for HPC workloads may need to be adjusted. In cases where the workload is tuned based on the number of CCDs, adjustments may be necessary for the increased number of CCDs. The exception is cases where the workload already hits scaling limits. While tuning based on the different number of CCXs is possible, it should only be necessary for applications with very strict latency requirements. As the size of the cache is halved, partitioning based on cache sizes may also need to be adjusted. In some cases, where workloads are tuned based on the output of tools like hwloc, partitioning may adjust automatically, but any static partitioning should be re-examined.
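One way to avoid static partitioning is to derive the number of L3 cache domains at run time. The following is a minimal sketch that reads the Linux sysfs cache topology rather than calling hwloc. It assumes that cache index3 corresponds to the L3 cache, which is typical on x86 but should be confirmed via the adjacent `level` file; `count_l3_domains` is a hypothetical helper.

```shell
#!/bin/sh
# Count distinct L3 cache domains by collecting the set of CPUs sharing each L3.
# Assumption: cache/index3 is the L3 cache (check cache/index3/level to confirm).
# The optional argument overrides the sysfs root, which is useful for testing.
count_l3_domains() {
    root=${1:-/sys/devices/system/cpu}
    cat "$root"/cpu[0-9]*/cache/index3/shared_cpu_list 2>/dev/null \
        | sort -u | wc -l | tr -d ' '
}

# Derive the MPI process count at run time instead of hard-coding it.
NP=$(count_l3_domains)
echo "one task per L3 cache: -np $NP"
```

A script using this value adapts when moved between Classic and Dense systems, whereas a hard-coded count has to be revisited.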

When configuring workloads for either the AMD EPYC 9004 Series Classic or the AMD EPYC 9004 Series Dense, the most important task is to set expectations. While super-linear scaling is possible, it should not be expected. It may be possible to achieve super-linear scaling in Cloud Environments for the number of instances hosted without performance loss if individual containers or virtual machines are not utilizing 100% of CPU. However, it should be planned carefully and tested. This would be particularly true in cases where multiple instances are hosted that have different times of day or year for active phases. The normal expectation is a best case of 33% gain for CPU-intensive workloads because of the increased number of cores. But sub-linear scaling is common because of resource contention. Contention between SMT siblings, memory bandwidth, memory availability, memory interconnects, thread communication overhead or peripheral devices may prevent perfect linear scaling even for perfectly parallelized applications. Similarly, not all applications can scale perfectly. It is possible for performance to plateau and even regress as the degree of parallelization increases. Testing for parallelized workloads using NAS indicated that actual gains were between 4% and 21% for most workloads that did not have an inherent scaling limitation.
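The 33% figure follows directly from the per-socket core counts given in the overview (96 for Classic, 128 for Dense). As a quick check, using a hypothetical helper and integer arithmetic:

```shell
#!/bin/sh
# Best-case gain, in whole percent, from an increased core count.
core_gain_pct() {
    old=$1
    new=$2
    echo $(( (new - old) * 100 / old ))
}

core_gain_pct 96 128    # Classic (96 cores) to Dense (128 cores); prints 33
```

This is an upper bound for perfectly parallel, CPU-bound work; the measured NAS gains quoted above are lower.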

15 Using AMD EPYC 9004 Series Processors for virtualization #

Running Virtual Machines (VMs) has some aspects in common with running “regular” tasks on a host operating system. Therefore, most of the tuning advice described so far in this document is valid and applicable to this section too.

However, virtualization workloads pose their own specific challenges. Some special considerations are needed to arrive at a better tailored and more effective set of tuning advice for cases where a server is used only as a virtualization host. This is especially relevant for large systems, such as those based on AMD EPYC 9004 Series Processors.

This is because:

VMs typically run longer, and consume more memory, than most other “regular” OS processes.

VMs can be configured to behave either as NUMA-aware or NUMA-unaware “workloads”.

In fact, VMs often run for hours, days, or even months, without being terminated or restarted. Therefore, it is almost never acceptable to pay the price of suboptimal resource partitioning and allocation, even when there is the expectation that things will be better next time. For example, it is always desirable that vCPUs run close to the memory that they are accessing. For reasonably big NUMA-aware VMs, this happens only with proper mapping of the virtual NUMA nodes of the guest to physical NUMA nodes on the host. For smaller, NUMA-unaware VMs, that means allocating all their memory on the smallest possible number of host NUMA nodes, and making their vCPUs run on the pCPUs of those nodes as much as possible.

Also, poor mapping of virtual machine resources (virtual CPUs and memory, but also IO) on the host topology induces performance degradation to everything that runs inside the virtual machine – and potentially even to other components of the system.

Regarding NUMA-awareness, a VM is said to be NUMA-aware if a (virtual) NUMA topology is defined and exposed to the VM itself and if the OS that the VM runs (guest OS) is also NUMA-aware. Conversely, a VM is called NUMA-unaware if either no (virtual) NUMA topology is exposed or the guest OS is not NUMA-aware.

VMs that are large enough (in terms of amount of memory and number of virtual CPUs) to span multiple host NUMA nodes benefit from being configured as NUMA-aware VMs. However, even for small and NUMA-unaware VMs, wise placement of their memory on the host nodes, and effective mapping of their virtual CPUs (vCPUs) on the host physical CPUs (pCPUs) is key for achieving good and consistent performance.

This second half of the present document focuses on tuning a system where CPU and memory intensive workloads run inside VMs. We will explain how to configure and tune both the host and the VMs, in a way that performance comparable to that of the host can be achieved.

Both the Kernel-based Virtual Machine (KVM) and the Xen-Project hypervisor (Xen), as available in SUSE Linux Enterprise Server 15 SP4, provide mechanisms to enact this kind of resource partitioning and allocation.

KVM only supports one type of VM – a fully hardware-based virtual machine (HVM). Under Xen, VMs can be paravirtualized (PV) or hardware virtualized machines (HVM).

Xen HVM guests with paravirtualized interfaces enabled (called PVHVM, or HVM) are very similar to KVM VMs, which are based on hardware virtualization but also employ paravirtualized IO (VirtIO). In this document, we always refer to Xen VMs of the (PV)HVM type.

16 Resources allocation and tuning of the host #

No details are given, here, about how to install and configure a system so that it becomes a suitable virtualization host. For similar instructions, refer to the SUSE documentation at SUSE Linux Enterprise Server 15 SP4 Virtualization Guide: Installation of Virtualization Components.

The same applies to configuring things such as networking and storage, either for the host or for the VMs. For similar instructions, refer to suitable chapters of OS and virtualization documentation and manuals. As an example, to know how to assign network interfaces (or ports) to one or more VMs, for improved network throughput, refer to SUSE Linux Enterprise Server 15 SP4 Virtualization Guide: Assigning a Host PCI Device to a VM Guest.

16.1 Allocating resources to the host OS #

Even if the main purpose of a server is “limited” to running VMs, some activities will be carried out on the host OS. In fact, in both Xen and KVM, the host OS is at least responsible for helping with the IO of the VMs. It may, therefore, be necessary to make sure that the host OS has some resources (namely, CPUs and memory) exclusively assigned to itself.

On KVM, the host OS is the SUSE Linux Enterprise distribution installed on the server, which then loads the hypervisor kernel modules. On Xen, the host OS is still a SUSE Linux Enterprise distribution, but it runs inside what is, to all intents and purposes, a (special) Virtual Machine (called Domain 0, or Dom0).

In the absence of any specific requirements involving host resources, a good rule of thumb suggests that 5 to 10 percent of the physical RAM should be reserved to the host OS. On KVM, increase that quota in case the plan is to run many (for example hundreds) of VMs. On Xen, it is okay to always give dom0 not more than a few gigabytes of memory. This is especially the case when planning to take advantage of disaggregation (see https://wiki.xenproject.org/wiki/Dom0_Disaggregation).

In terms of CPUs, depending on the workload, it may be fine to use all the physical CPUs for the VMs (and this is in fact how the benchmarks in the experimental section of this guide have been conducted). On the other hand, if it is necessary to keep some CPUs for the host OS / Dom0, we advise reserving at least one physical core on each NUMA node. This is especially true for a system like the one shown in Figure 1, “AMD EPYC 9004 Series Classic Processor Topology”. In fact, host OS activities are mostly related to performing IO for VMs and it is beneficial for performance if the kernel threads that handle devices can run on the nodes to which the devices themselves are attached, which is both NUMA nodes, in our case.

System administrators need to be able to reach and log in to the system, to monitor, manage and troubleshoot it. Therefore, it is possible that even more resources need to be assigned to the host OS. This would be for making sure that management tools (for example, the SSH daemon) can be reached, and that the hypervisor's toolstack (for example, libvirt) can run without too much contention.

16.1.1 Reserving CPUs and memory for the host on KVM #

When using KVM, sparing, for example, 24 cores (that is, one full core for each CCX on both NUMA nodes) and 64 GB of RAM for the host OS is done by no longer creating VMs once the total number of vCPUs of all VMs has reached 336 (as each core has 2 threads) and the total cumulative amount of allocated RAM has reached 690 GB.
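The vCPU limit is the total number of hardware threads minus the threads belonging to the reserved cores. A minimal sketch of that arithmetic, using a hypothetical helper and the 384 CPUs of the System Under Test:

```shell
#!/bin/sh
# vCPU budget left for VMs after reserving whole cores for the host OS.
vcpu_budget() {
    total_threads=$1      # hardware threads in the system
    reserved_cores=$2     # physical cores kept for the host
    threads_per_core=$3   # 2 when SMT is enabled
    echo $(( total_threads - reserved_cores * threads_per_core ))
}

vcpu_budget 384 24 2    # prints 336
```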

To make sure that these CPUs are not used by the virtual machines and are available to the host, virtual CPU pinning (discussed later in this document) can be used. There are also other ways to enforce this, for example with cgroups, or by means of the isolcpus boot parameter, but we will not cover them in detail.

16.1.2 Reserving CPUs and memory for the host on Xen #

When using Xen, Dom0 resource allocation needs to be done explicitly at system boot time. For example, assigning 24 physical cores and 64 GB of RAM to it is done by specifying the following additional parameters on the hypervisor boot command line (for example, by editing /etc/default/grub, and then updating the boot loader):

dom0_mem=65536M,max:65536M dom0_max_vcpus=48

The number of vCPUs is 48 because we want Dom0 to have 24 physical cores, and the AMD EPYC 9004 Series Processor has Symmetric multithreading (SMT) with two threads per core. 65536M of memory (that is, 64 GB) is specified both as the current and as the maximum value, to prevent Dom0 from using ballooning (see https://wiki.xenproject.org/wiki/Tuning_Xen_for_Performance#Memory).
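The parameter values can be derived from the desired number of cores and amount of memory. The following is a minimal sketch; `dom0_boot_args` is a hypothetical helper, and the note about `GRUB_CMDLINE_XEN_DEFAULT` and `grub2-mkconfig` assumes the usual GRUB 2 setup and should be checked against the installed system.

```shell
#!/bin/sh
# Build the Xen boot parameters for Dom0 from cores, threads per core and GB.
dom0_boot_args() {
    cores=$1
    threads_per_core=$2
    mem_gb=$3
    mem_mb=$(( mem_gb * 1024 ))
    echo "dom0_mem=${mem_mb}M,max:${mem_mb}M dom0_max_vcpus=$(( cores * threads_per_core ))"
}

dom0_boot_args 24 2 64
# prints: dom0_mem=65536M,max:65536M dom0_max_vcpus=48
#
# Assumption: the string is appended to GRUB_CMDLINE_XEN_DEFAULT in
# /etc/default/grub, followed by: grub2-mkconfig -o /boot/grub2/grub.cfg
```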

Making sure that Dom0 vCPUs run on specific pCPUs is not strictly necessary. If wanted, it can be enforced by acting on the Xen scheduler after Dom0 has booted (as there is currently no mechanism to set this up via Xen boot time parameters). If using the xl toolstack, the command is:

xl vcpu-pin 0 <vcpu-ID> <pcpu-list>

Or, with virsh:

virsh vcpupin 0 --vcpu <vcpu-ID> --cpulist <pcpu-list>

Note that virsh vcpupin --config ... is not effective for Dom0.
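As the pinning has to be repeated for every Dom0 vCPU after each boot, it can be scripted. The following sketch only prints the xl commands so that they can be reviewed first. The 1:1 mapping of vCPU N to pCPU N is an illustrative assumption, and `dom0_pin_commands` is a hypothetical helper.

```shell
#!/bin/sh
# Print one "xl vcpu-pin" command per Dom0 vCPU, mapping vCPU N to pCPU N.
# Assumption: a 1:1 mapping is wanted; any pCPU list can be used instead.
dom0_pin_commands() {
    nr_vcpus=$1
    v=0
    while [ "$v" -lt "$nr_vcpus" ]; do
        echo "xl vcpu-pin 0 $v $v"
        v=$(( v + 1 ))
    done
}

dom0_pin_commands 48          # review the generated commands first
# dom0_pin_commands 48 | sh   # then apply them on the Xen host
```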

If wanting to limit Dom0 to only a specific (set of) NUMA node(s), the

dom0_nodes=<nodeid> boot command line option can be used. This

will affect both memory and vCPUs. In fact, it means that memory for Dom0 will be