Geo Clustering Quick Start #

Geo clustering protects workloads across globally distributed data centers. This document guides you through the basic setup of a Geo cluster, using the Geo bootstrap scripts provided by the CRM Shell.

Copyright © 2006–2026 SUSE LLC and contributors. All rights reserved.

Permission is granted to copy, distribute and/or modify this document under the terms of the GNU Free Documentation License, Version 1.2 or (at your option) version 1.3; with the Invariant Section being this copyright notice and license. A copy of the license version 1.2 is included in the section entitled “GNU Free Documentation License”.

For SUSE trademarks, see https://www.suse.com/company/legal/. All third-party trademarks are the property of their respective owners. Trademark symbols (®, ™ etc.) denote trademarks of SUSE and its affiliates. Asterisks (*) denote third-party trademarks.

All information found in this book has been compiled with utmost attention to detail. However, this does not guarantee complete accuracy. Neither SUSE LLC, its affiliates, the authors nor the translators shall be held liable for possible errors or the consequences thereof.

1 Conceptual overview #

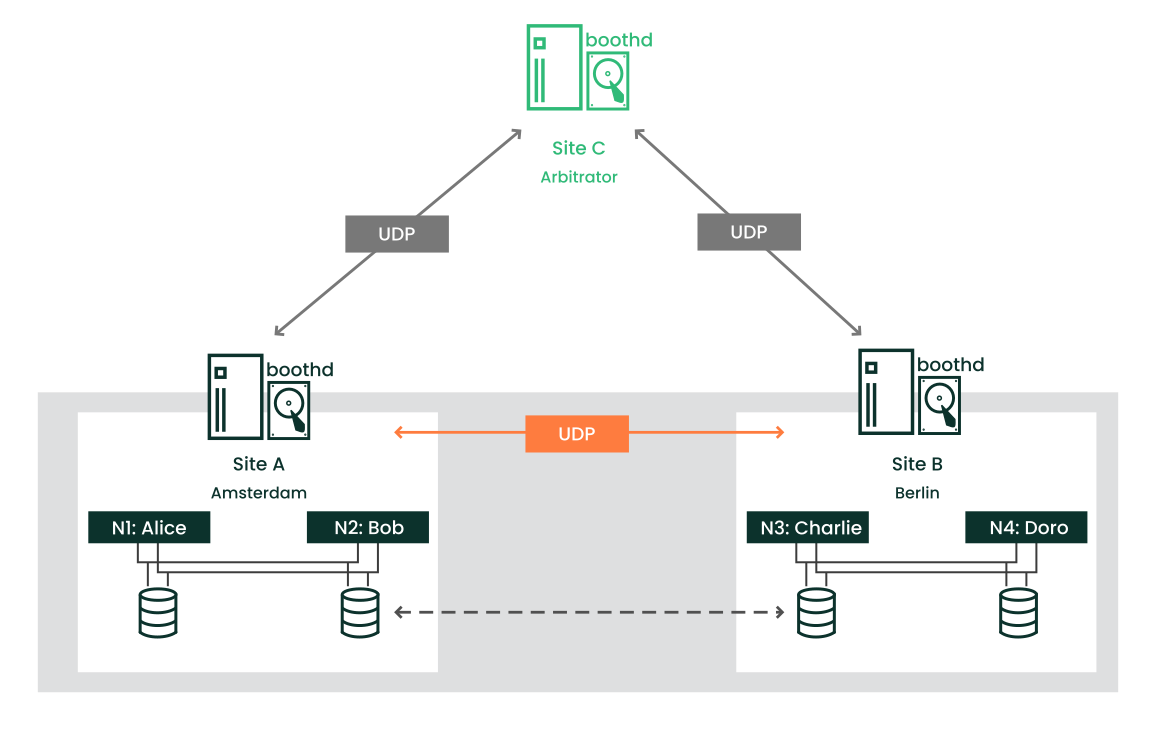

Geo clusters based on SUSE® Linux Enterprise High Availability can be considered “overlay” clusters where each cluster site corresponds to a cluster node in a traditional cluster. The overlay cluster is managed by the booth cluster ticket manager (in the following called booth). Each of the parties involved in a Geo cluster runs a service, the boothd. It connects to the booth daemons running on the other sites and exchanges connectivity details. For making cluster resources highly available across sites, booth relies on cluster objects called tickets. A ticket grants the right to run certain resources on a specific cluster site. Booth guarantees that every ticket is granted to no more than one site at a time.

If the communication between two booth instances breaks down, it might be because of a network breakdown between the cluster sites or because of an outage of one cluster site. In this case, you need an additional instance (a third cluster site or an arbitrator) to reach consensus about decisions (such as failover of resources across sites). Arbitrators are single machines (outside of the clusters) that run a booth instance in a special mode. Each Geo cluster can have one or multiple arbitrators.

It is also possible to run a two-site Geo cluster without an arbitrator. In this case, a Geo cluster administrator needs to manually manage the tickets. If a ticket should be granted to more than one site at the same time, booth displays a warning.

For more details on the concept, components and ticket management used for Geo clusters, see Administration Guide.

2 Usage scenario #

In the following, we will set up a basic Geo cluster with two cluster sites and one arbitrator:

- We assume the cluster sites are named amsterdam and berlin.

- We assume that each site consists of two nodes. The nodes alice and bob belong to the cluster amsterdam. The nodes charlie and doro belong to the cluster berlin.

- Site amsterdam will get the following virtual IP address: 192.168.201.100.

- Site berlin will get the following virtual IP address: 192.168.202.100.

- We assume that the arbitrator has the following IP address: 192.168.203.100.

Before you proceed, make sure the following requirements are fulfilled:

- Two existing clusters

You have at least two existing clusters that you want to combine into a Geo cluster (if you need to set up two clusters first, follow the instructions in the Installation and Setup Quick Start).

- Meaningful cluster names

Each cluster has a meaningful name that reflects its location, for example: amsterdam and berlin. Cluster names are defined in /etc/corosync/corosync.conf.

- Arbitrator

You have installed a third machine that is not part of any existing clusters and is to be used as arbitrator.

For detailed requirements on each item, see also Section 3, “Requirements”.

3 Requirements #

All machines (cluster nodes and arbitrators) that will be part of the cluster need at least the following modules and extensions:

- Basesystem Module 15 SP5

- Server Applications Module 15 SP5

- SUSE Linux Enterprise High Availability 15 SP5

When installing the machines, select HA GEO Node as system role. This leads to the installation of a minimal system where the packages from the pattern Geo Clustering for High Availability (ha_geo) are installed by default.

The virtual IPs to be used for each cluster site must be accessible across the Geo cluster.

The sites must be reachable on one UDP and TCP port per booth instance. That means any firewalls or IPsec tunnels in between must be configured accordingly.

Other setup decisions may require opening more ports (for example, for DRBD or database replication).
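The port requirement can be translated into firewall rules. The following is a minimal sketch, assuming that firewalld is the active firewall and that booth uses port 9929 (the value that appears in the booth configuration in Example 1). The helper names run and open_booth_port are hypothetical and not part of booth or crmsh. By default, the script only prints the commands. Set DRY_RUN=0 to execute them as root.

```shell
#!/bin/sh
# Sketch: open one UDP and one TCP port for booth with firewalld.
# Assumptions: firewalld is in use; 9929 is the booth port of your setup.

# Print the command when DRY_RUN is 1 (default), otherwise execute it.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# $1: booth port (optional, defaults to 9929)
open_booth_port() {
    port="${1:-9929}"
    run firewall-cmd --permanent --add-port="${port}/udp"
    run firewall-cmd --permanent --add-port="${port}/tcp"
    # Reload so that the permanent rules become active
    run firewall-cmd --reload
}
```

Call open_booth_port on every machine that runs a booth instance. Firewalls or tunnels between the sites are outside the scope of this sketch and need matching rules.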

All cluster nodes on all sites should synchronize to an NTP server outside the cluster. For more information, see the Administration Guide for SUSE Linux Enterprise Server 15 SP5.

If nodes are not synchronized, log files and cluster reports are very hard to analyze.

Use an uneven number of sites in your Geo cluster. If the network connection breaks down, this makes sure that there still is a majority of sites (to avoid a split-brain scenario). If you have an even number of cluster sites, use an arbitrator for handling automatic failover of tickets. If you do not use an arbitrator, you need to handle ticket failover manually.
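The recommendation on the number of sites can be checked against an existing booth configuration. The following is a minimal sketch, assuming the site= and arbitrator= keys as they appear in the booth configuration created by the bootstrap scripts. The function names are hypothetical and not part of booth or crmsh.

```shell
#!/bin/sh
# Sketch: count the members (sites plus arbitrators) listed in a booth
# configuration file and check whether their number is odd.

# Print the number of site= and arbitrator= entries in the file given as $1.
count_booth_members() {
    grep -cE '^[[:space:]]*(site|arbitrator)[[:space:]]*=' "$1"
}

# Print the smallest number of members that forms a majority of $1 members.
majority_of() {
    echo $(( $1 / 2 + 1 ))
}

# Succeed if the file given as $1 lists an odd number of members.
has_odd_membership() {
    members=$(count_booth_members "$1")
    [ $(( members % 2 )) -eq 1 ]
}
```

For the setup used in this document (two sites and one arbitrator), count_booth_members /etc/booth/booth.conf reports three members and majority_of 3 reports two, so a majority remains if one member becomes unreachable.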

The cluster on each site has a meaningful name, for example: amsterdam and berlin.

The cluster names for each site are defined in the respective /etc/corosync/corosync.conf files:

    totem {
        [...]
        cluster_name: amsterdam
    }

Change the name with the following crmsh command:

    # crm cluster rename NEW_NAME

Stop and start the cluster services for the changes to take effect:

    # crm cluster restart

Mixed architectures within one cluster are not supported. However, for Geo clusters, each member of the Geo cluster can have a different architecture, be it a cluster site or an arbitrator. For example, you can run a Geo cluster with three members (two cluster sites and an arbitrator), where one cluster site runs on IBM Z, the other cluster site runs on x86, and the arbitrator runs on POWER.
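To verify the cluster name of a site before running the bootstrap scripts, you can read it from the Corosync configuration. The following is a minimal sketch, assuming the cluster_name key is written on its own line as in the totem section of /etc/corosync/corosync.conf. The function name get_cluster_name is hypothetical and not a crmsh command.

```shell
#!/bin/sh
# Sketch: print the value of cluster_name from a corosync.conf-style file.

# $1: path to the configuration file (usually /etc/corosync/corosync.conf)
get_cluster_name() {
    # Print only the first word after "cluster_name:"; use the first match.
    sed -n 's/^[[:space:]]*cluster_name:[[:space:]]*\([^[:space:]]*\).*$/\1/p' "$1" | head -n 1
}
```

Running get_cluster_name /etc/corosync/corosync.conf on one node per site shows the names that you later pass to the bootstrap scripts.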

4 Overview of the Geo bootstrap scripts #

- With crm cluster geo_init, turn a cluster into the first site of a Geo cluster. The script takes parameters like the names of the clusters, the arbitrator, and one or multiple tickets and creates /etc/booth/booth.conf from them. It copies the booth configuration to all nodes on the current cluster site. It also configures the cluster resources needed for booth on the current cluster site.

  For details, see Section 6, “Setting up the first site of a Geo cluster”.

- With crm cluster geo_join, add the current cluster to an existing Geo cluster. The script copies the booth configuration from an existing cluster site and writes it to /etc/booth/booth.conf on all nodes on the current cluster site. It also configures the cluster resources needed for booth on the current cluster site.

  For details, see Section 7, “Adding another site to a Geo cluster”.

- With crm cluster geo_init_arbitrator, turn the current machine into an arbitrator for the Geo cluster. The script copies the booth configuration from an existing cluster site and writes it to /etc/booth/booth.conf.

  For details, see Section 8, “Adding the arbitrator”.

All bootstrap scripts log to /var/log/crmsh/crmsh.log. Check the log file for any details of the bootstrap process. Any options set during the bootstrap process can be modified later (by modifying booth settings, modifying resources etc.). For details, see Administration Guide.

5 Installing the High Availability and Geo clustering packages #

The packages for configuring and managing a Geo cluster are included in the High Availability and Geo Clustering for High Availability installation patterns. These patterns are only available after SUSE Linux Enterprise High Availability is installed.

You can register to the SUSE Customer Center and install SUSE Linux Enterprise High Availability while installing SUSE Linux Enterprise Server, or after installation. For more information, see Deployment Guide for SUSE Linux Enterprise Server.

1. Install the High Availability and Geo clustering patterns from the command line:

       # zypper install -t pattern ha_sles ha_geo

2. Install the High Availability and Geo clustering patterns on all machines that will be part of your cluster.

Note: Installing software packages on all nodes

For an automated installation of SUSE Linux Enterprise Server 15 SP5 and SUSE Linux Enterprise High Availability 15 SP5, use AutoYaST to clone existing nodes. For more information, see Section 3.2, “Mass installation and deployment with AutoYaST”.

6 Setting up the first site of a Geo cluster #

Use the crm cluster geo_init command to turn an existing cluster into the first site of a Geo cluster.

Procedure 2: Setting up the first site (amsterdam) with crm cluster geo_init #

1. Define a virtual IP per cluster site that can be used to access the site. In this example, we use 192.168.201.100 and 192.168.202.100 for this purpose. You do not need to configure the virtual IPs as cluster resources yet. This will be done by the bootstrap scripts.

2. Define the name of at least one ticket that will grant the right to run certain resources on a cluster site. Use a meaningful name that reflects the resources that will depend on the ticket (for example, ticket-nfs). The bootstrap scripts only need the ticket name. You can define the remaining details (ticket dependencies of the resources) later on, as described in Section 10, “Next steps”.

3. Log in to a node of an existing cluster (for example, on node alice of the cluster amsterdam).

4. Run crm cluster geo_init. For example, use the following options:

       # crm cluster geo_init \
         --clusters "amsterdam=192.168.201.100 berlin=192.168.202.100" \
         --tickets ticket-nfs \
         --arbitrator 192.168.203.100

   The options have the following meaning:

   - The --clusters option takes the names of the cluster sites (as defined in /etc/corosync/corosync.conf) and the virtual IP addresses you want to use for each cluster site. In this case, we have two cluster sites (amsterdam and berlin) with a virtual IP address each.

   - The --tickets option takes the name of one or multiple tickets.

   - The --arbitrator option takes the host name or IP address of a machine outside of the clusters.

The bootstrap script creates the booth configuration file and synchronizes it across the cluster sites. It also creates the basic cluster resources needed for booth. Step 4 of Procedure 2 would result in the following booth configuration and cluster resources:

Example 1: Booth configuration created by crm cluster geo_init #

    # The booth configuration file is "/etc/booth/booth.conf". You need to
    # prepare the same booth configuration file on each arbitrator and
    # each node in the cluster sites where the booth daemon can be launched.

    # "transport" means which transport layer booth daemon will use.
    # Currently only "UDP" is supported.
    transport="UDP"
    port="9929"

    arbitrator="192.168.203.100"
    site="192.168.201.100"
    site="192.168.202.100"
    authfile="/etc/booth/authkey"
    ticket="ticket-nfs"
    expire="600"

Example 2: Cluster resources created by crm cluster geo_init #

    primitive booth-ip IPaddr2 \
      params rule #cluster-name eq amsterdam ip=192.168.201.100 \
      params rule #cluster-name eq berlin ip=192.168.202.100
    primitive booth-site ocf:pacemaker:booth-site \
      meta resource-stickiness=INFINITY \
      params config=booth \
      op monitor interval=10s
    group g-booth booth-ip booth-site \
      meta target-role=Stopped

The entries in Example 2 have the following purpose:

- booth-ip: A virtual IP address for each cluster site. It is required by the booth daemons, which need a persistent IP address on each cluster site.

- booth-site: A primitive resource for the booth daemon. It communicates with the booth daemons on the other cluster sites. The daemon can be started on any node of the site. To make the resource stay on the same node, if possible, resource-stickiness is set to INFINITY.

- g-booth: A cluster resource group for both primitives. With this configuration, each booth daemon will be available at its individual IP address, independent of the node the daemon is running on.

- target-role=Stopped: The cluster resource group is not started by default. After verifying the configuration of your cluster resources (and adding the resources you need to complete your setup), you need to start the resource group. See “Required steps to complete the Geo cluster setup” for details.
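To make the relation between the geo_init options and the booth configuration in Example 1 more tangible, the following minimal sketch prints a configuration of the same shape from an arbitrator address, a list of NAME=IP pairs and a list of ticket names. It is an illustration only: render_booth_conf is a hypothetical helper, it does not reproduce the crmsh implementation, and the fixed values (transport, port, authfile, expire) are simply copied from Example 1.

```shell
#!/bin/sh
# Sketch: print a booth configuration like Example 1.
# $1: arbitrator address
# $2: space-separated NAME=IP pairs (same format as --clusters)
# $3: space-separated ticket names (same format as --tickets)
render_booth_conf() {
    echo 'transport="UDP"'
    echo 'port="9929"'
    echo "arbitrator=\"$1\""
    # Word splitting of $2 is intended: one loop pass per NAME=IP pair.
    for pair in $2; do
        echo "site=\"${pair#*=}\""   # keep only the IP after the "="
    done
    echo 'authfile="/etc/booth/authkey"'
    for ticket in $3; do
        echo "ticket=\"$ticket\""
        echo 'expire="600"'
    done
}
```

With the values from Procedure 2, render_booth_conf 192.168.203.100 "amsterdam=192.168.201.100 berlin=192.168.202.100" ticket-nfs prints one arbitrator line, two site lines and one ticket entry. Note that the site names themselves do not appear in the booth configuration; only the virtual IP addresses do.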

7 Adding another site to a Geo cluster #

After you have initialized the first site of your Geo cluster, add the second cluster with crm cluster geo_join, as described in Procedure 3.

The script needs SSH access to an already configured cluster site and will add the current cluster to the Geo cluster.

Procedure 3: Adding the second site (berlin) with crm cluster geo_join #

1. Log in to a node of the cluster site you want to add (for example, on node charlie of the cluster berlin).

2. Run the crm cluster geo_join command. For example:

       # crm cluster geo_join \
         --cluster-node 192.168.201.100 \
         --clusters "amsterdam=192.168.201.100 berlin=192.168.202.100"

   The options have the following meaning:

   - The --cluster-node option specifies where to copy the booth configuration from. Use the IP address or host name of a node in an already configured Geo cluster site. You can also use the virtual IP address of an existing cluster site (like in this example). Alternatively, use the IP address or host name of an already configured arbitrator for your Geo cluster.

   - The --clusters option takes the names of the cluster sites (as defined in /etc/corosync/corosync.conf) and the virtual IP addresses you want to use for each cluster site. In this case, we have two cluster sites (amsterdam and berlin) with a virtual IP address each.

The crm cluster geo_join command copies the booth configuration from the machine specified with --cluster-node (see Example 1). In addition, it creates the cluster resources needed for booth (see Example 2).

8 Adding the arbitrator #

After you have set up all sites of your Geo cluster with crm cluster geo_init and crm cluster geo_join, set up the arbitrator with crm cluster geo_init_arbitrator.

Procedure 4: Setting up the arbitrator with crm cluster geo_init_arbitrator #

1. Log in to the machine you want to use as arbitrator.

2. Run the following command. For example:

       # crm cluster geo_init_arbitrator --cluster-node 192.168.201.100

   The --cluster-node option specifies where to copy the booth configuration from. Use the IP address or host name of a node in an already configured Geo cluster site. Alternatively, use the virtual IP address of an already existing cluster site (like in this example).

The crm cluster geo_init_arbitrator script copies the booth configuration from the machine specified with --cluster-node (see Example 1). It also enables and starts the booth service on the arbitrator. Thus, the arbitrator is ready to communicate with the booth instances on the cluster sites when the booth services are running there too.
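To confirm that the booth service on the arbitrator was enabled and started, you can query systemd and the booth client. The following is a minimal sketch, assuming the service instance is named booth@booth (matching the configuration file /etc/booth/booth.conf). The helper names run and check_arbitrator are hypothetical. By default, the script only prints the commands. Set DRY_RUN=0 to execute them on the arbitrator.

```shell
#!/bin/sh
# Sketch: check the booth service on the arbitrator.

# Print the command when DRY_RUN is 1 (default), otherwise execute it.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

check_arbitrator() {
    # Assumption: booth@booth is the systemd instance for booth.conf
    run systemctl is-enabled booth@booth
    run systemctl is-active booth@booth
    # List the tickets known to booth and where they are currently granted
    run booth list
}
```

As long as the booth resource groups on the cluster sites are not started, the arbitrator cannot reach the other booth instances, so the ticket list may not yet show a site that holds the ticket.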

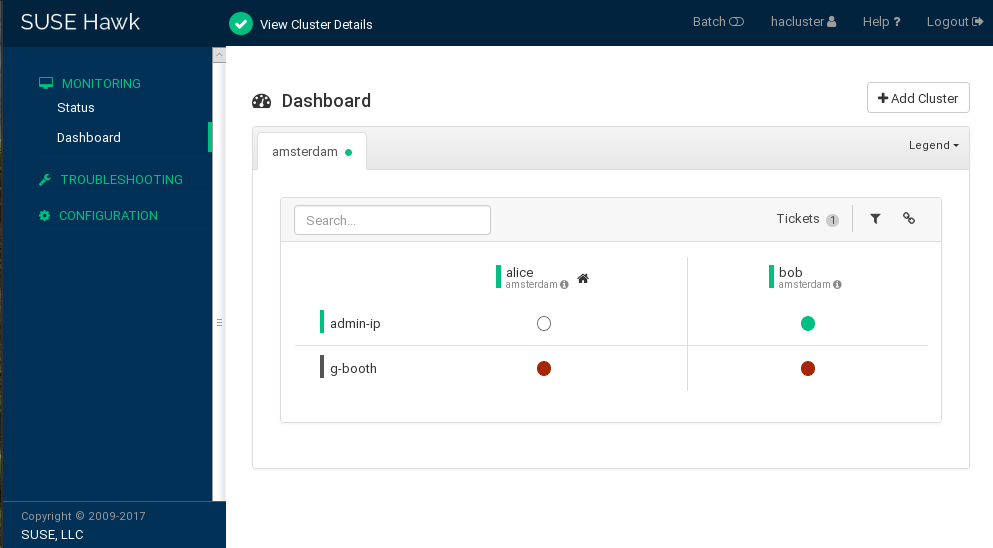

9 Monitoring the cluster sites #

To view both cluster sites with the resources and the ticket that you have created during the bootstrapping process, use Hawk2. The Hawk2 Web interface allows you to monitor and manage multiple (unrelated) clusters and Geo clusters.

All clusters to be monitored from the Hawk2 dashboard must be running SUSE Linux Enterprise High Availability 15 SP5.

If you did not replace the self-signed certificate for Hawk2 on every cluster node with your own certificate (or a certificate signed by an official Certificate Authority) yet, do the following: log in to Hawk2 on every node in every cluster at least once. Verify the certificate (or add an exception in the browser to bypass the warning). Otherwise Hawk2 cannot connect to the cluster.

Start a Web browser and enter the virtual IP of your first cluster site,

amsterdam:https://192.168.201.100:7630/

Alternatively, use the IP address or host name of

aliceorbob. If you have set up both nodes with the bootstrap scripts, thehawkservice should run on both nodes.Log in to the Hawk2 Web interface.

From the left navigation bar, select .

Hawk2 shows an overview of the resources and nodes on the current cluster site. In addition, it shows any that have been configured for the Geo cluster. If you need information about the icons used in this view, click .

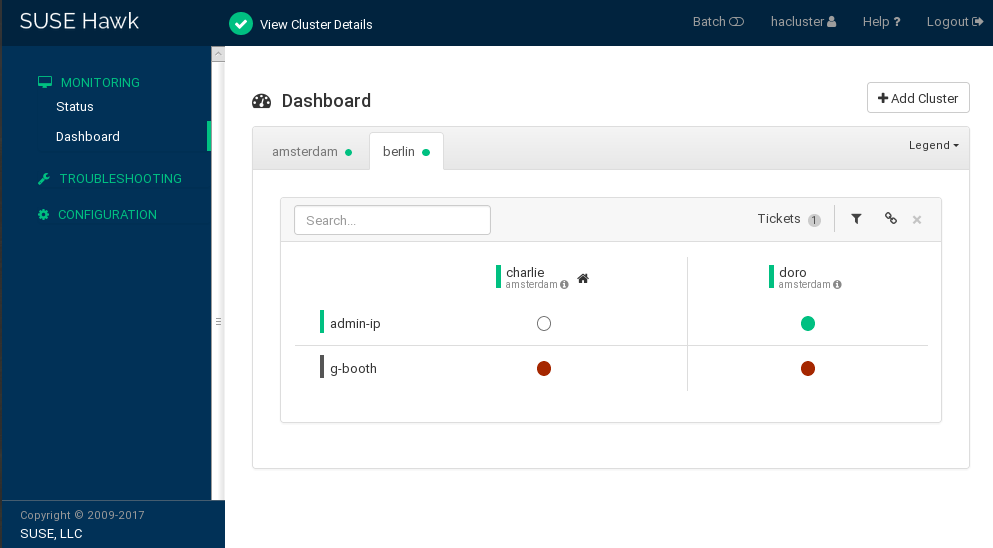

Figure 2: Hawk2 dashboard with one cluster site (amsterdam) #To add a dashboard for the second cluster site, click .

Enter the with which to identify the cluster in the . In this case,

berlin.Enter the fully qualified host name of one of the cluster nodes (in this case,

charlieordoro).Click . Hawk2 displays a second tab for the newly added cluster site with an overview of its nodes and resources.

Figure 3: Hawk2 dashboard with both cluster sites #

To view more details for a cluster site or to manage it, switch to the site's tab and click the chain icon.

Hawk2 opens the view for this site in a new browser window or tab. From there, you can administer this part of the Geo cluster.

10 Next steps #

The Geo clustering bootstrap scripts provide a quick way to set up a basic Geo cluster that can be used for testing purposes. However, to turn the resulting setup into a functioning Geo cluster that can be used in production environments, more steps are required.

Required steps to complete the Geo cluster setup

- Starting the booth services on cluster sites

After the bootstrap process, the arbitrator booth service cannot communicate with the booth services on the cluster sites yet, because they are not started by default.

The booth service for each cluster site is managed by the booth resource group g-booth (see Example 2, “Cluster resources created by crm cluster geo_init”). To start one instance of the booth service per site, start the respective booth resource group on each cluster site. This enables all booth instances to communicate with each other.

- Configuring ticket dependencies and order constraints

To make resources depend on the ticket that you have created during the Geo cluster bootstrap process, configure constraints. For each constraint, set a loss-policy that defines what should happen to the respective resources if the ticket is revoked from a cluster site.

For details, see Chapter 6, Configuring cluster resources and constraints.

- Initially granting a ticket to a site

Before booth can manage a certain ticket within the Geo cluster, you initially need to grant it to a site manually. You can use either the booth client command line tool or Hawk2 to grant a ticket.

For details, see Chapter 8, Managing Geo clusters.
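The three steps above can be sketched as the following commands. This is a minimal sketch under stated assumptions: g-nfs is a hypothetical resource group that should depend on the ticket, loss-policy=stop is only one possible policy, and the helper names are illustrative. By default, the script only prints the commands. Set DRY_RUN=0 to execute them.

```shell
#!/bin/sh
# Sketch: complete the Geo cluster setup after the bootstrap scripts.

# Print the command when DRY_RUN is 1 (default), otherwise execute it.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Run on one node of each cluster site: start the booth resource group.
start_booth_group() {
    run crm resource start g-booth
}

# Run on one node of each cluster site.
# $1: ticket name, $2: resource that depends on the ticket (hypothetical)
add_ticket_dependency() {
    run crm configure rsc_ticket "$2-req-$1" "$1:" "$2" loss-policy=stop
}

# Run once for the whole Geo cluster.
# $1: ticket name, $2: virtual IP of the site that receives the ticket
grant_ticket() {
    run booth grant -s "$2" "$1"
}
```

For the setup in this document, the sequence would be start_booth_group on both sites, add_ticket_dependency ticket-nfs g-nfs on both sites, and finally grant_ticket ticket-nfs 192.168.201.100 to grant the ticket to site amsterdam. Choose the loss-policy according to your resources before using this in production.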

The bootstrap scripts create the same booth resources on both cluster sites, and the same booth configuration files on all sites, including the arbitrator. If you extend the Geo cluster setup (to move to a production environment), you will probably fine-tune the booth configuration and change the configuration of the booth-related cluster resources. Afterward, you need to synchronize the changes to the other sites of your Geo cluster for them to take effect.

To synchronize changes in the booth configuration to all cluster sites (including the arbitrator), use Csync2. Find more information in Chapter 5, Synchronizing configuration files across all sites and arbitrators.

The CIB (Cluster Information Database) is not automatically synchronized across cluster sites of a Geo cluster. That means any changes in resource configuration that are required on all cluster sites need to be transferred to the other sites manually. Do so by tagging the respective resources, exporting them from the current CIB, and importing them to the CIB on the other cluster sites. For details, see Section 6.4, “Transferring the resource configuration to other cluster sites”.

11 For more information #

More documentation for this product is available at https://documentation.suse.com/sle-ha/15-SP5/.

For further configuration and administration tasks, see the comprehensive Geo Clustering Guide.