Managing KVM Virtualization on SUSE Linux Enterprise Server

- WHAT?

KVM (Kernel Virtual Machine) is a virtualization solution that transforms the Linux kernel into a hypervisor for running multiple isolated virtual environments.

- WHY?

Use KVM virtualization to consolidate server workloads and save hardware resources.

- EFFORT

It takes less than 15 minutes of reading time to understand the concept of virtualization.

- GOAL

By the end of this guide, you will have a configured KVM host and be able to deploy virtual machine guests with optimized storage, networking and direct hardware access.

1 Setting up a KVM VM Host Server #

This section documents how to set up and use SUSE Linux Enterprise Server 16.0 as a QEMU-KVM based virtual machine host.

The virtual guest system needs the same hardware resources as if it were installed on a physical machine. The more guests you plan to run on the host system, the more hardware resources—CPU, disk, memory and network—you need to add to the VM Host Server.

1.1 CPU support for virtualization #

To run KVM, your CPU must support virtualization, and virtualization needs to be enabled in the BIOS. The file /proc/cpuinfo includes information about your CPU features.

To find out whether your system supports virtualization, see the section Architecture Support in the article Virtualization Limits and Support.
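For a quick local check on x86_64 hosts, you can look at the CPU flags in /proc/cpuinfo: Intel CPUs advertise the vmx flag (VT-x) and AMD CPUs the svm flag (AMD-V). The following is a minimal sketch; cpu_virt_flag is a hypothetical helper name, and the optional file argument only exists so the function can be tried on a saved copy of /proc/cpuinfo. A present flag shows that the CPU supports the extension; it does not on its own prove that virtualization is enabled in the BIOS.

```shell
# Print the x86 hardware virtualization flag found in a cpuinfo-style file:
# "vmx" (Intel VT-x) or "svm" (AMD-V). Returns 1 if neither flag is present.
#   $1: file to inspect (default: /proc/cpuinfo)
cpu_virt_flag() {
    local cpuinfo="${1:-/proc/cpuinfo}"
    # Restrict the search to "flags" lines; grep -w matches whole flag names only.
    if grep -E '^flags[[:space:]]*:' "$cpuinfo" | grep -qw vmx; then
        echo vmx
    elif grep -E '^flags[[:space:]]*:' "$cpuinfo" | grep -qw svm; then
        echo svm
    else
        return 1
    fi
}
```

For example, cpu_virt_flag || echo "no vmx or svm flag found" prints the detected flag, or the message if the CPU does not advertise either extension.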

1.2 Required software #

The KVM host requires several packages to be installed. To install all necessary packages, do the following:

Install the patterns-server-kvm_server and patterns-server-kvm_tools packages.

Create a network bridge. If you do not plan to dedicate an additional physical network card to your virtual guests, network bridging is a standard way to connect the guest machines to the network.

After all the required packages are installed (and the new network setup activated), try to load the KVM kernel module relevant for your CPU type, kvm_intel or kvm_amd:

# modprobe kvm_amd

Check if the module is loaded into memory:

> lsmod | grep kvm
kvm_amd 237568 20
kvm 1376256 17 kvm_amd

Now the KVM host is ready to serve KVM VM Guests.
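Instead of choosing the module by hand, you can derive its name from the CPU flags in /proc/cpuinfo: the vmx flag corresponds to kvm_intel and the svm flag to kvm_amd. The following sketch applies to x86_64 hosts only; kvm_module_name is a hypothetical helper name, and the optional file argument only serves testing.

```shell
# Map the x86 virtualization CPU flag to the matching KVM kernel module.
# Prints kvm_intel or kvm_amd; returns 1 if neither flag is present.
#   $1: cpuinfo-style file (default: /proc/cpuinfo)
kvm_module_name() {
    local cpuinfo="${1:-/proc/cpuinfo}"
    # Split the value part of each "flags" line into single flags and print
    # the module for the first virtualization flag found.
    awk -F: '
        /^flags[ \t]*:/ {
            n = split($2, f, " ")
            for (i = 1; i <= n; i++) {
                if (f[i] == "vmx") { print "kvm_intel"; found = 1; exit }
                if (f[i] == "svm") { print "kvm_amd"; found = 1; exit }
            }
        }
        END { exit(found ? 0 : 1) }
    ' "$cpuinfo"
}
```

As root, modprobe "$(kvm_module_name)" then loads the module that matches the host CPU.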

1.3 KVM host-specific features #

You can improve the performance of KVM-based VM Guests by letting them fully use specific features of the VM Host Server's hardware (paravirtualization). This section introduces techniques that allow guests to access the physical host's hardware directly, bypassing the emulation layer for optimal performance.

Examples included in this section assume basic knowledge of the qemu-system-ARCH command-line options.

1.3.1 Using the host storage with virtio-scsi #

virtio-scsi is an advanced storage stack for KVM. It replaces the former virtio-blk stack for SCSI device pass-through. It has several advantages over virtio-blk:

- Improved scalability

KVM guests have a limited number of PCI controllers, which results in a limited number of attached devices. virtio-scsi solves this limitation by grouping multiple storage devices on a single controller. Each device on a virtio-scsi controller is represented as a logical unit, or LUN.

- Standard command set

virtio-blk uses a small set of commands that need to be known to both the virtio-blk driver and the virtual machine monitor, so introducing a new command requires updating both the driver and the monitor.

By comparison, virtio-scsi does not define commands, but rather a transport protocol for these commands following the industry-standard SCSI specification. This approach is shared with other technologies, such as Fibre Channel, ATAPI and USB devices.

- Device naming

virtio-blk devices are presented inside the guest as /dev/vdX, which is different from device names in physical systems and may cause migration problems. virtio-scsi keeps the device names identical to those on physical systems, making the virtual machines easily relocatable.

- SCSI device pass-through

For virtual disks backed by a whole LUN on the host, it is preferable for the guest to send SCSI commands directly to the LUN (pass-through). This is limited in virtio-blk, as guests need to use the virtio-blk protocol instead of SCSI command pass-through, and, moreover, it is not available for Windows guests. virtio-scsi natively removes these limitations.

1.3.1.1 virtio-scsi usage #

KVM supports the SCSI pass-through feature with the virtio-scsi-pci device:

# qemu-system-x86_64 [...] \
-device virtio-scsi-pci,id=scsi

1.3.2 Accelerated networking with vhost-net #

The vhost-net module is used to accelerate KVM's paravirtualized network drivers. It provides better latency and greater network throughput. Use the vhost-net driver by starting the guest with the following example command line:

# qemu-system-x86_64 [...] \
-netdev tap,id=guest0,vhost=on,script=no \
-net nic,model=virtio,netdev=guest0,macaddr=00:16:35:AF:94:4B

guest0 is an identification string of the vhost-driven device.
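With vhost=on, QEMU opens the /dev/vhost-net character device, which is provided by the vhost_net kernel module. Before starting a guest, it can help to confirm that this device exists and is accessible to the user running QEMU. A minimal sketch; vhost_net_ready is a hypothetical helper name, and the optional path argument only serves testing.

```shell
# Return 0 if the vhost-net character device exists and the current user
# can read and write it, 1 otherwise.
#   $1: device path (default: /dev/vhost-net)
vhost_net_ready() {
    local dev="${1:-/dev/vhost-net}"
    [ -c "$dev" ] && [ -r "$dev" ] && [ -w "$dev" ]
}
```

For example, run vhost_net_ready || modprobe vhost_net as root before starting the guest.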

1.3.3 Scaling network performance with multiqueue virtio-net #

As the number of virtual CPUs increases in VM Guests, QEMU offers a way of improving network performance using multiqueue. Multiqueue virtio-net scales network performance by allowing VM Guest virtual CPUs to transfer packets in parallel. Multiqueue support is required on both the VM Host Server and VM Guest sides.

The multiqueue virtio-net solution is most beneficial in the following cases:

Network traffic packets are large.

VM Guest has many connections active at the same time, mainly between the guest systems, or between the guest and the host, or between the guest and an external system.

The number of active queues is equal to the number of virtual CPUs in the VM Guest.

While multiqueue virtio-net increases the total network throughput, it also increases CPU consumption, because more virtual CPUs take part in packet processing.

The following procedure lists important steps to enable the multiqueue feature with qemu-system-ARCH. It assumes that a tap network device with multiqueue capability (supported since kernel version 3.8) is set up on the VM Host Server.

In qemu-system-ARCH, enable multiqueue for the tap device:

-netdev tap,vhost=on,queues=2*N

where N stands for the number of queue pairs.

In qemu-system-ARCH, enable multiqueue and specify MSI-X (Message Signaled Interrupt) vectors for the virtio-net-pci device:

-device virtio-net-pci,mq=on,vectors=2*N+2

where the formula for the number of MSI-X vectors results from: N vectors for TX (transmit) queues, N for RX (receive) queues, one for configuration purposes, and one for the possible control virtqueue (VQ).

In the VM Guest, enable multiqueue on the relevant network interface (eth0 in this example):

> sudo ethtool -L eth0 combined 2*N

The resulting qemu-system-ARCH command line looks similar to the following example:

qemu-system-x86_64 [...] -netdev tap,id=guest0,queues=8,vhost=on \
-device virtio-net-pci,netdev=guest0,mq=on,vectors=10

The id of the network device (guest0) needs to be identical for both options.

Inside the running VM Guest, run the following command with root privileges:

> sudo ethtool -L eth0 combined 8

Now the guest system networking uses the multiqueue support from the qemu-system-ARCH hypervisor.
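The relation between the number of queue pairs N and the option values can be captured in a small helper that follows the formulas of this section (queues=2*N, vectors=2*N+2) and uses the same id for both options. This is a sketch; mq_virtio_net_opts is a hypothetical name, and its output still needs to be combined with the rest of your qemu-system-ARCH command line.

```shell
# Print the -netdev/-device options for multiqueue virtio-net, using the
# formulas from this section: queues=2*N and vectors=2*N+2.
#   $1: number of queue pairs N (positive integer)
#   $2: network device id shared by -netdev and -device (default: guest0)
mq_virtio_net_opts() {
    local n="$1" id="${2:-guest0}"
    case "$n" in
        ''|*[!0-9]*|0) echo "N must be a positive integer" >&2; return 1 ;;
    esac
    printf '%s %s\n' \
        "-netdev tap,id=$id,queues=$((2 * n)),vhost=on" \
        "-device virtio-net-pci,netdev=$id,mq=on,vectors=$((2 * n + 2))"
}
```

With N=4, mq_virtio_net_opts 4 prints the values used in the example above (queues=8, vectors=10).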

1.3.4 VFIO: secure direct access to devices #

Directly assigning a PCI device to a VM Guest (PCI pass-through) avoids performance issues by bypassing emulation in performance-critical paths. VFIO replaces the traditional KVM PCI pass-through device assignment. A prerequisite for this feature is a VM Host Server configuration as described in Important: Requirements for VFIO and SR-IOV.

To be able to assign a PCI device via VFIO to a VM Guest, you need to find out which IOMMU Group it belongs to. The IOMMU (input/output memory management unit that connects a direct memory access-capable I/O bus to the main memory) API supports the notion of groups. A group is a set of devices that can be isolated from all other devices in the system. Groups are therefore the unit of ownership used by VFIO.
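To get an overview of all IOMMU groups before choosing a device, you can walk the /sys/kernel/iommu_groups directory, where each group has a devices subdirectory with one entry per PCI address. The following sketch prints one "GROUP PCI_ADDRESS" pair per line; list_iommu_groups is a hypothetical name, and the optional root argument (default /sys) only exists so the function can be tested against a mock directory tree.

```shell
# Print "GROUP PCI_ADDRESS" for every device in every IOMMU group.
#   $1: sysfs root (default: /sys)
list_iommu_groups() {
    local root="${1:-/sys}" dev group
    for dev in "$root"/kernel/iommu_groups/*/devices/*; do
        # The glob stays unexpanded if no groups exist (for example, IOMMU off).
        [ -e "$dev" ] || continue
        group=${dev%/devices/*}   # strip "/devices/ADDRESS"
        group=${group##*/}        # keep only the group number
        echo "$group ${dev##*/}"
    done
}
```

For example, list_iommu_groups | awk '$1 == 20' lists all devices that share IOMMU group 20.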

Identify the host PCI device to assign to the guest.

> sudo lspci -nn
[...]
00:10.0 Ethernet controller [0200]: Intel Corporation 82576 \
Virtual Function [8086:10ca] (rev 01)
[...]

Note down the PCI address of the device (00:10.0 in this example) and its vendor and device ID (8086:10ca).

Find the IOMMU group of this device:

> sudo readlink /sys/bus/pci/devices/0000\:00\:10.0/iommu_group
../../../kernel/iommu_groups/20

The IOMMU group for this device is 20. Now you can check the devices belonging to the same IOMMU group:

> sudo ls -l /sys/bus/pci/devices/0000\:00\:10.0/iommu_group/devices/
[...] 0000:00:1e.0 -> ../../../../devices/pci0000:00/0000:00:1e.0
[...] 0000:01:10.0 -> ../../../../devices/pci0000:00/0000:00:1e.0/0000:01:10.0
[...] 0000:01:10.1 -> ../../../../devices/pci0000:00/0000:00:1e.0/0000:01:10.1

Unbind the device from the device driver. Because the shell performs the redirection before sudo runs, sudo echo with > fails for an unprivileged user; pipe the value to sudo tee instead:

> echo "0000:00:10.0" | sudo tee /sys/bus/pci/devices/0000\:00\:10.0/driver/unbind

Bind the device to the vfio-pci driver using the vendor and device ID from the first step:

> echo "8086 10ca" | sudo tee /sys/bus/pci/drivers/vfio-pci/new_id

A new device /dev/vfio/IOMMU_GROUP is created as a result, /dev/vfio/20 in this case.

Change the ownership of the newly created device:

> sudo chown qemu:qemu /dev/vfio/DEVICE

Now run the VM Guest with the PCI device assigned.

> sudo qemu-system-ARCH [...] -device vfio-pci,host=00:10.0,id=ID
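The unbind and bind steps of the procedure above can be combined in one function. This is a sketch under the same assumptions as the procedure (the vfio-pci module is loaded and must be run as root); vfio_pci_bind is a hypothetical name, and the optional sysfs root argument (default /sys) only exists so the function can be tested against a mock directory tree. Changing the ownership of the /dev/vfio device remains a separate step.

```shell
# Detach a PCI device from its current driver and register its
# vendor/device ID with vfio-pci, following the manual steps above.
#   $1: full PCI address, for example 0000:00:10.0
#   $2: vendor ID, for example 8086
#   $3: device ID, for example 10ca
#   $4: sysfs root (default: /sys)
vfio_pci_bind() {
    local addr="$1" vendor="$2" device="$3" root="${4:-/sys}"
    local dev="$root/bus/pci/devices/$addr"
    if [ ! -e "$dev" ]; then
        echo "no such PCI device: $addr" >&2
        return 1
    fi
    # Unbind from the current driver, if one is bound.
    if [ -e "$dev/driver/unbind" ]; then
        echo "$addr" > "$dev/driver/unbind" || return 1
    fi
    # vfio-pci then probes unbound devices that match this ID.
    echo "$vendor $device" > "$root/bus/pci/drivers/vfio-pci/new_id"
}
```

For the device in the example, the call is vfio_pci_bind 0000:00:10.0 8086 10ca.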

As of SUSE Linux Enterprise Server 16.0, hotplugging of PCI devices passed to a VM Guest via VFIO is not supported.

You can find more detailed information on the VFIO driver in the /usr/src/linux/Documentation/vfio.txt file (package kernel-source needs to be installed).

1.3.5 VirtFS: sharing directories between host and guests #

VM Guests normally run in a separate computing space—they are provided their own memory range, dedicated CPUs, and file system space. The ability to share parts of the VM Host Server's file system makes the virtualization environment more flexible by simplifying mutual data exchange. Network file systems, such as CIFS and NFS, have been the traditional way of sharing directories. But as they are not specifically designed for virtualization purposes, they suffer from major performance and feature issues.

SELinux requirement: For security_model=mapped, configure the SELinux context:

# semanage fcontext -a -t virtiofsd_t "/tmp(/.*)?"
# restorecon -Rv /tmp

1.3.5.1 Host Configuration #

Nothing needs to be done on the host side aside from installing virtiofsd. The libvirt XML configuration of the VM Guest should contain an entry like:

<filesystem type="mount" accessmode="mapped">
  <driver type="virtiofs"/>
  <source dir="/tmp"/>
  <target dir="host_tmp"/>
  <alias name="fs0"/>
  <address type="pci" domain="0x0000" bus="0x01" slot="0x00" function="0x0"/>
</filesystem>

The 9p protocol is a legacy solution with critical flaws. Moreover, it incurs ~30-50% higher CPU overhead than virtiofs for sequential I/O due to constant context switching between user and kernel space.

1.3.5.1.1 Access Mode Options for virtiofs #

The accessmode attribute in the <filesystem> element defines how guest file permissions map to host permissions. Only two values are valid:

| accessmode | Description | Security implications |
|---|---|---|
| mapped | The default mode. Maps guest UIDs/GIDs to host UIDs/GIDs using a translation table. Guest files appear as if owned by a dedicated "virtiofs" user on the host (typically UID 1000). Example: Guest user [...] | Recommended for all environments. Prevents guest users from directly accessing host user accounts. Does not require matching host users. |
| passthrough | Guest UIDs/GIDs are used directly on the host. The guest must have matching users on the host. Example: Guest user [...] | Only for trusted guests (for example, same-tenant cloud environments). Requires matching host users (for example, host must have [...]). Security risk: a compromised guest can directly access host user accounts. |
| none | Invalid value (common documentation error). Always use [...] | Causes immediate configuration failure. |

Key configuration rule: accessmode='mapped' must match the host's security_model=mapped in virtiofsd. Mismatched modes cause mount failures with errors like:

Failed to set security context: Operation not permitted.

1.3.5.2 Guest Configuration #

On the guest, load the kernel module and mount the file system:

The virtiofs module should be loaded automatically. If it is not, load it manually:

# modprobe virtiofs

Now you can mount the target directory on your VM Guest:

# mount -t virtiofs -o dax host_tmp /mnt/hosttmp

Options:

- virtiofs: The file system type.
- dax: Enables direct access (DAX) for performance (recommended). dax cannot be used with the BTRFS file system.
- host_tmp: The target directory defined in the VM Guest configuration.

1.3.5.3 Persistent virtiofs mounts across reboots #

Add the following line to /etc/fstab:

host_tmp /mnt/hosttmp virtiofs rw,nofail 0 0

1.3.5.4 Troubleshooting Common Issues #

- Guest kernel check: verify that the virtiofs module is loaded:

# lsmod | grep virtiofs

- Permission denied: check the SELinux context (see Section 1.3.5, “VirtFS: sharing directories between host and guests”).
- Mount fails with 9p: verify that you used -t virtiofs (not 9p).
- Guest writes not syncing: add cache=none to the mount options.
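When a mount does not behave as expected, it helps to confirm which file system type the kernel actually used. In /proc/mounts, the first field is the source (for virtiofs, the tag such as host_tmp), the second the mount point and the third the file system type. The following sketch relies on that layout; list_virtiofs_mounts and is_virtiofs_mount are hypothetical helper names, and the file argument only serves testing. The findmnt -t virtiofs command from util-linux shows similar information.

```shell
# Print "TAG MOUNTPOINT" for every virtiofs mount in a mounts file.
#   $1: mounts file (default: /proc/mounts)
list_virtiofs_mounts() {
    awk '$3 == "virtiofs" { print $1, $2 }' "${1:-/proc/mounts}"
}

# Return 0 if MOUNTPOINT is mounted with virtiofs, 1 otherwise.
#   $1: mount point, for example /mnt/hosttmp
#   $2: mounts file (default: /proc/mounts)
is_virtiofs_mount() {
    awk -v mp="$1" '$2 == mp && $3 == "virtiofs" { found = 1 }
        END { exit(found ? 0 : 1) }' "${2:-/proc/mounts}"
}
```

For the example above, is_virtiofs_mount /mnt/hosttmp succeeds only if the directory was mounted with -t virtiofs.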

1.3.6 KSM: sharing memory pages between guests #

Kernel Same Page Merging (KSM) is a Linux kernel feature that merges identical memory pages from multiple running processes into one memory region. Because KVM guests run as processes under Linux, KSM provides the memory overcommit feature to hypervisors for more efficient use of memory. Therefore, if you need to run multiple virtual machines on a host with limited memory, KSM may be helpful to you.

KSM stores its status information in the files under the /sys/kernel/mm/ksm directory:

> ls -1 /sys/kernel/mm/ksm
full_scans
merge_across_nodes
pages_shared
pages_sharing
pages_to_scan
pages_unshared
pages_volatile
run
sleep_millisecs

For more information on the meaning of the /sys/kernel/mm/ksm/* files, see /usr/src/linux/Documentation/vm/ksm.txt (package kernel-source).

To use KSM, do the following.

Although SLES includes KSM support in the kernel, it is disabled by default. To enable it, run the following command:

# echo 1 > /sys/kernel/mm/ksm/run

Now run several VM Guests under KVM and inspect the content of the files pages_sharing and pages_shared, for example:

> while [ 1 ]; do cat /sys/kernel/mm/ksm/pages_shared; sleep 1; done
13522
13523
13519
13518
13520
13520
13528
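The kernel's KSM documentation describes pages_sharing as the number of additional sites sharing the merged pages, that is, how much memory is saved. Multiplying it by the page size therefore gives a rough estimate of the saving in bytes. A minimal sketch; ksm_saved_bytes is a hypothetical name, and the optional arguments only exist so the function can be tried without a running KSM.

```shell
# Estimate the memory saved by KSM in bytes as pages_sharing * page size.
#   $1: KSM sysfs directory (default: /sys/kernel/mm/ksm)
#   $2: page size in bytes (default: value reported by getconf PAGESIZE)
ksm_saved_bytes() {
    local dir="${1:-/sys/kernel/mm/ksm}" pagesize="${2:-$(getconf PAGESIZE)}"
    local sharing
    sharing=$(cat "$dir/pages_sharing") || return 1
    echo $((sharing * pagesize))
}
```

For a value in MiB, use echo $(( $(ksm_saved_bytes) / 1048576 )).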

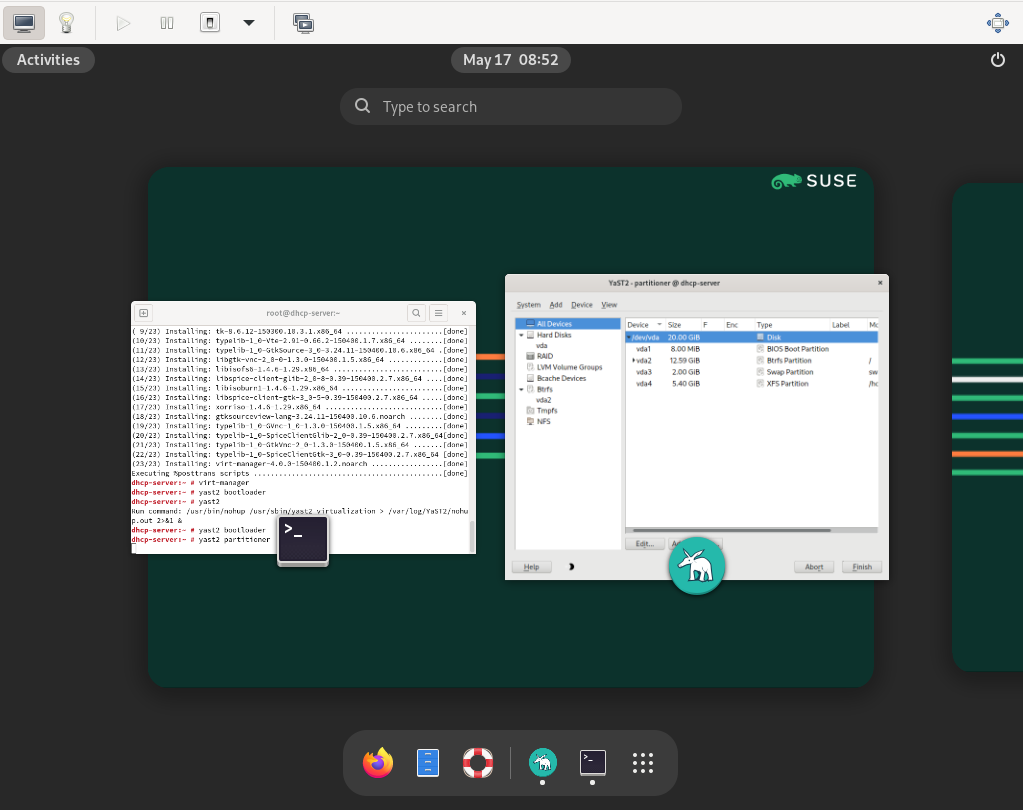

2 Guest installation #

The libvirt-based tools such as virt-manager and virt-install offer convenient interfaces to set up and manage virtual machines. They act as a kind of wrapper for the qemu-system-ARCH command. However, it is also possible to use qemu-system-ARCH directly without using libvirt-based tools.

qemu-system-ARCH and libvirt

Virtual Machines created with qemu-system-ARCH are not visible to the libvirt-based tools.

2.1 Basic installation with qemu-system-ARCH #

In the following example, a virtual machine for a SUSE Linux Enterprise Server 11 installation is created. For detailed information on the commands, refer to the respective man pages.

If you do not already have an image of a system that you want to run in a virtualized environment, you need to create one from the installation media. In such a case, you need to prepare a hard disk image, and obtain an image of the installation media or the media itself.

Create a hard disk with qemu-img.

> qemu-img create -f raw /images/sles/hda 8G

- create: The subcommand that tells qemu-img to create a new image.
- -f raw: Specifies the disk's format with the -f parameter.
- /images/sles/hda: The full path to the image file.
- 8G: The size of the image, 8 GB in this case. The image is created as a sparse image file that grows when the disk is filled with data. The specified size defines the maximum size to which the image file can grow.

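After at least one hard disk image is created, you can set up a virtual machine with qemu-system-ARCH that boots into the installation system:

# qemu-system-x86_64 -name "sles" -machine accel=kvm -M pc -m 768 \
-smp 2 -boot d \
-drive file=/images/sles/hda,if=virtio,index=0,media=disk,format=raw \
-drive "file=/isos/15 SP6-Online-ARCH-GM-media1.iso,index=1,media=cdrom" \
-net nic,model=virtio,macaddr=52:54:00:05:11:11 -net user \
-vga cirrus -device virtio-balloon

- -name "sles": Name of the virtual machine that is displayed in the window caption and can be used for the VNC server. This name must be unique.
- -M pc: Specifies the machine type. pc selects the standard PC; run qemu-system-x86_64 -M help to list all valid machine types.
- -m 768: Maximum amount of memory for the virtual machine, in megabytes.
- -smp 2: Defines an SMP system with two processors.
- -boot d: Specifies the boot order. Valid values are a and b (floppy disks), c (first hard disk), d (first CD-ROM) and n to p (network adapters). Here, the guest boots from the CD-ROM first.
- First -drive: Defines the first (index=0) hard disk. It is accessed as a paravirtualized (if=virtio) drive in raw format.
- Second -drive: The second (index=1) image drive acts as a CD-ROM. The option is quoted because the ISO file name contains a space.
- -net nic,...: Defines a paravirtualized (model=virtio) network adapter with the MAC address 52:54:00:05:11:11. Each VM Guest needs a unique MAC address. -net user attaches the adapter to user-mode networking.
- -vga cirrus: Specifies the graphic card. If you specify none, the graphic card is disabled.
- -device virtio-balloon: Defines the paravirtualized balloon device that allows dynamically changing the amount of memory (up to the maximum value specified with the parameter -m). Older QEMU versions used the -balloon virtio option, which has since been removed.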

After the installation of the guest operating system finishes, you can start the related virtual machine without the need to specify the CD-ROM device:

# qemu-system-x86_64 -name "sles" -machine type=pc,accel=kvm -m 768 \
-smp 2 -boot c \
-drive file=/images/sles/hda,if=virtio,index=0,media=disk,format=raw \
-net nic,model=virtio,macaddr=52:54:00:05:11:11 \
-vga cirrus -device virtio-balloon

2.2 Managing disk images with qemu-img #

In the previous section (see Section 2.1, “Basic installation with qemu-system-ARCH”), we used the qemu-img command to create an image of a hard disk. You can, however, use qemu-img for general disk image manipulation. This section introduces qemu-img subcommands to help manage disk images flexibly.

2.2.1 General information on qemu-img invocation #

qemu-img uses subcommands (like zypper does) to do specific tasks. Each subcommand understands a different set of options. Certain options are general and used by more of these subcommands, while others are unique to the related subcommand. See the qemu-img man page (man 1 qemu-img) for a list of all supported options.

qemu-img uses the following general syntax:

> qemu-img subcommand [options]

and supports the following subcommands:

- create: Creates a new disk image on the file system.
- check: Checks an existing disk image for errors.
- compare: Checks whether two images have the same content.
- map: Dumps the allocation metadata of the image and its backing file chain.
- amend: Modifies the options specific to the image format for the image file.
- convert: Converts an existing disk image to a new one in a different format.
- info: Displays information about the relevant disk image.
- snapshot: Manages snapshots of existing disk images.
- commit: Writes the changes recorded in an image into its backing file.
- rebase: Changes the backing file of an existing image.
- resize: Increases or decreases the size of an existing image.

2.2.2 Creating, converting, and checking disk images #

This section describes how to create disk images, check their condition, convert a disk image from one format to another, and get detailed information about a particular disk image.

2.2.2.1 qemu-img create #

Use qemu-img create to create a new disk image for your VM Guest operating system. The command uses the following syntax:

> qemu-img create -f fmt -o options fname size

- fmt: The format of the target image, for example raw or qcow2.
- options: Certain image formats support additional options to be passed on the command line. You can specify them here with the -o option.
- fname: Path to the target disk image to be created.
- size: Size of the target disk image (if not already specified with the -o size=SIZE option).

To create a new disk image sles.raw in the directory /images growing up to a maximum size of 4 GB, run the following command:

> qemu-img create -f raw -o size=4G /images/sles.raw
Formatting '/images/sles.raw', fmt=raw size=4294967296

> ls -l /images/sles.raw
-rw-r--r-- 1 tux users 4294967296 Nov 15 15:56 /images/sles.raw

> qemu-img info /images/sles.raw
image: /images/sles.raw
file format: raw
virtual size: 4.0G (4294967296 bytes)
disk size: 0

As you can see, the virtual size of the newly created image is 4 GB, but the actual reported disk size is 0, as no data has been written to the image yet.
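The difference between the virtual size and the allocated disk size of a raw image is the same one that standard tools show for any sparse file. As a sketch that does not need qemu-img, the following function uses GNU coreutils stat to print the apparent size and the space actually allocated; sparse_sizes is a hypothetical helper name, and the allocated value depends on the underlying file system.

```shell
# Print "APPARENT_BYTES ALLOCATED_BYTES" for a file using GNU stat:
# %s is the apparent size, %b the number of allocated blocks and
# %B the size in bytes of each block counted by %b.
sparse_sizes() {
    local out
    out=$(stat -c '%s %b %B' "$1") || return 1
    set -- $out
    echo "$1 $(($2 * $3))"
}
```

For example, after truncate -s 4G /tmp/sparse.img, sparse_sizes /tmp/sparse.img typically reports an apparent size of 4294967296 bytes and an allocated size of 0 on file systems that support sparse files.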

If you need to create a disk image on the Btrfs file system, you can use nocow=on to reduce the performance overhead created by the copy-on-write feature of Btrfs:

> qemu-img create -o nocow=on test.img 8G

If, however, you want to use copy-on-write, for example, for creating snapshots or sharing them across virtual machines, then leave the command line without the nocow option.

2.2.2.2 qemu-img convert #

Use qemu-img convert to convert disk images to another format. To get a complete list of image formats supported by QEMU, run qemu-img -h and look at the last line of the output. The command uses the following syntax:

> qemu-img convert -c -f fmt -O out_fmt -o options fname out_fname

- -c: Applies compression to the target disk image. Not all target formats support compression.
- -f fmt: The format of the source disk image. It is normally autodetected and can therefore be omitted.
- -O out_fmt: The format of the target disk image.
- -o options: Specify additional options relevant to the target image format. Use -o help to view the options supported by the target format.
- fname: Path to the source disk image to be converted.
- out_fname: Path to the converted target disk image.

> qemu-img convert -O vmdk /images/sles.raw \
/images/sles.vmdk

> ls -l /images/
-rw-r--r-- 1 tux users 4294967296 Nov 16 10:50 sles.raw
-rw-r--r-- 1 tux users 2574450688 Nov 16 14:18 sles.vmdk

To see a list of options relevant for the selected target image format, run the following command (replace vmdk with your image format):

> qemu-img convert -O vmdk /images/sles.raw /images/sles.vmdk -o help
Supported options:
size Virtual disk size
backing_file File name of a base image
compat6 VMDK version 6 image
subformat VMDK flat extent format, can be one of {monolithicSparse \
(default) | monolithicFlat | twoGbMaxExtentSparse | twoGbMaxExtentFlat}
scsi SCSI image

2.2.2.3 qemu-img check #

Use qemu-img check to check the existing disk image for errors. Not all disk image formats support this feature. The command uses the following syntax:

> qemu-img check -f fmt fname

- fmt: The format of the source disk image. It is normally autodetected and can therefore be omitted.
- fname: Path to the source disk image to be checked.

If no error is found, the command reports that no errors were found on the image. Otherwise, the type and number of errors found are shown.

> qemu-img check -f qcow2 /images/sles.qcow2
ERROR: invalid cluster offset=0x2af0000
[...]
ERROR: invalid cluster offset=0x34ab0000
378 errors were found on the image.

2.2.2.4 Increasing the size of an existing disk image #

When creating a new image, you must specify its maximum size before the image is created (see Section 2.2.2.1, “qemu-img create”). After you have installed the VM Guest and have been using it for a certain time, the initial size of the image may no longer be sufficient. In that case, add more space to it.

To increase the size of an existing disk image by 2 gigabytes, use:

> qemu-img resize /images/sles.raw +2G

You can resize the disk image using the formats raw and qcow2. To resize an image in another format, convert it to a supported format with qemu-img convert first.

The image now contains an empty space of 2 GB after the final partition. You can resize existing partitions or add new ones.

2.2.2.5 Advanced options for the qcow2 file format #

qcow2 is the main disk image format used by QEMU. Its size grows on demand, and disk space is only allocated when it is needed by the virtual machine.

A qcow2-formatted file is organized in units of constant size. These units are called clusters. Viewed from the guest side, the virtual disk is also divided into clusters of the same size. QEMU defaults to 64 kB clusters, but you can specify a different value when creating a new image:

> qemu-img create -f qcow2 -o cluster_size=128K virt_disk.qcow2 4G

A qcow2 image contains a set of tables organized in two levels that are called the L1 and L2 tables. There is just one L1 table per disk image, while there can be many L2 tables depending on how big the image is.

To read or write data to the virtual disk, QEMU needs to read its corresponding L2 table to find out the relevant data location. Because reading the table for each I/O operation consumes system resources, QEMU keeps a cache of L2 tables in memory to speed up disk access.

2.2.2.5.1 Choosing the right cache size #

The cache size relates to the amount of allocated space. L2 cache can map the following amount of virtual disk:

disk_size = l2_cache_size * cluster_size / 8

With the default 64 kB of cluster size, that is

disk_size = l2_cache_size * 8192

Therefore, to have a cache that maps n gigabytes of disk space with the default cluster size, you need

l2_cache_size = disk_size_GB * 131072

QEMU uses 1 MB (1048576 bytes) of L2 cache by default. Following the above formulas, 1 MB of L2 cache covers 8 GB (1048576 / 131072) of virtual disk. This means that the performance is fine with the default L2 cache size if your virtual disk size is up to 8 GB. For larger disks, you can speed up disk access by increasing the L2 cache size.
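The formulas above can be sketched as a small shell calculator. It assumes whole gigabytes (GiB) of virtual disk, implements l2_cache_size = disk_size * 8 / cluster_size, and rounds the result up to a multiple of the cluster size, which the cache options require. l2_cache_bytes is a hypothetical helper name, not part of QEMU.

```shell
# Compute the L2 cache size (bytes) needed to map a whole qcow2 image:
#   l2_cache_size = disk_size * 8 / cluster_size
# The result is rounded up to a multiple of the cluster size.
#   $1: virtual disk size in GiB
#   $2: cluster size in bytes (default: 65536, the qcow2 default)
l2_cache_bytes() {
    local disk_gib="$1" cluster="${2:-65536}"
    local disk_bytes=$((disk_gib * 1024 * 1024 * 1024))
    # Each 8-byte L2 entry maps one cluster of the virtual disk.
    local needed=$((disk_bytes * 8 / cluster))
    # Round up to the next multiple of the cluster size.
    echo $(( (needed + cluster - 1) / cluster * cluster ))
}
```

For example, l2_cache_bytes 8 prints 1048576, the 1 MB default discussed above, and l2_cache_bytes 100 prints 13107200 (12.5 MB) for a 100 GB image. With 128 kB clusters, l2_cache_bytes 100 131072 halves the value to 6553600.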

2.2.2.5.2 Configuring the cache size #

You can use the -drive option on the QEMU command line to specify the cache size. Alternatively, when communicating via QMP, use the blockdev-add command. For more information on QMP, see Section 3.11, “QMP - QEMU machine protocol”.

The following options configure the cache size for the virtual guest:

- l2-cache-size

The maximum size of the L2 table cache.

- refcount-cache-size

The maximum size of the refcount block cache. For more information on refcount, see https://raw.githubusercontent.com/qemu/qemu/master/docs/qcow2-cache.txt.

- cache-size

The maximum size of both caches combined.

When specifying values for the options above, be aware of the following:

The size of both the L2 and refcount block caches needs to be a multiple of the cluster size.

If you only set one option, QEMU automatically adjusts the other options so that the L2 cache is 4 times bigger than the refcount cache.
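To catch values that violate the first rule before starting a guest, you can check them against the cluster size of the image. A minimal sketch; check_qcow2_cache_sizes is a hypothetical helper name, and you need to supply the cluster size yourself (65536 bytes unless the image was created with a different cluster_size).

```shell
# Check that qcow2 cache sizes are multiples of the cluster size.
#   $1: cluster size in bytes
#   $2...: cache sizes in bytes (for example l2-cache-size, refcount-cache-size)
# Returns 1 and reports each offending value on stderr if any check fails.
check_qcow2_cache_sizes() {
    local cluster="$1" size rc=0
    shift
    for size in "$@"; do
        if [ $((size % cluster)) -ne 0 ]; then
            echo "$size is not a multiple of the cluster size $cluster" >&2
            rc=1
        fi
    done
    return "$rc"
}
```

The values used in the following example, 4194304 and 262144, both pass check_qcow2_cache_sizes 65536 4194304 262144, while a value such as 100000 is reported as invalid.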

The refcount cache is used much less often than the L2 cache; therefore, you can keep it small:

# qemu-system-ARCH [...] \
-drive file=disk_image.qcow2,l2-cache-size=4194304,refcount-cache-size=262144

2.2.2.5.3 Reducing memory usage #

The larger the cache, the more memory it consumes. There is a separate L2 cache for each qcow2 file. When using a lot of big disk images, you may need a considerably large amount of memory. Memory consumption is even worse if you add backing files (Section 2.2.4, “Manipulate disk images effectively”) and snapshots (see Section 2.2.3, “Managing snapshots of virtual machines with qemu-img”) to the guest's setup chain.

This is why QEMU introduced the cache-clean-interval setting. It defines an interval in seconds after which all cache entries that have not been accessed are removed from memory.

The following example removes all unused cache entries every 10 minutes:

# qemu-system-ARCH [...] -drive file=hd.qcow2,cache-clean-interval=600

Setting the interval to 0 disables this feature.

2.2.3 Managing snapshots of virtual machines with qemu-img #

Virtual Machine snapshots are snapshots of the complete environment in which a VM Guest is running. The snapshot includes the state of the processor (CPU), memory (RAM), devices, and all writable disks.

Snapshots are helpful when you need to save your virtual machine in a particular state. For example, after you have configured network services on a virtualized server and want to quickly start the virtual machine in the same state you last saved it. Or you can create a snapshot after the virtual machine has been powered off to create a backup state before you try something experimental and make the VM Guest unstable. This section introduces the latter case, while the former is described in Section 3, “Virtual machine administration using QEMU monitor”.

To use snapshots, your VM Guest must contain at least one writable hard disk image in qcow2 format. This device is normally the first virtual hard disk.

Virtual Machine snapshots are created with the savevm command in the interactive QEMU monitor. To make identifying a particular snapshot easier, you can assign it a tag. For more information on the QEMU monitor, see Section 3, “Virtual machine administration using QEMU monitor”.

Once your qcow2 disk image contains saved snapshots, you can inspect them with the qemu-img snapshot command.

Do not create or delete virtual machine snapshots with the qemu-img snapshot command while the virtual machine is running. Otherwise, you may damage the disk image with the state of the virtual machine saved.

2.2.3.1 Listing existing snapshots #

Use qemu-img snapshot -l DISK_IMAGE to view a list of all existing snapshots saved in the DISK_IMAGE image. You can get the list even while the VM Guest is running.

> qemu-img snapshot -l /images/sles.qcow2
Snapshot list:
ID TAG VM SIZE DATE VM CLOCK
1 booting 4.4M 2013-11-22 10:51:10 00:00:20.476
2 booted 184M 2013-11-22 10:53:03 00:02:05.394
3 logged_in 273M 2013-11-22 11:00:25 00:04:34.843
4 ff_and_term_running 372M 2013-11-22 11:12:27 00:08:44.965

- ID: Unique auto-incremented identification number of the snapshot.
- TAG: Unique description string of the snapshot. It is meant as a human-readable version of the ID.
- VM SIZE: The disk space occupied by the snapshot. The more memory is consumed by running applications, the bigger the snapshot is.
- DATE: Time and date the snapshot was created.
- VM CLOCK: The current state of the virtual machine's clock.

2.2.3.2 Creating snapshots of a powered-off virtual machine #

Use qemu-img snapshot -c SNAPSHOT_TITLE DISK_IMAGE to create a snapshot of the current state of a virtual machine that was previously powered off.

> qemu-img snapshot -c backup_snapshot /images/sles.qcow2
> qemu-img snapshot -l /images/sles.qcow2
Snapshot list:
ID TAG VM SIZE DATE VM CLOCK
1 booting 4.4M 2013-11-22 10:51:10 00:00:20.476
2 booted 184M 2013-11-22 10:53:03 00:02:05.394
3 logged_in 273M 2013-11-22 11:00:25 00:04:34.843
4 ff_and_term_running 372M 2013-11-22 11:12:27 00:08:44.965
5 backup_snapshot 0 2013-11-22 14:14:00 00:00:00.000

If something breaks in your VM Guest and you need to restore the state of the saved snapshot (ID 5 in our example), power off your VM Guest and execute the following command:

> qemu-img snapshot -a 5 /images/sles.qcow2

The next time you run the virtual machine with qemu-system-ARCH, it will be in the state of snapshot number 5.

The qemu-img snapshot -c command is not related to the savevm command of QEMU monitor (see Section 3, “Virtual machine administration using QEMU monitor”). For example, you cannot apply a snapshot with qemu-img snapshot -a on a snapshot created with savevm in QEMU's monitor.

2.2.3.3 Deleting snapshots #

Use qemu-img snapshot -d SNAPSHOT_ID DISK_IMAGE to delete old or unneeded snapshots of a virtual machine. This saves disk space inside the qcow2 disk image, as the space occupied by the snapshot data is freed:

> qemu-img snapshot -d 2 /images/sles.qcow2

2.2.4 Manipulate disk images effectively #

Imagine the following real-life situation: you are a server administrator who runs and manages several virtualized operating systems. One group of these systems is based on one specific distribution, while another group (or groups) is based on different versions of the distribution or even on a different (and maybe non-Unix) platform. To make the case even more complex, individual virtual guest systems based on the same distribution differ according to the department and deployment. A file server typically uses a different setup and services than a Web server does, while both may still be based on SUSE® Linux Enterprise Server.

With QEMU it is possible to create “base” disk images. You can use them as template virtual machines. These base images save you plenty of time because you do not need to install the same operating system more than once.

2.2.4.1 Base and derived images #

First, build a disk image as usual and install the target system on it. For more information, see Section 2.1, “Basic installation with qemu-system-ARCH” and Section 2.2.2, “Creating, converting, and checking disk images”. Then build a new image that uses the first one as its base image. The base image is also called a backing file. After your new derived image is built, never boot the base image again; boot the derived image instead. Several derived images may depend on one base image at the same time. Therefore, changing the base image can corrupt the derived images that depend on it. While you use your derived image, QEMU writes changes to it and uses the base image only for reading.

It is a good practice to create a base image from a freshly installed (and, if needed, registered) operating system with no patches applied and no additional applications installed or removed. Later on, you can create another base image with the latest patches applied and based on the original base image.

2.2.4.2 Creating derived images #

While you can use the raw format for base images, you cannot use it for derived images because the raw format does not support the backing_file option. Use, for example, the qcow2 format for the derived images.

For example, /images/sles_base.raw is the base image holding a freshly installed system.

> qemu-img info /images/sles_base.raw

image: /images/sles_base.raw

file format: raw

virtual size: 4.0G (4294967296 bytes)

disk size: 2.4G

The image's reserved size is 4 GB, the actual size is 2.4 GB, and its format is raw. Create an image derived from the /images/sles_base.raw base image with:

> qemu-img create -f qcow2 /images/sles_derived.qcow2 \
  -o backing_file=/images/sles_base.raw,backing_fmt=raw

Formatting '/images/sles_derived.qcow2', fmt=qcow2 size=4294967296 \
backing_file='/images/sles_base.raw' encryption=off cluster_size=0

Look at the derived image details:

> qemu-img info /images/sles_derived.qcow2

image: /images/sles_derived.qcow2

file format: qcow2

virtual size: 4.0G (4294967296 bytes)

disk size: 140K

cluster_size: 65536

backing file: /images/sles_base.raw \
(actual path: /images/sles_base.raw)

Although the reserved size of the derived image is the same as the size of the base image (4 GB), the actual size is only 140 KB. This is because only the changes made to the system inside the derived image are saved. Run the derived virtual machine, register it if needed, and apply the latest patches. Make any other changes to the system, such as removing unneeded software packages or installing new ones. Then shut the VM Guest down and examine its details once more:

> qemu-img info /images/sles_derived.qcow2

image: /images/sles_derived.qcow2

file format: qcow2

virtual size: 4.0G (4294967296 bytes)

disk size: 1.1G

cluster_size: 65536

backing file: /images/sles_base.raw \

(actual path: /images/sles_base.raw)

The disk size value has grown to 1.1 GB, which is the disk space occupied by the changes on the file system compared to the base image.

2.2.4.3 Rebasing derived images #

After you have modified the derived image (applied patches, installed specific applications, changed environment settings, etc.), it reaches the desired state. At that point, you can merge the original base image and the derived image to create a new base image.

Your original base image (/images/sles_base.raw) holds a freshly installed system. It can serve as a template for new, modified base images. A new base image can contain the same system as the first one plus, for example, all security and update patches. After you have created this new base image, you can also use it as a template for more specialized derived images. The new base image becomes independent of the original one. The process of creating base images from derived ones is called rebasing (not to be confused with the qemu-img rebase command, which changes the backing file of an existing image):

> qemu-img convert /images/sles_derived.qcow2 \

-O raw /images/sles_base2.raw

This command creates the new base image /images/sles_base2.raw in the raw format.

> qemu-img info /images/sles_base2.raw

image: /images/sles_base2.raw

file format: raw

virtual size: 4.0G (4294967296 bytes)

disk size: 2.8G

The new image is 0.4 GB larger than the original base image. It uses no backing file, and you can easily create new derived images based on it. This lets you create a sophisticated hierarchy of virtual disk images for your organization, saving a lot of time and work.

2.2.4.4 Mounting an image on a VM Host Server #

It can be useful to mount a virtual disk image on the host system. Linux systems can mount an internal partition of a raw disk image using a loopback device. The first example procedure is more complex but more illustrative, while the second one is straightforward:

Set a loop device on the disk image whose partition you want to mount.

> sudo losetup /dev/loop0 /images/sles_base.raw

Find the sector size and the starting sector number of the partition you want to mount.

> sudo fdisk -lu /dev/loop0
Disk /dev/loop0: 4294 MB, 4294967296 bytes
255 heads, 63 sectors/track, 522 cylinders, total 8388608 sectors
Units = sectors of 1 * 512 = 512 bytes
Disk identifier: 0x000ceca8

      Device Boot      Start         End      Blocks   Id  System
/dev/loop0p1              63     1542239      771088+  82  Linux swap
/dev/loop0p2   *     1542240     8385929     3421845   83  Linux

Calculate the partition start offset:

sector_size * sector_start = 512 * 1542240 = 789626880

Delete the loop device and mount the partition inside the disk image with the calculated offset on a prepared directory.

> sudo losetup -d /dev/loop0
> sudo mount -o loop,offset=789626880 \
  /images/sles_base.raw /mnt/sles/
> ls -l /mnt/sles/
total 112
drwxr-xr-x  2 root root  4096 Nov 16 10:02 bin
drwxr-xr-x  3 root root  4096 Nov 16 10:27 boot
drwxr-xr-x  5 root root  4096 Nov 16 09:11 dev
[...]
drwxrwxrwt 14 root root  4096 Nov 24 09:50 tmp
drwxr-xr-x 12 root root  4096 Nov 16 09:16 usr
drwxr-xr-x 15 root root  4096 Nov 16 09:22 var

Copy one or more files onto the mounted partition and unmount it when finished.

> sudo cp /etc/X11/xorg.conf /mnt/sles/root/tmp
> ls -l /mnt/sles/root/tmp
> sudo umount /mnt/sles/
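You can script the offset calculation from the procedure above instead of doing it by hand. The following is a minimal sketch. The function name partition_offset is hypothetical. It assumes fdisk's listing format: a "Units" line that states the sector size, and one line per partition with an optional "*" boot flag before the Start column. If no Units line is found, it falls back to 512-byte sectors.

```shell
#!/bin/sh
# partition_offset PARTITION
# Reads "fdisk -lu" output on standard input and prints the byte offset
# of PARTITION (for example /dev/loop0p2), that is sector_size * start.
# The sector size is taken from the "Units" line (older "Units = ..." and
# newer "Units: ..." wording); 512 bytes is assumed if none is found.
partition_offset() {
    awk -v part="$1" '
        /^Units/ {
            # the sector size is the number that follows an "=" sign
            for (i = 1; i < NF; i++)
                if ($i == "=" && $(i + 1) ~ /^[0-9]+$/) size = $(i + 1)
        }
        $1 == part {
            # the optional "*" Boot flag shifts the Start column by one
            start = ($2 == "*") ? $3 : $2
            found = 1
        }
        END {
            if (!found) exit 1
            if (size == "") size = 512
            printf "%.0f\n", size * start
        }
    '
}

# Demonstration on the relevant lines of the fdisk output above
sample='Units = sectors of 1 * 512 = 512 bytes
Device Boot Start End Blocks Id System
/dev/loop0p1 63 1542239 771088+ 82 Linux swap
/dev/loop0p2 * 1542240 8385929 3421845 83 Linux'

printf '%s\n' "$sample" | partition_offset /dev/loop0p2
```

Used with the real tools, the calculation and the mount could look like this:

> offset=$(sudo fdisk -lu /dev/loop0 | partition_offset /dev/loop0p2)
> sudo losetup -d /dev/loop0
> sudo mount -o loop,offset="$offset" /images/sles_base.raw /mnt/sles/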

Never mount a partition of an image of a running virtual machine in read-write mode. This could corrupt the partition and break the whole VM Guest.

3 Virtual machine administration using QEMU monitor #

When a virtual machine is invoked by the qemu-system-ARCH command, for example, qemu-system-x86_64, a monitor console is provided for interacting with the user. Using the commands available in the monitor console, you can inspect the running operating system, change removable media, take screenshots or audio grabs, and control other aspects of the virtual machine.

The following sections list selected useful QEMU monitor commands and their purpose. To get the full list, enter help in the QEMU monitor command line.

3.1 Accessing monitor console #


You can access the monitor console only if you started the virtual machine directly with the qemu-system-ARCH command and are viewing its graphical output in a built-in QEMU window.

If you started the virtual machine with libvirt (for example, using virt-manager) and are viewing its output via VNC or Spice sessions, you cannot access the monitor console directly. You can, however, send monitor commands to the virtual machine via virsh:

# virsh qemu-monitor-command DOMAIN --hmp "COMMAND"

The way you access the monitor console depends on which display device you use to view the output of a virtual machine. Find more details about displays in Section 4.3.2.2, “Display options”.

For example, to view the monitor while the -display gtk option is in use, press Ctrl–Alt–2. Similarly, when the -nographic option is in use, you can switch to the monitor console by pressing Ctrl–A and then C.

To get help while using the console, use help or ?. To get help for a specific command, use help COMMAND.

3.2 Getting information about the guest system #

To get information about the guest system, use info. If used without any option, a list of possible options is printed. Options determine which part of the system is analyzed:

info version: Shows the version of QEMU.

info commands: Lists available QMP commands.

info network: Shows the network state.

info chardev: Shows the character devices.

info block: Information about block devices, such as hard disks, floppy drives, or CD-ROMs.

info blockstats: Read and write statistics on block devices.

info registers: Shows the CPU registers.

info cpus: Shows information about available CPUs.

info history: Shows the command-line history.

info irq: Shows the interrupt statistics.

info pic: Shows the i8259 (PIC) state.

info pci: Shows the PCI information.

info tlb: Shows virtual to physical memory mappings.

info mem: Shows the active virtual memory mappings.

info jit: Shows dynamic compiler information.

info kvm: Shows the KVM information.

info numa: Shows the NUMA information.

info usb: Shows the guest USB devices.

info usbhost: Shows the host USB devices.

info profile: Shows the profiling information.

info capture: Shows the capture (audio grab) information.

info snapshots: Shows the currently saved virtual machine snapshots.

info status: Shows the current virtual machine status.

info mice: Shows which guest mice are receiving events.

info vnc: Shows the VNC server status.

info name: Shows the current virtual machine name.

info uuid: Shows the current virtual machine's UUID.

info usernet: Shows the user network stack connection states.

info migrate: Shows the migration status.

info balloon: Shows the balloon device information.

info qtree: Shows the device tree.

info qdm: Shows the qdev device model list.

info roms: Shows the ROMs.

info migrate_cache_size: Shows the current migration xbzrle (“Xor Based Zero Run Length Encoding”) cache size.

info migrate_capabilities: Shows the status of the multiple migration capabilities, such as xbzrle compression.

info mtree: Shows the VM Guest memory hierarchy.

info trace-events: Shows available trace-events and their status.

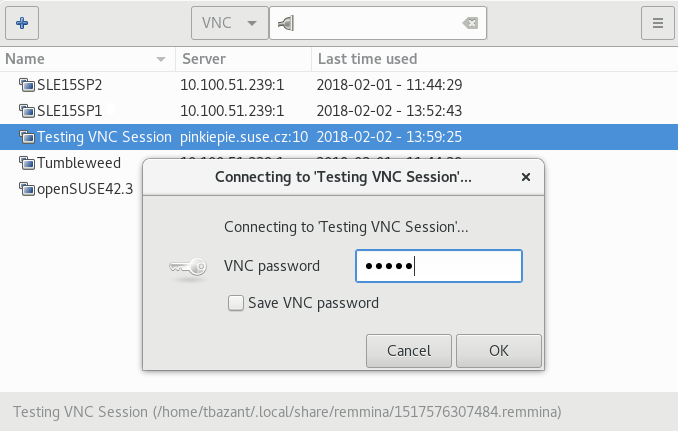

3.3 Changing VNC password #

To change the VNC password, use the change vnc password command and enter the new password:

(qemu) change vnc password
Password: ********
(qemu)

3.4 Managing devices #

To add a new disk while the guest is running (hotplug), use the drive_add and device_add commands.

First define a new drive to be added as a device to bus 0:

(qemu) drive_add 0 if=none,file=/tmp/test.img,format=raw,id=disk1
OK

You can confirm your new device by querying the block subsystem:

(qemu) info block
[...]
disk1: removable=1 locked=0 tray-open=0 file=/tmp/test.img ro=0 drv=raw \
encrypted=0 bps=0 bps_rd=0 bps_wr=0 iops=0 iops_rd=0 iops_wr=0

After the new drive is defined, it needs to be connected to a device so that the guest can see it. The typical device would be a virtio-blk-pci or scsi-disk. To get the full list of available values, run:

(qemu) device_add ?
name "VGA", bus PCI
name "usb-storage", bus usb-bus
[...]
name "virtio-blk-pci", bus virtio-bus

Now add the device:

(qemu) device_add virtio-blk-pci,drive=disk1,id=myvirtio1

Confirm the result with:

(qemu) info pci

[...]

Bus 0, device 4, function 0:

SCSI controller: PCI device 1af4:1001

IRQ 0.

BAR0: I/O at 0xffffffffffffffff [0x003e].

BAR1: 32 bit memory at 0xffffffffffffffff [0x00000ffe].

id "myvirtio1"

Devices added with the device_add command can be removed from the guest with device_del. Enter help device_del on the QEMU monitor command line for more information.
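When you hot-plug disks repeatedly, it is easy to mismatch the id given to drive_add with the drive= value given to device_add. The following minimal sketch prints a matching pair of monitor commands for a raw image, following the sequence above. The function name hotplug_cmds and the NAME-dev naming scheme for the device id are hypothetical choices, not QEMU conventions.

```shell
#!/bin/sh
# hotplug_cmds IMAGE NAME
# Prints the drive_add/device_add pair from the procedure above, using
# NAME as the drive id and NAME-dev as the device id, so that the
# drive= property of the device always refers to the drive just defined.
hotplug_cmds() {
    image=$1
    name=$2
    printf 'drive_add 0 if=none,file=%s,format=raw,id=%s\n' "$image" "$name"
    printf 'device_add virtio-blk-pci,drive=%s,id=%s-dev\n' "$name" "$name"
}

hotplug_cmds /tmp/test.img disk1
```

Enter the printed lines at the (qemu) prompt one after the other. To remove the disk again, pass the device id to device_del, for example device_del disk1-dev.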

To release the device or file connected to the removable media device, use the eject DEVICE command. Use the optional -f option to force ejection.

To change removable media (like CD-ROMs), use the change DEVICE command. The name of the removable media can be determined using the info block command:

(qemu) info block
ide1-cd0: type=cdrom removable=1 locked=0 file=/dev/sr0 ro=1 drv=host_device
(qemu) change ide1-cd0 /path/to/image

3.5 Controlling keyboard and mouse #

You can use the monitor console to emulate keyboard and mouse input if necessary. For example, if your graphical user interface intercepts certain key combinations at a low level (such as Ctrl–Alt–F1 in X Window System), you can still enter them using the sendkey KEYS command:

(qemu) sendkey ctrl-alt-f1

To list the key names used in the KEYS option, enter sendkey and press Tab.

To control the mouse, the following commands can be used:

mouse_move DX DY [DZ]: Move the active mouse pointer to the specified coordinates DX, DY with the optional scroll axis DZ.

mouse_button VAL: Change the state of the mouse buttons (1=left, 2=middle, 4=right).

mouse_set INDEX: Set which mouse device receives events. Device index numbers can be obtained with the info mice command.

3.6 Changing available memory #

If the virtual machine was started with the paravirtualized balloon device enabled (-device virtio-balloon; older QEMU versions used the -balloon virtio option for this), you can change the available memory dynamically. For more information about enabling the balloon device, see Section 2.1, “Basic installation with qemu-system-ARCH”.

To get information about the balloon device in the monitor console and to determine whether the device is enabled, use the info balloon command:

(qemu) info balloon

If the balloon device is enabled, use the balloon MEMORY_IN_MB command to set the requested amount of memory:

(qemu) balloon 400

3.7 Dumping virtual machine memory #

To save the content of the virtual machine memory to a disk or console output, use the following commands:

memsave ADDR SIZE FILENAME: Saves a virtual memory dump starting at ADDR of size SIZE to the file FILENAME.

pmemsave ADDR SIZE FILENAME: Saves a physical memory dump starting at ADDR of size SIZE to the file FILENAME.

x /FMT ADDR: Makes a virtual memory dump starting at address ADDR and formatted according to the FMT string. The FMT string consists of three parameters written together as COUNTFORMATSIZE (for example, /10xb dumps ten bytes in hexadecimal):

- The COUNT parameter is the number of items to be dumped.
- The FORMAT can be x (hex), d (signed decimal), u (unsigned decimal), o (octal), c (char), or i (assembly instruction).
- The SIZE parameter can be b (8 bits), h (16 bits), w (32 bits), or g (64 bits). On x86, h or w can be specified with the i format to select a 16-bit or 32-bit code instruction size, respectively.

xp /FMT ADDR: Makes a physical memory dump starting at address ADDR and formatted according to the FMT string. FMT takes the same COUNT, FORMAT, and SIZE parameters as for the x command.

3.8 Managing virtual machine snapshots #

Managing snapshots in QEMU monitor is not supported by SUSE yet. The information found in this section may be helpful in specific cases.

Virtual Machine snapshots are snapshots of the complete virtual machine, including the state of the CPU, RAM, and the content of all writable disks. To use virtual machine snapshots, you must have at least one non-removable and writable block device using the qcow2 disk image format.

Snapshots are helpful when you need to save your virtual machine in a particular state. For example, after you have configured network services on a virtualized server, you may want to quickly start the virtual machine in the same state that was saved last. You can also create a snapshot after the virtual machine has been powered off, to create a backup state before you try something experimental that could make the VM Guest unstable. This section introduces the former case, while the latter is described in Section 2.2.3, “Managing snapshots of virtual machines with qemu-img”.

The following commands are available for managing snapshots in QEMU monitor:

savevm NAME: Creates a new virtual machine snapshot under the tag NAME or replaces an existing snapshot.

loadvm NAME: Loads a virtual machine snapshot tagged NAME.

delvm NAME: Deletes the virtual machine snapshot tagged NAME.

info snapshots: Prints information about available snapshots.

(qemu) info snapshots
Snapshot list:
ID   TAG                  VM SIZE  DATE                 VM CLOCK
1    booting              4.4M     2013-11-22 10:51:10  00:00:20.476
2    booted               184M     2013-11-22 10:53:03  00:02:05.394
3    logged_in            273M     2013-11-22 11:00:25  00:04:34.843
4    ff_and_term_running  372M     2013-11-22 11:12:27  00:08:44.965

ID: Unique auto-incremented identification number of the snapshot.

TAG: Unique description string of the snapshot. It is meant as a human-readable version of the ID.

VM SIZE: The disk space occupied by the snapshot. The more memory is consumed by running applications, the bigger the snapshot is.

DATE: Time and date the snapshot was created.

VM CLOCK: The current state of the virtual machine's clock.

3.9 Suspending and resuming virtual machine execution #

The following commands are available for suspending and resuming virtual machines:

stop: Suspends the execution of the virtual machine.

cont: Resumes the execution of the virtual machine.

system_reset: Resets the virtual machine. The effect is similar to the reset button on a physical machine. This may leave the file system in an unclean state.

system_powerdown: Sends an ACPI shutdown request to the machine. The effect is similar to the power button on a physical machine.

q or quit: Terminates QEMU immediately.

3.10 Live migration #

The live migration process allows you to transfer any virtual machine from one host system to another host system without any interruption in availability. You can change hosts permanently or only during maintenance.

The requirements for live migration:

- libvirt requirements apply.
- Live migration is only possible between VM Host Servers with the same CPU features.
- The AHCI interface, the VirtFS feature, and the -mem-path command line option are not compatible with migration.
- The guest on the source and destination hosts must be started in the same way.
- The -snapshot qemu command line option should not be used for migration (and this qemu command line option is not supported).

The postcopy mode is not yet supported in SUSE Linux Enterprise Server. It is released as a technology preview only. For more information about postcopy, see https://wiki.qemu.org/Features/PostCopyLiveMigration.

More recommendations can be found on the following Web site: https://www.linux-kvm.org/page/Migration

The live migration process has the following steps:

- The virtual machine instance is running on the source host.
- The virtual machine is started on the destination host in frozen listening mode. The parameters used are the same as on the source host plus the -incoming tcp:IP:PORT parameter, where IP specifies the IP address and PORT specifies the port for listening to the incoming migration. If 0 is set as the IP address, the virtual machine listens on all interfaces.
- On the source host, switch to the monitor console and use the migrate -d tcp:DESTINATION_IP:PORT command to initiate the migration.
- To determine the state of the migration, use the info migrate command in the monitor console on the source host.
- To cancel the migration, use the migrate_cancel command in the monitor console on the source host.
- To set the maximum tolerable downtime for migration in seconds, use the migrate_set_downtime NUMBER_OF_SECONDS command.
- To set the maximum speed for migration in bytes per second, use the migrate_set_speed BYTES_PER_SECOND command.
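migrate_set_speed expects bytes per second, so converting from a more familiar unit is an easy place to slip. The following minimal sketch prints the destination-side option and the source-side monitor commands for the steps above, in order. The function name migration_cmds, the use of MiB/s as the input unit, and the example address and port are all hypothetical.

```shell
#!/bin/sh
# migration_cmds DEST_IP PORT SPEED_MIB
# Prints, in order, what the steps above need:
#   line 1: the option to append to the destination's command line
#   lines 2-4: the commands to enter in the source host's monitor
# SPEED_MIB is converted from MiB per second to bytes per second.
migration_cmds() {
    dest_ip=$1
    port=$2
    bytes_per_second=$(( $3 * 1024 * 1024 ))
    printf '%s\n' "-incoming tcp:0:$port"
    printf '%s\n' "migrate_set_speed $bytes_per_second"
    printf '%s\n' "migrate -d tcp:$dest_ip:$port"
    printf '%s\n' "info migrate"
}

migration_cmds 192.168.1.20 4444 100
```

The first line uses 0 as the IP address, so the destination listens on all interfaces. Replace it with a specific address if you want to restrict where it listens.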

3.11 QMP - QEMU machine protocol #

QMP is a JSON-based protocol that allows applications, such as libvirt, to communicate with a running QEMU instance. There are several ways you can access the QEMU monitor using QMP commands.

3.11.1 Access QMP via standard input/output #

The most flexible way to use QMP is by specifying the -mon option. The following example creates a QMP instance using standard input/output. In the following examples, -> marks lines with commands sent from the client to the running QEMU instance, while <- marks lines with the output returned from QEMU.

> sudo qemu-system-x86_64 [...] \
  -chardev stdio,id=mon0 \
  -mon chardev=mon0,mode=control,pretty=on
<- {
    "QMP": {
        "version": {
            "qemu": {
                "micro": 0,
                "minor": 0,
                "major": 2
            },
            "package": ""
        },
        "capabilities": [
        ]
    }
}

When a new QMP connection is established, QMP sends its greeting message and enters capabilities negotiation mode. In this mode, only the qmp_capabilities command works. To exit capabilities negotiation mode and enter command mode, the qmp_capabilities command must be issued first:

-> { "execute": "qmp_capabilities" }

<- {

"return": {

}

}

"return": {} is QMP's success response.

QMP's commands can have arguments. For example, to eject a CD-ROM drive, enter the following:

->{ "execute": "eject", "arguments": { "device": "ide1-cd0" } }

<- {

"timestamp": {

"seconds": 1410353381,

"microseconds": 763480

},

"event": "DEVICE_TRAY_MOVED",

"data": {

"device": "ide1-cd0",

"tray-open": true

}

}

{

"return": {

}

}

3.11.2 Access QMP via telnet #

Instead of the standard input/output, you can connect the QMP interface to a network socket and communicate with it via a specified port:

> sudo qemu-system-x86_64 [...] \
  -chardev socket,id=mon0,host=localhost,port=4444,server,nowait \
  -mon chardev=mon0,mode=control,pretty=on

And then run telnet to connect to port 4444:

> telnet localhost 4444

Trying ::1...

Connected to localhost.

Escape character is '^]'.

<- {

"QMP": {

"version": {

"qemu": {

"micro": 0,

"minor": 0,

"major": 2

},

"package": ""

},

"capabilities": [

]

}

}

You can create several monitor interfaces at the same time. The following example creates one HMP instance (a human monitor, which understands “normal” QEMU monitor commands) on the standard input/output, and one QMP instance on localhost port 4444:

> sudo qemu-system-x86_64 [...] \
  -chardev stdio,id=mon0 -mon chardev=mon0,mode=readline \
  -chardev socket,id=mon1,host=localhost,port=4444,server,nowait \
  -mon chardev=mon1,mode=control,pretty=on

3.11.3 Access QMP via Unix socket #

Invoke QEMU using the -qmp option and create a Unix socket:

> sudo qemu-system-x86_64 [...] \
  -qmp unix:/tmp/qmp-sock,server --monitor stdio
QEMU waiting for connection on: unix:/tmp/qmp-sock,server

To communicate with the QEMU instance via the /tmp/qmp-sock socket, use nc (see man 1 nc for more information) from another terminal on the same host:

> sudo nc -U /tmp/qmp-sock
<- {"QMP": {"version": {"qemu": {"micro": 0, "minor": 0, "major": 2} [...]
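Typing JSON by hand is error-prone, so you may prefer to generate the request lines. The following minimal sketch builds one QMP request per call. The function name qmp_cmd is hypothetical, and the arguments object is passed through without any escaping, so it must already be valid JSON.

```shell
#!/bin/sh
# qmp_cmd COMMAND [ARGUMENTS_JSON]
# Prints one QMP request on a single line. ARGUMENTS_JSON must already be
# a valid JSON object; it is passed through unchanged (no escaping).
qmp_cmd() {
    if [ -n "${2-}" ]; then
        printf '{ "execute": "%s", "arguments": %s }\n' "$1" "$2"
    else
        printf '{ "execute": "%s" }\n' "$1"
    fi
}

# The capabilities negotiation must come first, then any other commands
qmp_cmd qmp_capabilities
qmp_cmd eject '{ "device": "ide1-cd0" }'
```

To send a short scripted session through the socket, you could pipe the generated lines into nc. The trailing sleep keeps the connection open long enough to read the replies; whether you need it depends on your nc variant.

> { qmp_cmd qmp_capabilities; qmp_cmd query-status; sleep 1; } | sudo nc -U /tmp/qmp-sock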

3.11.4 Access QMP via libvirt's virsh command #

If you run your virtual machines under libvirt, you can communicate with their running guests by using the virsh qemu-monitor-command command:

> sudo virsh qemu-monitor-command vm_guest1 \
  --pretty '{"execute":"query-kvm"}'
<- {
    "return": {
        "enabled": true,
        "present": true
    },
    "id": "libvirt-8"
}

In the above example, we ran the simple command query-kvm, which checks if the host is capable of running KVM and if KVM is enabled.

To use the standard human-readable output format of QEMU instead of the JSON format, use the --hmp option. In this mode, pass the human monitor command (info kvm) instead of the QMP command name:

> sudo virsh qemu-monitor-command vm_guest1 --hmp "info kvm"

4 Running virtual machines with qemu-system #

Once you have a virtual disk image ready (for more information on disk images, see Section 2.2, “Managing disk images with qemu-img”), you can start the related virtual machine.

Section 2.1, “Basic installation with qemu-system-ARCH” introduced simple commands to install and run a VM Guest. This article focuses on a more detailed explanation of qemu-system-ARCH usage and shows solutions for more specific tasks. For a complete list of qemu-system-ARCH's options, see its man page (man 1 qemu).

4.1 Basic qemu-system-ARCH invocation #

The qemu-system-ARCH command uses the following syntax:

qemu-system-ARCH OPTIONS -drive file=DISK_IMAGE

DISK_IMAGE is the path to the disk image holding the guest system you want to virtualize.

KVM support is available only for the 64-bit Arm® architecture (AArch64). Running QEMU on the AArch64 architecture requires you to specify:

- A machine type designed for QEMU Arm® virtual machines, using the -machine virt-VERSION_NUMBER option.
- A firmware image file, using the -bios option. Alternatively, you can specify the firmware image files using -drive options, for example:

  -drive file=/usr/share/edk2/aarch64/QEMU_EFI-pflash.raw,if=pflash,format=raw \
  -drive file=/var/lib/libvirt/qemu/nvram/opensuse_VARS.fd,if=pflash,format=raw

- A CPU of the VM Host Server, using the -cpu host option (default is cortex-a15).
- The same Generic Interrupt Controller (GIC) version as the host, using the -machine gic-version=host option (default is 2).
- If a graphic mode is needed, a graphic device of type virtio-gpu-pci.

For example:

> sudo qemu-system-aarch64 [...] \
  -bios /usr/share/qemu/qemu-uefi-aarch64.bin \
  -cpu host \
  -device virtio-gpu-pci \
  -machine virt,accel=kvm,gic-version=host

4.2 General qemu-system-ARCH options #

This section introduces general qemu-system-ARCH options and options related to the basic emulated hardware, such as the virtual machine's processor, memory, model type, or time processing methods.

-name NAME_OF_GUEST: Specifies the name of the running guest system. The name is displayed in the window caption and used for the VNC server.

-boot OPTIONS: Specifies the order in which the defined drives are booted. Drives are represented by letters, where a and b stand for the floppy drives 1 and 2, c stands for the first hard disk, d stands for the first CD-ROM drive, and n to p stand for Ether-boot network adapters.

For example, qemu-system-ARCH [...] -boot order=ndc first tries to boot from the network, then from the first CD-ROM drive, and finally from the first hard disk.

-pidfile FILENAME: Stores QEMU's process identification number (PID) in a file. This is useful if you run QEMU from a script.

-nodefaults: By default, QEMU creates basic virtual devices even if you do not specify them on the command line. This option turns this feature off, and you must specify every single device manually, including graphical and network cards, parallel or serial ports, or virtual consoles. Even QEMU monitor is not attached by default.

-daemonize: “Daemonizes” the QEMU process after it is started. QEMU detaches from the standard input and standard output after it is ready to receive connections on any of its devices.

SeaBIOS is the default BIOS used. It can boot from USB devices and from any drive (CD-ROM, floppy, or hard disk). It has USB mouse and keyboard support and supports multiple VGA cards. For more information about SeaBIOS, refer to the SeaBIOS Web site.

4.2.1 Basic virtual hardware #

4.2.1.1 Machine type #

You can specify the type of the emulated machine. Run qemu-system-ARCH -M help to view a list of supported machine types.

The machine type isapc: ISA-only-PC is unsupported.

4.2.1.2 CPU model #

To specify the type of the processor (CPU) model, run qemu-system-ARCH -cpu MODEL. Use qemu-system-ARCH -cpu help to view a list of supported CPU models.

4.2.1.3 Other basic options #

The following is a list of the most commonly used options when launching qemu from the command line. To see all available options, refer to the qemu man page (man 1 qemu).

-m MEGABYTES: Specifies how many megabytes are used for the virtual RAM size.

-device virtio-balloon: Specifies a paravirtualized device to dynamically change the amount of virtual RAM assigned to the VM Guest. The upper limit is the amount of memory specified with -m. Older QEMU versions used the -balloon virtio option for this purpose.

-smp NUMBER_OF_CPUS: Specifies how many CPUs to emulate. QEMU supports up to 255 CPUs on the PC platform (up to 64 with KVM acceleration used). This option also takes other CPU-related parameters, such as the number of sockets, the number of cores per socket, or the number of threads per core.

The following is an example of a working qemu-system-ARCH command line:

> sudo qemu-system-x86_64 \
  -name "SLES 16.0" \
  -M pc-i440fx-2.7 -m 512 \
  -machine accel=kvm -cpu kvm64 -smp 2 \
  -drive format=raw,file=/images/sles.raw

-no-acpi: Disables ACPI support.

-S: QEMU starts with the CPU stopped. To start the CPU, enter c in QEMU monitor. For more information, see Section 3, “Virtual machine administration using QEMU monitor”.

4.2.2 Storing and reading the configuration of virtual devices #

-readconfig CFG_FILE: Instead of entering the device configuration options on the command line each time you want to run VM Guest, qemu-system-ARCH can read them from a file that was either previously saved with -writeconfig or edited manually.

-writeconfig CFG_FILE: Dumps the current virtual machine's device configuration to a text file. It can then be reused with the -readconfig option.

> sudo qemu-system-x86_64 -name "SLES 16.0" \
  -machine accel=kvm -M pc-i440fx-2.7 -m 512 -cpu kvm64 \
  -smp 2 /images/sles.raw -writeconfig /images/sles.cfg
(exited)
> cat /images/sles.cfg
# qemu config file

[drive]
  index = "0"
  media = "disk"
  file = "/images/sles.raw"

This way, you can manage the configuration of your virtual machines' devices in a well-organized manner.
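Because -readconfig also accepts manually edited files, you can write one from a script. The following minimal sketch mirrors the [drive] section that -writeconfig produced above. The CFG path is a placeholder. The added format key is an ordinary -drive suboption, included here as an assumption so that QEMU does not have to probe the image format.

```shell
#!/bin/sh
# Write a -readconfig file by hand. The layout mirrors the [drive] section
# that -writeconfig produced above; the format key is an extra drive option
# added here so that QEMU does not have to probe the image format.
# CFG is a placeholder path; point it wherever you keep VM configurations.
CFG=${CFG:-/tmp/sles.cfg}

cat > "$CFG" <<'EOF'
# qemu config file

[drive]
  index = "0"
  media = "disk"
  format = "raw"
  file = "/images/sles.raw"
EOF

cat "$CFG"
```

You could then start the VM Guest with the remaining options on the command line:

> sudo qemu-system-x86_64 -name "SLES 16.0" -machine accel=kvm -m 512 -readconfig /tmp/sles.cfg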

4.2.3 Guest real-time clock #

-rtc OPTIONS: Specifies the way the RTC is handled inside a VM Guest. By default, the clock of the guest is derived from that of the host system. Therefore, we recommend that the host system clock be synchronized with an accurate external clock, for example, via an NTP service.

If you need to isolate the VM Guest clock from the host one, specify clock=vm instead of the default clock=host.

You can also specify the initial time of the VM Guest's clock with the base option:

> sudo qemu-system-x86_64 [...] -rtc clock=vm,base=2010-12-03T01:02:00

Instead of a time stamp, you can specify utc or localtime. The former instructs VM Guest to start at the current UTC value (Coordinated Universal Time, see https://en.wikipedia.org/wiki/UTC), while the latter applies the local time setting.
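If the guest clock should start at a computed moment, for example one day in the past, you can generate the base= value instead of typing it. The following minimal sketch prints a complete -rtc value from a Unix timestamp. The function name rtc_arg is hypothetical. The sketch assumes GNU date (for the -d @SECONDS syntax) and assumes you want the time stamp expressed in UTC.

```shell
#!/bin/sh
# rtc_arg EPOCH_SECONDS
# Prints an -rtc value that isolates the guest clock (clock=vm) and starts
# it at the given moment, written in the base= format used above (UTC).
# Relies on GNU date's "-d @SECONDS" syntax.
rtc_arg() {
    printf 'clock=vm,base=%s\n' "$(date -u -d "@$1" +%Y-%m-%dT%H:%M:%S)"
}

# 1291338120 seconds after the epoch is the time stamp used above
rtc_arg 1291338120
```

For example, to start the guest clock one day (86400 seconds) before the current time:

> sudo qemu-system-x86_64 [...] -rtc "$(rtc_arg $(( $(date +%s) - 86400 )))"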

4.3 Using devices in QEMU #

QEMU virtual machines emulate all devices needed to run a VM Guest. QEMU supports, for example, several types of network cards, block devices (hard and removable drives), USB devices, character devices (serial and parallel ports), or multimedia devices (graphic and sound cards). This section introduces options for configuring multiple types of supported devices.

If your device, such as -drive, needs a special driver and driver properties to be set, specify them with the -device option, and identify the drive with the drive= suboption. For example:

> sudo qemu-system-x86_64 [...] -drive if=none,id=drive0,format=raw \
  -device virtio-blk-pci,drive=drive0,scsi=off ...

To get help on available drivers and their properties, use -device ? and -device DRIVER,?.

4.3.1 Block devices #

Block devices are vital for virtual machines. These are fixed or removable storage media called drives. One of the connected hard disks typically holds the guest operating system to be virtualized.

Virtual Machine drives are defined with -drive. This option has many suboptions, some of which are described in this section. For the complete list, see the man page (man 1 qemu).

Suboptions of -drive:

file=image_fname: Specifies the path to the disk image that must be used with this drive. If not specified, an empty (removable) drive is assumed.

if=drive_interface: Specifies the type of interface to which the drive is connected. Currently, only floppy, scsi, ide, or virtio are supported by SUSE. virtio defines a paravirtualized disk driver. Default is ide.

index=index_of_connector: Specifies the index number of a connector on the disk interface (see the if option) where the drive is connected. If not specified, the index is automatically incremented.

media=type: Specifies the type of media. Can be disk for hard disks, or cdrom for removable CD-ROM drives.

format=img_fmt: Specifies the format of the connected disk image. If not specified, the format is autodetected. Currently, SUSE supports the raw and qcow2 formats.

cache=method: Specifies the caching method for the drive. Possible values are unsafe, writethrough, writeback, directsync, or none. To improve performance when using the qcow2 image format, select writeback. none disables the host page cache and, therefore, is the safest option. The default for image files is writeback.

To simplify defining block devices, QEMU understands several shortcuts which you may find handy when entering the qemu-system-ARCH command line.

You can use

> sudo qemu-system-x86_64 -cdrom /images/cdrom.iso

instead of

> sudo qemu-system-x86_64 -drive format=raw,file=/images/cdrom.iso,index=2,media=cdrom

and

> sudo qemu-system-x86_64 -hda /images/image1.raw -hdb /images/image2.raw \
  -hdc /images/image3.raw -hdd /images/image4.raw

instead of

> sudo qemu-system-x86_64 -drive format=raw,file=/images/image1.raw,index=0,media=disk \
  -drive format=raw,file=/images/image2.raw,index=1,media=disk \
  -drive format=raw,file=/images/image3.raw,index=2,media=disk \
  -drive format=raw,file=/images/image4.raw,index=3,media=disk
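The correspondence between the -hda to -hdd shortcuts and the long form can be expressed as a small loop: the n-th image gets index=n and media=disk. The following sketch assumes raw images, as in the example above; expand_hd_shortcuts is a hypothetical helper that only prints the long options.

```shell
#!/usr/bin/env bash
# Hypothetical helper: expand up to four images (the -hda ... -hdd shortcuts)
# into the equivalent long -drive options. Assumes raw images.
expand_hd_shortcuts() {
  local index=0 image
  for image in "$@"; do
    if [ "$index" -gt 3 ]; then
      echo "only -hda to -hdd (four drives) have shortcuts" >&2
      return 1
    fi
    printf '%s\n' "-drive format=raw,file=${image},index=${index},media=disk"
    index=$((index + 1))
  done
}

expand_hd_shortcuts /images/image1.raw /images/image2.raw
```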

As an alternative to using disk images (see Section 2.2, “Managing disk images with qemu-img”), you can also use existing VM Host Server disks, connect them as drives, and access them from VM Guest. Use the host disk device directly instead of disk image file names.

To access the host CD-ROM drive, use

> sudo qemu-system-x86_64 [...] -drive file=/dev/cdrom,media=cdrom

To access the host hard disk, use

> sudo qemu-system-x86_64 [...] -drive file=/dev/hdb,media=disk

A host drive used by a VM Guest must not be accessed concurrently by the VM Host Server or another VM Guest.

4.3.1.1 Freeing unused guest disk space #

A sparse image file is a type of disk image file that grows in size as the user adds data to it, taking up only as much disk space as the data stored in it. For example, if you copy 1 GB of data inside the sparse disk image, its size grows by 1 GB. If you then delete, for example, 500 MB of the data, the image size does not decrease by default.

The discard=on option on the KVM command line addresses this. It tells the hypervisor to automatically free the “holes” left after data is deleted from the sparse guest image. This option is valid only for the if=scsi drive interface:

> sudo qemu-system-x86_64 [...] -drive format=img_format,file=/path/to/file.img,if=scsi,discard=on

if=scsi is not supported. This interface does not map to virtio-scsi, but rather to the lsi SCSI adapter.
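To observe on the VM Host Server the sparse-file behavior described above, the following sketch uses a plain temporary file in place of a guest image. It assumes GNU coreutils and util-linux (truncate, dd, du, stat, fallocate) and a host file system that supports hole punching. The fallocate --punch-hole step is not a QEMU command; it only approximates, on the host side, the kind of deallocation that freeing the “holes” means for a raw image file.

```shell
#!/usr/bin/env bash
# alloc_kib: space a file actually occupies on disk, in KiB.
alloc_kib() { du -k "$1" | cut -f1; }

img=$(mktemp)
truncate -s 1G "$img"            # apparent size 1 GiB, no data blocks yet
apparent=$(stat -c %s "$img")
empty=$(alloc_kib "$img")

# Write 8 MiB of data at the start; the file now occupies real space.
dd if=/dev/urandom of="$img" bs=1M count=8 conv=notrunc status=none
written=$(alloc_kib "$img")

# Deallocate the same range (--punch-hole keeps the apparent size).
fallocate --punch-hole --offset 0 --length $((8 * 1024 * 1024)) "$img"
freed=$(alloc_kib "$img")

echo "apparent=${apparent} empty=${empty}KiB written=${written}KiB freed=${freed}KiB"
rm -f "$img"
```

The apparent size stays at 1 GiB throughout, while the allocated size rises after the write and falls again once the range is deallocated.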

4.3.1.2 IOThreads #

IOThreads are dedicated event loop threads for virtio devices to perform I/O requests to improve scalability, especially on an SMP VM Host Server with SMP VM Guests using many disk devices. Instead of using QEMU's main event loop for I/O processing, IOThreads allow spreading I/O work across multiple CPUs and can improve latency when properly configured.

IOThreads are enabled by defining IOThread objects. virtio devices can then use the objects for their I/O event loops. Many virtio devices can use a single IOThread object, or virtio devices and IOThread objects can be configured in a 1:1 mapping. The following example creates a single IOThread with ID iothread0, which is then used as the event loop for two virtio-blk devices.

> sudo qemu-system-x86_64 [...] -object iothread,id=iothread0 \
  -drive if=none,id=drive0,cache=none,aio=native,format=raw,file=filename0 \
  -device virtio-blk-pci,drive=drive0,scsi=off,iothread=iothread0 \
  -drive if=none,id=drive1,cache=none,aio=native,format=raw,file=filename1 \
  -device virtio-blk-pci,drive=drive1,scsi=off,iothread=iothread0 [...]

The following qemu command line example illustrates a 1:1 virtio device to IOThread mapping:

> sudo qemu-system-x86_64 [...] -object iothread,id=iothread0 \
  -object iothread,id=iothread1 \
  -drive if=none,id=drive0,cache=none,aio=native,format=raw,file=filename0 \
  -device virtio-blk-pci,drive=drive0,scsi=off,iothread=iothread0 \
  -drive if=none,id=drive1,cache=none,aio=native,format=raw,file=filename1 \
  -device virtio-blk-pci,drive=drive1,scsi=off,iothread=iothread1 [...]
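With more than a handful of disks, the two mappings above become tedious to type. The following minimal sketch generates either layout for a list of raw images; iothread_args and its mode names shared and 1:1 are hypothetical, and the drive and device suboptions simply mirror the examples above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print IOThread and virtio-blk arguments.
#   shared  all disks use one IOThread (iothread0)
#   1:1     each disk gets its own IOThread (iothread0, iothread1, ...)
iothread_args() {
  local mode=$1
  shift
  case $mode in
    shared|1:1) ;;
    *) printf 'unknown mode: %s\n' "$mode" >&2; return 1 ;;
  esac
  [ "$mode" = shared ] && printf '%s\n' "-object iothread,id=iothread0"
  local i=0 image thread=iothread0
  for image in "$@"; do
    if [ "$mode" = "1:1" ]; then
      thread="iothread$i"
      printf '%s\n' "-object iothread,id=$thread"
    fi
    # aio=native is paired with cache=none (O_DIRECT), as in the examples above
    printf '%s\n' \
      "-drive if=none,id=drive$i,cache=none,aio=native,format=raw,file=$image" \
      "-device virtio-blk-pci,drive=drive$i,scsi=off,iothread=$thread"
    i=$((i + 1))
  done
}

iothread_args 1:1 /images/disk0.raw /images/disk1.raw
```

The shared mode keeps thread count low; the 1:1 mode spreads I/O of busy disks across CPUs, which is where IOThreads help most.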

4.3.1.3 Bio-based I/O path for virtio-blk #

For better performance of I/O-intensive applications, a new I/O path was introduced for the virtio-blk interface in kernel version 3.7. This bio-based block device driver skips the I/O scheduler, and thus shortens the I/O path in the guest and has lower latency. It is especially useful for high-speed storage devices, such as SSDs.

The driver is disabled by default. To use it, do the following:

Append virtio_blk.use_bio=1 to the kernel command line on the guest. You can do so via › › . You can also do it by editing /etc/default/grub, searching for the line that contains GRUB_CMDLINE_LINUX_DEFAULT=, and adding the kernel parameter at the end. Then run grub2-mkconfig >/boot/grub2/grub.cfg to update the GRUB 2 boot menu.

Reboot the guest with the new kernel command line active.

The bio-based virtio-blk driver does not help on slow devices such as spinning hard disks, because the benefit of I/O scheduling outweighs what the shortened bio path offers. Do not use the bio-based driver on slow devices.
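The /etc/default/grub edit from the first step can be scripted. The following minimal sketch assumes that GRUB_CMDLINE_LINUX_DEFAULT holds a double-quoted value on a single line and that GNU sed is available. add_kernel_param is a hypothetical helper; try it on a copy of the file before changing the real one.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append a kernel parameter to GRUB_CMDLINE_LINUX_DEFAULT.
# Running it twice with the same parameter leaves the file unchanged.
add_kernel_param() {
  local file=$1 param=$2
  # Nothing to do if the parameter is already on the line.
  if grep -q "^GRUB_CMDLINE_LINUX_DEFAULT=.*[\" ]${param}[\" ]" "$file"; then
    return 0
  fi
  # Insert " <param>" in front of the closing double quote.
  sed -i "s/^\(GRUB_CMDLINE_LINUX_DEFAULT=\".*\)\"\$/\1 ${param}\"/" "$file"
}
```

For example, add_kernel_param /etc/default/grub virtio_blk.use_bio=1 (run as root), followed by the grub2-mkconfig call shown above and a reboot of the guest.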

4.3.1.4 Accessing iSCSI resources directly #

QEMU now integrates with libiscsi. This allows QEMU to access iSCSI resources directly and use them as virtual machine block devices. This feature does not require any host iSCSI initiator configuration, as is needed for a libvirt iSCSI target-based storage pool setup. Instead, it directly connects guest storage interfaces to an iSCSI target LUN via the user space library libiscsi. iSCSI-based disk devices can also be specified in the libvirt XML configuration.

This feature is only available with the raw image format, as the iSCSI protocol has certain technical limitations.

The virt-manager interface does not yet expose libiscsi-based storage provisioning. Instead, configure it on the command line or by editing the guest XML directly.

The following is the QEMU command line for iSCSI connectivity:

> sudo qemu-system-x86_64 -machine accel=kvm \
  -drive file=iscsi://192.168.100.1:3260/iqn.2016-08.com.example:314605ab-a88e-49af-b4eb-664808a3443b/0,format=raw,if=none,id=mydrive,cache=none \
  -device ide-hd,bus=ide.0,unit=0,drive=mydrive ...
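The file= value in the command line above follows the layout iscsi://HOST:PORT/TARGET_IQN/LUN. The following sketch composes such a URL and the matching -drive option. iscsi_url is a hypothetical helper; the defaults of port 3260 and LUN 0 are taken from the example, not from a specification, and authentication parts of the URL are not covered.

```shell
#!/usr/bin/env bash
# Hypothetical helper: compose an iscsi:// URL for the -drive file= option.
# Usage: iscsi_url HOST [PORT] TARGET_IQN [LUN]; empty PORT means 3260.
iscsi_url() {
  local host=$1 port=${2:-3260} iqn=$3 lun=${4:-0}
  printf 'iscsi://%s:%s/%s/%s\n' "$host" "$port" "$iqn" "$lun"
}

url=$(iscsi_url 192.168.100.1 3260 iqn.2016-08.com.example:314605ab-a88e-49af-b4eb-664808a3443b 0)
printf '%s\n' "-drive file=${url},format=raw,if=none,id=mydrive,cache=none"
```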

Here is an example snippet of guest domain XML which uses the protocol-based iSCSI:

<devices>
  ...
  <disk type='network' device='disk'>
    <driver name='qemu' type='raw'/>
    <source protocol='iscsi' name='iqn.2013-07.com.example:iscsi-nopool/2'>
      <host name='example.com' port='3260'/>
    </source>
    <auth username='myuser'>
      <secret type='iscsi' usage='libvirtiscsi'/>
    </auth>
    <target dev='vda' bus='virtio'/>
  </disk>
</devices>

Contrast that with an example that uses the host-based iSCSI initiator set up by virt-manager:

<devices>
  ...
  <disk type='block' device='disk'>
    <driver name='qemu' type='raw' cache='none' io='native'/>
    <source dev='/dev/disk/by-path/scsi-0:0:0:0'/>
    <target dev='hda' bus='ide'/>
    <address type='drive' controller='0' bus='0' target='0' unit='0'/>
  </disk>
  <controller type='ide' index='0'>
    <address type='pci' domain='0x0000' bus='0x00' slot='0x01' function='0x1'/>
  </controller>
</devices>

4.3.1.5 Using RADOS block devices with QEMU #

RADOS Block Devices (RBD) store data in a Ceph cluster. They allow snapshotting, replication and data consistency. You can use an RBD from your KVM-managed VM Guests similarly to how you use other block devices.

For more details, refer to the SUSE Enterprise Storage Administration Guide, chapter Ceph as a Back-end for QEMU KVM Instance.

4.3.2 Graphic devices and display options #

This section describes QEMU options affecting the type of the emulated video card and the way the VM Guest graphical output is displayed.

4.3.2.1 Defining video cards #

QEMU uses -vga to define a video card used to display the VM Guest's graphical output. The -vga option understands the following values:

none
Disables video cards on VM Guest (no video card is emulated). You can still access the running VM Guest via the serial console.

std
Emulates a standard VESA 2.0 VBE video card. Use it if you intend to use a high display resolution on the VM Guest.

qxl
QXL is a paravirtual graphics card. It is VGA compatible (including VESA 2.0 VBE support). qxl is recommended when using the Spice protocol.

virtio
Paravirtual VGA graphics card.
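The recommendations in the list above can be encoded as a small lookup. In the following sketch, vga_option and its use-case names (serial-only, high-res, spice, paravirtual) are hypothetical labels; only the -vga values themselves come from the list.

```shell
#!/usr/bin/env bash
# Hypothetical helper: map a use case to the -vga value recommended above.
vga_option() {
  case $1 in
    serial-only) printf '%s\n' "-vga none" ;;   # no card; use the serial console
    high-res)    printf '%s\n' "-vga std" ;;    # VESA 2.0 VBE, high resolutions
    spice)       printf '%s\n' "-vga qxl" ;;    # recommended with Spice
    paravirtual) printf '%s\n' "-vga virtio" ;;
    *) printf 'unknown use case: %s\n' "$1" >&2; return 1 ;;
  esac
}

vga_option spice
```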

4.3.2.2 Display options #

The following options affect the way the VM Guest's graphical output is displayed.

-display gtk
Display video output in a GTK window. This interface provides UI elements to configure and control the VM during runtime.

-display sdl
Display video output via SDL in a separate graphics window. For more information, see the SDL documentation.

-spice option[,option[,...]]
Enables the Spice remote desktop protocol.

-display vnc
Refer to Section 4.5, “Viewing a VM Guest with VNC” for more information.

-nographic
Disables QEMU's graphical output. The emulated serial port is redirected to the console.

After starting the virtual machine with -nographic, press Ctrl–A H in the virtual console to view the list of other useful shortcuts, for example, to toggle between the console and the QEMU monitor.

> sudo qemu-system-x86_64 -hda /images/sles_base.raw -nographic
C-a h    print this help
C-a x    exit emulator
C-a s    save disk data back to file (if -snapshot)
C-a t    toggle console timestamps
C-a b    send break (magic sysrq)
C-a c    switch between console and monitor
C-a C-a  sends C-a
(pressed C-a c)

QEMU 2.3.1 monitor - type 'help' for more information
(qemu)

-no-frame
Disables decorations for the QEMU window. Convenient for a dedicated desktop workspace.

-full-screen
Starts QEMU graphical output in full-screen mode.

-no-quit
Disables the close button of the QEMU window and prevents it from being closed by force.

-alt-grab, -ctrl-grab
By default, the QEMU window releases the “captured” mouse after pressing Ctrl–Alt. You can change the key combination to either Ctrl–Alt–Shift (-alt-grab), or the right Ctrl key (-ctrl-grab).

4.3.3 USB devices #

There are two ways to create USB devices usable by the VM Guest in KVM: you can either emulate new USB devices inside a VM Guest, or assign an existing host USB device to a VM Guest. To use USB devices in QEMU, you first need to enable the generic USB driver with the -usb option. Then you can specify individual devices with the -usbdevice option.
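The ordering described above (first -usb, then one -usbdevice per device) can be captured in a short sketch. usb_args is a hypothetical helper that only prints arguments; the device specifications passed to it are examples taken from the list of supported USB device types.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print -usb followed by one -usbdevice per argument.
usb_args() {
  printf '%s\n' "-usb"              # enable the generic USB driver first
  local dev
  for dev in "$@"; do
    printf '%s\n' "-usbdevice $dev"
  done
}

usb_args tablet disk:format=raw:/virt/usb_disk.raw
```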

4.3.3.1 Emulating USB devices in VM Guest #

SUSE currently supports the following types of USB devices: disk, host, serial, braille, net, mouse, and tablet.

-usbdevice option #

disk
Emulates a mass storage device based on a file. If the optional format option is given, it is used instead of detecting the image format.

> sudo qemu-system-x86_64 [...] -usbdevice disk:format=raw:/virt/usb_disk.raw

host
Passes through the host device (identified by bus.addr).

serial
Serial converter to a host character device.

braille
Emulates a braille device using BrlAPI to display the braille output.

net
Emulates a network adapter that supports CDC Ethernet and RNDIS protocols.