5 Deployment

This section describes the steps to deploy the Rancher Kubernetes Engine solution. It covers each of the component layers, starting from a base functional proof of concept, and includes considerations for migrating toward production and guidance on scaling the solution.

5.1 Deployment overview

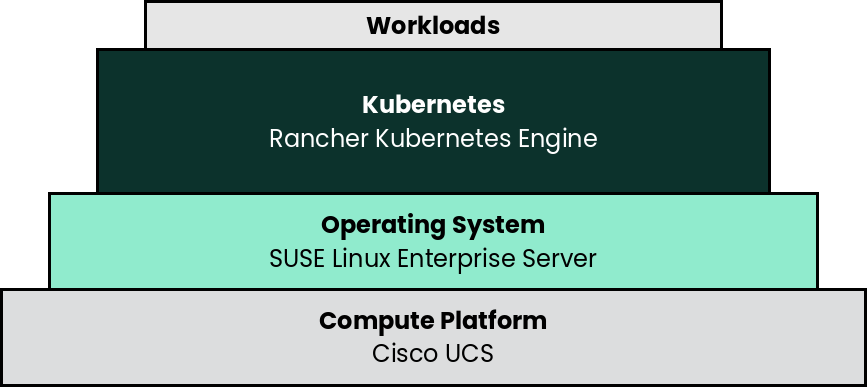

The deployment stack is represented in the following figure:

and details are covered for each layer in the following sections.

The following section’s content is ordered and described from the bottom layer up to the top.

5.2 Compute Platform

The base starting configuration can reside entirely within a single Cisco UCS server. Given the relatively small resource requirements of a Rancher Kubernetes Engine deployment, a viable approach is to deploy it as a virtual machine (VM) on the target nodes, on top of an existing hypervisor such as KVM.

- Preparation(s)

For a physical host that is racked, cabled, and powered up, like the Cisco UCS C240 SD M5 used in this deployment:

If using Cisco UCS Integrated Management Controller (IMC):

Provide a DHCP server to assign an IP address to the Cisco UCS Integrated Management Controller, or use a monitor, keyboard, and mouse for the initial IMC configuration.

Log in to the interface as admin.

On the left menu, click Storage → Cisco 12G Modular Raid Controller.

Create a virtual drive from unused physical drives. For example, pick two drives for the operating system and click the >> button. Under virtual drive properties, enter boot as the name and click Create Virtual Drive, then OK.

On the left menu, click Networking → Adapter Card MLOM.

Click the vNICs tab. The factory default configuration comes with two vNICs defined, with one vNIC assigned to port 0 and one vNIC assigned to port 1. Both vNICs are configured to allow any kind of traffic, with or without a VLAN tag. VLAN IDs must be managed at the operating system level.

Tip: A useful feature of the Cisco VIC card is the ability to define multiple virtual network adapters presented to the operating system, each configured for a specific use. For example, admin traffic should be configured with MTU 1500 to be compatible with all communication partners, whereas the network for storage-intensive traffic should be configured with MTU 9000 for best throughput. For high availability, the two network devices per traffic type are combined in a bond at the operating system layer.

These new settings become active with the next power cycle of the server. At the top right of the window, click Host Power → Power Off, then click OK in the pop-up window.

On the top menu item list, select Launch vKVM.

Select the Virtual Media tab and activate Virtual Devices, found in the Virtual Media tab.

Click the Virtual Media tab to select Map CD/DVD.

In the Virtual Media - CD/DVD window, browse to the respective operating system media, then open and use the image for a system boot.

- Deployment Process

On the respective compute module node, determine if a hypervisor is already available for the solution's virtual machines.

If this will be the first use of this node, an option is to deploy a KVM hypervisor based upon SUSE Linux Enterprise Server by following the Virtualization Guide.

Given the simplicity of the deployment, the operating system and hypervisor can be installed with the SUSE Linux Enterprise Server ISO media and the Cisco IMC virtual media and virtual console methodology.

Then for the solution VM, use the hypervisor user interface to allocate the necessary CPU, memory, disk and networking as noted in the SUSE Rancher hardware requirements.

- Deployment Consideration(s)

To further optimize deployment factors, leverage the following practices:

To monitor and operate a Cisco UCS server from Intersight, the first step is to claim the device. The following procedure provides the steps to claim the Cisco UCS C240 server manually in Intersight.

Log in to the Intersight web interface and navigate to Admin → Targets.

On the top right corner of the window, click Claim a New Target.

In the next window, select Compute / Fabric → Cisco UCS Server (Standalone), then click Start.

In another tab of the web browser, log in to the Cisco Integrated Management Controller portal of the Cisco UCS C240 SD M5 and navigate to Admin → Device Connector.

Back in Intersight, enter the Device ID and Claim Code from the server and click Claim. The server is now listed in Intersight under Targets and under Servers.

Enable Tunneled vKVM and click Save. Tunneled vKVM allows Intersight to open the vKVM window in case the client has no direct network access to the server on the local LAN or via VPN.

Navigate to Operate → Servers → name of the new server to see the details and Actions available for this system.

The available actions are based on the Intersight license level available for this server and the privileges of the user account in use.

Note: See Intersight Licensing for an overview of the functions available with the different license tiers.

Now you can remotely manage the server, leverage existing deployment profiles or set up specific ones for the use case, and perform the operating system installation.

While the initial deployment only requires a single VM, as noted in later deployment sections, having multiple VMs provides the resiliency needed for high availability. To reduce single points of failure, spread multi-VM deployments across multiple hypervisor nodes. Consistent hypervisor and compute module configurations, with the needed resources for the SUSE Rancher VMs, will then yield a robust, reliable production implementation.

5.3 SUSE Linux Enterprise Server

As the base software layer, use an enterprise-grade Linux operating system, for example SUSE Linux Enterprise Server.

- Preparation(s)

To meet the solution stack prerequisites and requirements, SUSE operating system offerings, like SUSE Linux Enterprise Server, can be used.

Ensure these services are in place and configured for this node to use:

Domain Name Service (DNS) - an external network-accessible service to map IP Addresses to host names

Network Time Protocol (NTP) - an external network-accessible service to obtain and synchronize system times to aid in time stamp consistency

Software Update Service - access to a network-based repository for software update packages. This can be accessed directly from each node via registration to

the general, internet-based SUSE Customer Center (SCC) or

an organization’s SUSE Manager infrastructure or

a local server running an instance of Repository Mirroring Tool (RMT)

Note: During the node’s installation, it can be pointed to the respective update service. This can also be accomplished post-installation with the command line tool named SUSEConnect.
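As a minimal sketch of the post-installation registration mentioned in the note, the helper below prints (but does not run) a SUSEConnect command for either the SUSE Customer Center or a local RMT server. The function name `register_cmd`, the registration code, and the RMT URL are hypothetical placeholders, and SUSE Manager clients are not covered here because they are typically onboarded through that product's own tooling.

```shell
#!/bin/sh
# Print (do not run) the SUSEConnect command for the chosen update service.
# Usage: register_cmd scc <registration-code>
#        register_cmd rmt <rmt-server-url>
register_cmd() {
    case "$1" in
        scc) printf 'SUSEConnect --regcode %s\n' "$2" ;;
        rmt) printf 'SUSEConnect --url %s\n' "$2" ;;
        *)   echo "unknown update service: $1" >&2; return 1 ;;
    esac
}

# Hypothetical values, for illustration only.
register_cmd scc "EXAMPLE-REGCODE"
register_cmd rmt "https://rmt.example.com"
```

After reviewing the printed command, run it with root privileges on the node (for example, prefixed with sudo).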

- Deployment Process

On the compute platform node, install the noted SUSE operating system, by following these steps:

Download the SUSE Linux Enterprise Server product (either the ISO or the virtual machine image)

Identify the appropriate, supported version of SUSE Linux Enterprise Server by reviewing the support matrix for SUSE Rancher versions Web page.

The installation process is described and can be performed with default values by following steps from the product documentation, see Installation Quick Start

Tip: Adjust both the password and the local network addressing setup to comply with local environment guidelines and requirements.

- Deployment Consideration(s)

To further optimize deployment factors, leverage the following practices:

To reduce user intervention, unattended deployments of SUSE Linux Enterprise Server can be automated

for ISO-based installations, by referring to the AutoYaST Guide

5.4 Rancher Kubernetes Engine

- Preparation(s)

Identify the appropriate, desired version of the Rancher Kubernetes Engine binary (for example vX.Y.Z) that includes the needed Kubernetes version by reviewing

the "Supported Rancher Kubernetes Engine Versions" associated with the respective SUSE Rancher version from "Rancher Kubernetes Engine Downstream Clusters" section, or

the "Releases" on the Download Web page.

On the target node with a default installation of the SUSE Linux Enterprise Server operating system, log in either as root or as a user with sudo privileges and enable the required container runtime engine:

    sudo SUSEConnect -p sle-module-containers/15.3/x86_64
    sudo zypper refresh
    sudo zypper install docker
    sudo systemctl enable --now docker.service

Then validate that the container runtime engine is working:

    sudo systemctl status docker.service
    sudo docker ps --all

For the underlying operating system firewall service, either

enable and configure the necessary inbound ports or

stop and completely disable the firewall service.
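For the first option, the following sketch prints (but does not run) firewalld commands that open a set of inbound ports. It assumes the node uses firewalld; the function name `firewall_commands` is hypothetical, and the port list is an illustrative assumption (SSH, Kubernetes API, etcd, kubelet, and VXLAN overlay traffic) that should be checked against the Rancher Kubernetes Engine port requirements for the roles and network plug-in in use.

```shell
#!/bin/sh
# Print (do not run) firewalld commands that open the given inbound ports.
# Each argument is a port or port range with its protocol, e.g. 6443/tcp.
firewall_commands() {
    for port in "$@"; do
        printf 'firewall-cmd --permanent --add-port=%s\n' "$port"
    done
    # Reload so that the permanent rules become active.
    printf 'firewall-cmd --reload\n'
}

# Illustrative port list only (an assumption, not taken from this guide).
firewall_commands 22/tcp 6443/tcp 2379-2380/tcp 10250/tcp 8472/udp
```

Printing the commands first makes it possible to review them before running each one with root privileges on every cluster host.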

- Deployment Process

The primary steps for deploying this Rancher Kubernetes Engine Kubernetes cluster are:

Note: Installing Rancher Kubernetes Engine requires a client system (that is, an admin workstation) that has been configured with kubectl.

Download the Rancher Kubernetes Engine binary according to the instructions on the product documentation page, then follow the directions on that page, with the following exceptions:

Create the cluster.yml file with the command

    rke config

Note: See the product documentation for example-yamls and config-options for detailed examples and descriptions of the cluster.yml parameters.

It is recommended to create a unique SSH key for this Rancher Kubernetes Engine cluster with the command

    ssh-keygen

Provide the path to that key for the option "Cluster Level SSH Private Key Path"

The option "Number of Hosts" refers to the number of hosts to configure at this time

Additional hosts can be added very easily after Rancher Kubernetes Engine cluster creation

For this implementation it is recommended to configure one or three hosts

Give all hosts the roles of "Control Plane", "Worker", and "etcd"

Answer "n" for the option "Enable PodSecurityPolicy"

Update the cluster.yml file before continuing with the step "Deploying Kubernetes with RKE"

If a load balancer has been deployed for the Rancher Kubernetes Engine control-plane nodes, update the cluster.yml file before deploying Rancher Kubernetes Engine to include the IP address or FQDN of the load balancer. The appropriate location is under authentication.sans. For example:

    LB_IP_Host=""

    authentication:
      strategy: x509
      sans: ["${LB_IP_Host}"]

Verify password-less SSH is available from the admin workstation to each of the cluster hosts as the user specified in the cluster.yml file

When ready, run the following command to create the RKE cluster

    rke up

After the rke up command completes, the RKE cluster will continue the Kubernetes installation process

Monitor the progress of the installation:

Export the variable KUBECONFIG to the absolute path name of the kube_config_cluster.yml file, for example

    export KUBECONFIG=~/rke-cluster/kube_config_cluster.yml

Run the command:

    watch -c "kubectl get deployments -A"

The cluster deployment is complete when elements of all the deployments show at least "1" as "AVAILABLE"

Use Ctrl+c to exit the watch loop after all deployment pods are running

Tip: To address availability and possible scaling to a multi-node cluster, all hosts are given the etcd role, so the cluster state is kept in etcd from the start.
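The completion check described in the monitoring steps can also be scripted. The sketch below is one possible approach, not part of the product tooling: the hypothetical function `all_deployments_available` reads the output of `kubectl get deployments -A --no-headers` on standard input and succeeds only if every deployment shows at least 1 in the AVAILABLE column. It assumes the default column order NAMESPACE, NAME, READY, UP-TO-DATE, AVAILABLE, AGE; the sample rows are made up for illustration.

```shell
#!/bin/sh
# Succeed only if every deployment line on stdin reports AVAILABLE >= 1.
# Assumed column order: NAMESPACE NAME READY UP-TO-DATE AVAILABLE AGE.
all_deployments_available() {
    awk '
        NF >= 5 { seen++; if ($5 + 0 < 1) pending++ }
        END {
            # Fail when no deployments were listed or any is still pending.
            if (seen > 0 && pending == 0) exit 0
            exit 1
        }
    '
}

# Made-up sample input; the second deployment is not yet available.
sample='kube-system    coredns          2/2   2   2   5m
ingress-nginx  default-backend  0/1   1   0   5m'

if printf '%s\n' "$sample" | all_deployments_available; then
    echo "all deployments available"
else
    echo "still waiting for deployments"
fi
```

Against a live cluster, the same function could be fed with `kubectl get deployments -A --no-headers | all_deployments_available`, for example inside a loop that sleeps between attempts.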

- Deployment Consideration(s)

To further optimize deployment factors, leverage the following practices:

A full high-availability Rancher Kubernetes Engine cluster is recommended for production workloads. For this use case, two additional hosts should be added, for a total of three. All three hosts will perform the roles of control-plane, etcd, and worker.

Deploy the same operating system on the new compute platform nodes, and prepare them in the same way as the first node

Update the cluster.yml file to include the additional nodes

Using a text editor, copy the information for the first node (found under the "nodes:" section)

The node information usually starts with "- address:" and ends with the start of another node entry, or the beginning of the "services:" section, for example

    - address: 172.16.240.71
      port: "22"
      internal_address: ""
      role:
      - controlplane
      - worker
      - etcd
      . . .
      labels: {}
      taints: []

Paste the information into the same section, once for each additional host

Update the pasted information, as appropriate, for each additional host

When the cluster.yml file is updated with the information specific to each node, run the command

    rke up

Run the command:

    watch -c "kubectl get deployments -A"

The cluster deployment is complete when elements of all the deployments show at least "1" as "AVAILABLE"

Use Ctrl+c to exit the watch loop after all deployment pods are running
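As an alternative to copying and editing node entries by hand, the entries for the additional hosts could be generated. The sketch below prints a reduced node entry with all three roles; the function name `node_entry`, the two IP addresses, and the SSH user `sles` are hypothetical, and a real entry should carry the same fields as the first node in the existing cluster.yml file.

```shell
#!/bin/sh
# Print a cluster.yml node entry for one host holding all three roles.
# Usage: node_entry <address> <ssh-user>
node_entry() {
    cat <<EOF
- address: $1
  port: "22"
  role:
  - controlplane
  - worker
  - etcd
  user: $2
EOF
}

# Hypothetical addresses and user for the two additional hosts.
for addr in 172.16.240.72 172.16.240.73; do
    node_entry "$addr" sles
done
```

The printed entries can then be pasted under the "nodes:" section and adjusted before running rke up again.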

After this successful deployment of the Rancher Kubernetes Engine solution, review the product documentation for details on how to directly use this Kubernetes cluster. Furthermore, by reviewing the SUSE Rancher product documentation this solution can also be:

imported (refer to subsection "Importing Existing Clusters"), then

managed (refer to subsection "Cluster Administration") and

accessed (refer to subsection "Cluster Access") to address orchestration of workloads, security maintenance, and many more readily available functions.