5 Deployment

This section describes the process for deploying the K3s solution. It covers each component layer, starting with a basic functional proof of concept, and includes considerations for migrating toward production and the scaling guidance needed to build out the solution.

5.1 Deployment overview #

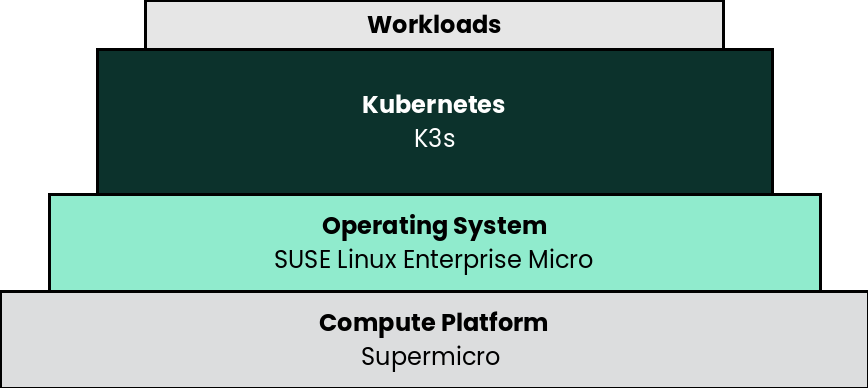

The deployment stack is represented in the following figure:

and details are covered for each layer in the following sections.

The following section’s content is ordered and described from the bottom layer up to the top.

5.2 Compute Platform

The initial base configuration can reside entirely on a single server. Because a K3s deployment has relatively small resource requirements, a viable approach is to deploy directly on bare metal or as a virtual machine (VM) on top of an existing hypervisor, such as KVM, on the target nodes. For physical hosts, tools such as the following can be used during server setup:

The Supermicro Baseboard Management Controller (BMC) provides remote network access for multiple users at different locations. It also allows a system administrator to monitor system health and manage computer events remotely. This includes media redirection of the software image files used to install operating systems, and interaction through an HTML5 web console.

5.3 SUSE Linux Enterprise Micro

As the base software layer, use an enterprise-grade Linux operating system, for example, SUSE Linux Enterprise Micro.

- Preparation(s)

SUSE operating system offerings such as SUSE Linux Enterprise Micro can be used to meet the prerequisites and requirements of the solution stack.

Ensure these services are in place and configured for this node to use:

Domain Name Service (DNS) - an external network-accessible service to map IP addresses to host names

Network Time Protocol (NTP) - an external network-accessible service to obtain and synchronize system times to aid in time stamp consistency

Software Update Service - access to a network-based repository for software update packages. This can be accessed directly from each node via registration to

the general, internet-based SUSE Customer Center (SCC) or

an organization’s SUSE Manager infrastructure or

a local server running an instance of Repository Mirroring Tool (RMT)

Note: During the node’s installation, it can be pointed to the respective update service. This can also be done after installation with the command-line tool SUSEConnect.
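The service checks above can be scripted as a quick preflight on each node. This is a sketch only. The function names are hypothetical, and it assumes that the node uses chrony for time synchronization and has the SUSEConnect tool installed, which is typical for SUSE Linux Enterprise Micro.

```shell
#!/bin/sh
# Hypothetical preflight helpers for the DNS, NTP, and update-service checks.
# Assumes chrony (chronyc) and SUSEConnect are installed on the node.

# DNS: succeeds if the given name resolves through the system resolver.
check_dns() {
  getent hosts "$1" >/dev/null 2>&1
}

# NTP: chrony reports "Leap status : Normal" once the clock is synchronized.
check_time_sync() {
  chronyc tracking 2>/dev/null | grep -q 'Leap status *: Normal'
}

# Run all checks and print one status line per service.
run_preflight() {
  if check_dns "$(hostname -f)"; then echo "DNS: ok"; else echo "DNS: FAILED"; fi
  if check_time_sync; then echo "NTP: ok"; else echo "NTP: FAILED"; fi
  # Update service: show the current registration status for review.
  SUSEConnect --status-text || echo "Update service: SUSEConnect status unavailable"
}
```

To use it, source the file as root and call `run_preflight`. The DNS check uses the node's own fully qualified name because the later K3s layers rely on consistent name resolution between nodes.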

- Deployment Process

On the compute platform node, install the SUSE operating system by following these steps:

Identify the appropriate, supported version of SUSE Linux Enterprise Micro by reviewing the support matrix Web page for SUSE Rancher versions.

Download the SUSE Linux Enterprise Micro product (either the ISO or a virtual machine image).

Perform the installation with default values by following the steps in the product documentation; see the Installation Quick Start.

Tip: Adjust both the password and the local network addressing setup to comply with local environment guidelines and requirements.
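Before installing, you can compare the downloaded image against the SHA-256 checksum published with it on the download page. The following is a minimal sketch that assumes `sha256sum` is available. The helper name and the image file name in the usage line are placeholders.

```shell
#!/bin/sh
# Hypothetical helper: succeed only if FILE's SHA-256 digest equals EXPECTED.
verify_sha256() {
  file=$1
  expected=$2
  # sha256sum prints "<digest>  <file>"; keep only the digest field.
  actual=$(sha256sum "$file" | awk '{print $1}')
  [ -n "$actual" ] && [ "$actual" = "$expected" ]
}
```

Usage: `verify_sha256 ./SLE-Micro-image.iso "<digest from the download page>" && echo "checksum OK"`.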

- Deployment Consideration(s)

To further optimize deployment factors, leverage the following practices:

To reduce user intervention, unattended deployments of SUSE Linux Enterprise Micro can be automated:

for ISO-based installations, by referring to the AutoYaST Guide

for raw-image-based installations, by configuring the Ignition and Combustion tooling as described in the Installation Quick Start
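For raw-image installations, Combustion runs a shell script named `script` from a `combustion` directory on a configuration volume during first boot. Check the Installation Quick Start for the exact volume label and layout. The following sketch is hypothetical: the password hash is a placeholder, and installing packages assumes that the node can already reach a software repository (for example, because it is registered). It also preinstalls `apparmor-parser`, which the K3s layer otherwise requires as a separate step.

```shell
#!/bin/bash
# combustion: network
# The line above asks Combustion to bring up networking before running this script.
# Sketch of a combustion/script file; <PASSWORD_HASH> is a placeholder.

# Set the root password from a pre-generated hash (for example from `openssl passwd -6`).
echo 'root:<PASSWORD_HASH>' | chpasswd -e

# Preinstall the package required by the K3s layer.
# Assumes a software repository is reachable (for example, the node is registered).
zypper --non-interactive install apparmor-parser

# Enable SSH so the node can be administered remotely.
systemctl enable sshd.service
```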

5.4 K3s

- Preparation(s)

Identify the appropriate, desired version of the K3s binary (for example vX.YY.ZZ+k3s1) by reviewing

the "Supported K3s Versions" listed for the respective SUSE Rancher version in the "K3s Downstream Clusters" section, or

the "Releases" on the Download Web page.

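On the target node with a default installation of the SUSE Linux Enterprise Micro operating system, log in either as root or as a user with sudo privileges. Then install the apparmor-parser package required by the next layer. Because the root file system is read-only, transactional-update installs the package into a new snapshot, and a reboot activates it:

sudo transactional-update pkg install apparmor-parser
sudo reboot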

For the underlying operating system firewall service, either

enable and configure the necessary inbound ports or

stop and completely disable the firewall service.
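If you keep the firewall enabled, you can open the inbound ports with firewalld. This sketch assumes that firewalld is the active firewall service and that K3s uses its default networking: the Flannel VXLAN backend, pod network 10.42.0.0/16, and service network 10.43.0.0/16. The port list follows the K3s networking requirements, but check it against the documentation for your K3s version. The function names and the `DRY_RUN` switch are hypothetical.

```shell
#!/bin/sh
# Sketch assuming firewalld and default K3s networking (Flannel VXLAN,
# pods 10.42.0.0/16, services 10.43.0.0/16). DRY_RUN=1 prints instead of running.

K3S_TCP_PORTS="6443 10250 2379-2380"  # API server, kubelet, embedded etcd (servers)
K3S_UDP_PORTS="8472"                  # Flannel VXLAN

run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then
    echo "$*"
  else
    "$@"
  fi
}

open_k3s_ports() {
  for p in $K3S_TCP_PORTS; do
    run firewall-cmd --permanent --add-port="$p/tcp" || return 1
  done
  for p in $K3S_UDP_PORTS; do
    run firewall-cmd --permanent --add-port="$p/udp" || return 1
  done
  # Trust traffic from the default pod and service networks.
  run firewall-cmd --permanent --zone=trusted --add-source=10.42.0.0/16 || return 1
  run firewall-cmd --permanent --zone=trusted --add-source=10.43.0.0/16 || return 1
  run firewall-cmd --reload
}
```

Review the generated commands with `DRY_RUN=1 open_k3s_ports`, then run `open_k3s_ports` as root. To disable the firewall instead, `sudo systemctl disable --now firewalld` stops the service and prevents it from starting at boot.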

- Deployment Process

Perform the following steps to install the first K3s server on one of the nodes to be used for the Kubernetes control plane:

Set the following variable with the noted version of K3s, as found during the preparation steps.

K3s_VERSION=""
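A mistyped or empty version string changes what the install script fetches. With no version set, the script typically falls back to its default release channel. You can therefore check the value before installing. The following is a minimal sketch with a hypothetical function name. Its pattern accepts only final releases of the form vX.YY.ZZ+k3sN.

```shell
#!/bin/sh
# Hypothetical check: accept only versions such as v1.28.9+k3s1.
# Release candidates (for example v1.29.0-rc1+k3s1) are rejected on purpose.
valid_k3s_version() {
  printf '%s\n' "$1" | grep -Eq '^v[0-9]+\.[0-9]+\.[0-9]+\+k3s[0-9]+$'
}
```

Usage: `valid_k3s_version "$K3s_VERSION" || echo "K3s_VERSION must look like v1.28.9+k3s1" >&2`.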

Install the version of K3s with embedded etcd enabled:

curl -sfL https://get.k3s.io | \
  INSTALL_K3S_VERSION=${K3s_VERSION} \
  INSTALL_K3S_EXEC='server --cluster-init --write-kubeconfig-mode=644' \
  sh -s -

Because SELinux is enabled on SUSE Linux Enterprise Micro, the K3s install script also installs the required "k3s-selinux" package into a new snapshot using transactional-update. A reboot is therefore required to activate the installed package and complete the deployment:

systemctl reboot

Tip: To support high availability and possible scaling to a multi-node cluster, embedded etcd is enabled instead of the default SQLite datastore.
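K3s can also read the same server options from a configuration file, which keeps them in one place for later upgrades and reinstalls. This sketch assumes the default path /etc/rancher/k3s/config.yaml, where keys mirror the command-line flags without their leading dashes. The helper name is hypothetical. With this file in place, running the installer with `INSTALL_K3S_EXEC='server'` should be sufficient.

```shell
#!/bin/sh
# Hypothetical helper: write a K3s config file equivalent to
#   server --cluster-init --write-kubeconfig-mode=644
# DIR defaults to the standard K3s configuration directory.
write_k3s_config() {
  dir=${1:-/etc/rancher/k3s}
  mkdir -p "$dir" || return 1
  cat > "$dir/config.yaml" <<'EOF'
cluster-init: true
write-kubeconfig-mode: "0644"
EOF
}
```

Run `write_k3s_config` as root before the install command.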

Monitor the progress of the installation:

watch -c "kubectl get deployments -A"

The K3s deployment is complete when all of the deployments (coredns, local-path-provisioner, metrics-server, and traefik) show at least "1" as "AVAILABLE".

Press Ctrl+C to exit the watch loop after all deployment pods are running.
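For scripted installs, you can replace the interactive watch loop with a loop that waits for each of the four deployments to roll out. This sketch assumes that the packaged components run in the kube-system namespace, as they do in a default K3s install. The function name and the `KUBECTL` override, which exists only to make the function testable, are hypothetical.

```shell
#!/bin/sh
# KUBECTL can point at another command; it defaults to kubectl.
KUBECTL=${KUBECTL:-kubectl}

# Hypothetical helper: wait until the K3s packaged deployments are rolled out.
wait_for_k3s_deployments() {
  for d in coredns local-path-provisioner metrics-server traefik; do
    tries=0
    # traefik is created by a Helm install job, so its Deployment can appear
    # late; wait (up to 5 minutes) for each Deployment object to exist first.
    until "$KUBECTL" -n kube-system get deployment "$d" >/dev/null 2>&1; do
      tries=$((tries + 1))
      if [ "$tries" -ge 60 ]; then
        echo "timed out waiting for deployment $d" >&2
        return 1
      fi
      sleep 5
    done
    "$KUBECTL" -n kube-system rollout status "deployment/$d" --timeout=300s || return 1
  done
}
```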

- Deployment Consideration(s)

To further optimize deployment factors, leverage the following practices:

A full high-availability K3s cluster is recommended for production workloads. The etcd key/value store (also known as the database) requires that an odd number of servers (also known as master nodes) be allocated to the K3s cluster. In this case, add two more control-plane servers, for a total of three.
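The odd number follows from etcd's quorum rule. Writes need a majority of members, floor(n/2) + 1, so a cluster of n servers tolerates n minus that many failures. The arithmetic below uses hypothetical helper names. It shows that a fourth server adds no fault tolerance over three.

```shell
#!/bin/sh
# Majority needed by etcd for n members: floor(n/2) + 1.
etcd_quorum() {
  echo $(( $1 / 2 + 1 ))
}

# Number of members that can fail while a majority remains.
etcd_fault_tolerance() {
  echo $(( $1 - ($1 / 2 + 1) ))
}

for n in 1 2 3 4 5; do
  echo "$n servers: quorum $(etcd_quorum "$n"), tolerates $(etcd_fault_tolerance "$n") failure(s)"
done
```

Three servers survive the loss of one. Four servers still survive the loss of only one, while adding another member that must stay in sync.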

Deploy the same operating system on the new compute platform nodes, then log in to the new nodes as root or as a user with sudo privileges.

Execute the following sets of commands on each of the remaining control-plane nodes:

Set the following additional variables, as appropriate for this cluster:

# Private IP preferred, if available
FIRST_SERVER_IP=""
# From the /var/lib/rancher/k3s/server/node-token file on the first server
NODE_TOKEN=""
# Match the version of the first server
K3s_VERSION=""
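An empty FIRST_SERVER_IP or NODE_TOKEN produces an install that fails to join the existing cluster, so you can check the variables before running the installer. In this sketch, `require_vars` is a hypothetical helper that takes variable names. The token itself can be read on the first server with `sudo cat /var/lib/rancher/k3s/server/node-token`.

```shell
#!/bin/sh
# Hypothetical helper: report every listed variable that is unset or empty.
# Only pass trusted variable names; they are expanded with eval.
require_vars() {
  missing=0
  for name in "$@"; do
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      echo "error: $name is not set" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

Usage: `require_vars FIRST_SERVER_IP NODE_TOKEN K3s_VERSION || exit 1`.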

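Install K3s:

curl -sfL https://get.k3s.io | \
  INSTALL_K3S_VERSION=${K3s_VERSION} \
  K3S_URL=https://${FIRST_SERVER_IP}:6443 \
  K3S_TOKEN=${NODE_TOKEN} \
  K3S_KUBECONFIG_MODE="644" \
  INSTALL_K3S_EXEC='server' \
  sh -

As on the first server, reboot the node (systemctl reboot) so that the k3s-selinux package installed through transactional-update becomes active.

Monitor the progress of the installation: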

watch -c "kubectl get deployments -A"

The K3s deployment is complete when all of the deployments (coredns, local-path-provisioner, metrics-server, and traefik) show at least "1" as "AVAILABLE".

Press Ctrl+C to exit the watch loop after all deployment pods are running.
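Once all three servers have joined, each should report Ready and carry the etcd role. This sketch counts them. It assumes the `node-role.kubernetes.io/etcd=true` label that K3s applies to servers running embedded etcd. The function name and the `KUBECTL` override are hypothetical.

```shell
#!/bin/sh
KUBECTL=${KUBECTL:-kubectl}

# Hypothetical helper: print how many etcd-role nodes have STATUS exactly "Ready".
count_ready_etcd_servers() {
  "$KUBECTL" get nodes -l node-role.kubernetes.io/etcd=true --no-headers |
    awk '$2 == "Ready" { n++ } END { print n + 0 }'
}
```

Usage: `[ "$(count_ready_etcd_servers)" -eq 3 ] && echo "all three control-plane servers are Ready"`.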

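Note: By default, K3s server nodes are schedulable, so workloads can also run on the control-plane servers. This can be changed to the normal Kubernetes default by adding a taint to each server node. See the official Kubernetes documentation for more information on how to do that.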
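The following sketch shows that change. It assumes the `node-role.kubernetes.io/control-plane=true` label that K3s applies to server nodes and the standard Kubernetes control-plane taint. The function name and the `KUBECTL` override are hypothetical. Apply the taint only when agent nodes are available; otherwise, ordinary workloads have nowhere to be scheduled.

```shell
#!/bin/sh
KUBECTL=${KUBECTL:-kubectl}

# Hypothetical helper: add the standard control-plane NoSchedule taint to every server node.
taint_server_nodes() {
  for node in $("$KUBECTL" get nodes -l node-role.kubernetes.io/control-plane=true -o name); do
    # "-o name" prints "node/<name>"; strip the resource prefix.
    "$KUBECTL" taint nodes "${node#node/}" node-role.kubernetes.io/control-plane:NoSchedule --overwrite || return 1
  done
}
```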

(Optional) Where agent nodes are desired, run the following commands on each agent node to add it to the K3s cluster. Use the same "K3s_VERSION", "FIRST_SERVER_IP", and "NODE_TOKEN" variable settings as above:

curl -sfL https://get.k3s.io | \
  INSTALL_K3S_VERSION=${K3s_VERSION} \
  K3S_URL=https://${FIRST_SERVER_IP}:6443 \
  K3S_TOKEN=${NODE_TOKEN} \
  K3S_KUBECONFIG_MODE="644" \
  sh -

As with the servers, reboot each agent node afterward so that the k3s-selinux package installed through transactional-update becomes active.

After successfully deploying the K3s solution, review the K3s product documentation for details on how to use this Kubernetes cluster directly. The SUSE Rancher product documentation also describes how this solution can be:

imported (refer to sub-section "Importing Existing Clusters"), then

managed (refer to sub-section "Cluster Administration") and

accessed (refer to sub-section "Cluster Access"), making workload orchestration, security maintenance, and many other functions readily available.
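To use the cluster directly from a workstation, the K3s documentation describes copying /etc/rancher/k3s/k3s.yaml from a server and replacing the loopback address in it with the server's address. This sketch covers the rewrite step. The helper name is hypothetical, and it assumes the default API server port 6443.

```shell
#!/bin/sh
# Hypothetical helper: copy a K3s kubeconfig, pointing it at SERVER instead of 127.0.0.1.
export_kubeconfig() {
  src=$1
  server=$2
  dest=$3
  sed "s#https://127\.0\.0\.1:6443#https://${server}:6443#" "$src" > "$dest" || return 1
  # The file contains cluster-admin credentials, so keep it private.
  chmod 600 "$dest"
}
```

Usage: after copying k3s.yaml to the workstation, run `mkdir -p "$HOME/.kube"`, then `export_kubeconfig k3s.yaml "$FIRST_SERVER_IP" "$HOME/.kube/config"`, then `kubectl get nodes`.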