12 Determine the cluster state #

When you have a running cluster, you may use the ceph tool to monitor it. Determining the cluster state typically involves checking the status of Ceph OSDs, Ceph Monitors, placement groups, and Metadata Servers.

To run the ceph tool in interactive mode, type ceph at the command line with no arguments. Interactive mode is more convenient if you are going to enter several ceph commands in a row. For example:

cephuser@adm > ceph

ceph> health

ceph> status

ceph> quorum_status

ceph> mon stat

12.1 Checking a cluster's status #

You can find the immediate state of the cluster using ceph status or ceph -s:

cephuser@adm > ceph -s

cluster:

id: b4b30c6e-9681-11ea-ac39-525400d7702d

health: HEALTH_OK

services:

mon: 5 daemons, quorum ses-node1,ses-main,ses-node2,ses-node4,ses-node3 (age 2m)

mgr: ses-node1.gpijpm(active, since 3d), standbys: ses-node2.oopvyh

mds: my_cephfs:1 {0=my_cephfs.ses-node1.oterul=up:active}

osd: 3 osds: 3 up (since 3d), 3 in (since 11d)

rgw: 2 daemons active (myrealm.myzone.ses-node1.kwwazo, myrealm.myzone.ses-node2.jngabw)

task status:

scrub status:

mds.my_cephfs.ses-node1.oterul: idle

data:

pools: 7 pools, 169 pgs

objects: 250 objects, 10 KiB

usage: 3.1 GiB used, 27 GiB / 30 GiB avail

pgs: 169 active+clean

The output provides the following information:

Cluster ID

Cluster health status

The monitor map epoch and the status of the monitor quorum

The OSD map epoch and the status of OSDs

The status of Ceph Managers

The status of Object Gateways

The placement group map version

The number of placement groups and pools

The notional amount of data stored and the number of objects stored

The total amount of data stored.

The used value reflects the actual amount of raw storage used. The xxx GB / xxx GB value shows the amount available (the lesser number) out of the overall storage capacity of the cluster. The notional number reflects the size of the stored data before it is replicated, cloned, or snapshotted. Therefore, the amount of data actually stored typically exceeds the notional amount stored, because Ceph creates replicas of the data and may also use storage capacity for cloning and snapshotting.
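The relationship between notional and raw usage can be illustrated with a small calculation. This is only a rough sketch: the function name is hypothetical, it assumes a replicated pool, and it ignores clones, snapshots, and any allocation overhead.

```python
def estimated_raw_bytes(notional_bytes: int, replica_count: int) -> int:
    """Estimate raw bytes consumed by a replicated pool.

    Assumption: every object is stored replica_count times and nothing
    else (clones, snapshots, metadata overhead) consumes space.
    """
    if replica_count < 1:
        raise ValueError("replica_count must be at least 1")
    return notional_bytes * replica_count


# 10 KiB of notional data, as in the sample output, in a hypothetical
# pool that keeps three replicas.
notional = 10 * 1024
print(estimated_raw_bytes(notional, 3))  # 30720
```

In a real cluster the raw figure is usually higher than this estimate, because the storage back-end reserves additional space for its own purposes.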

Other commands that display immediate status information are:

- ceph pg stat

- ceph osd pool stats

- ceph df

- ceph df detail

To get the information updated in real time, put any of these commands (including ceph -s) as an argument of the watch command:

# watch -n 10 'ceph -s'

Press Ctrl–C when you are tired of watching.

12.2 Checking cluster health #

After you start your cluster and before you start reading and/or writing data, check your cluster's health:

cephuser@adm > ceph health

HEALTH_WARN 10 pgs degraded; 100 pgs stuck unclean; 1 mons down, quorum 0,2 \

node-1,node-2,node-3

If you specified non-default locations for your configuration or keyring, you may specify their locations:

cephuser@adm > ceph -c /path/to/conf -k /path/to/keyring health

The Ceph cluster returns one of the following health codes:

- OSD_DOWN

One or more OSDs are marked down. The OSD daemon may have been stopped, or peer OSDs may be unable to reach the OSD over the network. Common causes include a stopped or crashed daemon, a down host, or a network outage.

Verify the host is healthy, the daemon is started, and network is functioning. If the daemon has crashed, the daemon log file (/var/log/ceph/ceph-osd.*) may contain debugging information.

- OSD_crush type_DOWN, for example OSD_HOST_DOWN

All the OSDs within a particular CRUSH subtree are marked down, for example all OSDs on a host.

- OSD_ORPHAN

An OSD is referenced in the CRUSH map hierarchy but does not exist. The OSD can be removed from the CRUSH hierarchy with:

cephuser@adm > ceph osd crush rm osd.ID

- OSD_OUT_OF_ORDER_FULL

The usage thresholds for nearfull (defaults to 0.85), backfillfull (defaults to 0.90), full (defaults to 0.95), and/or failsafe_full are not ascending. In particular, we expect nearfull < backfillfull, backfillfull < full, and full < failsafe_full.

To read the current values, run:

cephuser@adm > ceph health detail

HEALTH_ERR 1 full osd(s); 1 backfillfull osd(s); 1 nearfull osd(s)

osd.3 is full at 97%

osd.4 is backfill full at 91%

osd.2 is near full at 87%

The thresholds can be adjusted with the following commands:

cephuser@adm > ceph osd set-backfillfull-ratio ratio

cephuser@adm > ceph osd set-nearfull-ratio ratio

cephuser@adm > ceph osd set-full-ratio ratio

- OSD_FULL

One or more OSDs have exceeded the full threshold and are preventing the cluster from servicing writes. Usage by pool can be checked with:

cephuser@adm > ceph df

The currently defined full ratio can be seen with:

cephuser@adm > ceph osd dump | grep full_ratio

A short-term workaround to restore write availability is to raise the full threshold by a small amount:

cephuser@adm > ceph osd set-full-ratio ratio

Add new storage to the cluster by deploying more OSDs, or delete existing data in order to free up space.

- OSD_BACKFILLFULL

One or more OSDs have exceeded the backfillfull threshold, which prevents data from being allowed to rebalance to this device. This is an early warning that rebalancing may not be able to complete and that the cluster is approaching full. Usage by pool can be checked with:

cephuser@adm > ceph df

- OSD_NEARFULL

One or more OSDs have exceeded the nearfull threshold. This is an early warning that the cluster is approaching full. Usage by pool can be checked with:

cephuser@adm > ceph df

- OSDMAP_FLAGS

One or more cluster flags of interest have been set. With the exception of full, these flags can be set or cleared with:

cephuser@adm > ceph osd set flag

cephuser@adm > ceph osd unset flag

These flags include:

- full

The cluster is flagged as full and cannot service writes.

- pauserd, pausewr

Paused reads or writes.

- noup

OSDs are not allowed to start.

- nodown

OSD failure reports are being ignored, such that the monitors will not mark OSDs down.

- noin

OSDs that were previously marked out will not be marked back in when they start.

- noout

Down OSDs will not automatically be marked out after the configured interval.

- nobackfill, norecover, norebalance

Recovery or data rebalancing is suspended.

- noscrub, nodeep_scrub

Scrubbing (see Section 17.6, “Scrubbing placement groups”) is disabled.

- notieragent

Cache tiering activity is suspended.

- OSD_FLAGS

One or more OSDs has a per-OSD flag of interest set. These flags include:

- noup

OSD is not allowed to start.

- nodown

Failure reports for this OSD will be ignored.

- noin

If this OSD was previously marked out automatically after a failure, it will not be marked in when it starts.

- noout

If this OSD is down, it will not be automatically marked out after the configured interval.

Per-OSD flags can be set and cleared with:

cephuser@adm > ceph osd add-flag osd-ID

cephuser@adm > ceph osd rm-flag osd-ID

- OLD_CRUSH_TUNABLES

The CRUSH Map is using very old settings and should be updated. The oldest tunables that can be used (that is, the oldest client version that can connect to the cluster) without triggering this health warning are determined by the mon_crush_min_required_version configuration option.

- OLD_CRUSH_STRAW_CALC_VERSION

The CRUSH Map is using an older, non-optimal method for calculating intermediate weight values for straw buckets. The CRUSH Map should be updated to use the newer method (straw_calc_version=1).

- CACHE_POOL_NO_HIT_SET

One or more cache pools is not configured with a hit set to track usage, which prevents the tiering agent from identifying cold objects to flush and evict from the cache. Hit sets can be configured on the cache pool with:

cephuser@adm > ceph osd pool set poolname hit_set_type type

cephuser@adm > ceph osd pool set poolname hit_set_period period-in-seconds

cephuser@adm > ceph osd pool set poolname hit_set_count number-of-hitsets

cephuser@adm > ceph osd pool set poolname hit_set_fpp target-false-positive-rate

- OSD_NO_SORTBITWISE

No pre-Luminous v12 OSDs are running but the sortbitwise flag has not been set. You need to set the sortbitwise flag before Luminous v12 or newer OSDs can start:

cephuser@adm > ceph osd set sortbitwise

- POOL_FULL

One or more pools have reached their quota and are no longer allowing writes. You can view pool quotas and usage with:

cephuser@adm > ceph df detail

You can either raise the pool quota with

cephuser@adm > ceph osd pool set-quota poolname max_objects num-objects

cephuser@adm > ceph osd pool set-quota poolname max_bytes num-bytes

or delete some existing data to reduce usage.

- PG_AVAILABILITY

Data availability is reduced, meaning that the cluster is unable to service potential read or write requests for some data in the cluster. Specifically, one or more PGs is in a state that does not allow I/O requests to be serviced. Problematic PG states include peering, stale, incomplete, and the lack of active (if those conditions do not clear quickly). Detailed information about which PGs are affected is available from:

cephuser@adm > ceph health detail

In most cases the root cause is that one or more OSDs is currently down. The state of specific problematic PGs can be queried with:

cephuser@adm > ceph tell pgid query

- PG_DEGRADED

Data redundancy is reduced for some data, meaning the cluster does not have the desired number of replicas for all data (for replicated pools) or erasure code fragments (for erasure coded pools). Specifically, one or more PGs have either the degraded or undersized flag set (there are not enough instances of that placement group in the cluster), or have not had the clean flag set for some time. Detailed information about which PGs are affected is available from:

cephuser@adm > ceph health detail

In most cases the root cause is that one or more OSDs is currently down. The state of specific problematic PGs can be queried with:

cephuser@adm > ceph tell pgid query

- PG_DEGRADED_FULL

Data redundancy may be reduced or at risk for some data because of a lack of free space in the cluster. Specifically, one or more PGs has the backfill_toofull or recovery_toofull flag set, meaning that the cluster is unable to migrate or recover data because one or more OSDs is above the backfillfull threshold.

- PG_DAMAGED

Data scrubbing (see Section 17.6, “Scrubbing placement groups”) has discovered some problems with data consistency in the cluster. Specifically, one or more PGs have the inconsistent or snaptrim_error flag set, indicating that an earlier scrub operation found a problem, or have the repair flag set, meaning that a repair for such an inconsistency is currently in progress.

- OSD_SCRUB_ERRORS

Recent OSD scrubs have uncovered inconsistencies.

- CACHE_POOL_NEAR_FULL

A cache tier pool is nearly full. Full in this context is determined by the target_max_bytes and target_max_objects properties on the cache pool. When the pool reaches the target threshold, write requests to the pool may block while data is flushed and evicted from the cache, a state that normally leads to very high latencies and poor performance. The cache pool target size can be adjusted with:

cephuser@adm > ceph osd pool set cache-pool-name target_max_bytes bytes

cephuser@adm > ceph osd pool set cache-pool-name target_max_objects objects

Normal cache flush and evict activity may also be throttled because of reduced availability or performance of the base tier, or overall cluster load.

- TOO_FEW_PGS

The number of PGs in use is below the configurable threshold of mon_pg_warn_min_per_osd PGs per OSD. This can lead to suboptimal distribution and balance of data across the OSDs in the cluster, and reduce overall performance.

- TOO_MANY_PGS

The number of PGs in use is above the configurable threshold of mon_pg_warn_max_per_osd PGs per OSD. This can lead to higher memory usage for OSD daemons, slower peering after cluster state changes (for example OSD restarts, additions, or removals), and higher load on the Ceph Managers and Ceph Monitors.

While the pg_num value for existing pools cannot be reduced, the pgp_num value can. This effectively co-locates some PGs on the same sets of OSDs, mitigating some of the negative impacts described above. The pgp_num value can be adjusted with:

cephuser@adm > ceph osd pool set pool pgp_num value

- SMALLER_PGP_NUM

One or more pools have a pgp_num value less than pg_num. This is normally an indication that the PG count was increased without also updating the placement behavior. This is normally resolved by setting pgp_num to match pg_num, triggering the data migration, with:

cephuser@adm > ceph osd pool set pool pgp_num pg_num_value

- MANY_OBJECTS_PER_PG

One or more pools have an average number of objects per PG that is significantly higher than the overall cluster average. The specific threshold is controlled by the mon_pg_warn_max_object_skew configuration value. This is usually an indication that the pool(s) containing most of the data in the cluster have too few PGs, and/or that other pools that do not contain as much data have too many PGs. The threshold can be raised to silence the health warning by adjusting the mon_pg_warn_max_object_skew configuration option on the monitors.

- POOL_APP_NOT_ENABLED

A pool exists that contains one or more objects but has not been tagged for use by a particular application. Resolve this warning by labeling the pool for use by an application. For example, if the pool is used by RBD:

cephuser@adm > rbd pool init pool_name

If the pool is being used by a custom application 'foo', you can also label it using the low-level command:

cephuser@adm > ceph osd pool application enable pool_name foo

- POOL_FULL

One or more pools have reached (or are very close to reaching) their quota. The threshold to trigger this error condition is controlled by the mon_pool_quota_crit_threshold configuration option. Pool quotas can be adjusted up or down (or removed) with:

cephuser@adm > ceph osd pool set-quota pool max_bytes bytes

cephuser@adm > ceph osd pool set-quota pool max_objects objects

Setting the quota value to 0 will disable the quota.

- POOL_NEAR_FULL

One or more pools are approaching their quota. The threshold to trigger this warning condition is controlled by the mon_pool_quota_warn_threshold configuration option. Pool quotas can be adjusted up or down (or removed) with:

cephuser@adm > ceph osd pool set-quota pool max_bytes bytes

cephuser@adm > ceph osd pool set-quota pool max_objects objects

Setting the quota value to 0 will disable the quota.

- OBJECT_MISPLACED

One or more objects in the cluster are not stored on the node where the cluster wants them to be. This is an indication that data migration caused by a recent cluster change has not yet completed. Misplaced data is not a dangerous condition in itself. Data consistency is never at risk, and old copies of objects are never removed until the desired number of new copies (in the desired locations) are present.

- OBJECT_UNFOUND

One or more objects in the cluster cannot be found. Specifically, the OSDs know that a new or updated copy of an object should exist, but a copy of that version of the object has not been found on the OSDs that are currently up. Read or write requests to the 'unfound' objects will be blocked. Ideally, the down OSD that has the most recent copy of the unfound object can be brought back up. Candidate OSDs can be identified from the peering state for the PG(s) responsible for the unfound object:

cephuser@adm > ceph tell pgid query

- REQUEST_SLOW

One or more OSD requests are taking a long time to process. This can be an indication of extreme load, a slow storage device, or a software bug. You can query the request queue on the OSD(s) in question with the following command executed from the OSD host:

cephuser@adm > cephadm enter --name osd.ID -- ceph daemon osd.ID ops

You can see a summary of the slowest recent requests:

cephuser@adm > cephadm enter --name osd.ID -- ceph daemon osd.ID dump_historic_ops

You can find the location of an OSD with:

cephuser@adm > ceph osd find osd.id

- REQUEST_STUCK

One or more OSD requests have been blocked for a relatively long time, for example 4096 seconds. This is an indication that either the cluster has been unhealthy for an extended period of time (for example, not enough running OSDs or inactive PGs) or there is some internal problem with the OSD.

- PG_NOT_SCRUBBED

One or more PGs have not been scrubbed (see Section 17.6, “Scrubbing placement groups”) recently. PGs are normally scrubbed every mon_scrub_interval seconds, and this warning triggers when mon_warn_not_scrubbed such intervals have elapsed without a scrub. PGs will not scrub if they are not flagged as clean, which may happen if they are misplaced or degraded (see PG_AVAILABILITY and PG_DEGRADED above). You can manually initiate a scrub of a clean PG with:

cephuser@adm > ceph pg scrub pgid

- PG_NOT_DEEP_SCRUBBED

One or more PGs have not been deep scrubbed (see Section 17.6, “Scrubbing placement groups”) recently. PGs are normally deep scrubbed every osd_deep_scrub_interval seconds, and this warning triggers when mon_warn_not_deep_scrubbed seconds have elapsed without a deep scrub. PGs will not (deep) scrub if they are not flagged as clean, which may happen if they are misplaced or degraded (see PG_AVAILABILITY and PG_DEGRADED above). You can manually initiate a deep scrub of a clean PG with:

cephuser@adm > ceph pg deep-scrub pgid
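The ordering rule behind the OSD_OUT_OF_ORDER_FULL health code can be checked with a few lines of code before you change any ratio. The sketch below is illustrative only: the function name is hypothetical, and the failsafe_full value of 0.97 is an assumption that you should verify against your own cluster.

```python
def full_ratios_ascending(nearfull: float, backfillfull: float,
                          full: float, failsafe_full: float) -> bool:
    """Return True if the four thresholds are strictly ascending."""
    return nearfull < backfillfull < full < failsafe_full


# Defaults named in this section; failsafe_full=0.97 is an assumed value.
print(full_ratios_ascending(0.85, 0.90, 0.95, 0.97))  # True

# Swapping nearfull and backfillfull breaks the expected order.
print(full_ratios_ascending(0.90, 0.85, 0.95, 0.97))  # False
```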

12.3 Checking a cluster's usage stats #

To check a cluster’s data usage and distribution among pools, use the ceph df command. To get more details, use ceph df detail.

cephuser@adm > ceph df

--- RAW STORAGE ---

CLASS SIZE AVAIL USED RAW USED %RAW USED

hdd 30 GiB 27 GiB 121 MiB 3.1 GiB 10.40

TOTAL 30 GiB 27 GiB 121 MiB 3.1 GiB 10.40

--- POOLS ---

POOL ID STORED OBJECTS USED %USED MAX AVAIL

device_health_metrics 1 0 B 0 0 B 0 8.5 GiB

cephfs.my_cephfs.meta 2 1.0 MiB 22 4.5 MiB 0.02 8.5 GiB

cephfs.my_cephfs.data 3 0 B 0 0 B 0 8.5 GiB

.rgw.root 4 1.9 KiB 13 2.2 MiB 0 8.5 GiB

myzone.rgw.log 5 3.4 KiB 207 6 MiB 0.02 8.5 GiB

myzone.rgw.control 6 0 B 8 0 B 0 8.5 GiB

myzone.rgw.meta 7 0 B 0 0 B 0 8.5 GiB

The RAW STORAGE section of the output provides an overview of the amount of storage your cluster uses for your data.

- CLASS: The storage class of the device. Refer to Section 17.1.1, “Device classes” for more details on device classes.

- SIZE: The overall storage capacity of the cluster.

- AVAIL: The amount of free space available in the cluster.

- USED: The space (accumulated over all OSDs) allocated purely for data objects kept at block device.

- RAW USED: The sum of 'USED' space and space allocated/reserved at block device for Ceph purposes, for example BlueFS part for BlueStore.

- %RAW USED: The percentage of raw storage used. Use this number in conjunction with the full ratio and near full ratio to ensure that you are not reaching your cluster’s capacity. See Section 12.8, “Storage capacity” for additional details.

Note: Cluster fill level

When a raw storage fill level is getting close to 100%, you need to add new storage to the cluster. A higher usage may lead to single full OSDs and cluster health problems.

Use the command ceph osd df tree to list the fill level of all OSDs.

The POOLS section of the output provides a list of pools and the notional usage of each pool. The output from this section does not reflect replicas, clones or snapshots. For example, if you store an object with 1MB of data, the notional usage will be 1MB, but the actual usage may be 2MB or more depending on the number of replicas, clones and snapshots.

- POOL: The name of the pool.

- ID: The pool ID.

- STORED: The amount of data stored by the user.

- OBJECTS: The notional number of objects stored per pool.

- USED: The amount of space allocated purely for data by all OSD nodes in kB.

- %USED: The notional percentage of storage used per pool.

- MAX AVAIL: The maximum available space in the given pool.

The numbers in the POOLS section are notional. They are not inclusive of the number of replicas, snapshots or clones. As a result, the sum of the USED and %USED amounts will not add up to the RAW USED and %RAW USED amounts in the RAW STORAGE section of the output.
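To see how the %RAW USED column relates to RAW USED and SIZE, you can recompute it from the displayed values. The helper names below are hypothetical, and the sketch assumes binary units as printed by ceph df. Because the displayed figures are rounded, the recomputed percentage may differ slightly from what ceph df prints.

```python
# Binary unit multipliers as they appear in the ceph df output.
UNITS = {"B": 1, "KiB": 1024, "MiB": 1024**2, "GiB": 1024**3, "TiB": 1024**4}


def to_bytes(value: str) -> float:
    """Convert a string such as '3.1 GiB' into a number of bytes."""
    number, unit = value.split()
    return float(number) * UNITS[unit]


def percent_raw_used(raw_used: str, size: str) -> float:
    """Percentage of raw capacity in use, from two displayed values."""
    return 100.0 * to_bytes(raw_used) / to_bytes(size)


# Values from the TOTAL row of the sample output above.
print(round(percent_raw_used("3.1 GiB", "30 GiB"), 2))
```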

12.4 Checking OSD status #

You can check OSDs to ensure they are up and in by executing:

cephuser@adm > ceph osd stat

or

cephuser@adm > ceph osd dump

You can also view OSDs according to their position in the CRUSH map. ceph osd tree will print a CRUSH tree with a host, its OSDs, whether they are up, and their weight:

cephuser@adm > ceph osd tree

ID CLASS WEIGHT TYPE NAME STATUS REWEIGHT PRI-AFF

-1 3 0.02939 root default

-3 3 0.00980 rack mainrack

-2 3 0.00980 host osd-host

0 1 0.00980 osd.0 up 1.00000 1.00000

1 1 0.00980 osd.1 up 1.00000 1.00000

2 1 0.00980 osd.2 up 1.00000 1.00000

12.5 Checking for full OSDs #

Ceph prevents you from writing to a full OSD so that you do not lose data. In an operational cluster, you should receive a warning when your cluster is getting near its full ratio. The mon osd full ratio defaults to 0.95, or 95% of capacity before it stops clients from writing data. The mon osd nearfull ratio defaults to 0.85, or 85% of capacity, when it generates a health warning.

Full OSD nodes will be reported by ceph health:

cephuser@adm > ceph health

HEALTH_WARN 1 nearfull osds

osd.2 is near full at 85%

or

cephuser@adm > ceph health

HEALTH_ERR 1 nearfull osds, 1 full osds

osd.2 is near full at 85%

osd.3 is full at 97%

The best way to deal with a full cluster is to add new OSD hosts/disks allowing the cluster to redistribute data to the newly available storage.

After an OSD becomes full—it uses 100% of its disk space—it will normally crash quickly without warning. Following are a few tips to remember when administering OSD nodes.

- Each OSD's disk space (usually mounted under /var/lib/ceph/osd/osd-{1,2..}) needs to be placed on a dedicated underlying disk or partition.

- Check the Ceph configuration files and make sure that Ceph does not store its log file to the disks/partitions dedicated for use by OSDs.

- Make sure that no other process writes to the disks/partitions dedicated for use by OSDs.
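If you collect ceph health output in a script, the per-OSD lines shown earlier in this section can be picked apart with a regular expression. This is a minimal sketch that assumes the line format of the sample output in this chapter. The exact wording may differ between Ceph releases, and the function name is hypothetical.

```python
import re

# Matches lines such as "osd.2 is near full at 85%".
FULL_LINE = re.compile(
    r"^(osd\.\d+) is (near full|backfill full|full) at (\d+)%$"
)


def parse_full_osds(lines):
    """Return (osd_name, state, percent) tuples for matching lines."""
    found = []
    for line in lines:
        match = FULL_LINE.match(line.strip())
        if match:
            found.append((match.group(1), match.group(2), int(match.group(3))))
    return found


sample = [
    "HEALTH_ERR 1 nearfull osds, 1 full osds",
    "osd.2 is near full at 85%",
    "osd.3 is full at 97%",
]
for name, state, percent in parse_full_osds(sample):
    print(name, state, percent)
```

Lines that do not describe a single OSD, such as the summary line, are skipped.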

12.6 Checking the monitor status #

After you start the cluster and before first reading and/or writing data, check the Ceph Monitors' quorum status. When the cluster is already serving requests, check the Ceph Monitors' status periodically to ensure that they are running.

To display the monitor map, execute the following:

cephuser@adm > ceph mon stat

or

cephuser@adm > ceph mon dump

To check the quorum status for the monitor cluster, execute the following:

cephuser@adm > ceph quorum_status

Ceph will return the quorum status. For example, a Ceph cluster consisting of three monitors may return the following:

{ "election_epoch": 10,

"quorum": [

0,

1,

2],

"monmap": { "epoch": 1,

"fsid": "444b489c-4f16-4b75-83f0-cb8097468898",

"modified": "2011-12-12 13:28:27.505520",

"created": "2011-12-12 13:28:27.505520",

"mons": [

{ "rank": 0,

"name": "a",

"addr": "192.168.1.10:6789\/0"},

{ "rank": 1,

"name": "b",

"addr": "192.168.1.11:6789\/0"},

{ "rank": 2,

"name": "c",

"addr": "192.168.1.12:6789\/0"}

]

}

}

12.7 Checking placement group states #

Placement groups map objects to OSDs. When you monitor your placement groups, you will want them to be active and clean. For a detailed discussion, refer to Section 12.9, “Monitoring OSDs and placement groups”.

12.8 Storage capacity #

When a Ceph storage cluster gets close to its maximum capacity, Ceph prevents you from writing to or reading from Ceph OSDs as a safety measure to prevent data loss. Therefore, letting a production cluster approach its full ratio is not a good practice, because it sacrifices high availability. The default full ratio is set to .95, meaning 95% of capacity. This is a very aggressive setting for a test cluster with a small number of OSDs.

When monitoring your cluster, be alert to warnings related to the nearfull ratio. Such a warning means that the failure of one or more OSDs could result in a temporary service disruption. Consider adding more OSDs to increase storage capacity.

A common scenario for test clusters involves a system administrator removing a Ceph OSD from the Ceph storage cluster to watch the cluster rebalance, then removing another Ceph OSD, and so on until the cluster eventually reaches the full ratio and locks up. We recommend a bit of capacity planning even with a test cluster. Planning enables you to estimate how much spare capacity you will need in order to maintain high availability. Ideally, you want to plan for a series of Ceph OSD failures where the cluster can recover to an active + clean state without replacing those Ceph OSDs immediately. You can run a cluster in an active + degraded state, but this is not ideal for normal operating conditions.

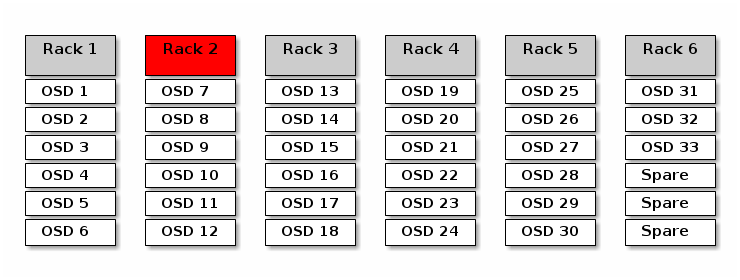

The diagram depicts a simplistic Ceph storage cluster containing 33 Ceph nodes with one Ceph OSD per host, each of them reading from and writing to a 3 TB drive. This exemplary cluster has a maximum actual capacity of 99 TB. The mon osd full ratio option is set to 0.95. If the cluster falls to roughly 5 TB of remaining capacity, it will not allow the clients to read and write data. Therefore the storage cluster’s operating capacity is about 94 TB (99 TB × 0.95), not 99 TB.
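The arithmetic for this example cluster can be written out as follows. The function name is hypothetical, and the sketch assumes identical drives and a single cluster-wide full ratio.

```python
def operating_capacity_tb(osd_count: int, osd_size_tb: float,
                          full_ratio: float) -> float:
    """Capacity usable before the full ratio blocks client I/O."""
    return osd_count * osd_size_tb * full_ratio


raw_tb = 33 * 3                                   # 99 TB of raw capacity
usable_tb = operating_capacity_tb(33, 3, 0.95)    # roughly 94 TB
reserve_tb = raw_tb - usable_tb                   # roughly 5 TB held back

print(raw_tb, round(usable_tb, 2), round(reserve_tb, 2))
```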

It is normal in such a cluster for one or two OSDs to fail. A less frequent but reasonable scenario involves a rack’s router or power supply failing, which brings down multiple OSDs simultaneously (for example, OSDs 7-12). In such a scenario, you should still strive for a cluster that can remain operational and achieve an active + clean state, even if that means adding a few hosts with additional OSDs in short order. If your capacity usage is too high, you may not lose data, but you could still sacrifice data availability while resolving an outage within a failure domain if capacity usage of the cluster exceeds the full ratio. For this reason, we recommend at least some rough capacity planning.

Identify two numbers for your cluster:

The number of OSDs.

The total capacity of the cluster.

If you divide the total capacity of your cluster by the number of OSDs in your cluster, you will find the mean capacity of an OSD within your cluster. Multiply that number by the number of OSDs you expect to fail simultaneously during normal operations (a relatively small number) to estimate the amount of data those OSDs hold. Then multiply the capacity of the cluster by the full ratio to arrive at a maximum operating capacity, and subtract the estimated amount of data on the OSDs you expect to fail to arrive at a reasonable full ratio. Repeat the foregoing process with a higher number of OSD failures (a rack of OSDs) to arrive at a reasonable number for a near full ratio.
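The planning steps above can be sketched as a short calculation. The function name is hypothetical, and expressing the result as a fraction of total capacity is one interpretation of the procedure, not an official formula. Treat the output as a starting point for discussion rather than a value to apply directly.

```python
def planned_ratio(total_capacity_tb: float, osd_count: int,
                  expected_failures: int, full_ratio: float = 0.95) -> float:
    """Suggest a ratio that leaves room for the expected OSD failures."""
    mean_osd_tb = total_capacity_tb / osd_count        # mean capacity per OSD
    failed_tb = mean_osd_tb * expected_failures        # data on failed OSDs
    max_operating_tb = total_capacity_tb * full_ratio  # maximum operating capacity
    # Assumption: convert the remaining capacity back into a ratio.
    return (max_operating_tb - failed_tb) / total_capacity_tb


# Example cluster from this section: 33 OSDs, 99 TB in total.
print(round(planned_ratio(99, 33, 2), 2))  # two OSDs failing at once
print(round(planned_ratio(99, 33, 6), 2))  # a rack of six OSDs failing
```

The first call corresponds to a candidate full ratio, the second to a candidate near full ratio.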

The following settings only apply on cluster creation and are then stored in the OSD map:

[global]

mon osd full ratio = .80

mon osd backfillfull ratio = .75

mon osd nearfull ratio = .70

After cluster creation, these values need to be changed in the OSD map using the ceph osd set-nearfull-ratio and ceph osd set-full-ratio commands.

- mon osd full ratio

The percentage of disk space used before an OSD is considered full. Default is .95

- mon osd backfillfull ratio

The percentage of disk space used before an OSD is considered too full to backfill. Default is .90

- mon osd nearfull ratio

The percentage of disk space used before an OSD is considered nearfull. Default is .85

If some OSDs are nearfull, but others have plenty of capacity, you may have a problem with the CRUSH weight for the nearfull OSDs.

12.9 Monitoring OSDs and placement groups #

High availability and high reliability require a fault-tolerant approach to managing hardware and software issues. Ceph has no single point of failure, and can service requests for data in a 'degraded' mode. Ceph’s data placement introduces a layer of indirection to ensure that data does not bind directly to particular OSD addresses. This means that tracking down system faults requires finding the placement group and the underlying OSDs at the root of the problem.

A fault in one part of the cluster may prevent you from accessing a particular object. That does not mean that you cannot access other objects. When you run into a fault, follow the steps for monitoring your OSDs and placement groups. Then begin troubleshooting.

Ceph is generally self-repairing. However, when problems persist, monitoring OSDs and placement groups will help you identify the problem.

12.9.1 Monitoring OSDs #

An OSD’s status is either in the cluster ('in') or out of the cluster ('out'). At the same time, it is either up and running ('up') or it is down and not running ('down'). If an OSD is 'up', it may be either in the cluster (you can read and write data) or out of the cluster. If it was in the cluster and recently moved out of the cluster, Ceph will migrate placement groups to other OSDs. If an OSD is out of the cluster, CRUSH will not assign placement groups to it. If an OSD is 'down', it should also be 'out'.

If an OSD is 'down' and 'in', there is a problem and the cluster will not be in a healthy state.

If you execute a command such as ceph health, ceph -s or ceph -w, you may notice that the cluster does not always echo back HEALTH_OK. With regard to OSDs, you should expect that the cluster will not echo HEALTH_OK under the following circumstances:

You have not started the cluster yet (it will not respond).

You have started or restarted the cluster and it is not ready yet, because the placement groups are being created and the OSDs are in the process of peering.

You have added or removed an OSD.

You have modified your cluster map.

An important aspect of monitoring OSDs is to ensure that when the cluster is up and running, all the OSDs in the cluster are up and running, too. To see if all the OSDs are running, execute:

# ceph osd stat

x osds: y up, z in; epoch: eNNNN

The result should tell you the total number of OSDs (x), how many are 'up' (y), how many are 'in' (z), and the map epoch (eNNNN). If the number of OSDs that are 'in' the cluster is more than the number of OSDs that are 'up', execute the following command to identify the ceph-osd daemons that are not running:

# ceph osd tree

#ID CLASS WEIGHT TYPE NAME STATUS REWEIGHT PRI-AFF

-1 2.00000 pool openstack

-3 2.00000 rack dell-2950-rack-A

-2 2.00000 host dell-2950-A1

0 ssd 1.00000 osd.0 up 1.00000 1.00000

1 ssd 1.00000 osd.1 down 1.00000 1.00000

For example, if an OSD with ID 1 is down, start it:

cephuser@osd > sudo systemctl start ceph-CLUSTER_ID@osd.1.service

See Section 4.3, “OSDs not running” for problems associated with OSDs that have stopped or that will not restart.
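The comparison of 'in' and 'up' counts described in this section can be automated. The following sketch parses a summary line in the format shown for ceph osd stat. The optional "(since ...)" parts are tolerated because some releases print them, but the exact format on your cluster is an assumption to verify. All names are hypothetical.

```python
import re

# "x osds: y up, z in; epoch: eNNNN", with optional "(since ...)" parts.
OSD_STAT = re.compile(
    r"(\d+) osds: (\d+) up(?: \([^)]*\))?, (\d+) in(?: \([^)]*\))?; epoch: e(\d+)"
)


def parse_osd_stat(line: str) -> dict:
    """Extract the OSD counts and the map epoch from a summary line."""
    match = OSD_STAT.search(line)
    if match is None:
        raise ValueError(f"unrecognized osd stat line: {line!r}")
    total, up, in_count, epoch = (int(group) for group in match.groups())
    return {"total": total, "up": up, "in": in_count, "epoch": epoch}


def has_in_but_down_osds(stat: dict) -> bool:
    """True if more OSDs are 'in' than 'up', which needs a closer look."""
    return stat["in"] > stat["up"]


stat = parse_osd_stat("3 osds: 2 up, 3 in; epoch: e42")  # made-up sample line
print(stat, has_in_but_down_osds(stat))
```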

12.9.2 Assigning placement group sets #

When CRUSH assigns placement groups to OSDs, it looks at the number of replicas for the pool and assigns the placement group to OSDs such that each replica of the placement group gets assigned to a different OSD. For example, if the pool requires three replicas of a placement group, CRUSH may assign them to osd.1, osd.2 and osd.3 respectively. CRUSH actually seeks a pseudo-random placement that will take into account failure domains you set in your CRUSH Map, so you will rarely see placement groups assigned to nearest neighbor OSDs in a large cluster. We refer to the set of OSDs that should contain the replicas of a particular placement group as the acting set. In some cases, an OSD in the acting set is down or otherwise not able to service requests for objects in the placement group. When these situations arise, it may match one of the following scenarios:

You added or removed an OSD. Then, CRUSH reassigned the placement group to other OSDs and therefore changed the composition of the acting set, causing the migration of data with a 'backfill' process.

An OSD was 'down', was restarted, and is now recovering.

An OSD in the acting set is 'down' or unable to service requests, and another OSD has temporarily assumed its duties.

Ceph processes a client request using the up set, which is the set of OSDs that will actually handle the requests. In most cases, the up set and the acting set are virtually identical. When they are not, it may indicate that Ceph is migrating data, an OSD is recovering, or that there is a problem (for example, Ceph usually echoes a HEALTH_WARN state with a 'stuck stale' message in such scenarios).

To retrieve a list of placement groups, run:

cephuser@adm > ceph pg dump

To view which OSDs are within the acting set or the up set for a given placement group, run:

cephuser@adm > ceph pg map PG_NUM

osdmap eNNN pg RAW_PG_NUM (PG_NUM) -> up [0,1,2] acting [0,1,2]

The result should tell you the osdmap epoch (eNNN), the placement group number (PG_NUM), the OSDs in the up set ('up'), and the OSDs in the acting set ('acting').

If the up set and acting set do not match, this may be an indicator either of the cluster rebalancing itself, or of a potential problem with the cluster.
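As a rough illustration of that comparison, the following Python sketch extracts the up set and the acting set from one line of `ceph pg map` output and reports whether they differ. The helper names `parse_pg_map` and `sets_differ` are hypothetical, and the sample lines are made up to follow the output format shown above.

```python
import re

# Matches the 'up [..] acting [..]' tail of a `ceph pg map` output line.
PG_MAP_RE = re.compile(r"up \[([\d,]*)\] acting \[([\d,]*)\]")


def parse_pg_map(line):
    """Return (up_set, acting_set) as lists of OSD IDs."""
    match = PG_MAP_RE.search(line)
    if match is None:
        raise ValueError(f"unrecognized pg map line: {line!r}")
    up, acting = (
        [int(osd) for osd in group.split(",") if osd]
        for group in match.groups()
    )
    return up, acting


def sets_differ(line):
    """True if the up set and the acting set are not identical."""
    up, acting = parse_pg_map(line)
    return up != acting


if __name__ == "__main__":
    # Made-up sample lines in the format shown above.
    same = "osdmap e537 pg 0.1f (0.1f) -> up [0,1,2] acting [0,1,2]"
    moved = "osdmap e538 pg 0.1f (0.1f) -> up [3,1,2] acting [0,1,2]"
    print(sets_differ(same), sets_differ(moved))
```

The lists are compared in order, because the first OSD in each set is the primary.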

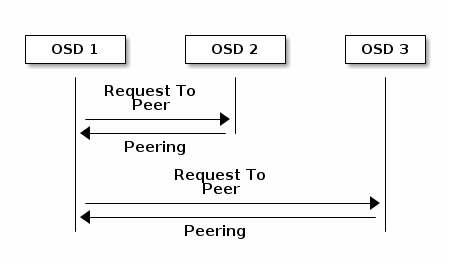

12.9.3 Peering #

Before you can write data to a placement group, it must be in an active state, and it should be in a clean state. For Ceph to determine the current state of a placement group, the primary OSD of the placement group (the first OSD in the acting set) peers with the secondary and tertiary OSDs to establish agreement on the current state of the placement group (assuming a pool with three replicas of the PG).

12.9.4 Monitoring placement group states #

If you execute a command such as ceph health, ceph -s or ceph -w, you may notice that the cluster does not always echo back the HEALTH_OK message. After you check to see if the OSDs are running, you should also check placement group states.

Expect that the cluster will not echo HEALTH_OK in a number of placement group peering-related circumstances:

You have created a pool and placement groups have not peered yet.

The placement groups are recovering.

You have added an OSD to or removed an OSD from the cluster.

You have modified your CRUSH Map and your placement groups are migrating.

There is inconsistent data in different replicas of a placement group.

Ceph is scrubbing a placement group’s replicas.

Ceph does not have enough storage capacity to complete backfilling operations.

If one of the above mentioned circumstances causes Ceph to echo HEALTH_WARN, do not panic. In many cases, the cluster will recover on its own. In some cases, you may need to take action. An important aspect of monitoring placement groups is to ensure that when the cluster is up and running, all placement groups are 'active' and preferably in the 'clean' state. To see the status of all placement groups, run:

cephuser@adm > ceph pg stat

x pgs: y active+clean; z bytes data, aa MB used, bb GB / cc GB avail

The result should tell you the total number of placement groups (x), how many placement groups are in a particular state such as 'active+clean' (y) and the amount of data stored (z).

In addition to the placement group states, Ceph will also echo back the amount of storage capacity used (aa), the amount of storage capacity remaining (bb), and the total storage capacity of the cluster (cc). These numbers can be important in a few cases:

You are reaching your near full ratio or full ratio.

Your data is not getting distributed across the cluster because of an error in your CRUSH configuration.
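To make the layout of the `ceph pg stat` summary concrete, here is a small Python sketch that splits such a line into the total number of placement groups and a per-state count. The function name `pg_state_counts` is hypothetical and the sample line is made up. It assumes the 'x pgs: y STATE, ...; ...' layout described above.

```python
def pg_state_counts(line):
    """Return (total_pgs, {state: count}) from a `ceph pg stat` line."""
    # Everything before the first ';' describes PG counts and states.
    summary = line.split(";", 1)[0]
    total_part, separator, states_part = summary.partition(" pgs:")
    if not separator:
        raise ValueError(f"unrecognized pg stat line: {line!r}")
    counts = {}
    for item in states_part.split(","):
        item = item.strip()
        if not item:
            continue
        count, state = item.split(None, 1)
        counts[state] = int(count)
    return int(total_part), counts


if __name__ == "__main__":
    # Made-up sample line for illustration only.
    sample = (
        "169 pgs: 160 active+clean, 9 active+degraded; "
        "10 KiB data, 3.1 GiB used, 27 GiB / 30 GiB avail"
    )
    total, counts = pg_state_counts(sample)
    not_clean = total - counts.get("active+clean", 0)
    print(total, counts, not_clean)
```

A non-zero `not_clean` value is only a hint to look closer. As noted above, many of these states resolve on their own.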

Placement group IDs consist of the pool number (not pool name) followed by

a period (.) and the placement group ID—a hexadecimal number. You

can view pool numbers and their names from the output of ceph osd

lspools. For example, the default pool rbd

corresponds to pool number 0. A fully qualified placement group ID has the

following form:

POOL_NUM.PG_ID

And it typically looks like this:

0.1f
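Because the part after the period is hexadecimal, it is easy to misread. The following Python sketch splits a fully qualified placement group ID into its pool number and numeric placement group ID, and formats it back. The helper names `parse_pg_id` and `format_pg_id` are hypothetical.

```python
def parse_pg_id(pg_id):
    """Split 'POOL_NUM.PG_ID' into (pool_number, pg_number).

    The pool number is decimal; the PG part is hexadecimal.
    """
    pool, separator, pg_hex = pg_id.partition(".")
    if not separator or not pool or not pg_hex:
        raise ValueError(f"not a fully qualified PG ID: {pg_id!r}")
    return int(pool), int(pg_hex, 16)


def format_pg_id(pool, pg):
    """Build the 'POOL_NUM.PG_ID' form from its numeric parts."""
    return f"{pool}.{pg:x}"


if __name__ == "__main__":
    print(parse_pg_id("0.1f"))   # (0, 31)
    print(format_pg_id(0, 31))   # 0.1f
```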

To retrieve a list of placement groups, run the following:

cephuser@adm > ceph pg dump

You can also format the output in JSON format and save it to a file:

cephuser@adm > ceph pg dump -o FILE_NAME --format=json

To query a particular placement group, run the following:

cephuser@adm > ceph pg POOL_NUM.PG_ID query

The following list describes the common placement group states in detail.

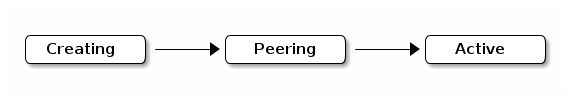

- CREATING

When you create a pool, it will create the number of placement groups you specified. Ceph will echo 'creating' when it is creating one or more placement groups. When they are created, the OSDs that are part of the placement group’s acting set will peer. When peering is complete, the placement group status should be 'active+clean', which means that a Ceph client can begin writing to the placement group.

Figure 12.3: Placement groups status #- PEERING

When Ceph is peering a placement group, it is bringing the OSDs that store the replicas of the placement group into agreement about the state of the objects and metadata in the placement group. When Ceph completes peering, this means that the OSDs that store the placement group agree about the current state of the placement group. However, completion of the peering process does not mean that each replica has the latest contents.

Note: Authoritative history

Ceph will not acknowledge a write operation to a client until all OSDs of the acting set persist the write operation. This practice ensures that at least one member of the acting set will have a record of every acknowledged write operation since the last successful peering operation.

With an accurate record of each acknowledged write operation, Ceph can construct and enlarge a new authoritative history of the placement group—a complete and fully ordered set of operations that, if performed, would bring an OSD’s copy of a placement group up to date.

- ACTIVE

When Ceph completes the peering process, a placement group may become active. The active state means that the data in the placement group is generally available in the primary placement group and the replicas for read and write operations.

- CLEAN

When a placement group is in the clean state, the primary OSD and the replica OSDs have successfully peered and there are no stray replicas for the placement group. Ceph replicated all objects in the placement group the correct number of times.

- DEGRADED

When a client writes an object to the primary OSD, the primary OSD is responsible for writing the replicas to the replica OSDs. After the primary OSD writes the object to storage, the placement group will remain in a 'degraded' state until the primary OSD has received an acknowledgement from the replica OSDs that Ceph created the replica objects successfully.

The reason a placement group can be 'active+degraded' is that an OSD may be 'active' even though it does not hold all of the objects yet. If an OSD goes down, Ceph marks each placement group assigned to the OSD as 'degraded'. The OSDs must peer again when the OSD comes back up. However, a client can still write a new object to a degraded placement group if it is 'active'.

If an OSD is 'down' and the 'degraded' condition persists, Ceph may mark the down OSD as 'out' of the cluster and remap the data from the 'down' OSD to another OSD. The time between being marked 'down' and being marked 'out' is controlled by the mon osd down out interval option, which is set to 600 seconds by default.

A placement group can also be 'degraded' because Ceph cannot find one or more objects that should be in the placement group. While you cannot read or write to unfound objects, you can still access all of the other objects in the 'degraded' placement group.

- RECOVERING

Ceph was designed for fault-tolerance at a scale where hardware and software problems are ongoing. When an OSD goes 'down', its contents may fall behind the current state of other replicas in the placement groups. When the OSD is back 'up', the contents of the placement groups must be updated to reflect the current state. During that time period, the OSD may reflect a 'recovering' state.

Recovery is not always trivial, because a hardware failure may cause a cascading failure of multiple OSDs. For example, a network switch for a rack or cabinet may fail, which can cause the OSDs of a number of host machines to fall behind the current state of the cluster. Each of the OSDs must recover when the fault is resolved.

Ceph provides a number of settings to balance the resource contention between new service requests and the need to recover data objects and restore the placement groups to the current state. The osd recovery delay start setting allows an OSD to restart, re-peer and even process some replay requests before starting the recovery process. The osd recovery thread timeout setting sets a thread timeout, because multiple OSDs may fail, restart and re-peer at staggered rates. The osd recovery max active setting limits the number of recovery requests an OSD will process simultaneously to prevent the OSD from failing to serve. The osd recovery max chunk setting limits the size of the recovered data chunks to prevent network congestion.

- BACKFILLING

When a new OSD joins the cluster, CRUSH will reassign placement groups from OSDs in the cluster to the newly added OSD. Forcing the new OSD to accept the reassigned placement groups immediately can put excessive load on the new OSD. Backfilling the OSD with the placement groups allows this process to begin in the background. When backfilling is complete, the new OSD will begin serving requests when it is ready.

During the backfill operations, you may see one of several states: 'backfill_wait' indicates that a backfill operation is pending, but is not yet in progress; 'backfill' indicates that a backfill operation is in progress; 'backfill_too_full' indicates that a backfill operation was requested, but could not be completed because of insufficient storage capacity. When a placement group cannot be backfilled, it may be considered 'incomplete'.

Ceph provides a number of settings to manage the load associated with reassigning placement groups to an OSD (especially a new OSD). By default, osd max backfills sets the maximum number of concurrent backfills to or from an OSD to 10. The backfill full ratio enables an OSD to refuse a backfill request if the OSD is approaching its full ratio (90%, by default); you can change it with the ceph osd set-backfillfull-ratio command. If an OSD refuses a backfill request, the osd backfill retry interval enables an OSD to retry the request (after 10 seconds, by default). OSDs can also set osd backfill scan min and osd backfill scan max to manage scan intervals (64 and 512, by default).

- REMAPPED

When the acting set that services a placement group changes, the data migrates from the old acting set to the new acting set. It may take some time for a new primary OSD to service requests. So it may ask the old primary to continue to service requests until the placement group migration is complete. When data migration completes, the mapping uses the primary OSD of the new acting set.

- STALE

While Ceph uses heartbeats to ensure that hosts and daemons are running, the ceph-osd daemons may also get into a 'stuck' state where they are not reporting statistics in a timely manner (for example, because of a temporary network fault). By default, OSD daemons report their placement group, boot and failure statistics every half second (0.5), which is more frequent than the heartbeat thresholds. If the primary OSD of a placement group’s acting set fails to report to the monitor or if other OSDs have reported the primary OSD as 'down', the monitors will mark the placement group as 'stale'.

When you start your cluster, it is common to see the 'stale' state until the peering process completes. After your cluster has been running for a while, seeing placement groups in the 'stale' state indicates that the primary OSD for those placement groups is down or not reporting placement group statistics to the monitor.
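Placement groups usually report several of the states described above at once, joined with a plus sign, as in 'active+clean' or 'active+degraded'. The following Python sketch splits such a compound state and separates the two states you normally want to see from everything else. The function name `summarize_pg_state` is hypothetical, and the sample state strings only use state names mentioned in this section.

```python
def summarize_pg_state(state):
    """Break a compound PG state such as 'active+clean' into parts."""
    flags = state.split("+")
    return {
        "active": "active" in flags,
        "clean": "clean" in flags,
        # Any remaining states are the ones worth a closer look.
        "other": [flag for flag in flags if flag not in ("active", "clean")],
    }


if __name__ == "__main__":
    for sample in ("active+clean", "active+remapped+backfill_wait", "stale"):
        print(sample, summarize_pg_state(sample))
```

A placement group that is active but reports other states can typically still serve client requests, whereas one that is not active cannot.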

12.9.5 Finding an object location #

To store object data in the Ceph Object Store, a Ceph client needs to set an object name and specify a related pool. The Ceph client retrieves the latest cluster map and the CRUSH algorithm calculates how to map the object to a placement group, and then calculates how to assign the placement group to an OSD dynamically. To find the object location, all you need is the object name and the pool name. For example:

cephuser@adm > ceph osd map POOL_NAME OBJECT_NAME [NAMESPACE]

As an example, let us create an object. Specify an object name

'test-object-1', a path to an example file 'testfile.txt' containing some

object data, and a pool name 'data' using the rados put

command on the command line:

cephuser@adm > rados put test-object-1 testfile.txt --pool=data

To verify that the Ceph Object Store stored the object, run the following:

cephuser@adm > rados -p data ls

Now, identify the object location. Ceph will output the object’s location:

cephuser@adm > ceph osd map data test-object-1

osdmap e537 pool 'data' (0) object 'test-object-1' -> pg 0.d1743484 \

(0.4) -> up ([1,0], p0) acting ([1,0], p0)

To remove the example object, simply delete it using the rados

rm command:

cephuser@adm > rados rm test-object-1 --pool=data
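If you want to process the `ceph osd map` output shown earlier in a script, the following Python sketch pulls out the placement group ID, the up set and the acting set. The function name `parse_osd_map` is hypothetical, and the pattern assumes the single-line form of the sample output above (with the line continuation joined).

```python
import re

OSD_MAP_RE = re.compile(
    r"-> pg (\S+) \((\S+)\) -> "
    r"up \(\[([\d,]*)\], p(\d+)\) acting \(\[([\d,]*)\], p(\d+)\)"
)


def _osd_list(text):
    """Convert '1,0' into [1, 0]; an empty string gives an empty list."""
    return [int(osd) for osd in text.split(",") if osd]


def parse_osd_map(line):
    """Extract PG and OSD set details from one `ceph osd map` line."""
    match = OSD_MAP_RE.search(line)
    if match is None:
        raise ValueError(f"unrecognized osd map line: {line!r}")
    raw_pg, pg, up, up_primary, acting, acting_primary = match.groups()
    return {
        "raw_pg": raw_pg,
        "pg": pg,
        "up": _osd_list(up),
        "up_primary": int(up_primary),
        "acting": _osd_list(acting),
        "acting_primary": int(acting_primary),
    }


if __name__ == "__main__":
    # The sample output from above, joined into a single line.
    sample = (
        "osdmap e537 pool 'data' (0) object 'test-object-1' -> "
        "pg 0.d1743484 (0.4) -> up ([1,0], p0) acting ([1,0], p0)"
    )
    print(parse_osd_map(sample))
```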