4 Installing applications from AI Library #

SUSE AI is delivered as a set of components that you can combine to meet specific use cases. To enable the full integrated stack, you need to deploy multiple applications in sequence. Applications with the fewest dependencies must be installed first, followed by dependent applications once their required dependencies are in place within the cluster.
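The ordering rule above amounts to a topological sort of the dependency graph. The following sketch uses the standard `tsort` utility to derive one valid installation order. The dependency pairs are illustrative assumptions drawn from later sections of this chapter (Open WebUI needing cert-manager, a vector database such as Milvus, and optionally Ollama). They are not an authoritative dependency list.

```shell
#!/bin/sh
# Each input line reads "<dependency> <dependent>": the application on the
# left must be installed before the one on the right.
# The pairs below are assumptions for illustration only.
install_order() {
  tsort <<'EOF'
cert-manager open-webui
milvus open-webui
ollama open-webui
EOF
}

# Print one valid installation order, one application per line.
install_order
```

Any order printed by `tsort` lists every dependency before the application that needs it, so the result can be used directly as the sequence of Helm installations.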

For production deployments, we strongly recommend deploying Rancher, SUSE Observability, and workloads from the AI library to separate Kubernetes clusters.

4.1 What is SUSE Application Collection? #

SUSE Application Collection provides curated, trusted, compliant and up-to-date applications for Kubernetes. Learn more on its dedicated Web site and in the product summary.

4.2 What is SUSE Registry? #

Applications in the SUSE Registry are not built by the SUSE build system. They are mirrored upstream projects with attached supply chain artifacts. These artifacts help you source popular applications and tools in the AI ecosystem from a single source and provide built-in visibility into their upstream origins. Learn more on the registry’s Web site and refer to Section 4.15, “Verifying SUSE AI Library applications” to see how to verify the supply chain artifacts.

You can install the required AI Library component Helm charts using one of the following methods:

Install each AI application manually using the Helm CLI as described in Section 4.3, “Installation procedure”.

Use a meta Helm chart called SUSE AI Deployer to include all the dependencies specific to your use case. Refer to Section 5.2, “AI Library deployer” for more details.

Use SUSE AI Lifecycle Manager GUI to install individual AI applications. Refer to Section 5.3, “Lifecycle manager” for more details.

Important: The ClusterRepo repository

Helm charts of AI applications are hosted in the private SUSE registries: SUSE Application Collection and SUSE Registry. Therefore, SUSE AI Lifecycle Manager requires a ClusterRepo repository as the source of AI application charts. The ClusterRepo name is used by the extension to identify and select the chart source.

4.3 Installation procedure #

This procedure includes steps to install AI Library applications.

Purchase the SUSE AI entitlement. It is a separate entitlement from SUSE Rancher Prime.

Visit https://apps.rancher.io/ to perform the check for the SUSE AI entitlement. If the entitlement check is successful, you are given access to pull and deploy the SUSE AI-related Helm charts and container images available on SUSE Application Collection.

Visit the SUSE Application Collection, sign in and get the user access token as described in https://docs.apps.rancher.io/get-started/authentication/.

Create a Kubernetes namespace if it does not already exist. The steps in this procedure assume that all containers are deployed into the same namespace, referred to as SUSE_AI_NAMESPACE. Replace its name to match your preferences. Helm charts of AI applications are hosted in the private SUSE registries: SUSE Application Collection and SUSE Registry.

> kubectl create namespace <SUSE_AI_NAMESPACE>

Create the SUSE Application Collection secret.

> kubectl create secret docker-registry application-collection \
  --docker-server=dp.apps.rancher.io \
  --docker-username=<APPCO_USERNAME> \
  --docker-password=<APPCO_USER_TOKEN> \
  -n <SUSE_AI_NAMESPACE>

Create the SUSE Registry secret.

> kubectl create secret docker-registry suse-ai-registry \
  --docker-server=registry.suse.com \
  --docker-username=regcode \
  --docker-password=<SCC_REG_CODE> \
  -n <SUSE_AI_NAMESPACE>

Log in to the SUSE Application Collection Helm registry.

> helm registry login dp.apps.rancher.io/charts \
  -u <APPCO_USERNAME> \
  -p <APPCO_USER_TOKEN>

Log in to the SUSE Registry Helm registry. The username is regcode and the password is the SCC registration code of your SUSE AI subscription you saved earlier.

> helm registry login registry.suse.com \
  -u regcode \
  -p <SCC_REG_CODE>

Install cert-manager as described in Section 4.4, “Installing cert-manager”.

Install AI Library components. You can either install each component separately, or use the SUSE AI Deployer chart to install the components together as described in Section 5.2, “AI Library deployer”.

Install an application with vector database capabilities. Open WebUI supports either OpenSearch or Milvus.

(Optional) Install Ollama as described in Section 4.7, “Installing Ollama”.

Install Open WebUI as described in Section 4.8, “Installing Open WebUI”.

Install vLLM as described in Section 4.9, “Installing vLLM”.

Install mcpo as described in Section 4.10, “Installing mcpo”.

Install PyTorch as described in Section 4.11, “Installing PyTorch”.

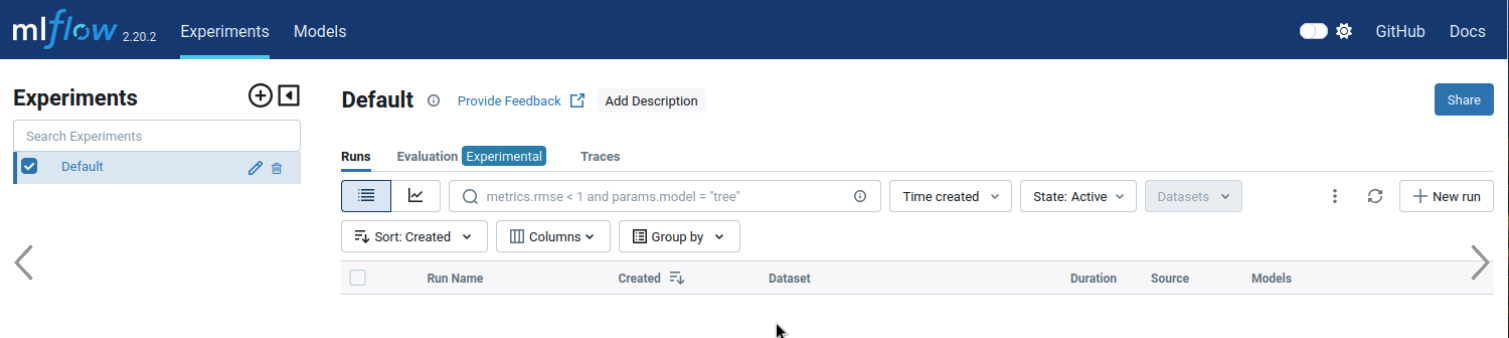

Install MLflow as described in Section 4.14, “Installing MLflow”.

Install Qdrant as described in Section 4.12, “Installing Qdrant”.

Install LiteLLM as described in Section 4.13, “Installing LiteLLM”.
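Because the commands in this procedure rely on several placeholders, it can help to export them as environment variables and confirm that none is empty before running any `kubectl` or `helm` command. The following is a minimal sketch under that assumption. The variable names simply mirror the placeholders used above, and the `require_vars` helper is hypothetical, not part of any SUSE tooling.

```shell
#!/bin/sh
# require_vars NAME...: report every unset or empty variable on stderr and
# return non-zero if at least one is missing.
# Hypothetical helper; variable names mirror the placeholders in this procedure.
require_vars() {
  missing=0
  for name in "$@"; do
    # Indirect lookup of the variable called $name (POSIX sh has no ${!name}).
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      echo "missing: $name" >&2
      missing=1
    fi
  done
  return "$missing"
}

if require_vars SUSE_AI_NAMESPACE APPCO_USERNAME APPCO_USER_TOKEN SCC_REG_CODE; then
  echo "All placeholders are set."
else
  echo "Set the variables listed above before continuing." >&2
fi
```

With the variables exported, the commands of this procedure can reference them directly, for example `-n "$SUSE_AI_NAMESPACE"`.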

4.4 Installing cert-manager #

cert-manager is an extensible X.509 certificate controller for Kubernetes workloads. It supports certificates from popular public issuers as well as private issuers. cert-manager ensures that the certificates are valid and up-to-date, and attempts to renew certificates at a configured time before expiry.

In previous releases, cert-manager was automatically installed together with Open WebUI. Currently, cert-manager is no longer part of the Open WebUI Helm chart and you need to install it separately.

4.4.1 Details about the cert-manager application #

Before deploying cert-manager, it is important to know more about the supported configurations and documentation. The following command provides the corresponding details:

> helm show values oci://dp.apps.rancher.io/charts/cert-manager

Alternatively, you can also refer to the cert-manager Helm chart page on the SUSE Application Collection site at https://apps.rancher.io/applications/cert-manager. It contains available versions and the link to pull the cert-manager container image.

4.4.2 cert-manager installation procedure #

Before the installation, you need to get user access to the SUSE Application Collection and SUSE Registry, create a Kubernetes namespace, and log in to the Helm registry as described in Section 4.3, “Installation procedure”.

Install the cert-manager chart.

> helm upgrade --install cert-manager \
  oci://dp.apps.rancher.io/charts/cert-manager \
  -n <CERT_MANAGER_NAMESPACE> \
  --set crds.enabled=true \
  --set 'global.imagePullSecrets[0].name'=application-collection

4.4.3 Upgrading cert-manager #

To upgrade cert-manager to a specific new version, run the following command:

> helm upgrade --install cert-manager \
  oci://dp.apps.rancher.io/charts/cert-manager \
  -n <CERT_MANAGER_NAMESPACE> \
  --version <VERSION_NUMBER>

To upgrade cert-manager to the latest version, run the following command:

> helm upgrade --install cert-manager \
  oci://dp.apps.rancher.io/charts/cert-manager \
  -n <CERT_MANAGER_NAMESPACE>

4.4.4 Uninstalling cert-manager #

To uninstall cert-manager, run the following command:

> helm uninstall cert-manager -n <CERT_MANAGER_NAMESPACE>

4.5 Installing OpenSearch #

OpenSearch is a community-driven, open source search and analytics suite. It is used to search, visualize and analyze data. OpenSearch consists of a data store and search engine (OpenSearch), a visualization and user interface (OpenSearch Dashboards), and a server-side data collector (Data Prepper). Its functionality can be extended by plug-ins that enhance features like search, analytics, observability, security or machine learning.

4.5.1 Details about the OpenSearch application #

Before deploying OpenSearch, it is important to know more about the supported configurations and documentation. The following command provides the corresponding details:

> helm show values oci://dp.apps.rancher.io/charts/opensearch

Alternatively, you can also refer to the OpenSearch Helm chart page on the SUSE Application Collection site at https://apps.rancher.io/applications/opensearch. It contains OpenSearch dependencies, available versions and the link to pull the OpenSearch container image.

4.5.2 OpenSearch installation procedure #

OpenSearch can operate as a single-node or multi-node cluster. The following override file examples outline both scenarios.

Both scenarios require increasing the value of vm.max_map_count to at least 262144.

To check the current value, run the following command:

> cat /proc/sys/vm/max_map_count

To increase the value, add the following line to /etc/sysctl.conf:

vm.max_map_count=262144

Then run sudo sysctl -p to reload the settings.
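The check above can be scripted so that it reports whether the kernel setting already meets the minimum. The following is a minimal sketch. The `map_count_ok` helper name is hypothetical, and because vm.max_map_count is a per-host kernel setting, the script would have to be run on each node that may host OpenSearch pods.

```shell
#!/bin/sh
# Minimum value required for OpenSearch, as stated in this section.
REQUIRED_MAP_COUNT=262144

# map_count_ok VALUE: succeed if VALUE meets the required minimum.
# Hypothetical helper name, for illustration only.
map_count_ok() {
  [ "$1" -ge "$REQUIRED_MAP_COUNT" ]
}

# Read the live value on Linux; fall back to 0 where /proc is unavailable.
current=$(cat /proc/sys/vm/max_map_count 2>/dev/null || echo 0)

if map_count_ok "$current"; then
  echo "vm.max_map_count=$current is sufficient"
else
  echo "vm.max_map_count=$current is below $REQUIRED_MAP_COUNT" >&2
fi
```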

Before the installation, you need to get user access to the SUSE Application Collection and SUSE Registry, create a Kubernetes namespace, and log in to the Helm registry as described in Section 4.3, “Installation procedure”.

Create an opensearch_custom_overrides.yaml file to override the default values of the Helm chart.

For a single-node cluster, use the following template file:

# opensearch_custom_overrides.yaml
global:
  imagePullSecrets:
  - application-collection
singleNode: true
replicas: 1
persistence:
  enabled: true
  storageClass: local-path
extraEnvs:
  - name: OPENSEARCH_INITIAL_ADMIN_PASSWORD
    value: "MySecurePass123"
service:
  type: NodePort
  httpPort: 9200
  transportPort: 9300
  metricsPort: 9600
resources:
  limits:
    memory: "6Gi"
    cpu: "2"
config:
  opensearch.yml: |
    plugins.security.disabled: true

For a multi-node cluster, use the following template file:

# opensearch_custom_overrides.yaml
global:
  imagePullSecrets:
  - application-collection
singleNode: false
replicas: 3
persistence:
  enabled: true
  storageClass: local-path
extraEnvs:
  - name: OPENSEARCH_INITIAL_ADMIN_PASSWORD
    value: "MySecurePass123"
  - name: ES_JAVA_OPTS
    value: "-Xms3g -Xmx3g"
service:
  type: NodePort
  httpPort: 9200
  transportPort: 9300
  metricsPort: 9600
resources:
  limits:
    memory: "6Gi"
    cpu: "2"
  requests:
    memory: "4Gi"
    cpu: "1"
startupProbe:
  tcpSocket:
    port: 9200
  initialDelaySeconds: 60
  periodSeconds: 10
  timeoutSeconds: 5
  failureThreshold: 12
readinessProbe:
  tcpSocket:
    port: 9200
  initialDelaySeconds: 60
  periodSeconds: 10
  timeoutSeconds: 5
  failureThreshold: 6
livenessProbe:
  tcpSocket:
    port: 9200
  initialDelaySeconds: 120
  periodSeconds: 20
  timeoutSeconds: 5
  failureThreshold: 3
config:
  opensearch.yml: |
    plugins.security.disabled: true

After saving the override file as opensearch_custom_overrides.yaml, apply its configuration with the following command.

> helm upgrade --install \
  opensearch oci://dp.apps.rancher.io/charts/opensearch \
  -n <SUSE_AI_NAMESPACE> \
  -f <opensearch_custom_overrides.yaml>

Check that the pods and services are running.

> kubectl get pods -n <SUSE_AI_NAMESPACE> | grep "opensearch"
opensearch-cluster-master-0 1/1 Running 0 5h34m

A multi-node cluster configuration shows that the replicas are distributed across multiple nodes. Add the -o wide option to include the pod IP address and node name in the output.

> kubectl get pods -n <SUSE_AI_NAMESPACE> -o wide | grep "opensearch"
opensearch-cluster-master-0 1/1 Running 0 2m30s 10.42.1.32 mgmt-rancher-wkrgpu1
opensearch-cluster-master-1 1/1 Running 0 2m30s 10.42.1.33 mgmt-rancher-wkrgpu1
opensearch-cluster-master-2 1/1 Running 0 2m30s 10.42.0.27 mgmt-rancher
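The pod check above can also be automated, for example in a deployment script. The following sketch reads `kubectl get pods` output lines from standard input and succeeds only if every listed pod is Running with all of its containers ready. The `all_pods_ready` helper is a hypothetical name, and the sketch assumes the default column layout (NAME, READY, STATUS).

```shell
#!/bin/sh
# all_pods_ready: read `kubectl get pods` lines on stdin and succeed only if
# at least one line is present and every line shows STATUS "Running" with a
# READY column of the form n/n. Hypothetical helper for illustration.
all_pods_ready() {
  awk '
    BEGIN { seen = 0; bad = 0 }
    {
      split($2, ready, "/")
      seen++
      if ($3 != "Running" || ready[1] != ready[2]) bad++
    }
    END { exit (seen > 0 && bad == 0) ? 0 : 1 }
  '
}

# Usage against a live cluster (requires kubectl access):
#   kubectl get pods -n "$SUSE_AI_NAMESPACE" --no-headers \
#     | grep opensearch | all_pods_ready && echo "OpenSearch is ready"
```

An empty input is treated as a failure so that a missing deployment is not mistaken for a healthy one.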

4.5.3 Integrating OpenSearch with Open WebUI #

To integrate OpenSearch with Open WebUI, follow these steps:

Edit the override file for Open WebUI, owui_custom_overrides.yaml, and update the extraEnvVars section as follows.

Change the VECTOR_DB value to opensearch.

Remove the MILVUS_URI variable.

Add all the OpenSearch-related environment variables.

extraEnvVars:
- name: DEFAULT_MODELS
  value: "gemma:2b"
- name: DEFAULT_USER_ROLE
  value: "pending"
- name: ENABLE_SIGNUP
  value: "true"
- name: GLOBAL_LOG_LEVEL
  value: INFO
- name: RAG_EMBEDDING_MODEL
  value: "sentence-transformers/all-MiniLM-L6-v2"
- name: INSTALL_NLTK_DATASETS
  value: "true"
- name: VECTOR_DB
  value: "opensearch"
#- name: MILVUS_URI
#  value: http://milvus.<SUSE_AI_NAMESPACE>.svc.cluster.local:19530
- name: OPENAI_API_KEY
  value: "0p3n-w3bu!"
- name: OPENSEARCH_SSL
  value: "false"
- name: OPENSEARCH_URI
  value: http://opensearch-cluster-master.<SUSE_AI_NAMESPACE>.svc.cluster.local:9200
- name: OPENSEARCH_USERNAME
  value: admin
- name: OPENSEARCH_PASSWORD
  value: MySecurePass123
- name: OPENSEARCH_CERT_VERIFY
  value: "false"

Redeploy Open WebUI.

> helm upgrade --install \
  open-webui oci://dp.apps.rancher.io/charts/open-webui \
  -n <SUSE_AI_NAMESPACE> \
  -f <owui_custom_overrides.yaml>

Verify that VECTOR_DB is set to opensearch.

> kubectl exec -it open-webui-0 -n <SUSE_AI_NAMESPACE> \
  -- sh -c 'echo "VECTOR_DB=$VECTOR_DB"'
Defaulted container "open-webui" out of: open-webui, copy-app-data (init)
VECTOR_DB=opensearch

4.5.4 Upgrading OpenSearch #

The OpenSearch chart receives application updates and updates of the Helm chart templates.

New versions may include changes that require manual steps.

These steps are listed in the corresponding README file.

All OpenSearch dependencies are updated automatically during an OpenSearch upgrade.

To upgrade OpenSearch, identify the new version number and run the following command:

> helm upgrade --install \
  opensearch oci://dp.apps.rancher.io/charts/opensearch \
  -n <SUSE_AI_NAMESPACE> \
  --version <VERSION_NUMBER> \
  -f <opensearch_custom_overrides.yaml>

If you omit the --version option, OpenSearch gets upgraded to the latest available version.
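The effect of the optional --version flag can be captured in a small wrapper that adds the flag only when a version is supplied. The following sketch prints the resulting `helm` command instead of running it. The `upgrade_cmd` helper, the `suse-ai` namespace and the version `1.2.3` are hypothetical values chosen for illustration.

```shell
#!/bin/sh
# upgrade_cmd RELEASE CHART NAMESPACE VALUES_FILE [VERSION]
# Print (not run) the helm upgrade command. The --version flag is appended
# only when VERSION is given; without it, Helm picks the latest chart version.
# Hypothetical helper for illustration.
upgrade_cmd() {
  release=$1 chart=$2 namespace=$3 values=$4 version=${5:-}
  set -- helm upgrade --install "$release" "$chart" -n "$namespace" -f "$values"
  if [ -n "$version" ]; then
    set -- "$@" --version "$version"
  fi
  echo "$@"
}

# Upgrade to the latest available version (namespace is a placeholder).
upgrade_cmd opensearch oci://dp.apps.rancher.io/charts/opensearch \
  suse-ai opensearch_custom_overrides.yaml

# Pin a specific version (1.2.3 is a made-up version number).
upgrade_cmd opensearch oci://dp.apps.rancher.io/charts/opensearch \
  suse-ai opensearch_custom_overrides.yaml 1.2.3
```

Pinning the version keeps upgrades reproducible, which matters because new chart versions may require the manual steps listed in the chart README.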

4.5.5 Uninstalling OpenSearch #

To uninstall OpenSearch, run the following command:

> helm uninstall opensearch -n <SUSE_AI_NAMESPACE>

4.6 Installing Milvus #

Milvus is a scalable, high-performance vector database designed for AI applications. It enables efficient organization and searching of massive unstructured datasets, including text, images and multi-modal content. This procedure walks you through the installation of Milvus and its dependencies.

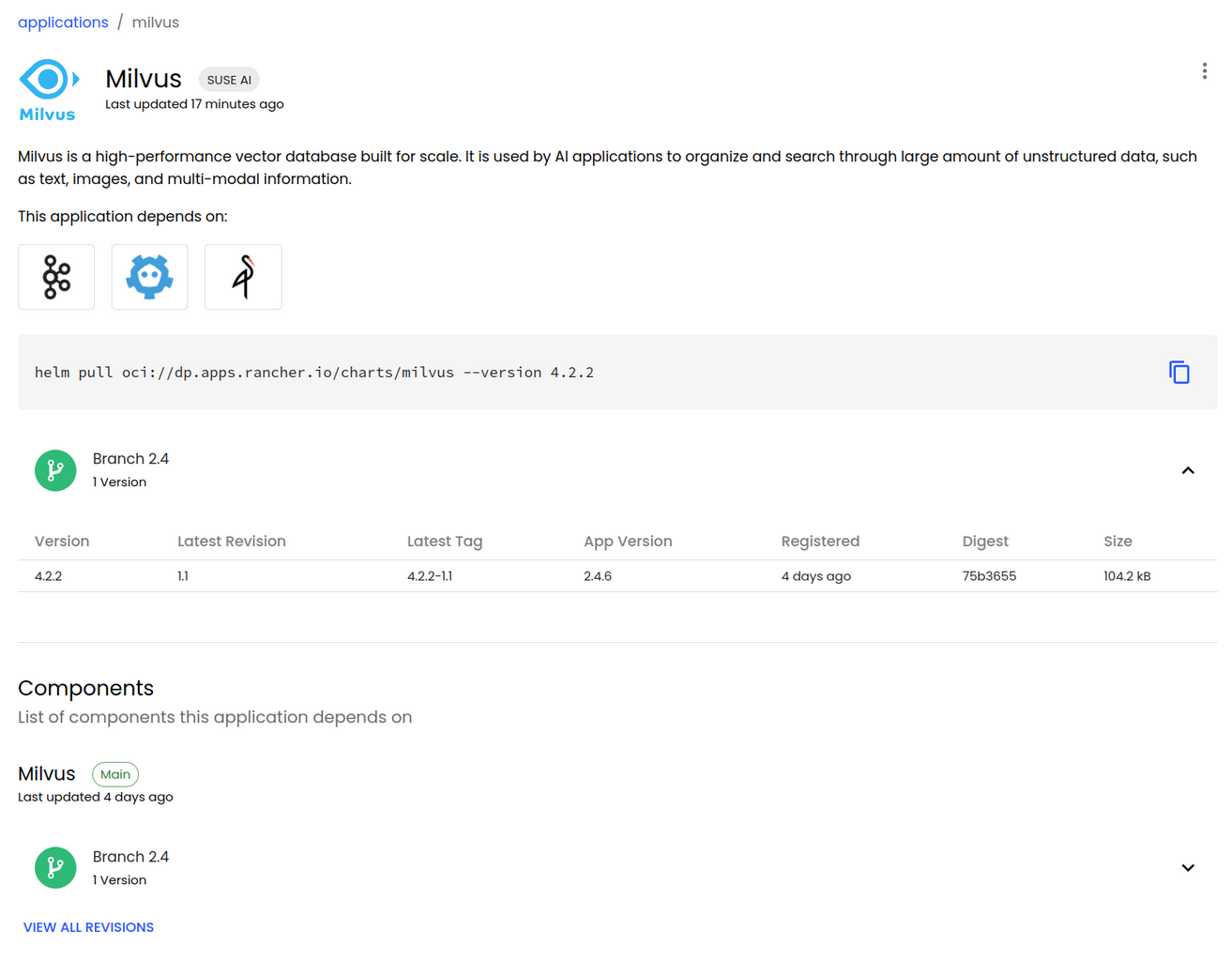

4.6.1 Details about the Milvus application #

Before deploying Milvus, it is important to know more about the supported configurations and documentation. The following command provides the corresponding details:

> helm show values oci://dp.apps.rancher.io/charts/milvus

Alternatively, you can also refer to the Milvus Helm chart page on the SUSE Application Collection site at https://apps.rancher.io/applications/milvus. It contains Milvus dependencies, available versions and the link to pull the Milvus container image.

4.6.2 Milvus installation procedure #

Before the installation, you need to get user access to the SUSE Application Collection and SUSE Registry, create a Kubernetes namespace, and log in to the Helm registry as described in Section 4.3, “Installation procedure”.

When installed as part of SUSE AI, Milvus depends on etcd, MinIO and Apache Kafka. Because the Milvus chart uses a non-default configuration, create an override file milvus_custom_overrides.yaml with the following content.

Tip: As a template, you can download the Milvus Helm chart that includes the values.yaml file with the default configuration by running the following command:

> helm pull oci://dp.apps.rancher.io/charts/milvus --version 4.2.2

global:
  imagePullSecrets:
  - application-collection
cluster:
  enabled: true
standalone:
  persistence:
    persistentVolumeClaim:
      storageClassName: "local-path"
etcd:
  replicaCount: 1
  persistence:
    storageClassName: "local-path"
minio:
  mode: distributed
  replicas: 4
  rootUser: "admin"
  rootPassword: "adminminio"
  persistence:
    storageClass: "local-path"
  resources:
    requests:
      memory: 1024Mi
kafka:
  enabled: true
  name: kafka
  replicaCount: 3
  broker:
    enabled: true
  cluster:
    listeners:
      client:
        protocol: 'PLAINTEXT'
      controller:
        protocol: 'PLAINTEXT'
  persistence:
    enabled: true
    annotations: {}
    labels: {}
    existingClaim: ""
    accessModes:
      - ReadWriteOnce
    resources:
      requests:
        storage: 8Gi
    storageClassName: "local-path"
extraConfigFiles: # (1)
  user.yaml: |+
    trace:
      exporter: jaeger
      sampleFraction: 1
      jaeger:
        url: "http://opentelemetry-collector.observability.svc.cluster.local:14268/api/traces" # (2)

(1) The extraConfigFiles section is optional, required only to receive telemetry data from Open WebUI.

(2) The URL of the OpenTelemetry Collector installed by the user.

Tip: The above example uses local storage. For production environments, we recommend using an enterprise class storage solution such as SUSE Storage, in which case the storageClassName option must be set to longhorn.

Install the Milvus Helm chart using the milvus_custom_overrides.yaml override file.

> helm upgrade --install \
  milvus oci://dp.apps.rancher.io/charts/milvus \
  -n <SUSE_AI_NAMESPACE> \
  --version 4.2.2 -f <milvus_custom_overrides.yaml>

4.6.2.1 Using Apache Kafka with SUSE Storage #

When Milvus is deployed in cluster mode, it uses Apache Kafka as a message queue. If Apache Kafka uses SUSE Storage as a storage back-end, you need to create an XFS storage class and make it available for the Apache Kafka deployment. Otherwise deploying Apache Kafka with a storage class of an Ext4 file system fails with the following error:

"Found directory /mnt/kafka/logs/lost+found, 'lost+found' is not in the form of topic-partition or topic-partition.uniqueId-delete (if marked for deletion)"

To introduce the XFS storage class, follow these steps:

Create a file named longhorn-xfs.yaml with the following content:

apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: longhorn-xfs
provisioner: driver.longhorn.io
allowVolumeExpansion: true
reclaimPolicy: Delete
volumeBindingMode: Immediate
parameters:
  numberOfReplicas: "3"
  staleReplicaTimeout: "30"
  fromBackup: ""
  fsType: "xfs"
  dataLocality: "disabled"
  unmapMarkSnapChainRemoved: "ignored"

Create the new storage class using the kubectl command.

> kubectl apply -f longhorn-xfs.yaml

Update the Milvus overrides YAML file to reference the Apache Kafka storage class, as in the following example:

[...]
kafka:
  enabled: true
  persistence:
    storageClassName: longhorn-xfs

4.6.3 Upgrading Milvus #

The Milvus chart receives application updates and updates of the Helm chart templates.

New versions may include changes that require manual steps.

These steps are listed in the corresponding README file.

All Milvus dependencies are updated automatically during a Milvus upgrade.

To upgrade Milvus, identify the new version number and run the following command:

> helm upgrade --install \
  milvus oci://dp.apps.rancher.io/charts/milvus \
  -n <SUSE_AI_NAMESPACE> \
  --version <VERSION_NUMBER> \
  -f milvus_custom_overrides.yaml

4.6.4 Uninstalling Milvus #

To uninstall Milvus, run the following command:

> helm uninstall milvus -n <SUSE_AI_NAMESPACE>

4.7 Installing Ollama #

Ollama is a tool for running and managing language models locally on your computer. It offers a simple interface to download, run and interact with models without relying on cloud resources.

When installing SUSE AI, Ollama is installed by the Open WebUI installation by default. If you decide to install Ollama separately, disable its installation during the installation of Open WebUI as outlined in Example 4.6, “Open WebUI override file with Ollama installed separately”.

4.7.1 Details about the Ollama application #

Before deploying Ollama, it is important to know more about the supported configurations and documentation. The following command provides the corresponding details:

> helm show values oci://dp.apps.rancher.io/charts/ollama

Alternatively, you can also refer to the Ollama Helm chart page on the SUSE Application Collection site at https://apps.rancher.io/applications/ollama. It contains the available versions and a link to pull the Ollama container image.

4.7.2 Ollama installation procedure #

Before the installation, you need to get user access to the SUSE Application Collection and SUSE Registry, create a Kubernetes namespace, and log in to the Helm registry as described in Section 4.3, “Installation procedure”.

Create the ollama_custom_overrides.yaml file to override the values of the parent Helm chart. Refer to Section 4.7.5, “Values for the Ollama Helm chart” for more details.

Install the Ollama Helm chart using the ollama_custom_overrides.yaml override file.

> helm upgrade \
  --install ollama oci://dp.apps.rancher.io/charts/ollama \
  -n <SUSE_AI_NAMESPACE> \
  -f ollama_custom_overrides.yaml

Tip: Hugging Face models

Models downloaded from Hugging Face need to be converted before they can be used by Ollama. Refer to https://github.com/ollama/ollama/blob/main/docs/import.md for more details.

4.7.3 Uninstalling Ollama #

To uninstall Ollama, run the following command:

> helm uninstall ollama -n <SUSE_AI_NAMESPACE>

4.7.4 Upgrading Ollama #

You can upgrade Ollama to a specific version by running the following command:

> helm upgrade ollama oci://dp.apps.rancher.io/charts/ollama \
  -n <SUSE_AI_NAMESPACE> \
  --version <OLLAMA_VERSION_NUMBER> -f <ollama_custom_overrides.yaml>

If you omit the --version option, Ollama gets upgraded to the latest available version.

4.7.4.1 Upgrading from version 0.x.x to 1.x.x #

Version 1.x.x introduces the ability to load models in memory at startup.

To reflect this and avoid errors, change ollama.models to ollama.models.pull in the Ollama Helm chart values before upgrading. For example, replace the following:

[...]
ollama:
  models:
    - "gemma:2b"
    - "llama3.1"

with:

[...]
ollama:
  models:
    pull:
      - "gemma:2b"
      - "llama3.1"

Without this change, you may experience the following error when trying to upgrade from 0.x.x to 1.x.x.

coalesce.go:286: warning: cannot overwrite table with non table for
ollama.ollama.models (map[pull:[] run:[]])
Error: UPGRADE FAILED: template: ollama/templates/deployment.yaml:145:27:
executing "ollama/templates/deployment.yaml" at <.Values.ollama.models.pull>:
can't evaluate field pull in type interface {}

4.7.5 Values for the Ollama Helm chart #

To override the default values during the Helm chart installation or update, you can create an override YAML file with custom values.

Then, apply these values by specifying the path to the override file with the -f option of the helm command.

Remember to replace <SUSE_AI_NAMESPACE> with your Kubernetes namespace.
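When reusing an override file written for a 0.x.x chart, it can be worth checking whether it still uses the plain `ollama.models` list described in Section 4.7.4.1 before passing it to `helm`. The following is a rough heuristic sketch, not a YAML parser. The `uses_legacy_models` helper is hypothetical and assumes the `models:` key sits on its own line, as in the examples in this chapter.

```shell
#!/bin/sh
# uses_legacy_models FILE: succeed if the first non-blank, non-comment line
# after a "models:" key is a list item, which is the 0.x.x layout.
# Heuristic only; hypothetical helper for illustration.
uses_legacy_models() {
  awk '
    BEGIN { legacy = 0; after_models = 0 }
    /^[ \t]*models:[ \t]*$/ { after_models = 1; next }
    after_models && /^[ \t]*(#.*)?$/ { next }
    after_models {
      if ($0 ~ /^[ \t]*-[ \t]/) legacy = 1
      after_models = 0
    }
    END { exit (legacy ? 0 : 1) }
  ' "$1"
}

# Warn if the override file in the current directory uses the old layout.
if [ -f ollama_custom_overrides.yaml ] && uses_legacy_models ollama_custom_overrides.yaml; then
  echo "ollama.models is a plain list; move the entries under ollama.models.pull" >&2
fi
```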

Ollama can run optimized for NVIDIA GPUs if the following conditions are fulfilled:

The NVIDIA driver and NVIDIA GPU Operator are installed as described in Installing NVIDIA GPU Drivers on SLES or Installing NVIDIA GPU Drivers on SUSE Linux Micro.

The workloads are set to run on NVIDIA-enabled nodes as described in https://documentation.suse.com/suse-ai/1.0/html/AI-deployment-intro/index.html#ai-gpu-nodes-assigning.

If you do not want to use the NVIDIA GPU, remove the gpu section from ollama_custom_overrides.yaml or disable it.

ollama:
  [...]
  gpu:
    enabled: false
    type: 'nvidia'
    number: 1

global:
  imagePullSecrets:
  - application-collection
ingress:
  enabled: false
defaultModel: "gemma:2b"
ollama:
  models:
    pull:
      - "gemma:2b"
      - "llama3.1"
    run:
      - "gemma:2b"
      - "llama3.1"
  gpu:
    enabled: true
    type: 'nvidia'
    number: 1
    nvidiaResource: "nvidia.com/gpu"
persistentVolume: # (1)
  enabled: true
  storageClass: local-path # (2)

(1) Without the

(2) Use

ollama:
  models:
    pull:
      - llama2
    run:
      - llama2
persistentVolume:
  enabled: true
  storageClass: local-path # (1)
ingress:
  enabled: true
  hosts:
  - host: <OLLAMA_API_URL>
    paths:
      - path: /
        pathType: Prefix

(1) Use

| Key | Type | Default | Description |
|---|---|---|---|
| affinity | object | {} | Affinity for pod assignment |
| autoscaling.enabled | bool | false | Enable autoscaling |
| autoscaling.maxReplicas | int | 100 | Number of maximum replicas |
| autoscaling.minReplicas | int | 1 | Number of minimum replicas |
| autoscaling.targetCPUUtilizationPercentage | int | 80 | CPU usage to target replica |
| extraArgs | list | [] | Additional arguments on the output Deployment definition. |
| extraEnv | list | [] | Additional environment variables on the output Deployment definition. |
| fullnameOverride | string | "" | String to fully override template |
| global.imagePullSecrets | list | [] | Global override for container image registry pull secrets |
| global.imageRegistry | string | "" | Global override for container image registry |
| hostIPC | bool | false | Use the host’s IPC namespace |
| hostNetwork | bool | false | Use the host’s network namespace |
| hostPID | bool | false | Use the host’s PID namespace. |
| image.pullPolicy | string | "IfNotPresent" | Image pull policy to use for the Ollama container |
| image.registry | string | "dp.apps.rancher.io" | Image registry to use for the Ollama container |
| image.repository | string | "containers/ollama" | Image repository to use for the Ollama container |
| image.tag | string | "0.3.6" | Image tag to use for the Ollama container |
| imagePullSecrets | list | [] | Docker registry secret names as an array |
| ingress.annotations | object | {} | Additional annotations for the Ingress resource |
| ingress.className | string | "" | IngressClass that is used to implement the Ingress (Kubernetes 1.18+) |
| ingress.enabled | bool | false | Enable Ingress controller resource |
| ingress.hosts[0].host | string | "ollama.local" | |
| ingress.hosts[0].paths[0].path | string | "/" | |
| ingress.hosts[0].paths[0].pathType | string | "Prefix" | |
| ingress.tls | list | [] | The TLS configuration for host names to be covered with this Ingress record |
| initContainers | list | [] | Init containers to add to the pod |
| knative.containerConcurrency | int | 0 | Knative service container concurrency |
| knative.enabled | bool | false | Enable Knative integration |
| knative.idleTimeoutSeconds | int | 300 | Knative service idle timeout seconds |
| knative.responseStartTimeoutSeconds | int | 300 | Knative service response start timeout seconds |
| knative.timeoutSeconds | int | 300 | Knative service timeout seconds |
| livenessProbe.enabled | bool | true | Enable livenessProbe |
| livenessProbe.failureThreshold | int | 6 | Failure threshold for livenessProbe |
| livenessProbe.initialDelaySeconds | int | 60 | Initial delay seconds for livenessProbe |
| livenessProbe.path | string | "/" | Request path for livenessProbe |
| livenessProbe.periodSeconds | int | 10 | Period seconds for livenessProbe |
| livenessProbe.successThreshold | int | 1 | Success threshold for livenessProbe |
| livenessProbe.timeoutSeconds | int | 5 | Timeout seconds for livenessProbe |
| nameOverride | string | "" | String to partially override template (maintains the release name) |
| nodeSelector | object | {} | Node labels for pod assignment |
| ollama.gpu.enabled | bool | false | Enable GPU integration |
| ollama.gpu.number | int | 1 | Specify the number of GPUs |
| ollama.gpu.nvidiaResource | string | "nvidia.com/gpu" | Only for NVIDIA cards; change to |
| ollama.gpu.type | string | "nvidia" | GPU type: 'nvidia' or 'amd'. If 'ollama.gpu.enabled' is enabled, the default value is 'nvidia'. If set to 'amd', this adds the 'rocm' suffix to the image tag if 'image.tag' is not overridden. This is because AMD and CPU/CUDA are different images. |
| ollama.insecure | bool | false | Add insecure flag for pulling at container startup |
| ollama.models | list | [] | List of models to pull at container startup. The more you add, the longer the container takes to start if models are not present models: - llama2 - mistral |
| ollama.mountPath | string | "" | Override ollama-data volume mount path, default: "/root/.ollama" |
| persistentVolume.accessModes | list | ["ReadWriteOnce"] | Ollama server data Persistent Volume access modes. Must match those of existing PV or dynamic provisioner, see https://kubernetes.io/docs/concepts/storage/persistent-volumes/. |
| persistentVolume.annotations | object | {} | Ollama server data Persistent Volume annotations |
| persistentVolume.enabled | bool | false | Enable persistence using PVC |
| persistentVolume.existingClaim | string | "" | If you want to bring your own PVC for persisting Ollama state, pass the name of the created + ready PVC here. If set, this Chart does not create the default PVC. Requires |
| persistentVolume.size | string | "30Gi" | Ollama server data Persistent Volume size |
| persistentVolume.storageClass | string | "" | If persistentVolume.storageClass is present, and is set to either a dash ('-') or empty string (''), dynamic provisioning is disabled. Otherwise, the storageClassName for persistent volume claim is set to the given value specified by persistentVolume.storageClass. If persistentVolume.storageClass is absent, the default storage class is used for dynamic provisioning whenever possible. See https://kubernetes.io/docs/concepts/storage/storage-classes/ for more details. |
| persistentVolume.subPath | string | "" | Subdirectory of Ollama server data Persistent Volume to mount. Useful if the volume’s root directory is not empty. |
| persistentVolume.volumeMode | string | "" | Ollama server data Persistent Volume Binding Mode. If empty (the default) or set to null, no volumeBindingMode specification is set, choosing the default mode. |
| persistentVolume.volumeName | string | "" | Ollama server Persistent Volume name. It can be used to force-attach the created PVC to a specific PV. |
| podAnnotations | object | {} | Map of annotations to add to the pods |
| podLabels | object | {} | Map of labels to add to the pods |
| podSecurityContext | object | {} | Pod Security Context |
| readinessProbe.enabled | bool | true | Enable readinessProbe |
| readinessProbe.failureThreshold | int | 6 | Failure threshold for readinessProbe |
| readinessProbe.initialDelaySeconds | int | 30 | Initial delay seconds for readinessProbe |
| readinessProbe.path | string | "/" | Request path for readinessProbe |
| readinessProbe.periodSeconds | int | 5 | Period seconds for readinessProbe |
| readinessProbe.successThreshold | int | 1 | Success threshold for readinessProbe |
| readinessProbe.timeoutSeconds | int | 3 | Timeout seconds for readinessProbe |
| replicaCount | int | 1 | Number of replicas |
| resources.limits | object | {} | Pod limit |
| resources.requests | object | {} | Pod requests |
| runtimeClassName | string | "" | Specify runtime class |
| securityContext | object | {} | Container Security Context |
| service.annotations | object | {} | Annotations to add to the service |
| service.nodePort | int | 31434 | Service node port when service type is 'NodePort' |
| service.port | int | 11434 | Service port |
| service.type | string | "ClusterIP" | Service type |
| serviceAccount.annotations | object | {} | Annotations to add to the service account |
| serviceAccount.automount | bool | true | Whether to automatically mount a ServiceAccount’s API credentials |
| serviceAccount.create | bool | true | Whether a service account should be created |
| serviceAccount.name | string | "" | The name of the service account to use. If not set and 'create' is 'true', a name is generated using the full name template. |
| tolerations | list | [] | Tolerations for pod assignment |
| topologySpreadConstraints | object | {} | Topology Spread Constraints for pod assignment |
| updateStrategy | object | {"type":""} | How to replace existing pods. |
| updateStrategy.type | string | "" | Can be 'Recreate' or 'RollingUpdate'; default is 'RollingUpdate' |
| volumeMounts | list | [] | Additional volumeMounts on the output Deployment definition |
| volumes | list | [] | Additional volumes on the output Deployment definition |

4.8 Installing Open WebUI #

Open WebUI is a user-friendly web interface for interacting with Large Language Models (LLMs). It supports various LLM runners, including Ollama and vLLM.

4.8.1 Details about the Open WebUI application #

Before deploying Open WebUI, it is important to know more about the supported configurations and documentation. The following command provides the corresponding details:

> helm show values oci://dp.apps.rancher.io/charts/open-webui

Alternatively, you can also refer to the Open WebUI Helm chart page on the SUSE Application Collection site at https://apps.rancher.io/applications/open-webui. It contains available versions and the link to pull the Open WebUI container image.

4.8.2 Open WebUI installation procedure #

Before the installation, you need to get user access to the SUSE Application Collection and SUSE Registry, create a Kubernetes namespace, and log in to the Helm registry as described in Section 4.3, “Installation procedure”.

An installed cert-manager. If cert-manager is not installed from previous Open WebUI releases, install it by following the steps in Section 4.4, “Installing cert-manager”.

Create the owui_custom_overrides.yaml file to override the values of the parent Helm chart. The file contains URLs for Milvus and Ollama, and specifies whether a stand-alone Ollama deployment is used or whether Ollama is installed as part of the Open WebUI installation. Find more details in Section 4.8.5, “Examples of Open WebUI Helm chart override files”. For a list of all installation options with examples, refer to Section 4.8.6, “Values for the Open WebUI Helm chart”.

Install the Open WebUI Helm chart using the owui_custom_overrides.yaml override file.

> helm upgrade --install \
  open-webui oci://dp.apps.rancher.io/charts/open-webui \
  -n <SUSE_AI_NAMESPACE> \
  -f <owui_custom_overrides.yaml>

4.8.3 Upgrading Open WebUI #

To upgrade Open WebUI to a specific new version, run the following command:

> helm upgrade --install open-webui \
  oci://dp.apps.rancher.io/charts/open-webui \
  -n <SUSE_AI_NAMESPACE> \
  --version <VERSION_NUMBER> \
  -f <owui_custom_overrides.yaml>

To upgrade Open WebUI to the latest version, run the following command:

> helm upgrade --install open-webui \
  oci://dp.apps.rancher.io/charts/open-webui \
  -n <SUSE_AI_NAMESPACE> \
  -f <owui_custom_overrides.yaml>

4.8.4 Uninstalling Open WebUI #

To uninstall Open WebUI, run the following command:

> helm uninstall open-webui -n <SUSE_AI_NAMESPACE>

4.8.5 Examples of Open WebUI Helm chart override files #

To override the default values during the Helm chart installation or update, you can create an override YAML file with custom values.

Then, apply these values by specifying the path to the override file with the -f option of the helm command.

Remember to replace <SUSE_AI_NAMESPACE> with your Kubernetes namespace.
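How an override file combines with the chart defaults can be sketched in a few lines. The following Python snippet is an illustration only and not Helm's implementation. It assumes Helm's usual behavior: maps are merged key by key, while lists and scalar values from the override replace the default as a whole. The function name merge_values and the sample dictionaries are hypothetical, and special cases such as deleting a key with null are left out.

```python
from copy import deepcopy


def merge_values(defaults: dict, overrides: dict) -> dict:
    """Apply overrides to defaults: maps merge recursively,
    lists and scalars from the override replace the default value."""
    merged = deepcopy(defaults)
    for key, value in overrides.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_values(merged[key], value)
        else:
            merged[key] = deepcopy(value)
    return merged


# Illustrative subset of chart defaults (hypothetical sample, not the full chart).
chart_defaults = {
    "persistence": {"enabled": True, "size": "2Gi", "storageClass": ""},
    "extraEnvVars": [{"name": "OPENAI_API_KEY", "value": "0p3n-w3bu!"}],
}

# Illustrative content of an override file.
override_file = {
    "persistence": {"storageClass": "local-path"},
    "extraEnvVars": [{"name": "WEBUI_NAME", "value": "SUSE AI"}],
}

effective = merge_values(chart_defaults, override_file)
print(effective["persistence"])   # merged map: defaults kept, storageClass overridden
print(effective["extraEnvVars"])  # list replaced as a whole
```

In this sketch, persistence.size keeps its default, but the extraEnvVars list is replaced completely. This may be why the first override file below sets OPENAI_API_KEY explicitly instead of relying on the chart default.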

The following override file installs Ollama during the Open WebUI installation.

global:
  imagePullSecrets:
  - application-collection
ollamaUrls:
- http://open-webui-ollama.<SUSE_AI_NAMESPACE>.svc.cluster.local:11434
persistence:
  enabled: true
  storageClass: local-path # (1)
ollama:
  enabled: true
  ingress:
    enabled: false
  defaultModel: "gemma:2b"
  ollama:
    models: # (2)
      pull:
        - "gemma:2b"
        - "llama3.1"
    gpu: # (3)
      enabled: true
      type: 'nvidia'
      number: 1
  persistentVolume: # (4)
    enabled: true
    storageClass: local-path
pipelines:
  enabled: true
  persistence:
    storageClass: local-path
  extraEnvVars: # (5)
  - name: PIPELINES_URLS # (6)
    value: "https://raw.githubusercontent.com/SUSE/suse-ai-observability-extension/refs/heads/main/integrations/oi-filter/suse_ai_filter.py"
  - name: OTEL_SERVICE_NAME # (7)
    value: "Open WebUI"
  - name: OTEL_EXPORTER_HTTP_OTLP_ENDPOINT # (8)
    value: "http://opentelemetry-collector.suse-observability.svc.cluster.local:4318"
  - name: PRICING_JSON # (9)
    value: "https://raw.githubusercontent.com/SUSE/suse-ai-observability-extension/refs/heads/main/integrations/oi-filter/pricing.json"
ingress:
  enabled: true
  class: ""
  annotations:
    nginx.ingress.kubernetes.io/ssl-redirect: "true"
    nginx.ingress.kubernetes.io/proxy-body-size: "1024m"
  host: suse-ollama-webui # (10)
  tls: true
extraEnvVars:
- name: DEFAULT_MODELS # (11)
  value: "gemma:2b"
- name: DEFAULT_USER_ROLE
  value: "user"
- name: WEBUI_NAME
  value: "SUSE AI"
- name: GLOBAL_LOG_LEVEL
  value: INFO
- name: RAG_EMBEDDING_MODEL
  value: "sentence-transformers/all-MiniLM-L6-v2"
- name: VECTOR_DB
  value: "milvus"
- name: MILVUS_URI
  value: http://milvus.<SUSE_AI_NAMESPACE>.svc.cluster.local:19530
- name: INSTALL_NLTK_DATASETS # (12)
  value: "true"
- name: OMP_NUM_THREADS
  value: "1"
- name: OPENAI_API_KEY # (13)
  value: "0p3n-w3bu!"

(1) Use [...]

(2) Specifies that two large language models (LLMs) will be loaded in Ollama when the container starts.

(3) Enables GPU support for Ollama. The [...]

(4) Without the [...]

(5) The environment variables that you are making available for the pipeline’s runtime container.

(6) A list of pipeline URLs to be downloaded and installed by default. Individual URLs are separated by a semicolon.

(7) The service name that appears in traces and topological representations in SUSE Observability.

(8) The endpoint for the OpenTelemetry collector. Make sure to use the HTTP port of your collector.

(9) A file for the model multipliers in cost estimation. You can customize it to match your actual infrastructure experimentally.

(10) Specifies the host name for the Open WebUI interface.

(11) Specifies the default LLM for Ollama.

(12) Installs the Natural Language Toolkit (NLTK) datasets for Ollama. Refer to https://www.nltk.org/index.html for licensing information.

(13) API key value for communication between Open WebUI and Open WebUI Pipelines. The default value is '0p3n-w3bu!'.

The following override file installs Ollama separately from the Open WebUI installation.

global:
  imagePullSecrets:
  - application-collection
ollamaUrls:
- http://ollama.<SUSE_AI_NAMESPACE>.svc.cluster.local:11434
persistence:
  enabled: true
  storageClass: local-path # (1)
ollama:
  enabled: false
pipelines:
  enabled: false
  persistence:
    storageClass: local-path # (2)
ingress:
  enabled: true
  class: ""
  annotations:
    nginx.ingress.kubernetes.io/ssl-redirect: "true"
  host: suse-ollama-webui
  tls: true
extraEnvVars:
- name: DEFAULT_MODELS # (3)
  value: "gemma:2b"
- name: DEFAULT_USER_ROLE
  value: "user"
- name: WEBUI_NAME
  value: "SUSE AI"
- name: GLOBAL_LOG_LEVEL
  value: INFO
- name: RAG_EMBEDDING_MODEL
  value: "sentence-transformers/all-MiniLM-L6-v2"
- name: VECTOR_DB
  value: "milvus"
- name: MILVUS_URI
  value: http://milvus.<SUSE_AI_NAMESPACE>.svc.cluster.local:19530
- name: ENABLE_OTEL # (4)
  value: "true"
- name: OTEL_EXPORTER_OTLP_ENDPOINT # (5)
  value: http://opentelemetry-collector.observability.svc.cluster.local:4317 # (6)
- name: OMP_NUM_THREADS
  value: "1"

(1) Use [...]

(2) Use [...]

(3) Specifies the default LLM for Ollama.

(4) This value is optional. It is required only to receive telemetry data from Open WebUI.

(5) This value is optional. It is required only to receive telemetry data from Open WebUI.

(6) The URL of the OpenTelemetry Collector installed by the user.

The following override file installs Ollama separately and enables Open WebUI pipelines. This simple filter adds a limit to the number of question and answer turns during the LLM chat.

Pipelines normally require additional configuration provided either via environment variables or specified in the Open WebUI Web UI.

global:
  imagePullSecrets:
  - application-collection
ollamaUrls:
- http://ollama.<SUSE_AI_NAMESPACE>.svc.cluster.local:11434
persistence:
  enabled: true
  storageClass: local-path
ollama:
  enabled: false
pipelines:
  enabled: true
  persistence:
    storageClass: local-path
  extraEnvVars:
  - name: PIPELINES_URLS # (1)
    value: "https://raw.githubusercontent.com/SUSE/suse-ai-observability-extension/refs/heads/main/integrations/oi-filter/conversation_turn_limit_filter.py"
ingress:
  enabled: true
  class: ""
  annotations:
    nginx.ingress.kubernetes.io/ssl-redirect: "true"
  host: suse-ollama-webui
  tls: true
[...]

(1) A list of pipeline URLs to be downloaded and installed by default. Individual URLs are separated by a semicolon.
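The semicolon-separated format of PIPELINES_URLS can be illustrated with a short Python sketch. It joins the two pipeline URLs used in the examples of this section into one value. The helper names build_pipelines_urls and as_env_var are hypothetical and not part of Open WebUI or the Helm chart.

```python
# The two pipeline URLs that appear in the override file examples of this section.
PIPELINE_URLS = [
    "https://raw.githubusercontent.com/SUSE/suse-ai-observability-extension/refs/heads/main/integrations/oi-filter/suse_ai_filter.py",
    "https://raw.githubusercontent.com/SUSE/suse-ai-observability-extension/refs/heads/main/integrations/oi-filter/conversation_turn_limit_filter.py",
]


def build_pipelines_urls(urls: list[str]) -> str:
    """Join pipeline URLs into a single semicolon-separated string."""
    cleaned = [url.strip() for url in urls if url.strip()]
    for url in cleaned:
        # A semicolon inside a URL would be read as a separator.
        if ";" in url:
            raise ValueError(f"URL must not contain a semicolon: {url}")
    return ";".join(cleaned)


def as_env_var(urls: list[str]) -> dict:
    """Build an entry in the shape used by pipelines.extraEnvVars."""
    return {"name": "PIPELINES_URLS", "value": build_pipelines_urls(urls)}


print(as_env_var(PIPELINE_URLS))
```

The resulting value can be pasted into the pipelines.extraEnvVars entry of the override file to load both filters.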

The following example shows how to extend the extraEnvVars section of the Open WebUI override file to connect to vLLM.

Replace SUSE_AI_NAMESPACE with your Kubernetes namespace.

extraEnvVars:
[...]
- name: OPENAI_API_BASE_URL
  value: "http://vllm-router-service.<SUSE_AI_NAMESPACE>.svc.cluster.local:80/v1"
- name: OPENAI_API_KEY
  value: "dummy" # (1)

(1) Open WebUI will require you to provide the OpenAI API key.

If the Open WebUI installation has pipelines enabled besides the vLLM deployment, you can extend the extraEnvVars section as follows.

extraEnvVars:
[...]
- name: OPENAI_API_BASE_URLS
  value: "http://open-webui-pipelines.<SUSE_AI_NAMESPACE>.svc.cluster.local:9099;http://vllm-router-service.<SUSE_AI_NAMESPACE>.svc.cluster.local:80/v1"
- name: OPENAI_API_KEYS
  value: "0p3n-w3bu!;dummy"

You can install the open-webui-pipelines service as a stand-alone deployment, independent of the Open WebUI chart.

To install open-webui-pipelines as a stand-alone component, use the following command:

> helm upgrade --install open-webui-pipelines \
  oci://dp.apps.rancher.io/charts/open-webui-pipelines \
  -n <SUSE_AI_NAMESPACE> \
  -f open-webui-pipelines-values.yaml

The following is an example of the open-webui-pipelines-values.yaml override file.

global:
  imagePullSecrets:
  - application-collection
image:
  registry: dp.apps.rancher.io
  repository: containers/open-webui-pipelines
  tag: <IMAGE_TAG>
  pullPolicy: IfNotPresent
persistence:
  enabled: true
  storageClass: local-path
  size: 10Gi

4.8.6 Values for the Open WebUI Helm chart #

To override the default values during the Helm chart installation or update, you can create an override YAML file with custom values.

Then, apply these values by specifying the path to the override file with the -f option of the helm command.

Remember to replace <SUSE_AI_NAMESPACE> with your Kubernetes namespace.

| Key | Type | Default | Description |
|---|---|---|---|
| affinity | object | {} | Affinity for pod assignment |
| annotations | object | {} | |
| cert-manager.enabled | bool | true | |
| clusterDomain | string | "cluster.local" | Value of cluster domain |
| containerSecurityContext | object | {} | Configure container security context, see https://kubernetes.io/docs/tasks/configure-pod-container/security-context/#set-the-security-context-for-a-containe. |
| extraEnvVars | list | [{"name":"OPENAI_API_KEY", "value":"0p3n-w3bu!"}] | Environment variables added to the Open WebUI deployment. Most up-to-date environment variables can be found in Environment Variable Configuration. |
| extraEnvVars[0] | object | {"name":"OPENAI_API_KEY","value":"0p3n-w3bu!"} | Default API key value for Pipelines. It should be updated in a production deployment and changed to the required API key if not using Pipelines. |
| global.imagePullSecrets | list | [] | Global override for container image registry pull secrets |
| global.imageRegistry | string | "" | Global override for container image registry |
| global.tls.additionalTrustedCAs | bool | false | |
| global.tls.issuerName | string | "suse-private-ai" | |
| global.tls.letsEncrypt.email | string | | |
| global.tls.letsEncrypt.environment | string | "staging" | |
| global.tls.letsEncrypt.ingress.class | string | "" | |
| global.tls.source | string | "suse-private-ai" | The source of Open WebUI TLS keys, see Section 4.8.6.1, “TLS sources”. |
| image.pullPolicy | string | "IfNotPresent" | Image pull policy to use for the Open WebUI container |
| image.registry | string | "dp.apps.rancher.io" | Image registry to use for the Open WebUI container |
| image.repository | string | "containers/open-webui" | Image repository to use for the Open WebUI container |
| image.tag | string | "0.3.32" | Image tag to use for the Open WebUI container |
| imagePullSecrets | list | [] | Configure imagePullSecrets to use private registry, see https://kubernetes.io/docs/tasks/configure-pod-container/pull-image-private-registry/. |
| ingress.annotations | object | {"nginx.ingress.kubernetes.io/ssl-redirect":"true"} | Use appropriate annotations for your Ingress controller, such as [...] |
| ingress.class | string | "" | |
| ingress.enabled | bool | true | |
| ingress.existingSecret | string | "" | |
| ingress.host | string | "" | |
| ingress.tls | bool | true | |
| nameOverride | string | "" | |
| nodeSelector | object | {} | Node labels for pod assignment |
| ollama.enabled | bool | true | Automatically install Ollama Helm chart from oci://dp.apps.rancher.io/charts/ollama. Configure the following Helm values. |
| ollama.fullnameOverride | string | "open-webui-ollama" | If enabling embedded Ollama, update fullnameOverride to your desired Ollama name value, or else it will use the default ollama.name value from the Ollama chart. |
| ollamaUrls | list | [] | A list of Ollama API endpoints. These can be added instead of automatically installing the Ollama Helm chart, or in addition to it. |
| openaiBaseApiUrl | string | "" | OpenAI base API URL to use. Defaults to the Pipelines service endpoint when Pipelines are enabled, or to [...] |
| persistence.accessModes | list | ["ReadWriteOnce"] | If using multiple replicas, you must update accessModes to ReadWriteMany. |
| persistence.annotations | object | {} | |
| persistence.enabled | bool | true | |
| persistence.existingClaim | string | "" | Use existingClaim to reuse an existing Open WebUI PVC instead of creating a new one. |
| persistence.selector | object | {} | |
| persistence.size | string | "2Gi" | |
| persistence.storageClass | string | "" | |
| pipelines.enabled | bool | false | Automatically install Pipelines chart to extend Open WebUI functionality using Pipelines. |
| pipelines.extraEnvVars | list | [] | This section can be used to pass the required environment variables to your pipelines (such as the Langfuse host name). |
| podAnnotations | object | {} | |
| podSecurityContext | object | {} | Configure pod security context, see https://kubernetes.io/docs/tasks/configure-pod-container/security-context/#set-the-security-context-for-a-containe. |
| replicaCount | int | 1 | |
| resources | object | {} | |
| service | object | {"annotations":{},"containerPort":8080, "labels":{},"loadBalancerClass":"", "nodePort":"","port":80,"type":"ClusterIP"} | Service values to expose Open WebUI pods to cluster |
| tolerations | list | [] | Tolerations for pod assignment |
| topologySpreadConstraints | list | [] | Topology Spread Constraints for pod assignment |

4.8.6.1 TLS sources #

There are three recommended options where Open WebUI can obtain TLS certificates for secure communication.

- Self-Signed TLS certificate

  This is the default method. You need to install cert-manager on the cluster to issue and maintain the certificates. This method generates a CA and signs the Open WebUI certificate using the CA. cert-manager then manages the signed certificate. For this method, use the following Helm chart option: global.tls.source=suse-private-ai

- Let’s Encrypt

  This method also uses cert-manager, but it is combined with a special issuer for Let’s Encrypt that performs all actions, including request and validation, to get the Let’s Encrypt certificate issued. This configuration uses HTTP validation (HTTP-01) and therefore the load balancer must have a public DNS record and be accessible from the Internet. For this method, use the following Helm chart option: global.tls.source=letsEncrypt

- Provide your own certificate

  This method allows you to bring your own signed certificate to secure the HTTPS traffic. In this case, you must upload this certificate and associated key as PEM-encoded files named tls.crt and tls.key. For this method, use the following Helm chart option: global.tls.source=secret

4.9 Installing vLLM #

vLLM is an open-source high-performance inference and serving engine for large language models (LLMs). It is designed to maximize throughput and reduce latency by using an efficient memory management system that handles dynamic batching and streaming outputs. In short, vLLM makes running LLMs cheaper and faster in production.

Deploying vLLM on Kubernetes is a scalable and efficient way to serve machine learning models. This guide walks you through deploying vLLM using its Helm chart, which is part of AI Library. The Helm chart deploys the full vLLM production stack and enables you to run optimized LLM inference workloads on NVIDIA GPU in your Kubernetes cluster. It consists of the following components:

Serving Engine runs the model inference.

Router handles OpenAI-compatible API requests.

LMCache (Optional) improves caching efficiency.

CacheServer (Optional) is a distributed KV cache back-end.

4.9.1 Details about the vLLM application #

Before deploying vLLM, it is important to know more about the supported configurations and documentation. The following command provides the corresponding details:

> helm show values oci://dp.apps.rancher.io/charts/vllm

Alternatively, you can also refer to the vLLM Helm chart page on the SUSE Application Collection site at https://apps.rancher.io/applications/vllm. It contains vLLM dependencies, available versions and the link to pull the vLLM container image.

4.9.2 vLLM installation procedure #

Before the installation, you need to get user access to the SUSE Application Collection and SUSE Registry, create a Kubernetes namespace, and log in to the Helm registry as described in Section 4.3, “Installation procedure”.

NVIDIA GPUs must be available in your Kubernetes cluster to successfully deploy and run vLLM.

The current release of SUSE AI vLLM does not support Ray and LoraController.

Create a vllm_custom_overrides.yaml file to override the default values of the Helm chart. Find examples of override files in Section 4.9.6, “Examples of vLLM Helm chart override files”.

After saving the override file as vllm_custom_overrides.yaml, apply its configuration with the following command.

> helm upgrade --install \
  vllm oci://dp.apps.rancher.io/charts/vllm \
  -n <SUSE_AI_NAMESPACE> \
  -f <vllm_custom_overrides.yaml>

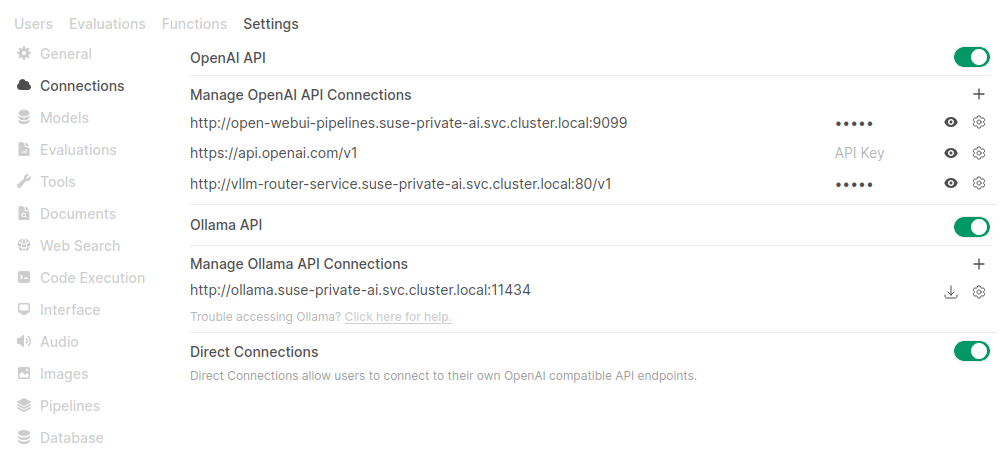

4.9.3 Integrating vLLM with Open WebUI #

You can integrate vLLM with Open WebUI either by using the Open WebUI Web user interface, or by updating the Open WebUI override file during the Open WebUI deployment (see Example 4.8, “Open WebUI override file with a connection to vLLM”).

Integrating vLLM with Open WebUI via the Web user interface.

You must have Open WebUI administrator privileges to access configuration screens or settings mentioned in this section.

In the bottom left of the Open WebUI window, click your avatar icon to open the user menu and select .

Click the tab and select from the left menu.

In the section, add a new connection URL to the vLLM router service, for example:

http://vllm-router-service.<SUSE_AI_NAMESPACE>.svc.cluster.local:80/v1

Confirm with .

Figure 4.2: Adding a vLLM connection to Open WebUI #

4.9.4 Upgrading vLLM #

The vLLM chart receives application updates and updates of the Helm chart templates.

New versions may include changes that require manual steps.

These steps are listed in the corresponding README file.

All vLLM dependencies are updated automatically during a vLLM upgrade.

To upgrade vLLM, identify the new version number and run the following command:

> helm upgrade --install \
  vllm oci://dp.apps.rancher.io/charts/vllm \
  -n <SUSE_AI_NAMESPACE> \
  --version <VERSION_NUMBER> \
  -f <vllm_custom_overrides.yaml>

If you omit the --version option, vLLM gets upgraded to the latest available version.

The helm upgrade command performs a rolling update on Deployments or StatefulSets with the following conditions:

The old pod stays running until the new pod passes readiness checks.

If the cluster is already at GPU capacity, the new pod cannot start because there is no GPU left to schedule it. This requires patching the deployment to use the Recreate update strategy. The following commands identify the vLLM deployment name and patch its deployment.

> kubectl get deployments -n <SUSE_AI_NAMESPACE>
> kubectl patch deployment <VLLM_DEPLOYMENT_NAME> \
  -n <SUSE_AI_NAMESPACE> \
  -p '{"spec": {"strategy": {"type": "Recreate", "rollingUpdate": null}}}'
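The reasoning behind the Recreate strategy can be sketched in Python. The snippet below is a simplified illustration that assumes each vLLM pod requests a fixed number of GPUs and that no other workloads compete for them. The function names and the GPU counts are hypothetical. The second function only reproduces the JSON patch body passed to kubectl patch above.

```python
import json


def rolling_update_can_schedule(total_gpus: int, gpus_in_use: int, gpus_per_pod: int) -> bool:
    """During a rolling update, the new pod starts while the old pod is still
    running, so it needs free GPUs in addition to those already in use."""
    return total_gpus - gpus_in_use >= gpus_per_pod


def recreate_strategy_patch() -> str:
    """Build the patch body that switches a Deployment to the Recreate strategy."""
    patch = {"spec": {"strategy": {"type": "Recreate", "rollingUpdate": None}}}
    return json.dumps(patch)


# Hypothetical cluster with a single GPU that the running vLLM pod already uses.
if not rolling_update_can_schedule(total_gpus=1, gpus_in_use=1, gpus_per_pod=1):
    print("Rolling update would stall; patch the deployment with:")
    print(recreate_strategy_patch())
```

With Recreate, the old pod is terminated before the new one is created, which frees the GPU but causes a short interruption of service during the upgrade.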

4.9.5 Uninstalling vLLM #

To uninstall vLLM, run the following command:

> helm uninstall vllm -n <SUSE_AI_NAMESPACE>

4.9.6 Examples of vLLM Helm chart override files #

To override the default values during the Helm chart installation or update, you can create an override YAML file with custom values.

Then, apply these values by specifying the path to the override file with the -f option of the helm command.

Remember to replace <SUSE_AI_NAMESPACE> with your Kubernetes namespace.

The following override file installs vLLM using a model that is publicly available.

global:
  imagePullSecrets:
  - application-collection
servingEngineSpec:
  modelSpec:
  - name: "phi3-mini-4k"
    registry: "dp.apps.rancher.io"
    repository: "containers/vllm-openai"
    tag: "0.13.0"
    imagePullPolicy: "IfNotPresent"
    modelURL: "microsoft/Phi-3-mini-4k-instruct"
    replicaCount: 1
    requestCPU: 6
    requestMemory: "16Gi"
    requestGPU: 1

Pulling the images can take a long time. You can monitor the status of the vLLM installation by running the following command:

> kubectl get pods -n <SUSE_AI_NAMESPACE>
NAME                                            READY   STATUS    RESTARTS   AGE
[...]
vllm-deployment-router-7588bf995c-5jbkf         1/1     Running   0          8m9s
vllm-phi3-mini-4k-deployment-vllm-79d6fdc-tx7   1/1     Running   0          8m9s

Pods for the vLLM deployment should transition to the states Ready and Running.

Expose the vllm-router-service port to the host machine:

> kubectl port-forward svc/vllm-router-service \
  -n <SUSE_AI_NAMESPACE> 30080:80

Query the OpenAI-compatible API to list the available models:

> curl -o- http://localhost:30080/v1/models

Send a query to the OpenAI-compatible /completions endpoint to generate a completion for a prompt:

> curl -X POST http://localhost:30080/v1/completions \
  -H "Content-Type: application/json" \
  -d '{
    "model": "microsoft/Phi-3-mini-4k-instruct",
    "prompt": "Once upon a time,",
    "max_tokens": 10
  }'
# example output of generated completions
{
  "id": "cmpl-3dd11a3624654629a3828c37bac3edd2",
  "object": "text_completion",
  "created": 1757530703,
  "model": "microsoft/Phi-3-mini-4k-instruct",
  "choices": [
    {
      "index": 0,
      "text": " in a bustling city full of concrete and",
      "logprobs": null,
      "finish_reason": "length",
      "stop_reason": null,
      "prompt_logprobs": null
    }
  ],
  "usage": {
    "prompt_tokens": 5,
    "total_tokens": 15,
    "completion_tokens": 10,
    "prompt_tokens_details": null
  },
  "kv_transfer_params": null
}
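The same completion request can be sent from a script. The following Python sketch uses only the standard library and assumes that the port-forward to vllm-router-service on local port 30080 from the previous step is still active. The function names are hypothetical, and the response handling relies only on the fields visible in the example output above.

```python
import json
import urllib.request

# Assumes the kubectl port-forward from the previous step is running.
BASE_URL = "http://localhost:30080/v1"


def build_completion_request(model: str, prompt: str, max_tokens: int = 10) -> urllib.request.Request:
    """Prepare a POST request for the OpenAI-compatible completions endpoint."""
    body = json.dumps({"model": model, "prompt": prompt, "max_tokens": max_tokens})
    return urllib.request.Request(
        f"{BASE_URL}/completions",
        data=body.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def first_completion_text(response_body: dict) -> str:
    """Extract the generated text of the first choice from a response."""
    return response_body["choices"][0]["text"]


def main() -> None:
    """Send the request and print the generated text (requires a reachable router)."""
    request = build_completion_request("microsoft/Phi-3-mini-4k-instruct", "Once upon a time,")
    with urllib.request.urlopen(request, timeout=60) as response:
        print(first_completion_text(json.load(response)))
```

Call main() once the vLLM pods are in the Running state. Keeping the request construction separate from the network call makes it easy to inspect the payload before sending it.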

The following vLLM override file includes basic configuration options.

Access to a Hugging Face token (HF_TOKEN).

The model meta-llama/Llama-3.1-8B-Instruct from this example is a gated model that requires you to accept the agreement to access it. For more information, see https://huggingface.co/meta-llama/Llama-3.1-8B-Instruct.

Update the storageClass: entry for each modelSpec.

# vllm_custom_overrides.yaml
global:
  imagePullSecrets:
  - application-collection
servingEngineSpec:
  modelSpec:
  - name: "llama3" # (1)
    registry: "dp.apps.rancher.io" # (2)
    repository: "containers/vllm-openai" # (3)
    tag: "0.13.0" # (4)
    imagePullPolicy: "IfNotPresent"
    modelURL: "meta-llama/Llama-3.1-8B-Instruct" # (5)
    replicaCount: 1 # (6)
    requestCPU: 10 # (7)
    requestMemory: "16Gi" # (8)
    requestGPU: 1 # (9)
    storageClass: <STORAGE_CLASS>
    pvcStorage: "50Gi" # (10)
    pvcAccessMode:
      - ReadWriteOnce
    vllmConfig:
      enableChunkedPrefill: false # (11)
      enablePrefixCaching: false # (12)
      maxModelLen: 4096 # (13)
      dtype: "bfloat16" # (14)
      extraArgs: ["--disable-log-requests", "--gpu-memory-utilization", "0.8"] # (15)
    hf_token: <HF_TOKEN> # (16)

(1) The unique identifier for your model deployment.

(2) The Docker image registry containing the model’s serving engine image.

(3) The Docker image repository containing the model’s serving engine image.

(4) The version of the model image to use.

(5) The URL pointing to the model on Hugging Face or another hosting service.

(6) The number of replicas for the deployment, which allows scaling for load.

(7) The amount of CPU resources requested per replica.

(8) Memory allocation for the deployment. Sufficient memory is required to load the model.

(9) The number of GPUs to allocate for the deployment.

(10) The Persistent Volume Claim (PVC) size for model storage.

(11) Controls chunked prefill, which splits the processing of long prompts into smaller chunks.

(12) Enables caching of prompt prefixes to speed up inference for repeated prompts.

(13) The maximum sequence length the model can handle.

(14) The data type for model weights, such as [...]

(15) Additional command-line arguments for vLLM, such as disabling request logging or setting GPU memory utilization.

(16) Your Hugging Face token for accessing gated models. Replace [...]
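To see how dtype and --gpu-memory-utilization relate to the resources a model needs, a rough back-of-the-envelope estimate can help. The Python sketch below is an approximation under stated assumptions: the model has roughly 8 billion parameters, bfloat16 uses 2 bytes per parameter, and the GPU has 24 GB of memory. The GPU size is a hypothetical value that does not come from this guide, and the function names are illustrative.

```python
# Bytes needed to store one parameter for common weight data types.
BYTES_PER_PARAM = {"float32": 4, "bfloat16": 2, "float16": 2}


def weight_size_gb(num_params: float, dtype: str) -> float:
    """Approximate size of the model weights in GB (10^9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def vllm_gpu_budget_gb(gpu_memory_gb: float, utilization: float) -> float:
    """GPU memory that vLLM may use, given the --gpu-memory-utilization fraction."""
    return gpu_memory_gb * utilization


weights = weight_size_gb(8e9, "bfloat16")   # roughly 8 billion parameters (assumption)
budget = vllm_gpu_budget_gb(24, 0.8)        # hypothetical 24 GB GPU
print(f"weights: ~{weights:.1f} GB, vLLM budget: ~{budget:.1f} GB")
print(f"left for KV cache and activations: ~{budget - weights:.1f} GB")
```

The estimate ignores runtime overhead, so treat it as a starting point for sizing requestMemory, pvcStorage and maxModelLen rather than as a guarantee.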

Prefetching models to a Persistent Volume Claim (PVC) prevents repeated downloads from Hugging Face during pod startup.

The process involves creating a PVC and a job to fetch the model.

This PVC is mounted at /models, where the prefetch job stores the model weights.

Subsequently, the vLLM modelURL is set to this path, which ensures that the model is loaded locally instead of being downloaded when the pod starts.

Define a PVC for model weights using the following YAML specification.

# pvc-models.yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: models-pvc
  namespace: <SUSE_AI_NAMESPACE>
spec:
  accessModes: ["ReadWriteOnce"]
  resources:
    requests:
      storage: 50Gi # Adjust size based on your model
  storageClassName: <STORAGE_CLASS>

Save it as pvc-models.yaml and apply with kubectl apply -f pvc-models.yaml.

Create a secret resource for the Hugging Face token.

> kubectl create secret -n <SUSE_AI_NAMESPACE> \
  generic huggingface-credentials \
  --from-literal=HUGGING_FACE_HUB_TOKEN=<HF_TOKEN>

Create a YAML specification for prefetching the model and save it as job-prefetch-llama3.1-8b.yaml.

# job-prefetch-llama3.1-8b.yaml
apiVersion: batch/v1
kind: Job
metadata:
  name: prefetch-llama3.1-8b
  namespace: <SUSE_AI_NAMESPACE>
spec:
  template:
    spec:
      restartPolicy: OnFailure
      containers:
      - name: hf-download
        image: python:3.10-slim
        env:
        - name: HF_TOKEN
          # The key must match the key name used when creating the secret.
          valueFrom: { secretKeyRef: { name: huggingface-credentials, key: HUGGING_FACE_HUB_TOKEN } }
        - name: HF_HUB_ENABLE_HF_TRANSFER
          value: "1"
        - name: HF_HUB_DOWNLOAD_TIMEOUT
          value: "60"
        command: ["bash","-lc"]
        args:
        - |
          set -e
          echo "Installing Hugging Face CLI..."
          pip install "huggingface_hub[cli]"
          pip install "hf_transfer"
          echo "Logging in..."
          hf auth login --token "${HF_TOKEN}"
          echo "Downloading Llama 3.1 8B Instruct to /models/llama-3.1-8b-it ..."
          hf download meta-llama/Llama-3.1-8B-Instruct --local-dir /models/llama-3.1-8b-it
        volumeMounts:
        - name: models
          mountPath: /models
      volumes:
      - name: models
        persistentVolumeClaim:
          claimName: models-pvc

Apply the specification with the following commands:

> kubectl apply -f job-prefetch-llama3.1-8b.yaml
> kubectl -n <SUSE_AI_NAMESPACE> \
  wait --for=condition=complete job/prefetch-llama3.1-8b

Update the custom vLLM override file with support for PVC.

# vllm_custom_overrides.yaml
global:
  imagePullSecrets:
  - application-collection
servingEngineSpec:
  modelSpec:
  - name: "llama3"
    registry: "dp.apps.rancher.io"
    repository: "containers/vllm-openai"
    tag: "0.13.0"
    imagePullPolicy: "IfNotPresent"
    modelURL: "/models/llama-3.1-8b-it"
    replicaCount: 1
    requestCPU: 10
    requestMemory: "16Gi"
    requestGPU: 1
    extraVolumes:
    - name: models-pvc
      persistentVolumeClaim:
        claimName: models-pvc # (1)
    extraVolumeMounts:
    - name: models-pvc
      mountPath: /models # (2)
    vllmConfig:
      maxModelLen: 4096
    hf_token: <HF_TOKEN>

(1) Specify your PVC name.

(2) The mount path must match the base directory of the servingEngineSpec.modelSpec.modelURL value specified above.

Save it as vllm_custom_overrides.yaml. Because this is a Helm values file and not a Kubernetes manifest, apply it with the helm upgrade --install command described in Section 4.9.2, “vLLM installation procedure”.

The following example lists the content of the mounted PVC for a pod.

> kubectl exec -it vllm-llama3-deployment-vllm-858bd967bd-w26f7 \
  -n <SUSE_AI_NAMESPACE> -- ls -l /models
drwxr-xr-x 1 root root 608 Aug 22 16:29 llama-3.1-8b-it

This example shows how to configure multiple models to run on different GPUs.

Remember to update the entries hf_token and storageClass.

Ray is currently not supported. Therefore, sharding a single large model across multiple GPUs is not supported.

# vllm_custom_overrides.yaml
global:
  imagePullSecrets:
  - application-collection
servingEngineSpec:
  modelSpec:
  - name: "llama3"
    registry: "dp.apps.rancher.io"
    repository: "containers/vllm-openai"
    tag: "0.13.0"
    imagePullPolicy: "IfNotPresent"
    modelURL: "meta-llama/Llama-3.1-8B-Instruct"
    replicaCount: 1
    requestCPU: 10
    requestMemory: "16Gi"
    requestGPU: 1
    pvcStorage: "50Gi"
    storageClass: <STORAGE_CLASS>
    vllmConfig:
      maxModelLen: 4096
    hf_token: <HF_TOKEN_FOR_LLAMA_31>
  - name: "mistral"
    registry: "dp.apps.rancher.io"
    repository: "containers/vllm-openai"
    tag: "0.13.0"
    imagePullPolicy: "IfNotPresent"
    modelURL: "mistralai/Mistral-7B-Instruct-v0.2"
    replicaCount: 1
    requestCPU: 10
    requestMemory: "16Gi"
    requestGPU: 1
    pvcStorage: "50Gi"
    storageClass: <STORAGE_CLASS>
    vllmConfig:
      maxModelLen: 4096
    hf_token: <HF_TOKEN_FOR_MISTRAL>

This example demonstrates how to enable KV cache offloading to the CPU using LMCache in a vLLM deployment.

You can enable LMCache and set the CPU offloading buffer size using the lmcacheConfig field.

In the following example, the buffer is set to 20 GB, but you can adjust this value based on your workload.

Remember to update the entries hf_token and storageClass.

Setting lmcacheConfig.enabled to true implicitly enables the LMCACHE_USE_EXPERIMENTAL flag for LMCache.

These experimental features are only supported on newer GPU generations.

It is not recommended to enable them without a compelling reason.

# vllm_custom_overrides.yaml
global:
  imagePullSecrets:
  - application-collection
servingEngineSpec:
  modelSpec:
  - name: "mistral"
    registry: "dp.apps.rancher.io"
    repository: "containers/lmcache-vllm-openai"
    tag: "0.3.2"
    imagePullPolicy: "IfNotPresent"
    modelURL: "mistralai/Mistral-7B-Instruct-v0.2"
    replicaCount: 1
    requestCPU: 10
    requestMemory: "40Gi"
    requestGPU: 1
    pvcStorage: "50Gi"
    storageClass: <STORAGE_CLASS>
    pvcAccessMode:
      - ReadWriteOnce
    vllmConfig:
      maxModelLen: 32000
    lmcacheConfig:
      enabled: true
      cpuOffloadingBufferSize: "20"
    hf_token: <HF_TOKEN>

This example shows how to enable remote KV cache storage using LMCache in a vLLM deployment.

The configuration defines a cacheserverSpec and uses two replicas.

Remember to replace the placeholder values for hf_token and storageClass before applying the configuration.

Setting lmcacheConfig.enabled to true implicitly enables the LMCACHE_USE_EXPERIMENTAL flag for LMCache.

These experimental features are only supported on newer GPU generations.

It is not recommended to enable them without a compelling reason.

# vllm_custom_overrides.yaml
global:
  imagePullSecrets:
  - application-collection
servingEngineSpec:
  modelSpec:
  - name: "mistral"
    registry: "dp.apps.rancher.io"
    repository: "containers/lmcache-vllm-openai"
    tag: "0.3.2"
    imagePullPolicy: "IfNotPresent"
    modelURL: "mistralai/Mistral-7B-Instruct-v0.2"
    replicaCount: 2
    requestCPU: 10
    requestMemory: "40Gi"
    requestGPU: 1
    pvcStorage: "50Gi"
    storageClass: <STORAGE_CLASS>
    vllmConfig:
      enablePrefixCaching: true
      maxModelLen: 16384
    lmcacheConfig:
      enabled: true
      cpuOffloadingBufferSize: "20"
    hf_token: <HF_TOKEN>
    initContainer:
      name: "wait-for-cache-server"
      image: "dp.apps.rancher.io/containers/lmcache-vllm-openai:0.3.2"
      command: ["/bin/sh", "-c"]
      args:
        - |
          timeout 60 bash -c '
          while true; do
            /opt/venv/bin/python3 /workspace/LMCache/examples/kubernetes/health_probe.py $(RELEASE_NAME)-cache-server-service $(LMCACHE_SERVER_SERVICE_PORT) && exit 0
            echo "Waiting for LMCache server..."
            sleep 2
          done'
cacheserverSpec:
  replicaCount: 1
  containerPort: 8080
  servicePort: 81
  serde: "naive"
  registry: "dp.apps.rancher.io"
  repository: "containers/lmcache-vllm-openai"
  tag: "0.3.2"
  resources:
    requests:
      cpu: "4"
      memory: "8G"
    limits:
      cpu: "4"
      memory: "10G"
  labels:
    environment: "cacheserver"
    release: "cacheserver"
routerSpec:
  resources:
    requests:
      cpu: "1"
      memory: "2G"
    limits:
      cpu: "1"
      memory: "2G"
  routingLogic: "session"
  sessionKey: "x-user-id"

4.10 Installing mcpo #

MCP (Model Context Protocol) is an open source standard for connecting AI applications—such as SUSE AI—to external systems. These external systems can include data sources like databases or local files, or tools like calculators or search engines.

mcpo is the MCP-to-OpenAPI proxy server provided by Open WebUI. It solves communication compatibility issues, enables cloud and UI integrations, and offers increased security and scalability.

4.10.1 Details about the mcpo application #

Before deploying mcpo, it is important to know more about the supported configurations and documentation. The following command provides the corresponding details:

helm show values oci://dp.apps.rancher.io/charts/open-webui-mcpo

Alternatively, you can also refer to the mcpo Helm chart page on the SUSE Application Collection site at https://apps.rancher.io/applications/open-webui-mcpo. It contains mcpo dependencies, available versions and the link to pull the mcpo container image.

4.10.2 mcpo installation procedure #

Before the installation, you need to get user access to the SUSE Application Collection and SUSE Registry, create a Kubernetes namespace, and log in to the Helm registry as described in Section 4.3, “Installation procedure”.

Create a mcpo_custom_overrides.yaml file to override the default values of the Helm chart. The following file defines multiple MCP servers in the config.mcpServers section. These servers will be added to the mcpo configuration file config.json.

# mcpo_custom_overrides.yaml
global:
  imagePullSecrets:
  - application-collection
config:
  mcpServers:
    memory:
      command: npx
      args:
      - -y
      - "@modelcontextprotocol/server-memory"
    time:
      command: uvx
      args:
      - mcp-server-time
      - --local-timezone=America/New_York
    fetch:
      command: uvx
      args:
      - mcp-server-fetch
    weather:
      command: uvx
      args:
      - --from
      - git+https://github.com/adhikasp/mcp-weather.git
      - mcp-weather
      env:
      - ACCUWEATHER_API_KEY: your_api_key_here

After saving the override file as mcpo_custom_overrides.yaml, apply its configuration with the following command.

> helm upgrade --install \
  mcpo oci://dp.apps.rancher.io/charts/open-webui-mcpo \
  -n SUSE_AI_NAMESPACE \
  -f mcpo_custom_overrides.yaml
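After the installation command finishes, it can help to confirm that the release was deployed and that the override values were picked up. The following is a minimal sketch, assuming the release name mcpo used in this section. The helper name check_mcpo is hypothetical and not part of the chart.

```shell
# Show the release status and the user-supplied values of the mcpo release.
# Assumption: the release was installed under the name "mcpo".
check_mcpo() {
  namespace="$1"
  # Reports whether the release is deployed, failed or pending.
  helm status mcpo -n "$namespace"
  # Prints the values supplied with -f, including config.mcpServers.
  helm get values mcpo -n "$namespace"
}

# Usage: check_mcpo SUSE_AI_NAMESPACE
```

If the output of helm get values does not list the MCP servers you defined, the override file was probably not passed with the -f option.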

Installing MCP servers. You can add new MCP servers by including them in the mcpo configuration file following the Claude Desktop MCP format. For detailed information on installing MCP servers with mcpo, refer to the mcpo Quick Usage guide.

4.10.3 Integrating mcpo with Open WebUI #

To integrate mcpo with Open WebUI, follow these steps:

You must have Open WebUI administrator privileges to access configuration screens or settings mentioned in this section.

In the bottom left of the Open WebUI window, click your avatar icon to open the user menu and select .

Click the tab and select from the left menu.

Under , click the plus icon to add a new connection.

For each MCP server:

Provide the server URL, name and description.

Set the visibility to to make it available to all users.

Check if the connection is successful and confirm with .

Tip: The general URL format is MCPO_URL/MCP_SERVER_NAME. For example, if mcpo was deployed as mcpo in the namespace suse-ai with the default port configuration, the URL is:

http://mcpo-open-webui-mcpo.suse-ai.svc.cluster.local:8000/MCP_SERVER_NAME
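When several MCP servers are configured, the URL for each connection follows the same pattern. The following sketch prints one URL per server. It assumes that the Service name has the form RELEASE-open-webui-mcpo, as in the example URL, and that the default port 8000 is used. The function name mcpo_server_url is hypothetical, and the server names are taken from the sample override file.

```shell
# Build the in-cluster URL of one MCP server exposed by mcpo.
# Assumption: the Service is named RELEASE-open-webui-mcpo and listens
# on port 8000 unless a fourth argument overrides the port.
mcpo_server_url() {
  release="$1"
  namespace="$2"
  server="$3"
  port="${4:-8000}"
  printf 'http://%s-open-webui-mcpo.%s.svc.cluster.local:%s/%s\n' \
    "$release" "$namespace" "$port" "$server"
}

# Print one URL for each server defined under config.mcpServers.
for server in memory time fetch weather; do
  mcpo_server_url mcpo suse-ai "$server"
done
```

Each printed URL can be entered as the server URL of one connection in Open WebUI.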

After you have configured at least one MCP server, you can enable the configured servers from the Open WebUI chat input field to make answers more specific. For more information, see Selecting mcpo services from the chat input field.

To enable selected MCP tools by default for a model, refer to Enabling default MCP services.

4.10.4 Upgrading mcpo #

The mcpo chart receives application updates and updates of the Helm chart templates.

New versions may include changes that require manual steps.

These steps are listed in the corresponding README file.

All mcpo dependencies are updated automatically during an mcpo upgrade.

To upgrade mcpo, identify the new version number and run the following command:

> helm upgrade --install \
  mcpo oci://dp.apps.rancher.io/charts/open-webui-mcpo \
  -n SUSE_AI_NAMESPACE \
  --version VERSION_NUMBER \
  -f mcpo_custom_overrides.yaml

If you omit the --version option, mcpo gets upgraded to the latest available version.
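To identify the version number, you can compare the chart version of the installed release with the chart metadata published in the registry. The following is a minimal sketch, assuming the release name mcpo and an existing login to dp.apps.rancher.io. The helper name mcpo_versions is hypothetical.

```shell
# Compare the installed chart version with the latest published one.
# Assumptions: the release is named "mcpo" and "helm registry login"
# for dp.apps.rancher.io has already been performed.
mcpo_versions() {
  namespace="$1"
  echo "Installed release:"
  # The CHART column shows the chart name and version of the release.
  helm list -n "$namespace" --filter '^mcpo$'
  echo "Latest published chart:"
  # The "version" field in the output is the chart version.
  helm show chart oci://dp.apps.rancher.io/charts/open-webui-mcpo
}

# Usage: mcpo_versions SUSE_AI_NAMESPACE
```

Pass the value of the version field to the --version option of the upgrade command.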

4.10.5 Uninstalling mcpo #

To uninstall mcpo, run the following command:

> helm uninstall mcpo -n SUSE_AI_NAMESPACE

4.11 Installing PyTorch #

PyTorch is a widely used open-source deep-learning framework that supports both CPU and GPU acceleration. When deployed with the SUSE AI stack, the PyTorch Helm chart lets you inject your own training or inference code into the container and run it on NVIDIA GPUs available in your cluster.

4.11.1 Details about the PyTorch application #

Before deploying PyTorch, it is important to know more about the supported configurations and documentation. The following command provides the corresponding details:

helm show values oci://dp.apps.rancher.io/charts/pytorch

Alternatively, you can also refer to the PyTorch Helm chart page. It contains PyTorch dependencies, available versions and the link to pull the PyTorch container image.

4.11.2 PyTorch installation procedure #

Before the installation, you need to get user access to the SUSE Application Collection and SUSE Registry, create a Kubernetes namespace, and log in to the Helm registry as described in Section 4.3, “Installation procedure”.

Create a pytorch_custom_overrides.yaml file to override the values of the parent Helm chart. Find examples of PyTorch override files in Section 4.11.5, “Examples of PyTorch Helm chart override files” and a list of all valid options and their values in Section 4.11.6, “Values for the PyTorch Helm chart”.

Install the PyTorch Helm chart with the pytorch_custom_overrides.yaml file by running the following command.

> helm upgrade --install \
  pytorch oci://dp.apps.rancher.io/charts/pytorch \
  -n SUSE_AI_NAMESPACE \
  -f pytorch_custom_overrides.yaml

4.11.3 Upgrading PyTorch #

You can upgrade PyTorch to a specific version by running the following command:

> helm upgrade \
  pytorch oci://dp.apps.rancher.io/charts/pytorch \
  -n SUSE_AI_NAMESPACE \
  --version VERSION_NUMBER \
  -f pytorch_custom_overrides.yaml

If you omit the --version option, PyTorch gets upgraded to the latest available version.

4.11.4 Uninstalling PyTorch #

To uninstall PyTorch, run the following command:

> helm uninstall pytorch -n SUSE_AI_NAMESPACE

4.11.5 Examples of PyTorch Helm chart override files #

To override the default values during the Helm chart installation or update, you can create an override YAML file with custom values.

Then, apply these values by specifying the path to the override file with the -f option of the helm command.

Remember to replace <SUSE_AI_NAMESPACE> with your Kubernetes namespace.

# pytorch_custom_overrides.yaml
global:
  imagePullSecrets:
  - application-collection  # (1)
image:
  registry: dp.apps.rancher.io
  repository: containers/pytorch
  tag: "2.7.0-nvidia"
  pullPolicy: IfNotPresent
persistence:
  enabled: true
  storageClass: local-path  # (2)
gpu:
  enabled: true
  type: 'nvidia'
  number: 1

(1) Instructs Helm to use credentials from the SUSE Application Collection. For instructions on how to configure the image pull secrets for the SUSE Application Collection, refer to the official documentation.

(2) Use

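The following override file references a ConfigMap by name. To verify that the ConfigMap exists and to inspect its contents, run the following command:

> kubectl describe configmap \
  MY_CONFIG_MAP -n SUSE_AI_NAMESPACE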
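Note that kubectl describe only displays a ConfigMap that already exists. If you still need to create one, a common approach is kubectl create configmap with the --from-file option. The following is a minimal sketch. The helper name create_config_map and the directory CONFIG_DIR are hypothetical, and the ConfigMap name is assumed to match the value you set for configMapExtFiles.

```shell
# Create a ConfigMap with one key per file found in a local directory.
# Assumptions: CONFIG_DIR is a placeholder for the directory that holds
# your files, and the name matches the configMapExtFiles value.
create_config_map() {
  name="$1"
  config_dir="$2"
  namespace="$3"
  kubectl create configmap "$name" \
    --from-file="$config_dir" \
    -n "$namespace"
}

# Usage: create_config_map my-config-files ./CONFIG_DIR SUSE_AI_NAMESPACE
```

ConfigMap names must be valid lowercase DNS subdomain names, so replace the MY_CONFIG_MAP placeholder accordingly.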

# pytorch_custom_overrides.yaml
global:
  imagePullSecrets:
  - application-collection  # (1)
image:
  registry: dp.apps.rancher.io
  repository: containers/pytorch
  tag: "2.7.0-nvidia"
  pullPolicy: IfNotPresent
persistence:
  enabled: true
  storageClass: local-path  # (2)
gpu:
  enabled: true
  type: 'nvidia'
  number: 1
configMapExtFiles: "my-config-files"  # (3)

(1) Instructs Helm to use credentials from the SUSE Application Collection. For instructions on how to configure the image pull secrets for the SUSE Application Collection, refer to the official documentation.

(2) Use

(3) Specifies ConfigMap files.

Move the entrypoint.sh file plus any helper files under the scripts/ directory.

# pytorch_custom_overrides.yaml
global:
  imagePullSecrets:
  - application-collection  # (1)
image:
  registry: dp.apps.rancher.io
  repository: containers/pytorch
  tag: "2.7.0-nvidia"
  pullPolicy: IfNotPresent
persistence:
  enabled: true
  storageClass: local-path  # (2)
gpu:
  enabled: true
  type: 'nvidia'
  number: 1
entrypointscript:
  filename: "entrypoint.sh"  # (3)
  arguments: []  # (4)

(1) Instructs Helm to use credentials from the SUSE Application Collection. For instructions on how to configure the image pull secrets for the SUSE Application Collection, refer to the official documentation.

(2) Use

(3) The file will be mounted and accessible at

(4) Add custom command-line arguments if needed.

# pytorch_custom_overrides.yaml
global:
  imagePullSecrets:
  - application-collection  # (1)
image:
  registry: dp.apps.rancher.io
  repository: containers/pytorch
  tag: "2.7.0-nvidia"
  pullPolicy: IfNotPresent
persistence:
  enabled: true
  storageClass: local-path  # (2)
gpu:
  enabled: true
  type: 'nvidia'
  number: 1
gitClone:
  enabled: true
  repository: "github.com/YOUR_ORGANIZATION/YOUR_REPO"  # (3)
  revision: "main"  # (4)

(1) Instructs Helm to use credentials from the SUSE Application Collection. For instructions on how to configure the image pull secrets for the SUSE Application Collection, refer to the official documentation.

(2) Use

(3) Do not specify the protocol, such as

(4) Specify a branch name, a tag name or a commit.

# pytorch_custom_overrides.yaml
global:
  imagePullSecrets:
  - application-collection  # (1)
image:
  registry: dp.apps.rancher.io
  repository: containers/pytorch
  tag: "2.7.0-nvidia"
  pullPolicy: IfNotPresent
persistence:
  enabled: true
  storageClass: local-path  # (2)
gpu:
  enabled: true
  type: 'nvidia'
  number: 1
gitClone:
  enabled: true
  repository: "github.com/YOUR_ORGANIZATION/YOUR_REPO"  # (3)
  revision: "main"  # (4)
  secretName: "MY_GIT_CREDENTIALS"  # (5)

(1) Instructs Helm to use credentials from the SUSE Application Collection. For instructions on how to configure the image pull secrets for the SUSE Application Collection, refer to the official documentation.

(2) Use

(3) Do not specify the protocol, such as

(4) Specify a branch name, a tag name or a commit.

(5) Specify a preconfigured secret with username and password (or token).
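The secret referenced by secretName must exist before the chart is installed. The following is a minimal sketch of creating one with kubectl. It assumes that the chart reads the keys username and password from the secret. Those key names are an assumption based on the description above, so verify them against the chart README. The helper name create_git_secret is hypothetical.

```shell
# Create a generic secret that holds Git credentials.
# Assumption: the chart expects the keys "username" and "password";
# confirm the expected key names in the chart documentation.
create_git_secret() {
  name="$1"
  namespace="$2"
  git_user="$3"
  git_token="$4"
  kubectl create secret generic "$name" \
    --from-literal=username="$git_user" \
    --from-literal=password="$git_token" \
    -n "$namespace"
}

# Usage: create_git_secret my-git-credentials SUSE_AI_NAMESPACE GIT_USER GIT_TOKEN
```

Secret names must be valid lowercase DNS subdomain names, so replace the MY_GIT_CREDENTIALS placeholder in the override file with the name you actually used.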

4.11.6 Values for the PyTorch Helm chart #

To override the default values during the Helm chart installation or update, you can create an override YAML file with custom values.

Then, apply these values by specifying the path to the override file with the -f option of the helm command.

Remember to replace <SUSE_AI_NAMESPACE> with your Kubernetes namespace.

| Key | Type | Default | Description |
|---|---|---|---|
| | string | | Global override for the container-image registry used by all chart images. |
| | list(string) | | Global list of image-pull secrets to attach to all pods. |
| | string | | Registry that hosts the PyTorch container image. |
| | string | | Repository name (path) of the PyTorch container image. |
| | string | | Image tag to deploy (CUDA/NVIDIA build by default). |
| | string | | Kubernetes pull policy for the PyTorch image. |
| | list(string) | | Additional pull secrets (overrides global.imagePullSecrets). |
| | string | | Replace the chart name in resource names. |

| string |