41 Setting up the management cluster #

41.1 Introduction #

The management cluster is the part of SUSE Telco Cloud that is used to manage the provisioning and lifecycle of the runtime stacks. From a technical point of view, the management cluster contains the following components:

- SUSE Linux Micro as the OS. Depending on the use case, some configurations like networking, storage, users and kernel arguments can be customized.
- RKE2 as the Kubernetes cluster. Depending on the use case, it can be configured to use specific CNI plugins, such as Multus, Cilium, Calico, etc.
- Rancher as the management platform to manage the lifecycle of the clusters.
- Metal3 as the component to manage the lifecycle of the bare-metal nodes.
- CAPI as the component to manage the lifecycle of the Kubernetes clusters (downstream clusters). The RKE2 CAPI Provider is used to manage the lifecycle of the RKE2 clusters.

With all components mentioned above, the management cluster can manage the lifecycle of downstream clusters, using a declarative approach to manage the infrastructure and applications.

For more information about SUSE Linux Micro, see: SUSE Linux Micro (Chapter 9, SUSE Linux Micro)

For more information about RKE2, see: RKE2 (Chapter 15, RKE2)

For more information about Rancher, see: Rancher (Chapter 5, Rancher)

For more information about Metal3, see: Metal3 (Chapter 10, Metal3)

41.2 Steps to set up the management cluster #

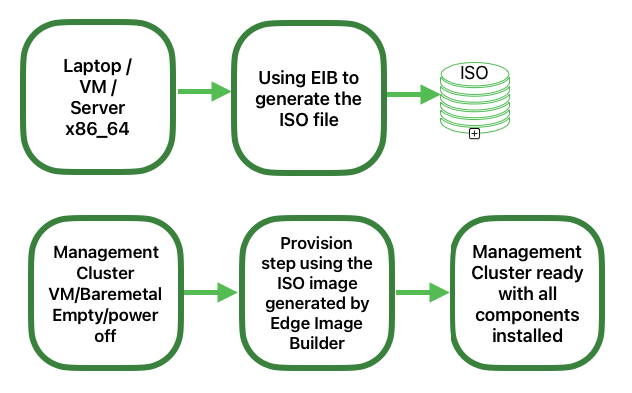

The following are the main steps to set up the management cluster (using a single node) with a declarative approach:

1. Image preparation for connected environments (Section 41.3, “Image preparation for connected environments”): The first step is to prepare the manifests and files with all the necessary configurations to be used in connected environments.
   - Directory structure for connected environments (Section 41.3.1, “Directory structure”): This step creates a directory structure to be used by Edge Image Builder to store the configuration files and the image itself.
   - Management cluster definition file (Section 41.3.2, “Management cluster definition file”): The mgmt-cluster.yaml file is the main definition file for the management cluster. It contains the following information about the image to be created:
     - Image Information: The information related to the image to be created using the base image.
     - Operating system: The operating system configurations to be used in the image.
     - Kubernetes: Helm charts and repositories, Kubernetes version, network configuration, and the nodes to be used in the cluster.
   - Custom folder (Section 41.3.3, “Custom folder”): The custom folder contains the configuration files and scripts to be used by Edge Image Builder to deploy a fully functional management cluster.
     - Files: Contains the configuration files to be used by the management cluster.
     - Scripts: Contains the scripts to be used by the management cluster.
   - Kubernetes folder (Section 41.3.4, “Kubernetes folder”): The kubernetes folder contains the configuration files to be used by the management cluster.
     - Manifests: Contains the manifests to be used by the management cluster.
     - Helm: Contains the Helm values files to be used by the management cluster.
     - Config: Contains the configuration files to be used by the management cluster.
   - Network folder (Section 41.3.5, “Networking folder”): The network folder contains the network configuration files to be used by the management cluster nodes.
2. Image preparation for air-gap environments (Section 41.4, “Image preparation for air-gap environments”): This step shows what changes when the manifests and files are prepared for an air-gap scenario.
   - Modifications in the definition file (Section 41.4.1, “Modifications in the definition file”): The mgmt-cluster.yaml file must be modified to include the embeddedArtifactRegistry section with the images field set to all container images to be included into the EIB output image.
   - Modifications in the custom folder (Section 41.4.2, “Modifications in the custom folder”): The custom folder must be modified to include the resources needed to run the management cluster in an air-gap environment.
     - Register script: The custom/scripts/99-register.sh script must be removed when you use an air-gap environment.
   - Modifications in the helm values folder (Section 41.4.3, “Modifications in the helm values folder”): The helm/values folder must be modified to include the configuration needed to run the management cluster in an air-gap environment.
3. Image creation (Section 41.5, “Image creation”): This step covers the creation of the image using the Edge Image Builder tool (for both connected and air-gap scenarios). Check the prerequisites (Chapter 11, Edge Image Builder) to run the Edge Image Builder tool on your system.
4. Management Cluster Provision (Section 41.6, “Provision the management cluster”): This step covers the provisioning of the management cluster using the image created in the previous step (for both connected and air-gap scenarios). This step can be done using a laptop, server, VM or any other AMD64/Intel 64 system with a USB port.

For more information about Edge Image Builder, see Edge Image Builder (Chapter 11, Edge Image Builder) and Edge Image Builder Quick Start (Chapter 3, Standalone clusters with Edge Image Builder).

41.3 Image preparation for connected environments #

Edge Image Builder is used to create the image for the management cluster. This document covers the minimal configuration necessary to set up the management cluster.

Edge Image Builder runs inside a container, so a container runtime such as Podman or Rancher Desktop is required. This guide assumes that Podman is available.

Also, as a prerequisite to deploy a highly available management cluster, you need to reserve three IPs in your network:

- apiVIP for the API VIP Address (used to access the Kubernetes API server).
- ingressVIP for the Ingress VIP Address (consumed, for example, by the Rancher UI).
- metal3VIP for the Metal3 VIP Address.
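Before building the image, it can help to sanity-check the three reserved addresses. The following is a minimal sketch, not part of the product tooling: the function names is_ipv4 and check_vips are hypothetical, the addresses in the usage note are documentation examples, and the requirement that the three VIPs differ from each other is an assumption derived from "reserve three IPs".

```shell
#!/bin/sh
# Sketch only: helper names are illustrative and not part of Edge Image Builder.

# Return 0 if $1 looks like a dotted-quad IPv4 address (four octets, each 0-255).
is_ipv4() (
  ip=$1
  case "$ip" in
    *[!0-9.]*|''|.*|*.|*..*) exit 1 ;;
  esac
  # The function body runs in a subshell, so changing IFS here does not leak out.
  IFS=.
  set -- $ip
  [ "$#" -eq 4 ] || exit 1
  for octet in "$@"; do
    [ "${#octet}" -le 3 ] || exit 1
    [ "$octet" -le 255 ] || exit 1
  done
  exit 0
)

# Return 0 only if the three VIPs are valid IPv4 addresses and pairwise different.
# Assumption: apiVIP, ingressVIP and metal3VIP must not share an address.
check_vips() (
  api_vip=$1
  ingress_vip=$2
  metal3_vip=$3
  for vip in "$api_vip" "$ingress_vip" "$metal3_vip"; do
    is_ipv4 "$vip" || { echo "invalid IPv4 address: $vip" >&2; exit 1; }
  done
  if [ "$api_vip" = "$ingress_vip" ] || [ "$api_vip" = "$metal3_vip" ] ||
     [ "$ingress_vip" = "$metal3_vip" ]; then
    echo "the three VIPs must be different addresses" >&2
    exit 1
  fi
  exit 0
)
```

For example, `check_vips 192.0.2.10 192.0.2.11 192.0.2.12` succeeds, while repeating an address or passing a malformed one returns a non-zero status. The check does not verify that the addresses are actually free on the network or excluded from the DHCP pool.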

41.3.1 Directory structure #

When running EIB, a directory is mounted from the host, so the first thing to do is to create a directory structure to be used by EIB to store the configuration files and the image itself. This directory has the following structure:

eib
├── mgmt-cluster.yaml
├── network
│   └── mgmt-cluster-node1.yaml
├── os-files
│   └── var
│       └── lib
│           └── rancher
│               └── rke2
│                   └── server
│                       └── manifests
│                           └── rke2-ingress-config.yaml
├── kubernetes
│   ├── manifests
│   │   ├── neuvector-namespace.yaml
│   │   ├── ingress-l2-adv.yaml
│   │   └── ingress-ippool.yaml
│   ├── helm
│   │   └── values
│   │       ├── rancher.yaml
│   │       ├── neuvector.yaml
│   │       ├── longhorn.yaml
│   │       ├── metal3.yaml
│   │       └── certmanager.yaml
│   └── config
│       └── server.yaml
├── custom
│   ├── scripts
│   │   ├── 99-register.sh
│   │   ├── 99-mgmt-setup.sh
│   │   └── 99-alias.sh
│   └── files
│       ├── rancher.sh
│       ├── mgmt-stack-setup.service
│       ├── metal3.sh
│       └── basic-setup.sh
└── base-images

The image SL-Micro.x86_64-6.1-Base-SelfInstall-GM.install.iso must be downloaded from the SUSE Customer Center or the SUSE Download page, and it must be located under the base-images folder.

You should check the SHA256 checksum of the image to ensure it has not been tampered with. The checksum can be found in the same location where the image was downloaded.
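One way to perform this check from the command line is sketched below. It assumes the GNU coreutils sha256sum tool is available; the helper name verify_sha256 is hypothetical, and the expected value must be the lowercase hexadecimal checksum published next to the image.

```shell
#!/bin/sh
# Sketch only: verify_sha256 is an illustrative helper, not a SUSE tool.
# Usage: verify_sha256 FILE EXPECTED_SHA256_HEX
verify_sha256() (
  file=$1
  expected=$2
  [ -f "$file" ] || { echo "missing file: $file" >&2; exit 1; }
  # sha256sum prints "<hex digest>  <file name>"; keep only the digest.
  actual=$(sha256sum "$file" | cut -d' ' -f1)
  [ "$actual" = "$expected" ] || { echo "checksum mismatch for $file" >&2; exit 1; }
)
```

A call such as `verify_sha256 eib/base-images/SL-Micro.x86_64-6.1-Base-SelfInstall-GM.install.iso "<published checksum>"` returns a non-zero status if the file is missing or the digest differs.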

An example of the directory structure can be found in the SUSE Edge GitHub repository under the "telco-examples" folder.
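If you prefer to start from an empty skeleton instead of copying the example, the folders shown in the tree above can be created as in the following sketch. The function name create_eib_layout is hypothetical; only the directory names come from this section, and the files themselves still have to be added as described in the following sections.

```shell
#!/bin/sh
# Sketch only: creates the empty folder skeleton described in this section.
# Usage: create_eib_layout [ROOT_DIR]   (ROOT_DIR defaults to "eib")
create_eib_layout() (
  root=${1:-eib}
  mkdir -p \
    "$root/base-images" \
    "$root/network" \
    "$root/os-files/var/lib/rancher/rke2/server/manifests" \
    "$root/kubernetes/manifests" \
    "$root/kubernetes/helm/values" \
    "$root/kubernetes/config" \
    "$root/custom/scripts" \
    "$root/custom/files"
)
```

Running `create_eib_layout eib` is idempotent, so it can be repeated safely on an existing directory.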

41.3.2 Management cluster definition file #

The mgmt-cluster.yaml file is the main definition file for the management cluster. It contains the following information:

apiVersion: 1.3
image:
  imageType: iso
  arch: x86_64
  baseImage: SL-Micro.x86_64-6.1-Base-SelfInstall-GM.install.iso
  outputImageName: eib-mgmt-cluster-image.iso
operatingSystem:
  isoConfiguration:
    installDevice: /dev/sda
  users:
  - username: root
    encryptedPassword: $ROOT_PASSWORD
  packages:
    packageList:
    - jq
    - open-iscsi
    sccRegistrationCode: $SCC_REGISTRATION_CODE
kubernetes:
  version: v1.33.5+rke2r1
  helm:
    charts:
      - name: cert-manager
        repositoryName: jetstack
        version: 1.18.2
        targetNamespace: cert-manager
        valuesFile: certmanager.yaml
        createNamespace: true
        installationNamespace: kube-system
      - name: longhorn-crd
        version: 107.1.0+up1.9.1
        repositoryName: rancher-charts
        targetNamespace: longhorn-system
        createNamespace: true
        installationNamespace: kube-system
      - name: longhorn
        version: 107.1.0+up1.9.1
        repositoryName: rancher-charts
        targetNamespace: longhorn-system
        createNamespace: true
        installationNamespace: kube-system
        valuesFile: longhorn.yaml
      - name: metal3
        version: 304.0.16+up0.12.6
        repositoryName: suse-edge-charts
        targetNamespace: metal3-system
        createNamespace: true
        installationNamespace: kube-system
        valuesFile: metal3.yaml
      - name: rancher-turtles
        version: 304.0.6+up0.24.0
        repositoryName: suse-edge-charts
        targetNamespace: rancher-turtles-system
        createNamespace: true
        installationNamespace: kube-system
      - name: neuvector-crd
        version: 107.0.1+up2.8.8
        repositoryName: rancher-charts
        targetNamespace: neuvector
        createNamespace: true
        installationNamespace: kube-system
        valuesFile: neuvector.yaml
      - name: neuvector
        version: 107.0.1+up2.8.8
        repositoryName: rancher-charts
        targetNamespace: neuvector
        createNamespace: true
        installationNamespace: kube-system
        valuesFile: neuvector.yaml
      - name: rancher
        version: 2.12.2
        repositoryName: rancher-prime
        targetNamespace: cattle-system
        createNamespace: true
        installationNamespace: kube-system
        valuesFile: rancher.yaml
    repositories:
      - name: jetstack
        url: https://charts.jetstack.io
      - name: rancher-charts
        url: https://charts.rancher.io/
      - name: suse-edge-charts
        url: oci://registry.suse.com/edge/charts
      - name: rancher-prime
        url: https://charts.rancher.com/server-charts/prime
  network:
    apiHost: $API_HOST
    apiVIP: $API_VIP
  nodes:
    - hostname: mgmt-cluster-node1
      initializer: true
      type: server
#    - hostname: mgmt-cluster-node2
#      type: server
#    - hostname: mgmt-cluster-node3
#      type: server

To explain the fields and values in the mgmt-cluster.yaml definition file, we have divided it into the following sections.

Image section (definition file):

image:
  imageType: iso
  arch: x86_64
  baseImage: SL-Micro.x86_64-6.1-Base-SelfInstall-GM.install.iso
  outputImageName: eib-mgmt-cluster-image.iso

where baseImage is the original image you downloaded from the SUSE Customer Center or the SUSE Download page, and outputImageName is the name of the new image that will be used to provision the management cluster.

Operating system section (definition file):

operatingSystem:
  isoConfiguration:
    installDevice: /dev/sda
  users:
  - username: root
    encryptedPassword: $ROOT_PASSWORD
  packages:
    packageList:
    - jq
    sccRegistrationCode: $SCC_REGISTRATION_CODE

where installDevice is the device on which the operating system is installed, username and encryptedPassword are the credentials used to access the system, packageList is the list of packages to be installed (jq is required internally during the installation process), and sccRegistrationCode is the registration code used to get the packages and dependencies at build time. The registration code can be obtained from the SUSE Customer Center.

The encrypted password can be generated using the openssl command as follows:

openssl passwd -6 MyPassword!123

This outputs something similar to:

$6$UrXB1sAGs46DOiSq$HSwi9GFJLCorm0J53nF2Sq8YEoyINhHcObHzX2R8h13mswUIsMwzx4eUzn/rRx0QPV4JIb0eWCoNrxGiKH4R31

Kubernetes section (definition file):

kubernetes:
  version: v1.33.5+rke2r1
  helm:
    charts:
      - name: cert-manager
        repositoryName: jetstack
        version: 1.18.2
        targetNamespace: cert-manager
        valuesFile: certmanager.yaml
        createNamespace: true
        installationNamespace: kube-system
      - name: longhorn-crd
        version: 107.1.0+up1.9.1
        repositoryName: rancher-charts
        targetNamespace: longhorn-system
        createNamespace: true
        installationNamespace: kube-system
      - name: longhorn
        version: 107.1.0+up1.9.1
        repositoryName: rancher-charts
        targetNamespace: longhorn-system
        createNamespace: true
        installationNamespace: kube-system
        valuesFile: longhorn.yaml
      - name: metal3
        version: 304.0.16+up0.12.6
        repositoryName: suse-edge-charts
        targetNamespace: metal3-system
        createNamespace: true
        installationNamespace: kube-system
        valuesFile: metal3.yaml
      - name: rancher-turtles
        version: 304.0.6+up0.24.0
        repositoryName: suse-edge-charts
        targetNamespace: rancher-turtles-system
        createNamespace: true
        installationNamespace: kube-system
      - name: neuvector-crd
        version: 107.0.1+up2.8.8
        repositoryName: rancher-charts
        targetNamespace: neuvector
        createNamespace: true
        installationNamespace: kube-system
        valuesFile: neuvector.yaml
      - name: neuvector
        version: 107.0.1+up2.8.8
        repositoryName: rancher-charts
        targetNamespace: neuvector
        createNamespace: true
        installationNamespace: kube-system
        valuesFile: neuvector.yaml
      - name: rancher
        version: 2.12.2
        repositoryName: rancher-prime
        targetNamespace: cattle-system
        createNamespace: true
        installationNamespace: kube-system
        valuesFile: rancher.yaml
    repositories:
      - name: jetstack
        url: https://charts.jetstack.io
      - name: rancher-charts
        url: https://charts.rancher.io/
      - name: suse-edge-charts
        url: oci://registry.suse.com/edge/charts
      - name: rancher-prime
        url: https://charts.rancher.com/server-charts/prime
  network:
    apiHost: $API_HOST
    apiVIP: $API_VIP
  nodes:
    - hostname: mgmt-cluster-node1
      initializer: true
      type: server
#    - hostname: mgmt-cluster-node2
#      type: server
#    - hostname: mgmt-cluster-node3
#      type: server

The helm section contains the list of Helm charts to be installed, the repositories to be used, and the version configuration for all of them.

The network section contains the configuration for the network, like the apiHost and apiVIP to be used by the RKE2 component.

The apiVIP should be an IP address that is not used in the network and should not be part of the DHCP pool (in case we use DHCP). Also, when we use the apiVIP in a multi-node cluster, it is used to access the Kubernetes API server.

The apiHost is the host name that resolves to the apiVIP and is used by the RKE2 component.

The nodes section contains the list of nodes to be used in the cluster. In this example, a single-node cluster is being used, but it can be extended to a multi-node cluster by adding more nodes to the list (by uncommenting the lines).

- The names of the nodes must be unique in the cluster.
- Optionally, use the initializer field to specify the bootstrap host; otherwise, it will be the first node in the list.
- The names of the nodes must be the same as the host names defined in the Network Folder (Section 41.3.5, “Networking folder”) when network configuration is required.
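The naming rules above can be checked mechanically before the image is built. The sketch below is an illustration under stated assumptions: the helper names list_node_hostnames and check_network_files are hypothetical, node entries are assumed to be written as uncommented "- hostname: NAME" lines as in the example definition file, and the definition file is parsed with plain sed rather than a YAML parser.

```shell
#!/bin/sh
# Sketch only: helper names are illustrative, not part of Edge Image Builder.

# Print the host name of every uncommented "- hostname: NAME" entry in the
# definition file given as $1 (commented-out nodes are ignored).
list_node_hostnames() {
  sed -n 's/^[[:space:]]*-[[:space:]]*hostname:[[:space:]]*\([^[:space:]#]*\).*$/\1/p' "$1"
}

# Usage: check_network_files DEFINITION_FILE NETWORK_DIR
# Fails if a host name appears twice or if NETWORK_DIR/<hostname>.yaml is missing.
check_network_files() (
  definition=$1
  network_dir=$2
  dups=$(list_node_hostnames "$definition" | sort | uniq -d)
  [ -z "$dups" ] || { echo "duplicate host names: $dups" >&2; exit 1; }
  status=0
  for host in $(list_node_hostnames "$definition"); do
    if [ ! -f "$network_dir/$host.yaml" ]; then
      echo "missing network file: $network_dir/$host.yaml" >&2
      status=1
    fi
  done
  exit "$status"
)
```

For example, `check_network_files eib/mgmt-cluster.yaml eib/network` reports every node that has no matching file. The second check only applies when network configuration files are actually used.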

41.3.3 Custom folder #

The custom folder contains the following subfolders:

...
├── custom
│   ├── scripts
│   │   ├── 99-register.sh
│   │   ├── 99-mgmt-setup.sh
│   │   └── 99-alias.sh
│   └── files
│       ├── rancher.sh
│       ├── mgmt-stack-setup.service
│       ├── metal3.sh
│       └── basic-setup.sh
...

- The custom/files folder contains the configuration files to be used by the management cluster.
- The custom/scripts folder contains the scripts to be used by the management cluster.

The custom/files folder contains the following files:

- basic-setup.sh: contains configuration parameters for Metal3, Rancher and MetalLB. Only modify this file if you want to change the namespaces to be used.

#!/bin/bash
# Pre-requisites. Cluster already running
export KUBECTL="/var/lib/rancher/rke2/bin/kubectl"
export KUBECONFIG="/etc/rancher/rke2/rke2.yaml"

##################
# METAL3 DETAILS #
##################
export METAL3_CHART_TARGETNAMESPACE="metal3-system"

###########
# METALLB #
###########
export METALLBNAMESPACE="metallb-system"

###########
# RANCHER #
###########
export RANCHER_CHART_TARGETNAMESPACE="cattle-system"
export RANCHER_FINALPASSWORD="adminadminadmin"

die(){
  echo ${1} 1>&2
  exit ${2}
}

- metal3.sh: contains the configuration for the Metal3 component to be used (no modifications needed). In future versions, this script will be replaced by Rancher Turtles to simplify the setup.

#!/bin/bash
set -euo pipefail

BASEDIR="$(dirname "$0")"
source ${BASEDIR}/basic-setup.sh

METAL3LOCKNAMESPACE="default"
METAL3LOCKCMNAME="metal3-lock"

trap 'catch $? $LINENO' EXIT

catch() {
  if [ "$1" != "0" ]; then
    echo "Error $1 occurred on $2"
    ${KUBECTL} delete configmap ${METAL3LOCKCMNAME} -n ${METAL3LOCKNAMESPACE}
  fi
}

# Get or create the lock to run all those steps just in a single node
# As the first node is created WAY before the others, this should be enough
# TODO: Investigate if leases is better
if [ $(${KUBECTL} get cm -n ${METAL3LOCKNAMESPACE} ${METAL3LOCKCMNAME} -o name | wc -l) -lt 1 ]; then
  ${KUBECTL} create configmap ${METAL3LOCKCMNAME} -n ${METAL3LOCKNAMESPACE} --from-literal foo=bar
else
  exit 0
fi

# Wait for metal3
while ! ${KUBECTL} wait --for condition=ready -n ${METAL3_CHART_TARGETNAMESPACE} $(${KUBECTL} get pods -n ${METAL3_CHART_TARGETNAMESPACE} -l app.kubernetes.io/name=metal3-ironic -o name) --timeout=10s; do sleep 2 ; done

# Get the ironic IP
IRONICIP=$(${KUBECTL} get cm -n ${METAL3_CHART_TARGETNAMESPACE} ironic -o jsonpath='{.data.IRONIC_IP}')

# If LoadBalancer, use metallb, else it is NodePort
if [ $(${KUBECTL} get svc -n ${METAL3_CHART_TARGETNAMESPACE} metal3-metal3-ironic -o jsonpath='{.spec.type}') == "LoadBalancer" ]; then
  # Wait for metallb
  while ! ${KUBECTL} wait --for condition=ready -n ${METALLBNAMESPACE} $(${KUBECTL} get pods -n ${METALLBNAMESPACE} -l app.kubernetes.io/component=controller -o name) --timeout=10s; do sleep 2 ; done

  # Do not create the ippool if already created
  ${KUBECTL} get ipaddresspool -n ${METALLBNAMESPACE} ironic-ip-pool -o name || cat <<-EOF | ${KUBECTL} apply -f -
apiVersion: metallb.io/v1beta1
kind: IPAddressPool
metadata:
  name: ironic-ip-pool
  namespace: ${METALLBNAMESPACE}
spec:
  addresses:
  - ${IRONICIP}/32
  serviceAllocation:
    priority: 100
    serviceSelectors:
    - matchExpressions:
      - {key: app.kubernetes.io/name, operator: In, values: [metal3-ironic]}
EOF

  # Same for L2 Advs
  ${KUBECTL} get L2Advertisement -n ${METALLBNAMESPACE} ironic-ip-pool-l2-adv -o name || cat <<-EOF | ${KUBECTL} apply -f -
apiVersion: metallb.io/v1beta1
kind: L2Advertisement
metadata:
  name: ironic-ip-pool-l2-adv
  namespace: ${METALLBNAMESPACE}
spec:
  ipAddressPools:
  - ironic-ip-pool
EOF
fi

# If rancher is deployed
if [ $(${KUBECTL} get pods -n ${RANCHER_CHART_TARGETNAMESPACE} -l app=rancher -o name | wc -l) -ge 1 ]; then
  cat <<-EOF | ${KUBECTL} apply -f -
apiVersion: management.cattle.io/v3
kind: Feature
metadata:
  name: embedded-cluster-api
spec:
  value: false
EOF

  # Disable Rancher webhooks for CAPI
  ${KUBECTL} delete --ignore-not-found=true mutatingwebhookconfiguration.admissionregistration.k8s.io mutating-webhook-configuration
  ${KUBECTL} delete --ignore-not-found=true validatingwebhookconfigurations.admissionregistration.k8s.io validating-webhook-configuration
  ${KUBECTL} wait --for=delete namespace/cattle-provisioning-capi-system --timeout=300s
fi

# Clean up the lock cm
${KUBECTL} delete configmap ${METAL3LOCKCMNAME} -n ${METAL3LOCKNAMESPACE}

- rancher.sh: contains the configuration for the Rancher component to be used (no modifications needed).

#!/bin/bash
set -euo pipefail

BASEDIR="$(dirname "$0")"
source ${BASEDIR}/basic-setup.sh

RANCHERLOCKNAMESPACE="default"
RANCHERLOCKCMNAME="rancher-lock"

if [ -z "${RANCHER_FINALPASSWORD}" ]; then
  # If there is no final password, then finish the setup right away
  exit 0
fi

trap 'catch $? $LINENO' EXIT

catch() {
  if [ "$1" != "0" ]; then
    echo "Error $1 occurred on $2"
    ${KUBECTL} delete configmap ${RANCHERLOCKCMNAME} -n ${RANCHERLOCKNAMESPACE}
  fi
}

# Get or create the lock to run all those steps just in a single node
# As the first node is created WAY before the others, this should be enough
# TODO: Investigate if leases is better
if [ $(${KUBECTL} get cm -n ${RANCHERLOCKNAMESPACE} ${RANCHERLOCKCMNAME} -o name | wc -l) -lt 1 ]; then
  ${KUBECTL} create configmap ${RANCHERLOCKCMNAME} -n ${RANCHERLOCKNAMESPACE} --from-literal foo=bar
else
  exit 0
fi

# Wait for rancher to be deployed
while ! ${KUBECTL} wait --for condition=ready -n ${RANCHER_CHART_TARGETNAMESPACE} $(${KUBECTL} get pods -n ${RANCHER_CHART_TARGETNAMESPACE} -l app=rancher -o name) --timeout=10s; do sleep 2 ; done
until ${KUBECTL} get ingress -n ${RANCHER_CHART_TARGETNAMESPACE} rancher > /dev/null 2>&1; do sleep 10; done

RANCHERBOOTSTRAPPASSWORD=$(${KUBECTL} get secret -n ${RANCHER_CHART_TARGETNAMESPACE} bootstrap-secret -o jsonpath='{.data.bootstrapPassword}' | base64 -d)
RANCHERHOSTNAME=$(${KUBECTL} get ingress -n ${RANCHER_CHART_TARGETNAMESPACE} rancher -o jsonpath='{.spec.rules[0].host}')

# Skip the whole process if things have been set already
if [ -z $(${KUBECTL} get settings.management.cattle.io first-login -ojsonpath='{.value}') ]; then
  # Add the protocol
  RANCHERHOSTNAME="https://${RANCHERHOSTNAME}"
  TOKEN=""
  while [ -z "${TOKEN}" ]; do
    # Get token
    sleep 2
    TOKEN=$(curl -sk -X POST ${RANCHERHOSTNAME}/v3-public/localProviders/local?action=login -H 'content-type: application/json' -d "{\"username\":\"admin\",\"password\":\"${RANCHERBOOTSTRAPPASSWORD}\"}" | jq -r .token)
  done

  # Set password
  curl -sk ${RANCHERHOSTNAME}/v3/users?action=changepassword -H 'content-type: application/json' -H "Authorization: Bearer $TOKEN" -d "{\"currentPassword\":\"${RANCHERBOOTSTRAPPASSWORD}\",\"newPassword\":\"${RANCHER_FINALPASSWORD}\"}"

  # Create a temporary API token (ttl=60 minutes)
  APITOKEN=$(curl -sk ${RANCHERHOSTNAME}/v3/token -H 'content-type: application/json' -H "Authorization: Bearer ${TOKEN}" -d '{"type":"token","description":"automation","ttl":3600000}' | jq -r .token)

  curl -sk ${RANCHERHOSTNAME}/v3/settings/server-url -H 'content-type: application/json' -H "Authorization: Bearer ${APITOKEN}" -X PUT -d "{\"name\":\"server-url\",\"value\":\"${RANCHERHOSTNAME}\"}"
  curl -sk ${RANCHERHOSTNAME}/v3/settings/telemetry-opt -X PUT -H 'content-type: application/json' -H 'accept: application/json' -H "Authorization: Bearer ${APITOKEN}" -d '{"value":"out"}'
fi

# Clean up the lock cm
${KUBECTL} delete configmap ${RANCHERLOCKCMNAME} -n ${RANCHERLOCKNAMESPACE}

- mgmt-stack-setup.service: contains the configuration to create the systemd service to run the scripts during the first boot (no modifications needed).

[Unit]
Description=Setup Management stack components
Wants=network-online.target
# It requires rke2 or k3s running, but it will not fail if those services are not present
After=network.target network-online.target rke2-server.service k3s.service
# At least, the basic-setup.sh one needs to be present
ConditionPathExists=/opt/mgmt/bin/basic-setup.sh

[Service]
User=root
Type=forking
# Metal3 can take A LOT to download the IPA image
TimeoutStartSec=1800

ExecStartPre=/bin/sh -c "echo 'Setting up Management components...'"
# Scripts are executed in StartPre because Start can only run a single one
ExecStartPre=/opt/mgmt/bin/rancher.sh
ExecStartPre=/opt/mgmt/bin/metal3.sh
ExecStart=/bin/sh -c "echo 'Finished setting up Management components'"
RemainAfterExit=yes
KillMode=process
# Disable & delete everything
ExecStartPost=rm -f /opt/mgmt/bin/rancher.sh
ExecStartPost=rm -f /opt/mgmt/bin/metal3.sh
ExecStartPost=rm -f /opt/mgmt/bin/basic-setup.sh
ExecStartPost=/bin/sh -c "systemctl disable mgmt-stack-setup.service"
ExecStartPost=rm -f /etc/systemd/system/mgmt-stack-setup.service

[Install]
WantedBy=multi-user.target

The custom/scripts folder contains the following files:

- 99-alias.sh script: contains the alias to be used by the management cluster to load the kubeconfig file at first boot (no modifications needed).

#!/bin/bash
echo "alias k=kubectl" >> /etc/profile.local
echo "alias kubectl=/var/lib/rancher/rke2/bin/kubectl" >> /etc/profile.local
echo "export KUBECONFIG=/etc/rancher/rke2/rke2.yaml" >> /etc/profile.local

- 99-mgmt-setup.sh script: contains the configuration to copy the scripts during the first boot (no modifications needed).

#!/bin/bash

# Copy the scripts from combustion to the final location
mkdir -p /opt/mgmt/bin/
for script in basic-setup.sh rancher.sh metal3.sh; do
  cp ${script} /opt/mgmt/bin/
done

# Copy the systemd unit file and enable it at boot
cp mgmt-stack-setup.service /etc/systemd/system/mgmt-stack-setup.service
systemctl enable mgmt-stack-setup.service

- 99-register.sh script: contains the configuration to register the system using the SCC registration code. The ${SCC_ACCOUNT_EMAIL} and ${SCC_REGISTRATION_CODE} have to be set properly to register the system with your account.

#!/bin/bash
set -euo pipefail

# Registration https://www.suse.com/support/kb/doc/?id=000018564
if ! which SUSEConnect > /dev/null 2>&1; then
  zypper --non-interactive install suseconnect-ng
fi
SUSEConnect --email "${SCC_ACCOUNT_EMAIL}" --url "https://scc.suse.com" --regcode "${SCC_REGISTRATION_CODE}"

41.3.4 Kubernetes folder #

The kubernetes folder contains the following subfolders:

...
├── kubernetes
│   ├── manifests
│   │   ├── neuvector-namespace.yaml
│   │   ├── ingress-l2-adv.yaml
│   │   └── ingress-ippool.yaml
│   ├── helm
│   │   └── values
│   │       ├── rancher.yaml
│   │       ├── neuvector.yaml
│   │       ├── longhorn.yaml
│   │       ├── metal3.yaml
│   │       └── certmanager.yaml
│   └── config
│       └── server.yaml
...

The kubernetes/config folder contains the following files:

- server.yaml: The CNI plug-in installed by default is Cilium, so you do not need to create this folder and file. If you need to customize the CNI plug-in, use the server.yaml file under the kubernetes/config folder. It contains the following information:

cni:
- multus
- cilium
write-kubeconfig-mode: '0644'
selinux: true
system-default-registry: registry.rancher.com

This is an optional file to define certain Kubernetes customizations, such as the CNI plug-ins to be used or any of the other options described in the official documentation.

The os-files/var/lib/rancher/rke2/server/manifests folder contains the following file:

- rke2-ingress-config.yaml: contains the configuration to create the Ingress service for the management cluster (no modifications needed).

apiVersion: helm.cattle.io/v1
kind: HelmChartConfig
metadata:
  name: rke2-ingress-nginx
  namespace: kube-system
spec:
  valuesContent: |-
    controller:
      config:
        use-forwarded-headers: "true"
        enable-real-ip: "true"
      publishService:
        enabled: true
      service:
        enabled: true
        type: LoadBalancer
        externalTrafficPolicy: Local

The HelmChartConfig must be included via os-files to the /var/lib/rancher/rke2/server/manifests directory, not via kubernetes/manifests as described in previous releases.

The kubernetes/manifests folder contains the following files:

- neuvector-namespace.yaml: contains the configuration to create the NeuVector namespace (no modifications needed).

apiVersion: v1
kind: Namespace
metadata:
  labels:
    pod-security.kubernetes.io/enforce: privileged
  name: neuvector

- ingress-l2-adv.yaml: contains the configuration to create the L2Advertisement for the MetalLB component (no modifications needed).

apiVersion: metallb.io/v1beta1
kind: L2Advertisement
metadata:
  name: ingress-l2-adv
  namespace: metallb-system
spec:
  ipAddressPools:
    - ingress-ippool

- ingress-ippool.yaml: contains the configuration to create the IPAddressPool for the rke2-ingress-nginx component. The ${INGRESS_VIP} has to be set properly to define the IP address reserved to be used by the rke2-ingress-nginx component.

apiVersion: metallb.io/v1beta1
kind: IPAddressPool
metadata:
  name: ingress-ippool
  namespace: metallb-system
spec:
  addresses:
    - ${INGRESS_VIP}/32
  serviceAllocation:
    priority: 100
    serviceSelectors:
      - matchExpressions:
          - {key: app.kubernetes.io/name, operator: In, values: [rke2-ingress-nginx]}
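Placeholders such as ${INGRESS_VIP} have to be replaced with real values before the image is built. One possible way to do this is sketched below; the helper name render_placeholder is hypothetical, the address in the usage note is a documentation example, and the sketch assumes the value contains none of the characters |, & or \, which holds for IP addresses and host names.

```shell
#!/bin/sh
# Sketch only: render_placeholder is an illustrative helper.
# Usage: render_placeholder FILE NAME VALUE
# Replaces every literal ${NAME} in FILE with VALUE, rewriting FILE in place.
render_placeholder() (
  file=$1
  name=$2
  value=$3
  tmp=$(mktemp) || exit 1
  # [$] matches a literal dollar sign; the braces are literal in basic regex syntax.
  sed 's|[$]{'"$name"'}|'"$value"'|g' "$file" > "$tmp" && cat "$tmp" > "$file"
  rc=$?
  rm -f "$tmp"
  exit "$rc"
)
```

For example, `render_placeholder eib/kubernetes/manifests/ingress-ippool.yaml INGRESS_VIP 192.0.2.11` turns the address entry into 192.0.2.11/32. The same helper could be applied to the other files that use ${...} placeholders.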

The kubernetes/helm/values folder contains the following files:

- rancher.yaml: contains the configuration to create the Rancher component. The ${INGRESS_VIP} must be set properly to define the IP address to be consumed by the Rancher component. The URL to access the Rancher component will be https://rancher-${INGRESS_VIP}.sslip.io.

hostname: rancher-${INGRESS_VIP}.sslip.io
bootstrapPassword: "foobar"
replicas: 1
global:
  cattle:
    systemDefaultRegistry: "registry.rancher.com"

- neuvector.yaml: contains the configuration to create the NeuVector component (no modifications needed).

controller:
  replicas: 1
  ranchersso:
    enabled: true
manager:
  enabled: false
cve:
  scanner:
    enabled: false
    replicas: 1
k3s:
  enabled: true
crdwebhook:
  enabled: false
registry: "registry.rancher.com"
global:
  cattle:
    systemDefaultRegistry: "registry.rancher.com"

- longhorn.yaml: contains the configuration to create the Longhorn component (no modifications needed).

global:
  cattle:
    systemDefaultRegistry: "registry.rancher.com"

- metal3.yaml: contains the configuration to create the Metal3 component. The ${METAL3_VIP} must be set properly to define the IP address to be consumed by the Metal3 component.

global:
  ironicIP: ${METAL3_VIP}
  enable_vmedia_tls: false
  additionalTrustedCAs: false
metal3-ironic:
  global:
    predictableNicNames: "true"
  persistence:
    ironic:
      size: "5Gi"

The Media Server is an optional feature included in Metal3 (disabled by default). To use it, configure it in the previous metal3.yaml values file as follows:

- Set enable_metal3_media_server to true in the global section to enable the media server feature.
- Include the following media server configuration, where ${MEDIA_VOLUME_PATH} is the path to the media volume (for example, /home/metal3/bmh-image-cache):

metal3-media:
  mediaVolume:
    hostPath: ${MEDIA_VOLUME_PATH}

An external media server can be used to store the images. If you want to use it with TLS, modify the following configurations:

- Set additionalTrustedCAs to true in the previous metal3.yaml file to enable the additional trusted CAs from the external media server.
- Include the following secret configuration in the file kubernetes/manifests/metal3-cacert-secret.yaml to store the CA certificate of the external media server:

apiVersion: v1
kind: Namespace
metadata:
  name: metal3-system
---
apiVersion: v1
kind: Secret
metadata:
  name: tls-ca-additional
  namespace: metal3-system
type: Opaque
data:
  ca-additional.crt: {{ additional_ca_cert | b64encode }}

The value of ca-additional.crt must be the base64-encoded CA certificate of the external media server. You can use the following command to encode the certificate and create the secret manually:

kubectl -n metal3-system create secret generic tls-ca-additional --from-file=ca-additional.crt=./ca-additional.crt

- certmanager.yaml: contains the configuration to create the Cert-Manager component (no modifications needed).

installCRDs: true

41.3.5 Networking folder #

The network folder contains as many files as nodes in the management cluster. In our case, we have only one node, so we have only one file called mgmt-cluster-node1.yaml.

The name of the file must match the host name defined in the nodes section of the mgmt-cluster.yaml definition file described above.

If you need to customize the networking configuration, for example, to use a specific static IP address (DHCP-less scenario), you can use the mgmt-cluster-node1.yaml file under the network folder. It contains the following information:

- ${MGMT_GATEWAY}: The gateway IP address.
- ${MGMT_DNS}: The DNS server IP address.
- ${MGMT_MAC}: The MAC address of the network interface.
- ${MGMT_NODE_IP}: The IP address of the management cluster.

routes:
  config:
  - destination: 0.0.0.0/0
    metric: 100
    next-hop-address: ${MGMT_GATEWAY}
    next-hop-interface: eth0
    table-id: 254
dns-resolver:
  config:
    server:
    - ${MGMT_DNS}
    - 8.8.8.8
interfaces:
- name: eth0
  type: ethernet
  state: up
  mac-address: ${MGMT_MAC}
  ipv4:
    address:
    - ip: ${MGMT_NODE_IP}
      prefix-length: 24
    dhcp: false
    enabled: true
  ipv6:
    enabled: false

If you want to use DHCP to get the IP address, you can use the following configuration (the MAC address must be set properly using the ${MGMT_MAC} variable):

## This is an example of a dhcp network configuration for a management cluster
interfaces:
- name: eth0
  type: ethernet
  state: up
  mac-address: ${MGMT_MAC}
  ipv4:
    dhcp: true
    enabled: true
  ipv6:
    enabled: false

Depending on the number of nodes in the management cluster, you can create more files like mgmt-cluster-node2.yaml, mgmt-cluster-node3.yaml, etc. to configure the rest of the nodes.

The routes section is used to define the routing table for the management cluster.
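The ${...} placeholders in the network files have to be replaced with real values before the image is built. The following Python sketch shows one possible way to do that with the standard library. It assumes the substitution is done by you as a preparation step; the helper names and the sample MAC address are hypothetical.

```python
from string import Template

# Trimmed DHCP variant of the network file, kept inline for the sketch.
NETWORK_TEMPLATE = """\
interfaces:
- name: eth0
  type: ethernet
  state: up
  mac-address: ${MGMT_MAC}
  ipv4:
    dhcp: true
    enabled: true
  ipv6:
    enabled: false
"""


def render_network_file(template_text: str, values: dict) -> str:
    """Fill in ${NAME} placeholders; raises KeyError if a value is missing."""
    return Template(template_text).substitute(values)


def network_file_name(hostname: str) -> str:
    """The file under the network folder is named after the node host name."""
    return f"{hostname}.yaml"


rendered = render_network_file(NETWORK_TEMPLATE, {"MGMT_MAC": "52:54:00:12:34:56"})
print(network_file_name("mgmt-cluster-node1"))
print(rendered)
```

Using substitute rather than safe_substitute is a deliberate choice here: a forgotten placeholder fails loudly instead of ending up in the image.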

41.4 Image preparation for air-gap environments #

This section describes how to prepare the image for air-gap environments showing only the differences from the previous sections. The following changes to the previous section (Image preparation for connected environments (Section 41.3, “Image preparation for connected environments”)) are required to prepare the image for air-gap environments:

The mgmt-cluster.yaml file must be modified to include the embeddedArtifactRegistry section with the images field set to all container images to be included into the EIB output image.

The mgmt-cluster.yaml file must be modified to include the rancher-turtles-airgap-resources helm chart.

The custom/scripts/99-register.sh script must be removed when using an air-gap environment.

41.4.1 Modifications in the definition file #

The mgmt-cluster.yaml file must be modified to include the embeddedArtifactRegistry section.

In this section the images field must contain the list of all container images to be included in the output image.
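Because every entry in the images field pins a specific image, it can be useful to check the list for references without an explicit tag before building. The following Python sketch is one possible check. The function name is hypothetical, and the rule it applies (a tag or digest must appear in the last path component) is an assumption about what you want to enforce, not an EIB requirement stated in this guide.

```python
def untagged_images(image_names):
    """Return the image references that carry neither a tag nor a digest."""
    missing = []
    for name in image_names:
        # Only inspect the last path component, so that a registry port
        # such as "localhost:5000" is not mistaken for a tag.
        last_component = name.rsplit("/", 1)[-1]
        if ":" not in last_component and "@" not in last_component:
            missing.append(name)
    return missing


images = [
    "registry.rancher.com/rancher/fleet:v0.13.1",
    "registry.rancher.com/rancher/shell",  # deliberately left untagged
]
print(untagged_images(images))
```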

The following is an example of the mgmt-cluster.yaml file with the embeddedArtifactRegistry section included.

Make sure the listed images contain the component versions you need.

The rancher-turtles-airgap-resources helm chart must also be added; it creates resources as described in the Rancher Turtles Airgap Documentation. It also requires a turtles.yaml values file for the rancher-turtles chart to specify the necessary configuration.

apiVersion: 1.3
image:
  imageType: iso
  arch: x86_64
  baseImage: SL-Micro.x86_64-6.1-Base-SelfInstall-GM.install.iso
  outputImageName: eib-mgmt-cluster-image.iso
operatingSystem:
  isoConfiguration:
    installDevice: /dev/sda
  users:
  - username: root
    encryptedPassword: $ROOT_PASSWORD
  packages:
    packageList:
    - jq
    sccRegistrationCode: $SCC_REGISTRATION_CODE
kubernetes:
  version: v1.33.5+rke2r1
  helm:
    charts:
      - name: cert-manager
        repositoryName: jetstack
        version: 1.18.2
        targetNamespace: cert-manager
        valuesFile: certmanager.yaml
        createNamespace: true
        installationNamespace: kube-system
      - name: longhorn-crd
        version: 107.1.0+up1.9.1
        repositoryName: rancher-charts
        targetNamespace: longhorn-system
        createNamespace: true
        installationNamespace: kube-system
      - name: longhorn
        version: 107.1.0+up1.9.1
        repositoryName: rancher-charts
        targetNamespace: longhorn-system
        createNamespace: true
        installationNamespace: kube-system
        valuesFile: longhorn.yaml
      - name: metal3
        version: 304.0.16+up0.12.6
        repositoryName: suse-edge-charts
        targetNamespace: metal3-system
        createNamespace: true
        installationNamespace: kube-system
        valuesFile: metal3.yaml
      - name: rancher-turtles
        version: 304.0.6+up0.24.0
        repositoryName: suse-edge-charts
        targetNamespace: rancher-turtles-system
        createNamespace: true
        installationNamespace: kube-system
        valuesFile: turtles.yaml
      - name: rancher-turtles-airgap-resources
        version: 304.0.6+up0.24.0
        repositoryName: suse-edge-charts
        targetNamespace: rancher-turtles-system
        createNamespace: true
        installationNamespace: kube-system
      - name: neuvector-crd
        version: 107.0.1+up2.8.8
        repositoryName: rancher-charts
        targetNamespace: neuvector
        createNamespace: true
        installationNamespace: kube-system
        valuesFile: neuvector.yaml
      - name: neuvector
        version: 107.0.1+up2.8.8
        repositoryName: rancher-charts
        targetNamespace: neuvector
        createNamespace: true
        installationNamespace: kube-system
        valuesFile: neuvector.yaml
      - name: rancher
        version: 2.12.2
        repositoryName: rancher-prime
        targetNamespace: cattle-system
        createNamespace: true
        installationNamespace: kube-system
        valuesFile: rancher.yaml
    repositories:
      - name: jetstack
        url: https://charts.jetstack.io
      - name: rancher-charts
        url: https://charts.rancher.io/
      - name: suse-edge-charts
        url: oci://registry.suse.com/edge/charts
      - name: rancher-prime
        url: https://charts.rancher.com/server-charts/prime
  network:
    apiHost: $API_HOST
    apiVIP: $API_VIP
  nodes:
    - hostname: mgmt-cluster-node1
      initializer: true
      type: server
#   - hostname: mgmt-cluster-node2
#     type: server
#   - hostname: mgmt-cluster-node3
#     type: server
embeddedArtifactRegistry:
  images:
    - name: registry.rancher.com/rancher/hardened-cluster-autoscaler:v1.10.2-build20250611
    - name: registry.rancher.com/rancher/hardened-cni-plugins:v1.7.1-build20250611
    - name: registry.rancher.com/rancher/hardened-coredns:v1.12.2-build20250611
    - name: registry.rancher.com/rancher/hardened-k8s-metrics-server:v0.8.0-build20250704
    - name: registry.rancher.com/rancher/hardened-multus-cni:v4.2.1-build20250627
    - name: registry.rancher.com/rancher/klipper-helm:v0.9.8-build20250709
    - name: registry.rancher.com/rancher/mirrored-cilium-cilium:v1.17.6
    - name: registry.rancher.com/rancher/mirrored-cilium-operator-generic:v1.17.6
    - name: registry.rancher.com/rancher/mirrored-longhornio-csi-attacher:v4.9.0-20250709
    - name: registry.rancher.com/rancher/mirrored-longhornio-csi-node-driver-registrar:v2.14.0-20250709
    - name: registry.rancher.com/rancher/mirrored-longhornio-csi-provisioner:v5.3.0-20250709
    - name: registry.rancher.com/rancher/mirrored-longhornio-csi-resizer:v1.14.0-20250709
    - name: registry.rancher.com/rancher/mirrored-longhornio-csi-snapshotter:v8.3.0-20250709
    - name: registry.rancher.com/rancher/mirrored-longhornio-livenessprobe:v2.16.0-20250709
    - name: registry.rancher.com/rancher/mirrored-longhornio-longhorn-engine:v1.9.1
    - name: registry.rancher.com/rancher/mirrored-longhornio-longhorn-instance-manager:v1.9.1
    - name: registry.rancher.com/rancher/mirrored-longhornio-longhorn-manager:v1.9.1
    - name: registry.rancher.com/rancher/mirrored-longhornio-longhorn-share-manager:v1.9.1
    - name: registry.rancher.com/rancher/mirrored-longhornio-longhorn-ui:v1.9.1
    - name: registry.rancher.com/rancher/mirrored-sig-storage-snapshot-controller:v8.2.0
    - name: registry.rancher.com/rancher/neuvector-compliance-config:1.0.6
    - name: registry.rancher.com/rancher/neuvector-controller:5.4.5
    - name: registry.rancher.com/rancher/neuvector-enforcer:5.4.5
    - name: registry.rancher.com/rancher/nginx-ingress-controller:v1.12.4-hardened2
    - name: registry.suse.com/rancher/cluster-api-addon-provider-fleet:v0.11.0
    - name: registry.rancher.com/rancher/cluster-api-operator:v0.18.1
    - name: registry.rancher.com/rancher/fleet-agent:v0.13.1
    - name: registry.rancher.com/rancher/fleet:v0.13.1
    - name: registry.rancher.com/rancher/hardened-node-feature-discovery:v0.15.7-build20250425
    - name: registry.rancher.com/rancher/rancher-webhook:v0.8.1
    - name: registry.rancher.com/rancher/rancher/turtles:v0.24.0
    - name: registry.rancher.com/rancher/rancher:v2.12.1
    - name: registry.rancher.com/rancher/shell:v0.4.1
    - name: registry.rancher.com/rancher/system-upgrade-controller:v0.16.0
    - name: registry.suse.com/rancher/cluster-api-controller:v1.10.5
    - name: registry.suse.com/rancher/cluster-api-provider-metal3:v1.10.2
    - name: registry.suse.com/rancher/cluster-api-provider-rke2-bootstrap:v0.20.1
    - name: registry.suse.com/rancher/cluster-api-provider-rke2-controlplane:v0.20.1
    - name: registry.suse.com/rancher/hardened-sriov-network-operator:v1.5.0-build20250425
    - name: registry.suse.com/rancher/ip-address-manager:v1.10.2
    - name: registry.rancher.com/rancher/kubectl:v1.32.2
    - name: registry.rancher.com/rancher/mirrored-cluster-api-controller:v1.9.5

41.4.2 Modifications in the custom folder #

The custom/scripts/99-register.sh script must be removed when using an air-gap environment. As you can see in the directory structure, the 99-register.sh script is not included in the custom/scripts folder.
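A small pre-build check can catch a registration script that was left behind by mistake. The following Python sketch assumes the EIB directory layout described in this chapter; the function name is hypothetical.

```python
from pathlib import Path
import tempfile


def has_register_script(eib_dir: Path) -> bool:
    """True if custom/scripts/99-register.sh is still present under eib_dir."""
    return (eib_dir / "custom" / "scripts" / "99-register.sh").is_file()


# Demonstration on a throwaway directory instead of a real EIB folder.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "custom" / "scripts").mkdir(parents=True)
    print(has_register_script(root))  # script absent: suitable for air-gap
```

For a real build, you would pass the path of your own EIB directory and stop the build when the function returns True.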

41.4.3 Modifications in the helm values folder #

turtles.yaml: contains the configuration required to specify airgapped operation for Rancher Turtles. Note that this depends on the installation of the rancher-turtles-airgap-resources chart.

cluster-api-operator:
  cluster-api:
    core:
      fetchConfig:
        selector: "{\"matchLabels\": {\"provider-components\": \"core\"}}"
    rke2:
      bootstrap:
        fetchConfig:
          selector: "{\"matchLabels\": {\"provider-components\": \"rke2-bootstrap\"}}"
      controlPlane:
        fetchConfig:
          selector: "{\"matchLabels\": {\"provider-components\": \"rke2-control-plane\"}}"
    metal3:
      infrastructure:
        fetchConfig:
          selector: "{\"matchLabels\": {\"provider-components\": \"metal3\"}}"
      ipam:
        fetchConfig:
          selector: "{\"matchLabels\": {\"provider-components\": \"metal3ipam\"}}"
    fleet:
      addon:
        fetchConfig:
          selector: "{\"matchLabels\": {\"provider-components\": \"fleet\"}}"
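Each selector value in turtles.yaml is a JSON document stored as a string, which is why the inner quotes are escaped. The following Python sketch shows how such a string can be generated and parsed back, so the escaping does not have to be written by hand. The helper name is hypothetical; the label key and values are taken from the file above.

```python
import json


def provider_selector(label_value: str) -> str:
    """Build the JSON text used as a fetchConfig selector value."""
    return json.dumps({"matchLabels": {"provider-components": label_value}})


for value in ("core", "rke2-bootstrap", "rke2-control-plane",
              "metal3", "metal3ipam", "fleet"):
    selector = provider_selector(value)
    # Parsing the string back confirms it is well-formed JSON.
    parsed = json.loads(selector)
    print(selector, parsed["matchLabels"]["provider-components"] == value)
```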

41.5 Image creation #

Once the directory structure is prepared following the previous sections (for both connected and air-gap scenarios), run the following command to build the image:

podman run --rm --privileged -it -v $PWD:/eib \
 registry.suse.com/edge/3.4/edge-image-builder:1.3.0 \
 build --definition-file mgmt-cluster.yaml

This creates the ISO output image file that, in our case, based on the image definition described above, is eib-mgmt-cluster-image.iso.

41.6 Provision the management cluster #

The previous image contains all components explained above, and it can be used to provision the management cluster using a virtual machine or a bare-metal server (using the virtual-media feature).

41.7 Dual-stack considerations and configuration #

The examples in the previous sections show how to set up a single-stack IPv4 management cluster. Such a management cluster is independent of the operational status of downstream clusters, which, once deployed, can be individually configured to operate in either IPv4/IPv6 single-stack or dual-stack configuration. However, the way the management cluster is configured has a direct impact on the communication protocols that can be used during the provisioning phase, where both the in-band and out-of-band communications must use protocols supported by both the management cluster and the downstream host. If some or all of the BMCs and/or downstream cluster nodes are expected to use IPv6, a dual-stack setup for the management cluster is required.

Single-stack IPv6 management clusters are not yet supported.

To achieve dual-stack functionality, Kubernetes must be provided with both IPv4 and IPv6 CIDRs for Pods and Services. However, other components also require specific tuning before building the management cluster image with EIB. The Metal3 provisioning services (Ironic) can be configured in different ways, depending on your infrastructure or requirements:

The Ironic services can be configured to listen on all the interfaces on the system rather than on a single IP address. As long as the management cluster host(s) have both IPv4 and IPv6 addresses assigned to the relevant interface, any of them can potentially be used during the provisioning. Note that at this time only one of these addresses can be selected for the URL generation (for consumption by other services, e.g. the Baremetal Operator, BMCs, etc.). As a consequence, to enable IPv6 communications with the BMCs, the Baremetal Operator can be instructed to expose and pass on an IPv6 URL when dealing with BMH definitions that include an IPv6 address. In other words, when a BMC is identified as IPv6 capable, the provisioning is performed via IPv6 only, and via IPv4 in all the other cases.

A single hostname, resolving to both IPv4 and IPv6, can be used by Metal3 to let Ironic use those addresses for binding and URL creation. This approach allows for an easy configuration and flexible behavior (both IPv4 and IPv6 remain viable at each provisioning step), but requires an infrastructure with preexisting DNS servers, IP allocations and records already in place.
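For the hostname-based option, it can help to confirm up front that the name resolves to both address families. The following Python sketch is one way to do that with the standard library. The function names and the documentation-range sample addresses are hypothetical; the lookup helper relies on the system resolver and therefore needs working DNS when it is called.

```python
import ipaddress
import socket


def ip_versions(addresses):
    """Return the set of IP versions (4 and/or 6) found in the addresses."""
    return {ipaddress.ip_address(addr).version for addr in addresses}


def resolves_dual_stack(addresses) -> bool:
    """True if the address list contains both IPv4 and IPv6 entries."""
    return ip_versions(addresses) == {4, 6}


def lookup(hostname: str):
    """Resolve a host name to its unique addresses via the system resolver."""
    infos = socket.getaddrinfo(hostname, None)
    return sorted({info[4][0] for info in infos})


# Sample addresses from the documentation ranges, used instead of a live lookup.
print(resolves_dual_stack(["192.0.2.10", "2001:db8::10"]))
print(resolves_dual_stack(["192.0.2.10"]))
```

Against a real environment, you would call resolves_dual_stack(lookup(...)) with the FQDN you plan to use.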

In both cases, Kubernetes will need to know what CIDRs to use for both IPv4 and IPv6, so you can add the following lines to your kubernetes/config/server.yaml in the EIB directory, making sure to list IPv4 first:

service-cidr: 10.96.0.0/12,fd12:4567:789c::/112
cluster-cidr: 193.168.0.0/18,fd12:4567:789b::/48

Some containers leverage the host networking, so modify the network configuration for the host(s), under the network directory, to enable IPv6 connectivity:

routes:
  config:
  - destination: 0.0.0.0/0
    next-hop-address: ${MGMT_GATEWAY_V4}
    next-hop-interface: eth0
  - destination: ::/0
    next-hop-address: ${MGMT_GATEWAY_V6}
    next-hop-interface: eth0
dns-resolver:
  config:
    server:
    - ${MGMT_DNS}
    - 8.8.8.8
    - 2001:4860:4860::8888
interfaces:
- name: eth0
  type: ethernet
  state: up
  mac-address: ${MGMT_MAC}
  ipv4:
    address:
    - ip: ${MGMT_CLUSTER_IP_V4}
      prefix-length: 24
    dhcp: false
    enabled: true
  ipv6:
    address:
    - ip: ${MGMT_CLUSTER_IP_V6}
      prefix-length: 128
    dhcp: false
    autoconf: false
    enabled: true

Replace the placeholders with the gateway IP addresses, the additional DNS server (if needed), the MAC address of the network interface and the IP addresses of the management cluster. If address autoconfiguration is preferred instead, refer to the following excerpt and just set the ${MGMT_MAC} variable:

interfaces:
- name: eth0
  type: ethernet
  state: up
  mac-address: ${MGMT_MAC}
  ipv4:
    enabled: true
    dhcp: true
  ipv6:
    enabled: true
    dhcp: true
    autoconf: true

We can now define the remaining files for a single node configuration, starting from the first option, by creating kubernetes/helm/values/metal3.yaml as:

global:
  ironicIP: ${MGMT_CLUSTER_IP_V4}
  enable_vmedia_tls: false
  additionalTrustedCAs: false
metal3-ironic:
  global:
    predictableNicNames: true
  listenOnAll: true
  persistence:
    ironic:
      size: "5Gi"
  service:
    type: NodePort
metal3-baremetal-operator:
  baremetaloperator:
    externalHttpIPv6: ${MGMT_CLUSTER_IP_V6}

and kubernetes/helm/values/rancher.yaml as:

hostname: rancher-${MGMT_CLUSTER_IP_V4}.sslip.io
bootstrapPassword: "foobar"
replicas: 1
global:
  cattle:
    systemDefaultRegistry: "registry.rancher.com"

where ${MGMT_CLUSTER_IP_V4} and ${MGMT_CLUSTER_IP_V6} are the IP addresses previously assigned to the host.

Alternatively, to use the hostname in place of the IP addresses, modify kubernetes/helm/values/metal3.yaml to:

global:
  provisioningHostname: ${MGMT_CLUSTER_HOSTNAME}
  enable_vmedia_tls: false
  additionalTrustedCAs: false
metal3-ironic:
  global:
    predictableNicNames: true
  persistence:
    ironic:
      size: "5Gi"
  service:
    type: NodePort

and kubernetes/helm/values/rancher.yaml to:

hostname: rancher-${MGMT_CLUSTER_HOSTNAME}.sslip.io
bootstrapPassword: "foobar"
replicas: 1
global:
  cattle:
    systemDefaultRegistry: "registry.rancher.com"

where ${MGMT_CLUSTER_HOSTNAME} should be a Fully Qualified Domain Name resolving to your host IP addresses.

For more information, visit the SUSE Edge GitHub repository, where an example directory structure can be found under the "dual-stack" folder.
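The service-cidr and cluster-cidr values in kubernetes/config/server.yaml must list the IPv4 range first, as noted earlier in this section. The following Python sketch shows one way to verify that ordering before building the image. The function name is hypothetical, and the check only covers the ordering rule, not whether the ranges suit your network.

```python
import ipaddress


def is_ipv4_first_dual_stack(cidr_list: str) -> bool:
    """True if the value is exactly an IPv4 CIDR followed by an IPv6 CIDR."""
    parts = [part.strip() for part in cidr_list.split(",")]
    if len(parts) != 2:
        return False
    try:
        networks = [ipaddress.ip_network(part, strict=False) for part in parts]
    except ValueError:
        # One of the entries is not a valid CIDR.
        return False
    return [net.version for net in networks] == [4, 6]


print(is_ipv4_first_dual_stack("10.96.0.0/12,fd12:4567:789c::/112"))
print(is_ipv4_first_dual_stack("fd12:4567:789c::/112,10.96.0.0/12"))
```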