Administration Guide


4 Using the YaST Cluster Module

Abstract

The YaST cluster module allows you to set up a cluster manually (from scratch) or to modify options for an existing cluster.

However, if you prefer an automated approach for setting up a cluster, see the Installation and Setup Quick Start. It describes how to install the needed packages and leads you to a basic two-node cluster, which is set up with the ha-cluster-bootstrap scripts.

You can also combine both setup methods. For example, set up one node with the YaST cluster module and then use one of the bootstrap scripts to integrate more nodes (or vice versa).

4.1 Definition of Terms

Several key terms used in the YaST cluster module and in this chapter are defined below.

- Bind Network Address (bindnetaddr)

The network address the Corosync executive should bind to. To simplify sharing configuration files across the cluster, Corosync uses the network interface netmask to mask only the address bits that are used for routing the network. For example, if the local interface is 192.168.5.92 with netmask 255.255.255.0, set bindnetaddr to 192.168.5.0. If the local interface is 192.168.5.92 with netmask 255.255.255.192, set bindnetaddr to 192.168.5.64.

Note: Network Address for All Nodes

As the same Corosync configuration will be used on all nodes, make sure to use a network address as bindnetaddr, not the address of a specific network interface.

- conntrack Tools

Allow interaction with the in-kernel connection tracking system for enabling stateful packet inspection for iptables. Used by the High Availability Extension to synchronize the connection status between cluster nodes. For detailed information, refer to http://conntrack-tools.netfilter.org/.

- Csync2

A synchronization tool that can be used to replicate configuration files across all nodes in the cluster, and even across Geo clusters. Csync2 can handle any number of hosts, sorted into synchronization groups. Each synchronization group has its own list of member hosts and its include/exclude patterns that define which files should be synchronized in the synchronization group. The groups, the host names belonging to each group, and the include/exclude rules for each group are specified in the Csync2 configuration file, /etc/csync2/csync2.cfg.

For authentication, Csync2 uses the IP addresses and pre-shared keys within a synchronization group. You need to generate one key file for each synchronization group and copy it to all group members.

For more information about Csync2, refer to http://oss.linbit.com/csync2/paper.pdf.

- Existing Cluster

The term “existing cluster” is used to refer to any cluster that consists of at least one node. Existing clusters have a basic Corosync configuration that defines the communication channels, but they do not necessarily have resource configuration yet.

- Multicast

A technology for one-to-many communication within a network that can be used for cluster communication. Corosync supports both multicast and unicast. If multicast does not comply with your corporate IT policy, use unicast instead.

Note: Switches and Multicast

To use multicast for cluster communication, make sure your switches support multicast.

- Multicast Address (mcastaddr)

IP address to be used for multicasting by the Corosync executive. The IP address can either be IPv4 or IPv6. If IPv6 networking is used, node IDs must be specified. You can use any multicast address in your private network.

- Multicast Port (mcastport)

The port to use for cluster communication. Corosync uses two ports: the specified mcastport for receiving multicast, and mcastport - 1 for sending multicast.

- Redundant Ring Protocol (RRP)

Allows the use of multiple redundant local area networks for resilience against partial or total network faults. This way, cluster communication can still be kept up as long as a single network is operational. Corosync supports the Totem Redundant Ring Protocol. A logical token-passing ring is imposed on all participating nodes to deliver messages in a reliable and sorted manner. A node is allowed to broadcast a message only if it holds the token.

When redundant communication channels are defined in Corosync, use RRP to tell the cluster how to use these interfaces. RRP can have three modes (rrp_mode):

If set to active, Corosync uses both interfaces actively. However, this mode is deprecated.

If set to passive, Corosync sends messages alternately over the available networks.

If set to none, RRP is disabled.

- Unicast

A technology for sending messages to a single network destination. Corosync supports both multicast and unicast. In Corosync, unicast is implemented as UDP-unicast (UDPU).
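The bindnetaddr examples above, together with the rules for mcastaddr and mcastport, can be checked with a short Python sketch built on the standard ipaddress module. The multicast address 239.60.60.1 and the port 5405 are arbitrary example values and are not settings taken from this guide.

```python
import ipaddress


def bind_network_address(interface_ip: str, netmask: str) -> str:
    """Return the value to use for bindnetaddr (IPv4 only).

    Masks the host bits of the interface address with the netmask, as in
    the bindnetaddr examples: 192.168.5.92 with 255.255.255.0 -> 192.168.5.0.
    """
    iface = ipaddress.IPv4Interface(f"{interface_ip}/{netmask}")
    return str(iface.network.network_address)


def is_multicast_address(address: str) -> bool:
    """True if address (IPv4 or IPv6) lies in a multicast range, as mcastaddr must."""
    return ipaddress.ip_address(address).is_multicast


def corosync_ports(mcastport: int) -> dict:
    """Ports for a given mcastport: receive on mcastport, send on mcastport - 1."""
    return {"receive": mcastport, "send": mcastport - 1}


if __name__ == "__main__":
    print(bind_network_address("192.168.5.92", "255.255.255.0"))    # 192.168.5.0
    print(bind_network_address("192.168.5.92", "255.255.255.192"))  # 192.168.5.64
    print(is_multicast_address("239.60.60.1"))  # True (example address)
    print(corosync_ports(5405))                 # {'receive': 5405, 'send': 5404}
```

Every node on the same subnet computes the same bindnetaddr. That is why a network address, rather than the address of one interface, can be shared in a single corosync.conf.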

4.2 YaST Cluster Module

Start YaST and open the cluster module. Alternatively, start the module from the command line:

sudo yast2 cluster

The following list shows an overview of the available screens in the YaST cluster module. It also mentions whether the screen contains parameters that are required for successful cluster setup or whether its parameters are optional.

- Communication Channels (required)

Allows you to define one or two communication channels for communication between the cluster nodes. As transport protocol, either use multicast (UDP) or unicast (UDPU). For details, see Section 4.3, “Defining the Communication Channels”.

Important: Redundant Communication Paths

For a supported cluster setup, two or more redundant communication paths are required. The preferred way is to use network device bonding as described in Chapter 13, Network Device Bonding.

If this is impossible, you need to define a second communication channel in Corosync.

- Security (optional but recommended)

Allows you to define the authentication settings for the cluster. HMAC/SHA1 authentication requires a shared secret used to protect and authenticate messages. For details, see Section 4.4, “Defining Authentication Settings”.

- Configure Csync2 (optional but recommended)

Csync2 helps you to keep track of configuration changes and to keep files synchronized across the cluster nodes. For details, see Section 4.5, “Transferring the Configuration to All Nodes”.

- Configure conntrackd (optional)

Allows you to configure the user space conntrackd. Use the conntrack tools for stateful packet inspection for iptables. For details, see Section 4.6, “Synchronizing Connection Status Between Cluster Nodes”.

- Service (required)

Allows you to configure the service for bringing the cluster node online. Define whether to start the Pacemaker service at boot time and whether to open the ports in the firewall that are needed for communication between the nodes. For details, see Section 4.7, “Configuring Services”.

If you start the cluster module for the first time, it appears as a wizard, guiding you through all the steps necessary for basic setup. Otherwise, click the categories on the left panel to access the configuration options for each step.

Note: Settings in the YaST Cluster Module

Some settings in the YaST cluster module apply only to the current node. Other settings may automatically be transferred to all nodes with Csync2. Find detailed information about this in the following sections.

4.3 Defining the Communication Channels

For successful communication between the cluster nodes, define at least one communication channel. As transport protocol, either use multicast (UDP) or unicast (UDPU) as described in Procedure 4.1 or Procedure 4.2, respectively. If you want to define a second, redundant channel (Procedure 4.3), both communication channels must use the same protocol.

All settings defined in the YaST Communication Channels screen are written to /etc/corosync/corosync.conf. Find example files for a multicast and a unicast setup in /usr/share/doc/packages/corosync/.

If you are using IPv4 addresses, node IDs are optional. If you are using IPv6 addresses, node IDs are required. Instead of specifying IDs manually for each node, the YaST cluster module contains an option to automatically generate a unique ID for every cluster node.
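The corosync.conf(5) manual page states the following: if IPv4 is used and no nodeid is set, the node ID is determined from the 32-bit IP address bound to ring 0. The option clear_node_high_bit forces the high bit of such an ID to zero, and node ID 0 is reserved. The Python sketch below illustrates that derivation. It is not necessarily the scheme the YaST module uses for its automatic IDs, and the address is only an example.

```python
import ipaddress


def nodeid_from_ipv4(address: str, clear_high_bit: bool = False) -> int:
    """Derive a node ID from an IPv4 ring 0 address.

    The address is read as an unsigned 32-bit integer. With
    clear_high_bit=True, the most significant bit is forced to zero.
    IPv6 addresses are rejected because they need explicit node IDs.
    """
    nodeid = int(ipaddress.IPv4Address(address))  # raises ValueError for non-IPv4 input
    if clear_high_bit:
        nodeid &= 0x7FFFFFFF
    if nodeid == 0:
        raise ValueError("node ID 0 is reserved")
    return nodeid


if __name__ == "__main__":
    print(nodeid_from_ipv4("192.168.5.92"))                       # 3232236892
    print(nodeid_from_ipv4("192.168.5.92", clear_high_bit=True))  # 1084753244
```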

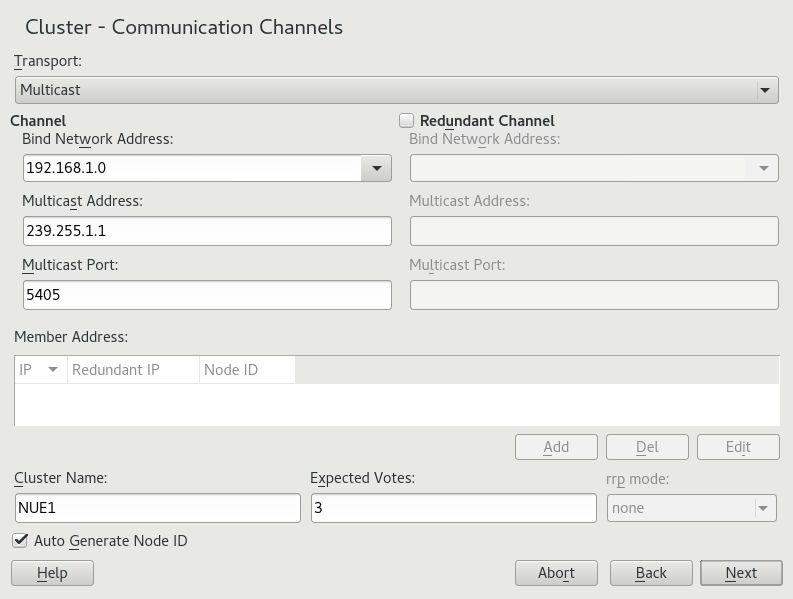

Procedure 4.1: Defining the First Communication Channel (Multicast)

When using multicast, the same bindnetaddr, mcastaddr, and mcastport will be used for all cluster nodes. All nodes in the cluster will know each other by using the same multicast address. For different clusters, use different multicast addresses.

Start the YaST cluster module and switch to the Communication Channels category.

Set the transport protocol to Multicast.

Define the bind network address. Set the value to the subnet you will use for cluster multicast.

Define the multicast address.

Define the multicast port.

To automatically generate a unique ID for every cluster node, keep the automatic node ID generation option enabled.

Define a cluster name.

Enter the number of expected votes. This is important for Corosync to calculate quorum in case of a partitioned cluster. By default, each node has 1 vote. The number of expected votes must match the number of nodes in your cluster.

Confirm your changes.

If needed, define a redundant communication channel in Corosync as described in Procedure 4.3, “Defining a Redundant Communication Channel”.

Figure 4.1: YaST Cluster—Multicast Configuration
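Corosync's votequorum service derives the quorum threshold from the expected votes. The following Python sketch shows why the expected votes matter when a cluster partitions. It assumes a plain majority rule (more than half of the expected votes) with one vote per node. This is a simplification: settings such as the two_node option described in votequorum(5) change the behavior for two-node clusters.

```python
def quorum_threshold(expected_votes: int) -> int:
    """Minimum number of votes for quorum under a plain majority rule."""
    if expected_votes < 1:
        raise ValueError("expected_votes must be at least 1")
    return expected_votes // 2 + 1


def partition_has_quorum(votes_in_partition: int, expected_votes: int) -> bool:
    """True if a partition holding votes_in_partition votes is quorate."""
    return votes_in_partition >= quorum_threshold(expected_votes)


if __name__ == "__main__":
    # Five nodes with one vote each, split into partitions of 3 and 2 nodes.
    print(partition_has_quorum(3, 5))  # True
    print(partition_has_quorum(2, 5))  # False
    # Plain majority: two nodes need both votes, so losing one node loses quorum.
    print(quorum_threshold(2))         # 2
```

If the configured number of expected votes is lower than the real number of nodes, the threshold is too low. In that case, two partitions could both consider themselves quorate.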

If you want to use unicast instead of multicast for cluster communication, proceed as follows.

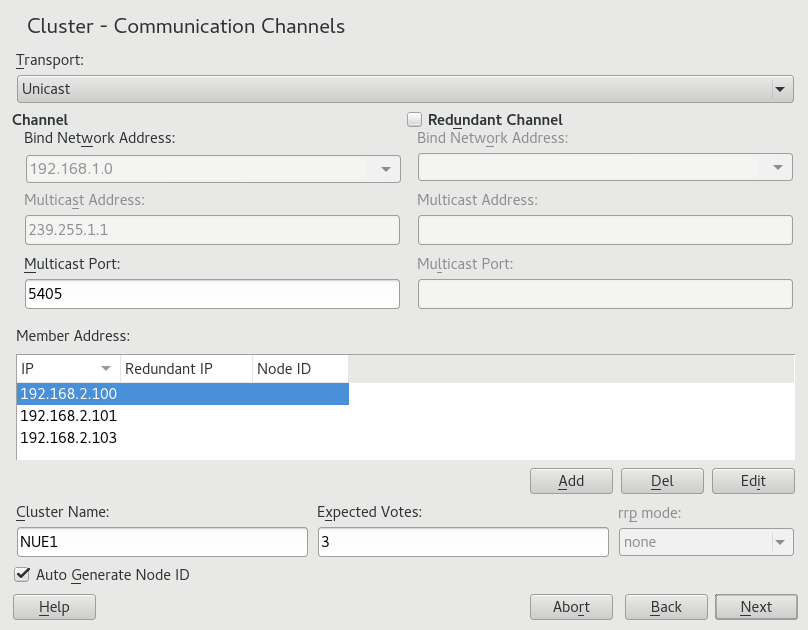

Procedure 4.2: Defining the First Communication Channel (Unicast)

Start the YaST cluster module and switch to the Communication Channels category.

Set the transport protocol to Unicast.

Define the port.

For unicast communication, Corosync needs to know the IP addresses of all nodes in the cluster. For each node that will be part of the cluster, add an entry and enter the following details:

the IP address of the node

a redundant IP address (only required if you use a second communication channel in Corosync)

a node ID (only required if automatic node ID generation is disabled)

To modify or remove any addresses of cluster members, use the corresponding buttons.

To automatically generate a unique ID for every cluster node, keep the automatic node ID generation option enabled.

Define a cluster name.

Enter the number of expected votes. This is important for Corosync to calculate quorum in case of a partitioned cluster. By default, each node has 1 vote. The number of expected votes must match the number of nodes in your cluster.

Confirm your changes.

If needed, define a redundant communication channel in Corosync as described in Procedure 4.3, “Defining a Redundant Communication Channel”.

Figure 4.2: YaST Cluster—Unicast Configuration
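The member list entered in the unicast procedure ends up in /etc/corosync/corosync.conf. The Python sketch below renders such a list as a nodelist section. It assumes the Corosync 2.x keys ring0_addr, ring1_addr, and nodeid, and the addresses are hypothetical. Compare the result with the example files in /usr/share/doc/packages/corosync/ before relying on it.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Member:
    ring0_addr: str                   # address on the first communication channel
    ring1_addr: Optional[str] = None  # only needed with a second (redundant) channel
    nodeid: Optional[int] = None      # only needed if IDs are not generated automatically


def render_nodelist(members: List[Member]) -> str:
    """Render cluster members as a corosync.conf-style nodelist section."""
    lines = ["nodelist {"]
    for m in members:
        lines.append("    node {")
        lines.append(f"        ring0_addr: {m.ring0_addr}")
        if m.ring1_addr is not None:
            lines.append(f"        ring1_addr: {m.ring1_addr}")
        if m.nodeid is not None:
            lines.append(f"        nodeid: {m.nodeid}")
        lines.append("    }")
    lines.append("}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(render_nodelist([
        Member("192.168.1.1", nodeid=1),  # hypothetical addresses
        Member("192.168.1.2", nodeid=2),
    ]))
```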

If network device bonding cannot be used for any reason, the second best choice is to define a redundant communication channel (a second ring) in Corosync. That way, two physically separate networks can be used for communication. If one network fails, the cluster nodes can still communicate via the other network.

The additional communication channel in Corosync will form a second token-passing ring. In /etc/corosync/corosync.conf, the first channel you configured is the primary ring and gets the ringnumber 0. The second ring (redundant channel) gets the ringnumber 1.

When redundant communication channels are defined in Corosync, use RRP to tell the cluster how to use these interfaces. With RRP, two physically separate networks are used for communication. If one network fails, the cluster nodes can still communicate via the other network.

RRP can have three modes:

If set to active, Corosync uses both interfaces actively. However, this mode is deprecated.

If set to passive, Corosync sends messages alternately over the available networks.

If set to none, RRP is disabled.
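The ordering guarantee of a token-passing ring can be illustrated with a toy model. The Python sketch below is not the Totem protocol itself, because it ignores token loss, retransmission, and membership changes. It only shows why letting a node broadcast solely while it holds the token yields one agreed order of messages. The per-visit limit max_per_token is a hypothetical parameter of this model.

```python
from collections import deque
from typing import Dict, List, Tuple


def simulate_token_ring(
    ring: List[str],
    outboxes: Dict[str, List[str]],
    max_per_token: int = 1,
) -> List[Tuple[int, str, str]]:
    """Toy model of token-based message ordering on a logical ring."""
    if max_per_token < 1:
        raise ValueError("max_per_token must be at least 1")
    queues = {node: deque(outboxes.get(node, [])) for node in ring}
    delivered: List[Tuple[int, str, str]] = []
    seq = 0  # sequence number carried along with the token
    while any(queues.values()):
        # One full rotation: the token visits every node in ring order.
        for holder in ring:
            sent = 0
            # Only the current token holder may broadcast, up to the per-visit limit.
            while queues[holder] and sent < max_per_token:
                seq += 1
                delivered.append((seq, holder, queues[holder].popleft()))
                sent += 1
    return delivered


if __name__ == "__main__":
    order = simulate_token_ring(
        ["alice", "bob", "carol"],
        {"alice": ["m1", "m2"], "bob": ["m3"]},
    )
    print(order)  # [(1, 'alice', 'm1'), (2, 'bob', 'm3'), (3, 'alice', 'm2')]
```

Every node that applies messages in sequence-number order sees the same history, regardless of which node sent each message.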

Procedure 4.3: Defining a Redundant Communication Channel

Important: Redundant Rings and /etc/hosts

If multiple rings are configured in Corosync, each node can have multiple IP addresses. This needs to be reflected in the /etc/hosts file of all nodes.

Start the YaST cluster module and switch to the Communication Channels category.

Activate the redundant channel. The redundant channel must use the same protocol as the first communication channel you defined.

If you use multicast, enter the following parameters for the redundant channel: the bind network address to use, the multicast address, and the multicast port.

If you use unicast, define the following parameters: the bind network address to use and the port. Enter the IP addresses of all nodes that will be part of the cluster.

To tell Corosync how and when to use the different channels, select the RRP mode to use:

If only one communication channel is defined, RRP mode is automatically disabled (value none).

If set to active, Corosync uses both interfaces actively. However, this mode is deprecated.

If set to passive, Corosync sends messages alternately over the available networks.

When RRP is used, the High Availability Extension monitors the status of the current rings and automatically re-enables redundant rings after faults.

You can also check the ring status manually with corosync-cfgtool. View the available options with -h.

Confirm your changes.

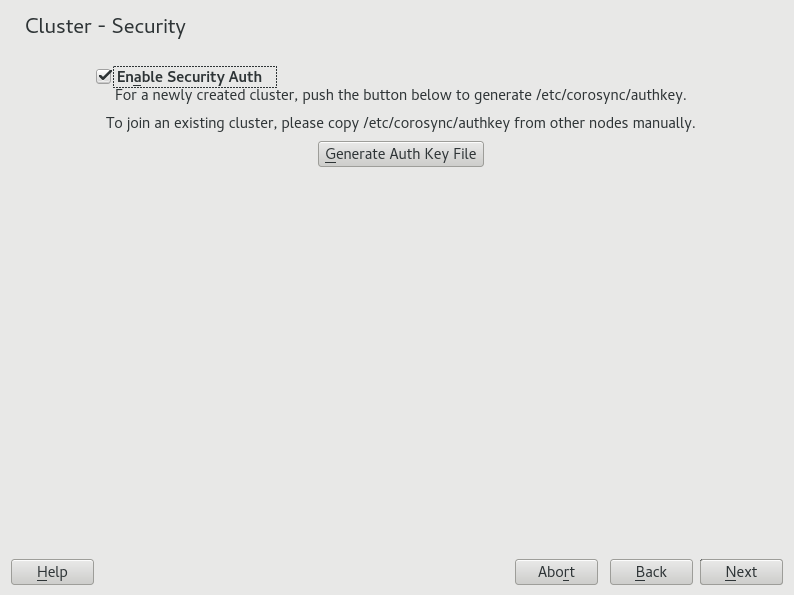

4.4 Defining Authentication Settings

To define the authentication settings for the cluster, you can use HMAC/SHA1 authentication. This requires a shared secret used to protect and authenticate messages. The authentication key (password) you specify will be used on all nodes in the cluster.
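The principle behind HMAC/SHA1 authentication with a shared secret can be sketched with Python's standard hmac module. The sender appends a tag computed from the message and the shared key. The receiver recomputes the tag and rejects the message if the two tags differ. This illustrates the idea only: it does not reproduce Corosync's packet format or the way Corosync uses /etc/corosync/authkey, and the random key merely stands in for that shared secret.

```python
import hashlib
import hmac
import secrets

DIGEST_SIZE = hashlib.sha1().digest_size  # 20 bytes for SHA-1


def sign(key: bytes, message: bytes) -> bytes:
    """Append an HMAC-SHA1 tag computed with the shared key."""
    return message + hmac.new(key, message, hashlib.sha1).digest()


def verify(key: bytes, packet: bytes) -> bytes:
    """Return the message if its tag matches the shared key; raise ValueError otherwise."""
    if len(packet) < DIGEST_SIZE:
        raise ValueError("packet too short")
    message, tag = packet[:-DIGEST_SIZE], packet[-DIGEST_SIZE:]
    expected = hmac.new(key, message, hashlib.sha1).digest()
    if not hmac.compare_digest(tag, expected):  # constant-time comparison
        raise ValueError("authentication failed")
    return message


if __name__ == "__main__":
    shared_key = secrets.token_bytes(64)  # stand-in for the cluster's shared secret
    packet = sign(shared_key, b"membership update")
    print(verify(shared_key, packet))  # b'membership update'
```

Only nodes holding the same key can produce valid tags. This is why every node must use the same key file rather than generating its own.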

Procedure 4.4: Enabling Secure Authentication

Start the YaST cluster module and switch to the Security category.

Activate the security authentication option.

For a newly created cluster, generate an authentication key file with the corresponding button. An authentication key is created and written to /etc/corosync/authkey.

If you want the current machine to join an existing cluster, do not generate a new key file. Instead, copy the /etc/corosync/authkey from one of the nodes to the current machine (either manually or with Csync2).

Confirm your changes. YaST writes the configuration to /etc/corosync/corosync.conf.

Figure 4.3: YaST Cluster—Security

4.5 Transferring the Configuration to All Nodes

Instead of copying the resulting configuration files to all nodes manually, use the csync2 tool for replication across all nodes in the cluster.

This requires the following basic steps:

Section 4.5.1, “Configuring Csync2 with YaST”.

Section 4.5.2, “Synchronizing Changes with Csync2”.

Csync2 helps you to keep track of configuration changes and to keep files synchronized across the cluster nodes:

You can define a list of files that are important for operation.

You can show changes to these files (against the other cluster nodes).

You can synchronize the configured files with a single command.

With a simple shell script in ~/.bash_logout, you can be reminded about unsynchronized changes before logging out of the system.

Find detailed information about Csync2 at http://oss.linbit.com/csync2/ and http://oss.linbit.com/csync2/paper.pdf.

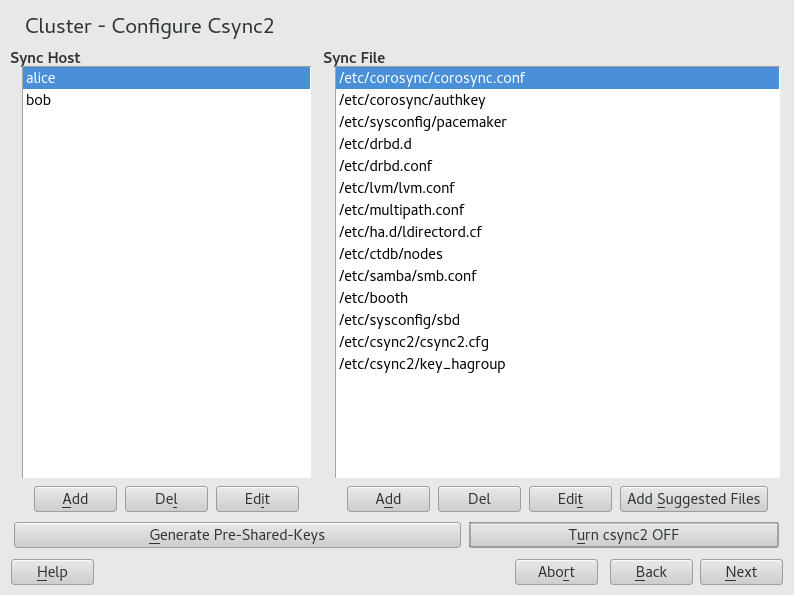

4.5.1 Configuring Csync2 with YaST

Start the YaST cluster module and switch to the Csync2 category.

To specify the synchronization group, add the local host names of all nodes in your cluster to the list of synchronization hosts. For each node, you must use exactly the strings that are returned by the hostname command.

Tip: Host Name Resolution

If host name resolution does not work properly in your network, you can also specify a combination of host name and IP address for each cluster node. To do so, use the string HOSTNAME@IP, for example alice@192.168.2.100. Csync2 will then use the IP addresses when connecting.

Generate a key file for the synchronization group with the corresponding button. The key file is written to /etc/csync2/key_hagroup. After it has been created, it must be copied manually to all members of the cluster.

To populate the list with the files that usually need to be synchronized among all nodes, use the button that adds the suggested files.

If you want to add, edit, or delete files from the list of files to be synchronized, use the respective buttons. You must enter the absolute path name for each file.

Activate Csync2 with the corresponding button. This will execute the following command to start Csync2 automatically at boot time:

root # systemctl enable csync2.socket

Confirm your changes. YaST writes the Csync2 configuration to /etc/csync2/csync2.cfg.

To start the synchronization process now, proceed with Section 4.5.2, “Synchronizing Changes with Csync2”.

Figure 4.4: YaST Cluster—Csync2
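The settings made in the Csync2 category end up as a synchronization group in /etc/csync2/csync2.cfg. The Python sketch below renders such a group with the group, key, host, include, and exclude directives, and rejects relative paths. The group name ha_group, the second host, and the file list are assumptions for illustration. Compare the output with the file YaST actually writes.

```python
from typing import Iterable


def render_csync2_group(
    group: str,
    key_file: str,
    hosts: Iterable[str],
    includes: Iterable[str],
    excludes: Iterable[str] = (),
) -> str:
    """Render one Csync2 synchronization group as configuration text."""
    lines = [f"group {group}", "{", f"  key {key_file};"]
    for host in hosts:  # plain host names or HOSTNAME@IP strings
        lines.append(f"  host {host};")
    for directive, paths in (("include", includes), ("exclude", excludes)):
        for path in paths:
            if not path.startswith("/"):
                raise ValueError(f"absolute path required: {path}")
            lines.append(f"  {directive} {path};")
    lines.append("}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(render_csync2_group(
        "ha_group",                                  # assumed group name
        "/etc/csync2/key_hagroup",
        ["alice@192.168.2.100", "bob@192.168.2.101"],  # second host is hypothetical
        ["/etc/corosync/corosync.conf", "/etc/corosync/authkey"],
    ))
```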

4.5.2 Synchronizing Changes with Csync2

To successfully synchronize the files with Csync2, the following requirements must be met:

The same Csync2 configuration is available on all cluster nodes.

The same Csync2 authentication key is available on all cluster nodes.

Csync2 must be running on all cluster nodes.

Before the first Csync2 run, you therefore need to make the following preparations:

Procedure 4.5: Preparing for Initial Synchronization with Csync2

Copy the file /etc/csync2/csync2.cfg manually to all nodes after you have configured it as described in Section 4.5.1, “Configuring Csync2 with YaST”.

Copy the file /etc/csync2/key_hagroup that you have generated on one node in Step 3 of Section 4.5.1 to all nodes in the cluster. It is needed for authentication by Csync2. However, do not regenerate the file on the other nodes; it needs to be the same file on all nodes.

Execute the following command on all nodes to start the service now:

root # systemctl start csync2.socket

Procedure 4.6: Synchronizing the Configuration Files with Csync2

To initially synchronize all files once, execute the following command on the machine that you want to copy the configuration from:

root # csync2 -xv

This will synchronize all the files once by pushing them to the other nodes. If all files are synchronized successfully, Csync2 will finish with no errors.

If one or several files that are to be synchronized have been modified on other nodes (not only on the current one), Csync2 reports a conflict. You will get an output similar to the one below:

While syncing file /etc/corosync/corosync.conf:
ERROR from peer hex-14: File is also marked dirty here!
Finished with 1 errors.

If you are sure that the file version on the current node is the “best” one, you can resolve the conflict by forcing this file and resynchronizing:

root # csync2 -f /etc/corosync/corosync.conf
root # csync2 -x

For more information on the Csync2 options, run csync2 -help.

Note: Pushing Synchronization After Any Changes

Csync2 only pushes changes. It does not continuously synchronize files between the machines.

Each time you update files that need to be synchronized, you need to push the changes to the other machines: run csync2 -xv on the machine where you made the changes. If you run the command on any of the other machines with unchanged files, nothing will happen.
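The push-only behavior and the conflict message in the procedure above can be illustrated with a toy model in Python. The model is not how Csync2 is implemented, since Csync2 tracks file state in its own database. The Node class, the dirty set, and the force argument are hypothetical. They only mirror the behavior described in this section:

- A push copies locally changed files to the peers.
- A file that is also changed on a peer is reported as a conflict.
- Forcing the file resolves the conflict.
- Pushing from a node without changes does nothing.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable, List, Set


@dataclass
class Node:
    name: str
    files: Dict[str, str] = field(default_factory=dict)  # path -> content
    dirty: Set[str] = field(default_factory=set)         # changed locally, not yet pushed

    def edit(self, path: str, content: str) -> None:
        self.files[path] = content
        self.dirty.add(path)


def push(source: Node, peers: List[Node], force: Iterable[str] = ()) -> List[str]:
    """Toy push-only sync: copy the source's dirty files to its peers.

    A file that is also dirty on a peer is reported and skipped unless
    it is listed in force. Returns the list of error messages.
    """
    forced = set(force)
    errors: List[str] = []
    for path in sorted(source.dirty):
        pushed_everywhere = True
        for peer in peers:
            if path in peer.dirty and path not in forced:
                errors.append(f"{path}: also marked dirty on {peer.name}")
                pushed_everywhere = False
                continue
            peer.files[path] = source.files[path]
            peer.dirty.discard(path)
        if pushed_everywhere:
            source.dirty.discard(path)  # clean only once every peer received it
    return errors


if __name__ == "__main__":
    conf = "/etc/corosync/corosync.conf"
    alice, bob = Node("alice"), Node("bob")
    alice.edit(conf, "version from alice")
    bob.edit(conf, "version from bob")
    print(push(alice, [bob]))                # one conflict reported
    print(push(alice, [bob], force=[conf]))  # []
    print(push(bob, [alice]))                # [] (nothing left to push)
```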

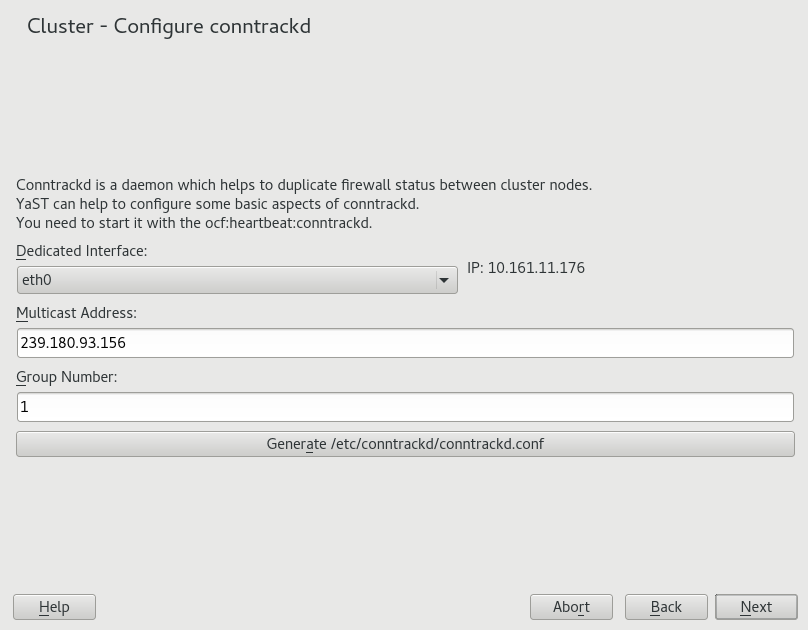

4.6 Synchronizing Connection Status Between Cluster Nodes

To enable stateful packet inspection for iptables, configure and use the conntrack tools. This requires the following basic steps:

Configuring the conntrackd with YaST (Procedure 4.7).

Configuring a resource for conntrackd (class: ocf, provider: heartbeat). If you use Hawk2 to add the resource, use the default values proposed by Hawk2.

After having configured the conntrack tools, you can use them for Linux Virtual Server. See Chapter 14, Load Balancing.

Procedure 4.7: Configuring the conntrackd with YaST

Use the YaST cluster module to configure the user space conntrackd. It needs a dedicated network interface that is not used for other communication channels. The daemon can be started via a resource agent afterward.

Start the YaST cluster module and switch to the Configure conntrackd category.

Select a dedicated interface for synchronizing the connection status. The IPv4 address of the selected interface is automatically detected and shown in YaST. It must already be configured and it must support multicast.

Define the multicast address to be used for synchronizing the connection status.

Define a numeric ID for the group to synchronize the connection status to.

Generate the configuration file for conntrackd with the corresponding button.

If you modified any options for an existing cluster, confirm your changes and close the cluster module.

For further cluster configuration, continue to the next screen and proceed with Section 4.7, “Configuring Services”.

Figure 4.5: YaST Cluster—conntrackd

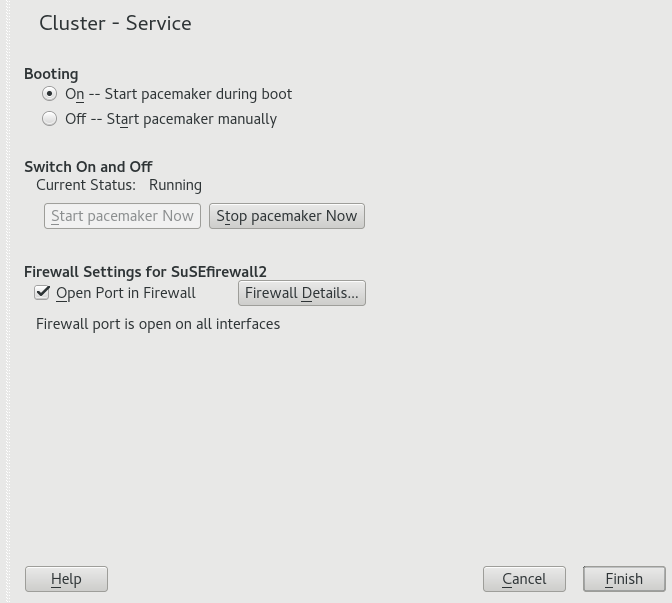

4.7 Configuring Services

In the YaST cluster module, define whether to start certain services on a node at boot time. You can also use the module to start and stop the services manually. To bring the cluster nodes online and start the cluster resource manager, Pacemaker must be running as a service.

Procedure 4.8: Enabling Pacemaker

In the YaST cluster module, switch to the Service category.

To start Pacemaker each time this cluster node is booted, select the respective boot option. If you choose not to start Pacemaker at boot time, you must start it manually each time this node is booted. To start Pacemaker manually, use the command:

root # systemctl start pacemaker

To start or stop Pacemaker immediately, click the respective button.

To open the ports in the firewall that are needed for cluster communication on the current machine, activate the corresponding firewall option. The configuration is written to /etc/sysconfig/SuSEfirewall2.d/services/cluster.

Confirm your changes. Note that the configuration only applies to the current machine, not to all cluster nodes.

Figure 4.6: YaST Cluster—Services

4.8 Bringing the Cluster Online

After the initial cluster configuration is done, start the Pacemaker service on each cluster node to bring the stack online:

Procedure 4.9: Starting Pacemaker and Checking the Status

Log in to an existing node.

Check if the service is already running:

root # systemctl status pacemaker

If not, start Pacemaker now:

root # systemctl start pacemaker

Repeat the steps above for each of the cluster nodes.

On one of the nodes, check the cluster status with the crm status command. If all nodes are online, the output should be similar to the following:

root # crm status
Last updated: Thu Jul 3 11:07:10 2014
Last change: Thu Jul 3 10:58:43 2014
Current DC: alice (175704363) - partition with quorum
2 Nodes configured
0 Resources configured

Online: [ alice bob ]

This output indicates that the cluster resource manager is started and is ready to manage resources.
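When many nodes need to be checked, a script can evaluate the Online line of the crm status output. The Python sketch below extracts the node names from that line and checks them against an expected list. It assumes the output format shown in this procedure, which may differ between Pacemaker versions. The optional "* " prefix it tolerates is an assumption about newer output, so treat the regular expression as a starting point.

```python
import re
from typing import Iterable, List

# Matches lines such as "Online: [ alice bob ]", optionally prefixed with "* ".
ONLINE_RE = re.compile(r"^\s*(?:\*\s*)?Online:\s*\[\s*(.*?)\s*\]", re.MULTILINE)

SAMPLE_STATUS = """\
Last updated: Thu Jul 3 11:07:10 2014
Last change: Thu Jul 3 10:58:43 2014
Current DC: alice (175704363) - partition with quorum
2 Nodes configured
0 Resources configured

Online: [ alice bob ]
"""


def online_nodes(status_text: str) -> List[str]:
    """Return the node names listed on the Online line (empty if none found)."""
    match = ONLINE_RE.search(status_text)
    return match.group(1).split() if match else []


def all_online(status_text: str, expected: Iterable[str]) -> bool:
    """True if every expected node appears on the Online line."""
    return set(expected) <= set(online_nodes(status_text))


if __name__ == "__main__":
    print(online_nodes(SAMPLE_STATUS))                 # ['alice', 'bob']
    print(all_online(SAMPLE_STATUS, ["alice", "bob"]))  # True
```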

After the basic configuration is done and the nodes are online, you can start to configure cluster resources. Use one of the cluster management tools like the crm shell (crmsh) or the HA Web Konsole. For more information, see Chapter 8, Configuring and Managing Cluster Resources (Command Line) or Chapter 7, Configuring and Managing Cluster Resources with Hawk2.